The Basics

High-level usage and scripting references for GS_Play feature sets. Drop-in functionality for intermediate developers and designers.

GS_Play is a production-ready set of modular features. Each feature set can be toggled on individually, so you enable only what your project needs. The “Basics” section covers what each feature set does, how to work with it from the editor and scripts, and which events and nodes are available, without requiring deep architectural knowledge.

If you are new to GS_Play, start with Get Started first. If you need architecture-level detail, component internals, or extension guides, use the Framework API section instead.

How This Section Is Organized

Each gem section follows this structure:

Gem overview page — What the gem does, the problems it solves, and a summary of its feature sets with links to feature sub-pages.

Feature sub-pages — One page per feature set. Each covers:

- What it does and what components are involved.

- Editor setup for drop-in use.

- Relevant ScriptCanvas nodes and EBus events.

- A quick reference table.

- Links to the Framework API for deeper reference.

Pages in this section deliberately omit architecture internals, extension code, and low-level component details. If you need those, follow the Framework API links at the bottom of each page.

Sections

1 - GS_Core

The foundation gem for the GS_Play framework — game lifecycle, save system, stage management, input, actions, and utility libraries.

GS_Core is the required foundation for every GS_Play-enabled project. All other GS gems depend on it to drive their more complex behavior and build on its system-level features. It provides the game startup sequence, persistence, level loading, input handling, a triggerable action system, and a shared utility library.

If you have not set up GS_Core yet, start with the Simple Project Setup guide before reading further.

For architecture details, component properties, and extending the system in C++, see the GS_Core API.

Quick Navigation

| I want to… | Feature | API |
|---|---|---|
| Start a new game, continue from a save, load a specific file, or return to the title screen | GS_Managers | API |
| Save and load game data, or track persistent flags and counters across sessions | GS_Save | API |
| Move between levels, or configure per-level spawn points and navigation settings | GS_StageManager | API |
| Read player input, disable input during menus, or swap control schemes at runtime | GS_Options | API |
| Use easing curves, detect physics zones, smooth values, pick randomly, or work with splines | Utilities | API |
| Trigger a reusable behavior on an entity from a script, physics zone, or another action | Systems: GS_Actions | API |
| Animate a transform, color, or value smoothly over time | Systems: GS_Motion | API |

Installation

GS_Core is a required gem. It will be added to your project when you enable any other GS_Play gem.

For a complete guided setup, follow the Simple Project Setup guide or video tutorial.

Follow these steps in particular:

- Configure Project

- Prepare Managers

- Prepare Startup

Quick Installation Summary

Once the gem is registered to your project:

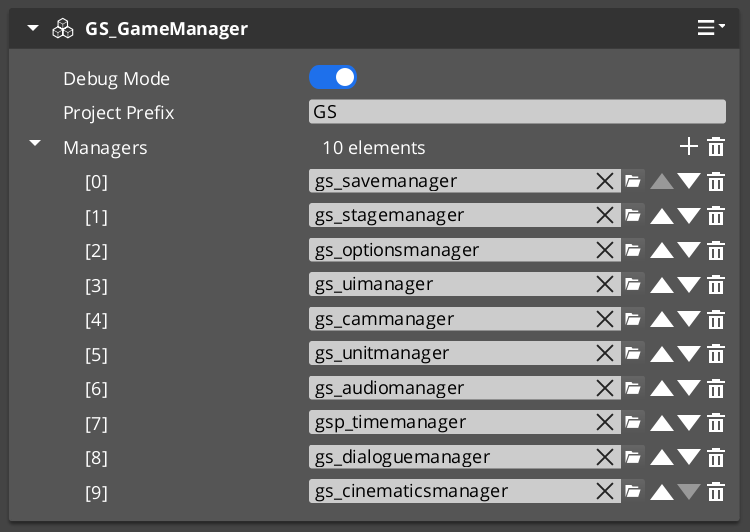

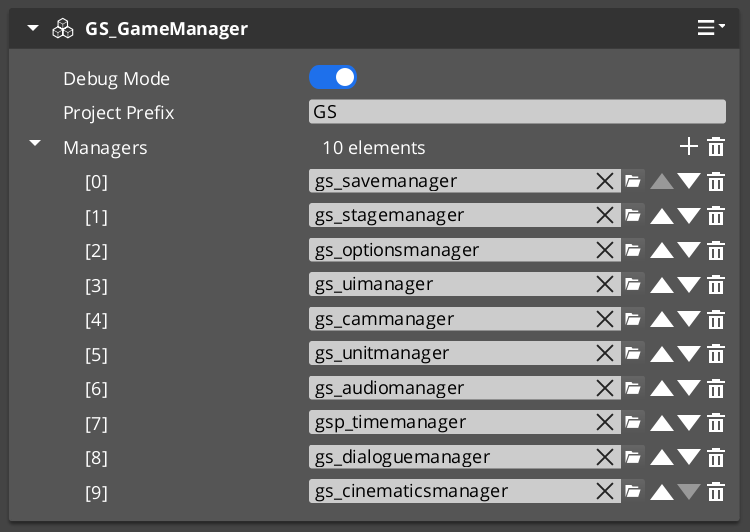

- Create a Game Manager prefab and place it in every level.

- Create prefabs of any managers you wish to use in your project.

- Add all the manager prefabs to your Game Manager's Managers list.

- Implement a way to activate “Begin Game”, using one of the following:

  - Create a UI that fires New Game or Load Game.

  - Create a script that fires New Game or Load Game on OnStartupComplete.

  - Toggle “Debug Mode” on. This skips the Begin Game process.

GS_Managers

Controls the game startup lifecycle — spawning and initializing all manager systems in a guaranteed order, then providing top-level game navigation: New Game, Continue, Load Game, Return to Title, and Quit. The starting point for any game-wide behavior.


GS_Save

Handles all save and load operations, including entity state persistence across level loads and simple key-value record tracking for global flags and counters.


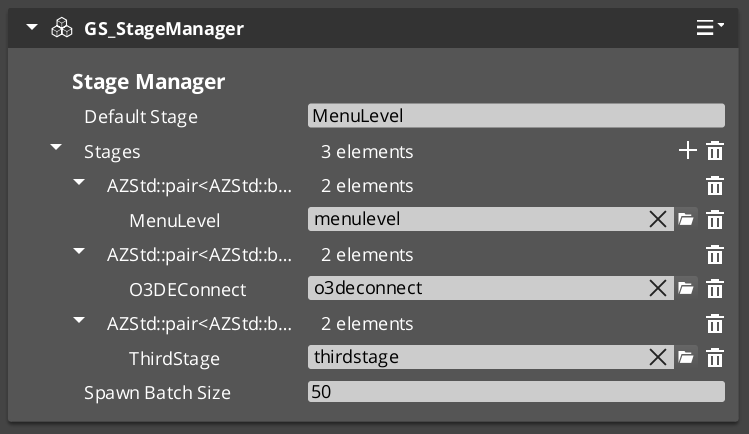

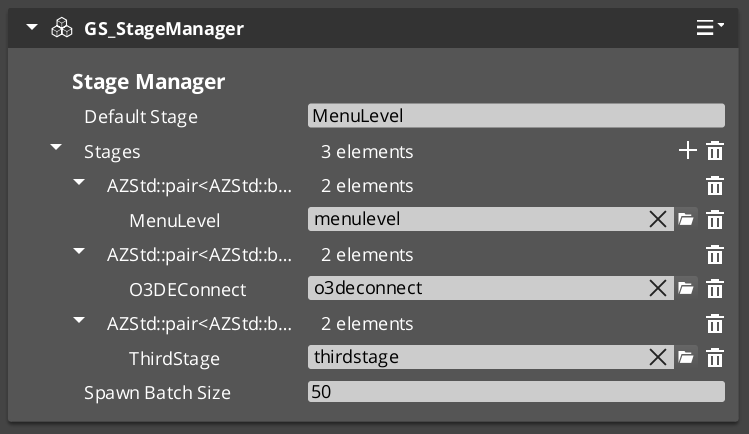

GS_StageManager

Manages level loading and navigation. Place named Exit Points in your levels to control where the player arrives, and use Stage Data components to configure per-level settings like NavMesh references and spawn configuration.


GS_Options

Manages player input through swappable Input Profile assets and Input Reader components, with group-level enable/disable for suppressing input during menus, cutscenes, or transitions.


Systems

Core framework systems used across multiple gems: the GS_Actions triggerable behavior system and the GS_Motion track-based animation engine.


Utilities

A collection of shared tools: easing curves (40+ types), spring dampers for smooth value following, weighted random selection, color and float gradients, spline helpers, and Physics Trigger Volume components.


See Also

For the full API, component properties, and C++ extension guide:

For step-by-step project setup:

Get GS_Core

GS_Core — Explore this gem on the product page and add it to your project.

1.1 - Managers System

How to work with the GS_Play manager system — startup events, game navigation, and standby mode from scripts.

The Managers system is how GS_Play starts your game. The Game Manager spawns all other managers in a guaranteed order, coordinates their initialization stages, and then broadcasts events that signal when each stage is complete and when the game is fully ready to run.

This lets you rely on a predictable startup order, create your own managers of any type, and toggle full-game standby.

For architecture details, component properties, and extending the system in C++, see the GS_Managers API.


Startup Sequence

Breakdown

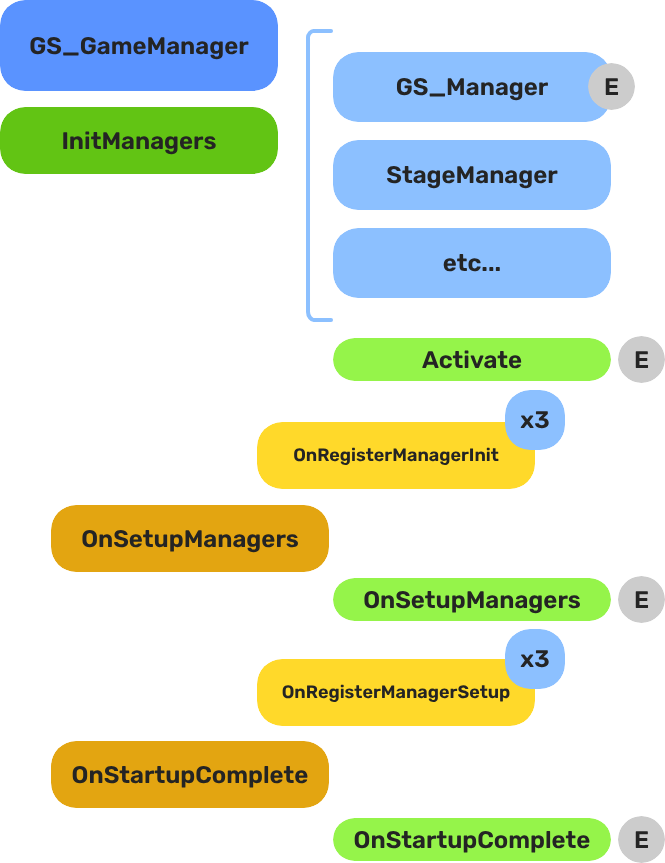

When the project starts, the Game Manager runs three stages before the game is considered ready:

| Stage | Broadcast Event | What It Means |
|---|---|---|
| 1 - Initialize | (internal) | Each manager is spawned. They activate, then report ready. |
| 2 - Setup | OnSetupManagers | Setup stage. Now safe to query other managers. |
| 3 - Complete | OnStartupComplete | Last stage. Everything is ready. Do any last-minute things. Now safe to begin gameplay. |

For most scripts, you only need OnStartupComplete. Wait for this event before doing anything that depends on the managers being fully set up.
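The stage ordering can be sketched as a small standalone Python model. This is not the GS_Play API: `GameManagerModel`, `connect`, and `run_startup` are hypothetical names, used only to illustrate that a handler for OnStartupComplete runs after every manager has initialized and OnSetupManagers has been broadcast.

```python
from typing import Callable, Dict, List


class GameManagerModel:
    """Toy stand-in for the Game Manager (hypothetical, not the real component)."""

    def __init__(self, manager_names: List[str]) -> None:
        self.manager_names = manager_names
        self.started = False
        self.listeners: Dict[str, List[Callable[[], None]]] = {
            "OnSetupManagers": [],
            "OnStartupComplete": [],
        }

    def connect(self, event: str, handler: Callable[[], None]) -> None:
        self.listeners[event].append(handler)

    def _broadcast(self, event: str) -> None:
        for handler in self.listeners[event]:
            handler()

    def run_startup(self) -> List[str]:
        log: List[str] = []
        # Stage 1 (internal): each manager is spawned and reports ready.
        for name in self.manager_names:
            log.append(f"initialize:{name}")
        # Stage 2: managers may now query one another.
        log.append("OnSetupManagers")
        self._broadcast("OnSetupManagers")
        # Stage 3: everything is ready, gameplay may begin.
        self.started = True
        log.append("OnStartupComplete")
        self._broadcast("OnStartupComplete")
        return log


events: List[str] = []
gm = GameManagerModel(["SaveManager", "StageManager"])
gm.connect("OnStartupComplete", lambda: events.append("gameplay script ready"))
startup_log = gm.run_startup()
```

In this model the gameplay handler cannot observe a half-initialized manager, which is the guarantee the three stages are meant to provide.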

E Indicates extensible classes and methods.

Patterns - Complete list of system patterns used in GS_Play.

Responding to Startup

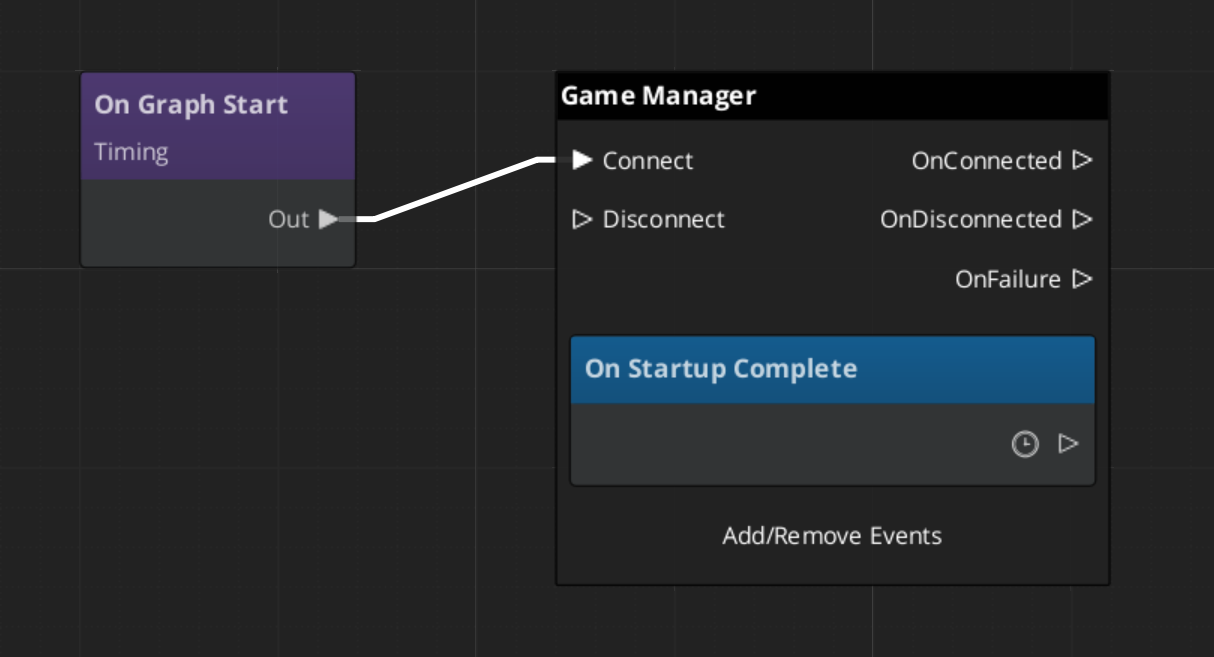

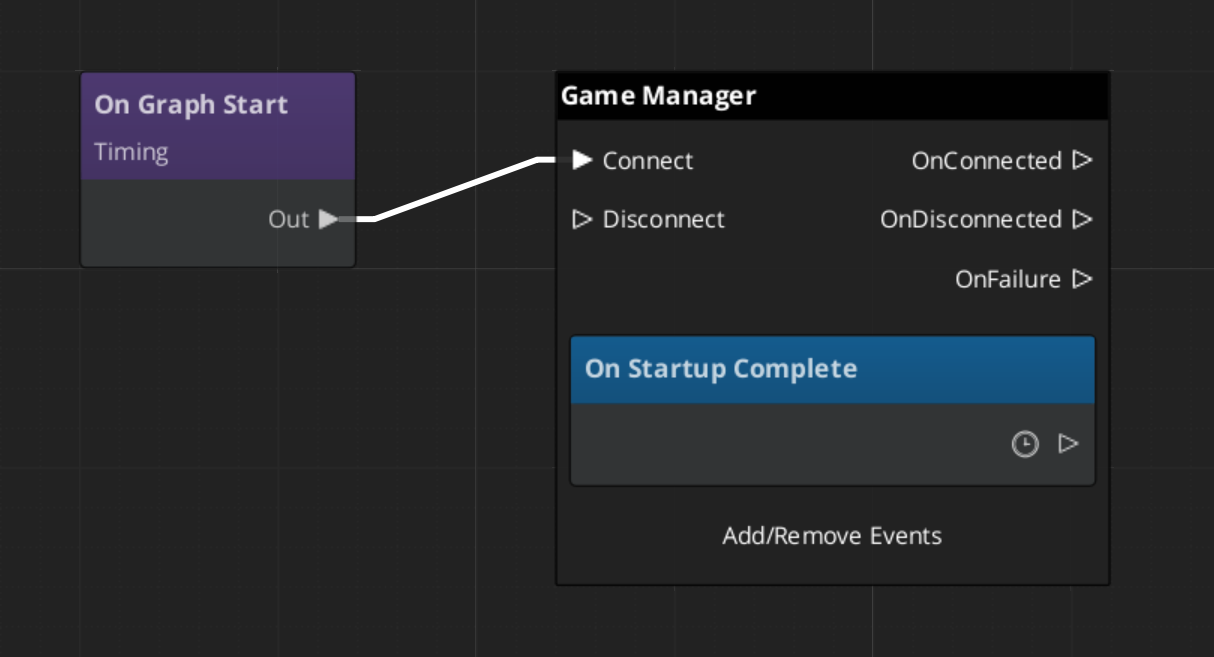

ScriptCanvas

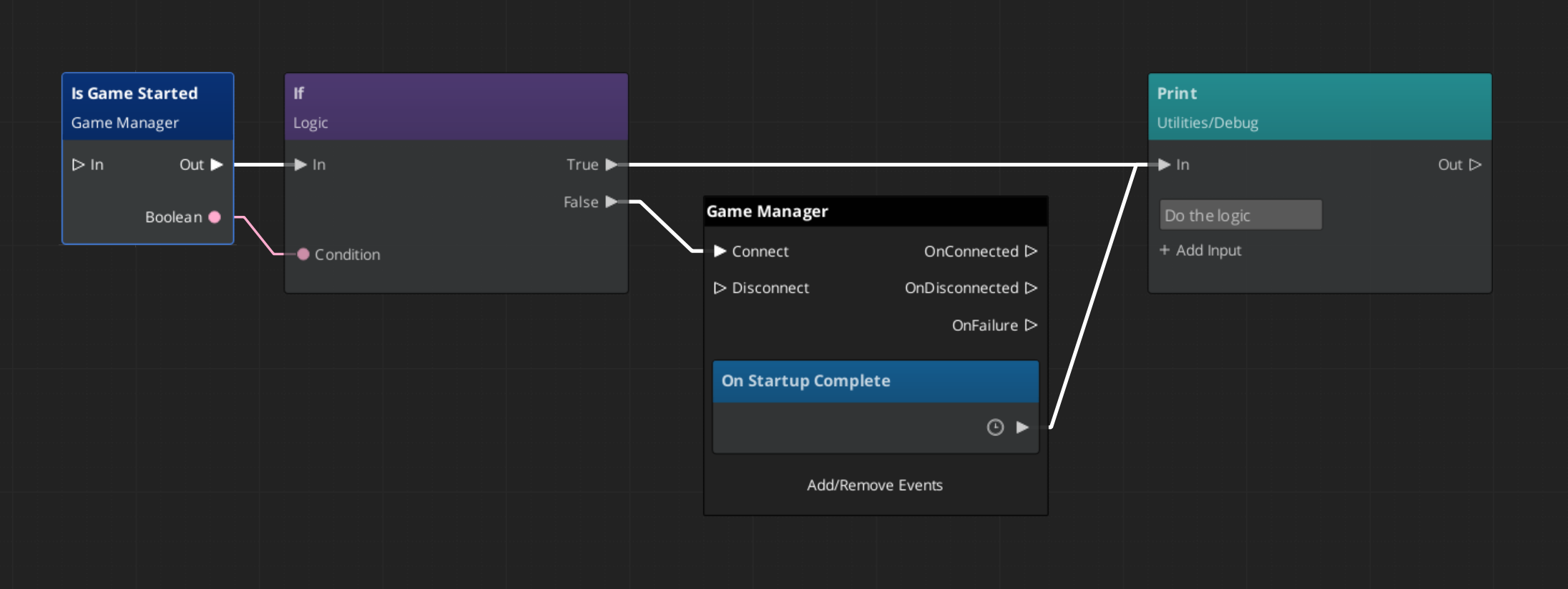

Connect to GameManagerNotificationBus and handle OnStartupComplete to know when the game is fully ready:

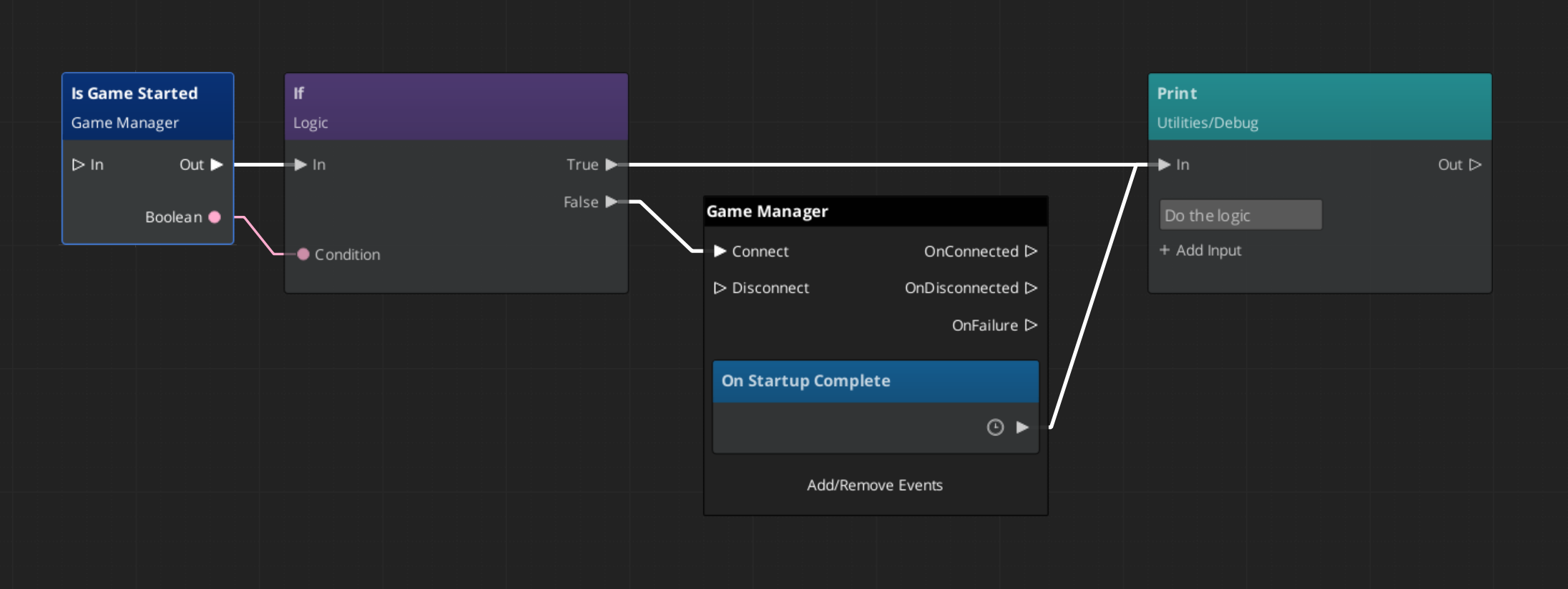

To check at any point whether the game has already finished starting, use the IsStarted request:
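A script that activates after startup has already finished would never receive OnStartupComplete, which is why the IsStarted check matters. The following is a minimal Python sketch of that "check, otherwise wait" pattern. `StartupState` and `run_when_started` are hypothetical helpers, not GS_Play names.

```python
from typing import Callable, List


class StartupState:
    """Hypothetical stand-in for the IsStarted request plus OnStartupComplete."""

    def __init__(self) -> None:
        self.is_started = False
        self._waiting: List[Callable[[], None]] = []

    def on_startup_complete(self, handler: Callable[[], None]) -> None:
        self._waiting.append(handler)

    def complete_startup(self) -> None:
        self.is_started = True
        for handler in self._waiting:
            handler()
        self._waiting.clear()


def run_when_started(state: StartupState, action: Callable[[], None]) -> None:
    # If startup already finished, act now; otherwise defer until the event.
    if state.is_started:
        action()
    else:
        state.on_startup_complete(action)


calls: List[str] = []
state = StartupState()
run_when_started(state, lambda: calls.append("early"))  # deferred until startup
state.complete_startup()
run_when_started(state, lambda: calls.append("late"))   # runs immediately
```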

Game Navigation

The Game Manager owns the top-level game flow. Call these from title screens, pause menus, and end-game sequences. They coordinate the Save Manager and Stage Manager automatically.

| ScriptCanvas Node | What It Does |
|---|---|
| TriggerNewGame | Starts a new game with the default save name. |
| TriggerNewGameWithName(saveName) | Starts a new game and writes to a named save file. |
| TriggerContinueGame | Loads the most recent save and continues from it. |
| TriggerLoadGame(saveName) | Loads a specific save file by name. |
| TriggerReturnToTitle | Returns to the title stage, tearing down the current session. |
| TriggerSaveAndExitGame | Saves the current state and exits the application. |
| TriggerExitGame | Exits the application without saving. |

Standby Mode

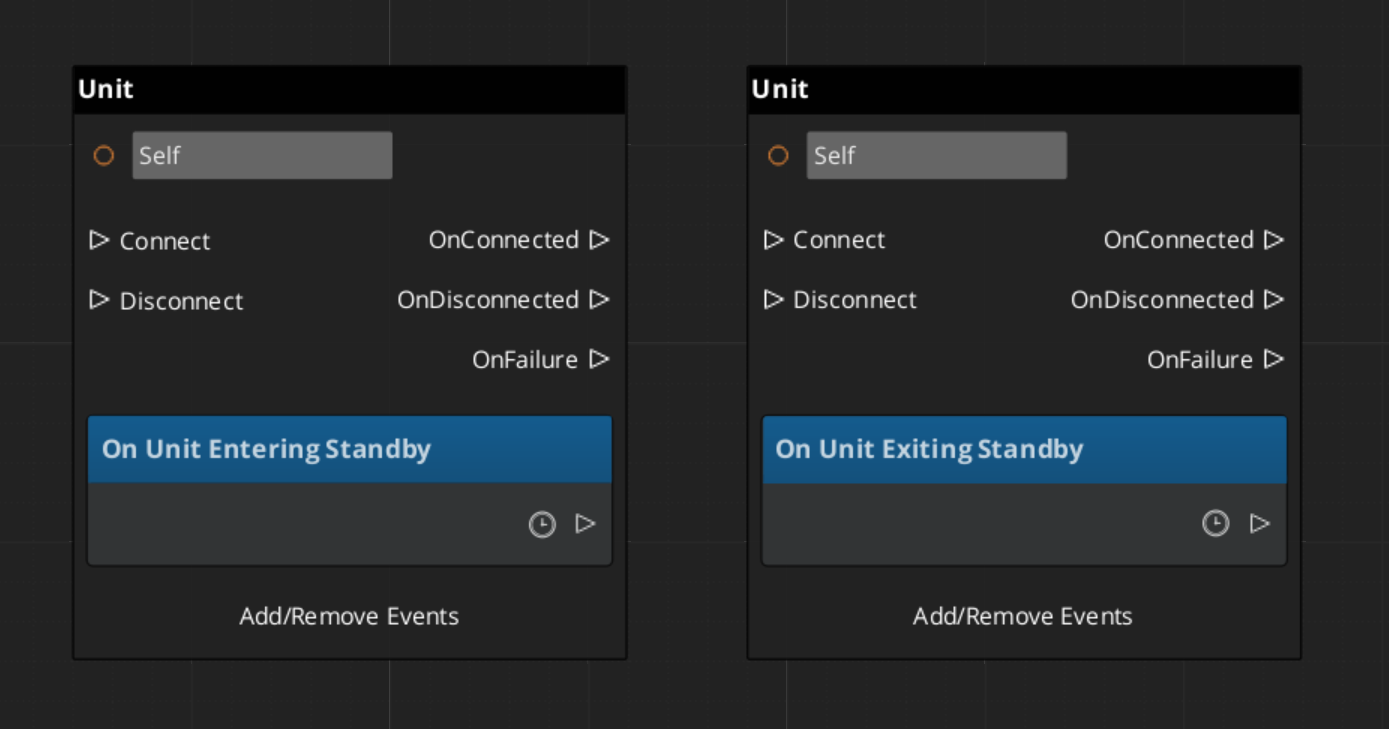

Standby is a global pause. The Game Manager enters standby automatically during level transitions and other blocking operations. It broadcasts OnEnterStandby to halt all gameplay systems, and OnExitStandby when the operation completes.

Listen to these in any script that drives continuous logic — timers, ticks, or animation sequences:

| Event | What to Do |
|---|---|
| OnEnterStandby | Pause timers, halt ticks, stop animations. |
| OnExitStandby | Resume timers, re-enable ticks. |

Both events are on GameManagerNotificationBus.
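As an illustration of what "pause timers" means in practice, here is a minimal Python sketch of a countdown that ignores ticks while in standby. `PausableTimer` and its method names are hypothetical. In a real project the two handlers would be wired to OnEnterStandby and OnExitStandby.

```python
class PausableTimer:
    """Toy countdown that stops advancing while standby is active."""

    def __init__(self, duration: float) -> None:
        self.remaining = duration
        self.paused = False
        self.fired = False

    def on_enter_standby(self) -> None:
        self.paused = True

    def on_exit_standby(self) -> None:
        self.paused = False

    def tick(self, dt: float) -> None:
        # Ticks that arrive during standby are ignored, so no time is lost.
        if self.paused or self.fired:
            return
        self.remaining -= dt
        if self.remaining <= 0.0:
            self.fired = True


timer = PausableTimer(1.0)
timer.tick(0.5)
timer.on_enter_standby()
timer.tick(5.0)  # a long level load happens here
remaining_during_standby = timer.remaining
timer.on_exit_standby()
timer.tick(0.5)
```

Without the standby handlers, the five-second load would have expired the timer before the player regained control.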

Debug Mode

When Debug Mode is enabled on the Game Manager component in the editor, the game starts in the current level instead of navigating to your title stage. This allows rapid iteration on any level without going through the full boot flow.

Debug Mode only changes startup navigation. All manager initialization and event broadcasting proceed normally.

Quick Reference

| Need | Bus | Method / Event |
|---|---|---|
| Know when startup is complete | GameManagerNotificationBus | OnStartupComplete |
| Check if game has started | GameManagerRequestBus | IsStarted |
| Start a new game | GameManagerRequestBus | NewGame / TriggerNewGame (SC) |
| Continue from last save | GameManagerRequestBus | ContinueGame / TriggerContinueGame (SC) |
| Load a specific save | GameManagerRequestBus | LoadGame / TriggerLoadGame (SC) |
| Return to title | GameManagerRequestBus | ReturnToTitle / TriggerReturnToTitle (SC) |
| Pause all systems | GameManagerRequestBus | EnterStandby |
| Resume all systems | GameManagerRequestBus | ExitStandby |
| Know when standby changes | GameManagerNotificationBus | OnEnterStandby / OnExitStandby |

Glossary

| Term | Meaning |
|---|---|
| Standby | Global pause broadcast to all managers and their subsystems |
| Startup Sequence | The three-stage lifecycle (Initialize → SetupManagers → StartupComplete) before gameplay is ready |
| Manager | A component that extends GS_ManagerComponent and registers with the Game Manager |
| Debug Mode | Starts the game in the current editor level instead of navigating to the title stage |

For full definitions, see the Glossary.

See Also

For the full API, component properties, and C++ extension guide:

For step-by-step project setup:


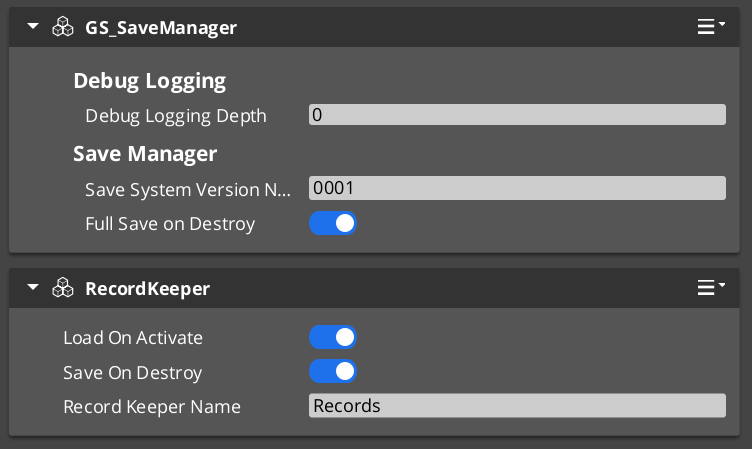

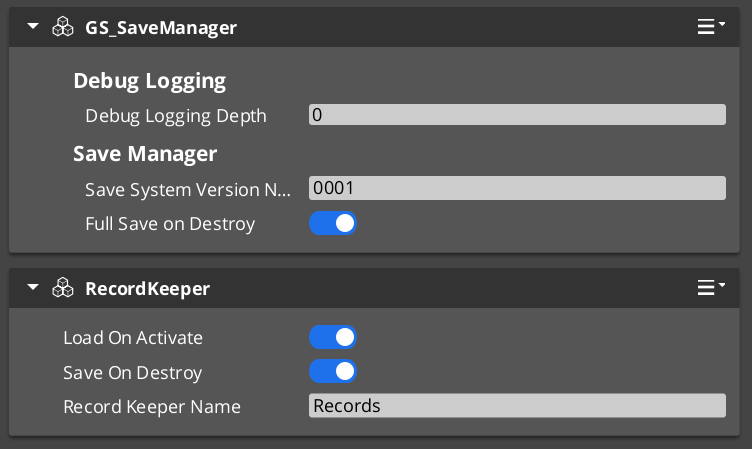

1.2 - Save System

How to work with the GS_Play save system — saving game state, loading saves, and tracking progression with the Record Keeper.

The Save system handles all persistence in a GS_Play project. The Save Manager coordinates file operations, Savers serialize per-entity state, and the Record Keeper tracks flat progression data. Together they give you a complete save/load pipeline that works out of the box and extends cleanly for custom data.

For architecture details, component properties, and extending the system in C++, see the Framework API reference.


How Saving Works

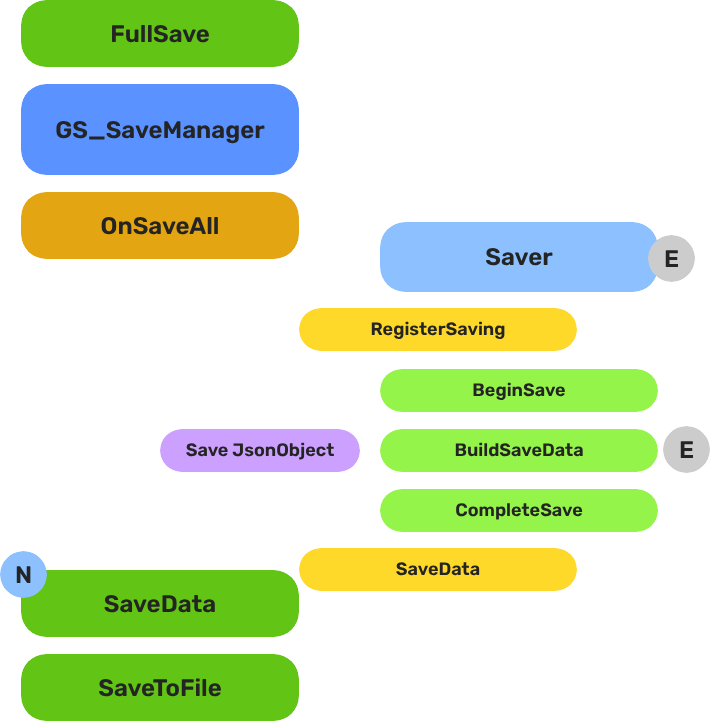

Breakdown

When a save is triggered, the Save Manager broadcasts OnSaveAll to every Saver component in the scene. Each Saver serializes its entity’s relevant state into the save file. When loading, the Save Manager broadcasts OnLoadAll, and each Saver restores its entity from the save data.

The Save Manager also maintains a list of all save files with metadata (timestamps, names), so you can present a save/load UI to the player.

| Operation | What Happens |
|---|---|
| New save | Creates a new save file, broadcasts OnSaveAll to all Savers. |
| Load save | Reads save file, broadcasts OnLoadAll to all Savers. |
| Save data | Writes current game state to the active save file. |
| Load data | Reads data from the active save file into memory. |
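The broadcast pattern can be illustrated with a small Python model. The classes below are hypothetical stand-ins and the dictionary "save file" is an assumption for illustration. The real Save Manager and Savers are components whose serialization format is not described here.

```python
from typing import Dict, List, Tuple

Position = Tuple[float, float, float]


class TransformSaver:
    """Toy Saver that persists one entity's position."""

    def __init__(self, entity_name: str, position: Position) -> None:
        self.entity_name = entity_name
        self.position = position

    def on_save_all(self, save_file: Dict[str, dict]) -> None:
        save_file[self.entity_name] = {"position": list(self.position)}

    def on_load_all(self, save_file: Dict[str, dict]) -> None:
        data = save_file.get(self.entity_name)
        if data is not None:
            self.position = tuple(data["position"])


class SaveManagerModel:
    """Toy Save Manager that fans save and load requests out to every Saver."""

    def __init__(self, savers: List[TransformSaver]) -> None:
        self.savers = savers
        self.active_save: Dict[str, dict] = {}

    def save_data(self) -> None:
        for saver in self.savers:
            saver.on_save_all(self.active_save)

    def load_data(self) -> None:
        for saver in self.savers:
            saver.on_load_all(self.active_save)


crate = TransformSaver("Crate", (1.0, 2.0, 0.0))
manager = SaveManagerModel([crate])
manager.save_data()
crate.position = (9.0, 9.0, 9.0)  # the crate gets pushed around after saving
manager.load_data()               # loading restores the saved position
```

The manager never needs to know what each Saver stores, which is what allows custom Savers to be added without changing the save pipeline.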

E Indicates extensible classes and methods.

Patterns - Complete list of system patterns used in GS_Play.

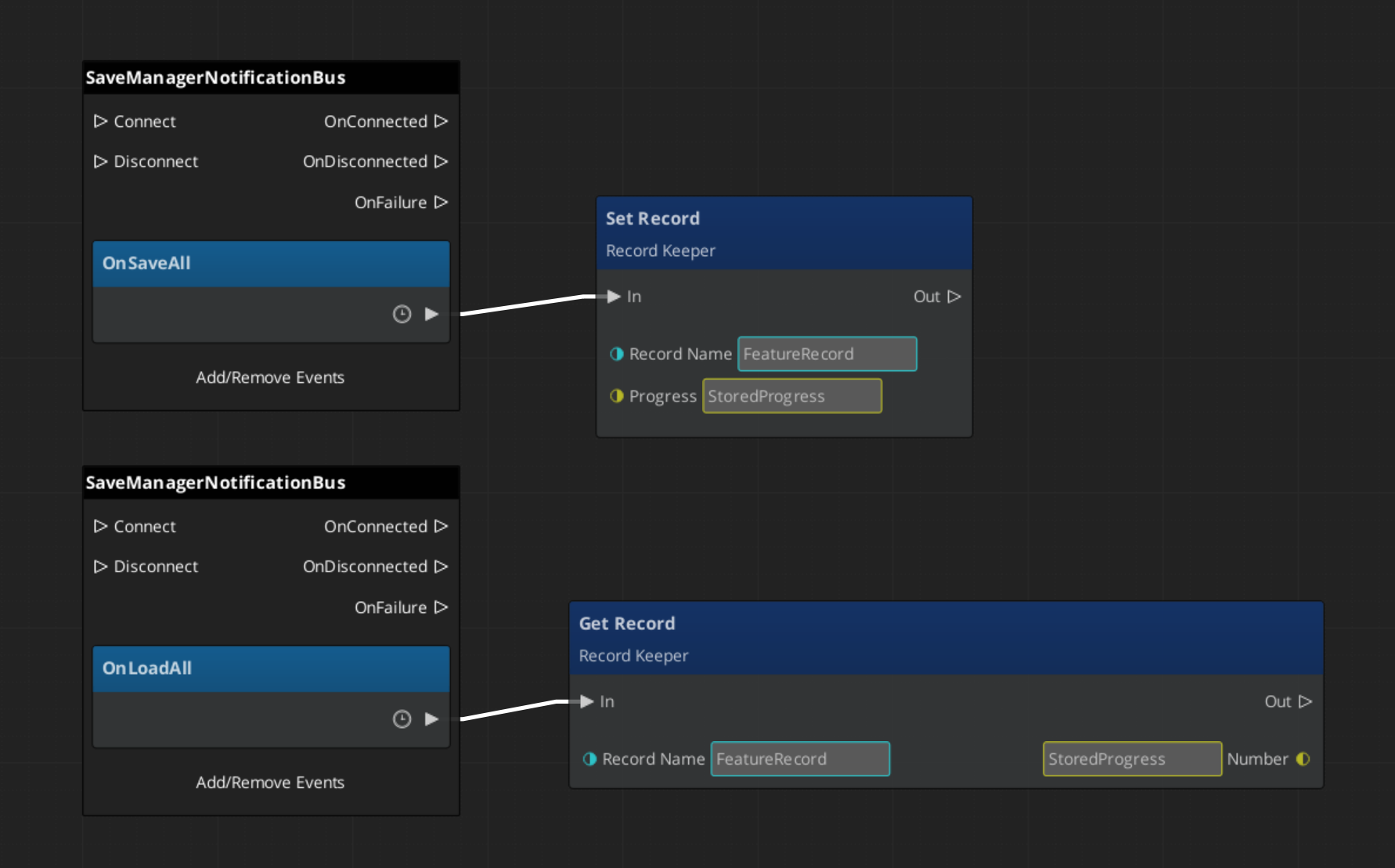

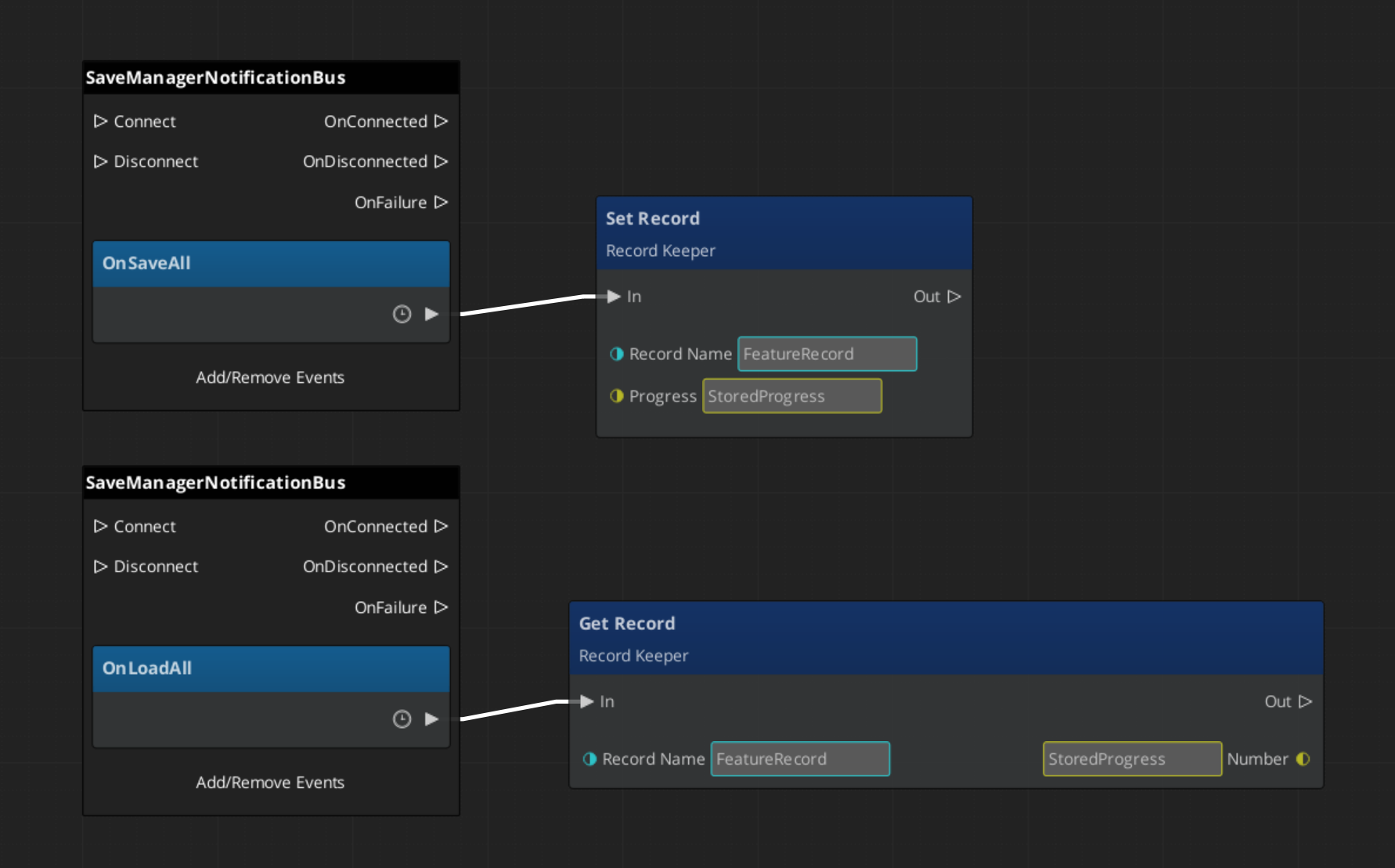

Responding to Save Events

ScriptCanvas

Connect to SaveManagerNotificationBus to know when save or load operations occur:

Triggering Saves and Loads

These methods are available on SaveManagerRequestBus:

| ScriptCanvas Node | What It Does |
|---|---|
| NewGameSave | Creates a fresh save file for a new game. |
| LoadGame(saveName) | Loads a specific save file by name. |
| SaveData | Writes current state to the active save file. |
| LoadData | Reads the active save file into memory. |
| GetOrderedSaveList | Returns all save files sorted by most recent. |
| ConvertEpochToReadable(epoch) | Converts a save file timestamp to a human-readable string. |
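For a save/load menu, the last two nodes are typically used together: list the saves newest first, then format each timestamp for display. The Python sketch below shows the idea under stated assumptions. The entry fields (`name`, `epoch`), the helper names, and the output format are all hypothetical, and timestamps are assumed to be Unix epoch seconds.

```python
from datetime import datetime, timezone
from typing import Dict, List


def ordered_save_list(entries: List[Dict]) -> List[Dict]:
    # Most recent first, mirroring what GetOrderedSaveList is described as returning.
    return sorted(entries, key=lambda entry: entry["epoch"], reverse=True)


def epoch_to_readable(epoch: int) -> str:
    # Format in UTC so the result does not depend on the machine's time zone.
    moment = datetime.fromtimestamp(epoch, tz=timezone.utc)
    return moment.strftime("%Y-%m-%d %H:%M:%S UTC")


saves = [
    {"name": "slot_a", "epoch": 1_700_000_000},
    {"name": "slot_b", "epoch": 1_700_086_400},  # one day later
]
ordered = ordered_save_list(saves)
labels = [f'{s["name"]} ({epoch_to_readable(s["epoch"])})' for s in ordered]
```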

Record Keeper

The Record Keeper is a lightweight key-value store for tracking game-wide progression — quest flags, counters, unlock states, completion markers. It lives on the Save Manager prefab and is automatically persisted with the save system.

Unlike Savers (which are per-entity), the Record Keeper is a global singleton. Any script or component can read and write records by name.

| ScriptCanvas Node | What It Does |
|---|---|
| HasRecord(name) | Returns whether a record with the given name exists. |
| SetRecord(name, value) | Creates or updates a record. Value is a float. |
| GetRecord(name) | Returns the current value of a record. |
| DeleteRecord(name) | Removes a record. |

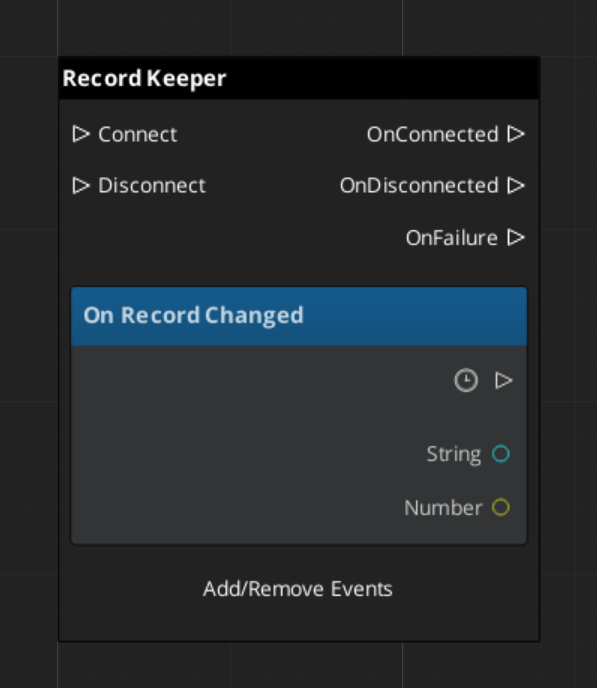

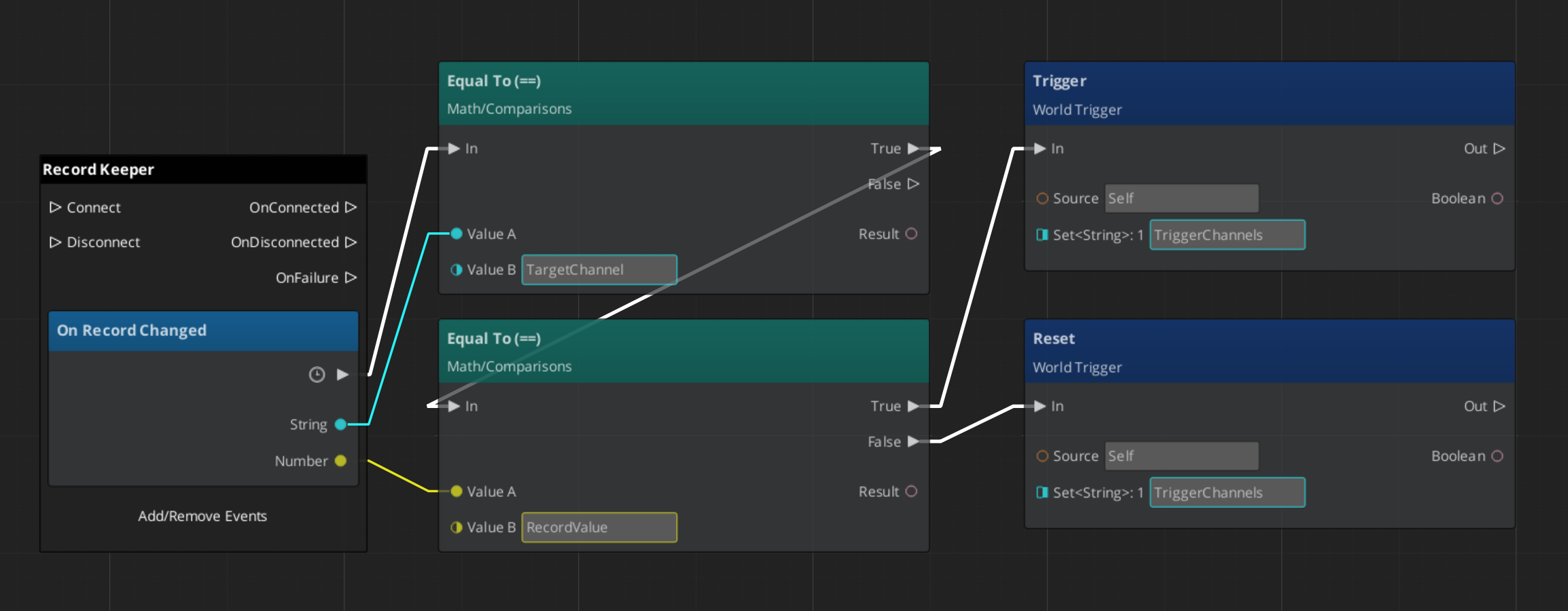

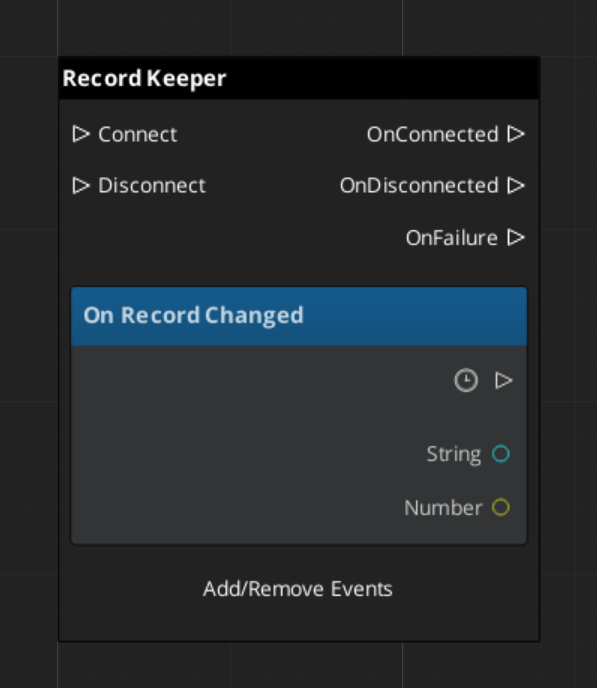

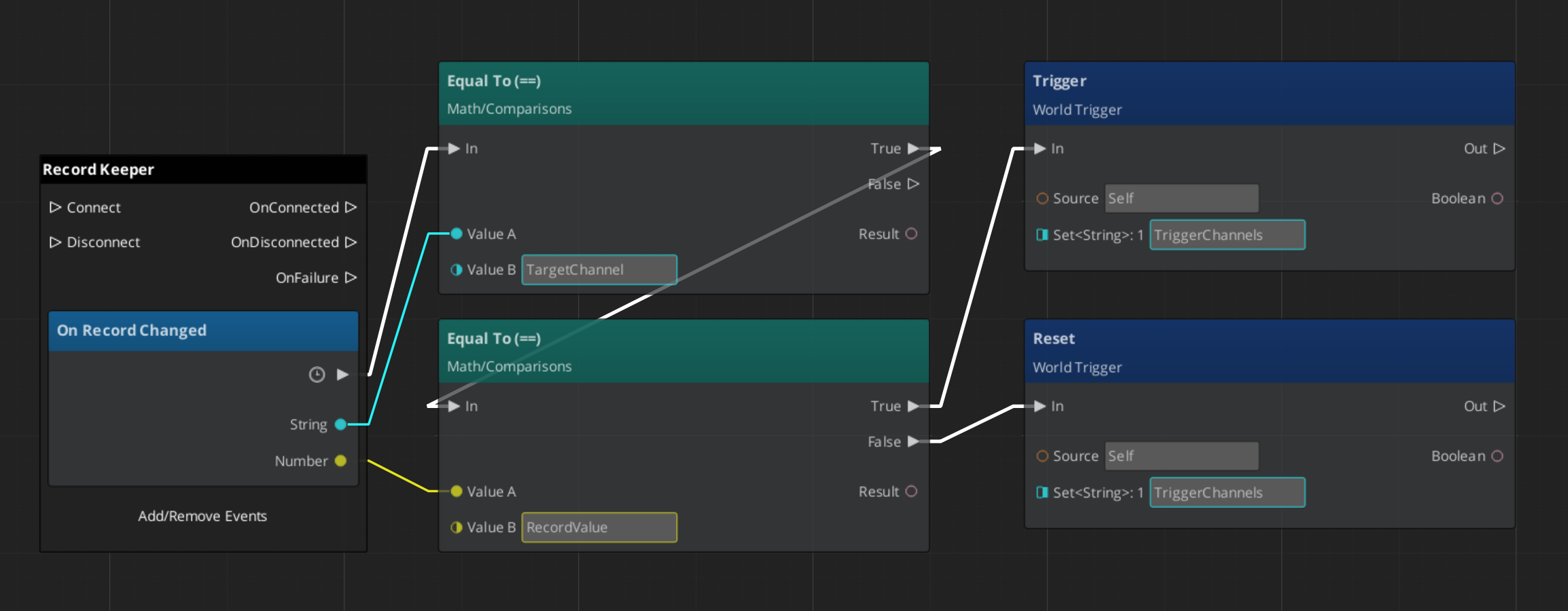

Responding to Record Changes

Listen on RecordKeeperNotificationBus for the RecordChanged event. This fires whenever any record is created, updated, or deleted — useful for UI that displays progression state.
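A minimal Python model of the Record Keeper's behavior, covering the four record operations and the change notification. `RecordKeeperModel` is a hypothetical stand-in. Returning a default of 0.0 for a missing record is an assumption of this sketch, not documented behavior.

```python
from typing import Callable, Dict, List


class RecordKeeperModel:
    """Toy global key-value store with a change notification."""

    def __init__(self) -> None:
        self._records: Dict[str, float] = {}
        self._listeners: List[Callable[[str], None]] = []

    def connect(self, handler: Callable[[str], None]) -> None:
        self._listeners.append(handler)

    def _notify(self, name: str) -> None:
        for handler in self._listeners:
            handler(name)

    def has_record(self, name: str) -> bool:
        return name in self._records

    def set_record(self, name: str, value: float) -> None:
        self._records[name] = float(value)
        self._notify(name)

    def get_record(self, name: str, default: float = 0.0) -> float:
        # Assumption: a missing record reads as the default value.
        return self._records.get(name, default)

    def delete_record(self, name: str) -> None:
        if self._records.pop(name, None) is not None:
            self._notify(name)


changed: List[str] = []
keeper = RecordKeeperModel()
keeper.connect(changed.append)

# A counter: read, add one, write back.
keeper.set_record("coins", keeper.get_record("coins") + 1)
keeper.set_record("coins", keeper.get_record("coins") + 1)

# A flag: 1.0 means set, deletion clears it.
keeper.set_record("met_elder", 1)
keeper.delete_record("met_elder")
```

Because values are floats, flags and counters share one representation: a flag is a record whose presence (or non-zero value) carries the meaning.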

Built-In Savers

Two Savers ship with GS_Core for the most common use cases:

| Saver | What It Saves |
|---|---|
| BasicEntitySaver | Entity transform (position, rotation, scale). |
| BasicPhysicsEntitySaver | Entity transform plus rigidbody velocity and angular velocity. |

Add these components to any entity that needs to persist its position across save/load cycles. They handle serialization and restoration automatically.

Quick Reference

| Need | Bus | Method / Event |
|---|---|---|
| Trigger a save | SaveManagerRequestBus | SaveData |
| Trigger a load | SaveManagerRequestBus | LoadGame(saveName) |
| Create a new save | SaveManagerRequestBus | NewGameSave |
| List all saves | SaveManagerRequestBus | GetOrderedSaveList |
| Know when saving | SaveManagerNotificationBus | OnSaveAll |
| Know when loading | SaveManagerNotificationBus | OnLoadAll |
| Check a progress flag | RecordKeeperRequestBus | HasRecord(name) / GetRecord(name) |
| Set a progress flag | RecordKeeperRequestBus | SetRecord(name, value) |
| Know when a record changes | RecordKeeperNotificationBus | RecordChanged |

Glossary

| Term | Meaning |
|---|---|
| Saver | A component that serializes one entity’s state into the save file |
| Record Keeper | A global key-value store for tracking progression flags and counters |
| Save File | A serialized snapshot of all Saver data plus Record Keeper state |

For full definitions, see the Glossary.

See Also

For the full API, component properties, and C++ extension guide:

For step-by-step project setup:


1.3 - Stage Management

How to work with the GS_Play stage management system — level loading, stage transitions, exit points, and stage data.

The Stage Manager handles all level-to-level navigation in a GS_Play project. It owns the master list of stages, processes transition requests, and coordinates with the Game Manager’s standby mode to ensure clean load/unload cycles. Stage Data components in each level control how that level initializes.

For architecture details, component properties, and extension patterns, see the Framework API reference.


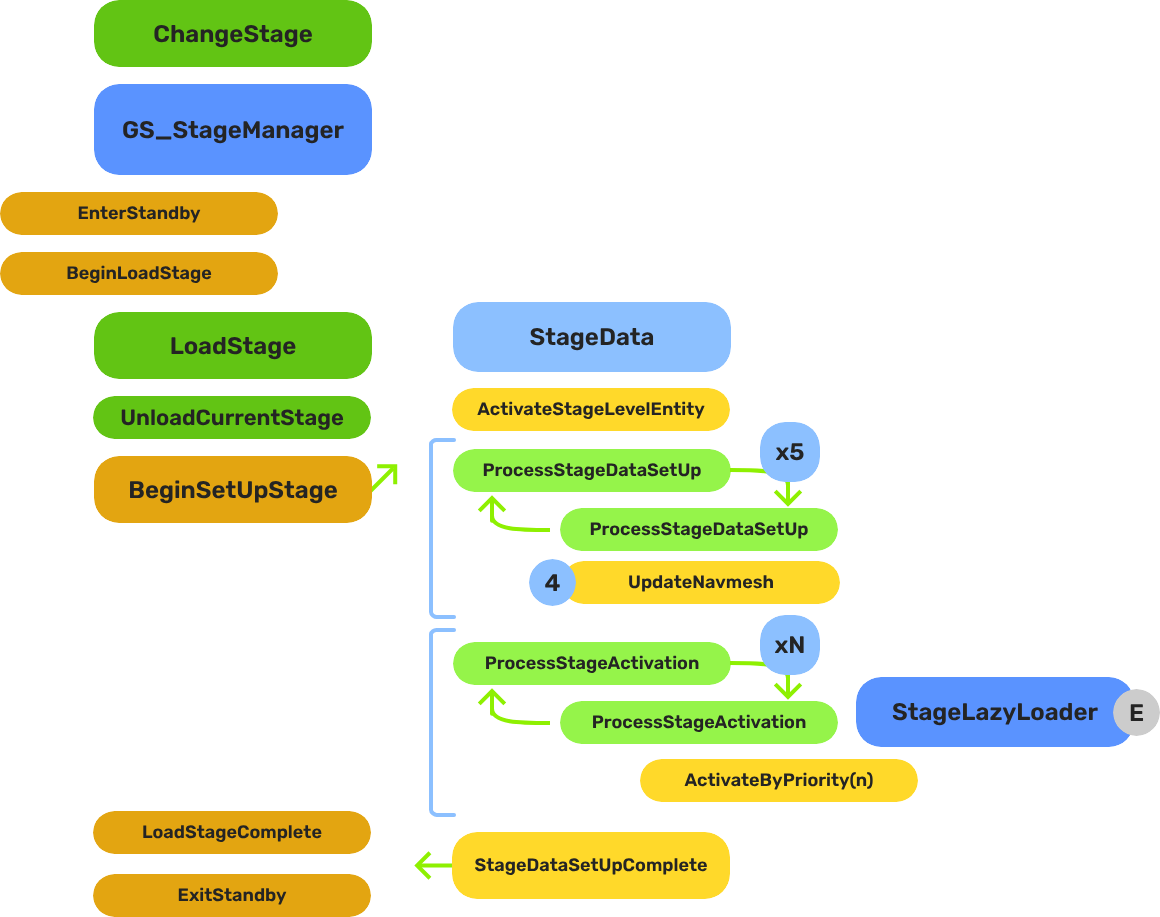

How Stage Transitions Work

Breakdown

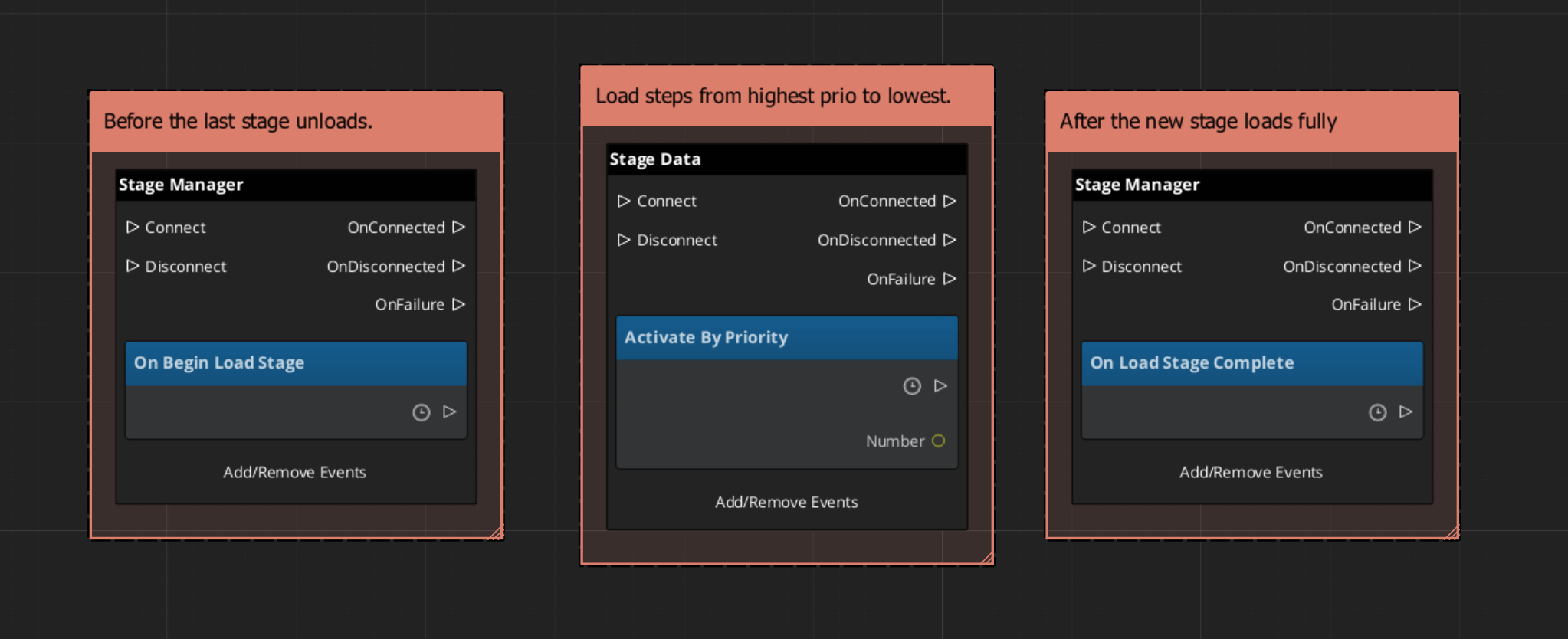

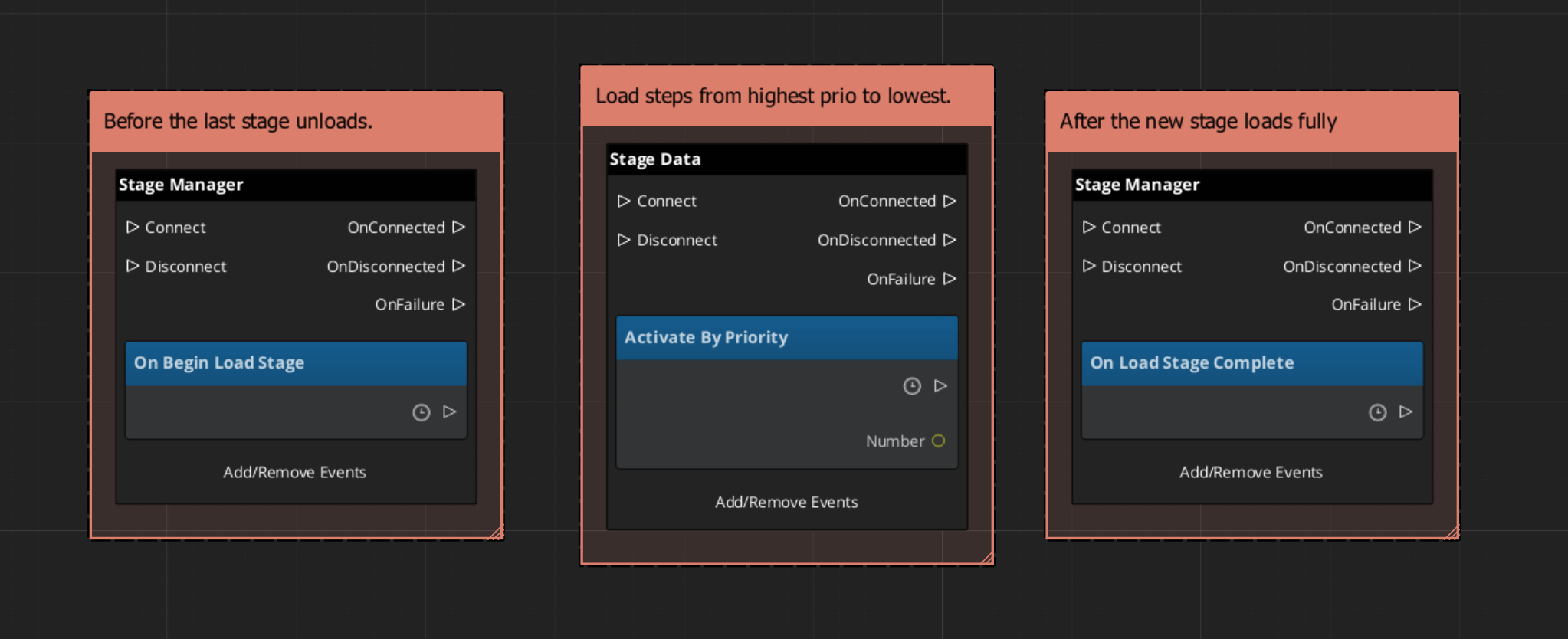

When you request a stage change, the system follows this sequence:

| Step | What Happens |
|---|---|
| 1 - Standby | The Game Manager enters standby, pausing all gameplay systems. |
| 2 - Unload | The current stage’s entities are torn down. |
| 3 - Spawn | The target stage’s prefab is instantiated. |
| 4 - Set Up | The Stage Data component in the new level runs its layered startup sequence. |
| 5 - Complete | The Stage Manager broadcasts LoadStageComplete. Standby exits. |

The Stage Data startup is layered — SetUpStage, ActivateByPriority, then Complete — so heavy levels can initialize incrementally without causing frame-time spikes.
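To make the idea of incremental initialization concrete, here is a Python sketch that activates entities in priority order, a fixed number per frame. Everything specific in it is an assumption for illustration: the function name, the "lower number activates first" rule, and the fixed per-frame batch size are not taken from the GS_Play implementation.

```python
from typing import Iterator, List, Tuple


def activate_by_priority(
    entities: List[Tuple[str, int]], per_frame: int
) -> Iterator[List[str]]:
    """Yield one batch of entity names per frame, lowest priority value first."""
    ordered = sorted(entities, key=lambda entity: entity[1])
    for start in range(0, len(ordered), per_frame):
        yield [name for name, _ in ordered[start:start + per_frame]]


level = [("Props", 2), ("Terrain", 0), ("NPCs", 1), ("VFX", 3), ("Ambience", 3)]
frames = list(activate_by_priority(level, per_frame=2))
```

Spreading activation across frames trades a slightly longer load for a steadier frame time, which is the motivation the text gives for the layered startup.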

E Indicates extensible classes and methods.

Patterns - Complete list of system patterns used in GS_Play.

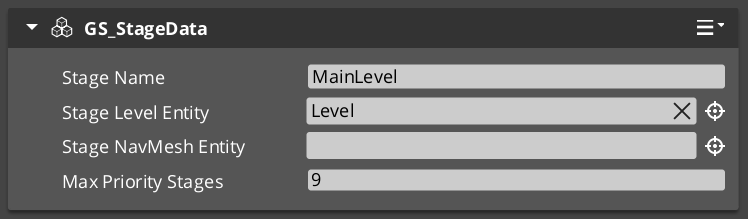

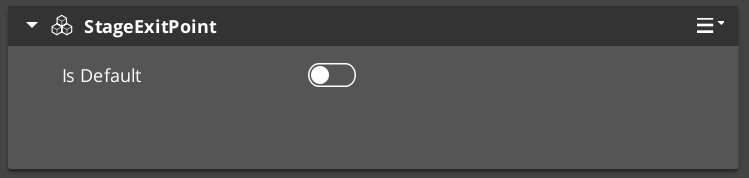

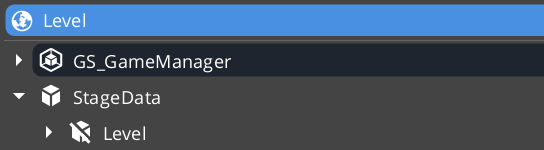

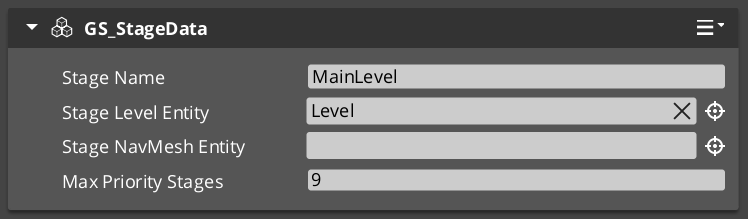

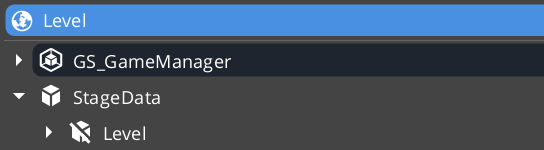

Stage Data

Each level should have a Stage Data component on its root entity. Using a child “Level” entity that is set to start inactive, the Stage Data system controls the initialization sequence for the new level. Stage Data also holds level-specific configuration and scripts.

| Event | What It Means |
|---|---|
| OnBeginSetUpStage | The level is starting its setup. Initialize per-level systems. |
| ActivateByPriority | Activate heavy entities in priority order (lazy loading). |
| OnLoadStageComplete | The level is fully loaded and ready. |
| OnTearDownStage | The level is being unloaded. Clean up per-level state. |

Listen to these on StageDataNotificationBus in any script that needs to react to level lifecycle.

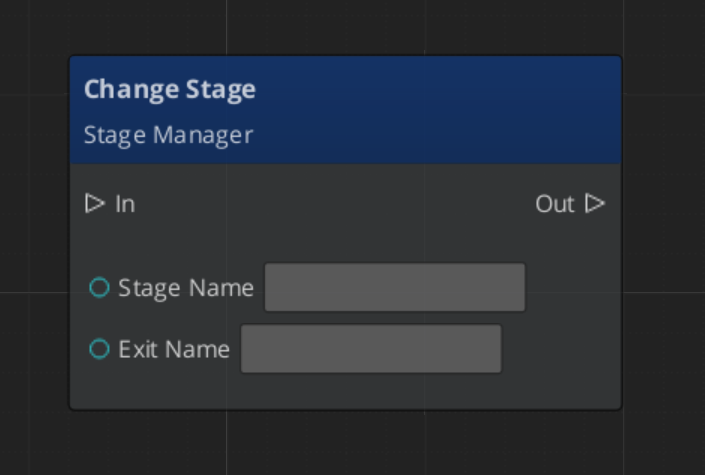

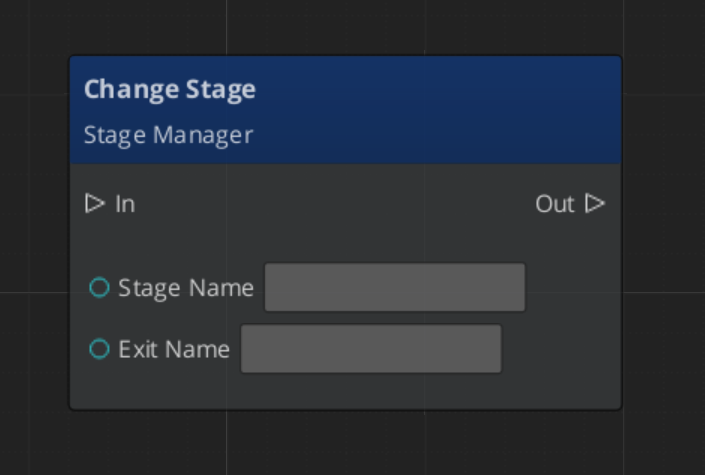

Triggering Stage Changes

ScriptCanvas

The exitPointName parameter is optional. If provided, the system will position the player at the named exit point in the target level.

Exit Points

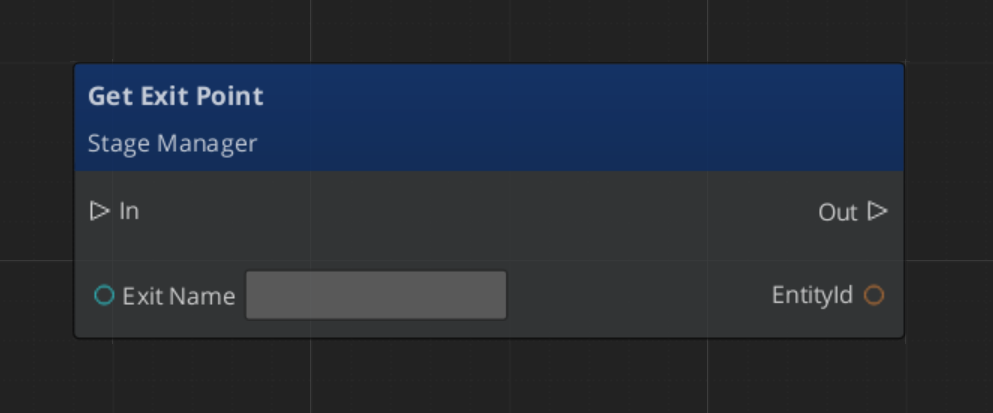

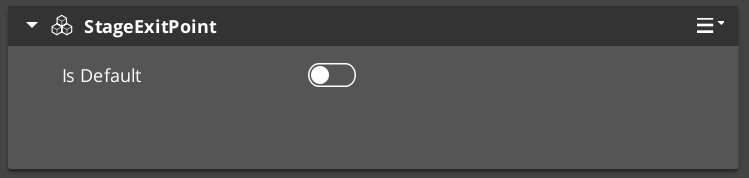

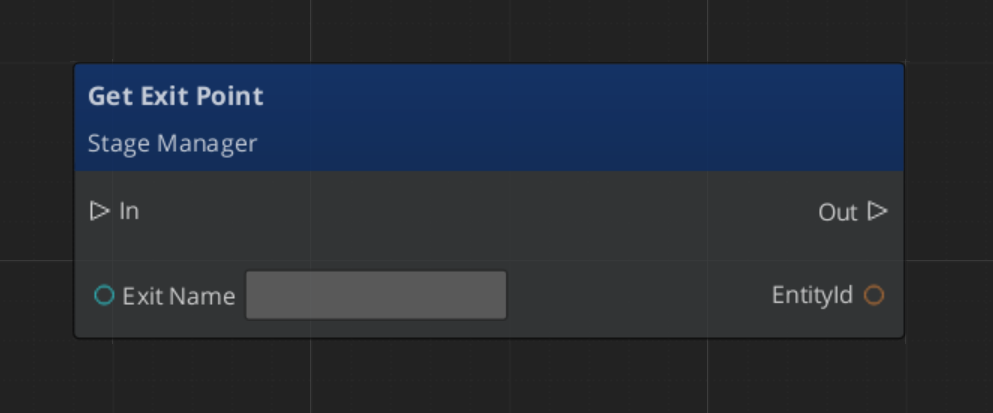

Exit Points are named position markers placed in a level. They define where entities spawn when arriving from another stage. A door in Level A can specify that when transitioning to Level B, the player should appear at Exit Point “DoorB_Entry”.

Exit Points are registered and unregistered with the Stage Manager automatically when they activate and deactivate.

| ScriptCanvas Node | What It Does |
|---|---|
| ChangeStageRequest(stageName, exitPoint) | Transitions to a stage and positions at the named exit point. |
| RegisterExitPoint(name, entity) | Manually registers an exit point (usually automatic). |
| UnregisterExitPoint(name) | Manually unregisters an exit point. |
| GetExitPoint(name) | Returns the entity ID of a registered exit point. |
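The exit point lookup can be modeled as a name-keyed registry. The Python sketch below is illustrative only: `ExitPointRegistry` and `arrival_position` are hypothetical, positions stand in for entity IDs, and the fallback to a default spawn when no name is given or the name is unknown is an assumption of this sketch.

```python
from typing import Dict, Optional, Tuple

Position = Tuple[float, float, float]


class ExitPointRegistry:
    """Toy registry keyed by exit point name."""

    def __init__(self) -> None:
        self._points: Dict[str, Position] = {}

    def register_exit_point(self, name: str, position: Position) -> None:
        self._points[name] = position

    def unregister_exit_point(self, name: str) -> None:
        self._points.pop(name, None)

    def get_exit_point(self, name: str) -> Optional[Position]:
        return self._points.get(name)


def arrival_position(
    registry: ExitPointRegistry,
    exit_point_name: Optional[str],
    default_spawn: Position,
) -> Position:
    # Assumption: no name, or an unregistered name, falls back to the default.
    if exit_point_name:
        found = registry.get_exit_point(exit_point_name)
        if found is not None:
            return found
    return default_spawn


registry = ExitPointRegistry()
registry.register_exit_point("DoorB_Entry", (4.0, 0.0, 1.5))
origin = (0.0, 0.0, 0.0)

at_door = arrival_position(registry, "DoorB_Entry", origin)
no_name = arrival_position(registry, None, origin)

registry.unregister_exit_point("DoorB_Entry")  # e.g. the marker deactivated
after_unregister = arrival_position(registry, "DoorB_Entry", origin)
```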

Responding to Stage Events

ScriptCanvas

Entity Level Configuration

The Stage Data entity must live outside of the level’s Game Manager prefab, and it is left active.

Inside it is a secondary “wrapper” entity for the level, which you set to “Start Inactive” by default. This lets the Stage Data control exactly when the level begins loading.

Quick Reference

| Need | Bus | Method / Event |
|---|---|---|
| Change to a different level | StageManagerRequestBus | ChangeStageRequest(stageName, exitPoint) |
| Load the default stage | StageManagerRequestBus | LoadDefaultStage |
| Know when a load starts | StageManagerNotificationBus | BeginLoadStage |
| Track loading progress | StageManagerNotificationBus | StageLoadProgress |
| Know when a load finishes | StageManagerNotificationBus | LoadStageComplete |
| React to level setup | StageDataNotificationBus | OnBeginSetUpStage |
| React to level teardown | StageDataNotificationBus | OnTearDownStage |
| Find an exit point | StageManagerRequestBus | GetExitPoint(name) |

Glossary

| Term | Meaning |
|---|---|
| Stage | A spawnable prefab representing a game level or screen |
| Exit Point | A named position marker in a level that defines where entities arrive from another stage |
| Stage Data | A per-level component that controls level initialization and holds level-specific settings |
| Default Stage | The first stage loaded on application start (typically the title screen) |

For full definitions, see the Glossary.

See Also

For the full API, component properties, and C++ extension guide:

For related systems:


1.4 - Options & Input

How to work with the GS_Play options system — input profiles, input groups, and runtime binding management.

The Options system manages player-facing configuration and input handling. Its primary feature is the Input Profile system, which provides group-based input binding management that can be toggled at runtime without code changes.

For architecture details, component properties, and extension patterns, see the Framework API reference.


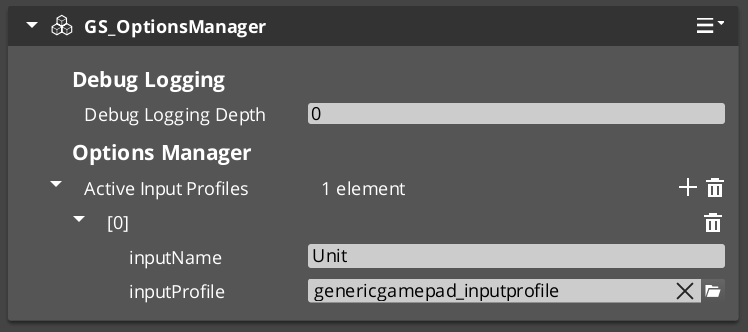

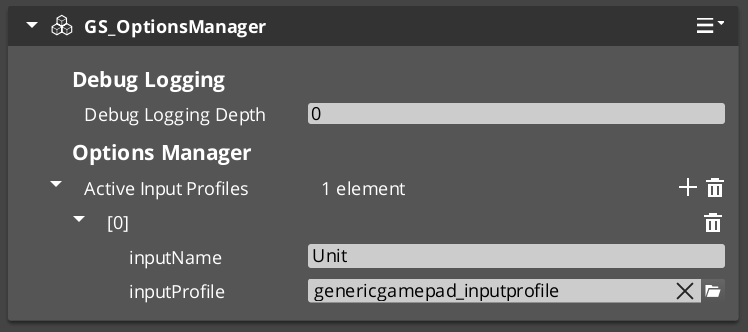

Options Manager

The Options Manager is a singleton that holds the active Input Profile and makes it available to all Input Reader components. It responds to the Game Manager lifecycle automatically.

| ScriptCanvas Node | What It Does |
|---|---|
| GetActiveInputProfile | Returns the currently active Input Profile asset. |

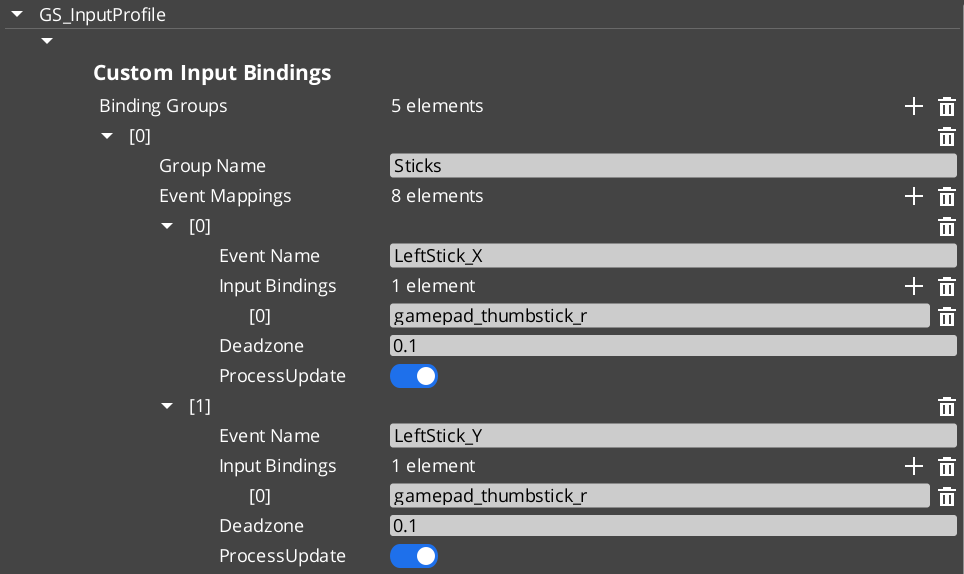

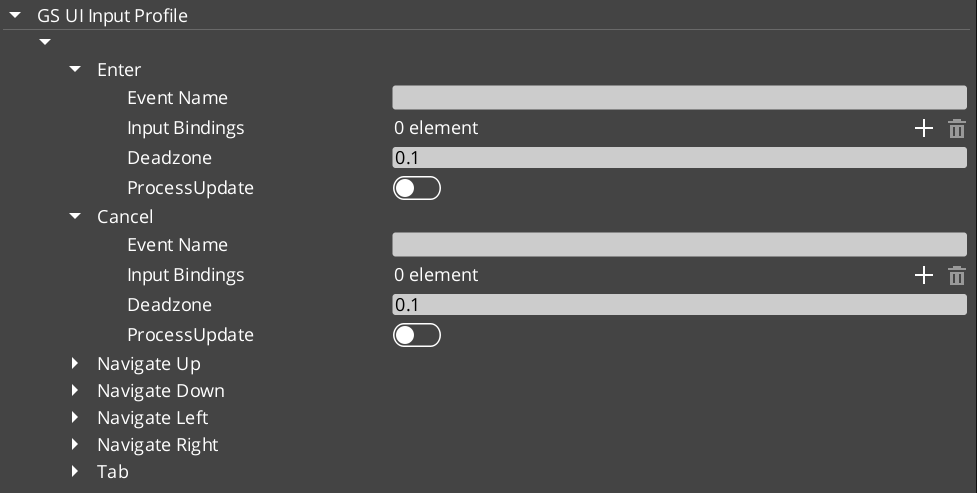

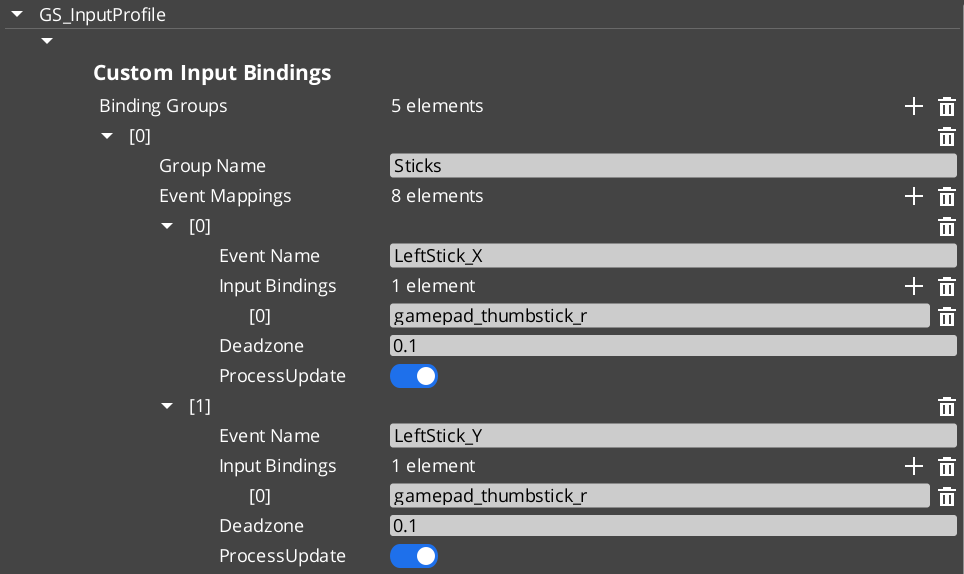

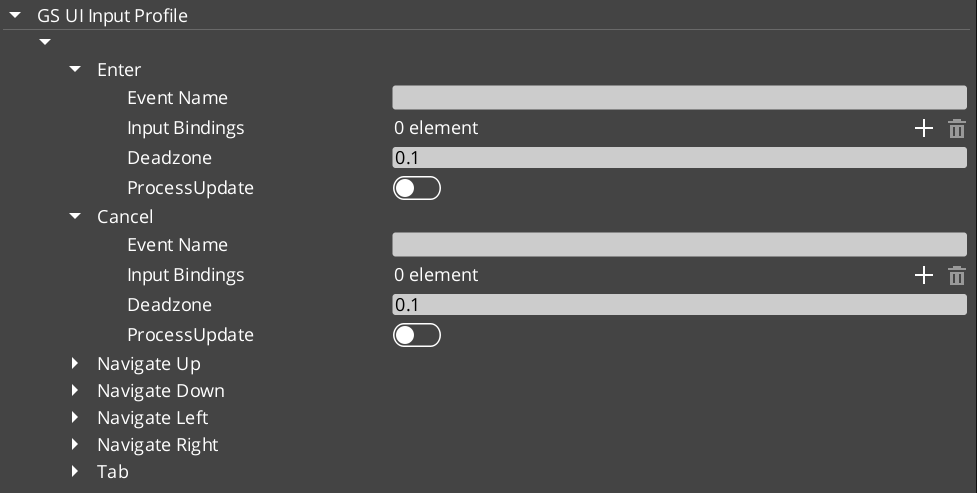

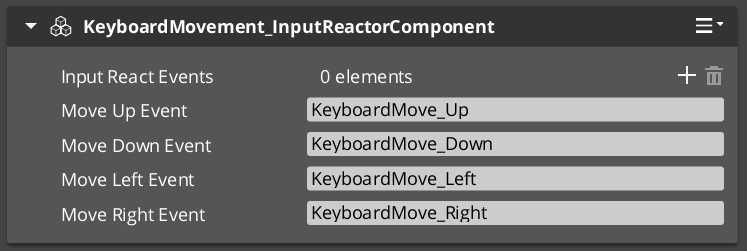

An Input Profile is a data asset created in the O3DE Asset Editor. It contains named input groups, each holding a set of event mappings. Each event mapping binds a gameplay event name to one or more raw input bindings (key presses, axis movements, button presses) with configurable deadzones.

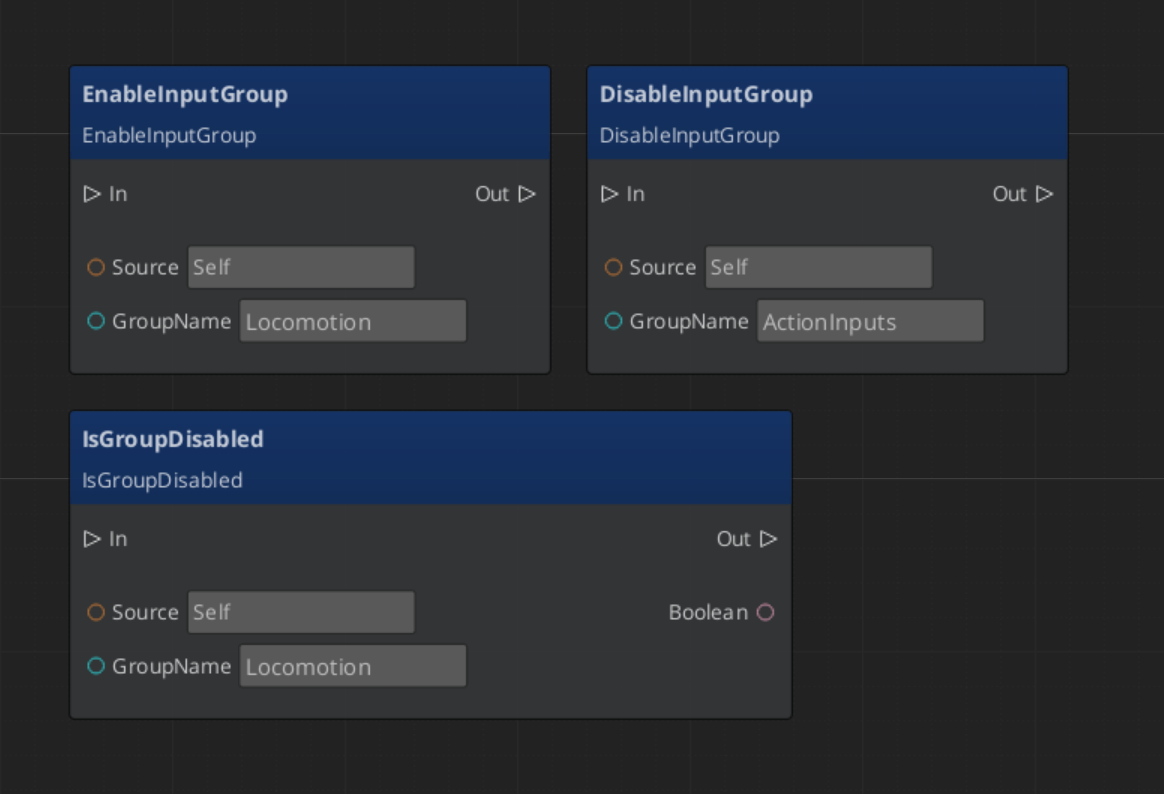

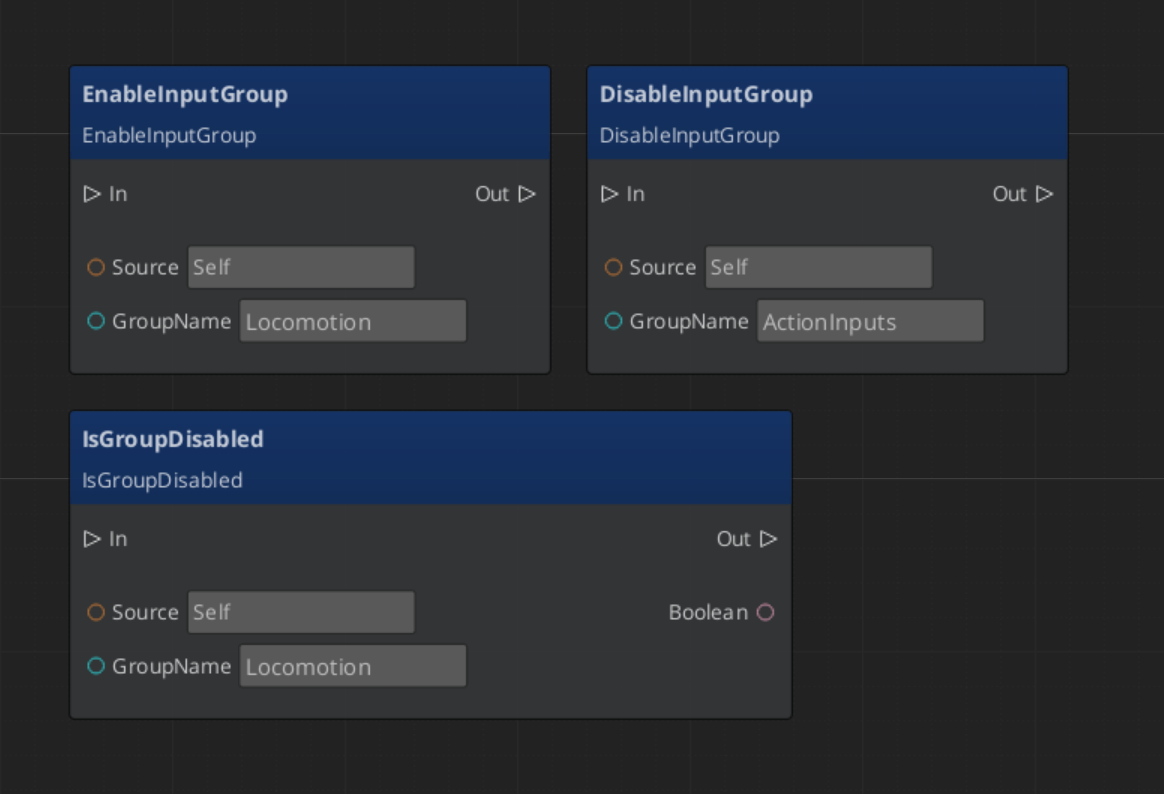

The key advantage over raw O3DE input bindings is the group system. Groups can be enabled and disabled independently at runtime — a pause menu can suppress gameplay input by disabling the “Gameplay” group, without tearing down and rebuilding bindings.

| Stage | What Happens |
|---|---|
| 1 - Raw Input | O3DE’s input system captures key/axis/button events. |
| 2 - Input Reader | The GS_InputReaderComponent on the entity matches raw input against the active Input Profile’s event mappings. |
| 3 - Event Mapping | Matched input fires a named gameplay event (e.g., “Jump”, “MoveForward”). |
| 4 - Consumer | Other components on the entity (controllers, reactors) handle the gameplay event. |

Input Readers filter by group — if a group is disabled, none of its event mappings fire.
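The group filter can be sketched in a few lines of Python. This is a conceptual model, not the component's implementation: `InputReaderModel`, the nested-dictionary profile layout, and the raw input names such as `keyboard_space` are hypothetical.

```python
from typing import Dict, List, Set

# Assumed layout: group name -> {raw input name -> gameplay event name}.
Profile = Dict[str, Dict[str, str]]


class InputReaderModel:
    """Toy Input Reader that maps raw input to events, skipping disabled groups."""

    def __init__(self, profile: Profile) -> None:
        self.profile = profile
        self.disabled: Set[str] = set()

    def disable_input_group(self, group: str) -> None:
        self.disabled.add(group)

    def enable_input_group(self, group: str) -> None:
        self.disabled.discard(group)

    def is_group_disabled(self, group: str) -> bool:
        return group in self.disabled

    def handle_raw_input(self, raw: str) -> List[str]:
        events: List[str] = []
        for group, mappings in self.profile.items():
            if group in self.disabled:
                continue  # the bindings stay intact, they are just skipped
            if raw in mappings:
                events.append(mappings[raw])
        return events


profile: Profile = {
    "Gameplay": {"keyboard_space": "Jump", "keyboard_w": "MoveForward"},
    "Menu": {"keyboard_escape": "TogglePause"},
}
reader = InputReaderModel(profile)

before_pause = reader.handle_raw_input("keyboard_space")
reader.disable_input_group("Gameplay")          # pause menu opens
during_pause = reader.handle_raw_input("keyboard_space")
menu_input = reader.handle_raw_input("keyboard_escape")
reader.enable_input_group("Gameplay")           # pause menu closes
after_pause = reader.handle_raw_input("keyboard_space")
```

Disabling a group only changes a membership test, so toggling it is cheap and the mappings never need to be rebuilt.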

Quick Reference

| Need | Bus | Method / Event |

|---|

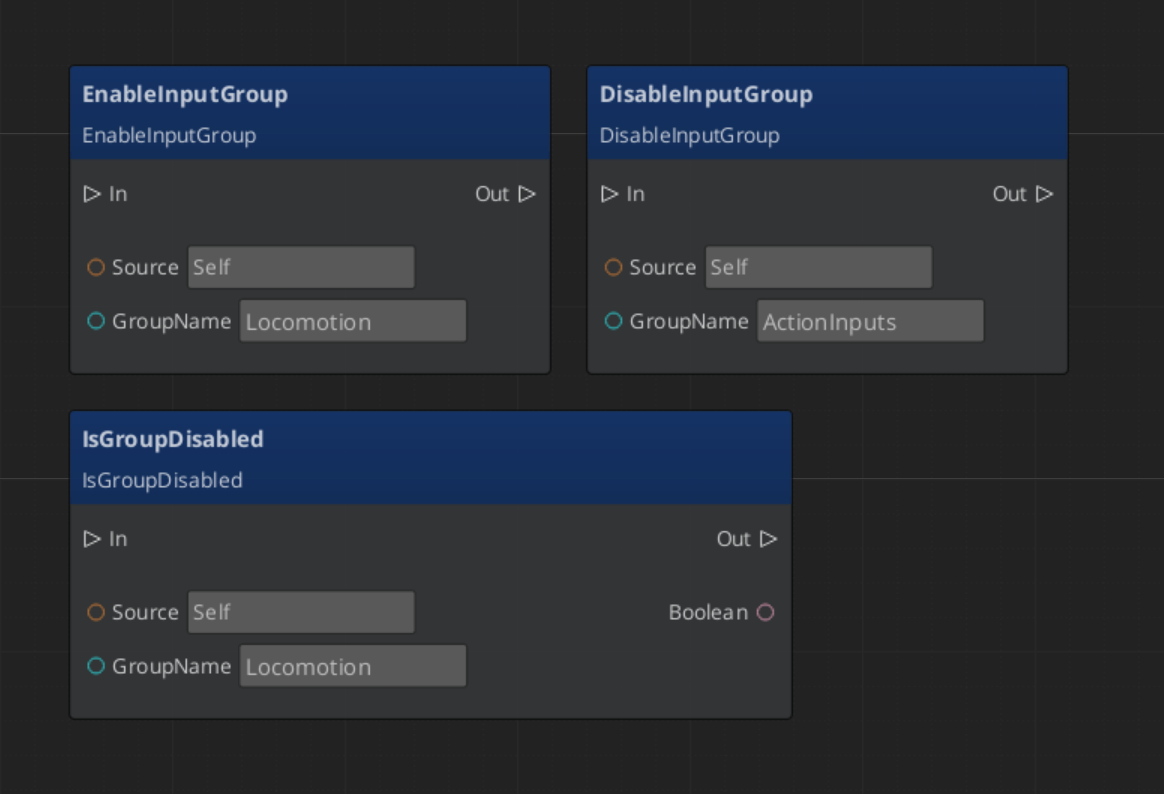

| Disable an input group | InputReaderRequestBus | DisableInputGroup(groupName) |

| Enable an input group | InputReaderRequestBus | EnableInputGroup(groupName) |

| Check if group is disabled | InputReaderRequestBus | IsGroupDisabled(groupName) |

| Get the active profile | OptionsManagerRequestBus | GetActiveInputProfile |

Glossary

| Term | Meaning |
|---|---|
| Input Profile | A data asset containing named input groups with event mappings |
| Input Group | A named collection of event mappings that can be enabled or disabled at runtime |
| Event Mapping | A binding from a gameplay event name to one or more raw input sources |
| Input Reader | A component that matches raw input against the active Input Profile |

For full definitions, see the Glossary.

See Also

For the full API, component properties, and C++ extension guide:


1.5 - Systems

Core framework systems — the GS_Actions triggerable behavior system and the GS_Motion track-based animation engine.

GS_Core provides a growing number of standalone systems that are used across multiple gems. Currently, the GS_Motion track-based animation engine powers UIAnimation and Juice Feedback playback.

For architecture details, component properties, and C++ extension guides, see the Framework API: Systems.


GS_Motion

Provides tween-style Motion Track components for animating transforms, colors, and values over time. Multiple tracks on the same entity run in parallel; chains are configured by setting an On Complete motion name.


See Also

For the full API, component properties, and C++ extension guides:

For related systems:


1.5.1 - Actions System

How to work with the GS_Play action system — triggerable, composable behaviors that fire from scripts, triggers, or code.

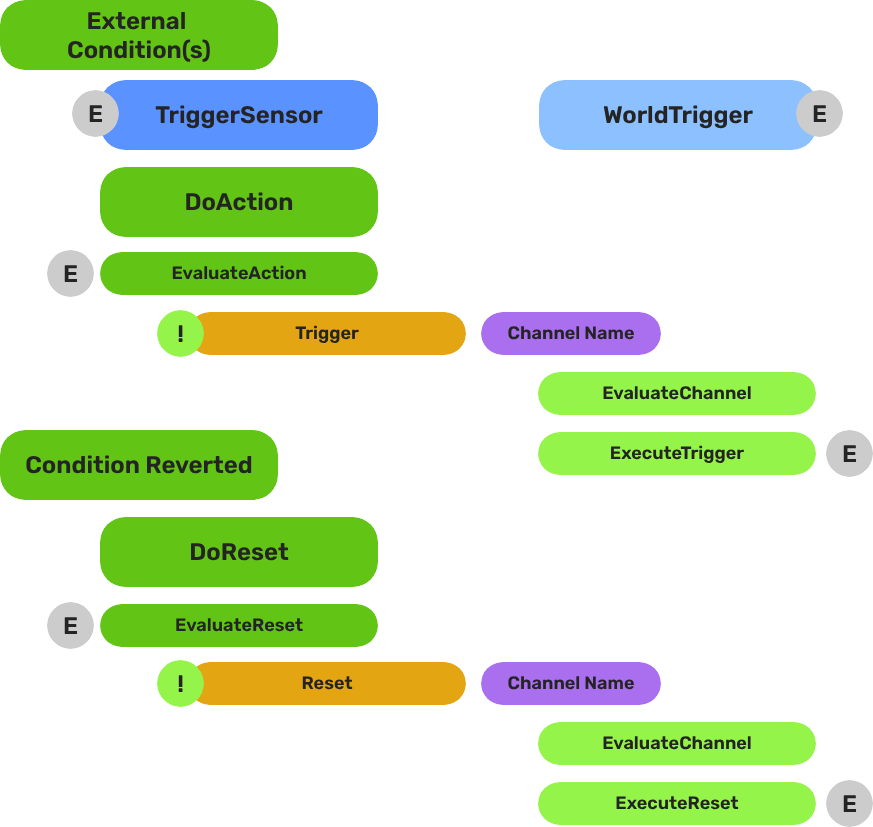

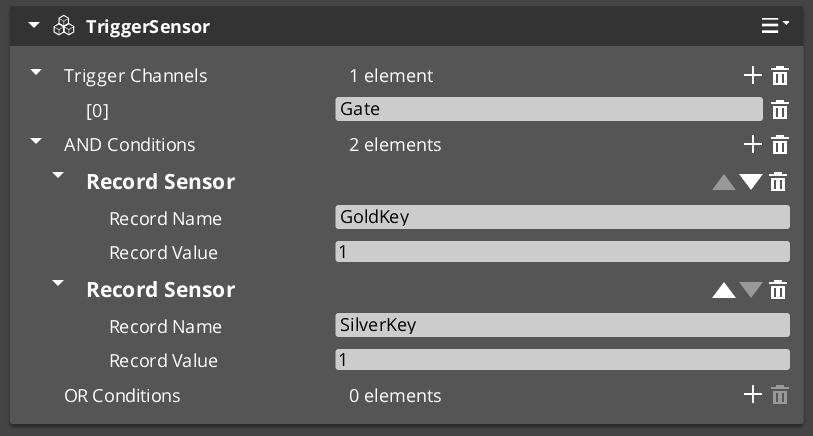

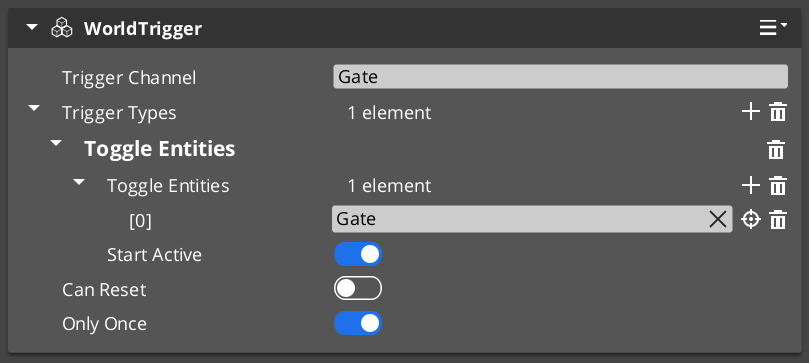

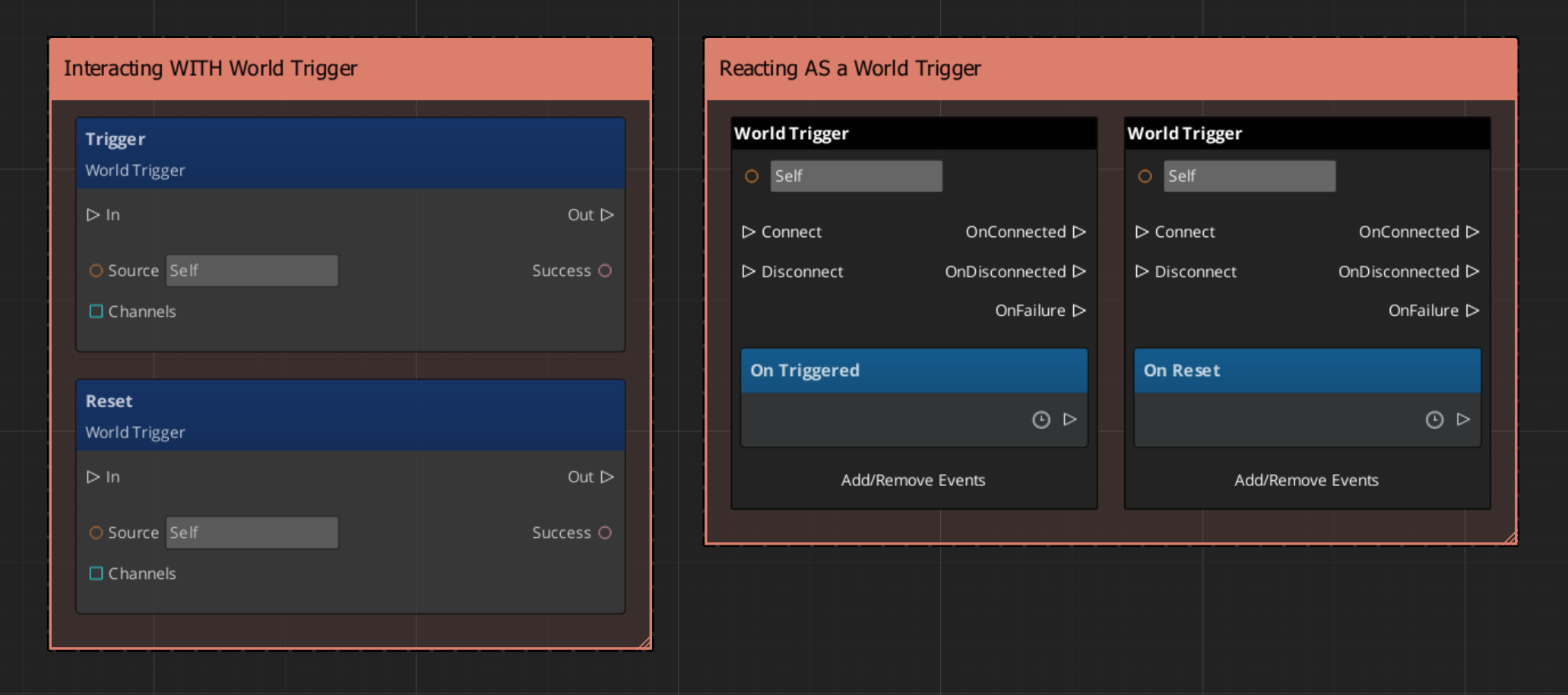

The Actions system provides a universal pattern for attaching discrete, reusable behaviors to entities and triggering them from any source — ScriptCanvas, World Triggers, UI buttons, or C++ code. Actions are data-driven components that fire on named channels, enabling composition without custom scripting.

For architecture details, component properties, and creating custom actions in C++, see the Framework API reference.


How Actions Work

An Action is a component you attach to an entity. Each Action has a channel name. When something calls DoAction(channelName) on that entity’s bus, every Action component whose channel matches the name will execute.

This decoupling is the core value — the system that fires DoAction does not need to know what kind of action is attached. You can change, add, or remove action components on an entity without modifying any calling code.

| Concept | What It Means |
|---|---|
| Channel | A named string. Actions on the same channel fire together. |
| Composition | Multiple actions on the same channel execute in parallel; stack components to compose behaviors. |
| Chaining | An action can fire a different channel on completion, enabling lightweight sequences. |
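Channel matching and composition can be shown with a short Python model. `ActionHostModel` is a hypothetical stand-in for an entity carrying Action components. Note that this sketch runs matching actions one after another within a single call, whereas the text describes them as executing in parallel.

```python
from typing import Callable, List, Tuple


class ActionHostModel:
    """Toy entity holding (channel, behavior) pairs."""

    def __init__(self) -> None:
        self._actions: List[Tuple[str, Callable[[], None]]] = []

    def add_action(self, channel: str, behavior: Callable[[], None]) -> None:
        self._actions.append((channel, behavior))

    def do_action(self, channel: str) -> int:
        """Run every action on the channel and return how many ran."""
        ran = 0
        for action_channel, behavior in self._actions:
            if action_channel == channel:
                behavior()
                ran += 1
        return ran


log: List[str] = []
chest = ActionHostModel()
chest.add_action("Open", lambda: log.append("play sound"))
chest.add_action("Open", lambda: log.append("set record"))
chest.add_action("Lock", lambda: log.append("disable lid"))

opened = chest.do_action("Open")      # the caller only knows the channel name
unknown = chest.do_action("Explode")  # no matching actions, nothing happens
```

The caller passes only a string, so actions can be added to or removed from the entity without touching the code that fires the channel.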

Triggering Actions

ScriptCanvas

[ActionRequestBus → DoAction(channelName)]

└─► All Action components on this entity with matching channel execute

To know when an action completes:

[ActionNotificationBus → OnActionComplete]

└─► Action has finished executing

Built-In Actions

GS_Core ships with these ready-to-use actions:

| Action | What It Does |
|---|---|
| PrintLog | Logs a configurable message to the console. Useful for debugging trigger chains. |
| ToggleMouseCursor | Shows or hides the system mouse cursor. |

Additional actions are available in other gems (e.g., World Trigger actions in GS_Interaction, dialogue effects in GS_Cinematics).

Common Patterns

World Trigger → Action

A World Trigger detects a collision or interaction event and fires DoAction on its entity. Action components on the same entity respond — one might play a sound, another might set a record, another might toggle an entity.

UI Button → Action

A UI button press fires DoAction with a channel name. Actions handle the response: navigate to a different UI page, start a new game, or toggle the pause menu.

Chaining Actions

Set an action’s “Chain Channel” property to fire a different channel when it completes:

Channel "OpenDoor" → [ToggleEntity action] → chains to "PlayDoorSound" → [AudioEvent action]
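The chain in this example can be modeled in Python as follows. `ActionModel`, `ActionHostModel`, and the `chain_channel` field are hypothetical names for illustration. Each behavior here completes immediately, so the chained channel fires right away, and this sketch has no protection against channels that chain back to themselves.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class ActionModel:
    channel: str
    behavior: Callable[[], None]
    chain_channel: Optional[str] = None  # channel fired once this action finishes


class ActionHostModel:
    """Toy entity whose actions can chain into other channels."""

    def __init__(self, actions: List[ActionModel]) -> None:
        self.actions = actions

    def do_action(self, channel: str) -> None:
        for action in self.actions:
            if action.channel == channel:
                action.behavior()
                if action.chain_channel is not None:
                    self.do_action(action.chain_channel)


log: List[str] = []
door = ActionHostModel([
    ActionModel("OpenDoor", lambda: log.append("toggle door entity"),
                chain_channel="PlayDoorSound"),
    ActionModel("PlayDoorSound", lambda: log.append("play door sound")),
])
door.do_action("OpenDoor")
```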

Quick Reference

| Need | Bus | Method / Event |
|---|---|---|
| Fire an action | ActionRequestBus | DoAction(channelName) |
| Know when an action completes | ActionNotificationBus | OnActionComplete |

Glossary

| Term | Meaning |
|---|---|
| Action | A component that executes a discrete behavior when triggered on a named channel |
| Channel | A named string identifier that groups actions; all actions on the same channel fire together |
| Chaining | Configuring an action to fire a different channel on completion, creating lightweight sequences |

For full definitions, see the Glossary.

See Also

For the full API, component properties, and C++ extension guide:

For related systems:


1.5.2 - Motion System

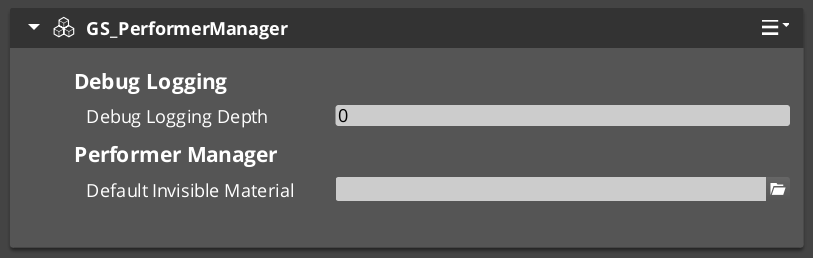

How to work with GS_Motion — the track-based animation and tween system that powers UI transitions, feedback effects, and custom animations.

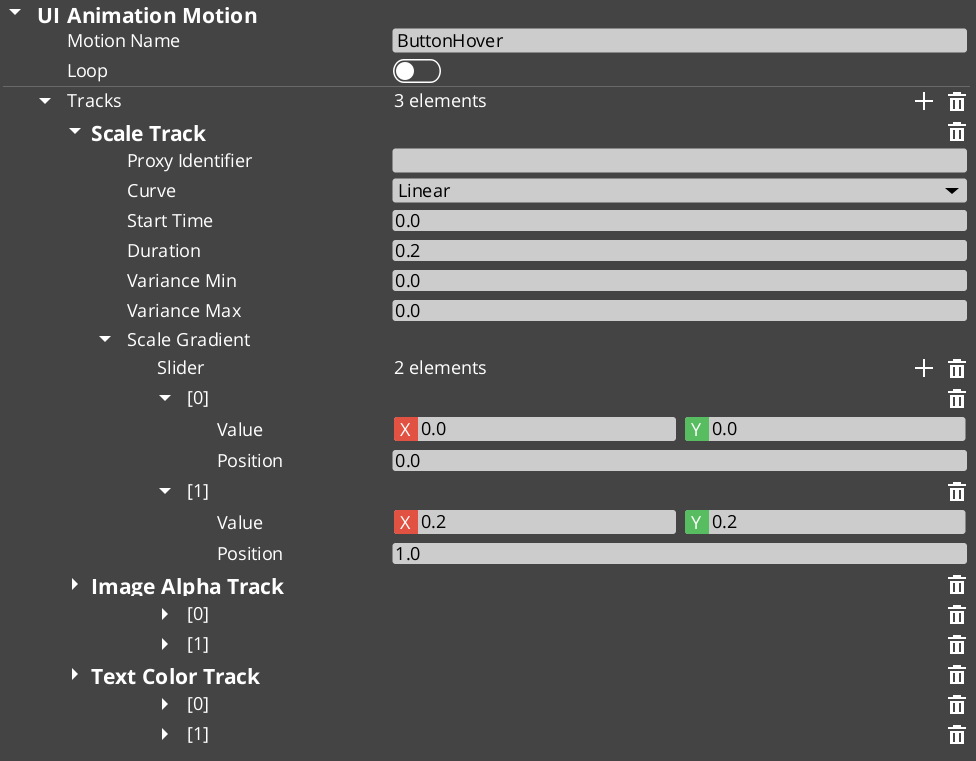

GS_Motion is a track-based animation engine built into GS_Core. It drives timed property changes — position, rotation, scale, color, opacity — through authored data assets rather than hand-coded interpolation scripts. Domain gems extend GS_Motion with their own track types: GS_UI adds 8 LyShine-specific tracks for UI animation, and GS_Juice adds transform and material tracks for game feel feedback.

For architecture details, the domain extension pattern, and all track types, see the Framework API reference.


Key Concepts

| Concept | What It Is |

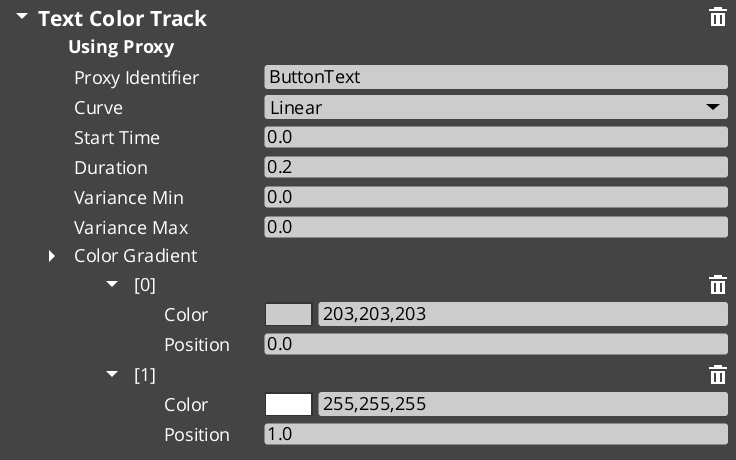

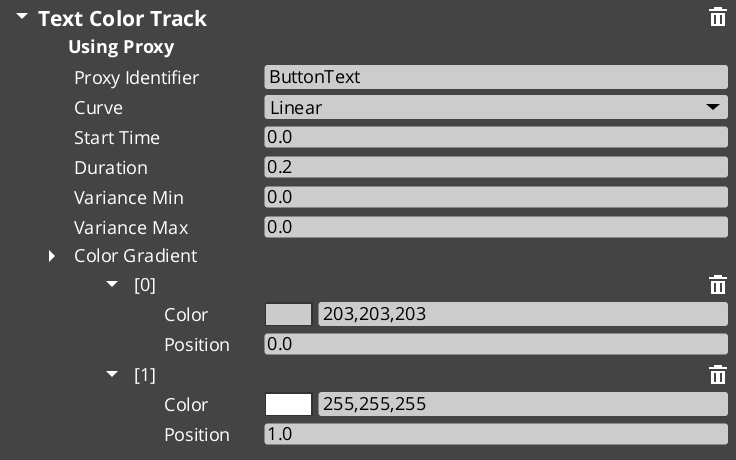

|---|

| Track | A single animated property — what changes, how long, and which easing curve. |

| Motion | A collection of tracks that play together. Tracks can start at different times within the motion. |

| Motion Asset | A data asset authored in the O3DE Asset Editor containing the tracks and their configuration. |

| Proxy | An optional entity redirect — lets a track target a child entity instead of the motion’s owner. |

| Composite | The runtime instance created from an asset. Each entity gets its own deep copy. |

How It Works

- Author a motion asset in the Asset Editor. Each domain has its own asset type (.uiam for UI, .feedbackmotion for Juice).

- Assign the asset to a component or embed it in a serialized field (e.g., a page’s show/hide transitions).

- Play the motion from ScriptCanvas or C++. The system initializes a runtime composite, resolves proxies, and ticks all tracks.

- Each track receives an eased progress value (0 → 1) every frame and applies its property change to the target entity.

- When all tracks complete, the motion fires its OnComplete callback.
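The per-frame track evaluation described in these steps can be sketched in Python. This is a conceptual model only: `TrackModel`, its fields, and `motion_complete` are hypothetical, and the real tracks apply their values to entity properties rather than returning numbers.

```python
from typing import Callable, List


def linear(t: float) -> float:
    return t


def ease_out_quad(t: float) -> float:
    return 1.0 - (1.0 - t) * (1.0 - t)


class TrackModel:
    """Toy track: animates one value over a time window within the motion."""

    def __init__(self, start_time: float, duration: float,
                 start_value: float, end_value: float,
                 easing: Callable[[float], float]) -> None:
        self.start_time = start_time
        self.duration = duration
        self.start_value = start_value
        self.end_value = end_value
        self.easing = easing

    def sample(self, motion_time: float) -> float:
        # Normalize time into 0..1 progress, clamp it, then apply the easing curve.
        progress = (motion_time - self.start_time) / self.duration
        progress = min(max(progress, 0.0), 1.0)
        eased = self.easing(progress)
        return self.start_value + (self.end_value - self.start_value) * eased

    def finished(self, motion_time: float) -> bool:
        return motion_time >= self.start_time + self.duration


def motion_complete(tracks: List[TrackModel], motion_time: float) -> bool:
    # The motion is done only when every track has finished.
    return all(track.finished(motion_time) for track in tracks)


fade = TrackModel(0.0, 1.0, 0.0, 1.0, ease_out_quad)  # opacity 0 to 1
slide = TrackModel(0.5, 1.0, 0.0, 100.0, linear)      # starts half a second later
motion = [fade, slide]

fade_mid = fade.sample(0.5)
slide_before_start = slide.sample(0.25)
slide_mid = slide.sample(1.0)
done_at_1 = motion_complete(motion, 1.0)
done_at_1_5 = motion_complete(motion, 1.5)
```

The staggered `start_time` values show how tracks can begin at different moments while still belonging to one motion with a single completion point.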

Easing Curves

Every track can use any of the 40+ easing curves from the GS_Core curves library. Curves are configured per-track in the asset editor.

Available families: Linear, plus Quad, Cubic, Sine, Expo, Circ, Back, Elastic, and Bounce, each with In, Out, and InOut variants.

Proxy Targeting

When a motion asset has tracks with identifiers (named labels), those tracks appear in the proxy list on the component. Proxies let you redirect a track to a different entity in the hierarchy — for example, a page show animation might animate the background separately from the content panel.

Each proxy entry maps a track label to a target entity. If no proxy is set, the track targets the motion’s owner entity.
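The fallback rule can be expressed as a one-line lookup. In this Python sketch, `resolve_track_targets` and the entity names are hypothetical, and entities are represented by plain strings.

```python
from typing import Dict, List


def resolve_track_targets(
    track_labels: List[str], proxies: Dict[str, str], owner: str
) -> Dict[str, str]:
    # A labeled track uses its proxy entry if one is set, otherwise the owner.
    return {label: proxies.get(label, owner) for label in track_labels}


targets = resolve_track_targets(
    track_labels=["Background", "Content"],
    proxies={"Background": "BackgroundPanel"},
    owner="PageRoot",
)
```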

Domain Extensions

GS_Motion is not used directly — it provides the base system that domain gems extend with concrete track types.

UI Animation (GS_UI)

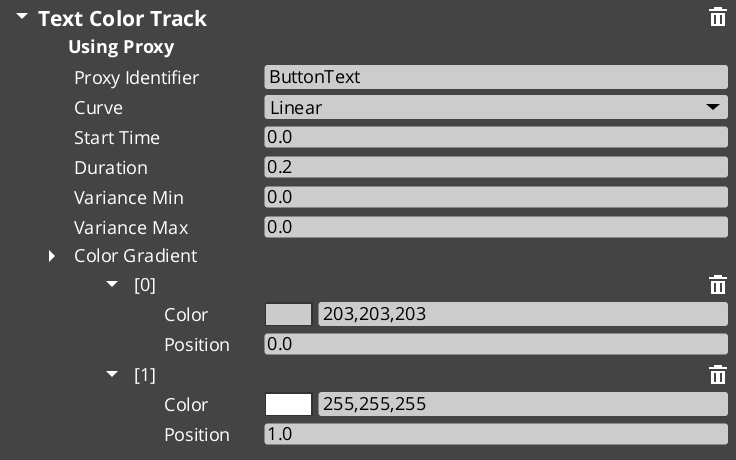

Eight tracks for LyShine UI elements (position, scale, rotation, alpha, color, text). Asset extension: .uiam. Used for page transitions, button hover/select effects, and standalone UI animation.

UI Animation API

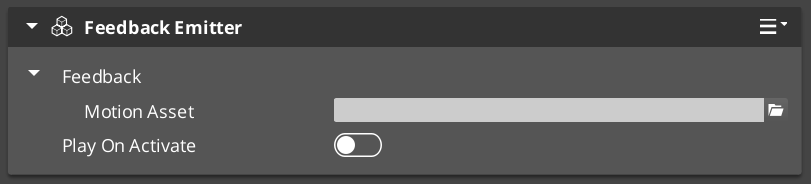

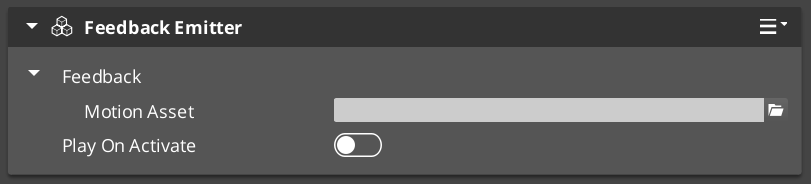

Feedback Motions (GS_Juice)

Two tracks for game feel effects — transform (position, scale, rotation) and material (opacity, emissive, color tint). Asset extension: .feedbackmotion. Used for screen shake, hit flash, and visual feedback.

Feedback Motions API

Quick Reference

| Need | Where |
|---|---|
| Animate UI elements | Use .uiam assets with UiAnimationMotionComponent or page transitions |
| Create feedback effects | Use .feedbackmotion assets with FeedbackEmitter component |
| Change easing curve | Edit the curve type on individual tracks in the asset editor |
| Redirect a track to another entity | Configure proxy entries on the component |
| Loop an animation | Enable loop on the motion asset |

Glossary

| Term | Meaning |
|---|---|
| Track | A single animated property within a motion; defines what changes, duration, and easing |
| Motion | A collection of tracks that play together as a single animation |
| Motion Asset | A data asset authored in the Asset Editor containing track configurations |
| Proxy | An entity redirect that lets a track target a child entity instead of the motion’s owner |
| Composite | The runtime instance created from a motion asset; each entity gets its own deep copy |
| Domain Extension | A gem-specific set of track types that extends GS_Motion for a particular use case |

For full definitions, see the Glossary.

See Also

For the full API, component properties, and C++ extension guide:

For related systems:

Get GS_Core

GS_Core — Explore this gem on the product page and add it to your project.

1.6 - Utilities

General-purpose components and math helpers — physics triggers, easing curves, spring dampers, gradients, splines, and more.

GS_Core includes a library of general-purpose components and math helpers available to every system in the framework. These utilities handle common game development tasks — physics overlap detection, value interpolation, animation curves, gradient sampling, and spatial math — so you can focus on gameplay logic rather than reimplementing fundamental patterns.

For full API details and code examples, see the Framework API reference.

Contents

Physics Trigger Volume

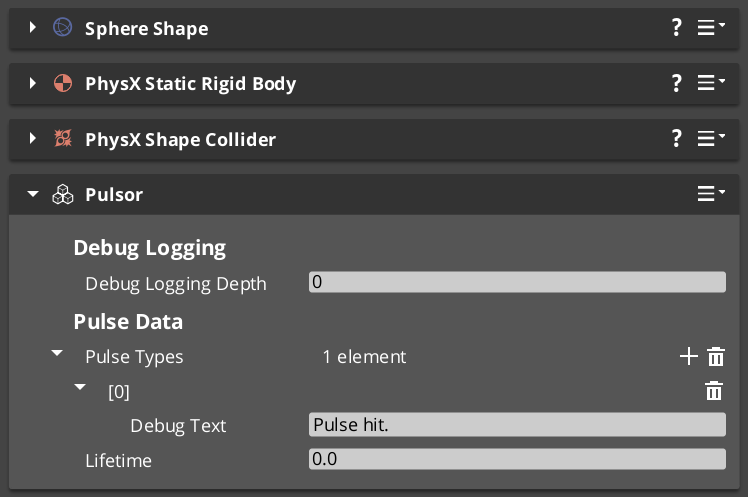

A reusable physics overlap detector. Handles trigger enter, stay, and exit events with filtering and callback support. Used internally by Pulsors, Targeting Fields, and World Triggers — and available for your own components.

Physics Trigger Volume API

Easing Curves

40+ easing functions organized into families. Every GS_Motion track, spring, and interpolation system in the framework can reference these curves by enum.

| Family | Variants |
|---|---|
| Linear | Linear |
| Quad | In, Out, InOut |
| Cubic | In, Out, InOut |
| Sine | In, Out, InOut |
| Expo | In, Out, InOut |
| Circ | In, Out, InOut |
| Back | In, Out, InOut |
| Elastic | In, Out, InOut |
| Bounce | In, Out, InOut |

Select a curve type via the CurveType enum in component properties, asset fields, or C++ code.
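
As a rough illustration of what selecting a curve by enum means, the sketch below maps linear progress to eased progress for a few well-known curves. It is written in Python for brevity and is not the GS_Core implementation: the member names, `evaluate`, and `ease` are assumptions, and only the standard textbook formulas for Linear, Quad, and Sine are shown.

```python
import math
from enum import Enum


class CurveType(Enum):
    # Illustrative subset with assumed member names.
    LINEAR = "linear"
    QUAD_IN = "quad_in"
    QUAD_OUT = "quad_out"
    SINE_IN_OUT = "sine_in_out"


def evaluate(curve: CurveType, t: float) -> float:
    """Map linear progress t in [0, 1] to eased progress in [0, 1]."""
    t = min(max(t, 0.0), 1.0)  # clamp out-of-range progress
    if curve is CurveType.LINEAR:
        return t
    if curve is CurveType.QUAD_IN:
        return t * t
    if curve is CurveType.QUAD_OUT:
        return 1.0 - (1.0 - t) * (1.0 - t)
    if curve is CurveType.SINE_IN_OUT:
        return 0.5 * (1.0 - math.cos(math.pi * t))
    raise ValueError(f"unsupported curve: {curve}")


def ease(start: float, end: float, t: float, curve: CurveType) -> float:
    """Interpolate between start and end using the eased progress."""
    return start + (end - start) * evaluate(curve, t)
```

An "In" curve starts slowly and accelerates, an "Out" curve decelerates into the target, and "InOut" does both.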

Curves API

Spring Dampers

15+ spring functions for physically-grounded value interpolation. Springs produce natural-feeling motion that reacts to velocity and acceleration, making them ideal for camera smoothing, UI follow, and any value that should “settle” rather than snap.

| Function | Use Case |
|---|---|
| Simple Spring | Basic spring with damping |
| Acceleration Spring | Spring with acceleration bias |
| Double Spring | Two-stage spring for overshoot effects |
| Timed Spring | Spring that reaches target in a fixed time |
| Velocity Spring | Spring driven by velocity |
| Quaternion Spring | Spring for rotation values |
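
To make "settle rather than snap" concrete, here is a minimal damped-spring step in Python. It is a generic textbook spring integrated with semi-implicit Euler, not the GS_Core Simple Spring: the function names, parameters, and default constants are assumptions chosen for illustration.

```python
def simple_spring(value, velocity, target, stiffness, damping, dt):
    """Advance a damped spring by one time step (semi-implicit Euler)."""
    # Pull toward the target, resisted by damping proportional to velocity.
    acceleration = stiffness * (target - value) - damping * velocity
    velocity += acceleration * dt
    value += velocity * dt
    return value, velocity


def settle(start, target, seconds, stiffness=100.0, damping=20.0, dt=1.0 / 60.0):
    """Step the spring at a fixed rate and return the value after `seconds`."""
    value, velocity = start, 0.0
    for _ in range(round(seconds / dt)):
        value, velocity = simple_spring(value, velocity, target, stiffness, damping, dt)
    return value
```

With these defaults the damping equals `2 * sqrt(stiffness)`, which is the critically damped case: the value approaches the target as fast as possible without oscillating. Lower damping would produce overshoot.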

Springs API

Gradients

Multi-stop gradient types for sampling values over a range. Used extensively by GS_Motion tracks and GS_Juice feedback tracks to define animation curves.

| Type | What It Samples |
|---|---|
| ColorGradient | RGBA color with positioned markers |
| FloatGradient | Single float value |
| Vector2Gradient | 2D vector |

Gradients are editable in the Inspector with visual marker placement.
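
The idea of sampling a multi-stop gradient can be sketched as follows. This Python example assumes linear interpolation between neighboring markers and clamping outside the marker range; the actual FloatGradient may interpolate differently, and `sample_float_gradient` is a hypothetical name.

```python
from bisect import bisect_right


def sample_float_gradient(markers, t):
    """Sample a float gradient at position t.

    markers: list of (position, value) pairs sorted by position.
    """
    positions = [position for position, _ in markers]
    # Clamp to the first or last marker outside the covered range.
    if t <= positions[0]:
        return markers[0][1]
    if t >= positions[-1]:
        return markers[-1][1]
    # Find the two markers surrounding t and blend between them.
    upper = bisect_right(positions, t)
    (p0, v0), (p1, v1) = markers[upper - 1], markers[upper]
    return v0 + (v1 - v0) * (t - p0) / (p1 - p0)


fade_in_out = [(0.0, 0.0), (0.5, 1.0), (1.0, 0.0)]
```

A color or vector gradient would follow the same pattern, blending each channel independently.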

Gradients API

Entity Helpers

Utility functions for finding entities in the scene by name.

| Function | What It Does |
|---|---|
| GetEntityByName(name) | Returns the entity with the given name. |
| GetEntityIdByName(name) | Returns the EntityId of the named entity. |

Entity Helper API

Weighted Random

Template-based weighted random selection. Given a collection of items with weights, returns a randomly selected item biased by weight. Useful for loot tables, dialogue variation, and procedural placement.
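
A common way to implement weighted selection is a cumulative-weight roll, sketched below in Python. This is a generic technique shown for illustration and may differ from what GS_Random does internally; `weighted_pick` and its parameters are hypothetical.

```python
import random


def weighted_pick(items, weights, rng=random):
    """Return one item, chosen with probability proportional to its weight."""
    total = sum(weights)
    if total <= 0:
        raise ValueError("at least one weight must be positive")
    # Roll a point in [0, total) and walk the cumulative weights until it is covered.
    roll = rng.random() * total
    cumulative = 0.0
    for item, weight in zip(items, weights):
        cumulative += weight
        if roll < cumulative:
            return item
    return items[-1]  # guard against floating-point rounding at the upper edge
```

For a loot table, `weighted_pick(["common", "rare"], [9, 1])` would return "common" roughly nine times out of ten. Passing a seeded `random.Random` instance as `rng` makes the sequence reproducible.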

GS_Random API

Angle Helpers

Functions for angle and orientation math, plus 22 preset section configurations for direction classification.

| Function | What It Does |
|---|---|
| YawFromDir(direction) | Extracts yaw angle from a direction vector. |
| QuatFromYaw(yaw) | Creates a quaternion from a yaw angle. |
| PickByAngle(angle, sections) | Maps an angle to a section index using a preset configuration. |

Section presets range from 2-section (left/right) to 16-section (compass-style), plus diagonal and cardinal configurations. Useful for animation direction selection and 2D-style facing.
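
The direction-classification idea can be sketched as below. This Python example makes several assumptions that the source does not confirm: yaw is measured from the +Y axis, sections are evenly sized, and section 0 is centered on yaw 0. The real presets may use different conventions, and the function names are Python stand-ins rather than the GS_Core signatures.

```python
import math


def yaw_from_dir(x, y):
    """Yaw in radians of a 2D direction, measured from +Y toward +X (assumed convention)."""
    return math.atan2(x, y)


def pick_by_angle(angle_rad, section_count):
    """Map an angle to one of `section_count` equal sections centered on multiples of the step."""
    step = 2.0 * math.pi / section_count
    # Shift by half a step so section 0 spans [-step/2, +step/2).
    shifted = (angle_rad + step / 2.0) % (2.0 * math.pi)
    # The final modulo guards against float rounding at the wrap-around point.
    return int(shifted // step) % section_count
```

With four sections this classifies a facing as forward, right, back, or left, which is the kind of index a directional animation set could be keyed on.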

Angle Helper API

Spline Helpers

Utility functions for working with O3DE spline components.

| Function | What It Does |
|---|---|
| FindClosestWorldPoint(spline, point) | Returns the closest point on the spline in world space. |
| FindClosestLocalPoint(spline, point) | Returns the closest point in local space. |
| FindClosestFraction(spline, point) | Returns the 0–1 fraction along the spline. |

Spline Helper API

Serialization Helpers

Utility functions for common O3DE serialization patterns. Simplifies working with SerializeContext and EditContext in component reflection.

Serialization Helper API

Common Enums

Shared enumeration types used across the framework.

Common Enums API

Glossary

| Term | Meaning |
|---|---|
| Easing Curve | A function that maps linear progress (0→1) to a shaped output for smooth animation |
| Spring Damper | A physically-modeled interpolation function that settles naturally toward a target |
| Gradient | A multi-stop sampler that returns interpolated values (color, float, vector) over a 0→1 range |
| Physics Trigger Volume | A reusable overlap detector that fires enter, stay, and exit callbacks |

For full definitions, see the Glossary.

See Also

For the full API, component properties, and code examples:

For related systems:


2 - GS_AI

Foundation scaffold for AI-driven entity behavior in the GS_Play framework.

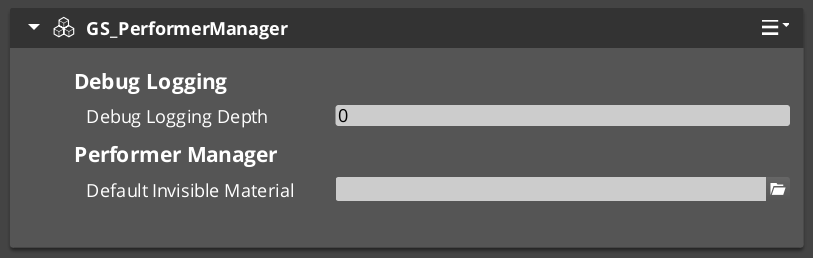

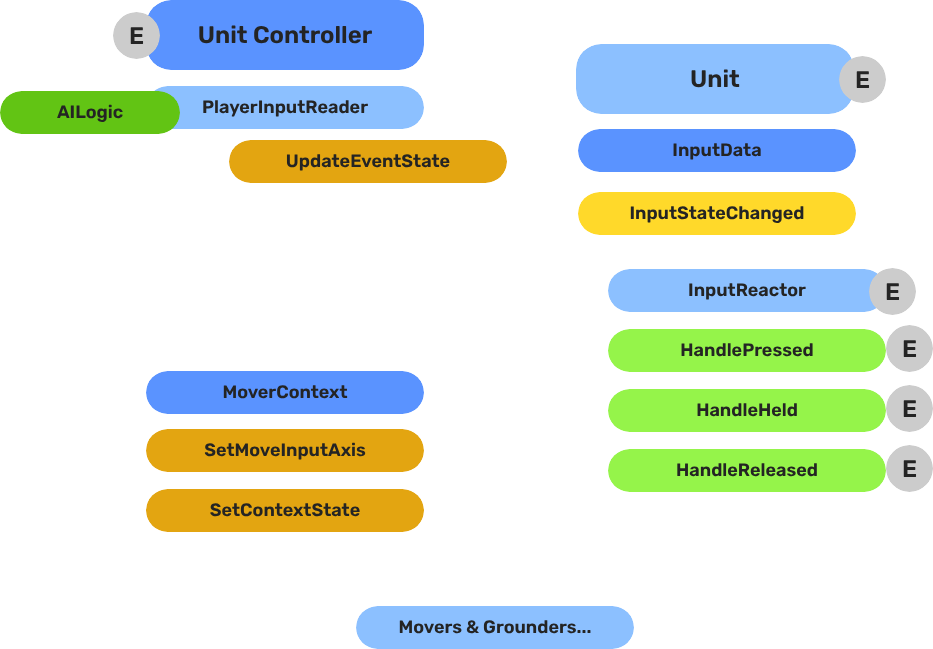

GS_AI provides the structural foundation for AI-driven entity behavior. It defines the base architecture that AI controller implementations build upon, integrating with the Unit system’s controller pattern to drive NPC entities through behavior logic rather than player input.

For architecture details and extension patterns, see the GS_AI API.

GS_AI is a foundation scaffold. It provides the base infrastructure and extension points that your project’s AI implementation builds on top of. Concrete AI behavior (behavior trees, utility AI, state machines) is implemented in your project or via third-party integration.

How It Works

GS_AI establishes the conventions and base classes for AI in the GS_Play framework. AI controllers extend the Unit Controller pattern — the same mechanism that player controllers use to drive units. Switching an entity between player control and AI control is a standard possession swap with no special handling required.

AI implementations listen to the same game lifecycle events as every other GS_Play system. AI controllers respond to standby mode, stage transitions, and manager lifecycle broadcasts automatically through the manager pattern.

Integration Points

| System | How AI Connects |
|---|---|
| Unit Controllers | AI controllers extend the unit controller pattern to drive NPC movement and actions. |
| GS_Managers | AI systems respond to startup, standby, and shutdown lifecycle events. |
| Interaction | AI entities can serve as targeting targets, trigger world triggers, and emit or receive pulsors. |
| Cinematics | The Cinematic Controller (in GS_Complete) demonstrates AI-to-cinematic handoff. |

See Also

For the full API and extension patterns:

For related systems:

Get GS_AI

GS_AI — Explore this gem on the product page and add it to your project.

3 - GS_Audio

Audio management, event-based sound, music scoring, mixing, and Klatt voice synthesis for the GS_Play framework.

GS_Audio is the audio management gem for GS_Play. It provides event-based sound playback, a multi-layer music scoring system, mixing buses with effects chains, and a built-in Klatt formant voice synthesizer with 3D spatial audio. All audio features integrate with the GS_Play manager lifecycle and respond to standby mode automatically.

For architecture details, component properties, and extension patterns, see the GS_Audio API.

Quick Navigation

| I want to… | Feature | API |
|---|---|---|
| Manage the audio engine, load event libraries, or control master volume | Audio Manager | API |
| Play sounds with pooling, 3D spatialization, and concurrency control | Audio Events | API |
| Configure mixing buses with filters, EQ, and environmental influence effects | Mixing & Effects | API |
| Layer music tracks dynamically based on gameplay state | Score Arrangement | API |
| Generate text-to-speech with configurable voice parameters and 3D spatial audio | Klatt Voice | API |

Installation

GS_Audio requires GS_Core and the MiniAudio gem. Add both to your project’s gem dependencies.

For a complete guided setup, follow the Simple Project Setup guide.

Quick Installation Summary

- Enable the GS_Audio gem in your project configuration.

- Create an Audio Manager prefab and add it to the Game Manager’s Managers list.

- Create Audio Event Library assets for your sound effects.

- Place GS_AudioEventComponent on entities that need to play sounds.

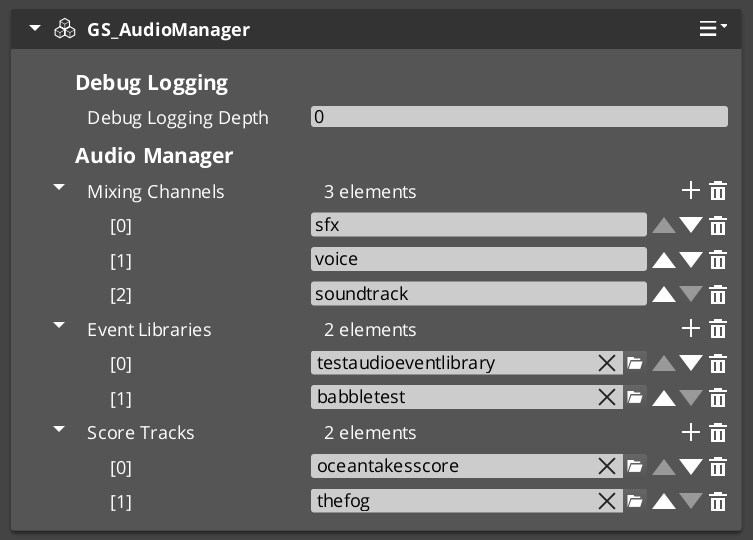

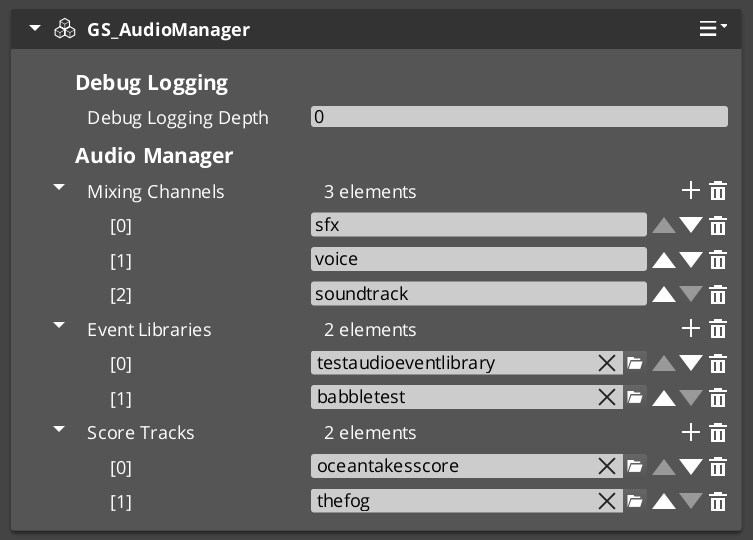

Audio Manager

The Audio Manager is the singleton controller for the entire audio system. It initializes the audio engine, manages mixing buses, loads audio event libraries, and coordinates score playback. Like all GS_Play managers, it extends the Manager base class and plugs into the Game Manager’s startup sequence automatically.

Audio Manager

API

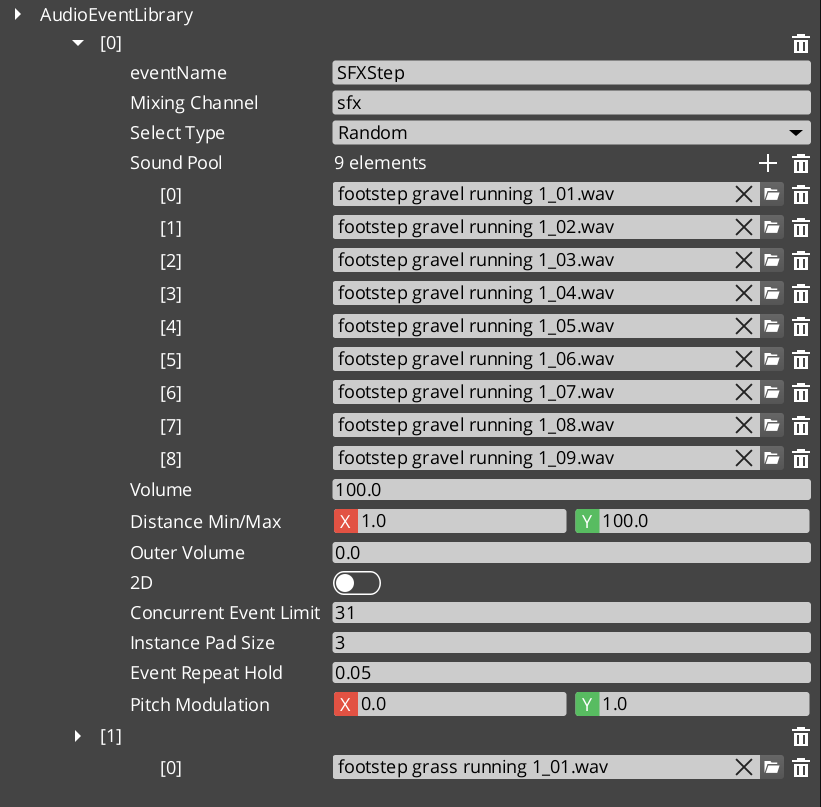

Audio Events

Audio Events are the primary way to play sounds. Each event defines a pool of audio clips with selection rules (random or sequential), 2D or 3D playback mode, concurrent instance limiting, and repeat-hold behavior. Events are grouped into Audio Event Library assets that the Audio Manager loads at startup.

Audio Events

API

Mixing & Effects

The mixing system provides named audio buses with configurable effects chains. Each bus can have filters applied — low pass, high pass, band pass, notch, peaking EQ, shelving, delay, and reverb. Audio Bus Influence Effects allow environmental zones to dynamically modify bus effects based on the listener’s position.

Mixing & Effects

API

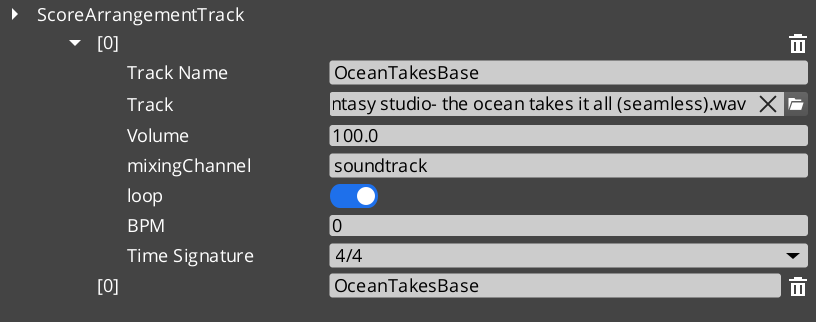

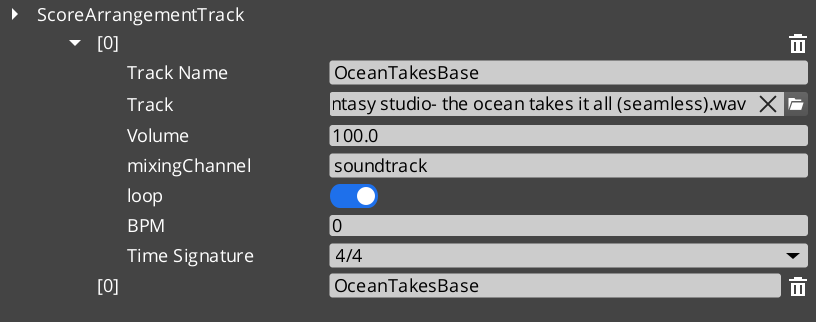

Score Arrangement

Score Arrangement Tracks are multi-layer music assets. Each arrangement defines a time signature, BPM, fade behavior, and a set of Score Layers — individual audio tracks that can be enabled or disabled independently. This allows dynamic music that adds or removes instrument layers based on gameplay state.

Score Arrangement

API

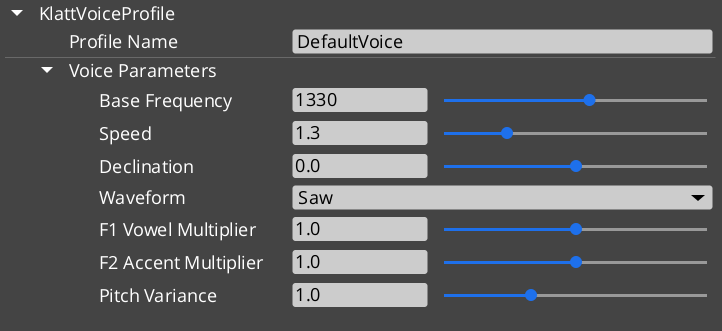

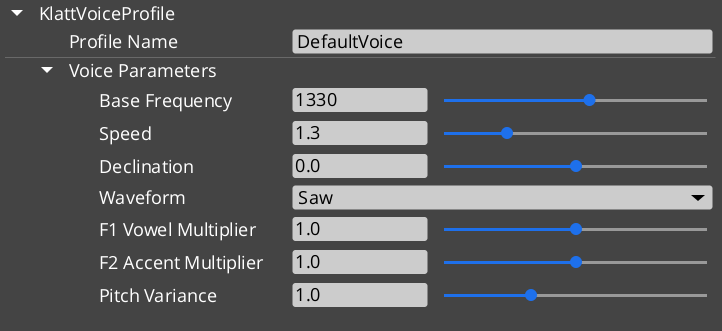

Klatt Voice Synthesis

GS_Play includes a built-in text-to-speech system based on Klatt formant synthesis. The Klatt Voice component converts text to speech in real time with configurable voice parameters — frequency, speed, waveform, formants, and pitch variance. The system supports 3D spatial audio and inline KTT tags for expressive delivery.

Klatt Voice

API

See Also

For the full API, component properties, and C++ extension guide:

For step-by-step project setup:

Get GS_Audio

GS_Audio — Explore this gem on the product page and add it to your project.

3.1 - Audio Manager

How to work with the GS_Play Audio Manager — engine initialization, bus routing, and master control.

The Audio Manager is the singleton controller for the entire audio system. It initializes the MiniAudio engine, manages mixing buses, loads audio event libraries, and coordinates score track playback. Like all GS_Play managers, it extends the Manager base class and responds to the Game Manager lifecycle automatically.

For component properties and API details, see the Framework API reference.

Contents

What It Manages

| Responsibility | What It Does |
|---|---|
| Engine lifecycle | Initializes and shuts down the MiniAudio audio engine. |
| Mixing buses | Creates and routes named audio buses with effects chains. |
| Event libraries | Loads Audio Event Library assets at startup. |
| Score playback | Manages Score Arrangement Track assets for dynamic music. |
| Master volume | Controls global volume levels per bus. |

Quick Reference

| Need | Bus | Method |
|---|---|---|
| Audio management requests | AudioManagerRequestBus | PlayAudioEvent, StopAudioEvent, SetMixerVolume |

Glossary

| Term | Meaning |
|---|---|
| Audio Event Library | A data asset containing named audio event definitions loaded at startup |
| Mixing Bus | A named audio channel with an effects chain for routing and processing sounds |
| Score Arrangement | A multi-layer music asset managed by the Audio Manager for dynamic playback |

For full definitions, see the Glossary.

See Also

For the full API, component properties, and C++ extension guide:

For related systems:


3.2 - Audio Events

How to work with GS_Play audio events — data-driven sound playback with pooling, spatialization, and concurrency control.

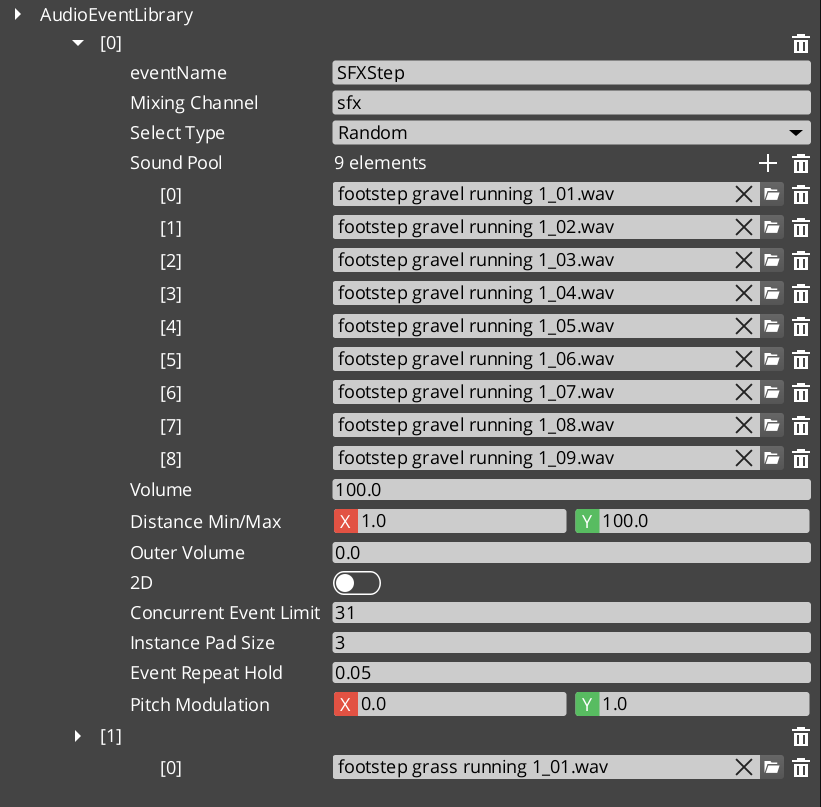

Audio Events are the primary way to play sounds in GS_Play. Each event defines a pool of audio clips with selection rules, playback mode (2D or 3D), concurrent instance limiting, and repeat-hold behavior. Events are grouped into Audio Event Library assets that the Audio Manager loads at startup.

For asset structure and component properties, see the Framework API reference.

Contents

How It Works

- Create an Audio Event Library asset in the O3DE Asset Editor.

- Define audio events — each with a name, clip pool, and playback settings.

- Load the library by assigning it to the Audio Manager.

- Play events by name from ScriptCanvas or C++.

Event Configuration

| Setting | What It Controls |
|---|---|
| Pool Selection | Random picks a random clip from the pool. Increment plays clips sequentially. |
| 2D / 3D | 2D plays without spatialization. 3D positions the sound in the world. |
| Concurrent Limit | Maximum instances of this event that can play simultaneously. |
| Repeat Hold | Minimum time between repeated plays of the same event. |
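
How these settings might interact when a play request arrives can be modeled roughly as below. This Python sketch is an assumption-heavy illustration, not the GS_Audio implementation: `AudioEventSketch`, `try_play`, `on_finished`, and the order in which the checks run are all hypothetical.

```python
import random
from dataclasses import dataclass


@dataclass
class AudioEventSketch:
    """Hypothetical model of one audio event's playback rules."""

    clips: list[str]
    selection: str = "random"      # "random" or "increment"
    concurrent_limit: int = 2      # max simultaneous instances
    repeat_hold: float = 0.1       # min seconds between plays
    _next_index: int = 0
    _last_play: float = float("-inf")
    _active: int = 0

    def try_play(self, now, rng=random):
        """Return the clip to play at time `now`, or None if the request is rejected."""
        if now - self._last_play < self.repeat_hold:
            return None  # still inside the repeat-hold window
        if self._active >= self.concurrent_limit:
            return None  # too many instances already playing
        if self.selection == "increment":
            clip = self.clips[self._next_index % len(self.clips)]
            self._next_index += 1
        else:
            clip = rng.choice(self.clips)
        self._last_play = now
        self._active += 1
        return clip

    def on_finished(self):
        """Call when an instance stops so a new one may start."""
        self._active = max(0, self._active - 1)
```

Repeat Hold protects against machine-gun retriggering of the same sound, while the Concurrent Limit caps how many overlapping instances can pile up.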

GS_AudioEventComponent

Place a GS_AudioEventComponent on any entity that needs to play sounds. The component references events by name from loaded libraries and handles 3D positioning automatically based on the entity’s transform.

Quick Reference

| Need | Bus | Method |
|---|---|---|
| Play a sound event | AudioManagerRequestBus | PlayAudioEvent(eventName) |
| Stop a sound event | AudioManagerRequestBus | StopAudioEvent(eventName) |

Glossary

| Term | Meaning |
|---|---|
| Audio Event | A named, data-driven sound definition with clip pool, playback mode, and concurrency settings |
| Audio Event Library | A data asset grouping multiple audio events for batch loading |
| Pool Selection | The strategy for choosing clips: Random or Increment |
| Concurrent Limit | Maximum simultaneous instances of the same event |

For full definitions, see the Glossary.

See Also

For the full API, component properties, and C++ extension guide:

For related systems:


3.3 - Mixing & Effects

How to work with GS_Play audio mixing — named buses, effects chains, and environmental audio influence.

The mixing system provides named audio buses with configurable effects chains. Each bus can have multiple audio filters applied for real-time audio processing. Audio Bus Influence Effects allow environmental zones to dynamically modify bus parameters based on the listener’s position.

For component properties and filter types, see the Framework API reference.

GS_Audio is in early development. Full support is planned for 2026.

Contents

Available Filters

| Filter | What It Does |
|---|---|
| Low Pass | Removes high frequencies. Simulates muffling (underwater, behind walls). |
| High Pass | Removes low frequencies. Simulates thin/tinny audio (radio, phone). |
| Band Pass | Passes a frequency band. Isolates specific ranges. |
| Notch | Removes a narrow frequency band. |
| Peaking EQ | Boosts or cuts a frequency band. |
| Low Shelf | Boosts or cuts frequencies below a threshold. |
| High Shelf | Boosts or cuts frequencies above a threshold. |
| Delay | Adds echo/delay effect. |
| Reverb | Adds room/space reverb. |

Environmental Audio Influence

Audio Bus Influence Effects allow spatial zones to modify bus effects dynamically. When the listener enters an influence zone (like a cave or tunnel), the zone’s effects are applied to the specified bus with priority-based stacking. Multiple overlapping zones resolve by priority.
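
One plausible reading of priority-based resolution is that, for each bus, the highest-priority zone containing the listener wins. The Python sketch below models only that reading. The spherical zone shape, the `InfluenceZone` fields, and `active_zone_per_bus` are assumptions, and the real system may blend or stack effects rather than pick a single winner.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InfluenceZone:
    """Hypothetical spherical zone that targets one mixing bus."""

    name: str
    bus: str
    priority: int
    center: tuple[float, float, float]
    radius: float

    def contains(self, point):
        # Compare squared distances to avoid a square root.
        distance_sq = sum((a - b) ** 2 for a, b in zip(self.center, point))
        return distance_sq <= self.radius ** 2


def active_zone_per_bus(zones, listener_pos):
    """Return {bus: zone} with the highest-priority zone containing the listener."""
    winners = {}
    for zone in zones:
        if not zone.contains(listener_pos):
            continue
        current = winners.get(zone.bus)
        if current is None or zone.priority > current.priority:
            winners[zone.bus] = zone
    return winners


zones = [
    InfluenceZone("Cave", "SFX", priority=1, center=(0.0, 0.0, 0.0), radius=10.0),
    InfluenceZone("Tunnel", "SFX", priority=5, center=(2.0, 0.0, 0.0), radius=3.0),
]
```

A listener standing inside both zones would hear the Tunnel settings on the SFX bus; stepping out of the tunnel but staying in the cave would fall back to the Cave settings.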

Quick Reference

| Need | Bus | Method |
|---|---|---|
| Control mixer settings | GS_MixingRequestBus | Mixer control methods |
| Set master volume | AudioManagerRequestBus | SetMixerVolume |

Glossary

| Term | Meaning |
|---|---|
| Mixing Bus | A named audio channel that routes sound through an effects chain |
| Audio Filter | A real-time audio processing effect applied to a mixing bus |
| Audio Bus Influence Effect | A spatial zone that modifies bus effects based on listener position |

For full definitions, see the Glossary.

See Also

For the full API, component properties, and C++ extension guide:

For related systems:


3.4 - Score Arrangement

How to work with GS_Play score arrangements — multi-layer dynamic music with configurable time signatures and layer control.

Score Arrangement Tracks are multi-layer music assets for dynamic, adaptive game music. Each arrangement defines a time signature, tempo, fade behavior, and a set of independently controllable Score Layers. This enables music that responds to gameplay — adding percussion during combat, muting melody during dialogue, or transitioning between intensity levels.

For asset structure and playback API, see the Framework API reference.

GS_Audio is in early development. Full support is planned for 2026.

Contents

How It Works

A Score Arrangement Track is a data asset containing:

| Field | What It Controls |
|---|---|
| Time Signature | Musical timing (4/4, 3/4, 6/8, etc.). |
| BPM | Tempo in beats per minute. |
| Fade Control | How layers fade in and out. |
| Score Layers | Individual audio tracks that play simultaneously. |

Each Score Layer is an independent audio stream within the arrangement. Layers can be enabled or disabled at runtime, creating dynamic music that evolves based on game state.
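
The relationship between these fields can be sketched with a small Python model. Everything here is illustrative: `ScoreArrangementSketch`, `set_layer`, and `bar_seconds` are hypothetical names, and the timing helper assumes BPM counts quarter notes, which the source does not state.

```python
from dataclasses import dataclass, field


def bar_seconds(bpm, beats_per_bar, beat_unit):
    """Length of one bar in seconds, assuming BPM counts quarter notes."""
    quarter_note = 60.0 / bpm
    return beats_per_bar * (4.0 / beat_unit) * quarter_note


@dataclass
class ScoreArrangementSketch:
    """Hypothetical model of an arrangement with toggleable layers."""

    bpm: float
    time_signature: tuple[int, int]
    # Layer name -> enabled flag.
    layers: dict[str, bool] = field(default_factory=dict)

    def set_layer(self, name, enabled):
        if name not in self.layers:
            raise KeyError(name)
        self.layers[name] = enabled

    def audible_layers(self):
        return [name for name, enabled in self.layers.items() if enabled]


arrangement = ScoreArrangementSketch(
    bpm=120.0,
    time_signature=(4, 4),
    layers={"pads": True, "percussion": False},
)
```

Enabling the percussion layer when combat starts would be a single `set_layer("percussion", True)` call in this model. Knowing the bar length is useful if layer changes should land on a bar boundary rather than mid-phrase.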

Supported Time Signatures

4/4, 4/2, 12/8, 2/2, 2/4, 6/8, 3/4, 3/2, 9/8

Glossary

| Term | Meaning |
|---|---|
| Score Arrangement Track | A multi-layer music data asset with time signature, tempo, and controllable layers |
| Score Layer | An individual audio stream within an arrangement that can be enabled or disabled at runtime |
| BPM | Beats per minute: the tempo of the arrangement |

For full definitions, see the Glossary.

See Also

For the full API, component properties, and C++ extension guide:

For related systems:


3.5 - Klatt Voice Synthesis

How to work with GS_Play Klatt voice synthesis — text-to-speech with 3D spatial audio, voice profiles, and inline parameter control.

GS_Play includes a built-in text-to-speech system based on Klatt formant synthesis. The KlattVoiceComponent converts text to speech in real time with configurable voice parameters. Voices are positioned in 3D space and attenuate with distance, making synthesized speech feel like it comes from the character speaking.

For component properties, voice parameter details, and the phoneme mapping system, see the Framework API reference.

Contents

How It Works

- Configure a voice using a KlattVoiceProfile — set frequency, speed, waveform, formants, and pitch variance.

- Assign a KlattPhonemeMap — maps text characters to ARPABET phonemes for pronunciation.

- Speak text from ScriptCanvas or C++ — the system converts text to phonemes and synthesizes audio in real time.

- Position in 3D — the voice component uses KlattSpatialConfig for 3D audio positioning relative to the entity.

Voice Configuration

| Parameter | What It Controls |
|---|---|
| Frequency | Base voice pitch. |
| Speed | Speech rate. |
| Waveform | Voice quality: Saw, Triangle, Sin, Square, Pulse, Noise, Warble. |
| Formants | Vocal tract resonance characteristics. |
| Pitch Variance | Random pitch variation for natural-sounding speech. |
| Declination | Pitch drop over the course of a sentence. |

KTT (Klatt Text Tags) allow inline parameter changes within speech text for expressive delivery:

"Hello <speed=0.5>world</speed>, how are <pitch=1.2>you</pitch>?"

The KlattCommandParser processes these tags during speech synthesis, enabling mid-sentence changes to speed, pitch, and other voice parameters.
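
To illustrate what processing inline tags involves, the Python sketch below splits tagged text into plain segments, each paired with the parameters active at that point. It is not the KlattCommandParser: the regular expression, `parse_ktt`, and the rule that a closing tag simply removes the override are assumptions based only on the example string above.

```python
import re

# Matches <name=value> opening tags and </name> closing tags.
TAG = re.compile(r"<(/?)(\w+)(?:=([0-9.]+))?>")


def parse_ktt(text):
    """Split tagged text into (segment, active_parameters) pairs."""
    segments = []
    active = {}
    position = 0
    for match in TAG.finditer(text):
        # Emit any plain text that precedes this tag.
        if match.start() > position:
            segments.append((text[position:match.start()], dict(active)))
        closing, name, value = match.groups()
        if closing:
            active.pop(name, None)       # assumed: closing tag clears the override
        elif value is not None:
            active[name] = float(value)  # opening tag sets the override
        position = match.end()
    if position < len(text):
        segments.append((text[position:], dict(active)))
    return segments


example = parse_ktt("Hello <speed=0.5>world</speed>, how are <pitch=1.2>you</pitch>?")
```

Each segment can then be synthesized with its own parameter overrides, which is what allows a single word to be slowed down or raised in pitch mid-sentence.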

For the complete tag reference — all attributes, value ranges, and reset behavior — see the Framework API: KTT Voice Tags.

Phoneme Maps

Two base phoneme maps are available:

| Map | Description |
|---|---|
| SoLoud_Default | Simple default mapping. |
| CMU_Full | Full CMU pronunciation dictionary mapping. |

Custom phoneme overrides allow project-specific word pronunciations (character names, fantasy terms) without modifying the base map.

3D Spatial Audio

The KlattSpatialConfig controls how synthesized speech is positioned in 3D:

- Voices attenuate with distance from the listener.

- The KlattVoiceSystemComponent tracks the listener position and updates all active voices.

- Multiple characters can speak simultaneously with correct spatial positioning.

Quick Reference

| Need | Bus | Method |
|---|---|---|
| Control a voice | KlattVoiceRequestBus | Voice synthesis methods (entity-addressed) |
| System-level voice control | KlattVoiceSystemRequestBus | Listener tracking, engine management |

Glossary

| Term | Meaning |
|---|---|
| Klatt Synthesis | A formant-based speech synthesis method that generates voice from frequency parameters |
| KTT Tags | Inline text tags that modify voice parameters mid-sentence during synthesis |
| Phoneme Map | A mapping from text characters to ARPABET phonemes for pronunciation |
| KlattSpatialConfig | Configuration for 3D audio positioning and distance attenuation of synthesized speech |

For full definitions, see the Glossary.

See Also

For the full API, component properties, and C++ extension guide:

For related systems:


4 - GS_Cinematics

Node-graph dialogue sequences, cinematic stage management, polymorphic performances, and world-space UI with typewriter and audio babble.

GS_Cinematics is the complete dialogue and cinematic control system for GS_Play. It provides a node-graph authoring tool for branching dialogue sequences, a runtime sequencer with conditions and side effects, a UI layer for text display and player choice, and a Cinematics Manager for scene staging. Custom conditions, effects, and performance types are discovered automatically through O3DE serialization, so project-specific dialogue behaviors can be added without modifying the gem.

For architecture details, component properties, and extending the system in C++, see the GS_Cinematics API.

Quick Navigation

| I want to… | Feature | API |
|---|---|---|
| Coordinate cinematic sequences and manage stage markers for actor positioning | Cinematics Manager | API |
| Author branching dialogue with conditions, effects, and performances in a node graph | Dialogue System | API |
| Display dialogue text, player choices, and speech babble on screen or in world space | Dialogue UI | API |
| Move actors to stage markers during dialogue with navigation or teleport | Performances | API |

Installation

GS_Cinematics requires GS_Core, LyShine, and RecastNavigation. The node editor tools additionally require GraphCanvas and GraphModel as editor-only dependencies.

For a full guided walkthrough, follow the Simple Project Setup guide.

Quick Installation Summary

- Enable the GS_Cinematics gem in your project configuration.

- Create Cinematics Manager and Dialogue Manager prefabs, add to the Game Manager’s Managers list.

- Create .dialoguedb assets with the Dialogue Editor.

- Place DialogueSequencer and DialogueUI components in your level.

- Bake NavMesh in levels where PathTo performances will be used.

Cinematics Manager

The Cinematics Manager coordinates the begin and end of cinematic sequences, broadcasting enter/exit events so other systems know when to yield control. It maintains a registry of stage marker entities placed in each level — named anchor points that performers and cameras look up at runtime to determine positioning during a sequence.

Cinematics Manager

API

Dialogue System

The Dialogue System is the authoring and runtime core. Dialogue content is stored in .dialoguedb assets containing actors, portraits, and sequences. Each sequence is a graph of polymorphic nodes — text, selection, random branch, effects, and performances. A visual node editor lets writers author graphs directly in the O3DE Editor. At runtime, the sequencer walks the graph, evaluates conditions, executes effects, and emits events for the UI layer.

Dialogue System

API

Dialogue UI

The Dialogue UI feature set puts dialogue text and player choices on screen. DialogueUI handles screen-space text display with a Typewriter for character-by-character reveal. DialogueUISelection renders player choices as selectable buttons. World-space variants place speech bubbles above actors. Babble plays audio tied to the active speaker. The DialogueUIBridge routes sequencer events to the correct UI implementation and routes player selection back.

Dialogue UI

API

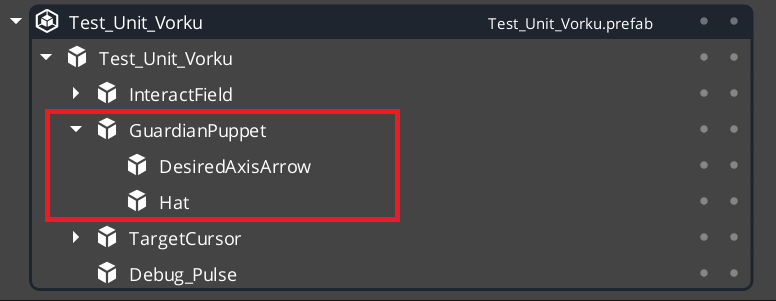

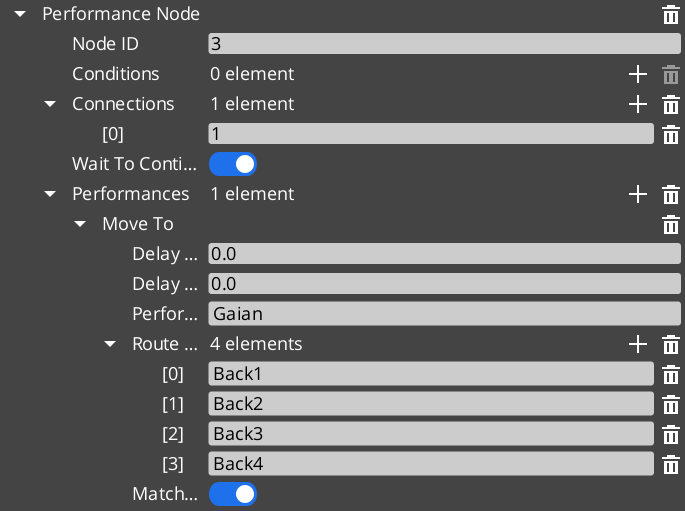

Performances

Performances are polymorphic actions that move or reposition actors during dialogue. MoveTo translates actors to named stage markers, PathTo navigates via NavMesh, and Reposition teleports instantly. All run asynchronously, and the sequencer waits for completion before advancing. Custom performance types can be created by extending the base performance class.

Performances

API

See Also

For the full API, component properties, and C++ extension guide:

For step-by-step project setup:

Get GS_Cinematics

GS_Cinematics — Explore this gem on the product page and add it to your project.

4.1 - Cinematics Manager

How to coordinate cinematic sequences in GS_Play — beginning and ending cutscenes, registering stage markers, and reacting to cinematic events from scripts.

The Cinematics Manager is the GS_Core-integrated manager component for the GS_Cinematics system. It signals when a cinematic sequence begins and ends, broadcasts events so other systems — UI, movement, input — know when to yield control to a cutscene, and maintains a registry of named CinematicStageMarkerComponent entities placed in each level.

For architecture details, component properties, and extending the system in C++, see the Framework API reference.

Contents

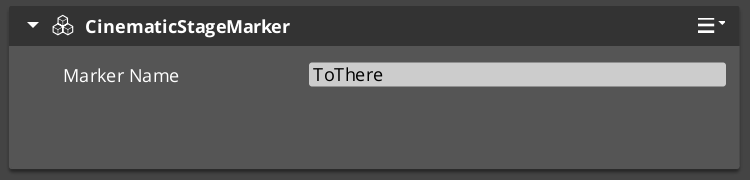

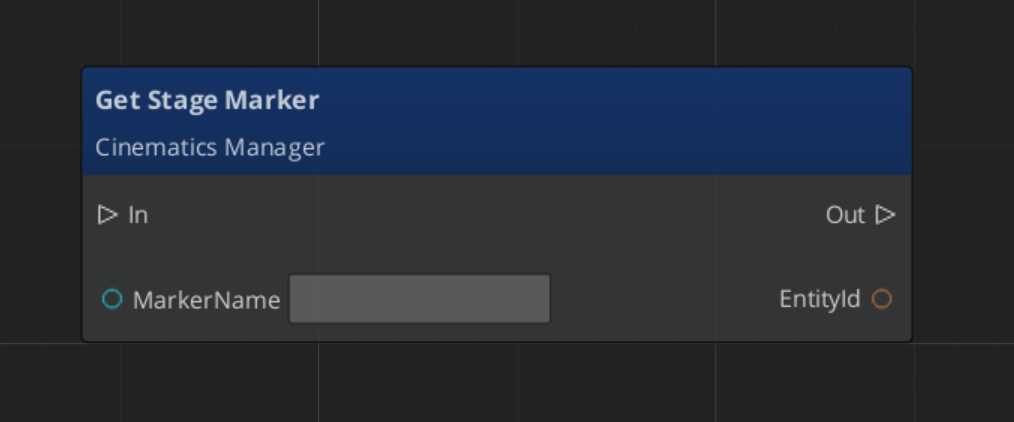

Stage Markers

Stage markers are named anchor entities placed in each level that serve as spatial reference points for cinematic sequences. Performers and camera systems look up markers by name at runtime to determine where actors should stand, face, or move to during a cutscene.

This design decouples authored dialogue data from level-specific layout. The same sequence asset plays in any level as long as that level provides CinematicStageMarkerComponent entities with the expected names.

| Component | Purpose |
|---|---|
| CinematicStageMarkerComponent | Marks a named position in the level for cinematic staging. |

To register a marker, add CinematicStageMarkerComponent to an entity in the level and give it a name. The Cinematics Manager automatically discovers and registers all markers in the level on startup via RegisterStageMarker. At runtime, any system can retrieve a marker entity by name with GetStageMarker.
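
The name-based lookup that makes sequences level-independent can be modeled as a simple registry. This Python sketch only mirrors the idea: `StageMarkerRegistrySketch` is hypothetical, the method names are Python-style stand-ins for RegisterStageMarker and GetStageMarker, and entity IDs are represented as plain strings.

```python
class StageMarkerRegistrySketch:
    """Hypothetical per-level registry of named stage markers."""

    def __init__(self):
        self._markers = {}

    def register_stage_marker(self, name, entity_id):
        # A later registration with the same name replaces the earlier one (assumed).
        self._markers[name] = entity_id

    def get_stage_marker(self, name):
        # Returns None when the level does not provide the marker.
        return self._markers.get(name)


# Two levels expose the same marker name at different entities.
tavern = StageMarkerRegistrySketch()
tavern.register_stage_marker("SpeakerMark", "Tavern/BarStool")

forest = StageMarkerRegistrySketch()
forest.register_stage_marker("SpeakerMark", "Forest/Campfire")
```

A sequence that refers to "SpeakerMark" would resolve to a different entity in each level without any change to the dialogue asset.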

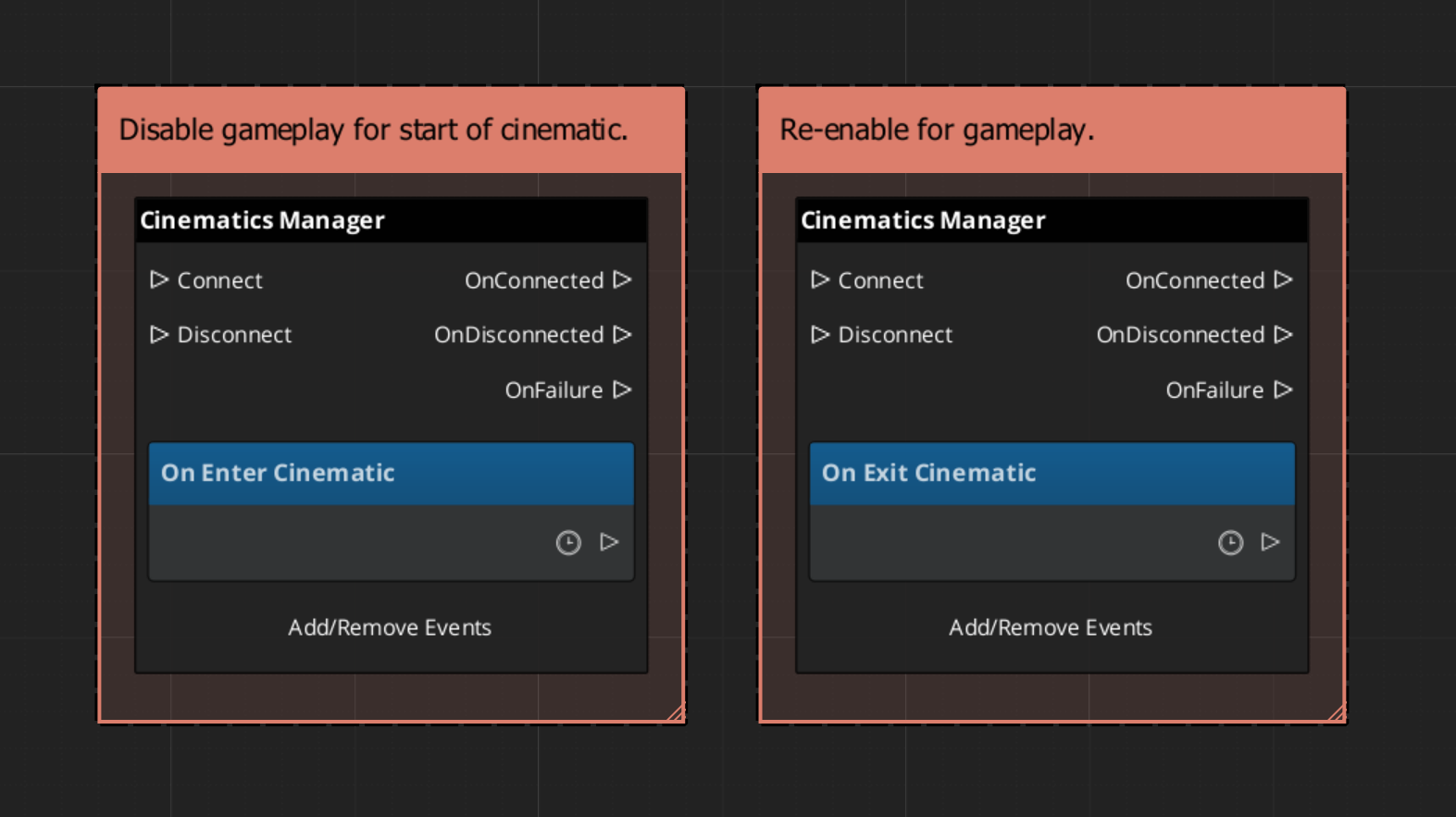

Cinematic Events

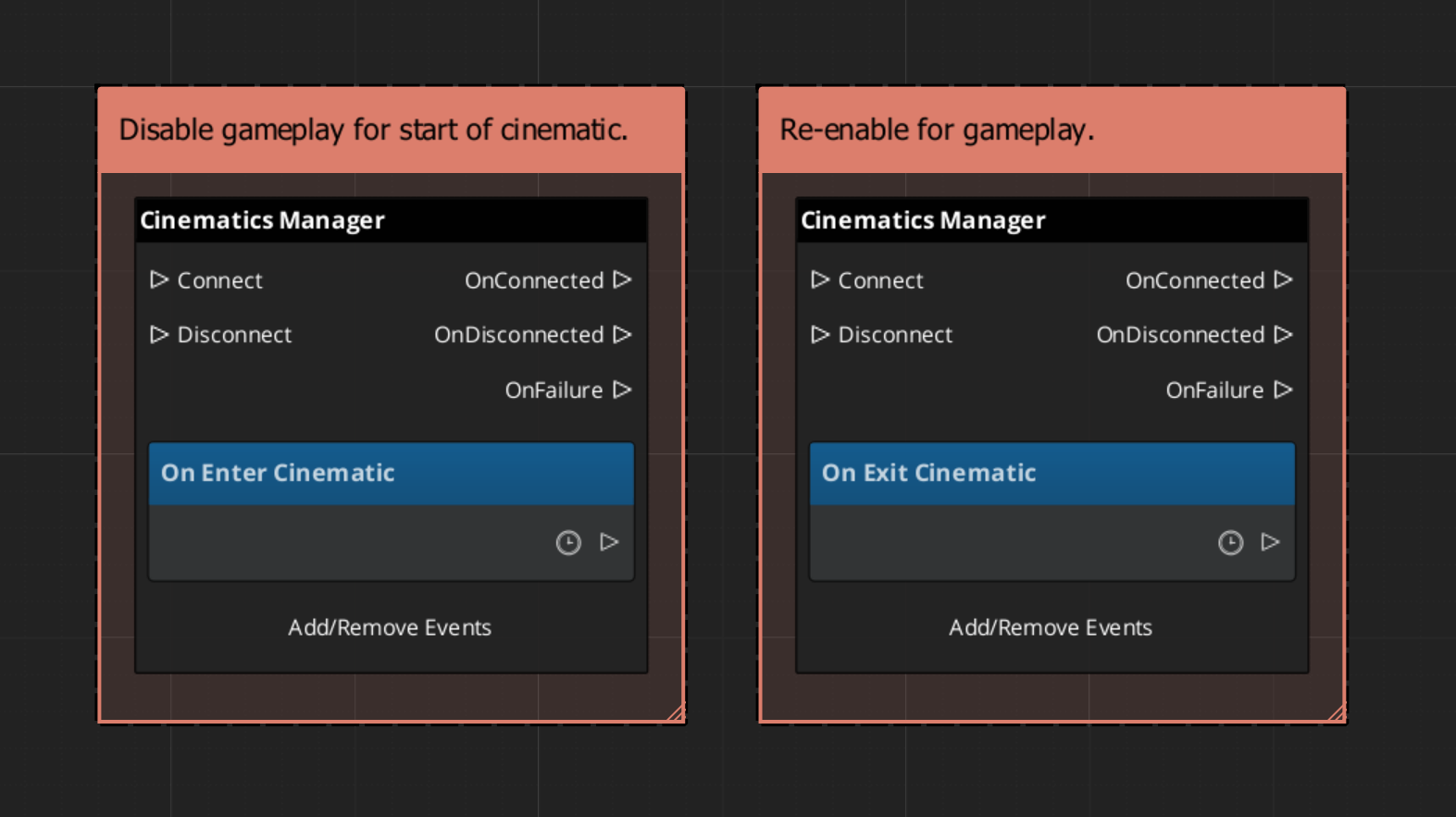

When a cinematic begins and ends, the Cinematics Manager broadcasts events on CinematicsManagerNotificationBus. Listen to these in any system that needs to yield or reclaim control during a cutscene — player input, HUD, AI, camera.

| Event | When It Fires | What to Do |
|---|---|---|
| EnterCinematic | A cinematic sequence has started. | Disable player input, hide the HUD, suspend AI. |
| ExitCinematic | The cinematic sequence has ended. | Re-enable input, restore HUD, resume AI. |

Starting and Ending Cinematics

Call BeginCinematic to signal that a cinematic is starting and EndCinematic when it completes. These calls broadcast EnterCinematic and ExitCinematic respectively. They do not drive animation or sequence playback directly — that is the role of DialogueSequencerComponent. The Cinematics Manager handles the global state change so all listening systems respond in one coordinated broadcast.

| Bus | Method | What It Does |
|---|---|---|
| CinematicsManagerRequestBus | BeginCinematic | Broadcasts EnterCinematic to all listeners. |
| CinematicsManagerRequestBus | EndCinematic | Broadcasts ExitCinematic to all listeners. |
| CinematicsManagerRequestBus | RegisterStageMarker | Adds a marker to the registry by name. |
| CinematicsManagerRequestBus | GetStageMarker | Returns the entity for a marker by name. |
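
The request and notification split can be pictured with a small observer model. This is a Python illustration of the pattern only, not the O3DE EBus API: `CinematicsManagerSketch`, `PlayerInputSketch`, and `connect` are hypothetical, and in a real project listeners would connect to CinematicsManagerNotificationBus from ScriptCanvas or C++.

```python
class CinematicsManagerSketch:
    """Hypothetical manager that broadcasts a global cinematic state change."""

    def __init__(self):
        self._listeners = []
        self.in_cinematic = False

    def connect(self, listener):
        self._listeners.append(listener)

    def begin_cinematic(self):
        self.in_cinematic = True
        for listener in self._listeners:
            listener.enter_cinematic()

    def end_cinematic(self):
        self.in_cinematic = False
        for listener in self._listeners:
            listener.exit_cinematic()


class PlayerInputSketch:
    """Example listener that yields control while a cinematic plays."""

    def __init__(self):
        self.enabled = True

    def enter_cinematic(self):
        self.enabled = False

    def exit_cinematic(self):
        self.enabled = True


manager = CinematicsManagerSketch()
player_input = PlayerInputSketch()
manager.connect(player_input)
```

The manager itself plays nothing. It only flips the global state and notifies listeners, so input, HUD, and AI can each decide how to yield and reclaim control.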

ScriptCanvas Usage

Reacting to Cinematic State

To pause gameplay systems when a cinematic starts and resume them when it ends, connect to CinematicsManagerNotificationBus:

Triggering a Cinematic

To start a cinematic from a trigger or cutscene entity, call BeginCinematic, drive the sequence through the Dialogue Sequencer, then call EndCinematic on completion:

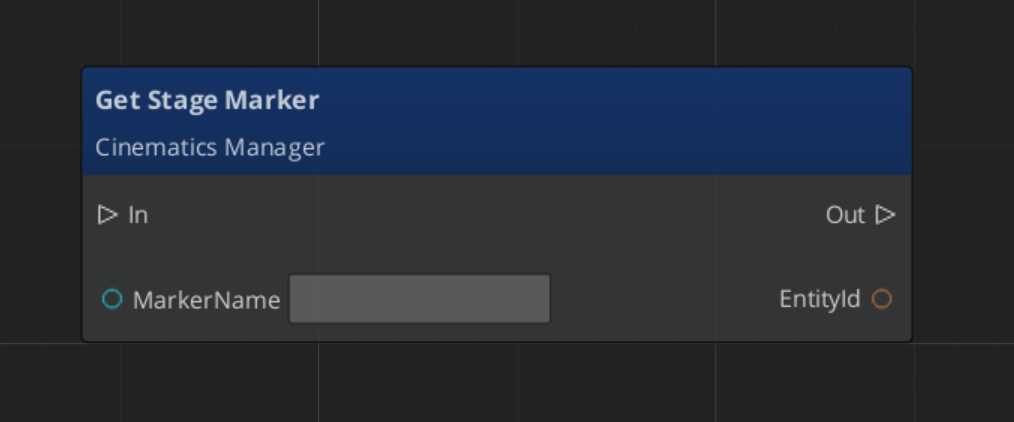

Looking Up a Stage Marker

Quick Reference

| Need | Bus | Method / Event |
|---|---|---|
| Start a cinematic | CinematicsManagerRequestBus | BeginCinematic |
| End a cinematic | CinematicsManagerRequestBus | EndCinematic |
| Register a stage marker | CinematicsManagerRequestBus | RegisterStageMarker |
| Retrieve a stage marker entity | CinematicsManagerRequestBus | GetStageMarker |
| Know when a cinematic starts | CinematicsManagerNotificationBus | EnterCinematic |
| Know when a cinematic ends | CinematicsManagerNotificationBus | ExitCinematic |

Glossary

| Term | Meaning |
|---|---|
| Stage Marker | A named anchor entity in a level used as a spatial reference for cinematic positioning |
| Cinematic | A global state where the Cinematics Manager has signaled that a cutscene is in progress |

For full definitions, see the Glossary.

See Also

For the full API, component properties, and C++ extension guide:

For related systems:


4.2 - Dialogue System

How to author and play back branching dialogue in GS_Play — dialogue databases, node types, conditions, effects, and the runtime sequencer.

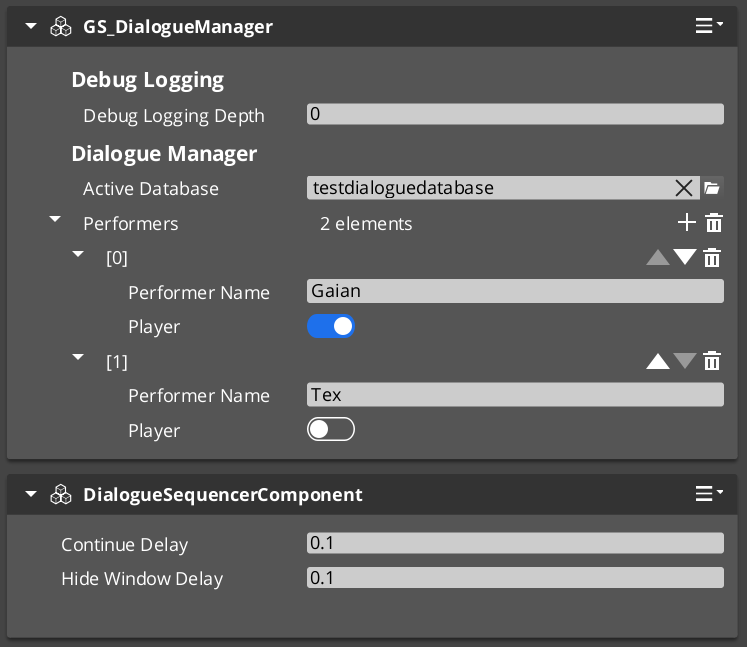

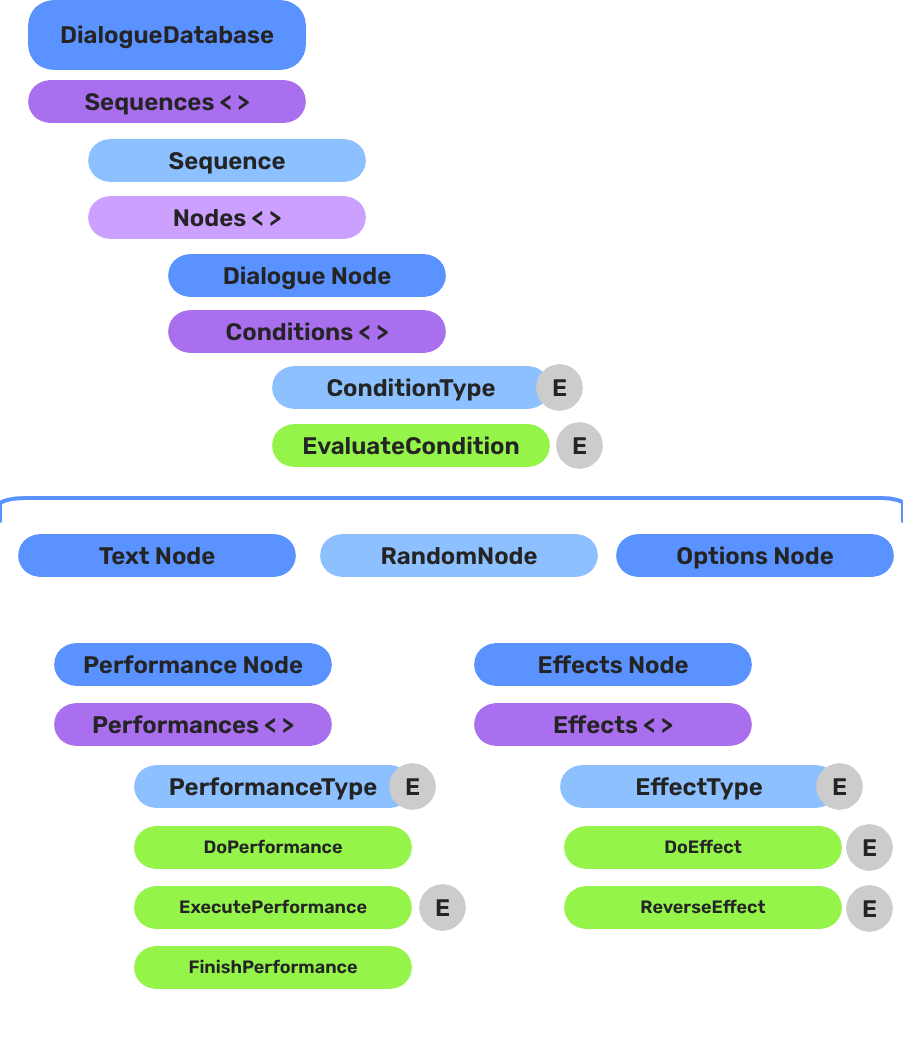

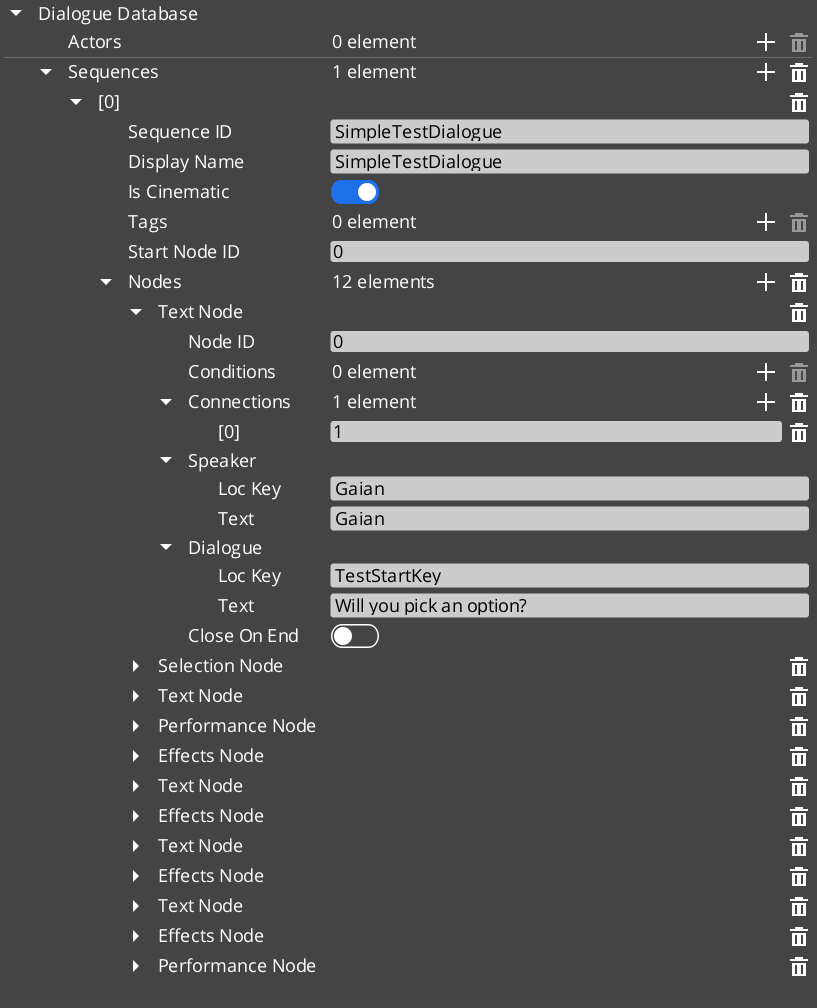

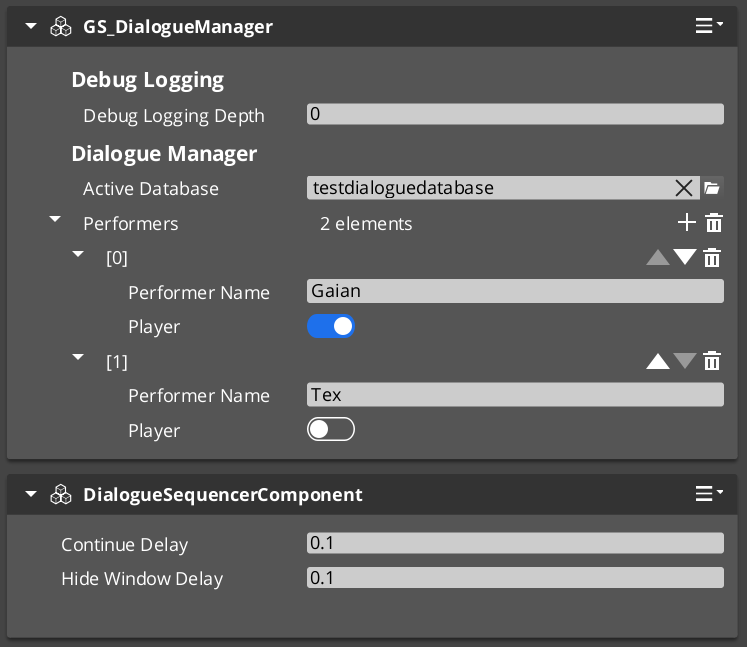

The Dialogue System is the authoring and runtime core of GS_Cinematics. Dialogue content is stored in .dialoguedb assets (DialogueDatabase), which contain named actors and a collection of DialogueSequence records. Each sequence is a graph of polymorphic nodes. At runtime, GS_DialogueManagerComponent manages the database and maps performer names to entities in the level, while DialogueSequencerComponent drives playback — walking the graph, evaluating conditions on branches, executing effects, and emitting events that UI and other systems consume.

For architecture details, component properties, and extending the system in C++, see the Framework API reference.

Contents

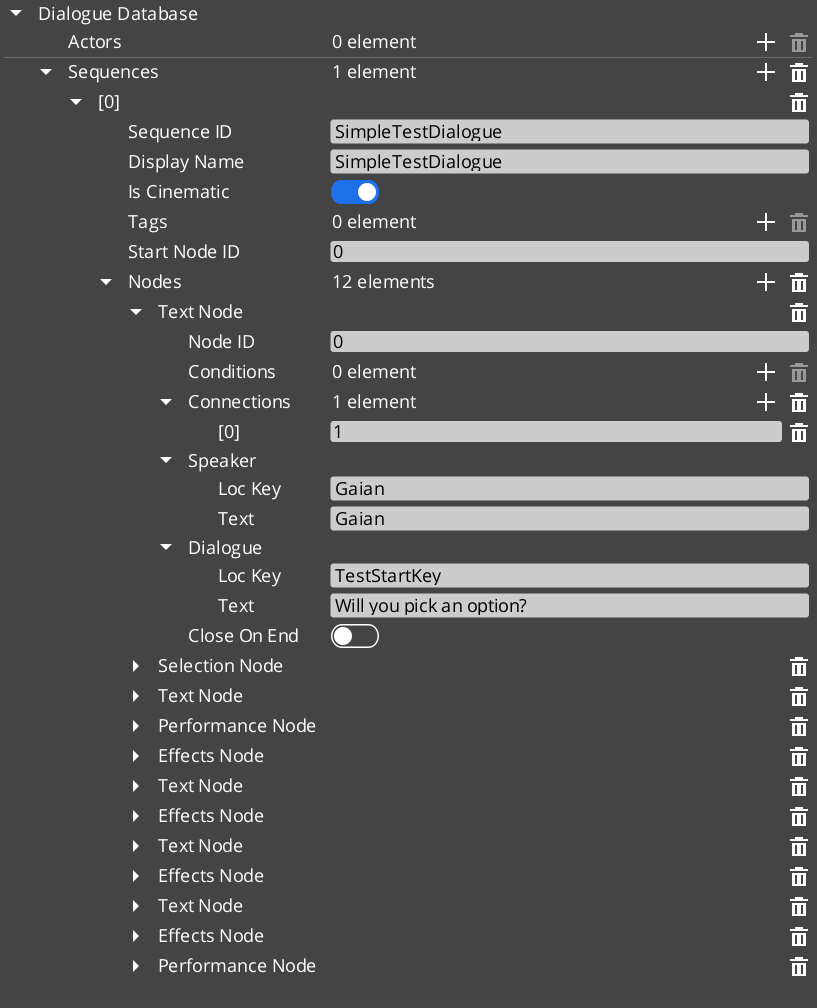

Dialogue Database

Breakdown

A dialogue sequence is authored in the node editor, stored in a .dialoguedb asset, and driven at runtime by the Dialogue Manager and Sequencer:

| Layer | What It Means |

|---|

| DialogueDatabase | Stores named actors and sequences. Loaded at runtime by the Dialogue Manager. |

| DialogueSequence | A directed node graph. The Sequencer walks from startNodeId through Text, Selection, Effects, and Performance nodes. |

| Conditions | Polymorphic evaluators on branches. Failed conditions skip that branch automatically. |

| Effects | Polymorphic actions at EffectsNodeData nodes — set records, toggle entities. |

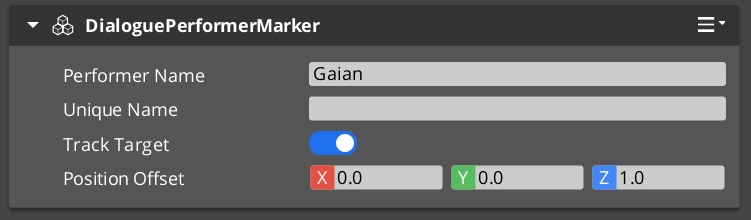

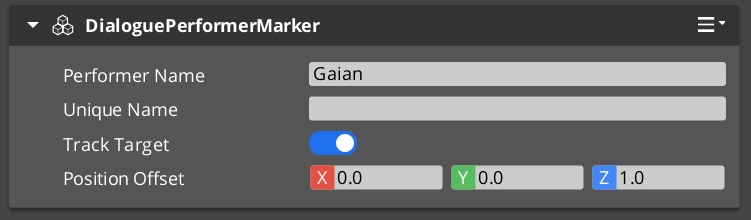

| Performers | Named actor anchors in the level. Resolved from database actor names via DialoguePerformerMarkerComponent. |

Conditions, effects, and performances are discovered automatically at startup — custom types from any gem appear in the editor without modifying GS_Cinematics.

E Indicates extensible classes and methods.

Patterns - Complete list of system patterns used in GS_Play.

DialogueDatabase Asset

The DialogueDatabase is a .dialoguedb asset authored in the O3DE node editor. It is the single source of truth for all dialogue content in a project section.

| Asset Contents | Purpose |
|---|---|
| Actors | Named character definitions with portrait and metadata. |
| Sequences | Named DialogueSequence records, each a graph of nodes. |

Load a database at runtime by calling ChangeDialogueDatabase on DialogueManagerRequestBus. The manager resolves performer names from the database against DialoguePerformerMarkerComponent entities placed in the current level.

Node Types

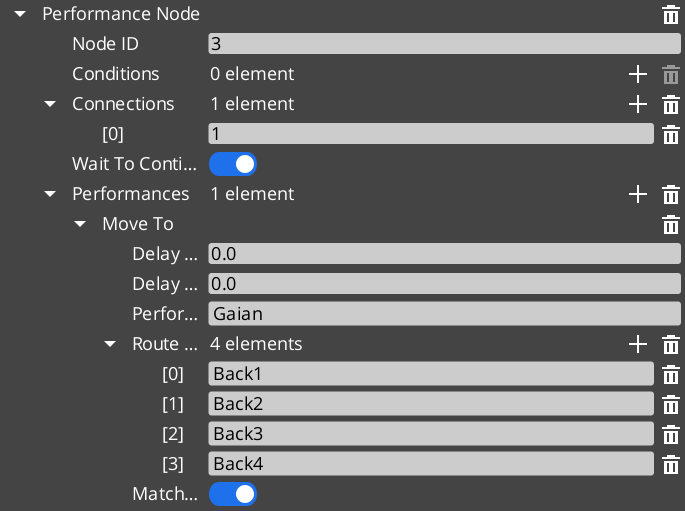

Each sequence is a directed graph of DialogueNodeData nodes. The sequencer starts at startNodeId and follows the graph's connections from node to node.

| Node Type | What It Does |
|---|---|
| TextNodeData | Displays a speaker line. Supports LocalizedStringId for localization. |
| SelectionNodeData | Presents the player with a list of choices. Branches based on selection. |
| RandomNodeData | Selects a random outgoing branch. |
| EffectsNodeData | Executes one or more DialogueEffect objects without advancing to a text node. |
| PerformanceNodeData | Triggers a DialoguePerformance action and waits for OnPerformanceComplete before continuing. |
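The walk from startNodeId can be pictured with a small standalone model. This is only a sketch: `Node`, `Sequence`, and `CollectLines` are hypothetical names invented for illustration and are not the GS_Cinematics types. Selection and Random nodes are left out so that each node has a single `nextId`.

```cpp
#include <cstddef>
#include <map>
#include <string>
#include <vector>

// Hypothetical stand-ins for illustration only; not the GS_Cinematics classes.
enum class NodeType { Text, Effects, Performance };

struct Node {
    NodeType type;
    std::string payload;  // speaker line, effect name, or performance name
    std::string nextId;   // empty string marks the end of the sequence
};

struct Sequence {
    std::string startNodeId;
    std::map<std::string, Node> nodes;
};

// Walks from startNodeId and returns the text lines in the order they are reached.
// The visit counter stops the walk if the graph accidentally contains a cycle.
std::vector<std::string> CollectLines(const Sequence& sequence) {
    std::vector<std::string> lines;
    std::string id = sequence.startNodeId;
    std::size_t visited = 0;
    while (!id.empty() && visited++ < sequence.nodes.size()) {
        auto it = sequence.nodes.find(id);
        if (it == sequence.nodes.end()) break;  // dangling connection
        if (it->second.type == NodeType::Text) lines.push_back(it->second.payload);
        id = it->second.nextId;
    }
    return lines;
}
```

In this model, Effects and Performance nodes are passed through without producing a line, which mirrors the idea that only text nodes reach the dialogue display.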

Conditions

Conditions are polymorphic objects attached to sequence branches. The sequencer evaluates all conditions on a branch before following it. Branches whose conditions fail are skipped.

| Condition Type | What It Evaluates |
|---|---|
| Boolean_DialogueCondition | A base boolean comparison. |
| Record_DialogueCondition | Checks a game record via the RecordKeeper system. Extends Boolean_DialogueCondition. |
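The "all conditions must pass, otherwise skip the branch" rule can be sketched as follows. The types here are hypothetical (`Condition`, `Branch`, `SelectNextNode`), and taking the first passing branch is an assumption made for the example; the source does not state how the sequencer chooses when several branches are open.

```cpp
#include <functional>
#include <optional>
#include <string>
#include <vector>

// Hypothetical stand-ins for illustration only; not the GS_Cinematics classes.
using Condition = std::function<bool()>;

struct Branch {
    std::string targetNodeId;           // node this branch leads to
    std::vector<Condition> conditions;  // every entry must pass
};

// True only if every condition on the branch passes.
// A branch with no conditions always passes.
bool BranchPasses(const Branch& branch) {
    for (const Condition& condition : branch.conditions) {
        if (!condition()) return false;
    }
    return true;
}

// Assumption: the first branch whose conditions all pass is followed.
// Branches with a failing condition are skipped.
std::optional<std::string> SelectNextNode(const std::vector<Branch>& branches) {
    for (const Branch& branch : branches) {
        if (BranchPasses(branch)) return branch.targetNodeId;
    }
    return std::nullopt;  // no branch is currently open
}
```

A record check would be one such `Condition`, for example a lambda that looks up a value and compares it against a threshold.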

Effects

Effects are polymorphic objects executed when the sequencer reaches an EffectsNodeData node. Effects can also be reversed.

| Effect Type | What It Does |
|---|---|
| SetRecords_DialogueEffect | Sets one or more game records via the RecordKeeper system. |
| ToggleEntitiesActive_DialogueEffect | Toggles one or more entities active or inactive. |
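The source does not describe how reversal is implemented. One common way to make an effect reversible is to remember the state it overwrote, as in this sketch. Everything here is hypothetical: `Records` stands in for game records, and `Effect`, `SetRecordEffect`, `ExecuteAll`, and `ReverseAll` are invented names, not the GS_Cinematics or RecordKeeper API.

```cpp
#include <map>
#include <memory>
#include <string>
#include <utility>
#include <vector>

// Hypothetical record store for illustration; not the RecordKeeper API.
using Records = std::map<std::string, int>;

struct Effect {
    virtual ~Effect() = default;
    virtual void Execute(Records& records) = 0;
    virtual void Reverse(Records& records) = 0;
};

// Sets one record and remembers the previous value so it can be restored.
class SetRecordEffect : public Effect {
public:
    SetRecordEffect(std::string key, int value) : m_key(std::move(key)), m_value(value) {}

    void Execute(Records& records) override {
        auto it = records.find(m_key);
        m_hadPrevious = it != records.end();
        m_previous = m_hadPrevious ? it->second : 0;
        records[m_key] = m_value;
    }

    void Reverse(Records& records) override {
        if (m_hadPrevious) records[m_key] = m_previous;
        else records.erase(m_key);
    }

private:
    std::string m_key;
    int m_value;
    int m_previous = 0;
    bool m_hadPrevious = false;
};

using EffectList = std::vector<std::unique_ptr<Effect>>;

// Runs every effect on an effects node in order.
void ExecuteAll(const EffectList& effects, Records& records) {
    for (const auto& effect : effects) effect->Execute(records);
}

// Undoes the effects in the opposite order to restore the earlier state.
void ReverseAll(const EffectList& effects, Records& records) {
    for (auto it = effects.rbegin(); it != effects.rend(); ++it) (*it)->Reverse(records);
}
```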

Performances

Performances are polymorphic objects executed when the sequencer reaches a PerformanceNodeData node. A performance can be blocking, in which case the sequencer pauses and waits for OnPerformanceComplete before advancing, or non-blocking, in which case dialogue continues immediately after the performance fires.

Like conditions and effects, performance types are discovered automatically at startup via the type registry. Custom performance types from any gem appear in the editor without modifying GS_Cinematics.

| Performance Type | What It Does |
|---|---|
| MoveTo_DialoguePerformance | Smoothly moves a named performer to a CinematicStageMarkerComponent position. Fires MoveTo_PerformanceNotificationBus; a companion component on the performer entity responds and moves it, then signals completion. |
| PathTo_DialoguePerformance | Navigates a named performer to a marker using the scene navmesh. Uses RecastNavigation pathfinding through the world geometry rather than a direct interpolation. |
| RepositionPerformer_DialoguePerformance | Instantly teleports a performer to a marker. Non-blocking: dialogue advances without waiting. |
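The difference between blocking and non-blocking performances comes down to whether the sequencer waits for a completion signal. A minimal sketch, assuming a flat list of steps rather than a graph; `PerformanceStep` and `MiniSequencer` are hypothetical names and do not reflect the real component.

```cpp
#include <cstddef>
#include <string>
#include <utility>
#include <vector>

// Hypothetical model for illustration only; not DialogueSequencerComponent.
struct PerformanceStep {
    std::string name;
    bool blocking;  // true: wait for completion before advancing
};

class MiniSequencer {
public:
    explicit MiniSequencer(std::vector<PerformanceStep> steps) : m_steps(std::move(steps)) {}

    // Starts steps until a blocking one is reached or the list ends.
    void Advance() {
        while (!m_waiting && m_index < m_steps.size()) {
            const PerformanceStep& step = m_steps[m_index++];
            m_started.push_back(step.name);
            m_waiting = step.blocking;
        }
    }

    // Called when the current blocking performance reports completion.
    void OnPerformanceComplete() {
        if (!m_waiting) return;  // nothing is pending, ignore the signal
        m_waiting = false;
        Advance();
    }

    bool IsWaiting() const { return m_waiting; }
    bool IsFinished() const { return !m_waiting && m_index == m_steps.size(); }
    const std::vector<std::string>& Started() const { return m_started; }

private:
    std::vector<PerformanceStep> m_steps;
    std::vector<std::string> m_started;
    std::size_t m_index = 0;
    bool m_waiting = false;
};
```

With the steps "reposition (non-blocking), move (blocking), path (blocking)", a single `Advance()` starts the first two and then waits; each `OnPerformanceComplete()` releases the next step.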

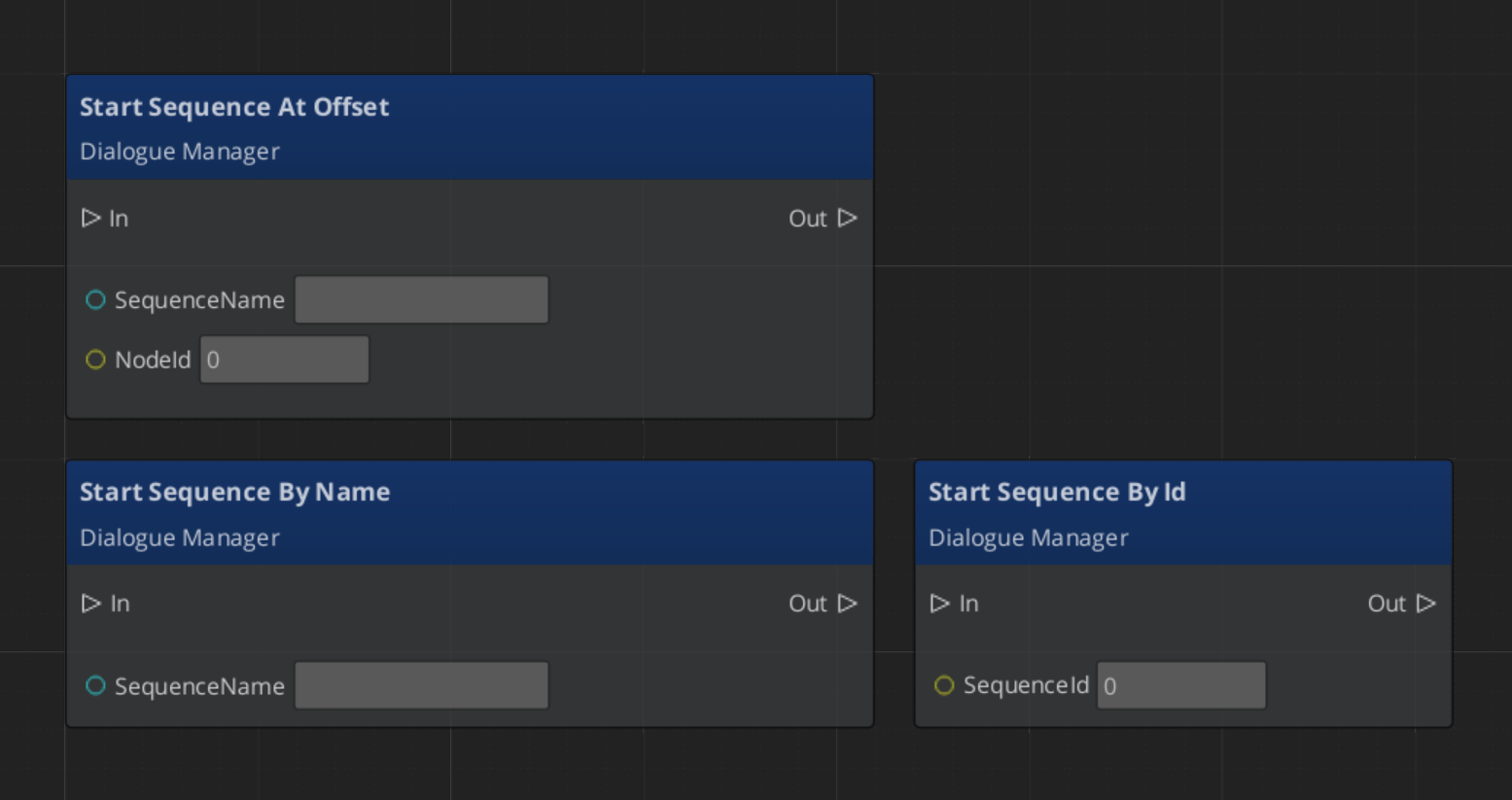

Runtime Playback

GS_DialogueManagerComponent

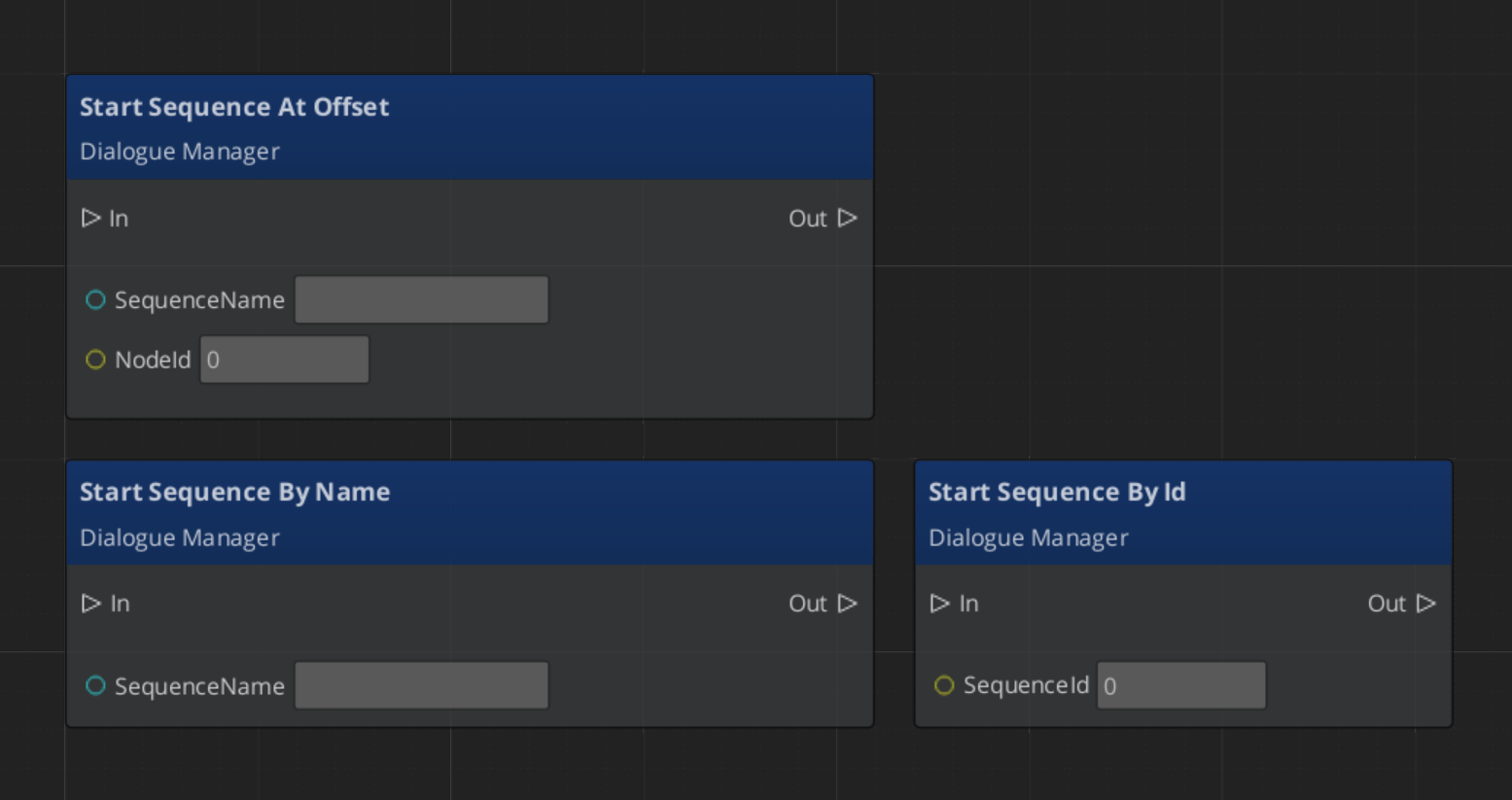

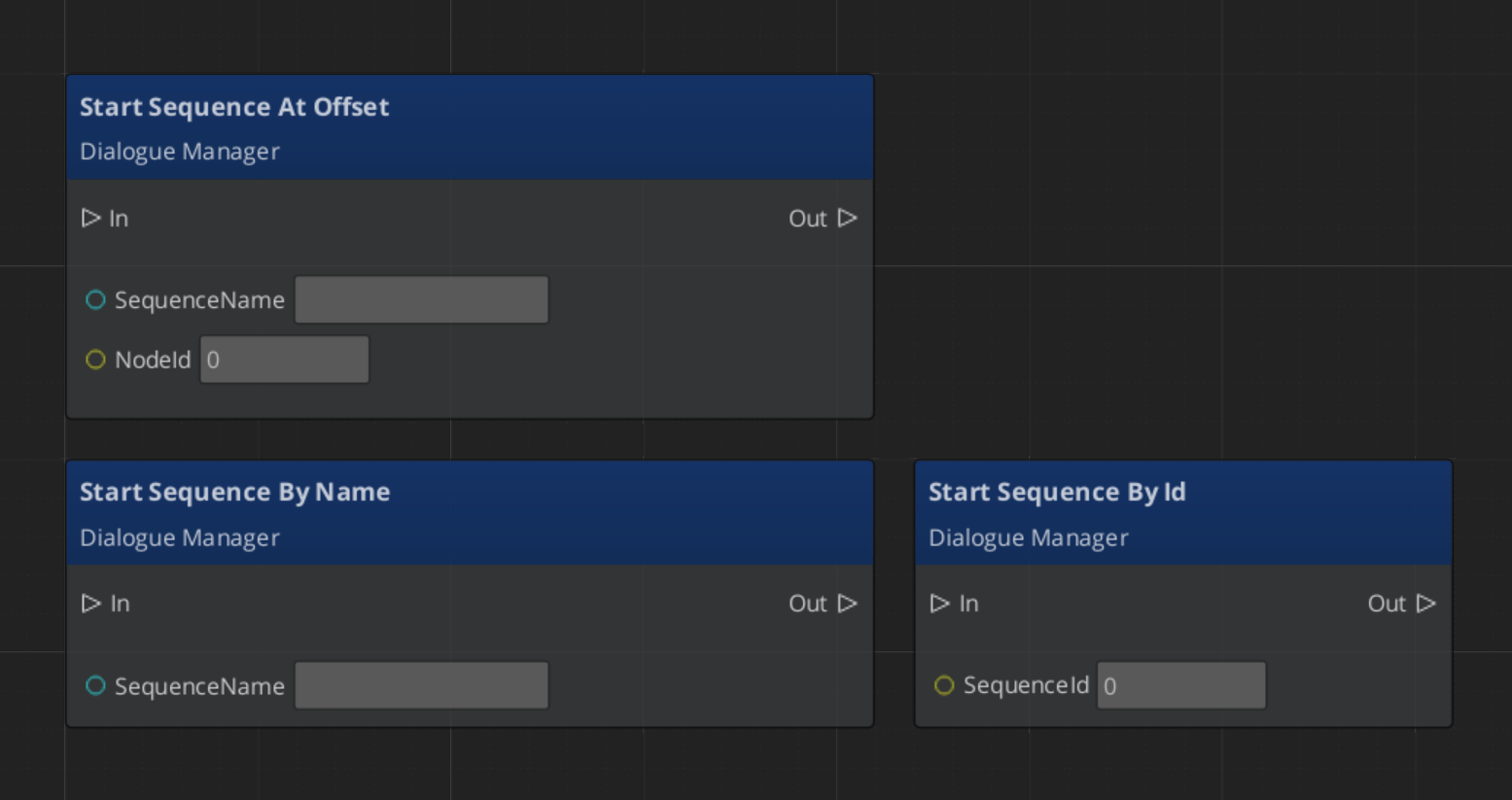

The Dialogue Manager is the top-level manager for all dialogue. It holds the active DialogueDatabase, maps performer names to level entities via DialoguePerformerMarkerComponent, and is the entry point for starting sequences by name.

| Bus | Method | What It Does |
|---|---|---|
| DialogueManagerRequestBus | StartDialogueSequenceByName | Starts a named sequence from the active database. |
| DialogueManagerRequestBus | ChangeDialogueDatabase | Loads a different DialogueDatabase asset. |
| DialogueManagerRequestBus | RegisterPerformerMarker | Registers a performer entity by name for the current level. |
| DialogueManagerRequestBus | GetPerformer | Returns the entity for a named performer. |

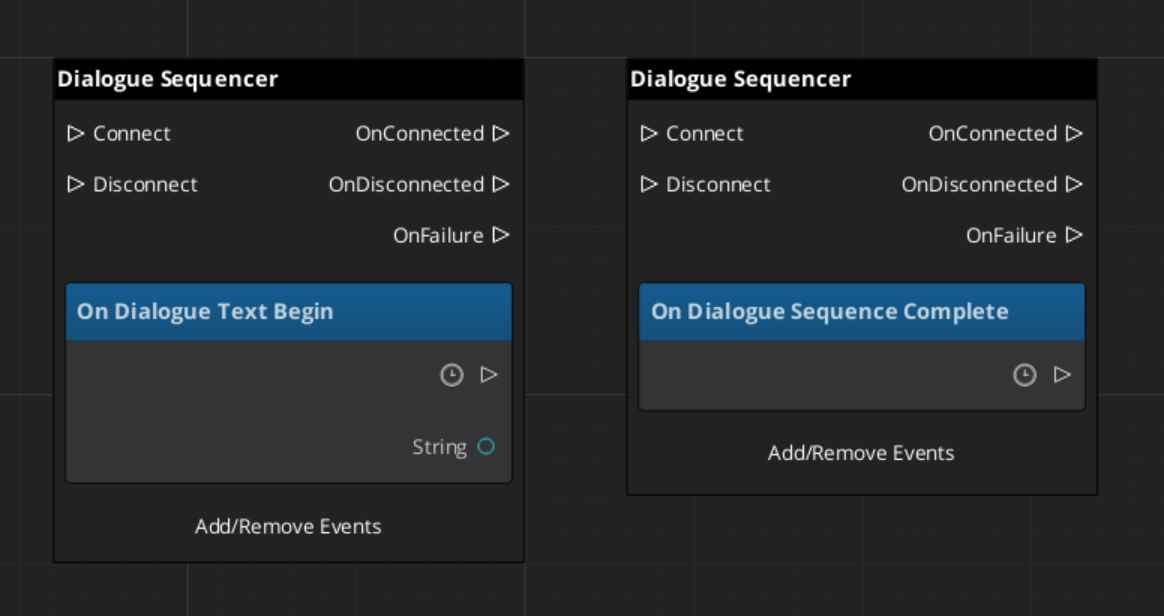

DialogueSequencerComponent

The Dialogue Sequencer drives sequence playback. It walks the node graph, evaluates conditions, executes effects, triggers performances, and emits notifications when text lines begin and when the sequence completes. It is typically placed on a dedicated sequencer entity in the level alongside DialogueUIBridgeComponent.

| Bus | Method / Event | Purpose |

|---|

DialogueSequencerRequestBus | StartDialogueBySequence | Begins playback of a given sequence object. |

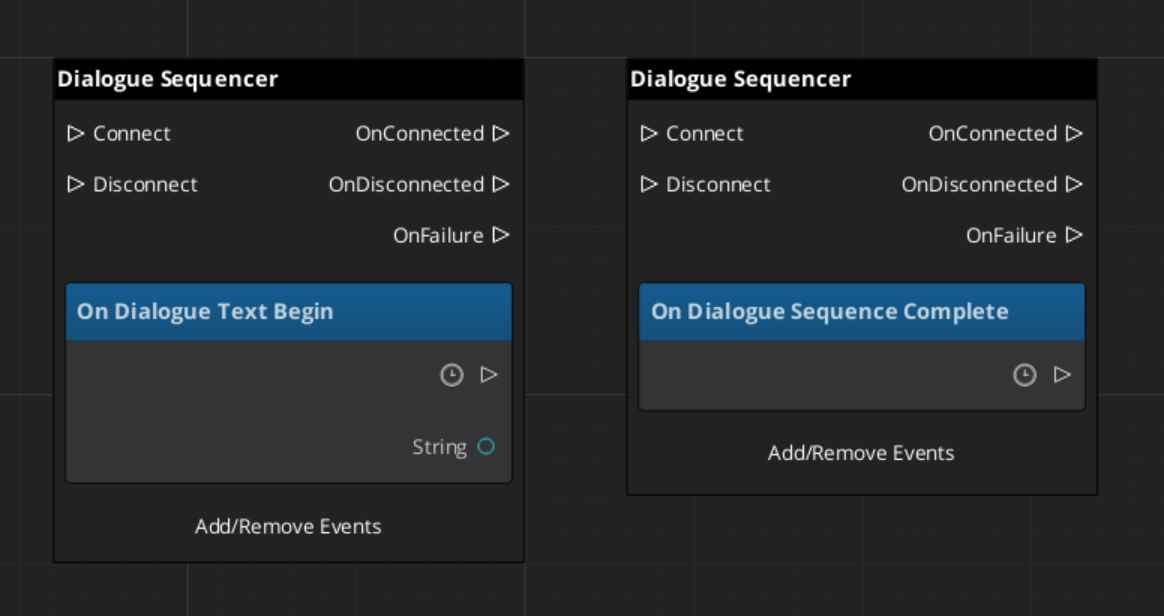

DialogueSequencerNotificationBus | OnDialogueTextBegin | Fires when a TextNodeData begins — carries speaker and text data. |

DialogueSequencerNotificationBus | OnDialogueSequenceComplete | Fires when the sequence reaches its end node. |

Localization

Text nodes store speaker lines as LocalizedStringId references rather than raw strings. A LocalizedStringId holds a key and a default fallback text. At runtime, the sequencer calls Resolve() on each LocalizedStringId, which looks up the key in the active LocalizedStringTable and returns the localized string for the current locale. If no table is loaded or the key is not found, the default text is returned.

To use localization, load a LocalizedStringTable asset through your project’s initialization flow before any dialogue plays.
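The fallback behaviour described above (use the table entry if present, otherwise the default text) can be sketched in a few lines. `StringTable` and `LocalizedString` are hypothetical stand-ins for LocalizedStringTable and LocalizedStringId, and the signature of `Resolve` here is an assumption made so the example is self-contained.

```cpp
#include <string>
#include <unordered_map>

// Hypothetical stand-in for a loaded string table: key -> localized text.
using StringTable = std::unordered_map<std::string, std::string>;

// Hypothetical stand-in for LocalizedStringId.
struct LocalizedString {
    std::string key;
    std::string defaultText;

    // Passing nullptr models "no table is loaded".
    std::string Resolve(const StringTable* table) const {
        if (table != nullptr) {
            auto it = table->find(key);
            if (it != table->end()) return it->second;  // localized text found
        }
        return defaultText;  // no table, or key missing from the table
    }
};
```

Because the default text always resolves, dialogue can be authored and tested before any string table exists.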

ScriptCanvas Usage

Starting a Dialogue Sequence

Reacting to Sequence States

Listen on DialogueSequencerNotificationBus to receive speaker and text data as each line begins.

Quick Reference

| Need | Bus | Method / Event |
|---|---|---|
| Start a sequence by name | DialogueManagerRequestBus | StartDialogueSequenceByName |
| Load a different database | DialogueManagerRequestBus | ChangeDialogueDatabase |
| Register a performer in the level | DialogueManagerRequestBus | RegisterPerformerMarker |
| Get a performer entity | DialogueManagerRequestBus | GetPerformer |
| Start a sequence object directly | DialogueSequencerRequestBus | StartDialogueBySequence |
| React to a text line starting | DialogueSequencerNotificationBus | OnDialogueTextBegin |
| React to sequence completion | DialogueSequencerNotificationBus | OnDialogueSequenceComplete |

Glossary

| Term | Meaning |
|---|---|
| DialogueDatabase | A .dialoguedb asset containing actors and dialogue sequences |
| DialogueSequence | A graph of nodes that defines a single dialogue conversation |
| DialogueNodeData | A polymorphic node in the sequence graph (Text, Selection, Random, Effects, Performance) |
| DialogueCondition | A polymorphic evaluator attached to branches that gates progression |
| DialogueEffect | A polymorphic action executed at EffectsNodeData nodes (e.g., setting records) |
| DialoguePerformance | A polymorphic action executed at PerformanceNodeData nodes: moves, paths, or repositions performers. Can be blocking or non-blocking |
| Performer | A named actor entity in the level mapped from the database via DialoguePerformerMarkerComponent |

For full definitions, see the Glossary.

See Also

For the full API, component properties, and C++ extension guide:

For related systems:

Get GS_Cinematics

GS_Cinematics — Explore this gem on the product page and add it to your project.

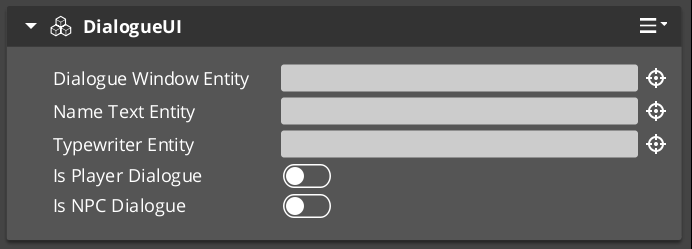

4.2.1 - Dialogue UI

How to display dialogue text, player choices, and typewriter effects in GS_Play — screen-space and world-space UI components and the bridge that connects them to the sequencer.

The Dialogue UI layer is the display side of the GS_Cinematics system. It receives events from DialogueSequencerComponent through DialogueUIBridgeComponent and routes them to the correct UI implementation — screen-space for HUD-style dialogue or world-space for speech bubbles above actors. Player choices are handled by selection components, and the TypewriterComponent reveals text character-by-character. BabbleComponent optionally plays per-character audio babble to give speakers a voice.

For architecture details, component properties, and extending the system in C++, see the Framework API reference.

Component Overview

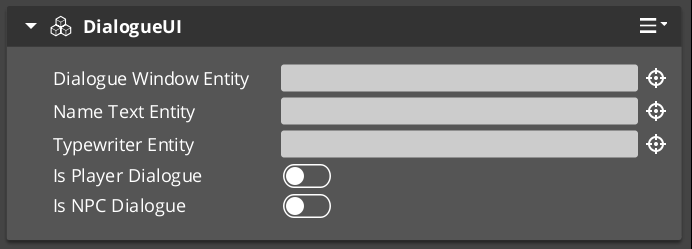

| Component | Space | Purpose |
|---|---|---|
| DialogueUIComponent | Screen | Displays the current speaker line on the HUD. |
| WorldDialogueUIComponent | World | Displays speech bubbles positioned above actors in 3D space. |
| DialogueUISelectionComponent | Screen | Renders player choice options on the HUD. |
| WorldDialogueUISelectionComponent | World | Renders player choice options in 3D world space. |
| DialogueUIBridgeComponent | N/A | Routes sequencer events to UI and player input back to sequencer. |
| TypewriterComponent | N/A | Reveals text one character at a time with configurable timing. |
| BabbleComponent | N/A | Plays per-character audio babble for the active speaker. |

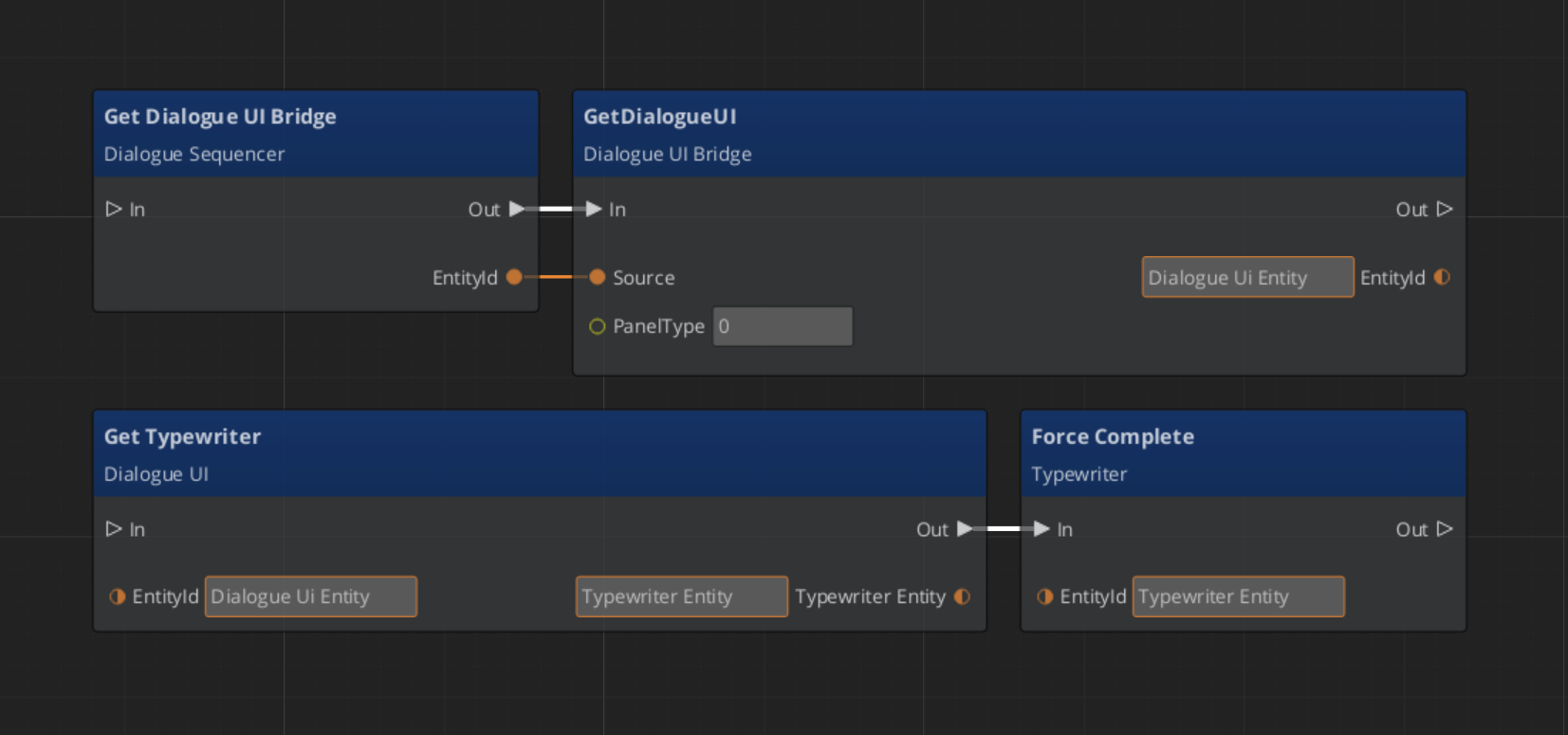

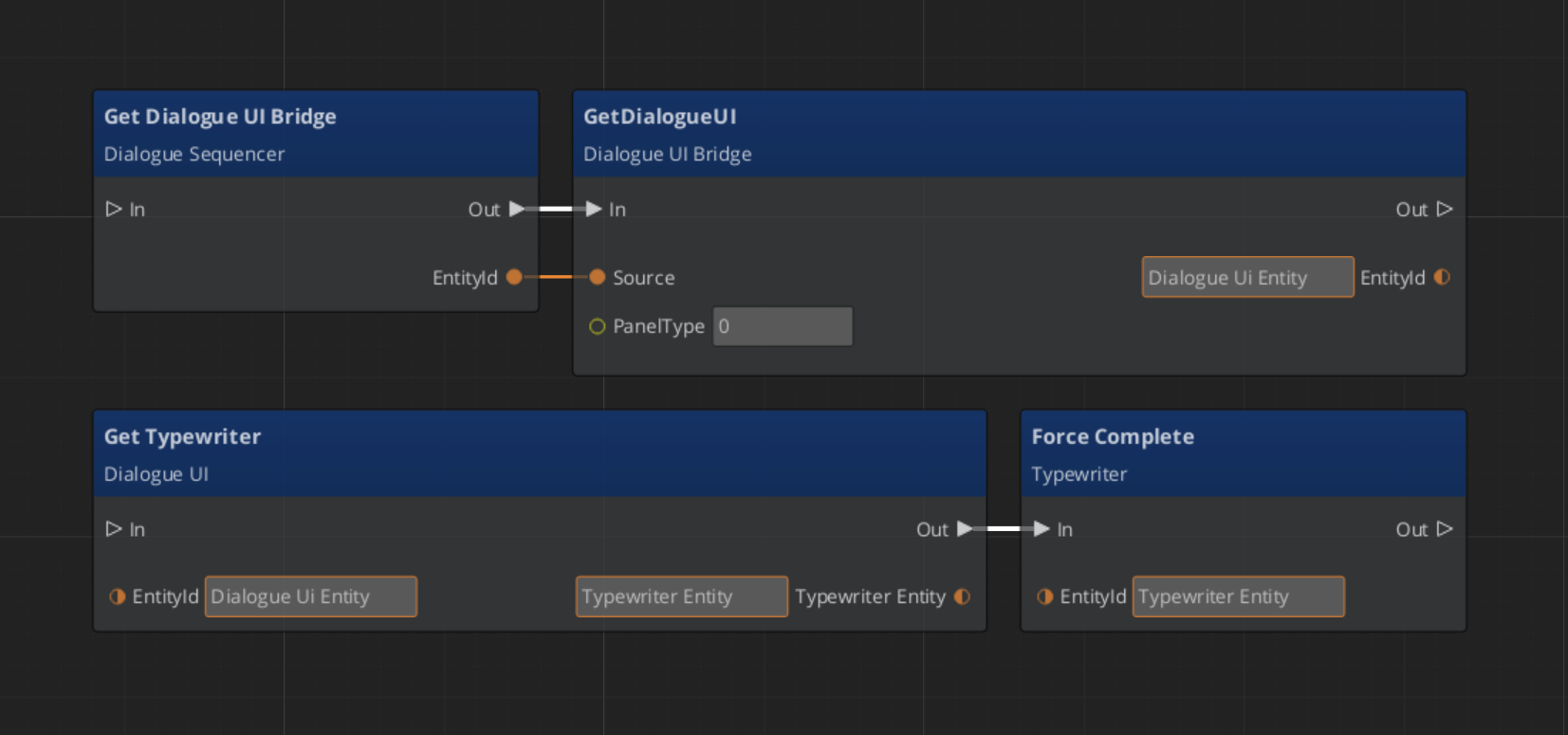

DialogueUIBridgeComponent

The Bridge component is the central connector between the sequencer and the UI. Place it on the same entity as DialogueSequencerComponent. It listens for OnDialogueTextBegin and OnDialogueSequenceComplete from the sequencer and forwards those events to whatever UI components are registered with it. It also receives player selection events from the selection UI and forwards them back to the sequencer.

This design keeps the sequencer and the display fully decoupled — swapping in a new UI implementation only requires registering it with the Bridge.
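The decoupling can be illustrated with a small registration model. This is a sketch under assumptions: `DialogueDisplay`, `MiniBridge`, and `RecordingDisplay` are hypothetical names, and the real Bridge communicates over EBuses rather than holding a raw pointer. The method names only echo the ones in the table that follows.

```cpp
#include <string>
#include <vector>

// Hypothetical display interface for illustration; not a GS_Cinematics type.
struct DialogueDisplay {
    virtual ~DialogueDisplay() = default;
    virtual void ShowLine(const std::string& speaker, const std::string& text) = 0;
    virtual void Close() = 0;
};

// Forwards lines to whichever display is currently registered.
// The sender never needs to know which concrete UI is behind the bridge.
class MiniBridge {
public:
    void RegisterDialogueUI(DialogueDisplay* ui) { m_ui = ui; }

    void RunDialogue(const std::string& speaker, const std::string& text) {
        if (m_ui != nullptr) m_ui->ShowLine(speaker, text);
    }

    void CloseDialogue() {
        if (m_ui != nullptr) m_ui->Close();
    }

private:
    DialogueDisplay* m_ui = nullptr;  // not owned
};

// Example display that records what it was asked to show.
struct RecordingDisplay : DialogueDisplay {
    std::vector<std::string> log;
    void ShowLine(const std::string& speaker, const std::string& text) override {
        log.push_back(speaker + ": " + text);
    }
    void Close() override { log.push_back("<closed>"); }
};
```

Swapping a HUD display for a speech-bubble display is a single `RegisterDialogueUI` call in this model; nothing on the sending side changes.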

| Bus | Method | What It Does |
|---|---|---|
| DialogueUIBridgeRequestBus | RunDialogue | Sends a text line to the registered dialogue UI. |
| DialogueUIBridgeRequestBus | RunSelection | Sends selection options to the registered selection UI. |
| DialogueUIBridgeRequestBus | RegisterDialogueUI | Registers a UI entity as the active dialogue display target. |
| DialogueUIBridgeRequestBus | CloseDialogue | Tells the registered UI to close. |
| DialogueUIBridgeNotificationBus | OnDialogueComplete | Fires when the dialogue UI reports it has finished displaying. |
| DialogueUIBridgeNotificationBus | OnSelectionComplete | Fires when the player makes a selection. |

Dialogue Display Components

Screen-Space Text

DialogueUIComponent handles HUD-style dialogue display. Add it to a UI canvas entity. It exposes the active TypewriterComponent so other systems can check typewriter state or force completion.

World-Space Speech Bubbles

WorldDialogueUIComponent extends DialogueUIComponent for world-space placement. It positions the dialogue panel above the speaking actor’s entity in 3D space. Use this for over-the-shoulder dialogue or conversations where the camera stays in the world rather than cutting to a UI overlay.

Selection Components

Screen-Space Choices

DialogueUISelectionComponent renders the player’s available choices as a list on the HUD. Each choice is backed by a DialogueSelectButtonComponent entity that is configured with option text and a selection index. When the player activates a button, it fires back through the Bridge to the sequencer.

World-Space Choices

WorldDialogueUISelectionComponent extends DialogueUISelectionComponent for world-space display. Choices appear positioned in 3D space rather than as a HUD overlay, useful for games with diegetic UI.

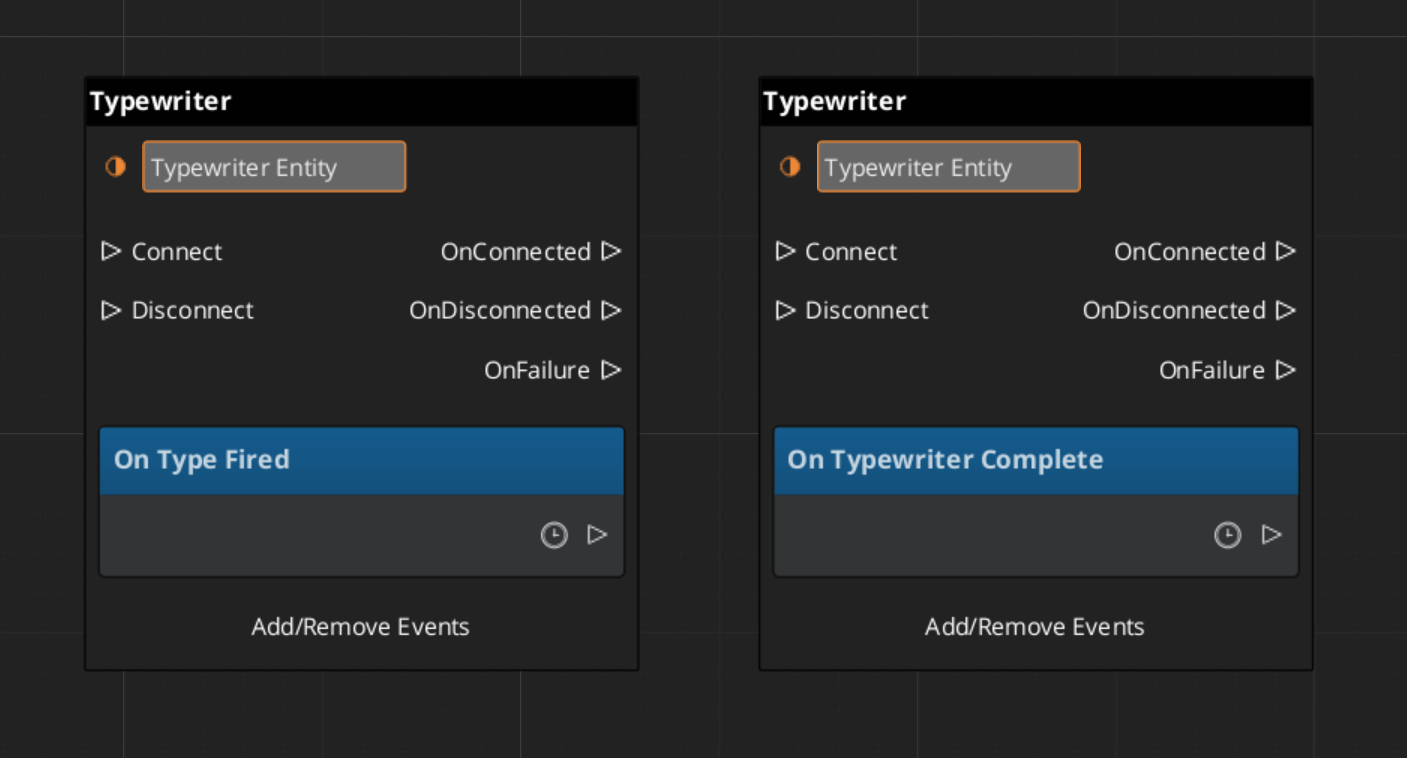

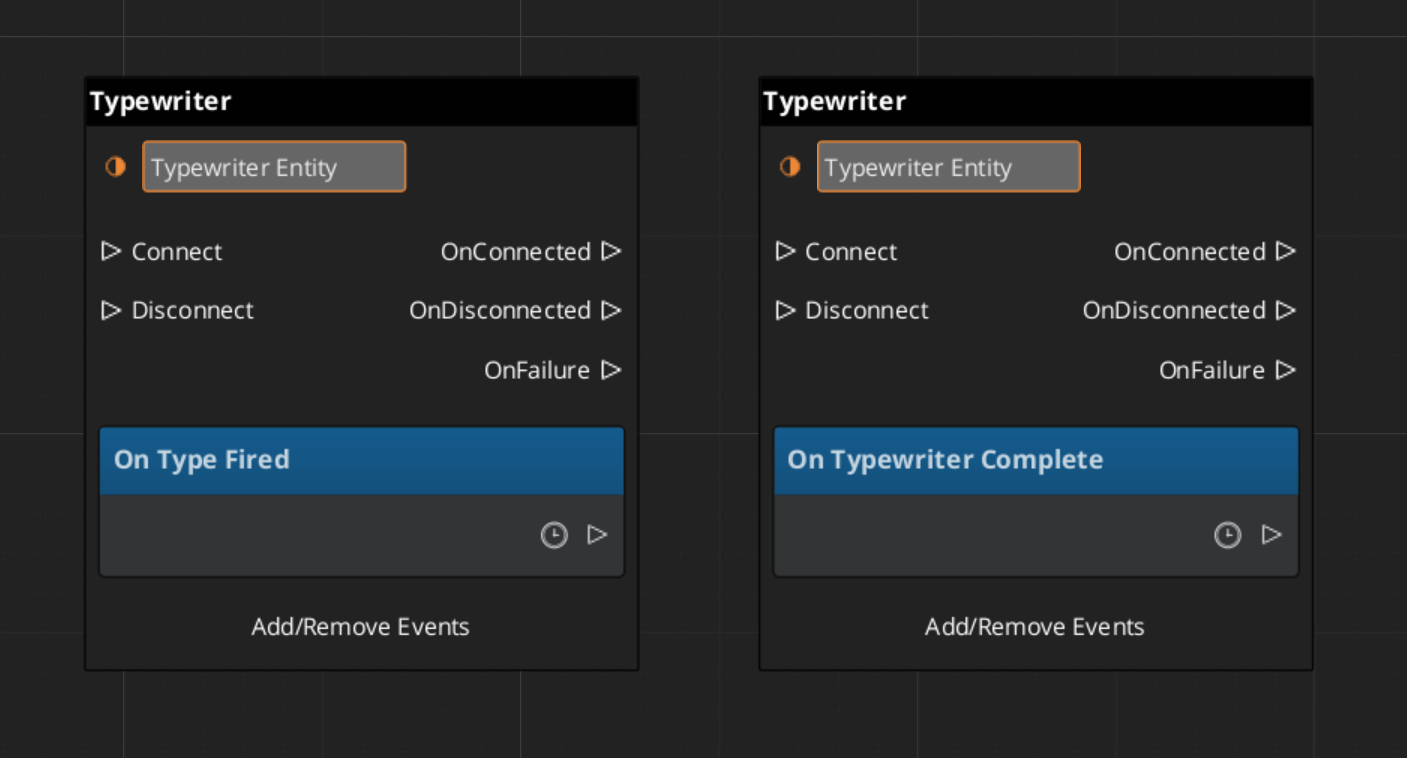

TypewriterComponent

The TypewriterComponent reveals a string one character at a time. It fires OnTypeFired for each character revealed and OnTypewriterComplete when the full string is displayed. Use ForceComplete to instantly reveal the remaining text — typically wired to a player skip input — and ClearTypewriter to reset the display to empty.

| Bus | Method / Event | What It Does |
|---|---|---|
| TypewriterRequestBus | StartTypewriter(text) | Begins revealing the given string character by character. |
| TypewriterRequestBus | ForceComplete | Instantly reveals all remaining characters. |
| TypewriterRequestBus | ClearTypewriter | Clears the display and resets state. |
| TypewriterNotificationBus | OnTypeFired | Fires each time a character is revealed. |
| TypewriterNotificationBus | OnTypewriterComplete | Fires when the full string has been revealed. |
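The reveal, skip, and clear behaviour can be modelled with a small class. This is a sketch, not the component: `MiniTypewriter` and `Tick` are hypothetical, the callbacks stand in for the notification bus events, and two assumptions are made for the example: text is treated as single-byte characters, and `ForceComplete` fires the completion callback.

```cpp
#include <cstddef>
#include <functional>
#include <string>

// Hypothetical model for illustration only; not TypewriterComponent.
class MiniTypewriter {
public:
    std::function<void(char)> onTypeFired;       // stands in for OnTypeFired
    std::function<void()> onTypewriterComplete;  // stands in for OnTypewriterComplete

    void StartTypewriter(const std::string& text) {
        m_text = text;
        m_shown = 0;
    }

    // Reveals one more character; call once per configured time step.
    void Tick() {
        if (m_shown >= m_text.size()) return;
        const char revealed = m_text[m_shown++];
        if (onTypeFired) onTypeFired(revealed);
        if (m_shown == m_text.size() && onTypewriterComplete) onTypewriterComplete();
    }

    // Reveals everything that is left, typically wired to a skip input.
    // Assumption: completion is signalled once, with no per-character events.
    void ForceComplete() {
        if (m_shown >= m_text.size()) return;
        m_shown = m_text.size();
        if (onTypewriterComplete) onTypewriterComplete();
    }

    void ClearTypewriter() {
        m_text.clear();
        m_shown = 0;
    }

    std::string Visible() const { return m_text.substr(0, m_shown); }

private:
    std::string m_text;
    std::size_t m_shown = 0;
};
```

A babble sound would hook into `onTypeFired` in this model, playing one tone per revealed character.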

BabbleComponent

BabbleComponent pairs with TypewriterComponent to play short audio sounds for each character revealed, giving the impression of a speaker’s voice. Each actor has a SpeakerBabbleEvents record that maps them to a specific babble sound profile. The component returns the correct BabbleToneEvent for the current speaker via GetBabbleEvent, which is called by the typewriter on each OnTypeFired.

| Bus | Method | What It Does |
|---|---|---|
| BabbleRequestBus | GetBabbleEvent | Returns the babble audio event for the active speaker. |

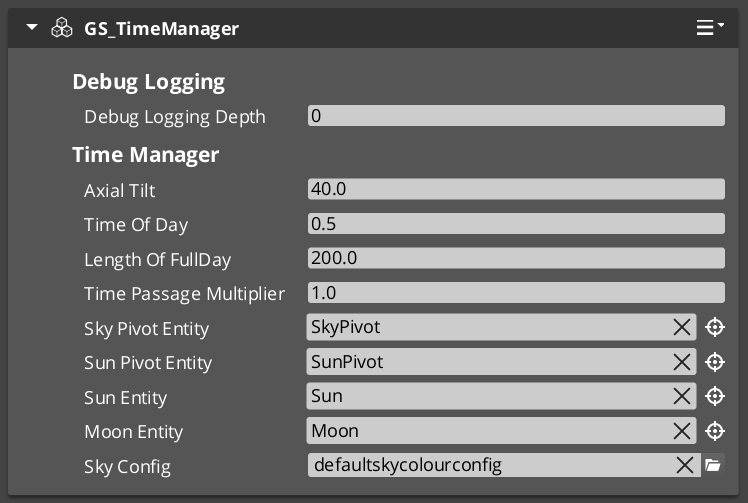

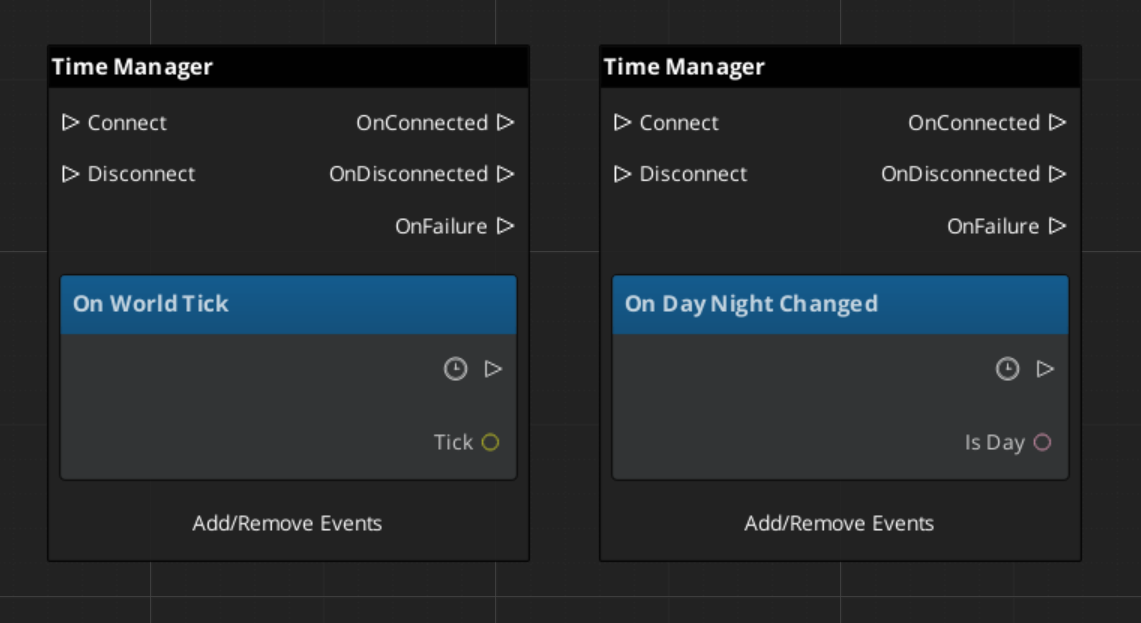

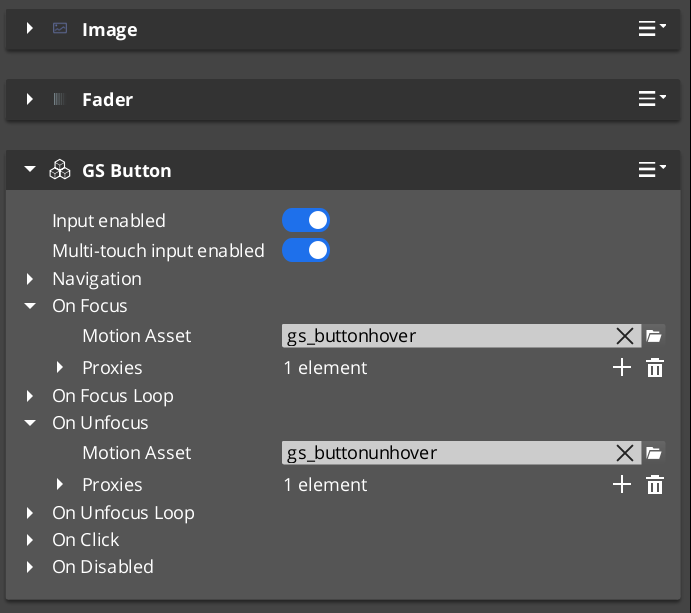

ScriptCanvas Usage