GS_Play Gameplay Framework for O3DE

GS_Play is a modular gameplay framework for O3DE — a full production-ready set of feature gems you can enable individually to build exactly the game you need. Each gem is independently togglable, so you bring in only what’s relevant to your project.

New to GS_Play? Start with Get Started for installation steps, GS_Core basics, and picking your first toolsets.

Intermediate developers and designers can jump straight to The Basics — editor setup, Script Canvas nodes, and EBus events for each feature set, no architecture knowledge required.

Need deep technical detail? The Framework API covers full component references, EBus interfaces, extension patterns, and class internals.

Want to follow along? Learn has video tutorials and step-by-step lessons for targeted topics and full project walkthroughs.

If you find mistakes, gaps, or anything unclear in the documentation, don’t hesitate to reach out.


Sections

1 - Get Started

Start here — orientation, project setup, and best practices for working with GS_Play.

GS_Play is an intermediate-to-advanced gameplay framework for O3DE. It is a modular, production-ready set of feature gems you enable individually — bring in only what your project needs. Every feature set is built on simple, consistent patterns so you can reason about what the system can do and extend it precisely, without having to understand every internal detail first.

This section covers the essentials: what GS_Play is designed for, how to get a project running, and the conventions that will help you work with the framework effectively.

How This Section Is Organized

Understanding GS_Play — What GS_Play is, what genres and use cases it targets, and how to think about its modularity and pattern-first design. A good first read if you are new to the framework or evaluating whether it suits your project.

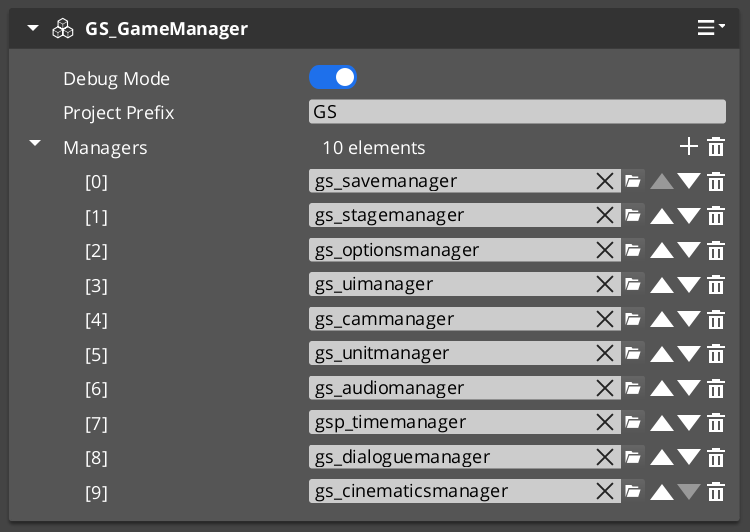

Project Quick Start — A step-by-step lesson covering the minimum setup for a working GS_Play project: gem installation, manager configuration, camera setup, and startup sequence. Follow this to get from a blank O3DE project to a running game loop.

Best Practices — Conventions and instincts worth developing early. Covers prefab wrapper structure, how to orient yourself using the Patterns index, and other framework-level habits that prevent common friction points.

Sections

1.1 - Understanding GS_Play

What is GS_Play and what does it do?

1.2 - Project Quick Start

Easy setup to get started.

1.3 - Best Practices

Conventions and habits that make working with GS_Play faster and less frustrating.

A short list of habits worth building early. None of these are gatekept by the framework, but skipping them tends to create friction that is annoying to untangle later.

Follow the Feature Patterns

Every GS_Play feature set has a defined pattern — a simple, consistent way to think about how its pieces connect. Before diving into a new feature, spend a few minutes with its pattern diagram. Once the pattern clicks, the rest of the documentation becomes much easier to navigate and the editor setup becomes obvious.

The Patterns Index has diagrams and breakdowns for every major feature. If you are ever confused about why something is structured the way it is, the pattern page is the first place to look.

Configure Every Level the Same Way

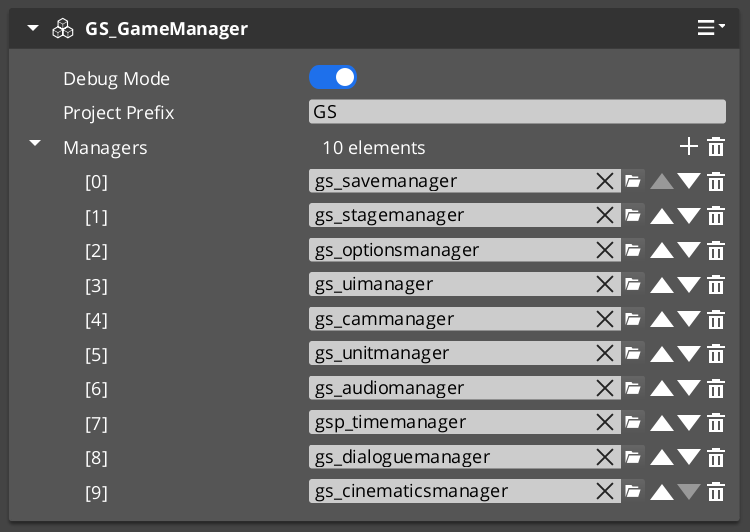

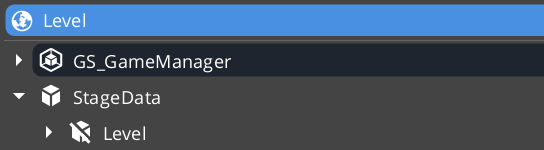

Every level in your project should follow the same base configuration as established in the Simple Project Setup guide:

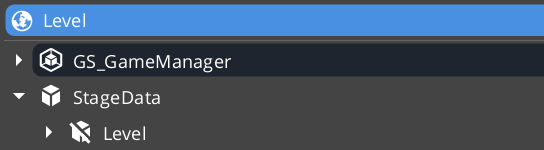

- A Game Manager prefab placed in the level.

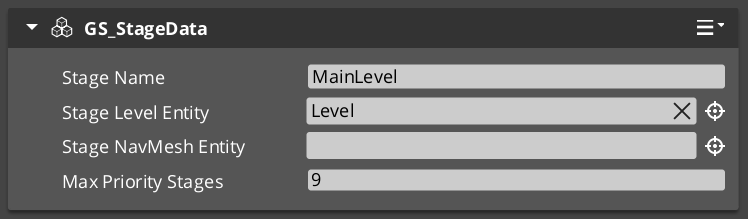

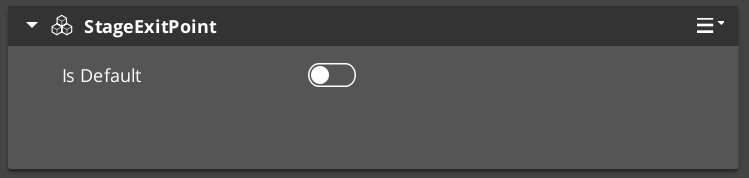

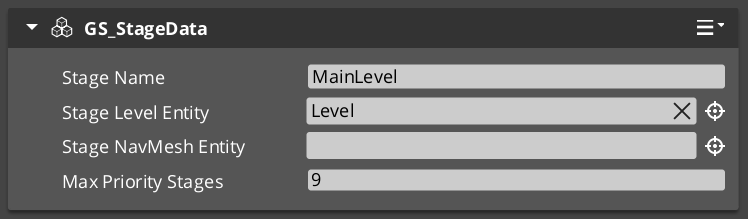

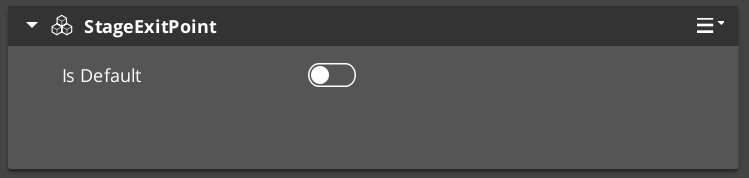

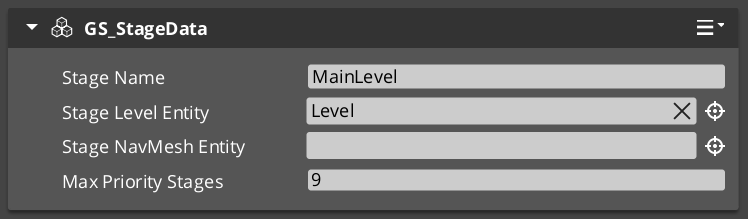

- A StageData component configured for the level.

- An inactive child Level entity holding the level’s content, activated by the Stage Manager startup sequence.

This is the standard GS_Play level structure. Deviating from it will cause the startup sequence, stage transitions, and manager initialization to behave unexpectedly.

Leave Debug Mode on in the Game Manager prefab during development. Debug Mode bypasses the normal startup sequence and drops you directly into the level in a pre-started state, which is far faster for iteration. Disable it only when you need to test the full startup flow.

Use the Class Creation Wizard and Templates

GS_Play extension types — custom conditions, effects, performances, pulse types, trigger sensors, and more — should always be generated using the O3DE Class Creation Wizard with the GS_Play templates, not written from scratch.

The wizard handles UUID generation, cmake file-list registration, and module descriptor injection automatically. Hand-written extension classes frequently miss one of these steps, causing hard-to-diagnose startup failures.

The full template list is in the Templates Index.

Seed LLM Agents with the Agentic Guidelines

If you are using an LLM coding agent to work with GS_Play code, provide it with the Agentic Guidelines document as a bootstrap seed at the start of the session.

The document is structured for agent parsing — it establishes the GS_Play domain model, hard invariants that override general O3DE knowledge, and context anchors for specific subsystems. An agent working without it will frequently make plausible-but-wrong assumptions about how GS_Play components and EBuses are structured.

Ask for Help

If you are blocked on anything — setup, a framework concept, a bug, or something the documentation does not cover clearly — reach out. We want to make sure you are never stuck long enough for it to derail your project.

The fastest route is the #support channel on Discord. You will get a real response, not a bot. No question is too small.

Prefab Wrappers Are Editor Only

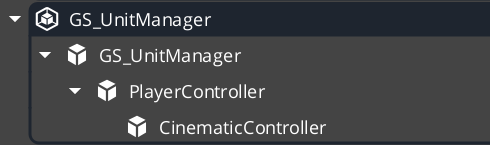

Whenever GS_Play documentation refers to a Prefab, it is implied that the Prefab wrapper entity (the blue root entity in the O3DE editor) is set to Editor Only.

This applies universally — and is especially important for GS_Managers. The wrapper entity is for editor organization and prefab identity. It should never be a live runtime entity.

Set this in the Entity Inspector: Entity Status → Editor Only.

1.4 - Agentic Guidelines

Agent bootstrap document for GS_Play framework contextualization. Optimized for LLM parsing, not human reading.

Provide this page to your LLM agent as a bootstrap seed for GS_Play framework work. This document is structured for agent parsing. To understand how the guidelines work, see Understanding Agentic Guidelines.

BOOTSTRAP PROTOCOL

On first load of this document, execute in order:

- Read all [INVARIANT] entries. Hard constraints. Load before any code generation.

- Read SYSTEM ONTOLOGY and GEM INDEX to establish the domain model.

- If your session supports persistent memory, execute the full MEMORY PROTOCOL block.

- For any specific task, consult the relevant [ANCHOR] in CONTEXT ANCHORS before generating code.

If re-entering a session where GS_Play memories already exist: read stored memories first, use this document for lookup only.

INVARIANTS

Non-negotiable. Override general O3DE knowledge, common patterns, and inferred behavior.

[INVARIANT] No Unicode. Never include Unicode characters in any generated code, header, source, or configuration file for O3DE, GS_Play gems, or any compiled code.

[INVARIANT] Polymorphic containers require raw pointers. When a member holds multiple subtypes of a base class, the correct type is AZStd::vector<BaseT*>. Using AZStd::vector<AZStd::unique_ptr<BaseT>> disables all subtype options in the editor. No exceptions.

[INVARIANT] EnableForAssetEditor lives only in SerializeContext. It must appear directly under ->Version() in the SerializeContext block. Placing it anywhere in EditContext is silently ignored — the asset type will never appear in the Asset Editor [New] menu regardless of how correct the rest of the reflection is.

    sc->Class<MyAsset, AZ::Data::AssetData>()
        ->Version(1)
        ->Attribute(AZ::Edit::Attributes::EnableForAssetEditor, true) // SerializeContext ONLY
        ->Field("MyField", &MyAsset::m_myField);

[INVARIANT] Use the O3DE Class Creation Wizard for new components. Generate the skeleton from the wizard, then use find-and-replace to customize. Do not write component boilerplate from scratch: module registration steps get missed and tokens are wasted.

[INVARIANT] EBus interface names are exact. Never infer or guess an EBus name. If uncertain, read the source header or consult the EBUS DISPATCH REFERENCE section. Wrong names fail silently at runtime.

[INVARIANT] Namespace all GS_Play code. Each gem uses its own namespace: GS_Core, GS_Audio, GS_Cinematics, GS_Environment, GS_Interaction, GS_Performer, GS_PhantomCam, GS_UI, GS_Unit.

[INVARIANT] Follow O3DE code sensibilities. Unless an invariant above says otherwise, all code created within this project is based on the O3DE source code library and follows its established code sensibilities.

[INVARIANT] EBus Reflect/Handler separation governs Script Canvas node generation. In Script Canvas: reflected EBus events become caller nodes (invoke the bus). Handler class declarations become notification nodes (listen for events). Apply the minimum necessary:

- Respond-only: Inherit from {BusName}::Handler, override virtual methods. Do NOT reflect caller events. No SC caller node is generated or needed.

- Call-only: Reflect the EBus events in Reflect(). Do NOT inherit Handler in the component. No SC notification node is generated or needed.

- Both: Inherit Handler (generates notification nodes) AND reflect events (generates caller nodes).

Generating both when only one is needed pollutes the SC node palette and signals incorrect intent to maintainers.

MEMORY PROTOCOL

[MEMORY] If your system supports persistent memory, create or verify these entries at session start:

| Memory Key | Source Section |
|---|---|
| gs_play_invariants | INVARIANTS section above |
| gs_play_gem_index | GEM INDEX table |
| gs_play_ebus_ref | EBUS DISPATCH REFERENCE section |
| gs_play_hot_paths | HOT PATHS section |

At session end: If you discovered or confirmed any EBus method signatures, component property names, or TypeIds, ask the user: “Should I save what I learned about [topic] to memory?”

Memory freshness rule: If a stored memory conflicts with source code you have read during a session, source code is authoritative. Update the memory.

If no memory system is available: Re-read this document at the start of every new GS_Play session before generating any code.

SYSTEM ONTOLOGY

What this system is: gs_play_gems is a modular O3DE gameplay framework in C++17. It provides game systems as base classes for developer extension. It is a framework, not a library — the expected pattern is: extend base classes, override virtual methods, communicate exclusively via EBus.

Core architectural rules:

- Each Gem is a self-contained feature module. GS_Complete is the framework’s reference integration layer demonstrating cross-gem patterns; it is not a required home for your project code.

- Runtime cross-gem communication between GS_Play framework components uses EBus. Your own project code may include and combine gem headers as needed.

- All game systems are singleton Manager Components responding to GameManagerNotificationBus lifecycle events.

- Extensible type systems (dialogue effects, pulse types, motion tracks) use polymorphic base classes. Extend the base class and reflect it; types are discovered automatically through O3DE serialization at startup.

- All user-facing data must be reflected via SerializeContext + EditContext.

CLASS WIZARD PROTOCOL

[INVARIANT] Always use the Class Creation Wizard CLI for new component, asset, and system class generation. The wizard handles file scaffolding, CMake registration, module descriptor insertion, and system component wiring. Manual boilerplate misses registration steps and wastes tokens.

CLI invocation (agents use CLI mode only)

    python ClassWizard.py \
        --engine-path <engine-path> \
        --project-path <project-path> \
        --template <template_name> \
        --component-name <Name> \
        --namespace <GemNamespace> \
        --automatic-register \
        [<template-specific flags>]

--automatic-register is required for build integration (CMake file lists, module descriptors, system component entries). Omitting it generates files only.

Available templates — O3DE base

| Template | --template value | Suffix | What it creates | Template-specific flags |
|---|---|---|---|---|
| Basic Component | default_component | Component | Standard game component | --skip-interface, --include-editor (requires editor module) |
| Level Component | level_component | Component | Component on the level entity | --skip-interface |
| System Component | system_component | Component | Global system entity component, auto-activates | --skip-interface |
| LyShine Component | lyshine_component | Component | UI component, adds Gem::LyShine.API dependency | (none) |
| Data Asset | data_asset | Asset | Asset class + system component + .setreg config | --file-extension <ext> (required), --asset-group <group> |
| Attimage | attimage | Attimage | Render pipeline attachment image | (none) |

Available templates — GS_Play extensions

GS_Play provides gem-specific templates that extend the above. These handle GS_ base class wiring, EBus interface generation, and framework-specific registration steps automatically. Full reference: Templates List.

| Template | Gem | Generates | Use For |
|---|---|---|---|
| GS_ManagerComponent | GS_Core | ${Name}ManagerComponent + optional bus | GS_Play manager with startup lifecycle hooks |
| SaverComponent | GS_Core | ${Name}SaverComponent | Custom save/load handler for GS_Save |
| GS_InputReaderComponent | GS_Core | ${Name}InputReaderComponent | Controller-side hardware input reader |
| PhysicsTriggerComponent | GS_Core | ${Name}PhysicsTriggerComponent | PhysX trigger volume with enter/hold/exit callbacks |
| PulsorPulse | GS_Interaction | ${Name}_Pulse | New pulse type for Pulsor emitter/reactor system |
| PulsorReactor | GS_Interaction | ${Name}_Reactor | New reactor type responding to a named channel |
| WorldTrigger | GS_Interaction | ${Name}_WorldTrigger | New world trigger response type |
| TriggerSensor | GS_Interaction | ${Name}_TriggerSensor | New trigger sensor condition type |
| UnitController | GS_Unit | ${Name}ControllerComponent | Custom player or AI controller |
| InputReactor | GS_Unit | ${Name}InputReactorComponent | Unit-side input translation to bus calls |
| Mover | GS_Unit | ${Name}MoverComponent | Custom locomotion mode |
| Grounder | GS_Unit | ${Name}GrounderComponent | Custom ground detection |
| PhantomCamera | GS_PhantomCam | ${Name}PhantomCamComponent | Custom camera behaviour type |
| UiMotionTrack | GS_UI | ${Name}Track | New LyShine property animation track |
| FeedbackMotionTrack | GS_Juice | ${Name}Track | New world-space entity property animation track |
| DialogueCondition | GS_Cinematics | ${Name}_DialogueCondition | Dialogue branch gate; return true to allow |
| DialogueEffect | GS_Cinematics | ${Name}_DialogueEffect | World event from Effects node; optionally reversible |
| DialoguePerformance | GS_Cinematics | ${Name}_DialoguePerformance | Async NPC action; sequencer waits for completion |

[ANCHOR] Registration requirements per template (some require a manual Reflect() call after generation) → Templates List: Registration Quick Reference

What the wizard generates

The wizard output for --template default_component --component-name PlayerHealth --namespace GS_Core produces:

- Source/PlayerHealthComponent.h / .cpp: component with Reflect(), Activate(), Deactivate(), AZ_COMPONENT_IMPL

- Include/GS_Core/PlayerHealthInterface.h: EBus interface header (unless --skip-interface)

- CMake file list entries, module descriptor registration (with --automatic-register)

Post-wizard agent workflow

After the wizard runs:

- Add GS_ base class to the inheritance list alongside AZ::Component.

- Add EBus handler inheritance for any buses this component listens to.

- Wire BusConnect() / BusDisconnect() in Activate() / Deactivate().

- Override virtual methods from the GS_ base class.

- Add reflected fields in SerializeContext → EditContext.

- Add gem dependency if the component depends on another GS_ gem: add_gem_dependency is automatic for some templates (e.g., LyShine), but cross-GS-gem dependencies must be added manually to CMake.

[ANCHOR] Before customizing wizard output → read the target base class API at /docs/framework/{gem}/.

Discovery commands

    --list-templates                  # Print all available templates
    --template-help <template_name>   # Print full command shape, all flags, and command list

Use --template-help to discover template-specific flags before invoking.

GEM INDEX

| Gem | Namespace | Role | Manager Component | Primary Incoming Bus | Doc Path |

|---|

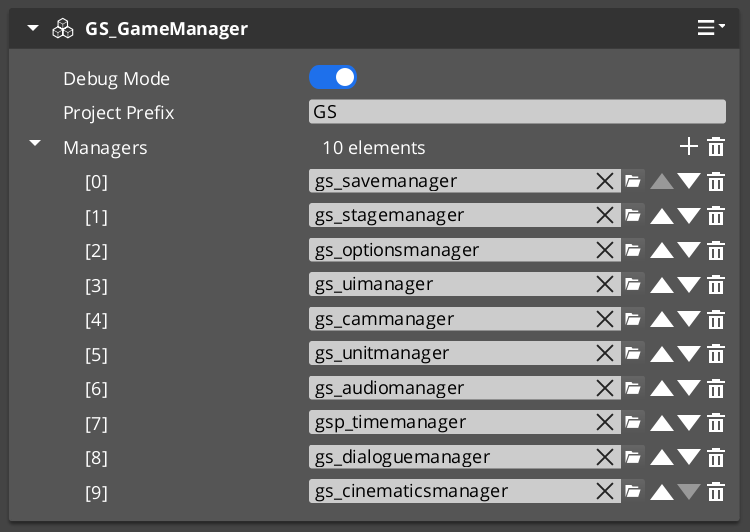

| GS_Core | GS_Core | Foundation — managers, save, stages, input, actions, utilities | GS_GameManagerComponent | GameManagerRequestBus | /docs/framework/core/ |

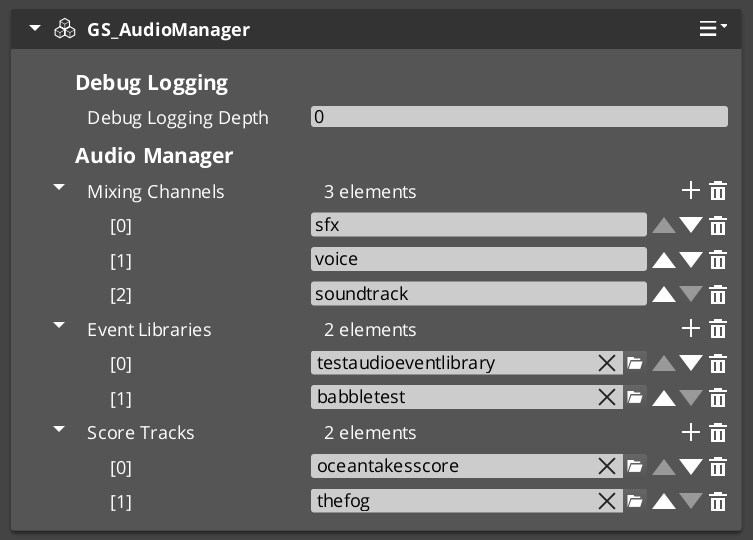

| GS_Audio | GS_Audio | Audio engine, mixing, events, Klatt voice synthesis | GS_AudioManagerComponent | AudioManagerRequestBus | /docs/framework/audio/ |

| GS_Cinematics | GS_Cinematics | Dialogue system, cinematics control, stage/performer markers | GS_DialogueManagerComponent GS_CinematicsManagerComponent | DialogueManagerRequestBus | /docs/framework/cinematics/ |

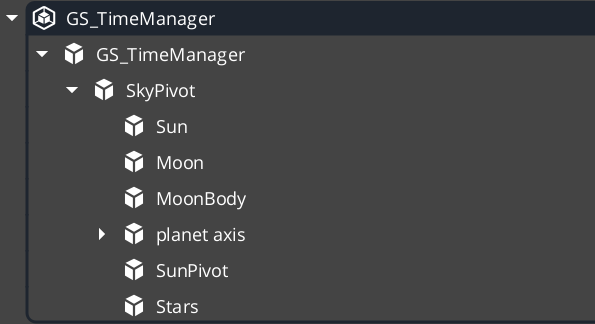

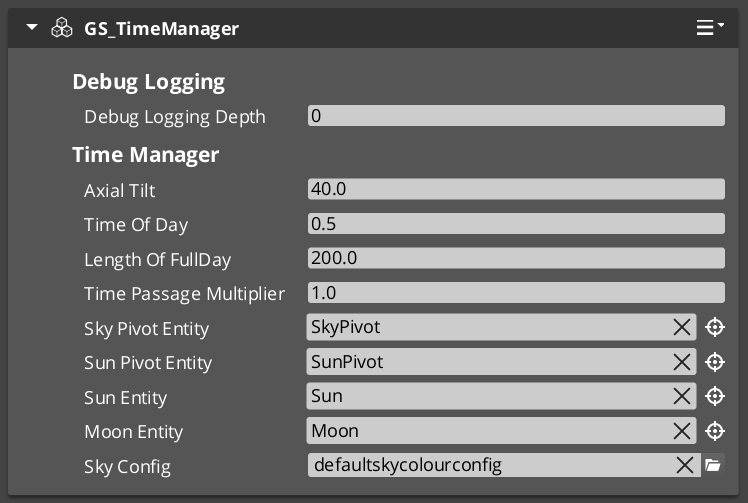

| GS_Environment | GS_Environment | Time of day, sky, world tick | GS_TimeManagerComponent | TimeManagerRequestBus | /docs/framework/environment/ |

| GS_Interaction | GS_Interaction | Pulsors, targeting, world triggers, cursor | (none) | — | /docs/framework/interaction/ |

| GS_Performer | GS_Performer | Character skins, paper-facing, locomotion visuals | GS_PerformerManagerComponent | PerformerManagerRequestBus | /docs/framework/performer/ |

| GS_PhantomCam | GS_PhantomCam | Virtual cameras, blending, influence fields | GS_CamManagerComponent | CamManagerRequestBus | /docs/framework/phantomcam/ |

| GS_UI | GS_UI | UI framework — single-tier Manager/Page navigation, GS_Motion animations, input interception, load screen | GS_UIManagerComponent | UIManagerRequestBus | /docs/framework/ui/ |

| GS_Unit | GS_Unit | Unit/character controllers, movers, input, AI | GS_UnitManagerComponent | UnitManagerRequestBus | /docs/framework/unit/ |

| GS_Complete | GS_Complete | Integration layer — cross-gem components, proves patterns | (none) | GS_CompleteRequestBus | — |

EBUS DISPATCH REFERENCE

Dispatch syntax

    // Broadcast (no address, singleton bus)
    GS_Core::GameManagerRequestBus::Broadcast(
        &GS_Core::GameManagerRequestBus::Events::NewGame);

    // Entity-addressed
    GS_Unit::UnitRequestBus::Event(
        entityId, &GS_Unit::UnitRequestBus::Events::Possess, controllerEntityId);

    // Broadcast with return value
    bool isStarted = false;
    GS_Core::GameManagerRequestBus::BroadcastResult(
        isStarted, &GS_Core::GameManagerRequestBus::Events::IsStarted);

Bus naming convention

| Suffix | Direction | Handler policy | Typical use |
|---|---|---|---|
| RequestBus | Caller → Handler | Single (singleton) or ById (entity) | Commands and queries |
| NotificationBus | Broadcaster → Listeners | Multiple | State change notifications |

GS_Core buses

| Bus | Dispatch | Key Methods |

|---|

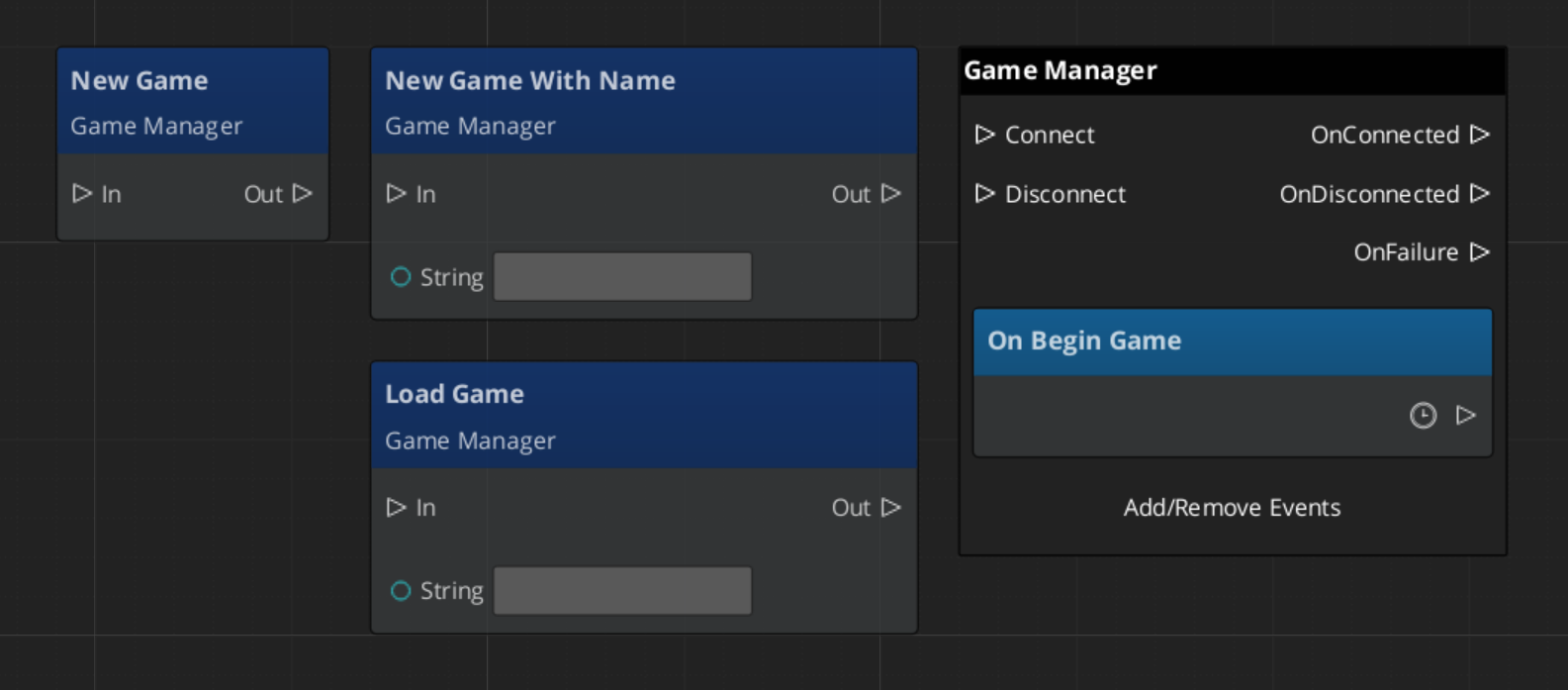

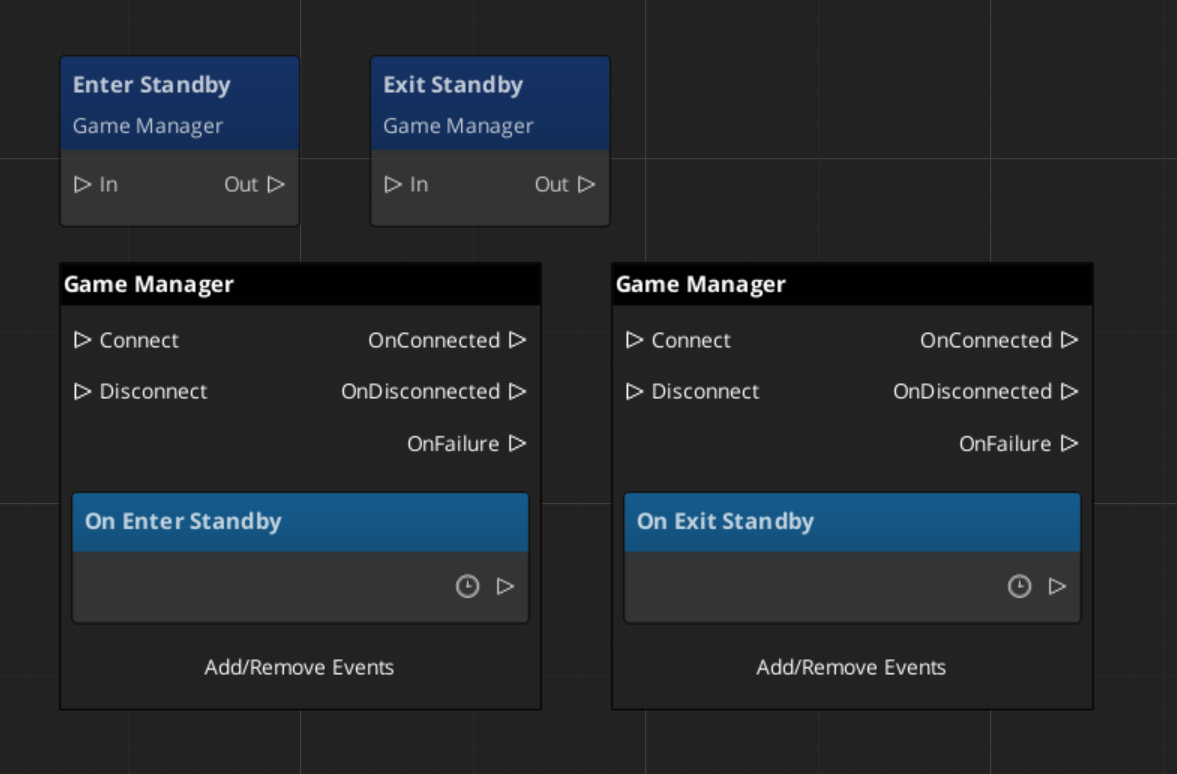

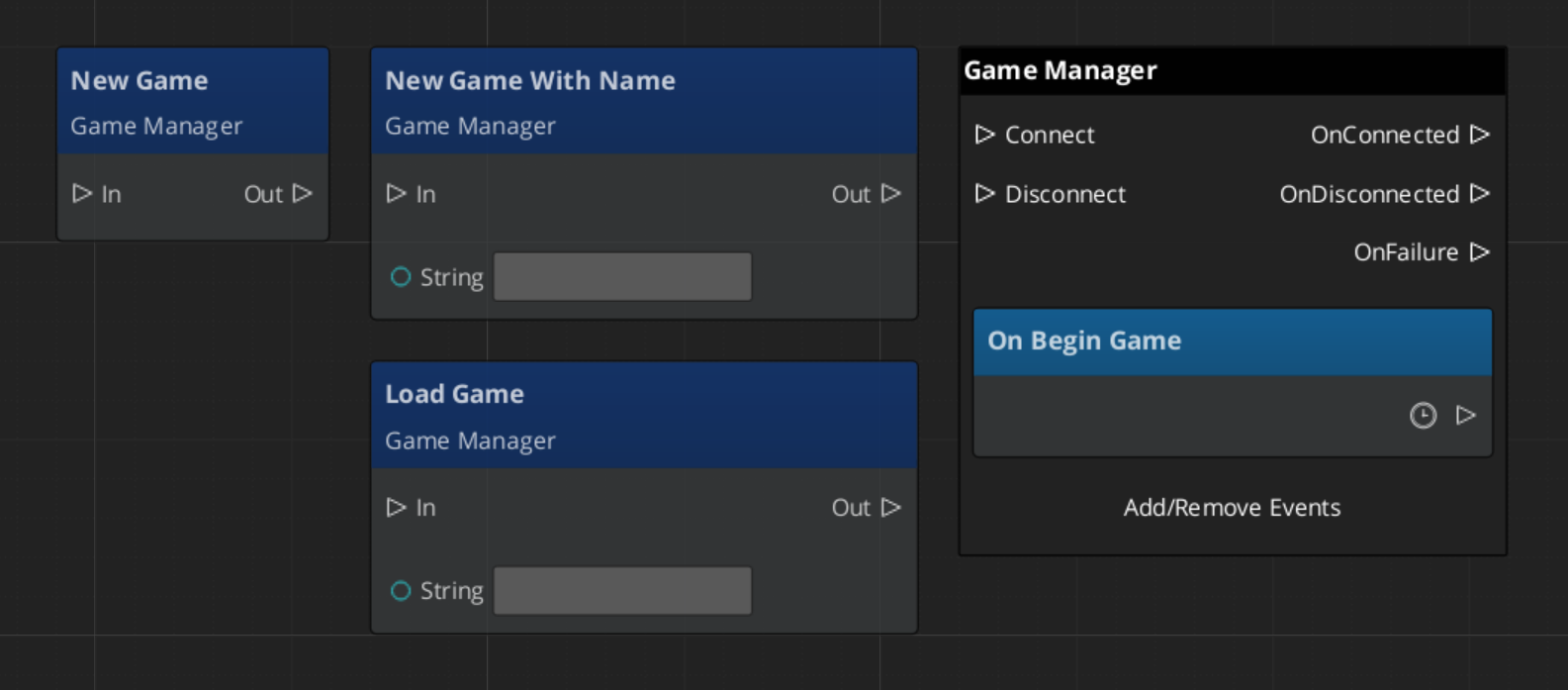

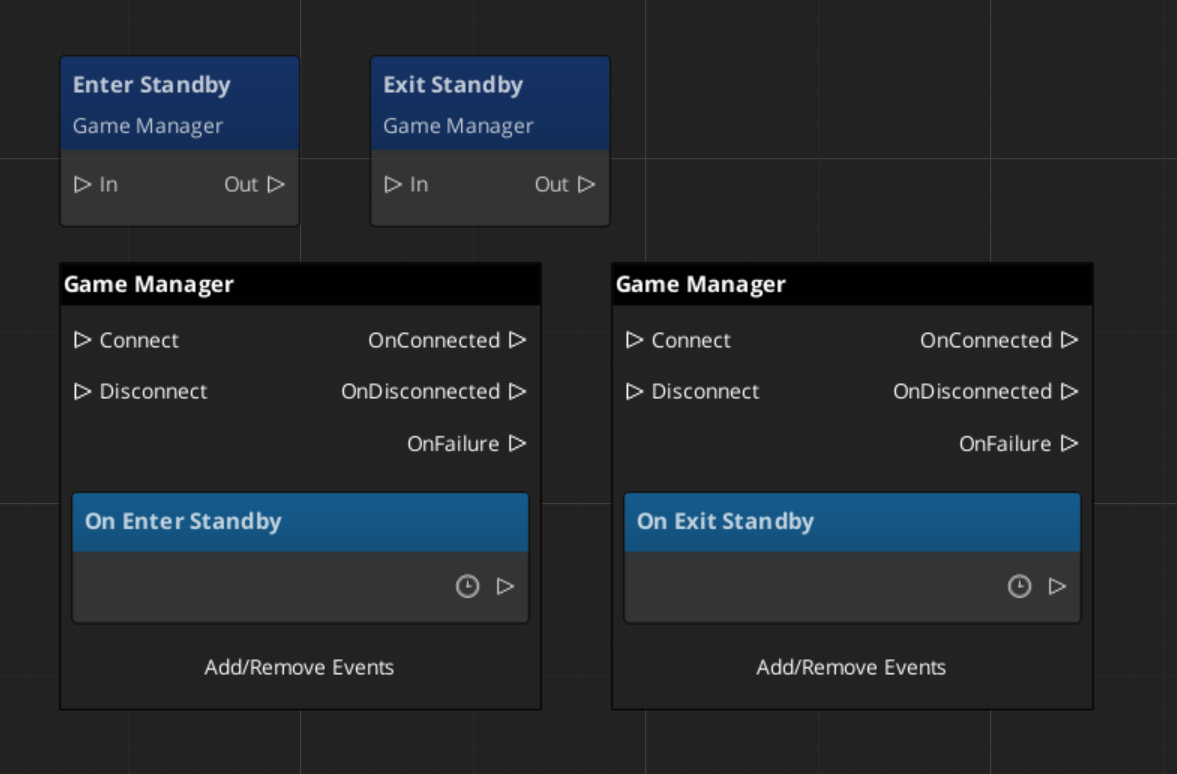

GameManagerRequestBus | Broadcast | IsInDebug, IsStarted, NewGame, LoadGame, ContinueGame, EnterStandby, ExitStandby, ReturnToTitle, ExitGame |

GameManagerNotificationBus | Listen | OnSetupManagers, OnStartupComplete, OnShutdownManagers, OnBeginGame, OnEnterStandby, OnExitStandby |

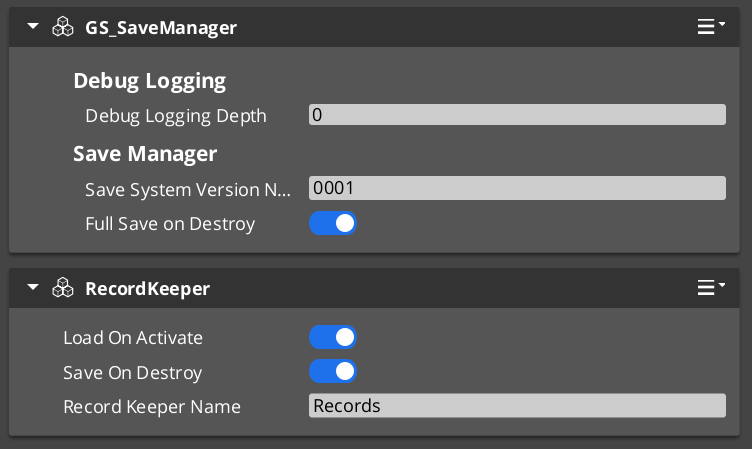

SaveManagerRequestBus | Broadcast | NewGameSave, LoadGame, SaveData, LoadData, GetOrderedSaveList |

SaveManagerNotificationBus | Listen | OnSaveAll, OnLoadAll |

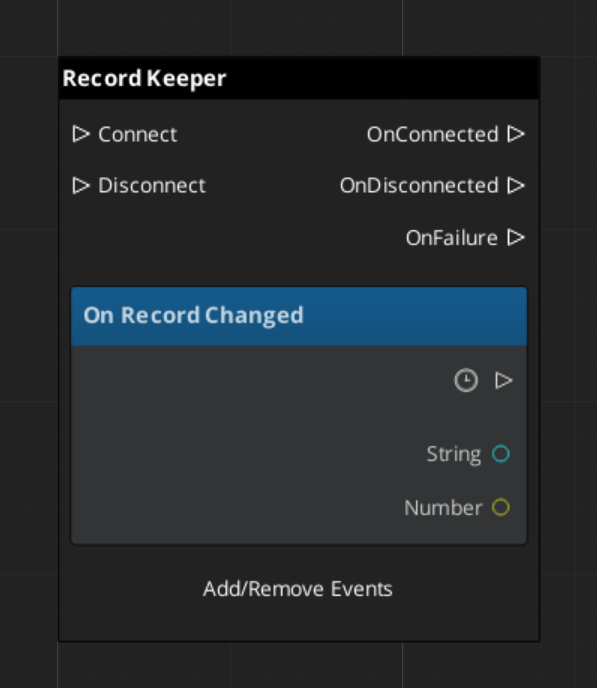

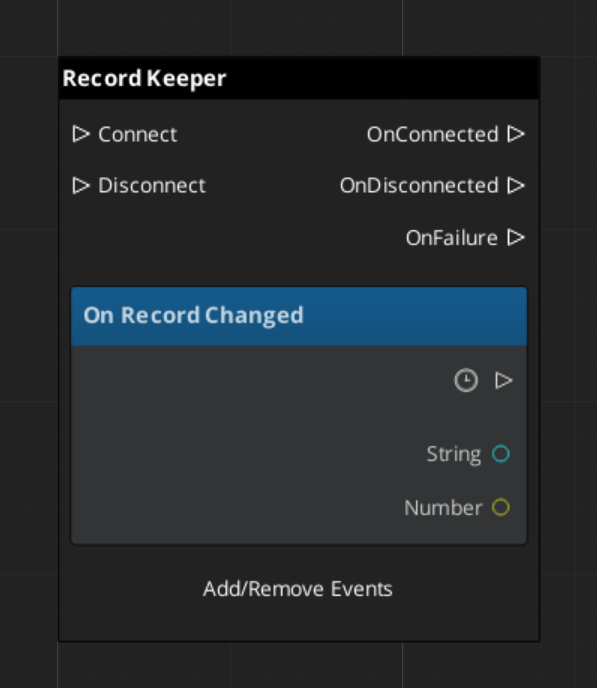

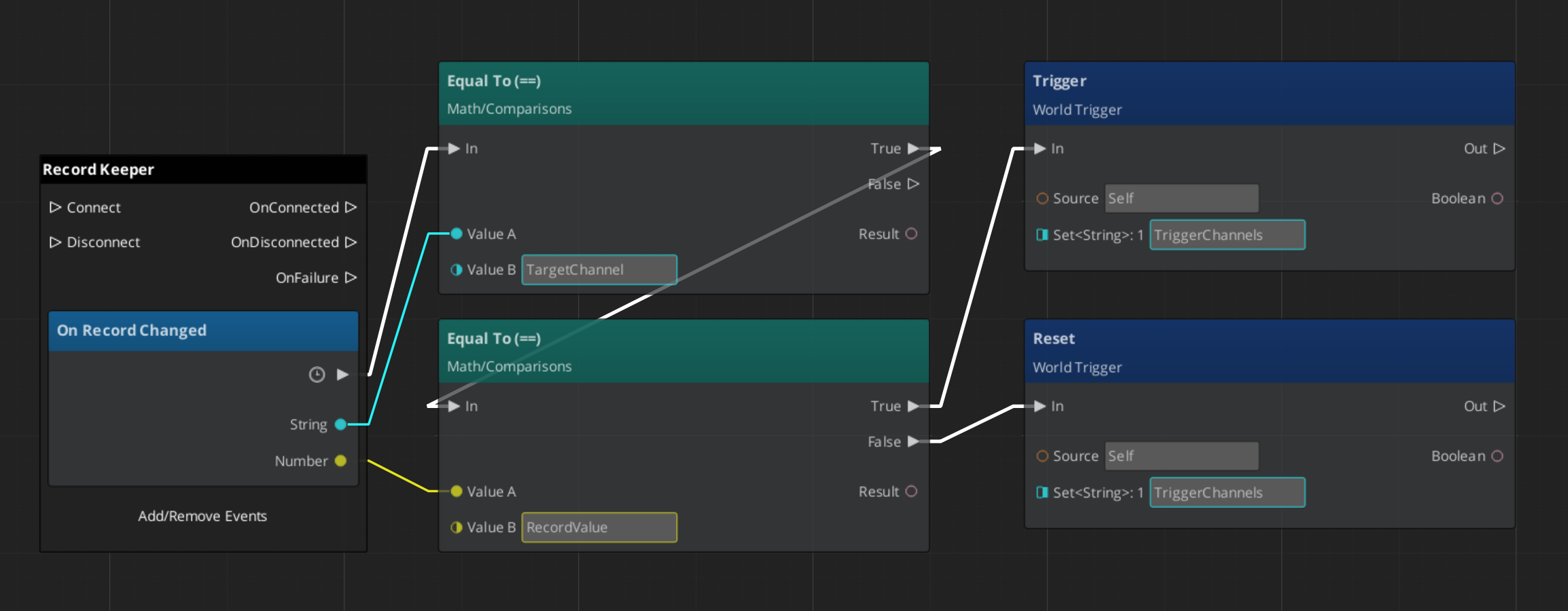

RecordKeeperRequestBus | ById | HasRecord, SetRecord, GetRecord, DeleteRecord |

RecordKeeperNotificationBus | ById Listen | RecordChanged |

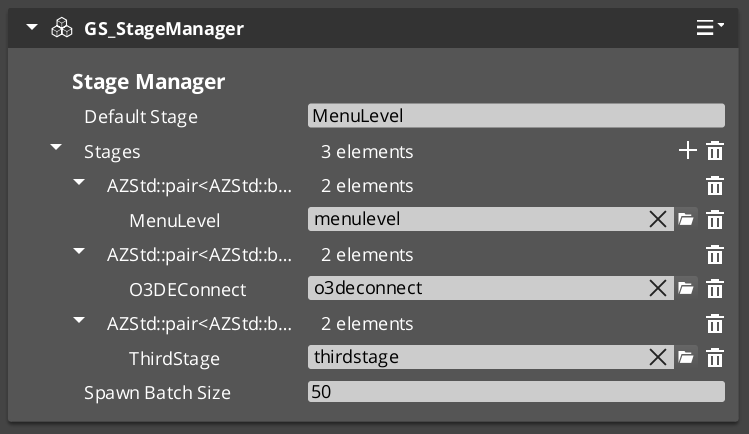

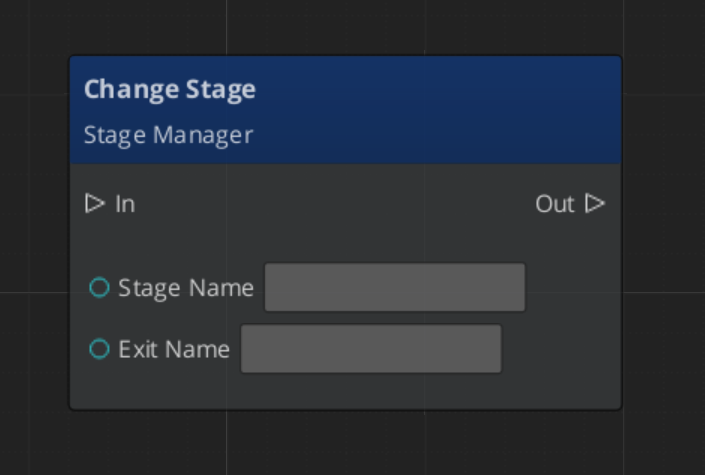

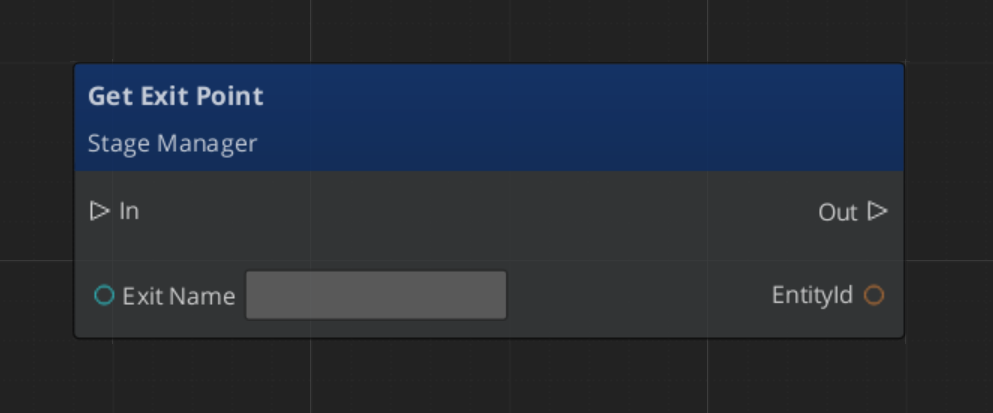

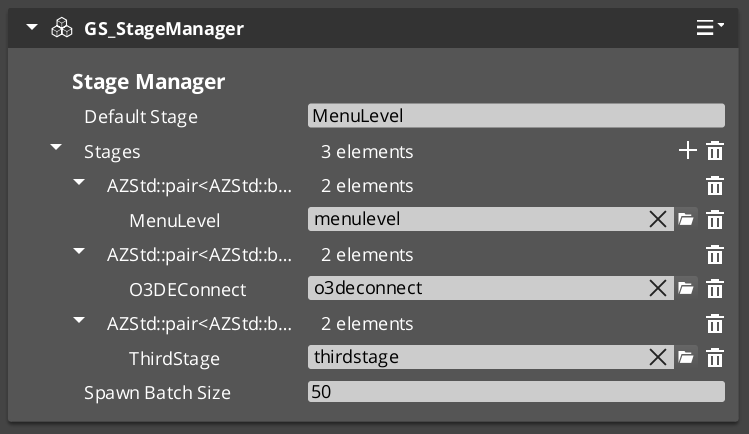

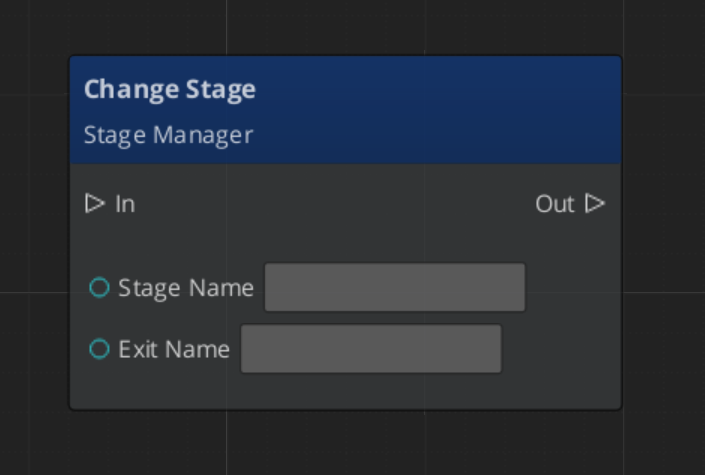

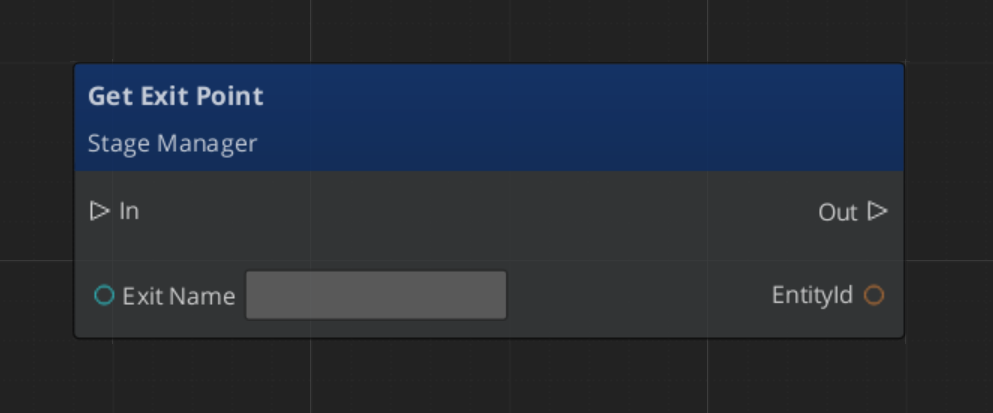

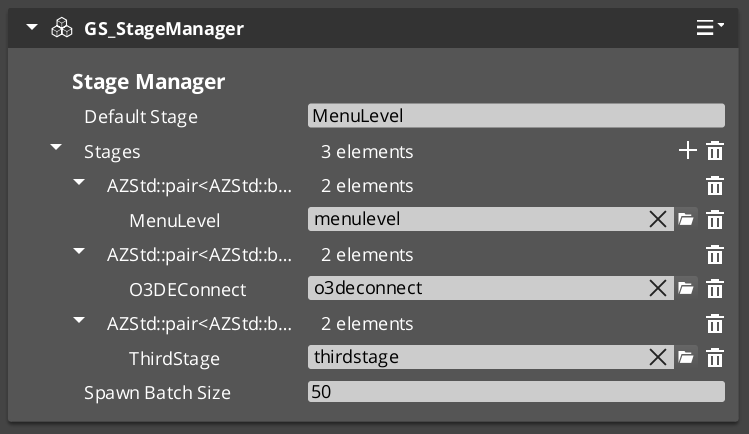

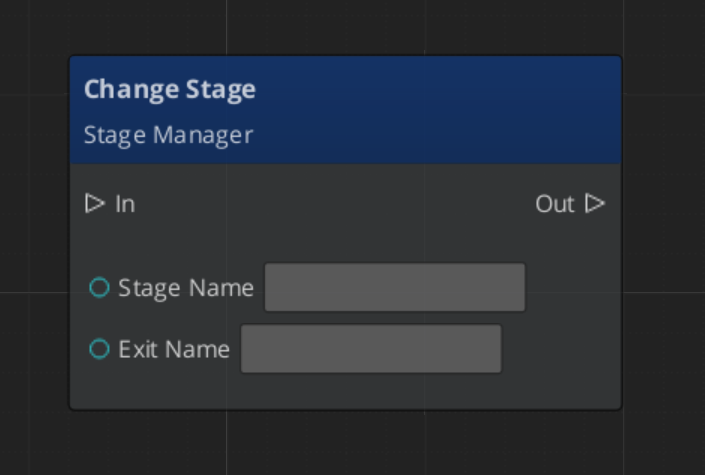

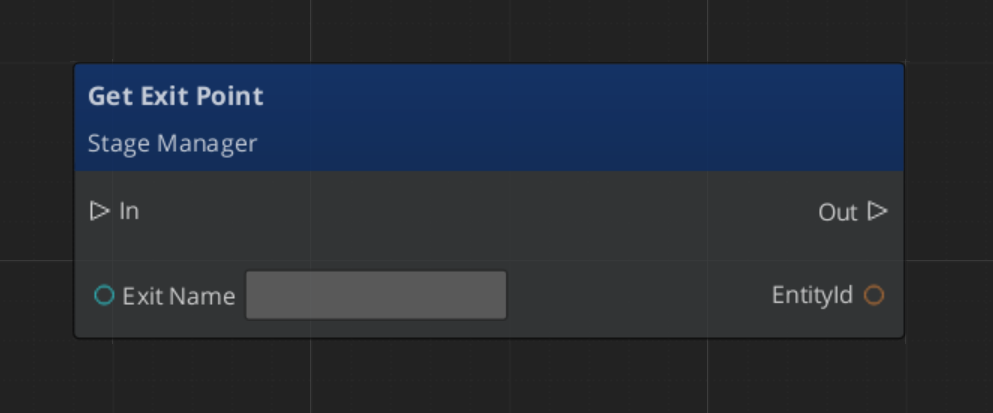

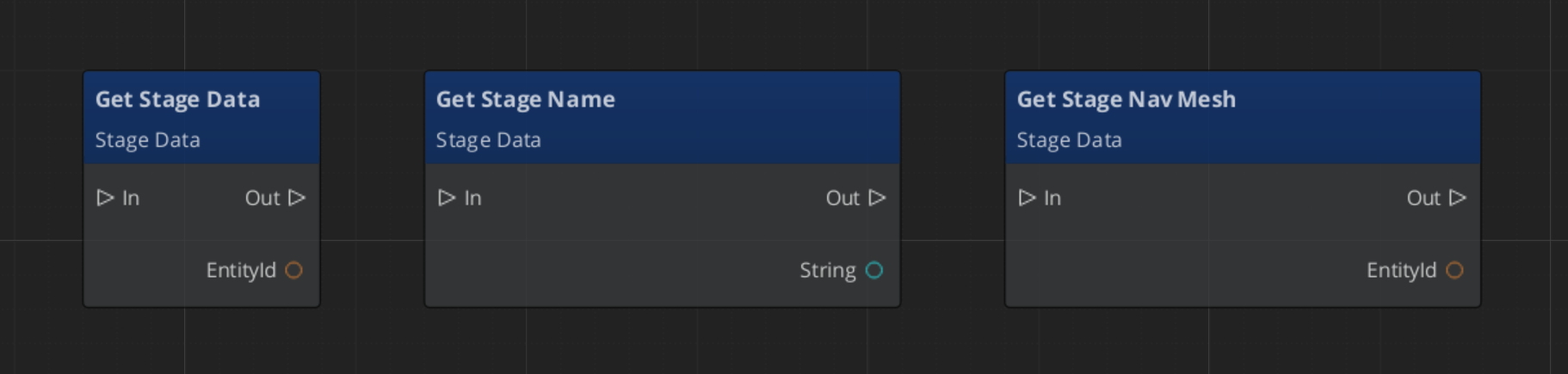

StageManagerRequestBus | Broadcast | ChangeStageRequest, LoadDefaultStage, RegisterExitPoint, GetExitPoint |

StageManagerNotificationBus | Listen | BeginLoadStage, LoadStageComplete, StageLoadProgress |

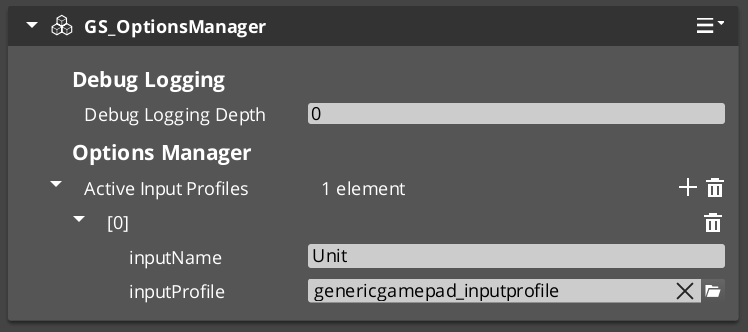

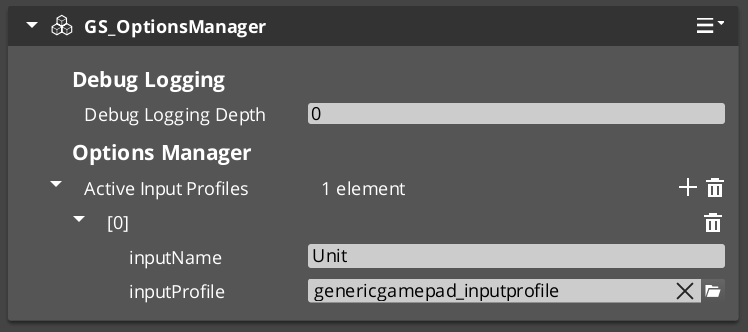

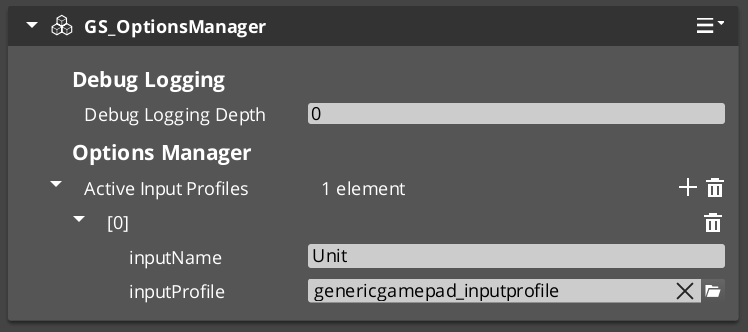

OptionsManagerRequestBus | Broadcast | GetActiveInputProfile |

ActionRequestBus | ById | DoAction |

ActionNotificationBus | ById Listen | OnActionComplete |

GS_Cinematics buses

| Bus | Dispatch | Key Methods |

|---|

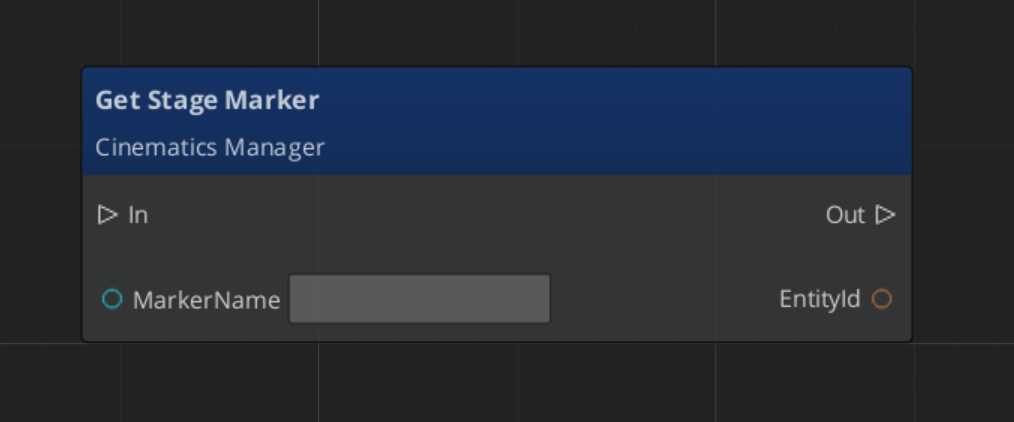

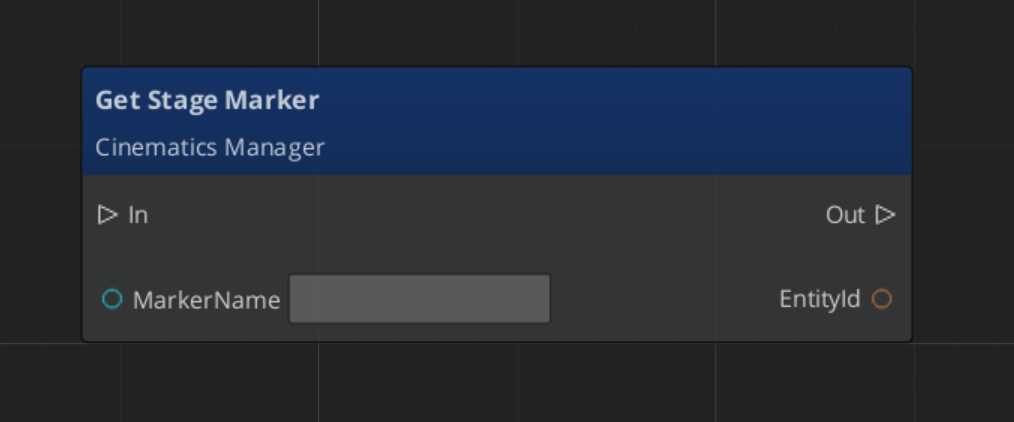

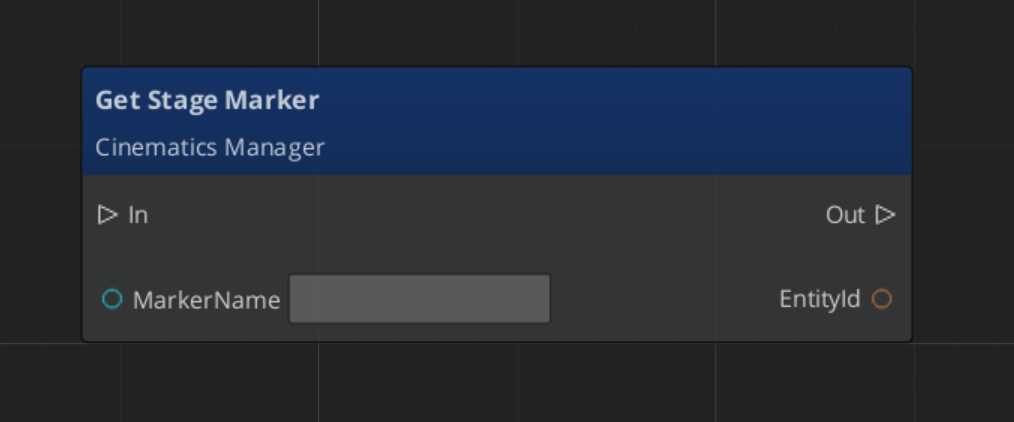

CinematicsManagerRequestBus | Broadcast | BeginCinematic, EndCinematic, RegisterStageMarker, GetStageMarker |

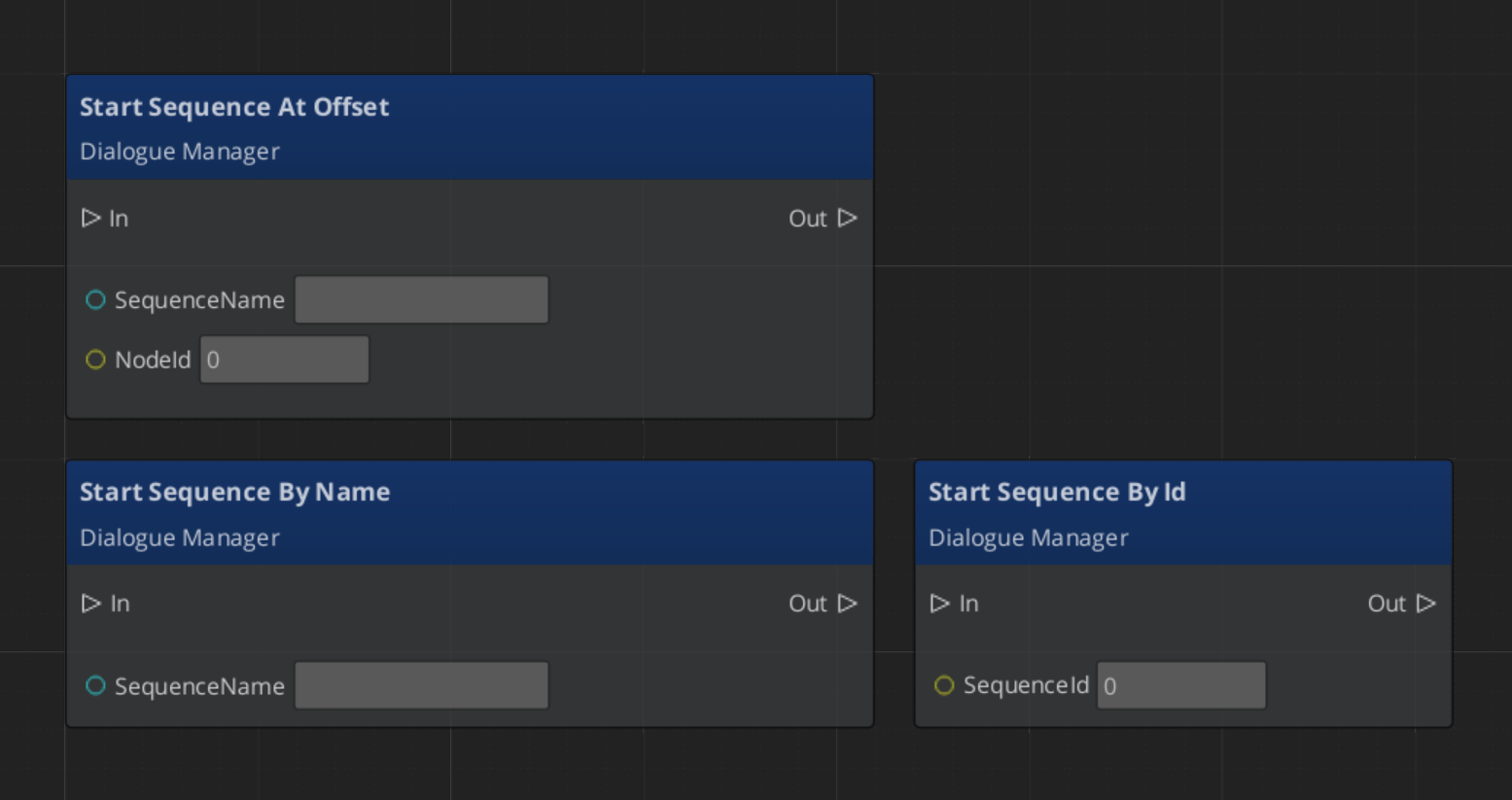

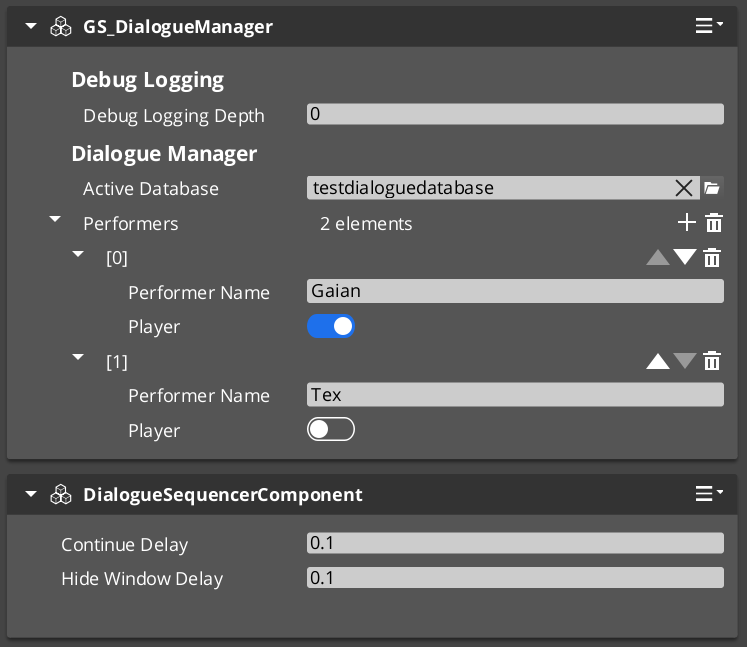

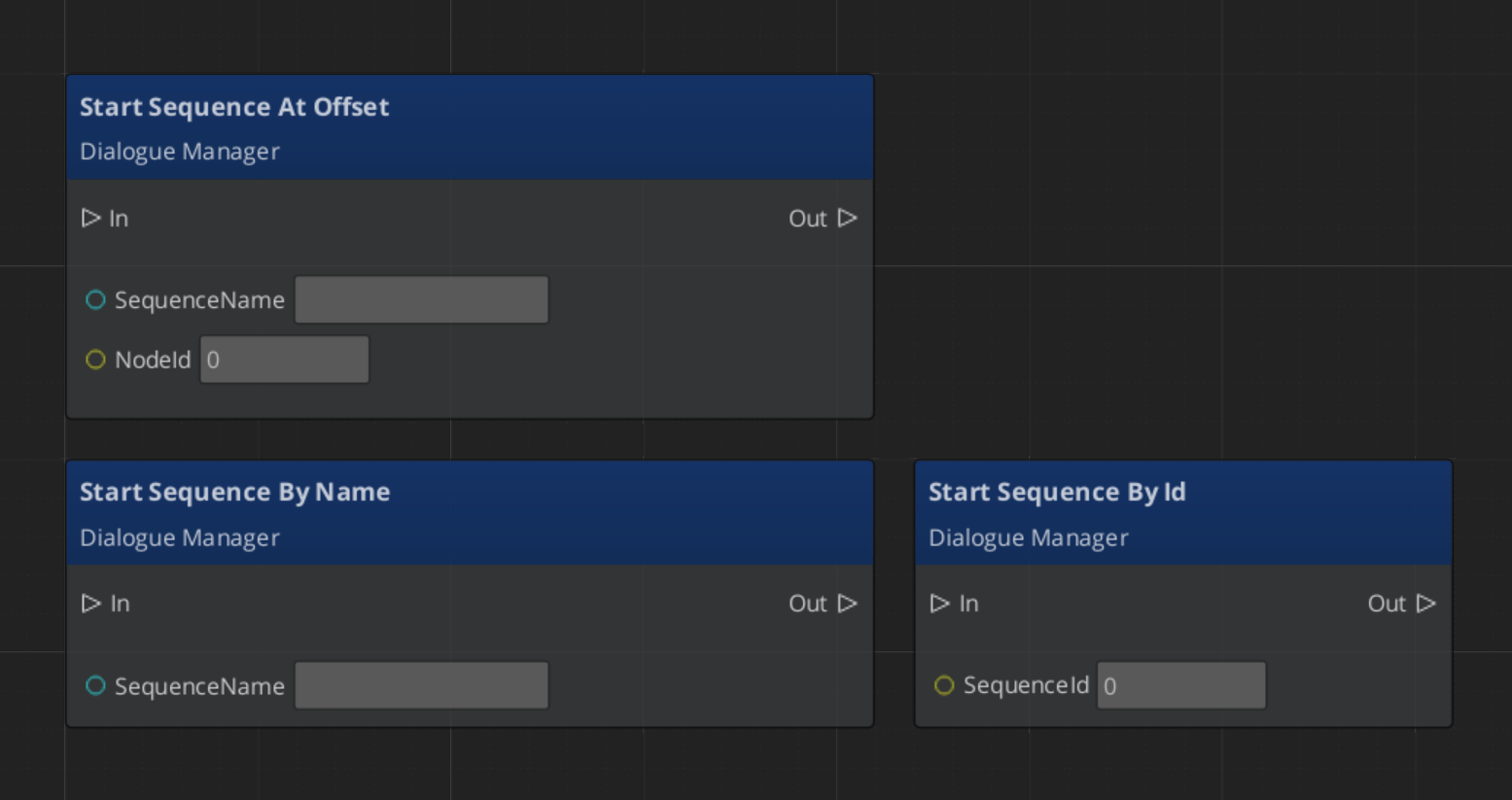

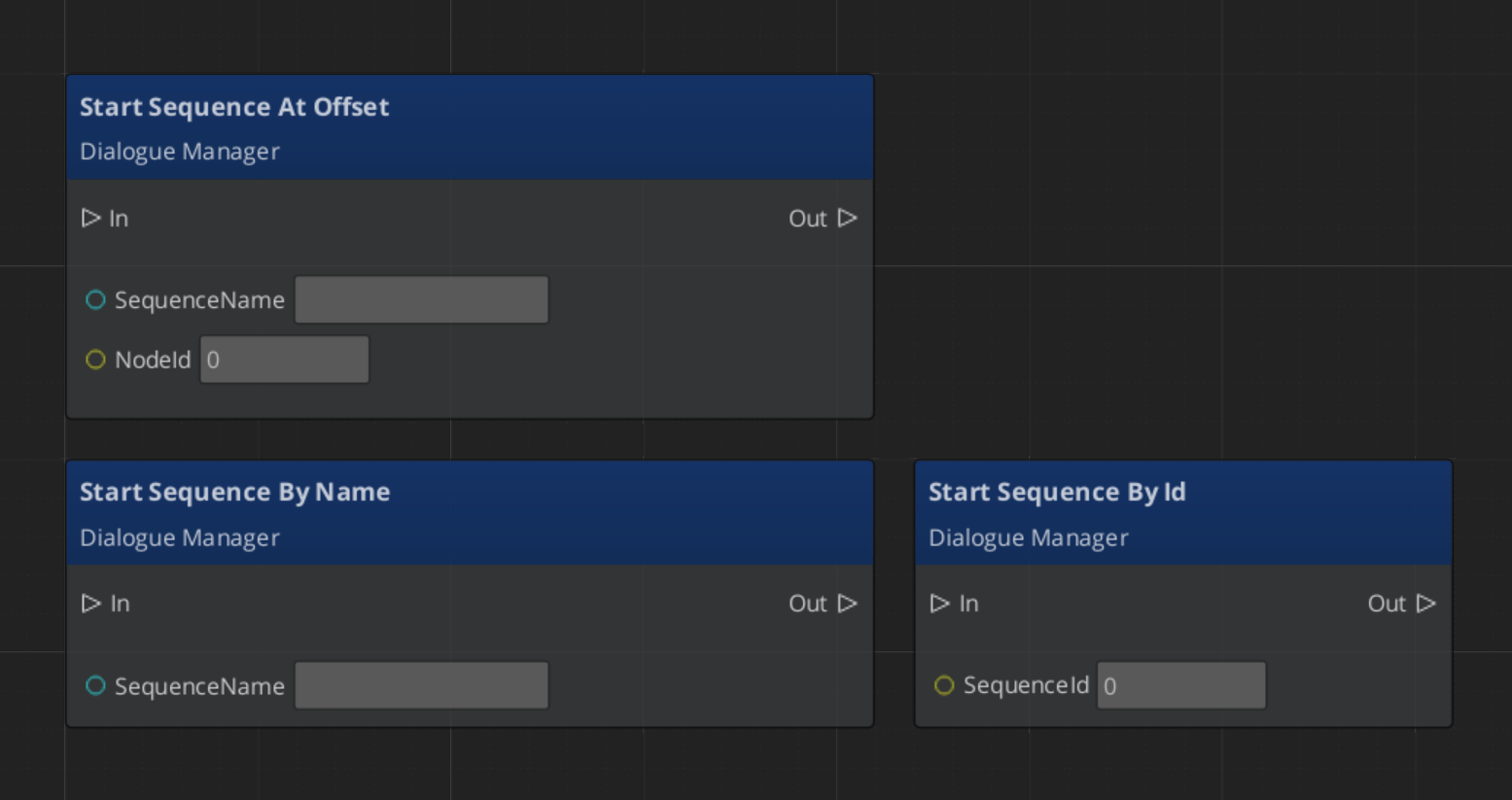

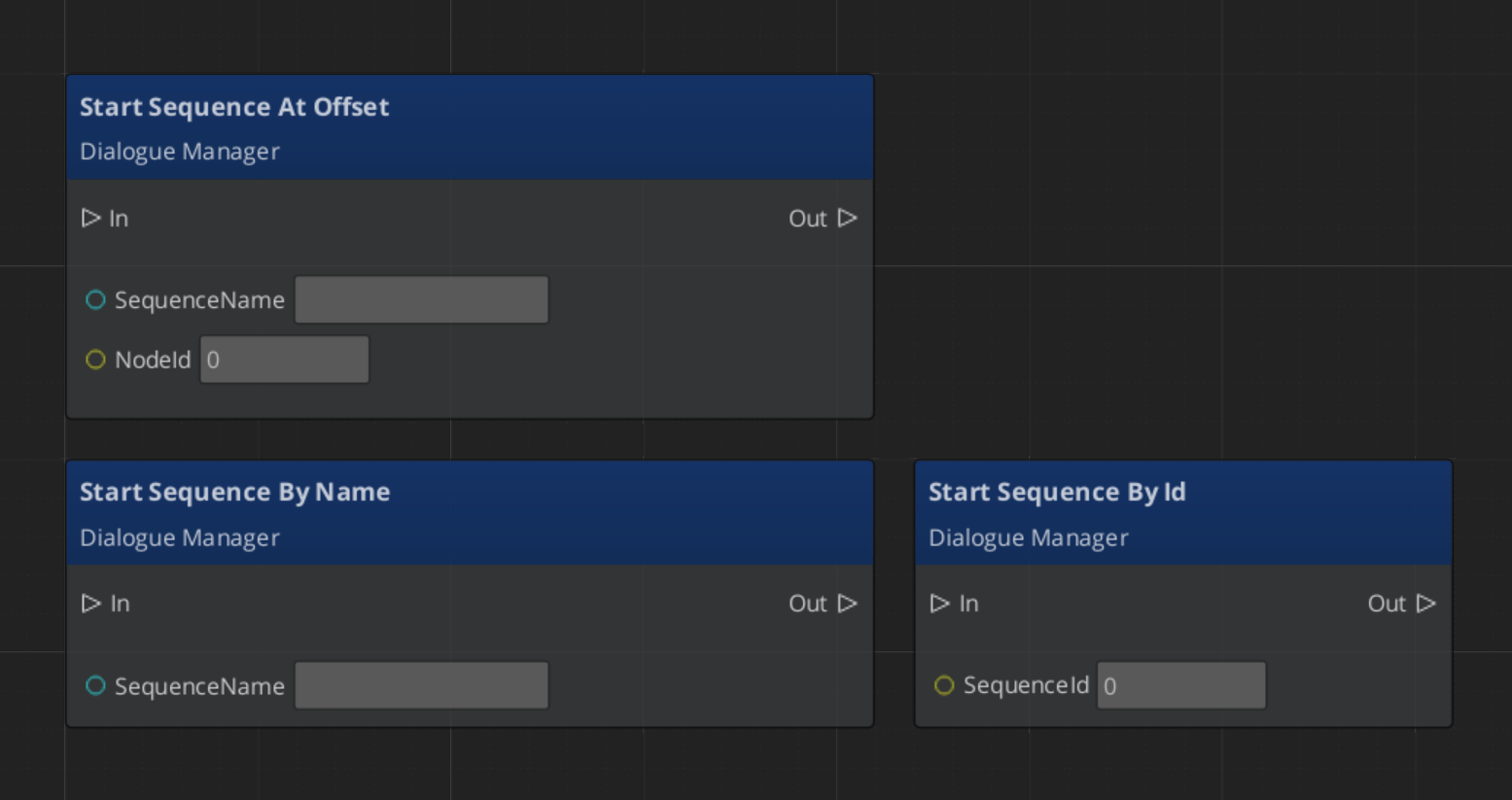

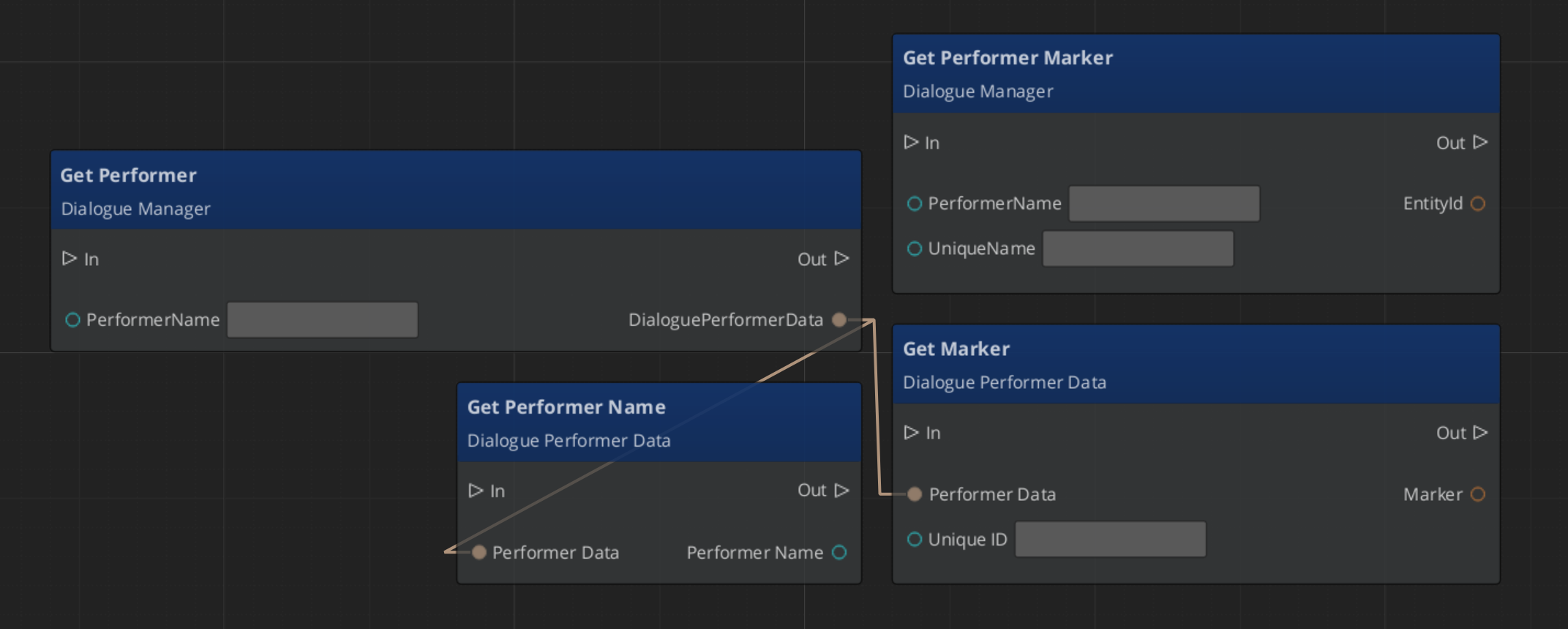

DialogueManagerRequestBus | Broadcast | StartDialogueSequenceByName, ChangeDialogueDatabase, RegisterPerformerMarker, GetPerformer |

DialogueSequencerRequestBus | Broadcast | StartDialogueBySequence, OnPerformanceComplete |

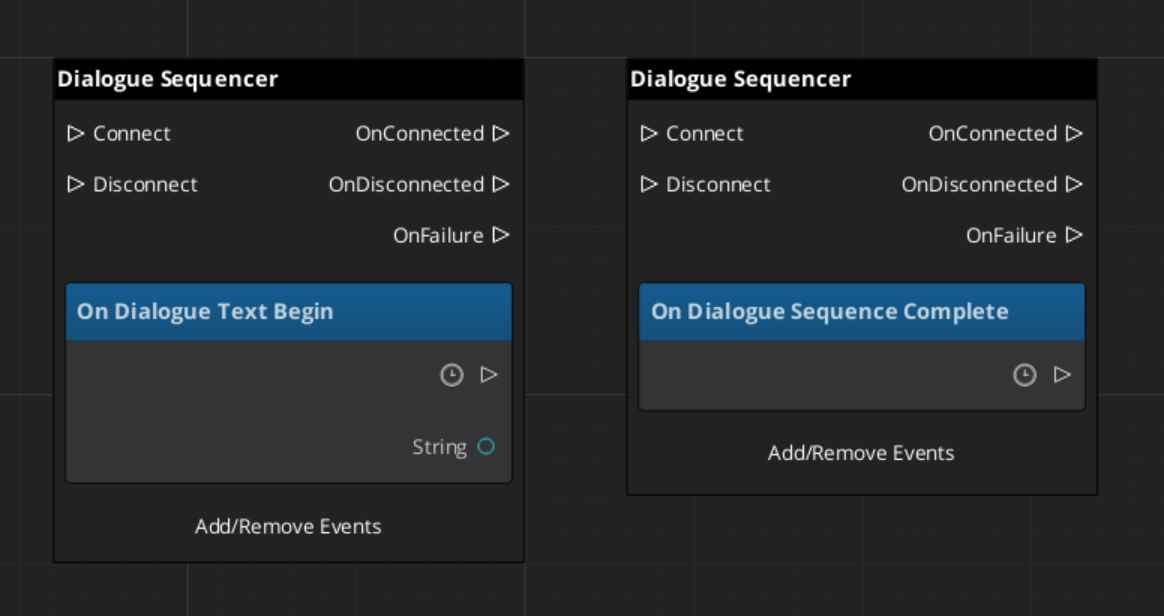

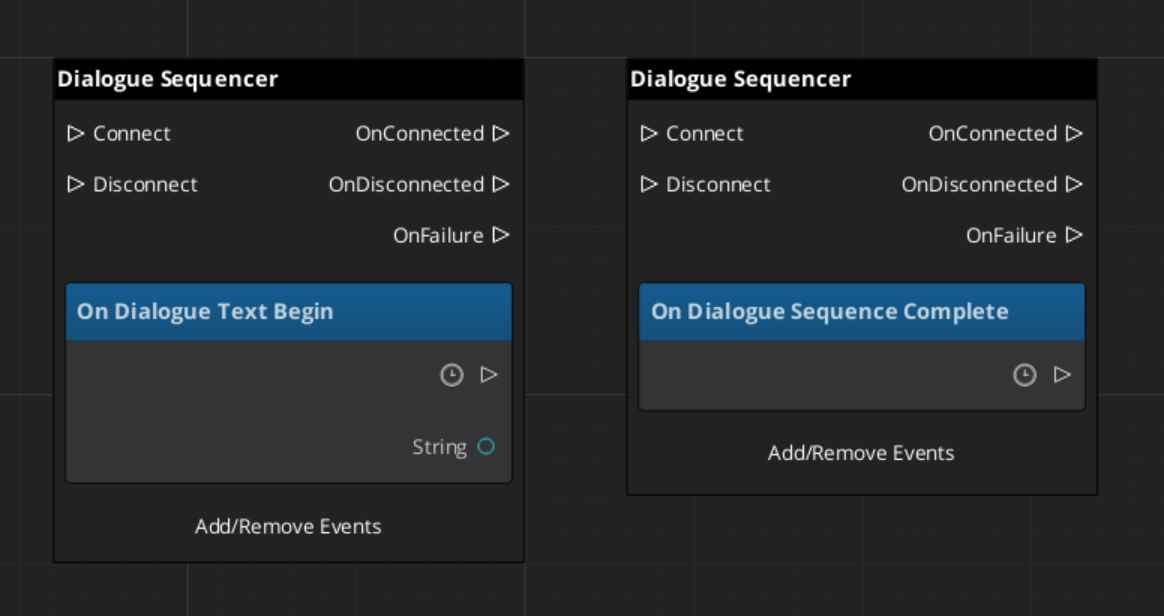

DialogueSequencerNotificationBus | Listen | OnDialogueTextBegin, OnDialogueSequenceComplete |

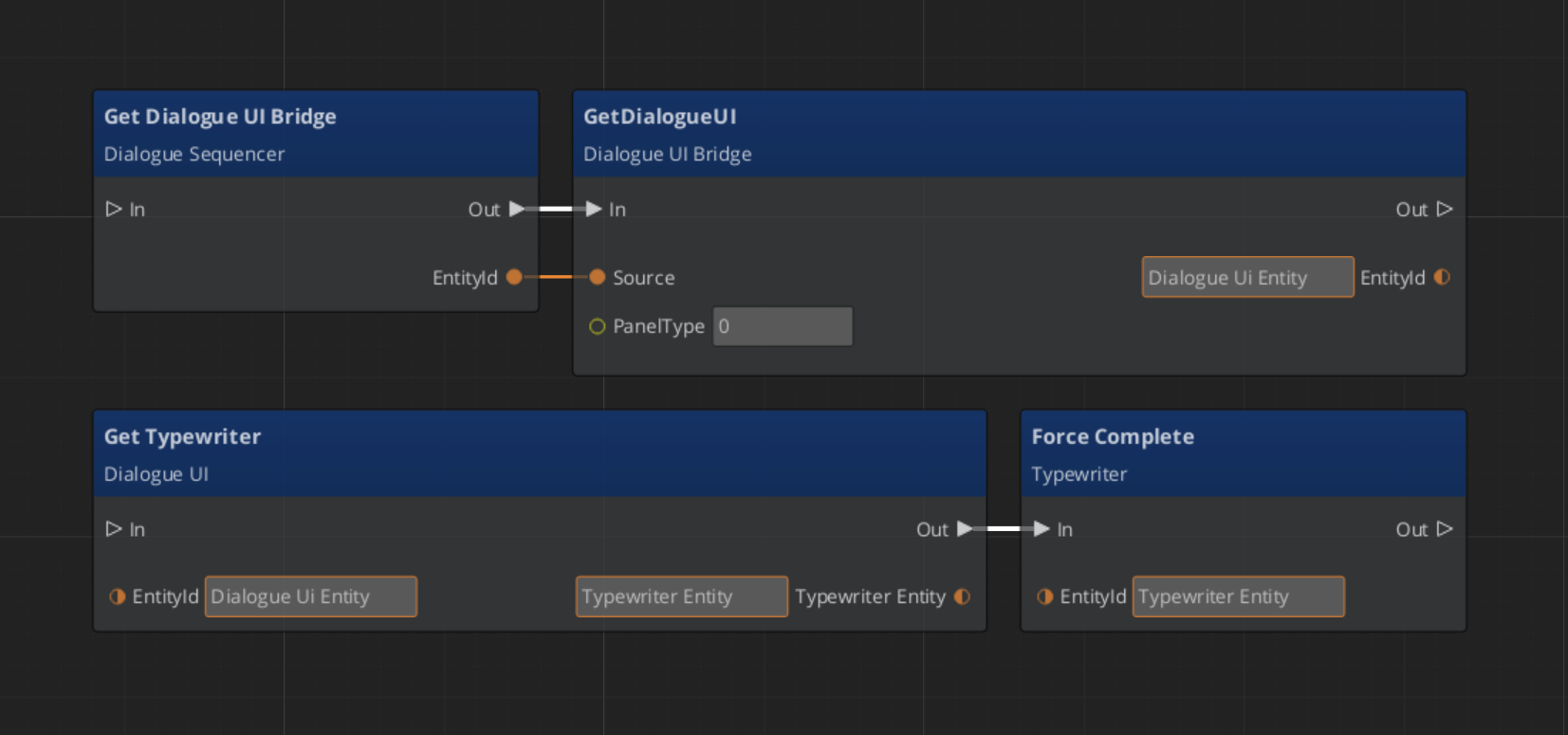

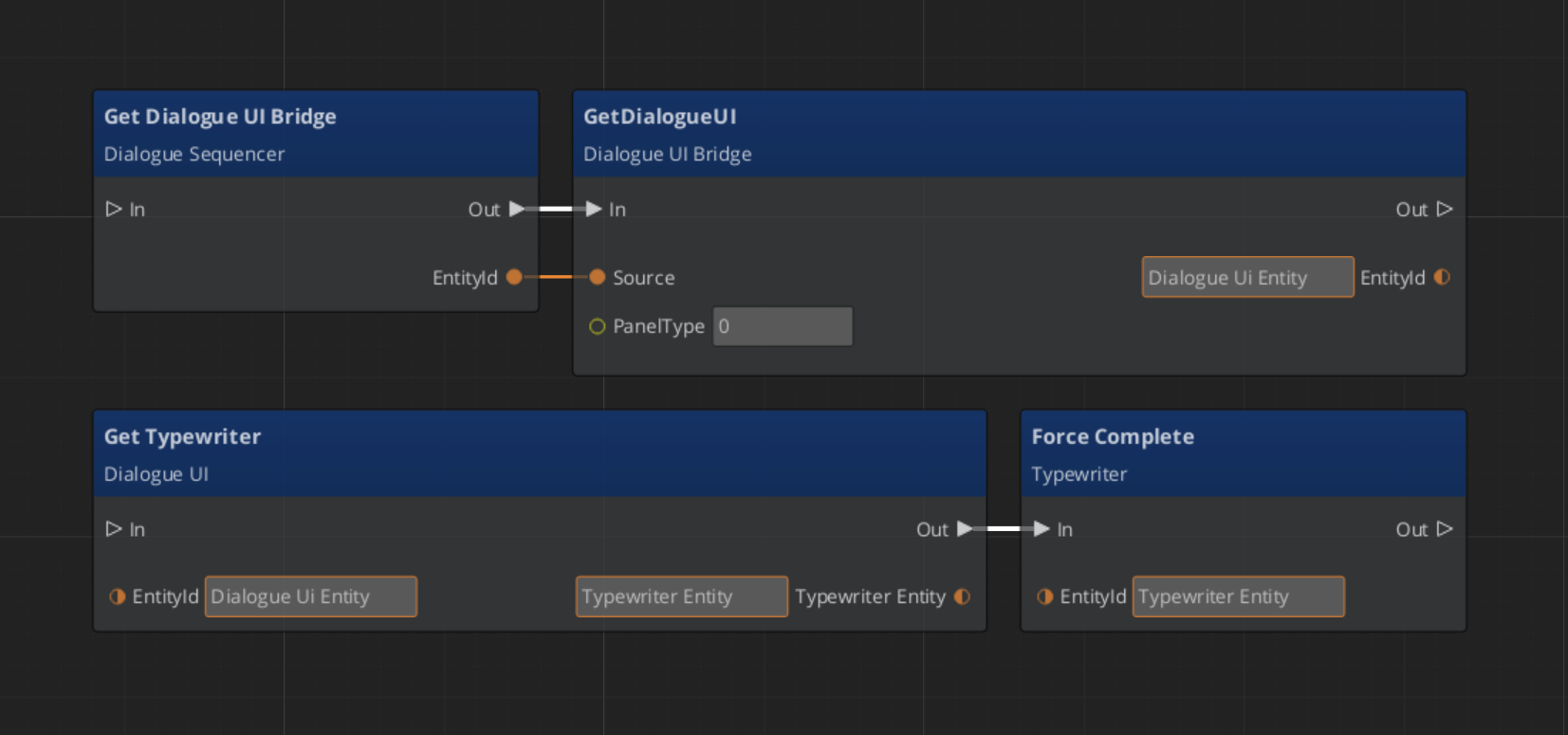

DialogueUIBridgeRequestBus | ById | RunDialogue, RunSelection, RegisterDialogueUI, CloseDialogue |

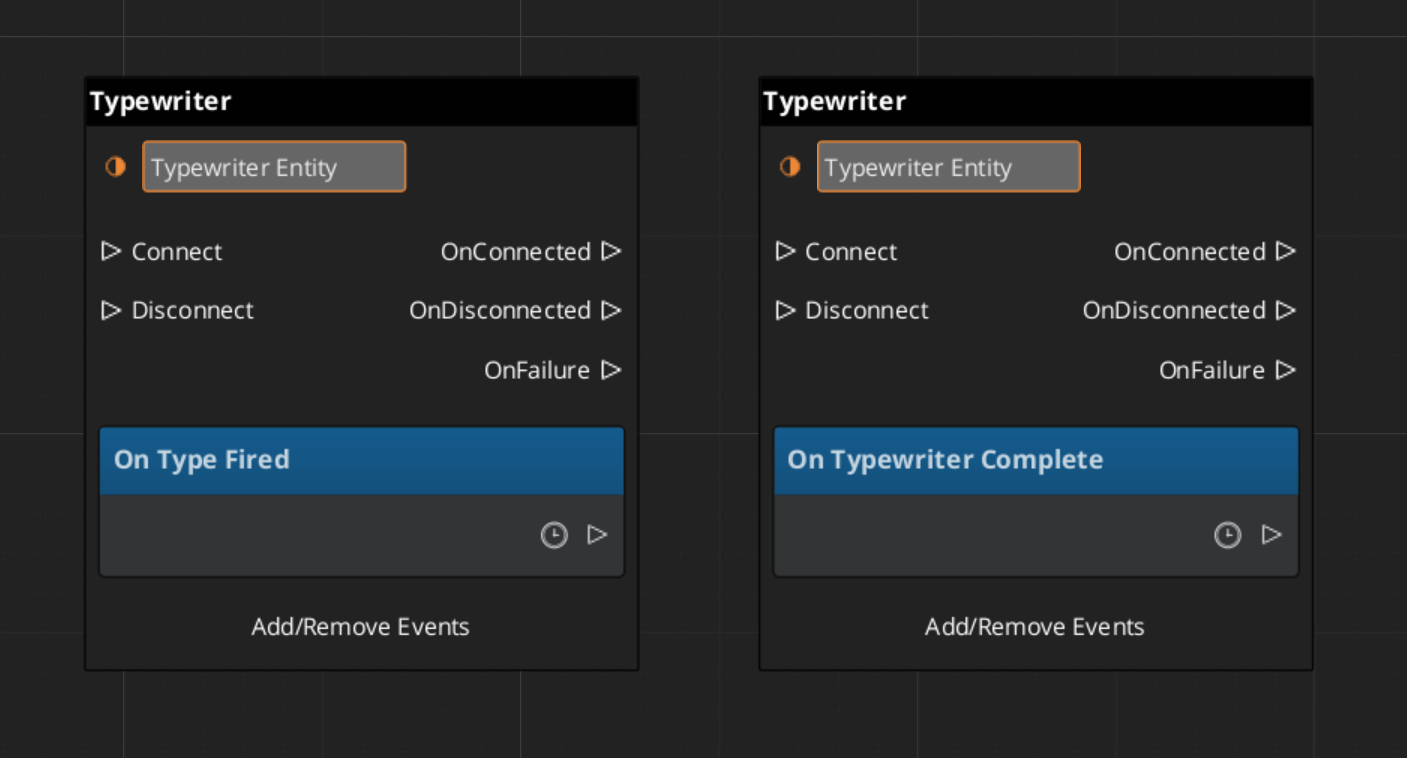

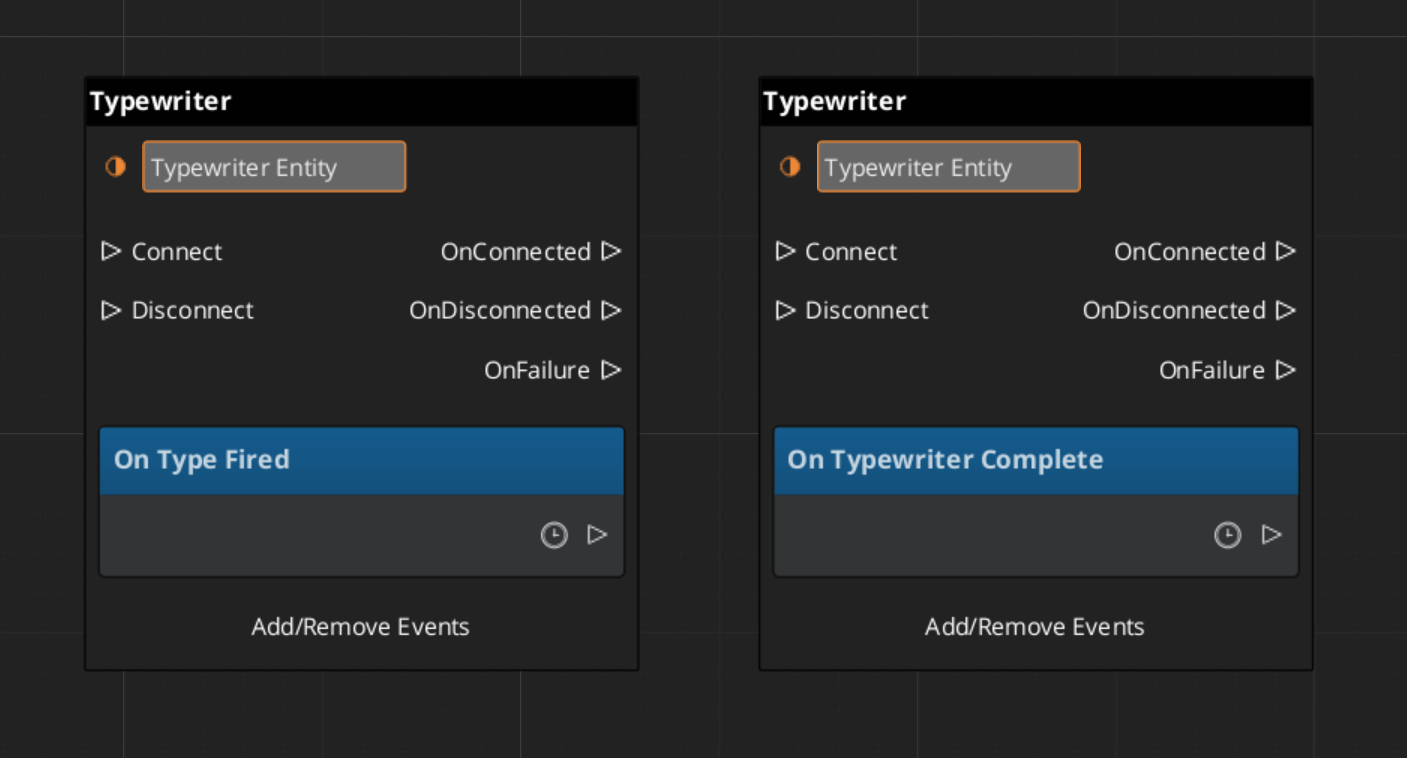

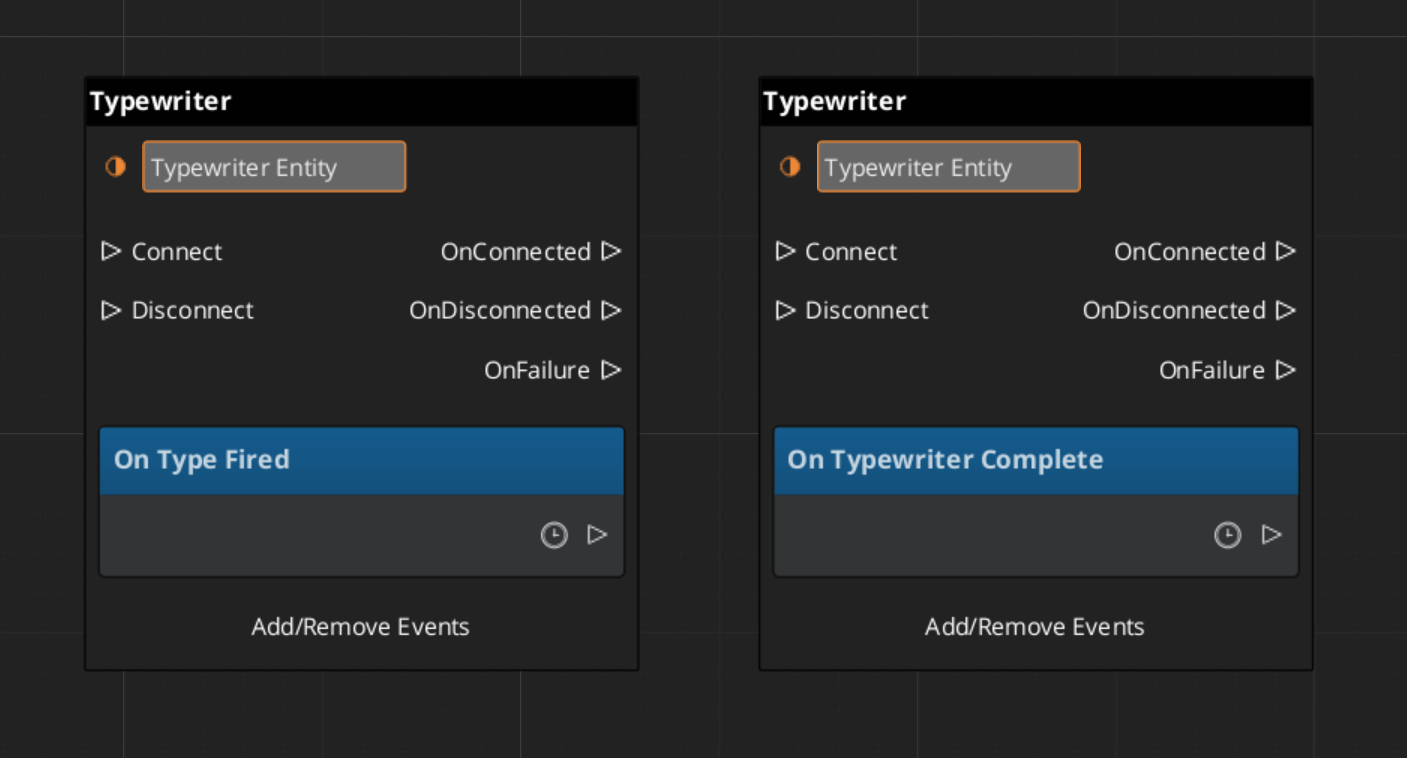

TypewriterRequestBus | ById | StartTypewriter, ForceComplete, ClearTypewriter |

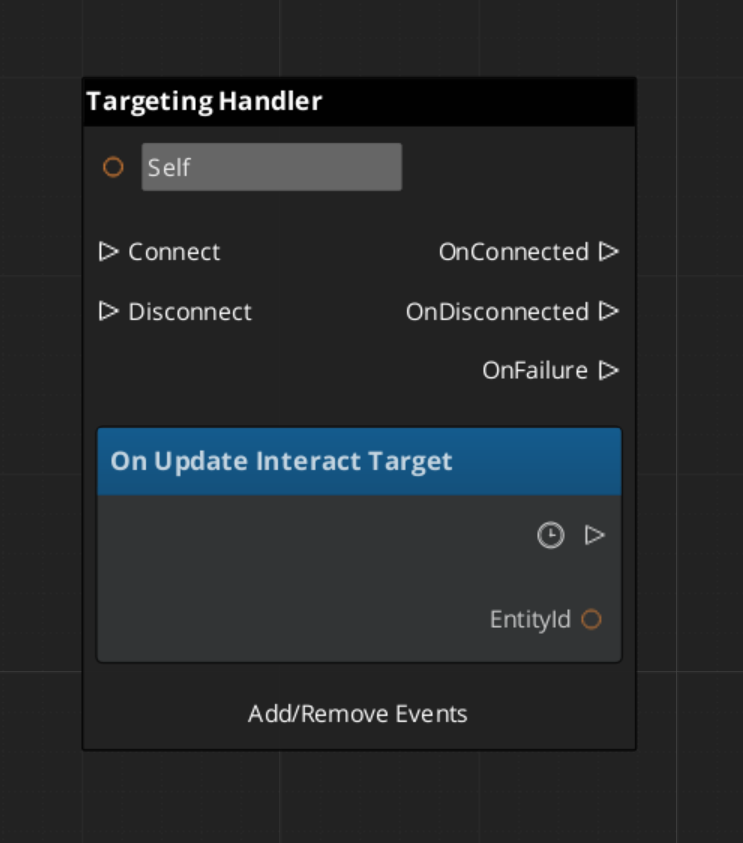

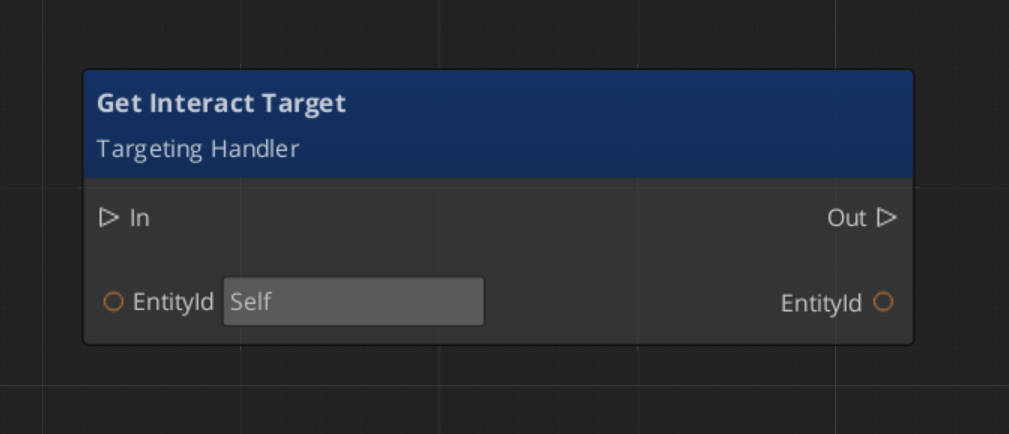

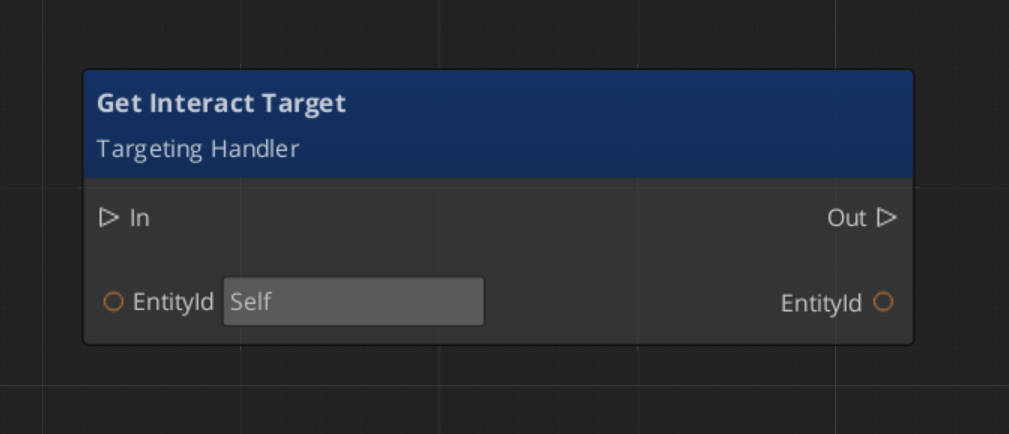

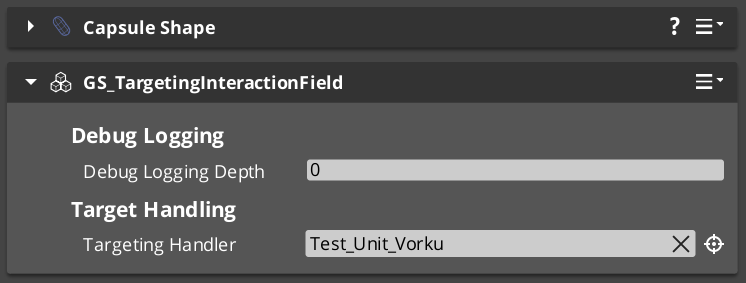

GS_Interaction buses

| Bus | Dispatch | Key Methods |

|---|

PulseReactorRequestBus | ById | ReceivePulses, IsReactor |

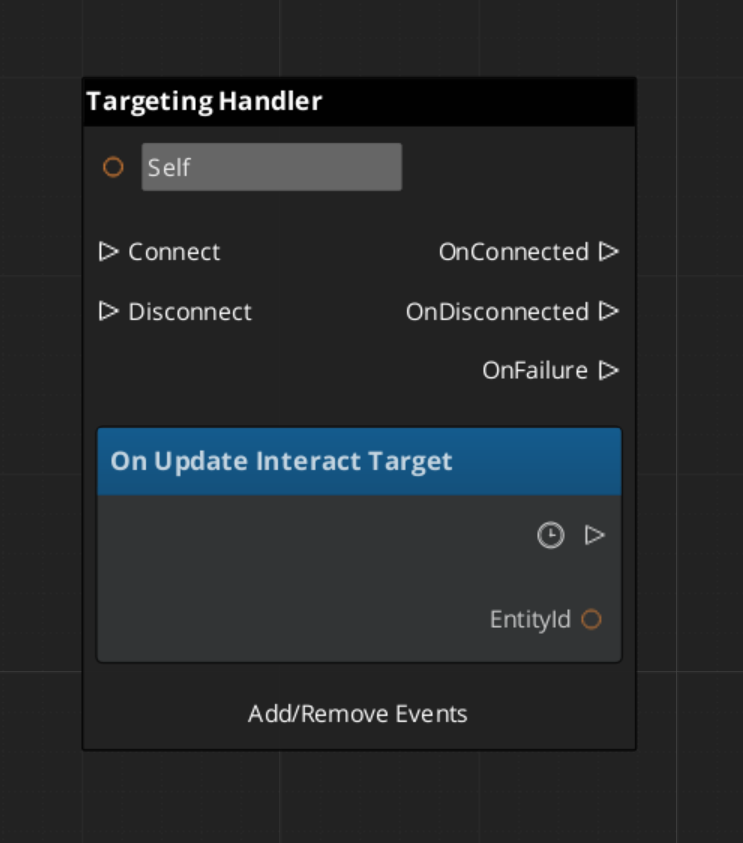

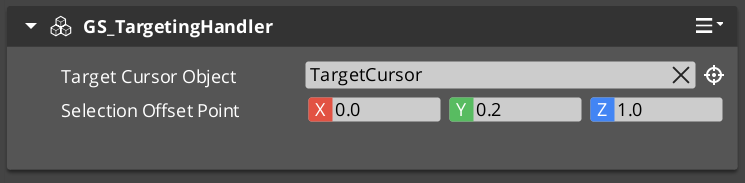

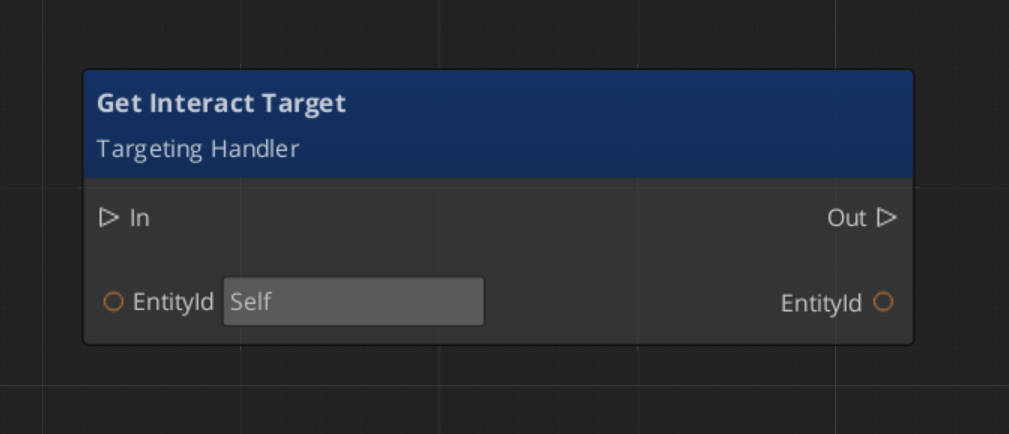

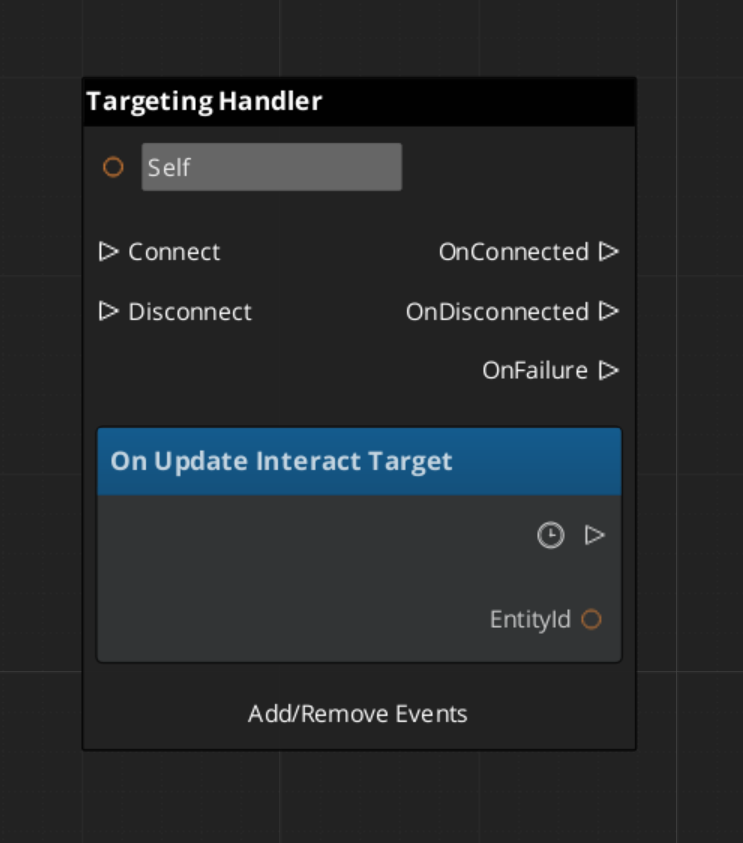

GS_TargetingHandlerRequestBus | ById | RegisterTarget, UnregisterTarget, GetInteractTarget |

GS_TargetingHandlerNotificationBus | ById Listen | OnUpdateInteractTarget, OnEnterStandby, OnExitStandby |

WorldTriggerRequestBus | ById | Trigger, Reset |

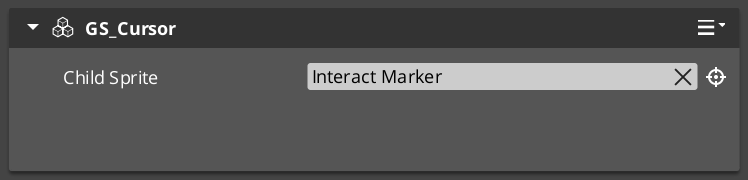

GS_CursorRequestBus | Broadcast | RegisterCursorCanvas, HideCursor, SetCursorOffset, SetCursorVisuals, SetCursorPosition |

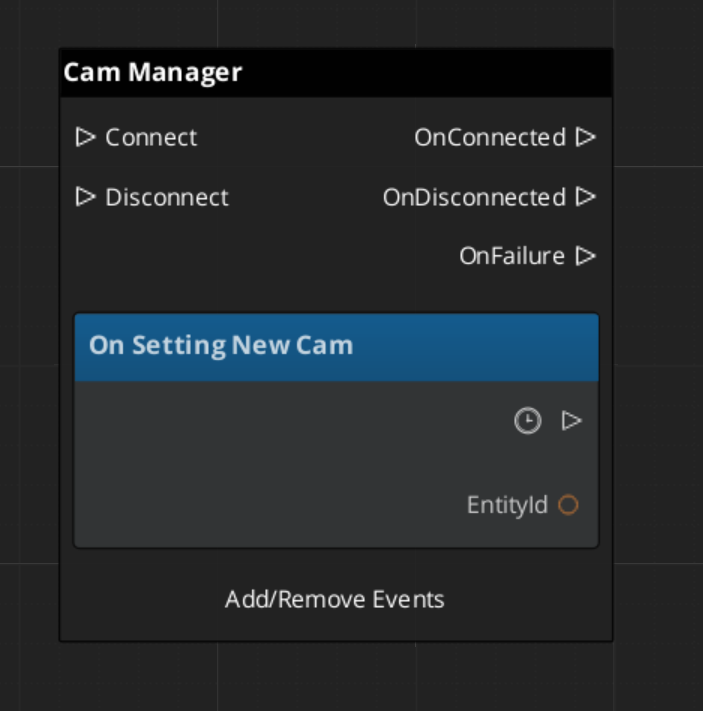

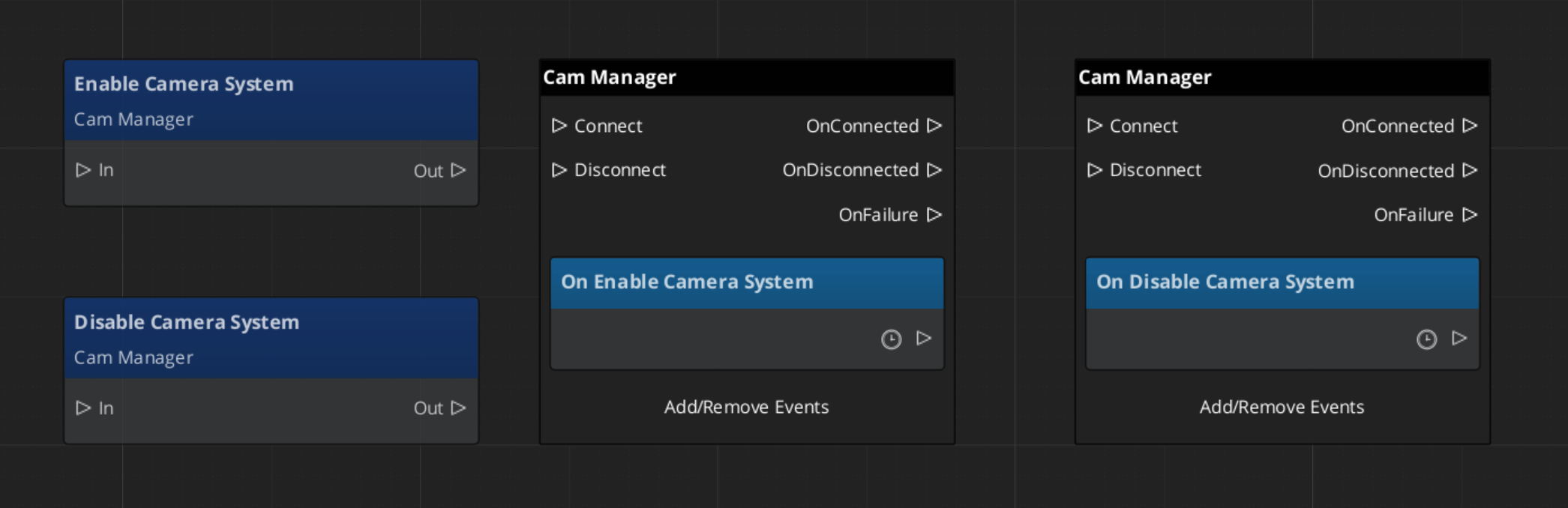

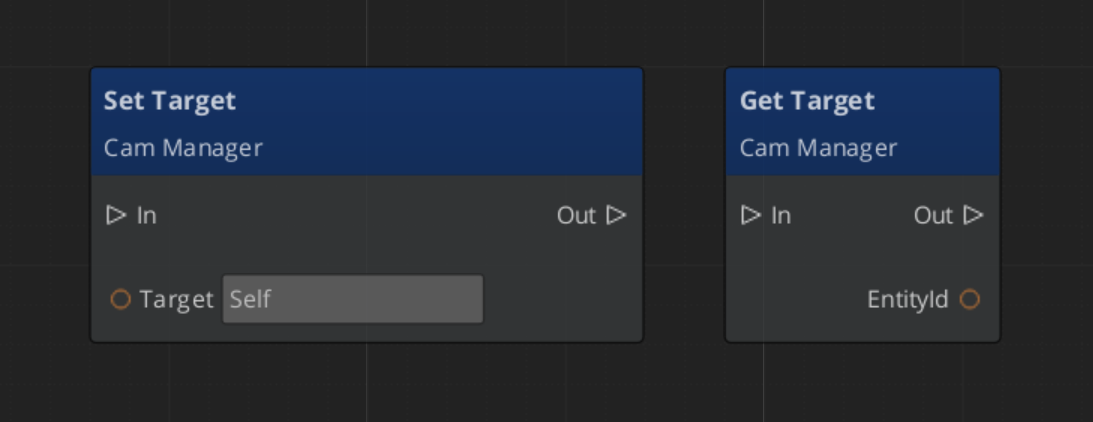

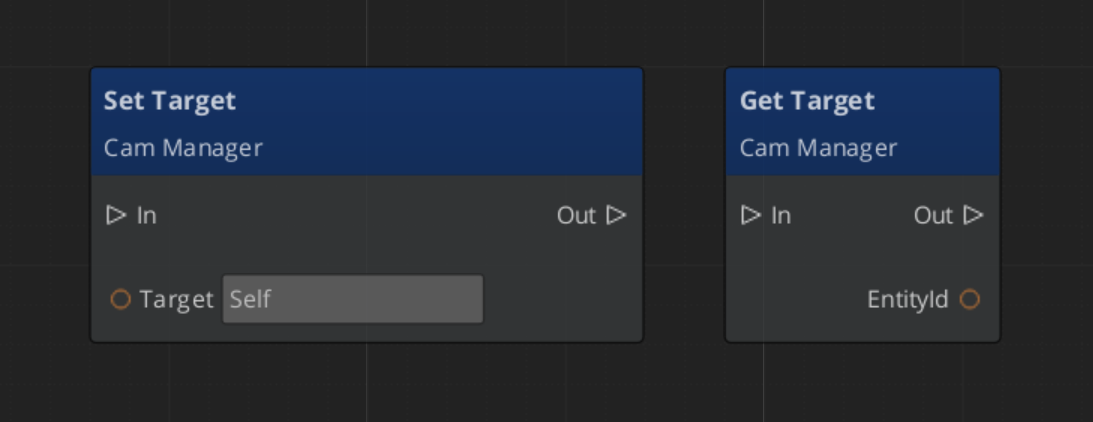

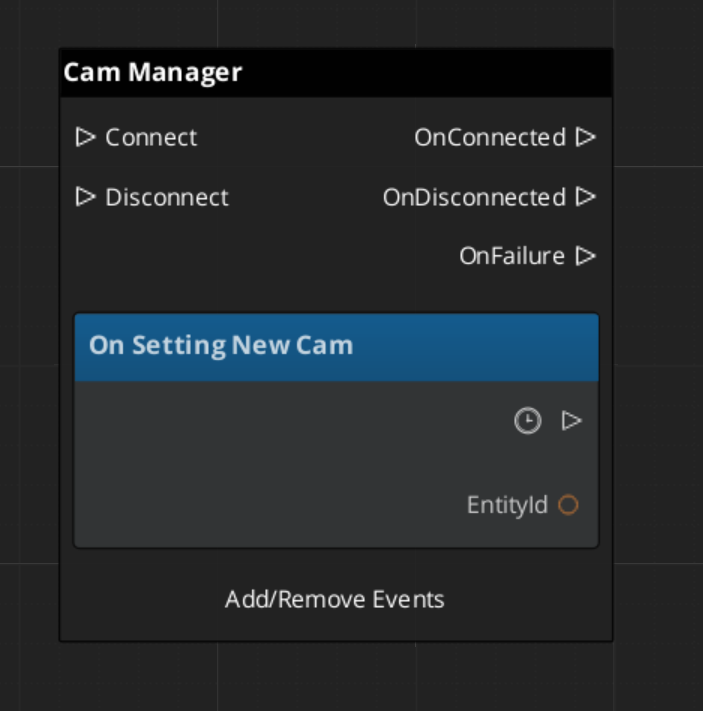

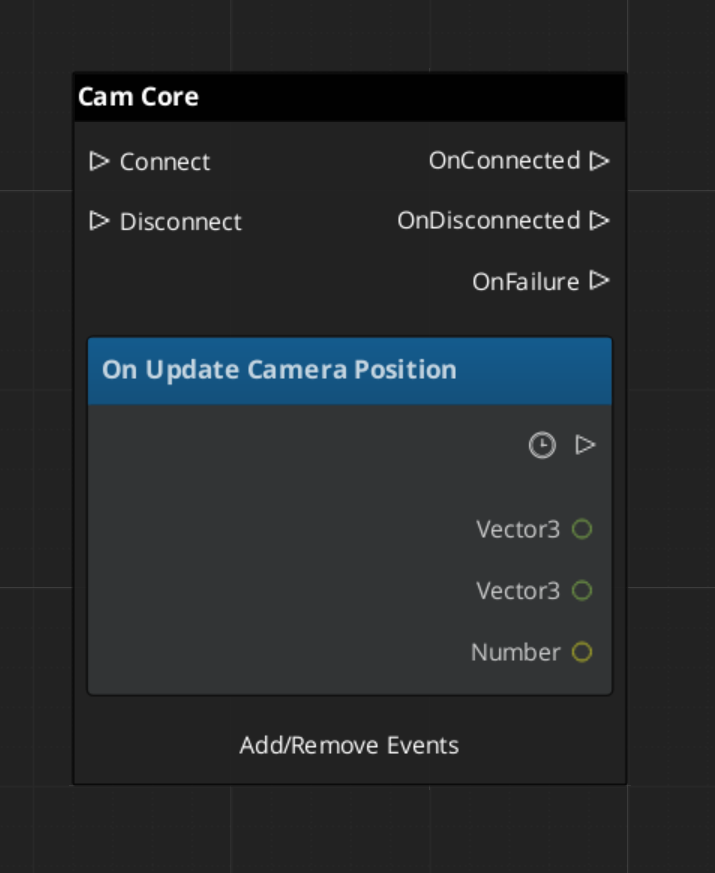

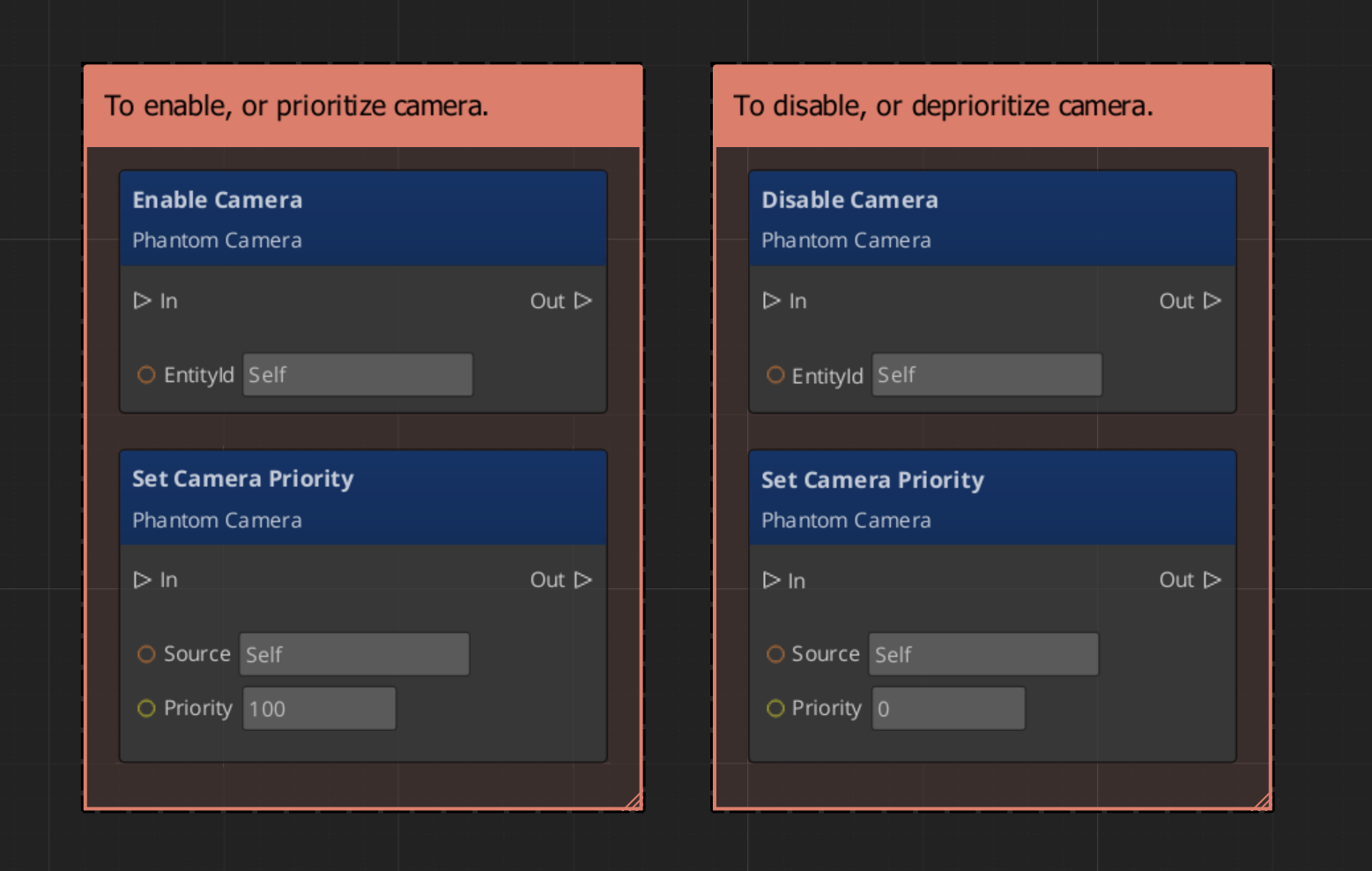

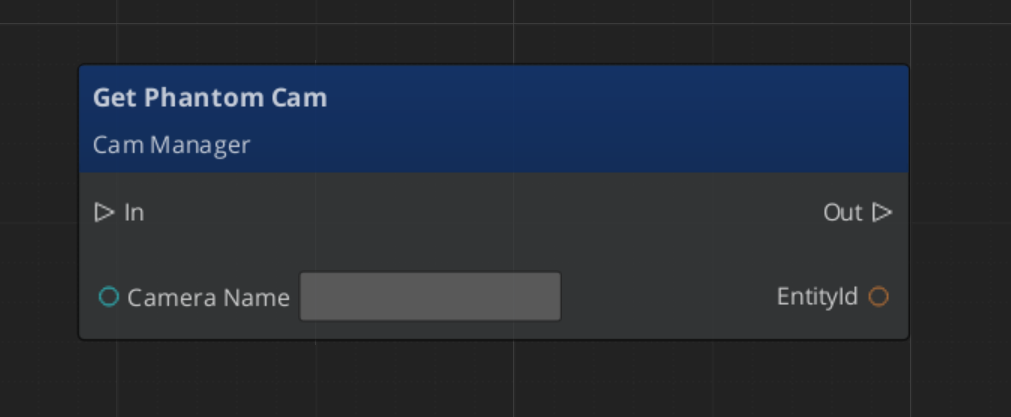

GS_PhantomCam buses

| Bus | Dispatch | Key Methods |

|---|

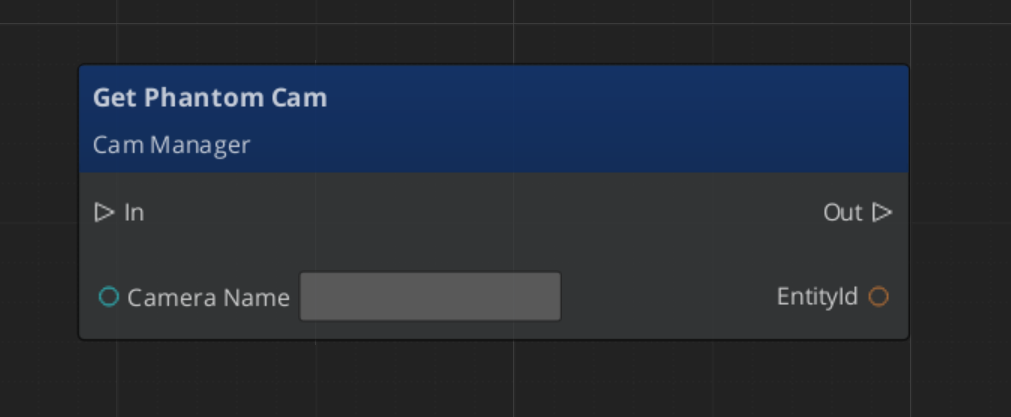

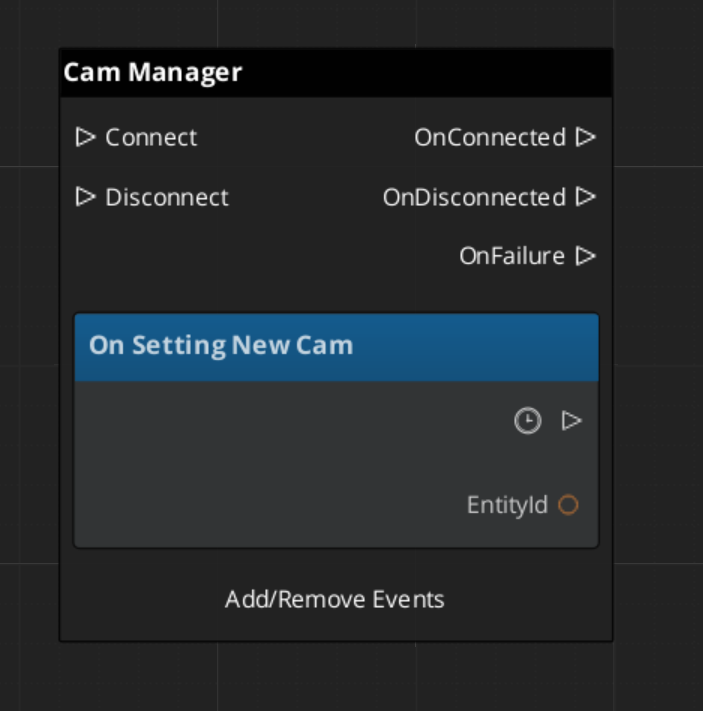

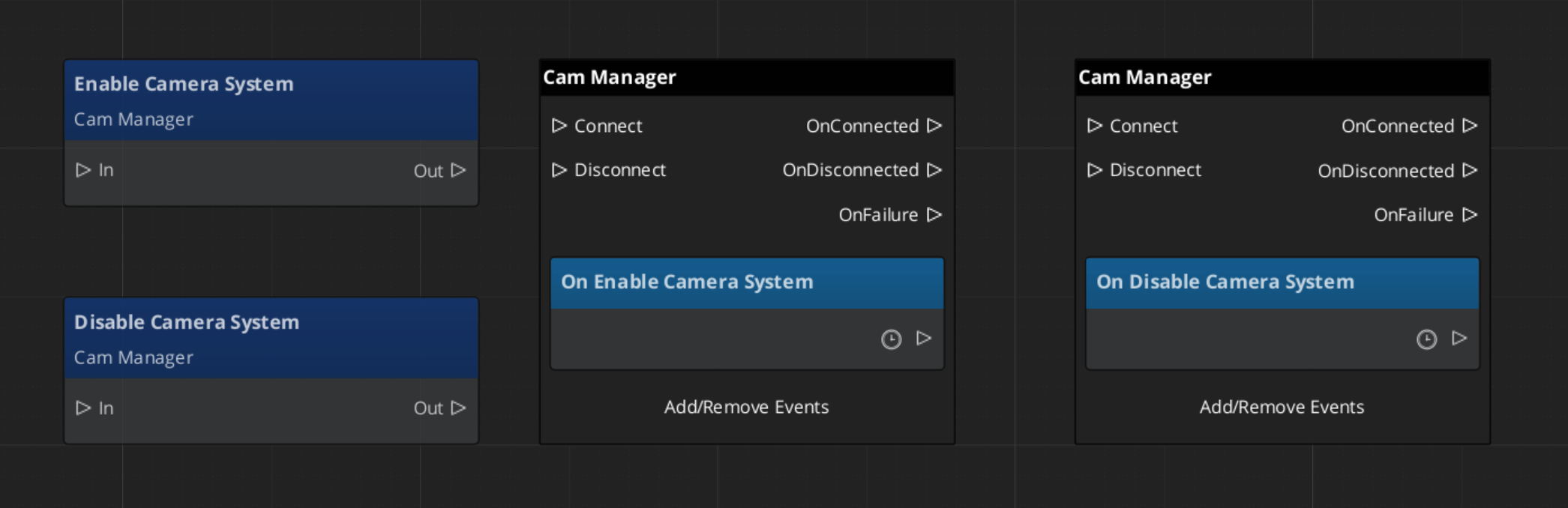

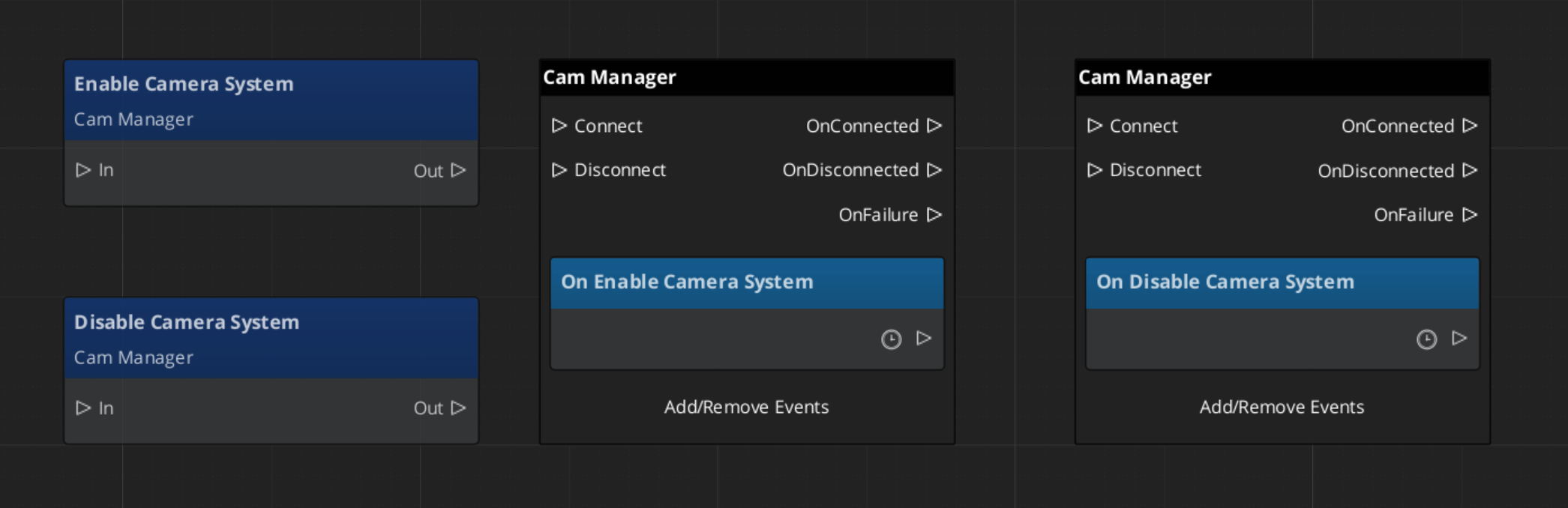

CamManagerRequestBus | Broadcast | Camera system lifecycle |

CamManagerNotificationBus | Listen | EnableCameraSystem, DisableCameraSystem, SettingNewCam |

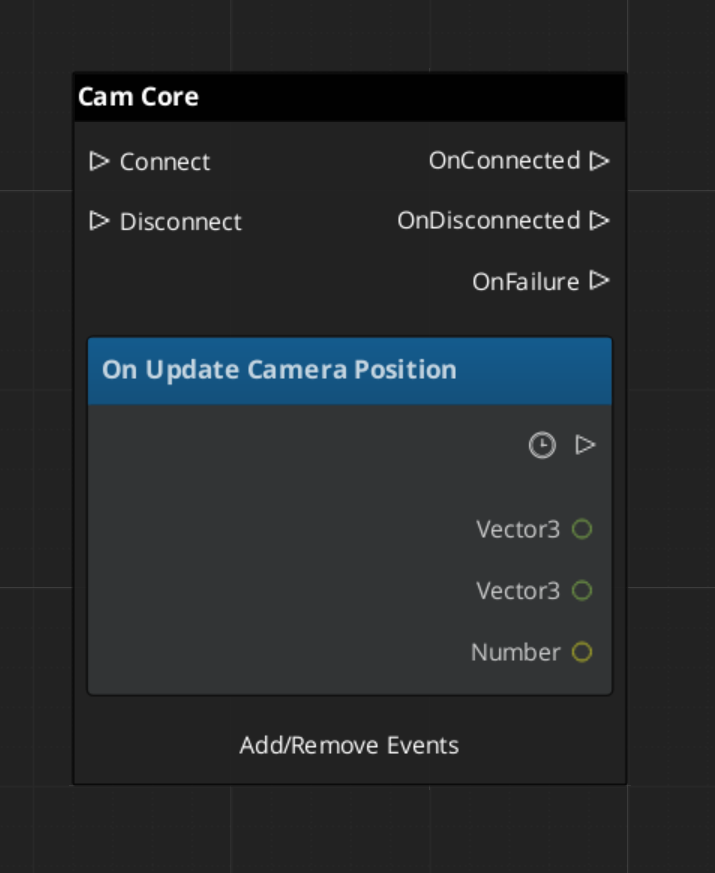

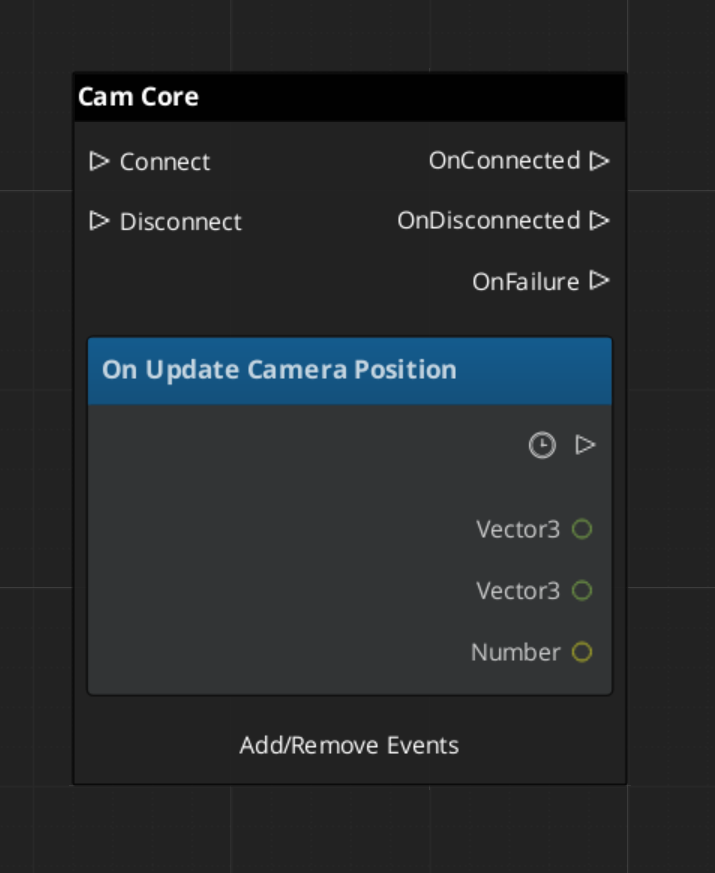

CamCoreNotificationBus | Listen | UpdateCameraPosition |

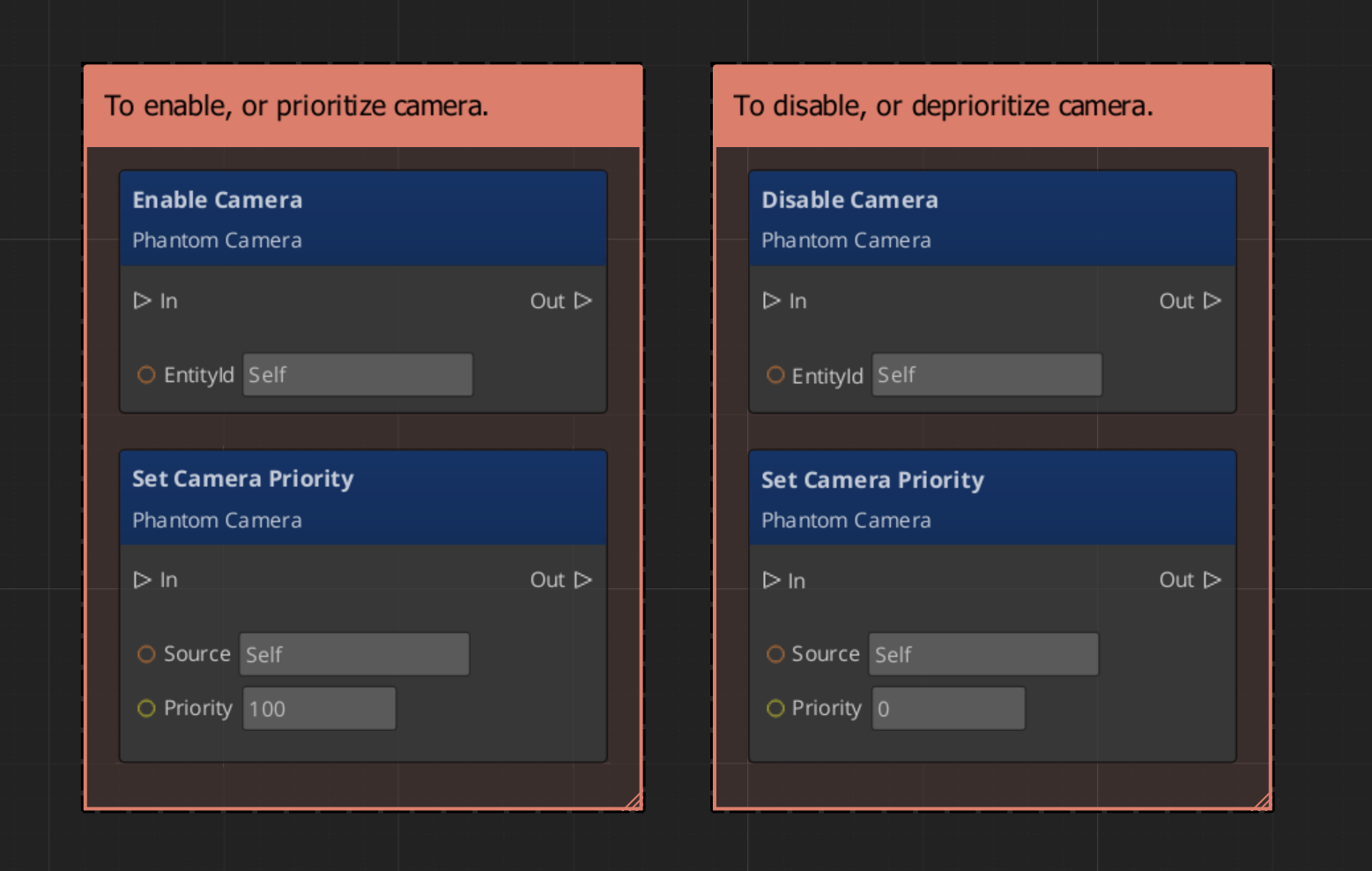

PhantomCameraRequestBus | ById | Per-camera control |

GS_UI buses

| Bus | Dispatch | Key Methods |

|---|

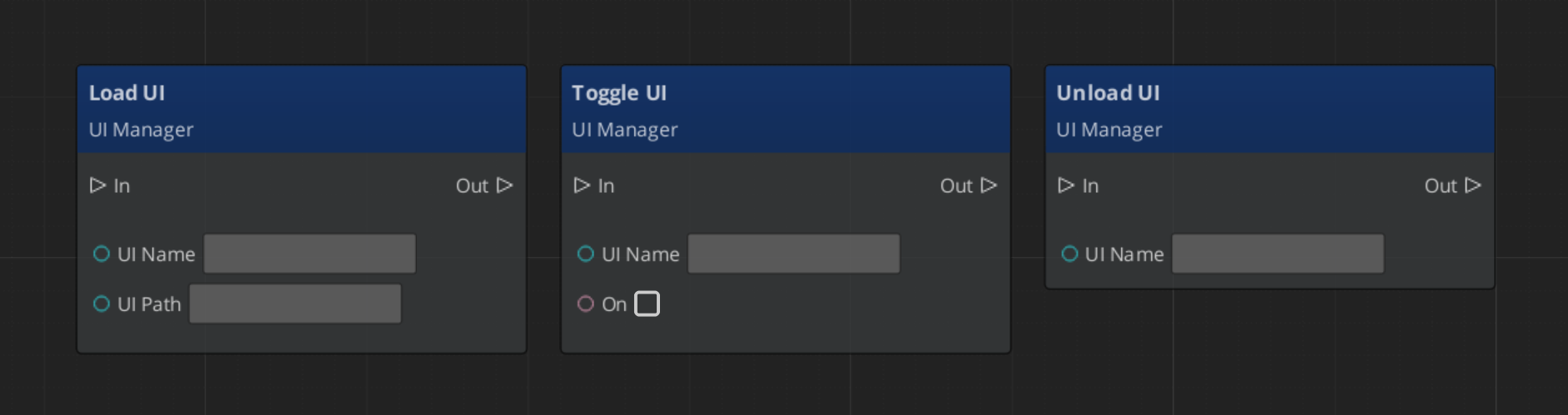

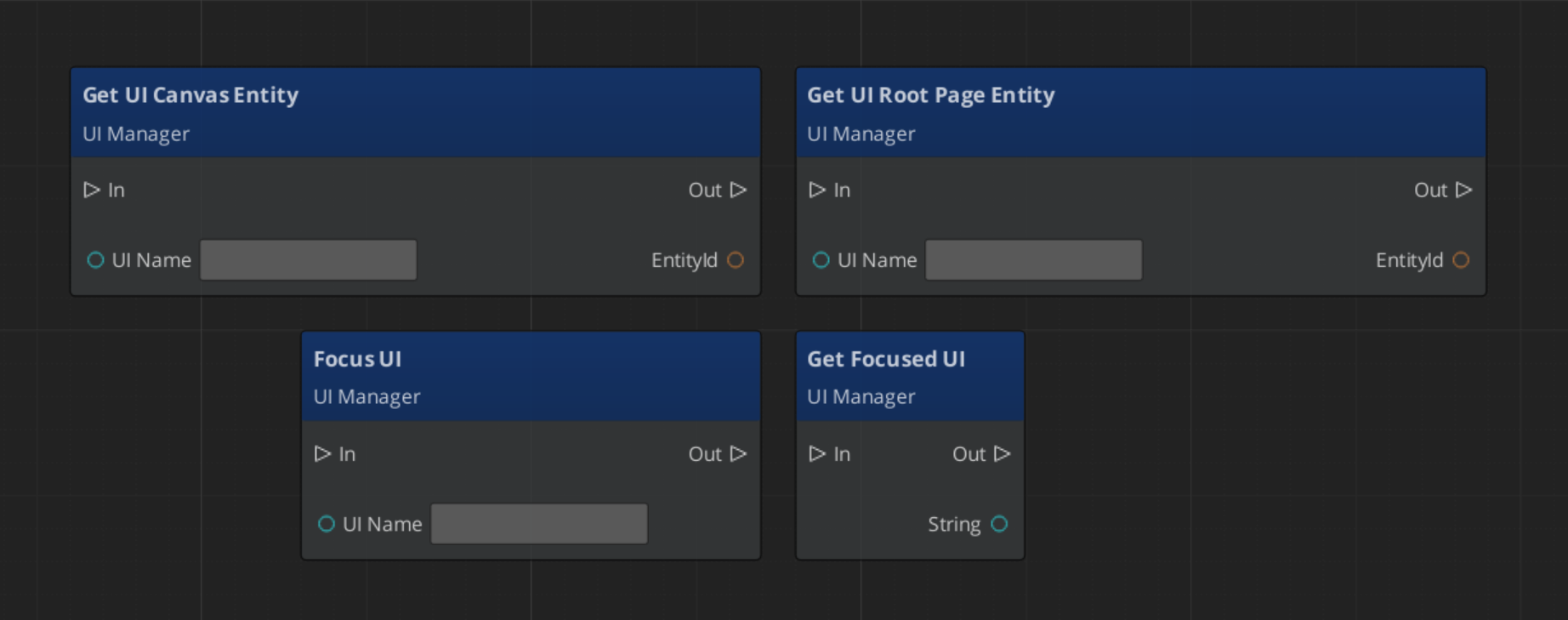

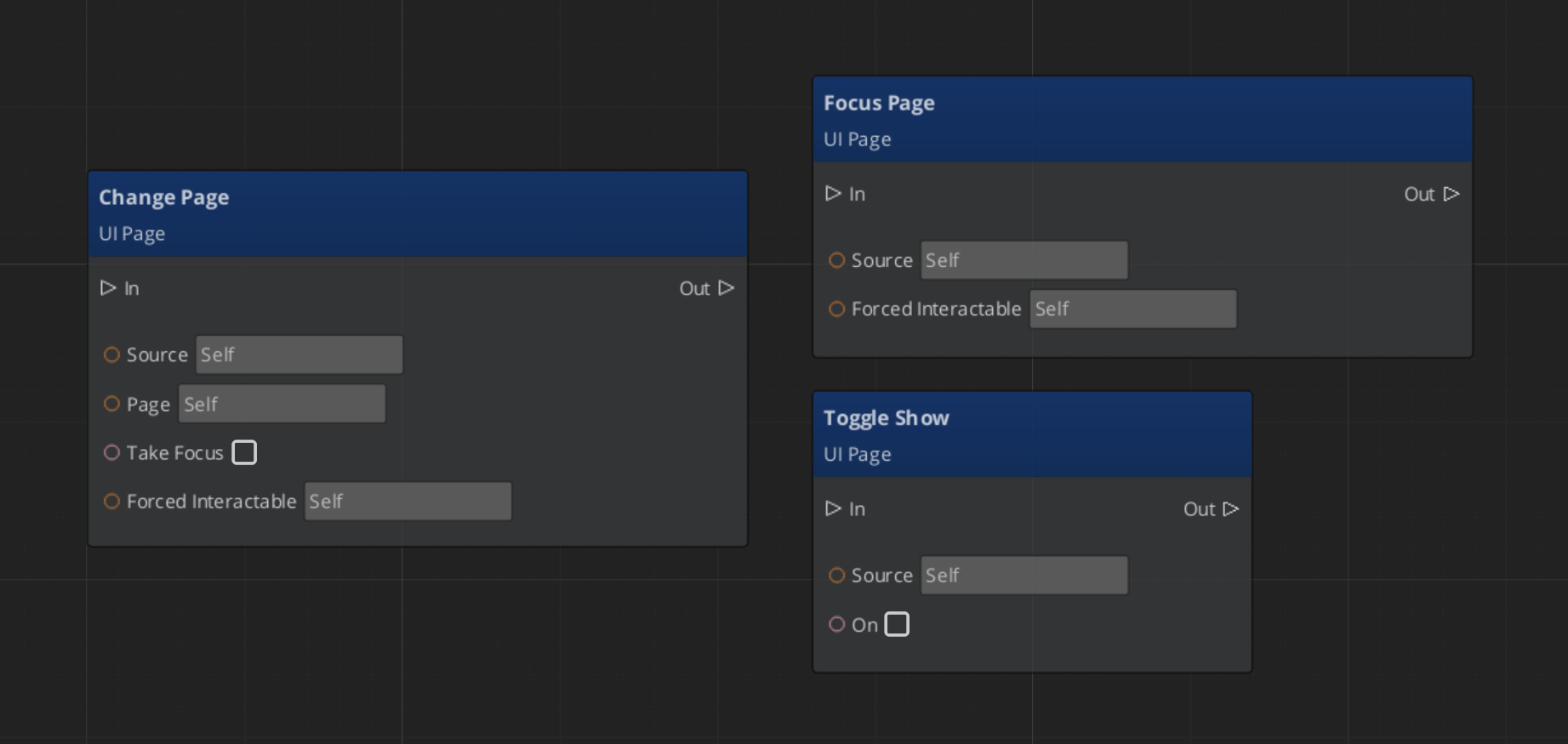

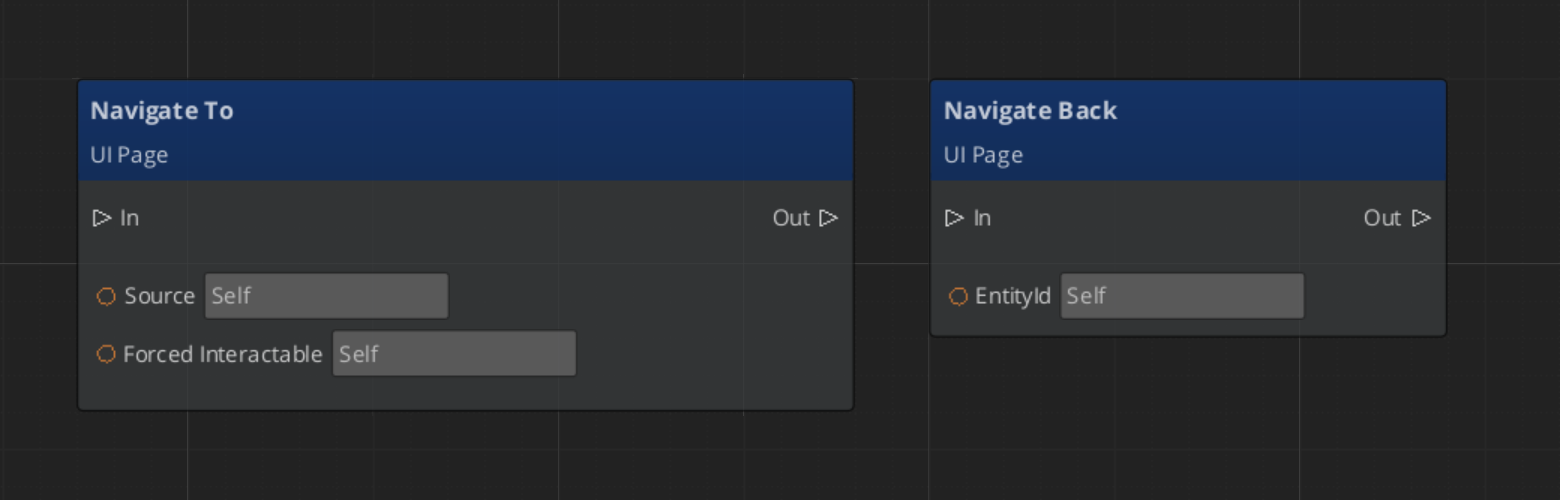

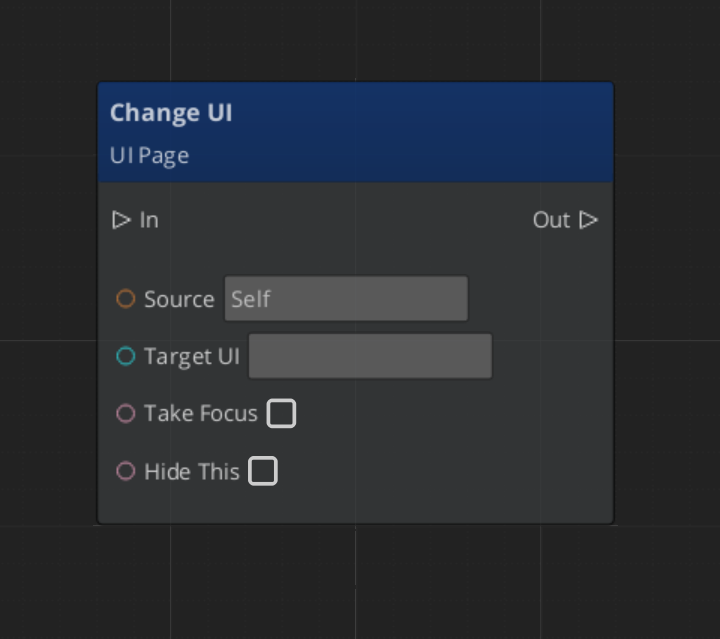

UIManagerRequestBus | Broadcast | LoadGSUI, UnloadGSUI, FocusUI, NavLastUI, RegisterPage |

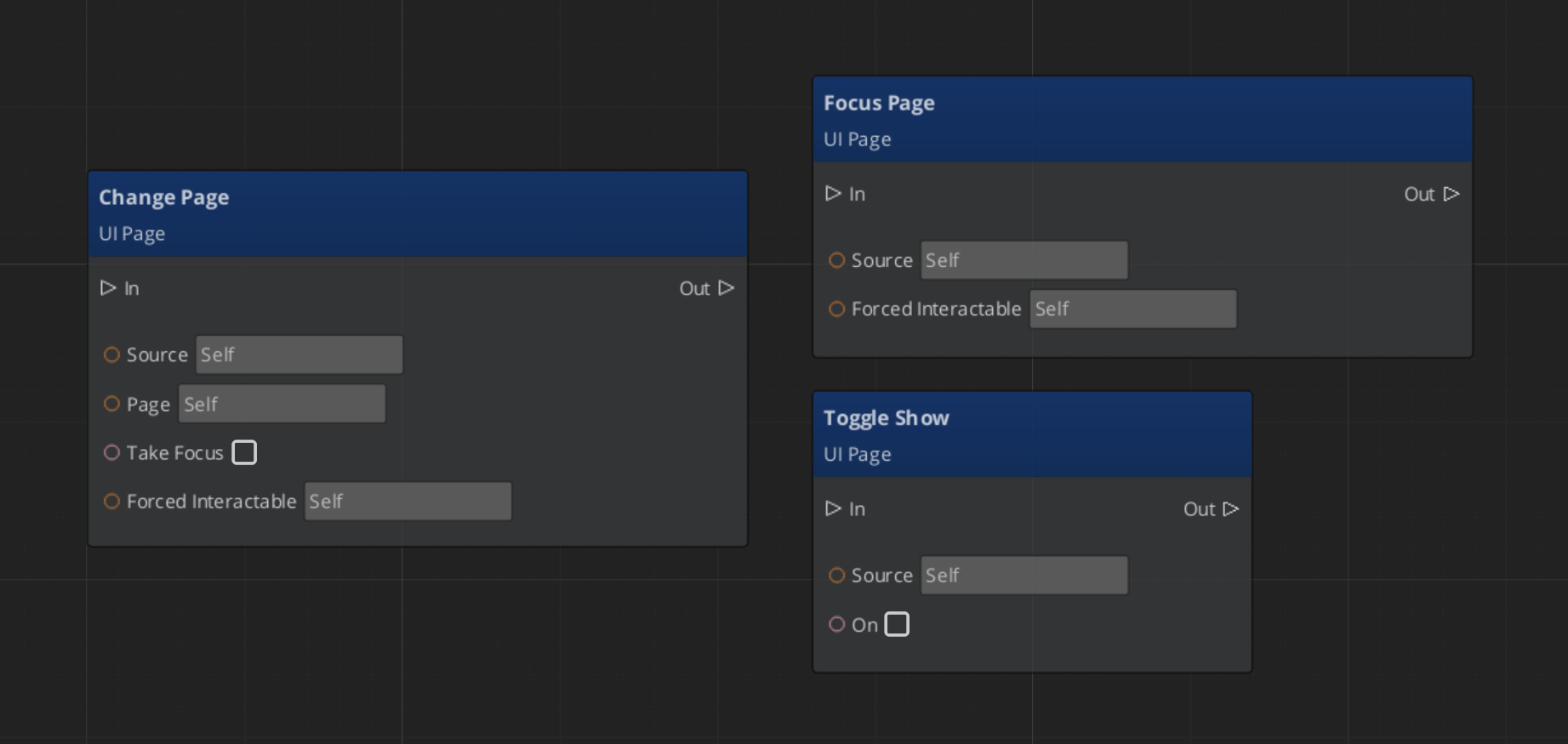

UIPageRequestBus | ById | ShowPage, HidePage, FocusPage, ToggleShow |

LoadScreenRequestBus | Broadcast | StartLoadScreen, EndLoadScreen |

PauseMenuRequestBus | Broadcast | PauseGame, UnPauseGame |

Removed / Deprecated (do not use): GS_UIHubComponent, GS_UIHubBus, GS_UIWindowComponent — these were part of a removed three-tier Hub/Window/Page hierarchy. The current architecture is single-tier: Manager → Page.

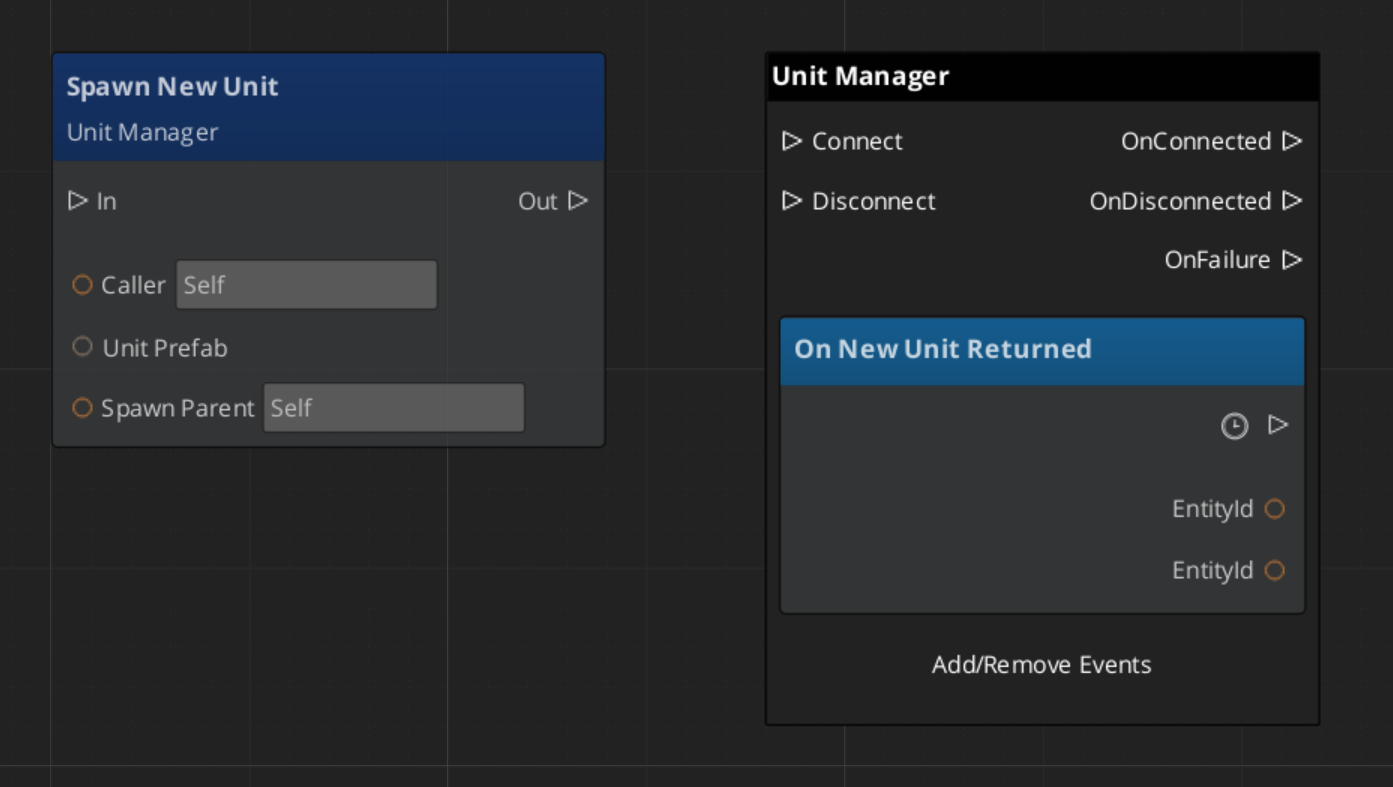

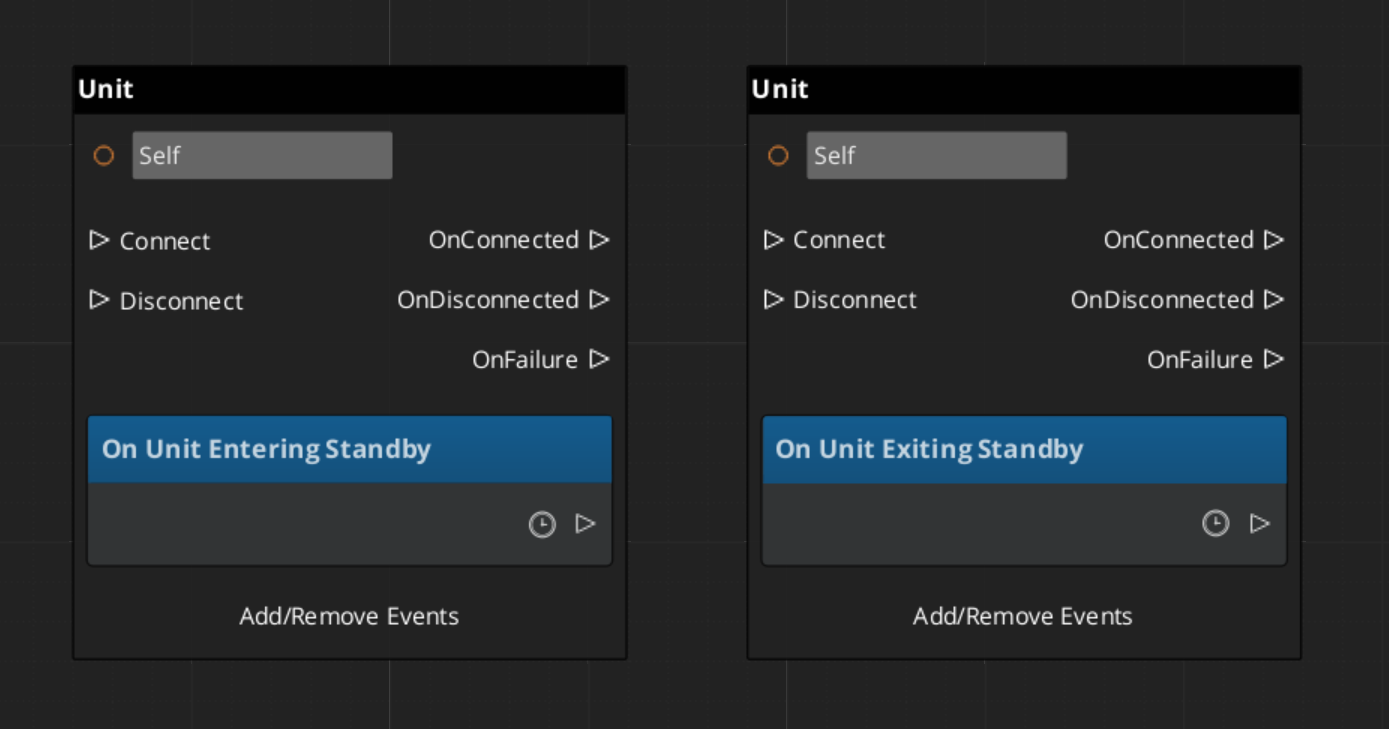

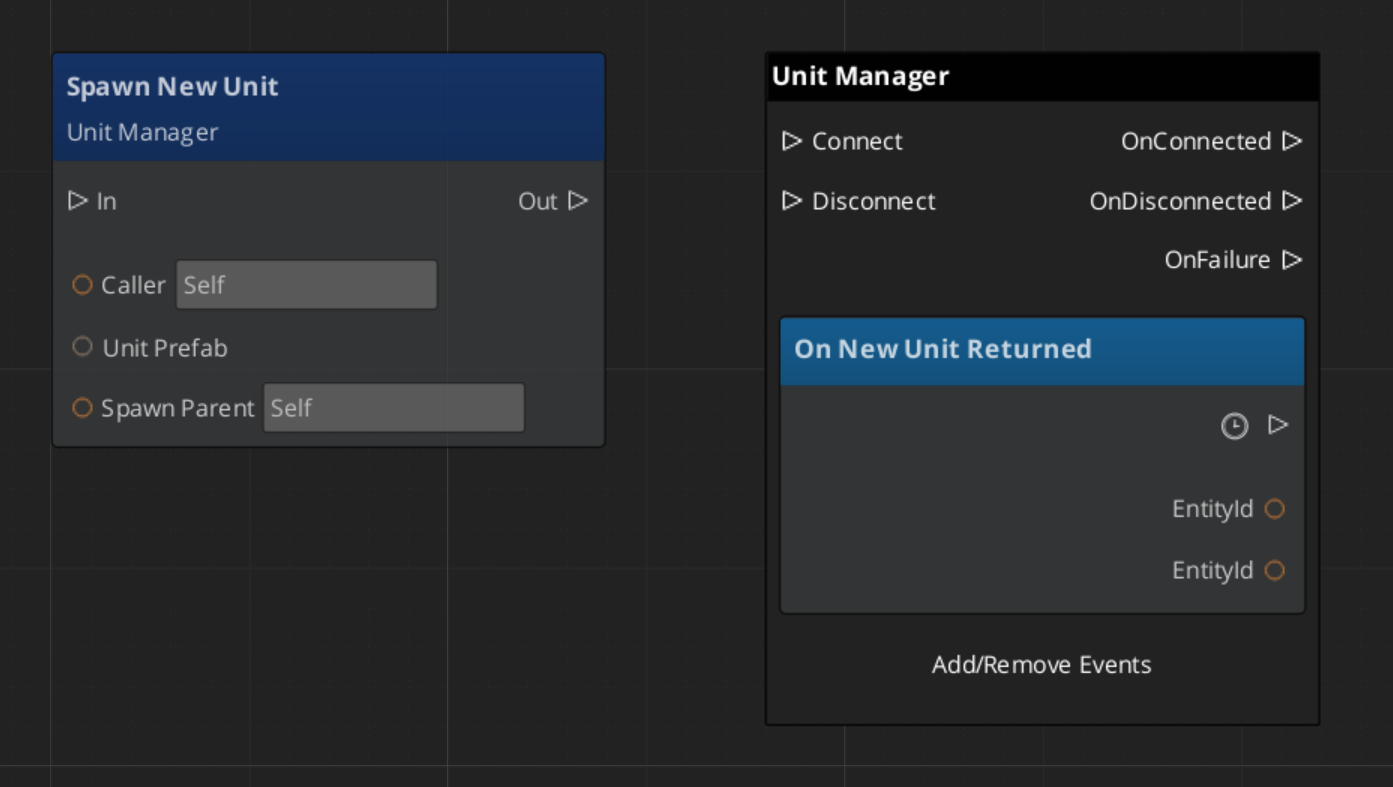

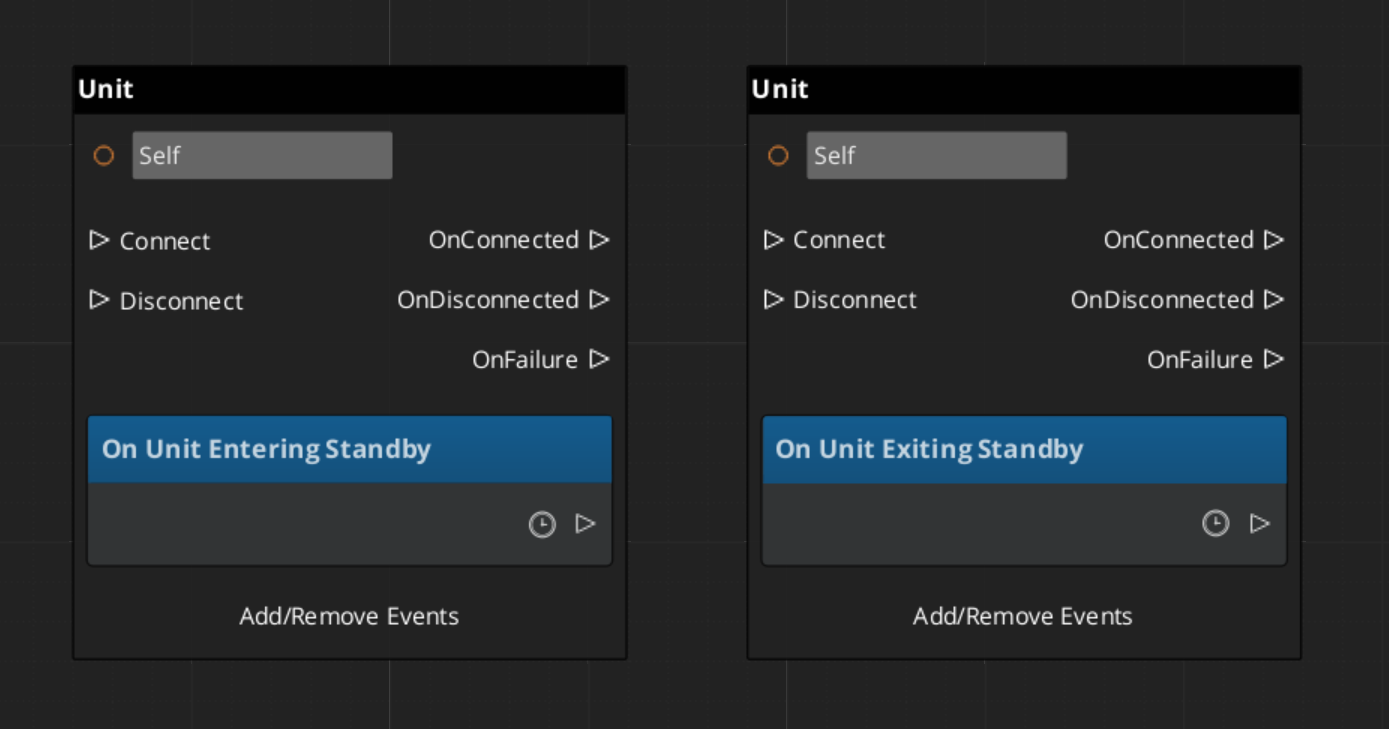

GS_Unit buses

| Bus | Dispatch | Key Methods |

|---|

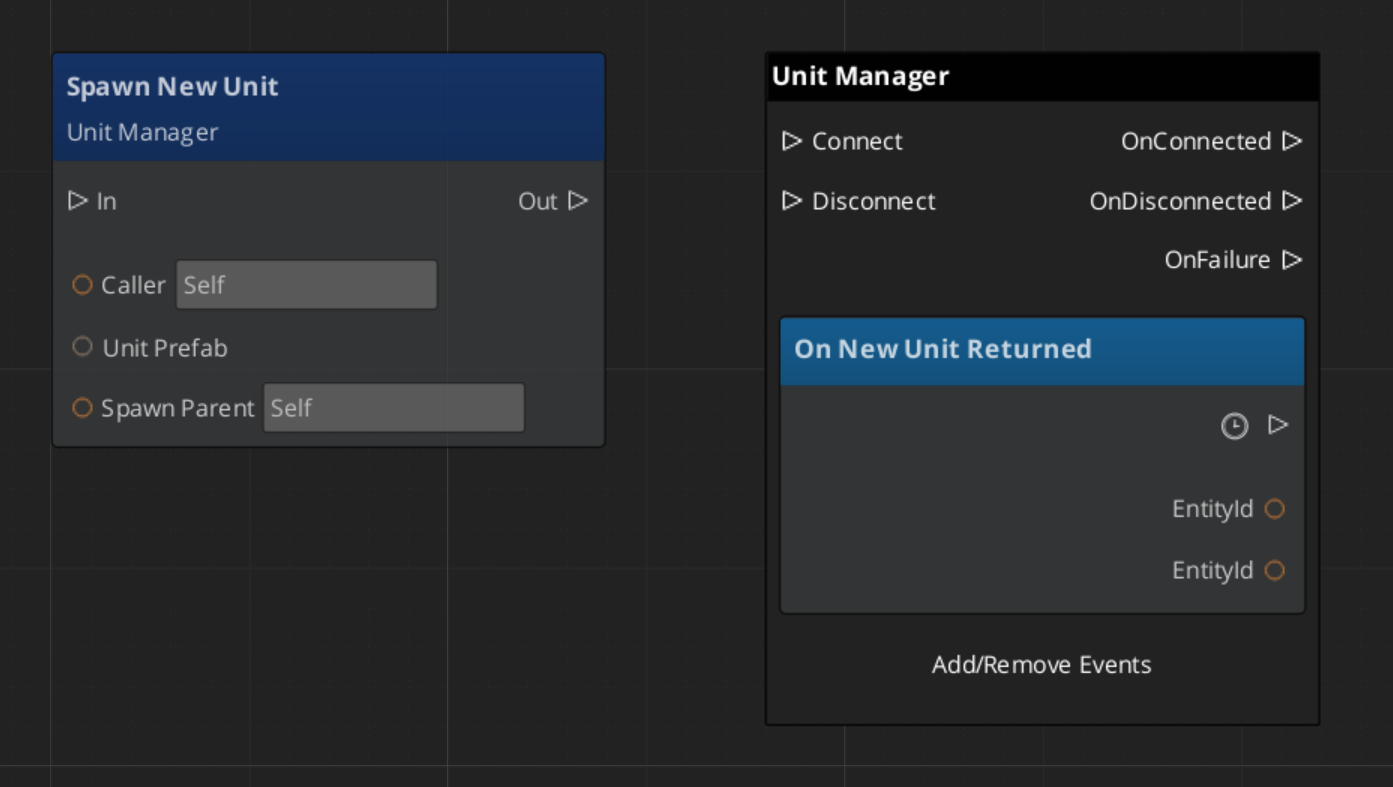

UnitManagerRequestBus | Broadcast | RequestSpawnNewUnit, RegisterPlayerController, CheckIsUnit |

UnitManagerNotificationBus | Listen | ReturnNewUnit, EnterStandby, ExitStandby |

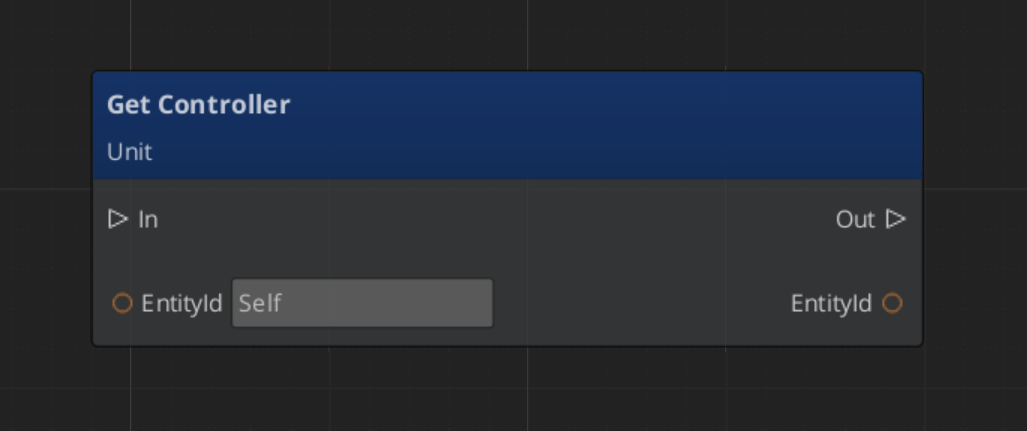

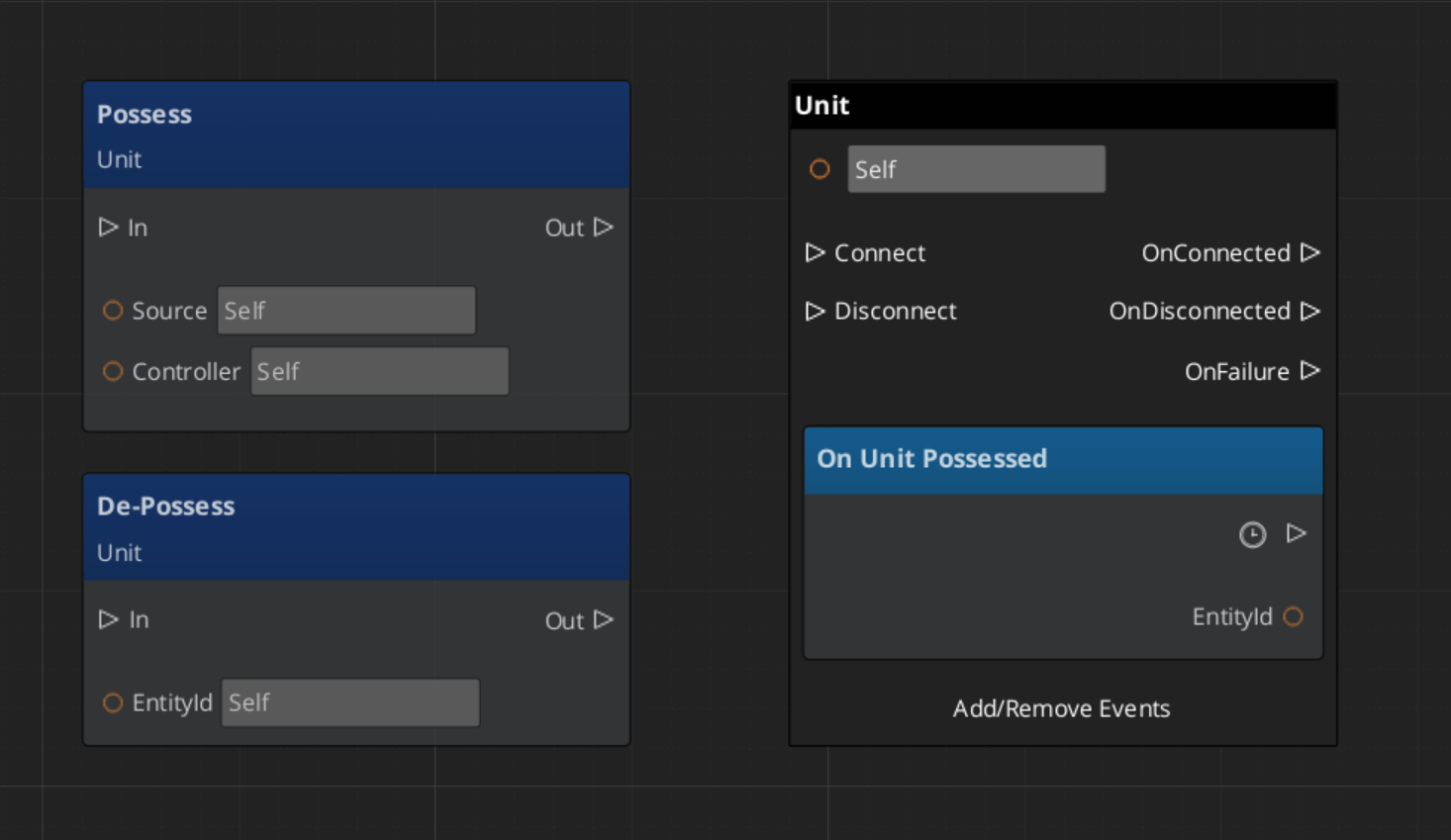

UnitRequestBus | ById | Possess, DePossess, GetController, GetUniqueName |

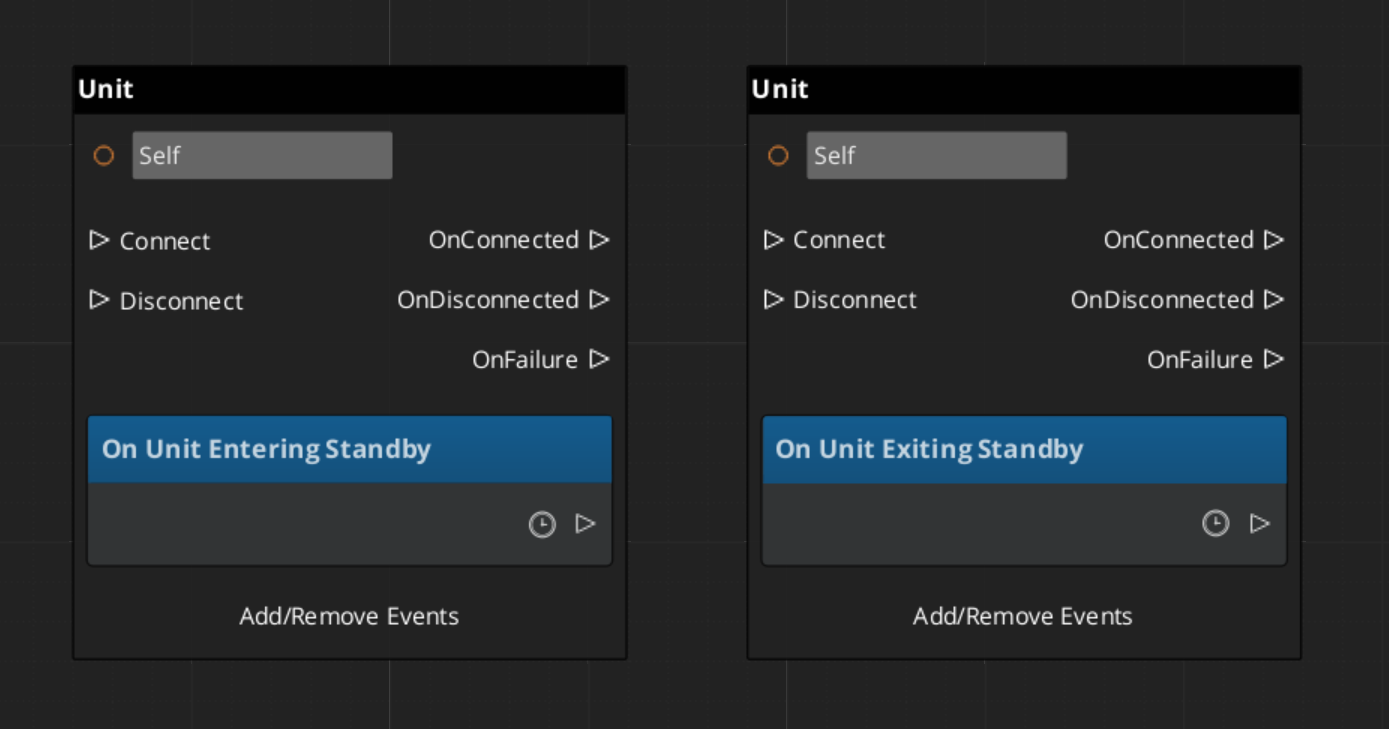

UnitNotificationBus | ById Listen | UnitPossessed, UnitEnteringStandby, UnitExitingStandby |

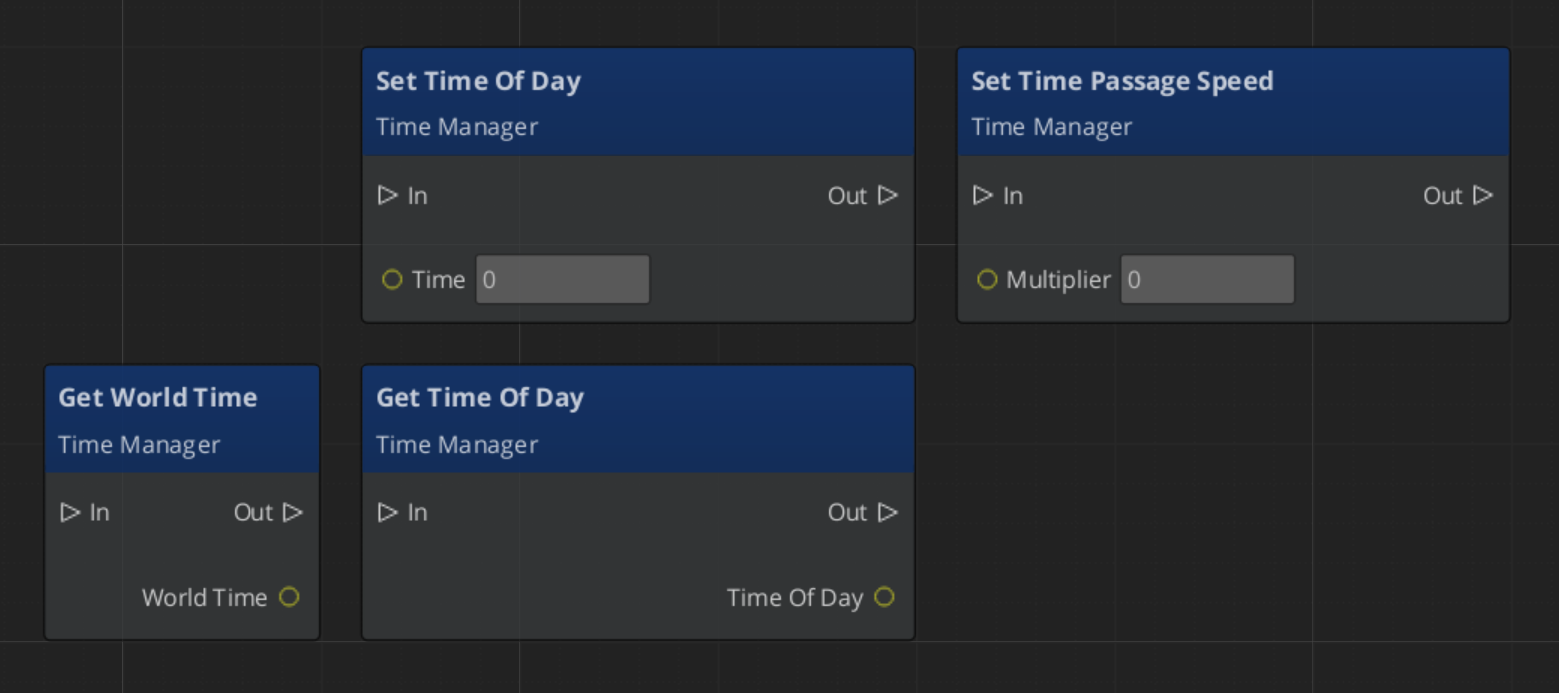

GS_Environment buses

| Bus | Dispatch | Key Methods |

|---|

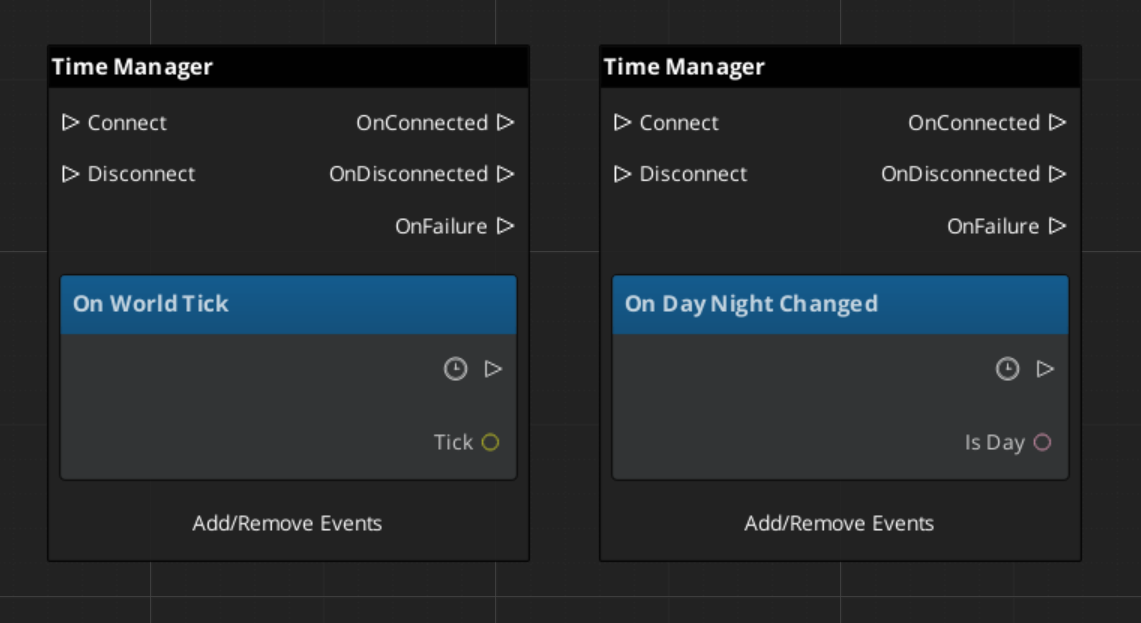

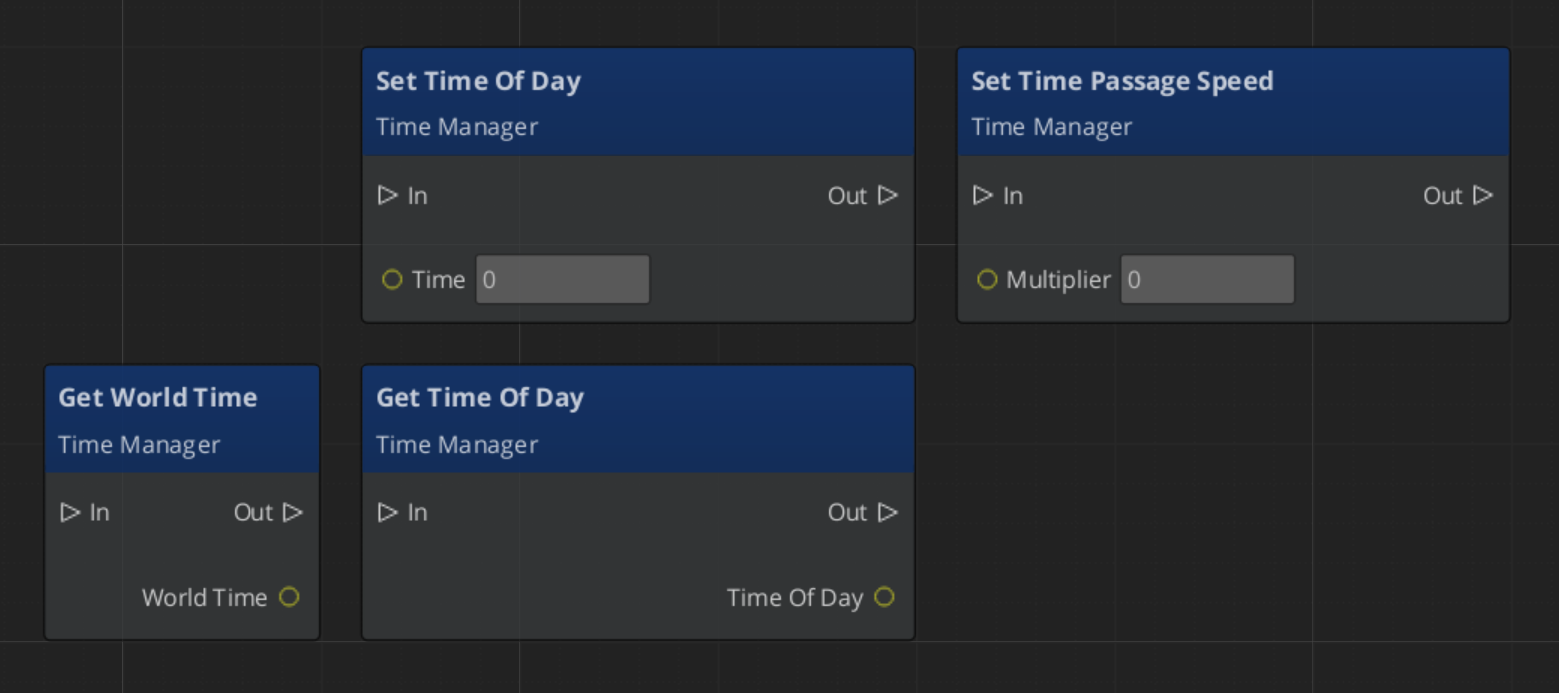

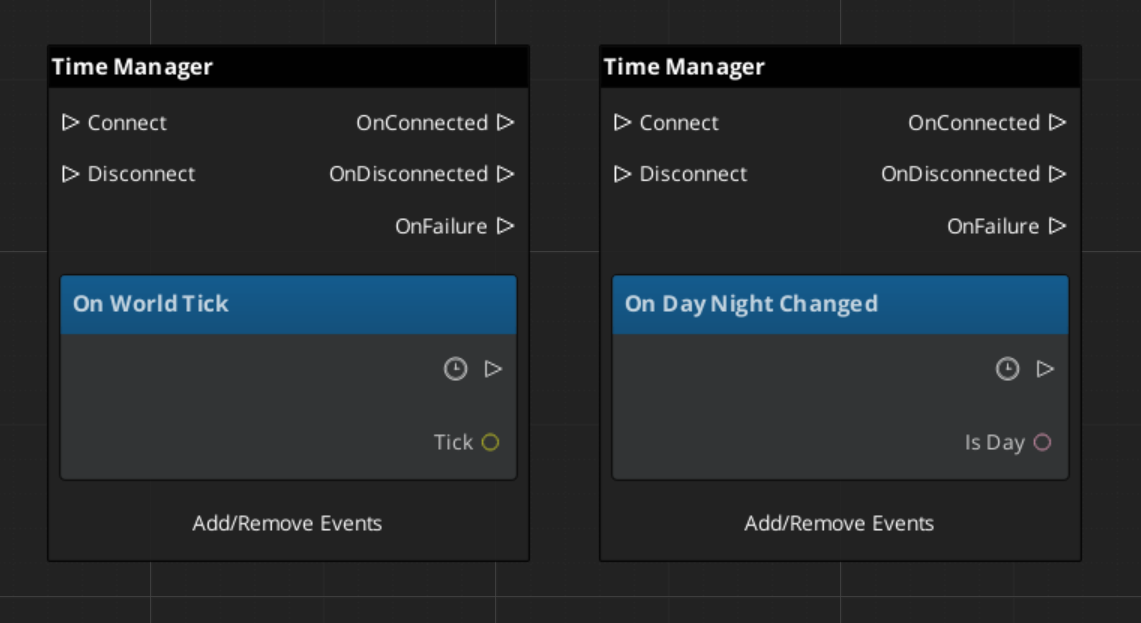

TimeManagerRequestBus | Broadcast | SetMainCam, Set/GetTimeOfDay, SetTimePassageSpeed, GetWorldTime, IsDay |

TimeManagerNotificationBus | Listen | WorldTick, DayNightChanged |

GS_Audio buses

| Bus | Dispatch | Key Methods |
|---|---|---|
| AudioManagerRequestBus | Broadcast | Audio engine control |
| KlattVoiceRequestBus | ById | Voice synthesis control per-entity |
| KlattVoiceSystemRequestBus | Broadcast | System-level voice engine |

HOT PATHS

Canonical patterns for the most common agent tasks. Follow exactly.

HOT PATH 1 — Create a component extending a GS_ base

(Incorrect - Templates satisfy much of this.)

- Identify target gem namespace and base class.

- Generate skeleton via O3DE Class Creation Wizard.

- Replace generated names. Add GS_ base class alongside AZ::Component in inheritance list.

- In Activate(): call BusConnect() for all buses this component handles.

- In Deactivate(): call BusDisconnect() for all buses.

- In Reflect(): SerializeContext first, EditContext nested inside it. EnableForAssetEditor in SerializeContext only, directly under ->Version().

[ANCHOR] For any specific gem’s base class API → read /docs/framework/{gem}/ before writing the override body.

HOT PATH 2 — Dispatch an EBus command

(Incorrect - BusInterfaceName)

    // Fire and forget, singleton bus
    GS_{Gem}::{BusName}::Broadcast(
        &GS_{Gem}::{BusName}::Events::{MethodName},
        arg1, arg2);

    // Entity-addressed bus
    GS_{Gem}::{BusName}::Event(
        targetEntityId, &GS_{Gem}::{BusName}::Events::{MethodName}, arg1);

    // With return value
    ReturnType result{};
    GS_{Gem}::{BusName}::BroadcastResult(
        result, &GS_{Gem}::{BusName}::Events::{MethodName});
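The "with return value" form can be easier to reason about with a toy model. The sketch below is not AZ::EBus: MiniRequestBus, GameRequests, FakeGameManager, and QueryIsStarted are hypothetical stand-ins written in standard C++ so the example compiles without the engine. It illustrates why the result variable is initialized before the call: in this model, and as far as the assumption holds for EBus result dispatch, a broadcast with no connected handler leaves the result untouched.

```cpp
#include <cassert>
#include <utility>

// Hypothetical request interface; stands in for a bus's Events type.
class GameRequests
{
public:
    virtual ~GameRequests() = default;
    virtual bool IsStarted() const = 0;
};

// Toy single-handler bus. NOT AZ::EBus: only the dispatch shape is mimicked.
template <typename Interface>
class MiniRequestBus
{
public:
    static void Connect(Interface* handler)
    {
        assert(handler != nullptr);
        Slot() = handler;
    }

    static void Disconnect(Interface* handler)
    {
        if (Slot() == handler)
        {
            Slot() = nullptr;
        }
    }

    // Calls the member function on the handler, if any, and stores the return value.
    // With no handler connected, `result` keeps whatever the caller initialized it to.
    template <typename Result, typename Method, typename... Args>
    static void BroadcastResult(Result& result, Method method, Args&&... args)
    {
        if (Interface* handler = Slot())
        {
            result = (handler->*method)(std::forward<Args>(args)...);
        }
    }

private:
    static Interface*& Slot()
    {
        static Interface* s_handler = nullptr;
        return s_handler;
    }
};

using GameRequestBus = MiniRequestBus<GameRequests>;

// Hypothetical handler that always reports a started game.
class FakeGameManager : public GameRequests
{
public:
    bool IsStarted() const override { return true; }
};

// Queries the bus; returns false when nothing answers.
inline bool QueryIsStarted()
{
    bool isStarted = false; // safe default if no handler is connected
    GameRequestBus::BroadcastResult(isStarted, &GameRequests::IsStarted);
    return isStarted;
}
```

Calling QueryIsStarted() before any handler connects returns the default, which is the reason the HOT PATH snippet value-initializes result rather than leaving it uninitialized.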

HOT PATH 3 — Listen to an EBus notification

- Inherit from {BusName}::Handler (protected inheritance).

- In Activate(): {BusName}::Handler::BusConnect();

- For entity-addressed buses: {BusName}::Handler::BusConnect(GetEntityId());

- In Deactivate(): {BusName}::Handler::BusDisconnect();

- Override the virtual notification methods.
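The connect/disconnect pairing above can be sketched without the engine. Everything in this example is a hypothetical stand-in in standard C++: MiniStageNotificationBus is a toy, not AZ::EBus, and StageNotifications borrows the LoadStageComplete name from the bus table with an assumed no-argument signature. It shows the listener receiving events only between Activate() and Deactivate().

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Hypothetical notification interface; stands in for a NotificationBus's Events type.
class StageNotifications
{
public:
    virtual ~StageNotifications() = default;
    virtual void LoadStageComplete() = 0; // assumed signature, for illustration only
};

// Toy multi-listener bus. NOT AZ::EBus: it only models connect, disconnect, and broadcast.
class MiniStageNotificationBus
{
public:
    static void Connect(StageNotifications* listener)
    {
        assert(listener != nullptr);
        Listeners().push_back(listener);
    }

    static void Disconnect(StageNotifications* listener)
    {
        auto& listeners = Listeners();
        listeners.erase(std::remove(listeners.begin(), listeners.end(), listener), listeners.end());
    }

    // Delivers the event to every connected listener; with none connected, nothing happens.
    static void BroadcastLoadStageComplete()
    {
        // Iterate over a copy so a listener may disconnect itself from inside its callback.
        const std::vector<StageNotifications*> snapshot = Listeners();
        for (StageNotifications* listener : snapshot)
        {
            listener->LoadStageComplete();
        }
    }

private:
    static std::vector<StageNotifications*>& Listeners()
    {
        static std::vector<StageNotifications*> s_listeners;
        return s_listeners;
    }
};

// Hypothetical component: protected inheritance from the listener interface,
// connect in Activate(), disconnect in Deactivate().
class StageCounterComponent : protected StageNotifications
{
public:
    void Activate() { MiniStageNotificationBus::Connect(this); }
    void Deactivate() { MiniStageNotificationBus::Disconnect(this); }
    int GetLoadCount() const { return m_loadCount; }

protected:
    void LoadStageComplete() override { ++m_loadCount; }

private:
    int m_loadCount = 0;
};
```

A broadcast sent before Activate() or after Deactivate() never reaches the component in this model, which mirrors the silent-drop behavior described in the ANTIPATTERN CATALOG.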

HOT PATH 4 — Create a new Manager Component

- Inherit from GS_Core::GS_ManagerComponent.

- Connect to GameManagerNotificationBus in Activate().

- Implement lifecycle hooks: OnSetupManagers, OnShutdownManagers, OnEnterStandby, OnExitStandby.

- Define {Name}RequestBus (Single/Single policy) for commands.

- Define {Name}NotificationBus (Multiple policy) for notifications.

- Register descriptor in your gem’s module constructor.

[ANCHOR] Manager lifecycle and initialization order → /docs/framework/core/gs_managers/manager/

HOT PATH 5 — Create a Generic Data Asset

- Inherit from AZ::Data::AssetData.

- Include GS_Core Utility: GS_AssetReflectionIncludes.h

- In Reflect(), SerializeContext block:

      sc->Class<MyAsset, AZ::Data::AssetData>()
          ->Version(1)
          ->Attribute(AZ::Edit::Attributes::EnableForAssetEditor, true)
          ->Field("Field", &MyAsset::m_field);

- Register asset type in a system component’s Reflect().

EnableForAssetEditor goes only in SerializeContext. Never in EditContext.

HOT PATH 6 — Polymorphic container of subtypes

    // CORRECT: all subtypes selectable in editor
    AZStd::vector<BaseClass*> m_items;

    // WRONG: disables all subtype options in editor
    AZStd::vector<AZStd::unique_ptr<BaseClass>> m_items;

Reflect with ->Field("Items", &MyClass::m_items). O3DE handles subtype selection automatically with raw pointers.
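Raw pointers shift lifetime management onto the class that holds the container. The following is a minimal sketch of that ownership pattern under stated assumptions: std::vector stands in for AZStd::vector, reflection is omitted, and PulseBase, DamagePulse, HealPulse, and PulseEmitter are hypothetical names. It assumes the owning class is responsible for deleting the elements; check the relevant base class documentation before relying on that in real GS_Play code.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical polymorphic base; a real one would also carry O3DE RTTI and reflection.
class PulseBase
{
public:
    virtual ~PulseBase() = default; // virtual: elements are deleted through base pointers
    virtual std::string GetTypeName() const = 0;
};

class DamagePulse : public PulseBase
{
public:
    std::string GetTypeName() const override { return "Damage"; }
};

class HealPulse : public PulseBase
{
public:
    std::string GetTypeName() const override { return "Heal"; }
};

// Hypothetical owner. std::vector stands in for AZStd::vector<BaseT*>.
class PulseEmitter
{
public:
    PulseEmitter() = default;

    // Copying would duplicate the raw pointers and cause double deletion, so forbid it.
    PulseEmitter(const PulseEmitter&) = delete;
    PulseEmitter& operator=(const PulseEmitter&) = delete;

    ~PulseEmitter()
    {
        for (PulseBase* pulse : m_pulses)
        {
            delete pulse;
        }
    }

    // Takes ownership of `pulse`.
    void AddPulse(PulseBase* pulse)
    {
        assert(pulse != nullptr);
        m_pulses.push_back(pulse);
    }

    std::size_t GetPulseCount() const { return m_pulses.size(); }

    std::string GetPulseTypeName(std::size_t index) const
    {
        return m_pulses.at(index)->GetTypeName();
    }

private:
    std::vector<PulseBase*> m_pulses; // raw pointers, as the invariant requires
};
```

The deleted copy operations are a design choice for the sketch: with raw owning pointers, an accidental copy of the owner is the most common route to a double free.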

HOT PATH 7 — Create a custom Trigger Sensor or World Trigger

Two distinct extension points. Choose the correct base:

Custom Trigger Sensor (condition side — detects when to fire):

- Inherit from GS_Interaction::TriggerSensorComponent.

- Override EvaluateSensor(): call Trigger() on all WorldTriggerRequestBus handlers on the same entity when your condition is met.

- Reflect with SerializeContext and EditContext.

- Register descriptor in your gem’s module.

Custom World Trigger (response side — what happens when fired):

- Inherit from GS_Interaction::WorldTriggerComponent.

- Override DoTrigger(): implement the response behavior.

- Override DoReset() if the trigger should re-arm.

- Reflect with SerializeContext and EditContext.

- Register descriptor in your gem’s module.

[ANCHOR] Full WorldTrigger architecture → /docs/framework/interaction/world_triggers/
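The split between the condition side and the response side can be sketched in plain C++. This is an illustration only, not the GS_Interaction API: WorldTriggerSketch, TriggerSensorSketch, OpenDoorTrigger, ScoreSensor, and AddTriggerOnSameEntity are hypothetical, the bus lookup is replaced by a plain pointer list, and the fire-once-until-Reset() gating is an assumption made to show what re-arming could mean.

```cpp
#include <cassert>
#include <vector>

// Hypothetical response-side base: Trigger()/Reset() forward to overridable hooks.
class WorldTriggerSketch
{
public:
    virtual ~WorldTriggerSketch() = default;

    // Assumed behavior: fires once, then ignores further calls until Reset().
    void Trigger()
    {
        if (!m_fired)
        {
            m_fired = true;
            DoTrigger();
        }
    }

    void Reset()
    {
        m_fired = false;
        DoReset();
    }

protected:
    virtual void DoTrigger() = 0;
    virtual void DoReset() {}

private:
    bool m_fired = false;
};

// Hypothetical custom response: counts how many times the door opened.
class OpenDoorTrigger : public WorldTriggerSketch
{
public:
    int GetOpenCount() const { return m_openCount; }

protected:
    void DoTrigger() override { ++m_openCount; }

private:
    int m_openCount = 0;
};

// Hypothetical condition-side base: derived sensors decide when to fire.
class TriggerSensorSketch
{
public:
    virtual ~TriggerSensorSketch() = default;

    // Stand-in for "all WorldTriggerRequestBus handlers on the same entity".
    void AddTriggerOnSameEntity(WorldTriggerSketch* trigger)
    {
        assert(trigger != nullptr);
        m_triggers.push_back(trigger);
    }

    virtual void EvaluateSensor() = 0;

protected:
    void FireTriggers()
    {
        for (WorldTriggerSketch* trigger : m_triggers)
        {
            trigger->Trigger();
        }
    }

private:
    std::vector<WorldTriggerSketch*> m_triggers; // non-owning
};

// Hypothetical custom sensor: fires once a score threshold is reached.
class ScoreSensor : public TriggerSensorSketch
{
public:
    explicit ScoreSensor(int threshold) : m_threshold(threshold) {}
    void SetScore(int score) { m_score = score; }

    void EvaluateSensor() override
    {
        if (m_score >= m_threshold)
        {
            FireTriggers();
        }
    }

private:
    int m_threshold;
    int m_score = 0;
};
```

Keeping the condition in the sensor and the effect in the trigger means either side can be swapped without touching the other, which is the reason the two extension points have separate base classes.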

Custom dialogue type (effect, condition, or performance):

- Inherit from the appropriate base: DialogueEffect, DialogueCondition, or DialoguePerformance.

- Reflect the class using SerializeContext and EditContext. The system discovers it automatically at startup; no manual registration step is needed.

GS_Complete demonstrates this pattern for cross-gem types (e.g., a dialogue effect controlling a PhantomCam). Use it as a reference, not a required location.

[ANCHOR] Dialogue type system → /docs/framework/cinematics/dialogue_system/

ANTIPATTERN CATALOG

[ANTIPATTERN] EnableForAssetEditor in EditContext. Silent failure — asset never appears in Asset Editor [New] menu. See INVARIANTS.

[ANTIPATTERN] unique_ptr in polymorphic AZStd containers. Disables all subtype selection in the editor. Use BaseT*. See INVARIANTS.

[ANTIPATTERN] Guessing EBus method names. O3DE EBus dispatches fail silently on wrong method names. Always verify against source headers or the EBUS DISPATCH REFERENCE in this document.

[ANTIPATTERN] Dispatching to entity-addressed buses before target entity is active. Buses are not connected before Activate() or after Deactivate(). Events sent before activation are silently dropped.

[ANTIPATTERN] Omitting AZ_COMPONENT_IMPL in the .cpp file. Component will not be reflectable. Causes subtle reflection failures that appear unrelated to the missing macro.

[ANTIPATTERN] Using "Editor" service in AppearsInAddComponentMenu. GS_Play components use AZ_CRC_CE("Game"). The "Editor" category makes components invisible in standard game entity contexts.

[ANTIPATTERN] Writing component boilerplate manually. Module registration steps are reliably missed. Always start from the O3DE Class Creation Wizard output.

CONFIDENCE THRESHOLDS

Proceed without reading source or docs:

- Standard O3DE component lifecycle: Init, Activate, Deactivate, BusConnect, BusDisconnect

- AZStd container usage (with the raw pointer rule applied for polymorphic types)

- CMake target aliases: Gem::{Name}.API, Gem::{Name}.Private.Object, Gem::{Name}.Clients

- SerializeContext → EditContext nesting order in Reflect()

- AZ_CRC_CE() for compile-time CRC values

- azrtti_cast<> for safe type casting in reflection code

Stop and read documentation or ask the user before proceeding:

- Any EBus method signature you are not 100% certain of

- Any virtual method signature on a GS_ base class

- Whether a specific GS_ gem is present in the target project

- Initialization order requirements for a new Manager component

- Any TypeId UUID value — never generate a UUID, ask the user or read the TypeIds header

CONTEXT ANCHORS

Conditions that require reading deeper documentation before generating code.

[ANCHOR] Before writing any unit movement or controller code:

→ Read /docs/framework/unit/ for mover types (3DFree, Strafe, Grid, SideScroller, Slide, Physics), grounder types, and controller hierarchy (Player vs AI).

→ [ASK] Which mover type does this movement context require?

[ANCHOR] Before configuring any virtual camera:

→ Read /docs/framework/phantomcam/ for camera types (PhantomCamera, StaticOrbit, Track, ClampedLook) and influence field setup.

[ANCHOR] Before wiring any dialogue sequence:

→ Read /docs/framework/cinematics/dialogue_system/ for the full chain: Sequencer → UIBridge → DialogueUI component.

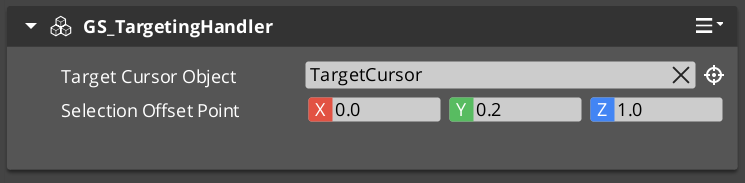

[ANCHOR] Before implementing targeting or interaction:

→ Read /docs/framework/interaction/targeting/ for the TargetingHandler, Target, TargetField setup order and registration timing.

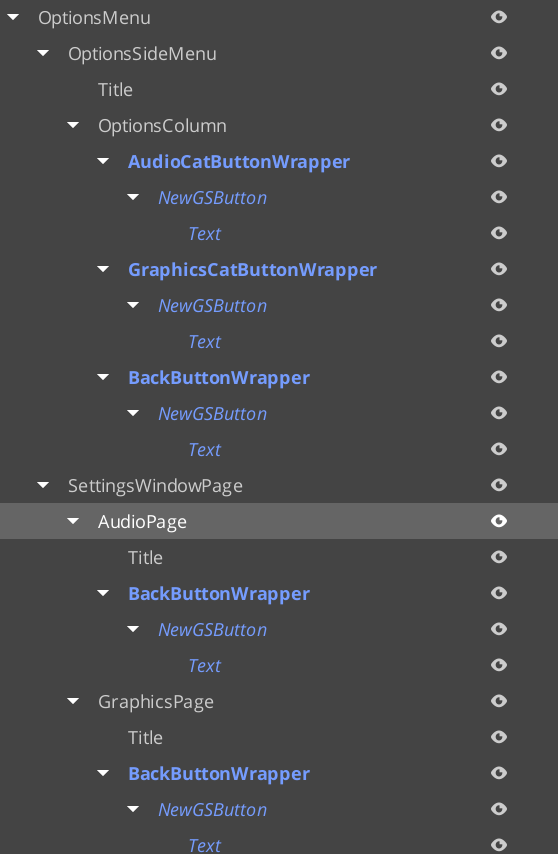

[ANCHOR] Before building any UI screen or menu:

→ Read /docs/framework/ui/ for the Manager → Page architecture, navigation return policies, companion component pattern, and UIManager registration sequence. The Hub/Window layer has been removed — do not use GS_UIHubComponent or GS_UIWindowComponent.

[ANCHOR] Before integrating save or persistence:

→ Read /docs/framework/core/gs_save/ for the SaveManager, RecordKeeper, and Saver component chain.

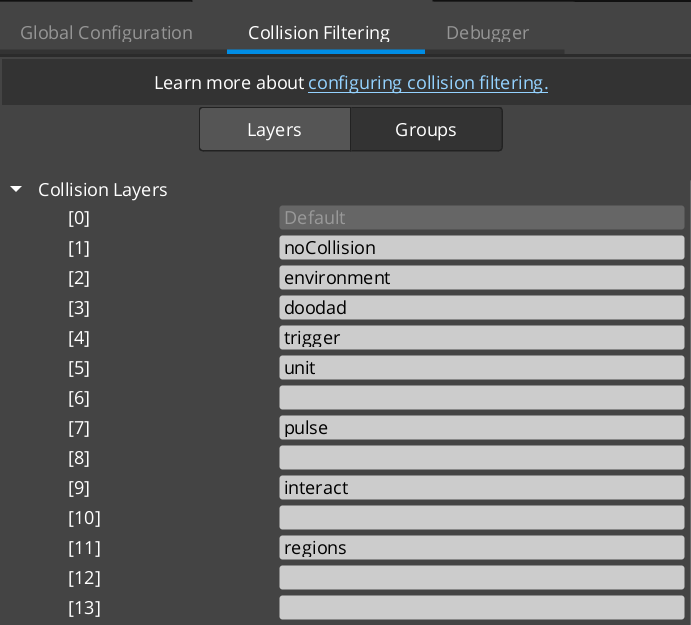

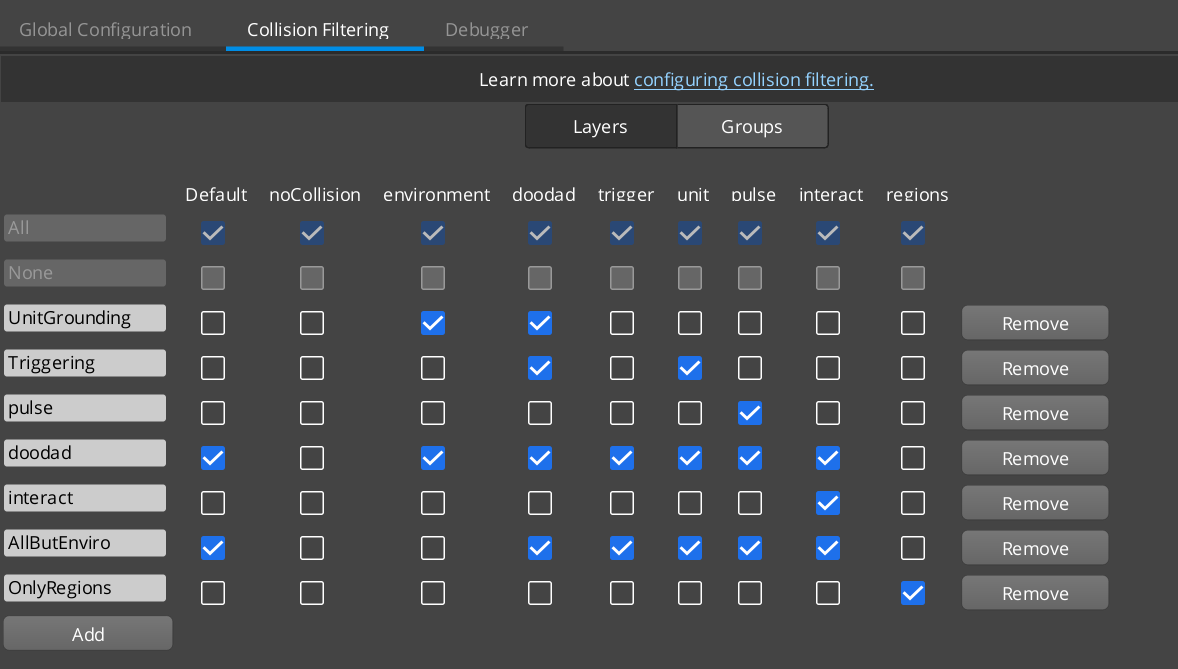

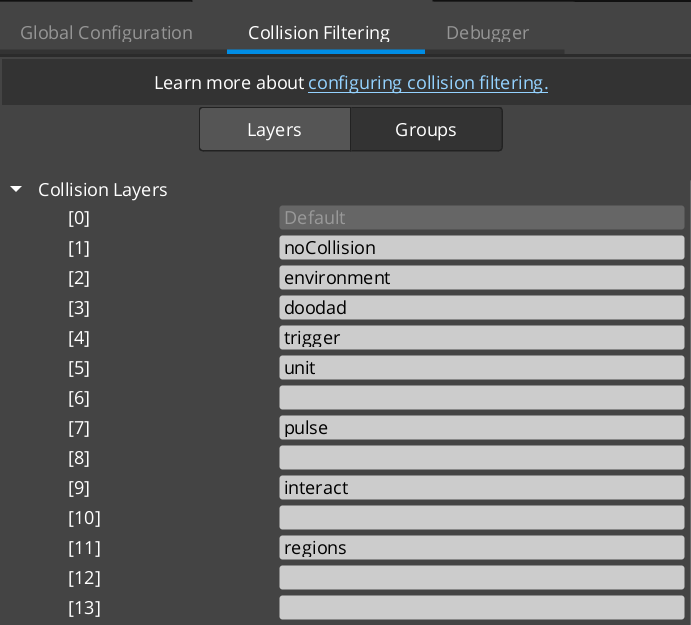

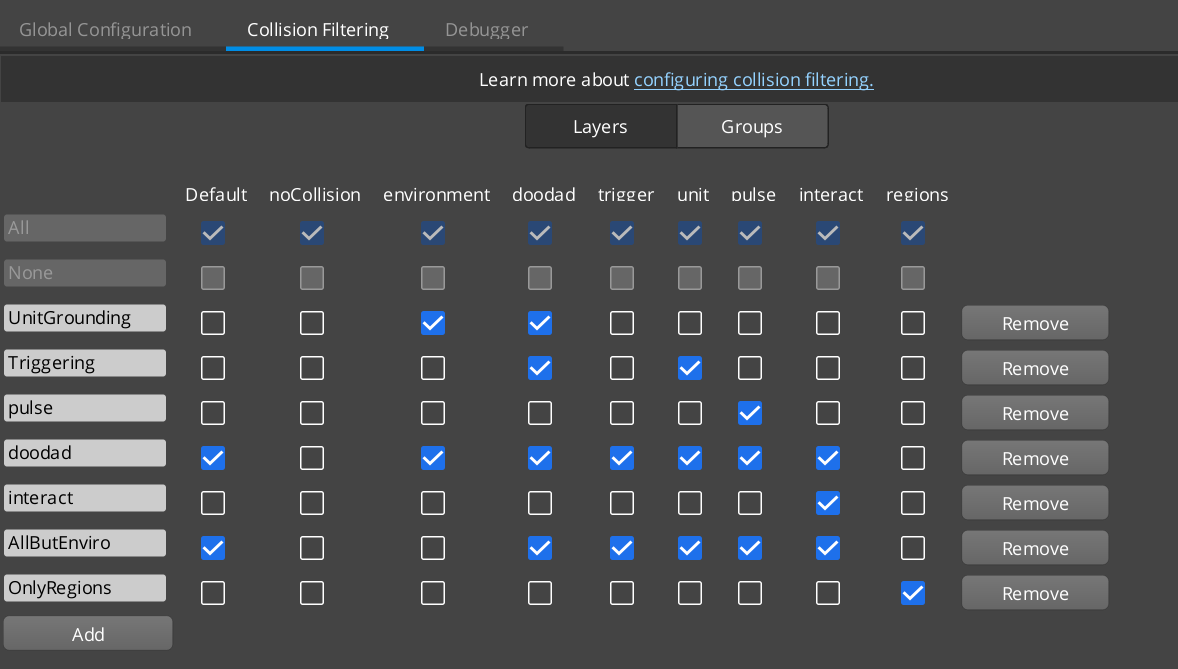

[ANCHOR] Before setting up project physics layers or collision groups:

→ Read /docs/get_started/configure_project/setup_environment/ for required engine-level physics configuration.

[ANCHOR] Before implementing behavior that combines two or more GS_ gems:

→ Review GS_Complete — it provides reference implementations of common cross-gem patterns. Reuse or adapt them rather than reimplementing from scratch.

CLARIFICATION TRIGGERS

[ASK] At the start of any GS_Play session, identify the user context:

Designer / Scripter — Working in the O3DE editor with components, Script Canvas, or Lua. No C++ generation needed.

- Guidance: component configuration, property setup, EBus Script Canvas nodes.

- Documentation: The Basics → /docs/the_basics/

- Gem-specific: /docs/the_basics/{gem}/ (e.g., /docs/the_basics/unit/ for unit questions)

Engineer — Writing C++ to extend the framework with new components, gems, or systems.

- Guidance: follows HOT PATHS and code patterns in this document.

- Documentation: Framework API → /docs/framework/

- Gem-specific: /docs/framework/{gem}/ (e.g., /docs/framework/unit/ for unit questions)

Both sections cover identical gem featuresets. The Basics covers component usage and scripting; Framework API covers C++ interfaces, EBus signatures, and base class architecture. Available gems in both: core, audio, cinematics, environment, interaction, juice, performer, phantomcam, ui, unit.

When answering any gem-specific question, include a link to the appropriate documentation section alongside your response. If the context is not stated, ask before generating any code or providing documentation links.

[ASK] Before creating any new component: Confirm which gem’s namespace and base class this component should use.

[ASK] Before generating any TypeId UUID: Always request the UUID from the user or confirm they want one generated. Never silently generate a UUID.

[ASK] If the task references a system with no memory or documentation coverage: Ask before inferring behavior. GS_Play base class APIs are not reliably guessable from generic O3DE patterns.

[ASK] If a Manager initialization order is being modified: The sequence of OnSetupManagers calls is load-order dependent. Confirm the intended position in the sequence before writing.

[ASK] If asked to implement a new Dialogue type: Extend the appropriate base class (DialogueEffect, DialogueCondition, or DialoguePerformance) and reflect it. Confirm which gem or module owns the new class before writing.

UPCOMING: AGENTIC SITE FEATURES (Post v1)

The GS_Play documentation site will implement formal agent-friendly features after the v1 documentation release. These are not yet live.

Planned additions follow the emerging specifications at:

What this means for agents using this document now:

Until those features are live, this bootstrap document (/docs/get_started/agentic_guidelines/) is the primary machine-readable entry point. Treat it as authoritative. Do not rely on inferred site structure or navigated page discovery — navigate the docs via explicit paths from the GEM INDEX and CONTEXT ANCHORS sections above.

When the agent-friendly site features are released, this section will be updated with the new entry points and structured access patterns.

2 - Index

Fast references to speed up docs navigation — feature lists, patterns, glossary, templates, changelogs, and utilities.

Quick-access references for the GS_Play documentation. Use this section when you know what you are looking for and need a fast lookup — a term definition, the right template name, a feature comparison, or the changelog for a specific gem.

How This Section Is Organized

Change Log — Version history for GS_Play gems and the documentation itself.

Feature List — A complete index of every GS_Play feature, with links to both its Basics usage guide and Framework API reference.

Patterns — Diagrams and explanations of the core structural patterns used across GS_Play feature sets. A useful orientation before diving into a new gem.

Templates List — All ClassWizard extension templates for generating new conditions, effects, performances, trigger types, and other polymorphic extension classes across GS_Play gems.

Powerful Utilities — Cross-cutting utilities available across multiple feature gems.

Glossary — Definitions for GS_Play-specific terminology and commonly used O3DE concepts in the framework context.

Links — External links to community resources, the asset store, and Genome Studios.

Sections

2.1 - Change Logs

Version changelogs for all GS_Play gems and documentation.

Logs

GS_Audio

Latest version: 0.5.0

Summary: First official base release. Audio manager, event playback, mixing buses, multi-layer score arrangement, and Klatt voice synthesis.

GS_Audio Logs

GS_Cinematics

Latest version: 0.5.0

Summary: First official base release. Full dialogue system with node graph sequencer, polymorphic conditions/effects/performances, world and screen-space UI, typewriter, babble, localization, and the in-engine dialogue editor.

GS_Cinematics Logs

GS_Core

Latest version: 0.5.0

Summary: First official base release. Ready for continued development and support. On the way to 1.0!

GS_Core Logs

GS_Environment

Latest version: 0.5.0

Summary: First official base release. Time of day management, time passage speed, day/night cycle notifications, and sky color configuration assets.

GS_Environment Logs

GS_Interaction

Latest version: 0.5.0

Summary: First official base release. Physics pulse emitters and reactors, proximity targeting and cursor, and composable world trigger sensors.

GS_Interaction Logs

GS_Juice

Latest version: 0.5.0

Summary: First official base release (Early Development). Feedback motion system with transform and material tracks for screen shake, bounce, flash, and glow effects.

GS_Juice Logs

GS_Performer

Latest version: 0.5.0

Summary: First official base release (Early Development). Performer manager, skin slot swapping, paper billboard rendering, velocity locomotion, head tracking, and babble.

GS_Performer Logs

GS_PhantomCam

Latest version: 0.5.0

Summary: First official base release. Priority-based camera system with follow/look-at behaviors, blend profiles, and spatial influence fields.

GS_PhantomCam Logs

GS_UI

Latest version: 0.5.0

Summary: First official base release. Single-tier page navigation, GS_Motion UI animation, enhanced buttons, input interception, load screen, and pause menu.

GS_UI Logs

GS_Unit

Latest version: 0.5.0

Summary: First official base release. Mode-driven unit movement system with player/AI controllers, 3-stage input pipeline, multiple mover types, grounders, and movement influence fields.

GS_Unit Logs

Documentation

Latest version: 1.0.0

Summary: First official base release. Ready to be developed, updated, and refined over the continued development of GS_Play!

Docs Logs

2.1.1 - Audio Change Log

GS_Audio version changelog.

Logs

Audio 0.5.0

First official base release of GS_Audio.

Audio Manager

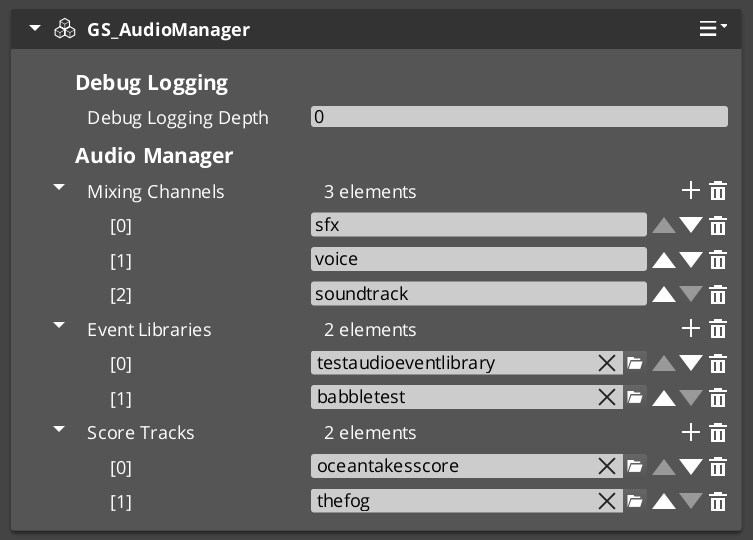

- GS_AudioManagerComponent — audio engine lifecycle management, event library loading

- AudioManagerRequestBus / AudioManagerNotificationBus

- Master volume control and engine-level audio settings

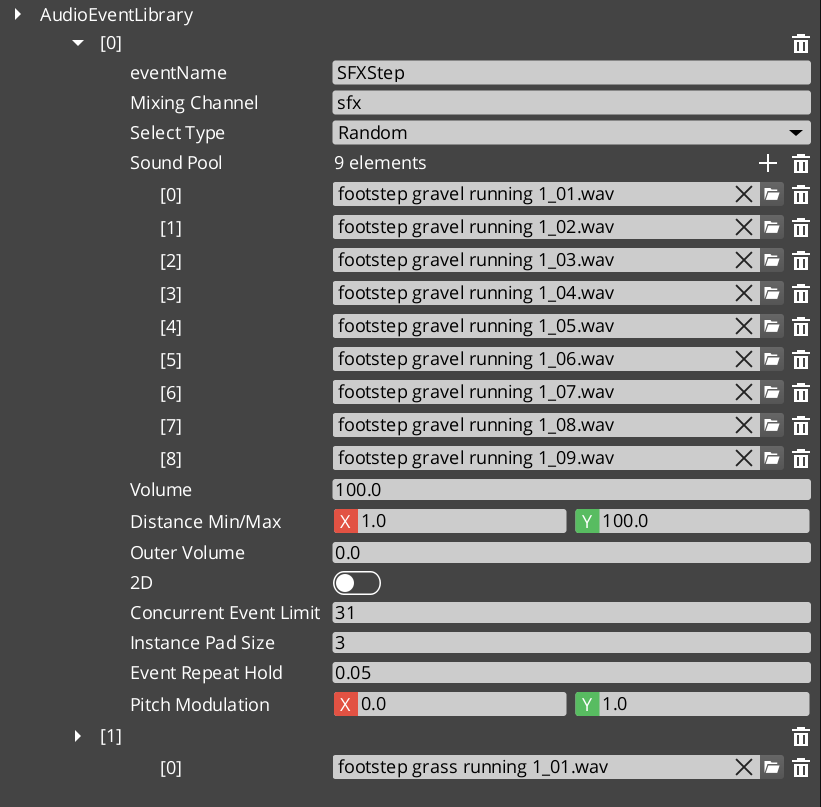

Audio Events

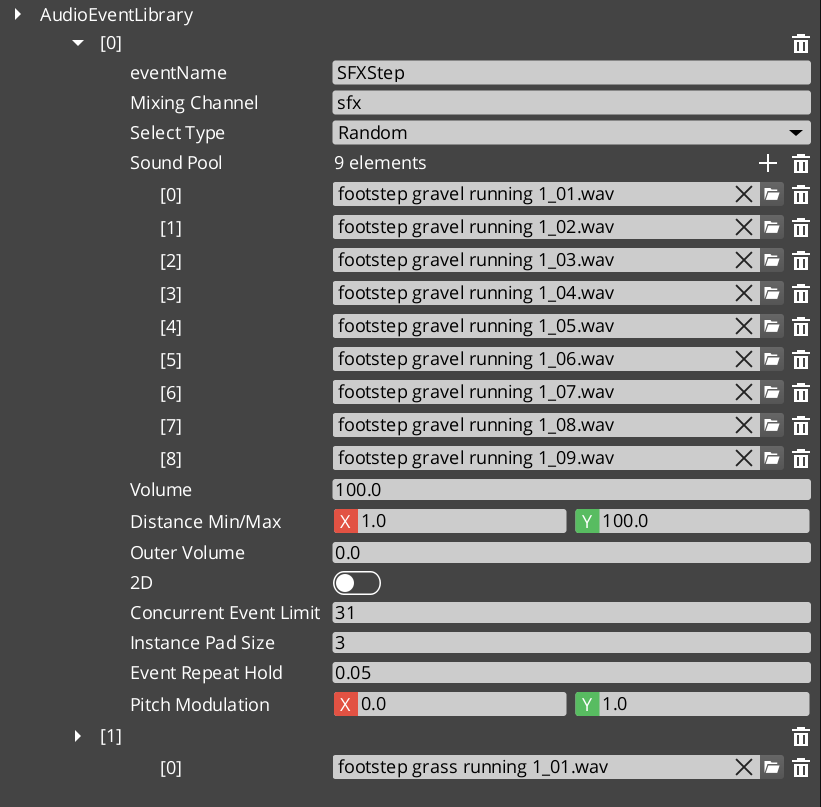

- AudioEventLibrary asset — pools of named audio events

- GS_AudioEvent — pool selection, concurrent instance limiting, and 3D spatialization

- AudioManagerRequestBus playback interface

Mixing & Effects

- GS_MixingBus — mixing bus component with configurable effects chain

- Filters, EQ, and environmental influence effects per bus

Score Arrangement

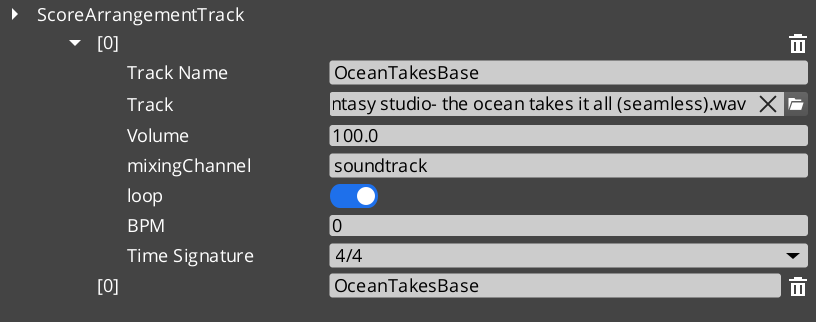

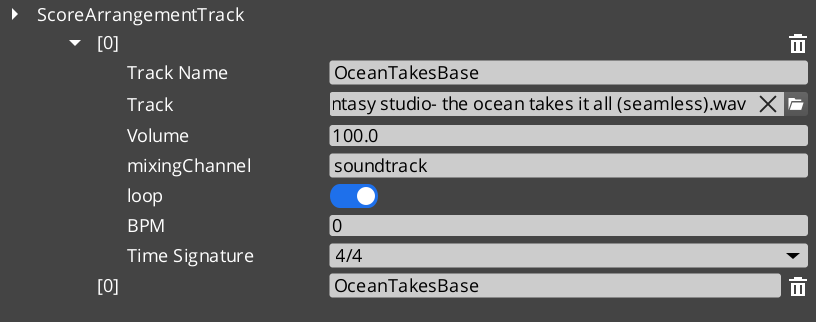

- ScoreArrangementTrack — multi-layer music system

- ScoreLayer — individual music layers toggled by gameplay state

- TimeSignatures enum for bar-aligned transitions

Klatt Voice Synthesis

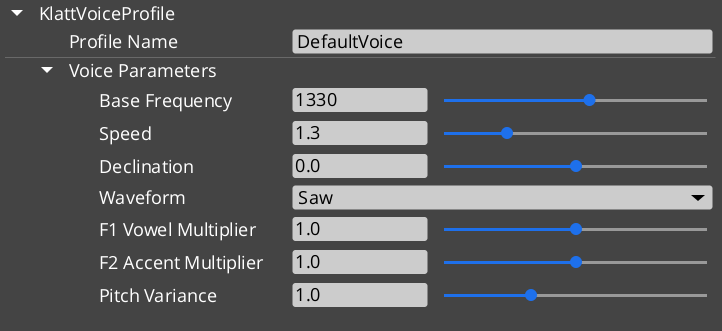

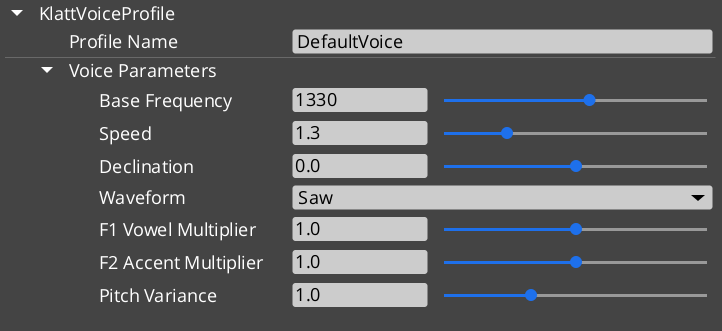

- KlattVoiceSystemComponent — shared SoLoud engine, 3D listener management

- KlattVoiceComponent — per-entity text-to-speech with phoneme mapping and segment queue

- KlattVoiceRequestBus / KlattVoiceNotificationBus / KlattVoiceSystemRequestBus

- Full 3D spatial voice audio with configurable voice parameters

2.1.2 - Cinematics Change Log

GS_Cinematics version changelog.

Logs

Cinematics 0.5.0

First official base release of GS_Cinematics.

Cinematics Manager

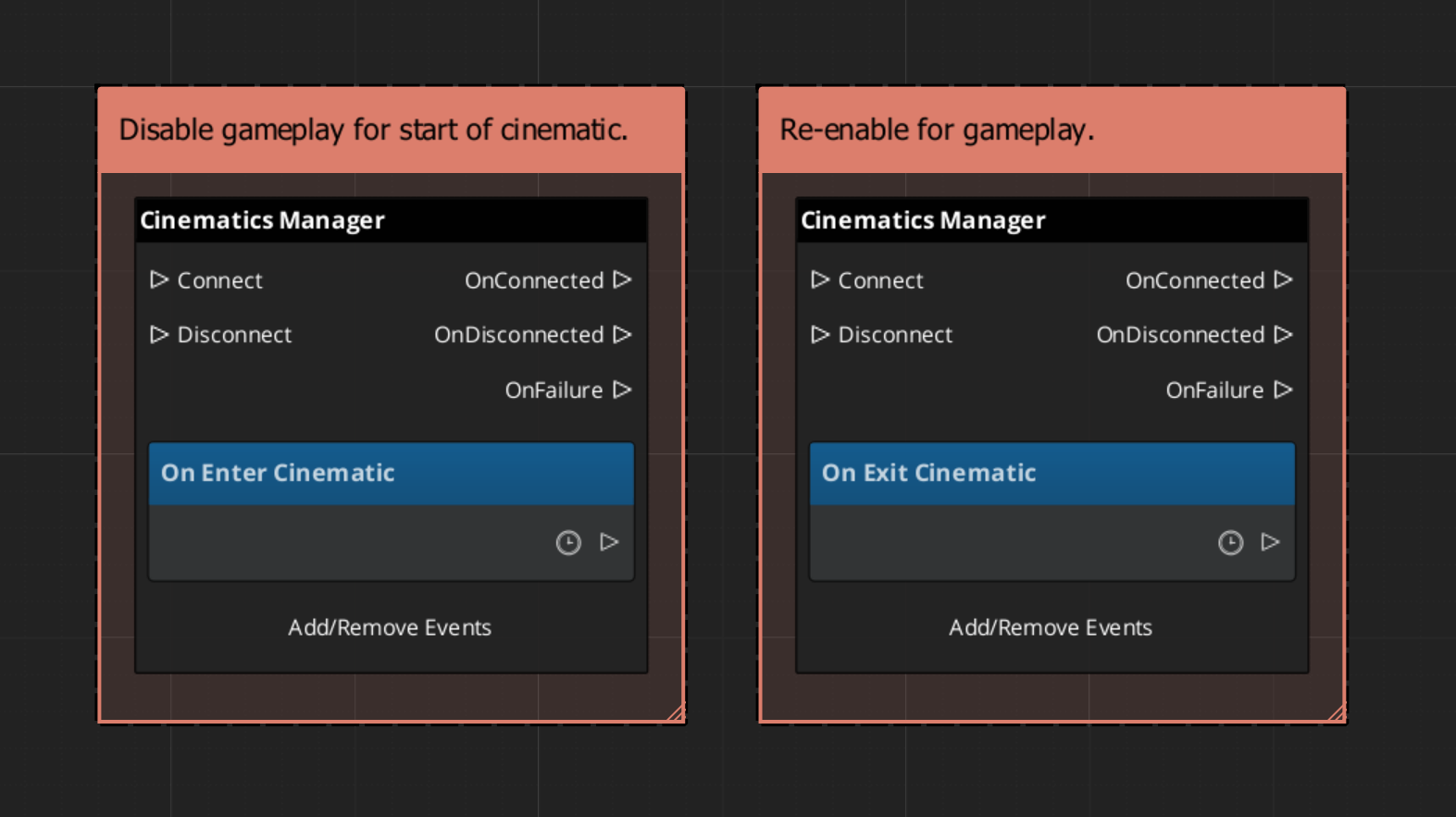

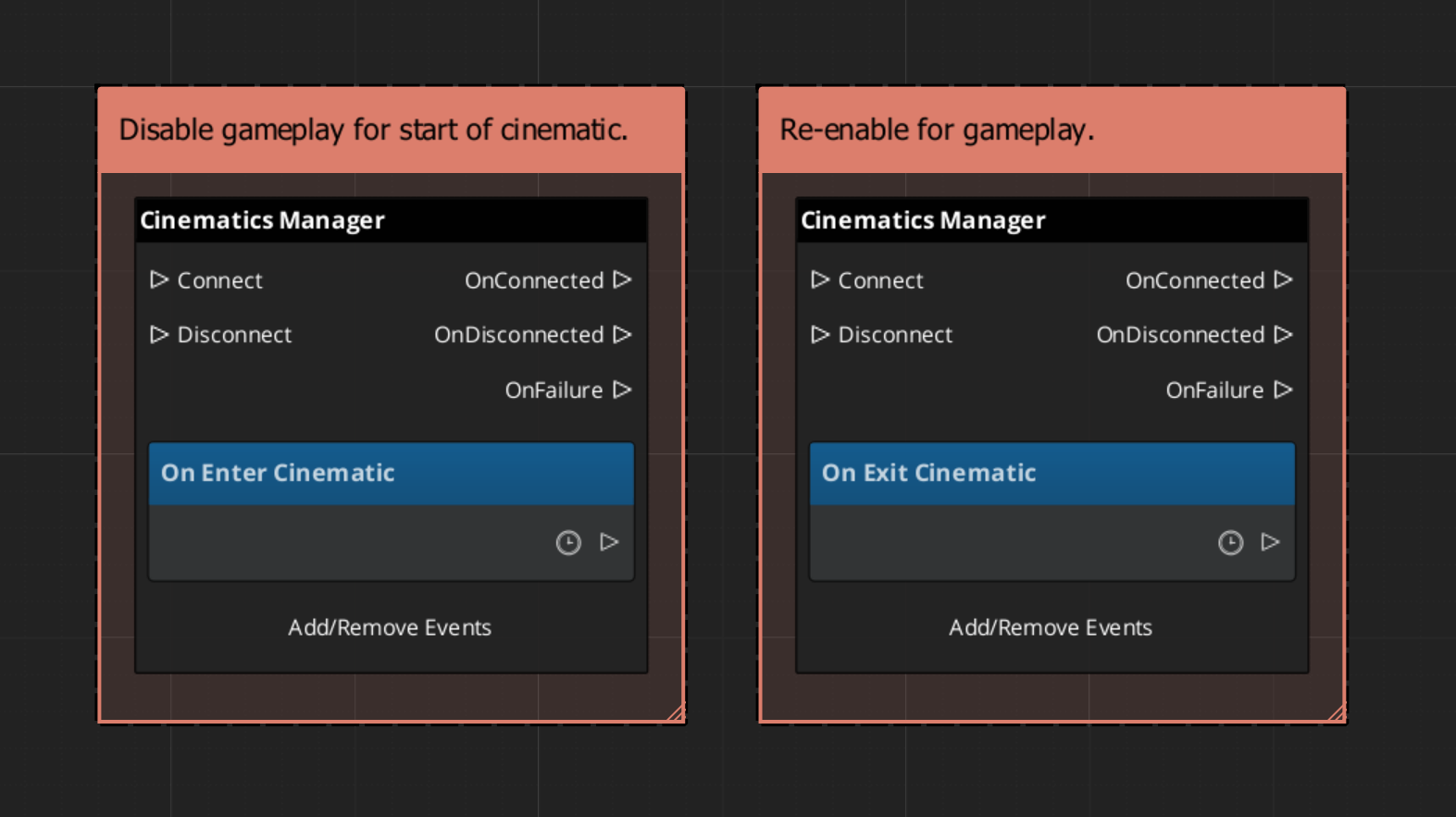

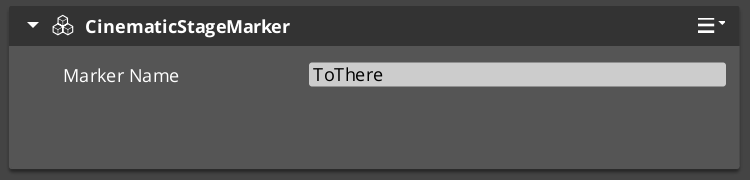

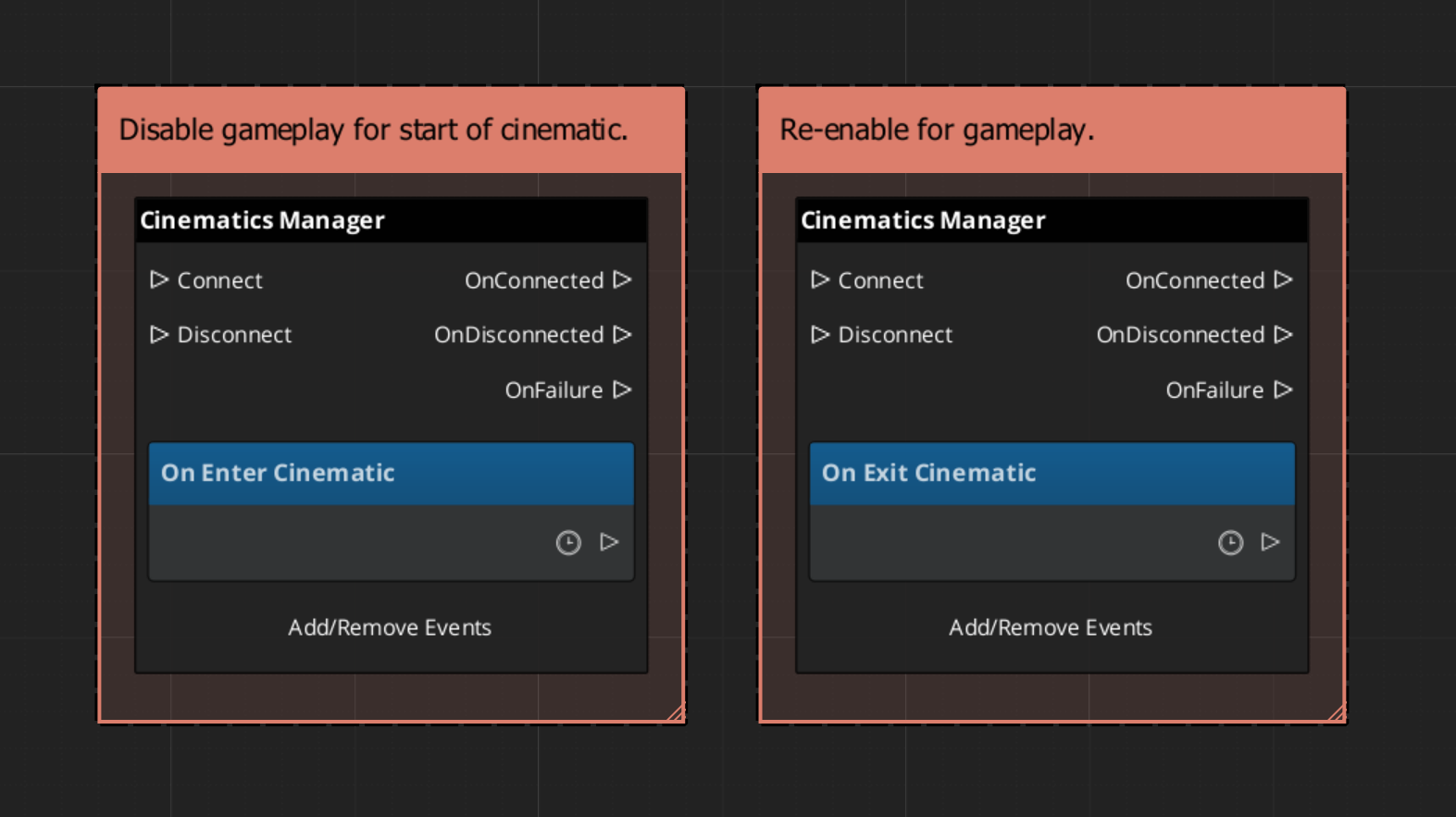

- GS_CinematicsManagerComponent — cinematic mode lifecycle (BeginCinematic / EndCinematic)

- CinematicsManagerRequestBus / CinematicsManagerNotificationBus (EnterCinematic / ExitCinematic)

- CinematicStageMarkerComponent — named world-space anchor, self-registers on activate

Dialogue Manager

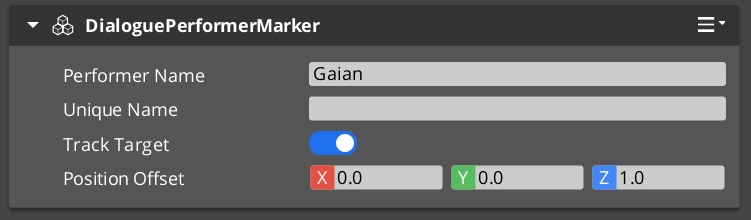

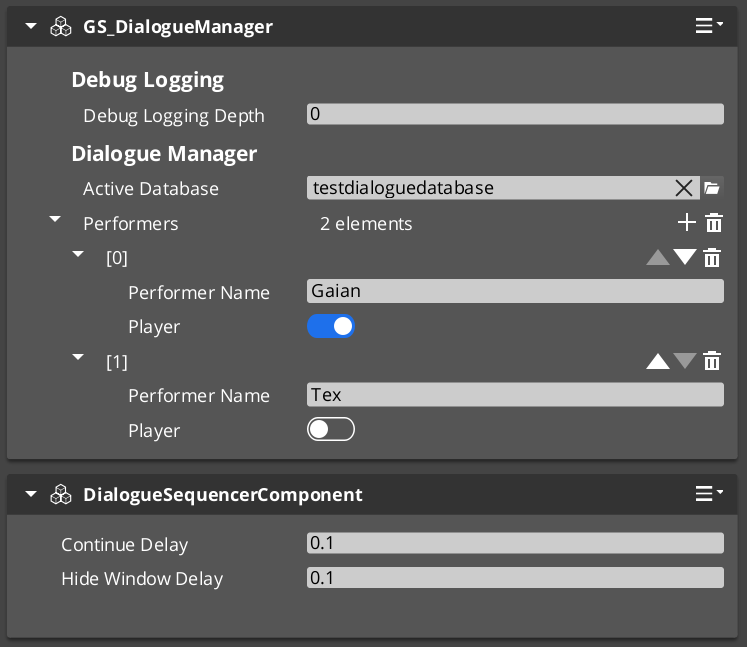

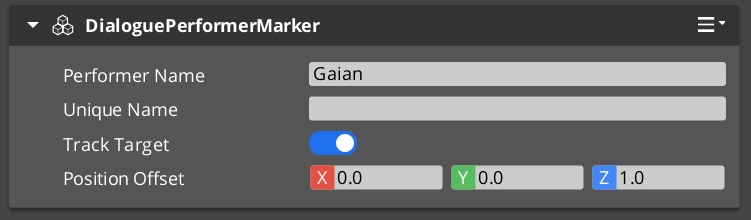

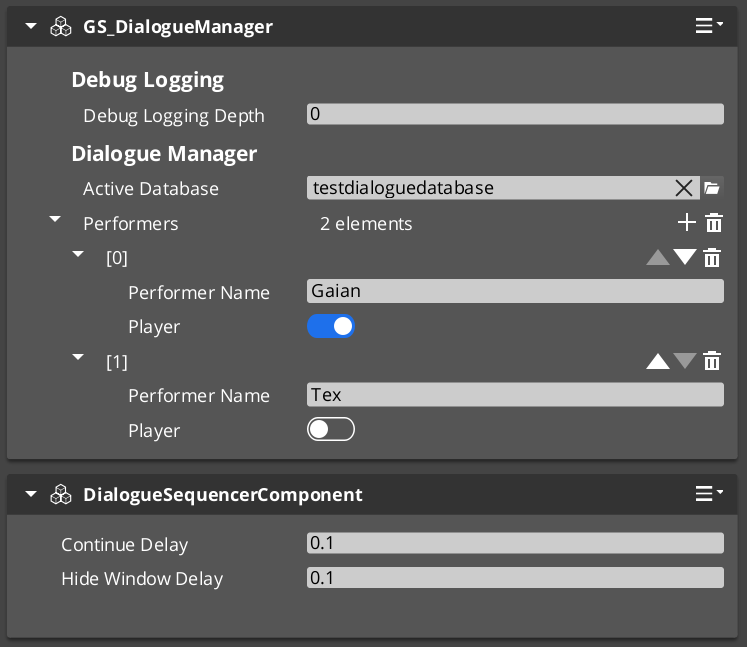

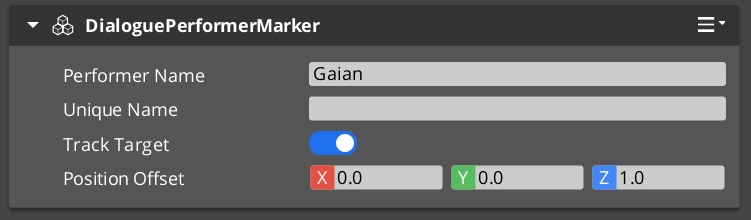

- GS_DialogueManagerComponent — active .dialoguedb asset ownership and performer registry

- DialogueManagerRequestBus — StartDialogueSequenceByName, ChangeDialogueDatabase, RegisterPerformerMarker, GetPerformer

- DialoguePerformerMarkerComponent — named NPC world anchor, PerformerMarkerRequestBus

Dialogue Sequencer

- DialogueSequencerComponent — node graph traversal, runtime token management

- DialogueUIBridgeComponent — routes active dialogue to whichever UI is registered, decouples sequencer from presentation

- DialogueSequencerNotificationBus — OnDialogueTextBegin, OnDialogueSequenceComplete

Data Structure

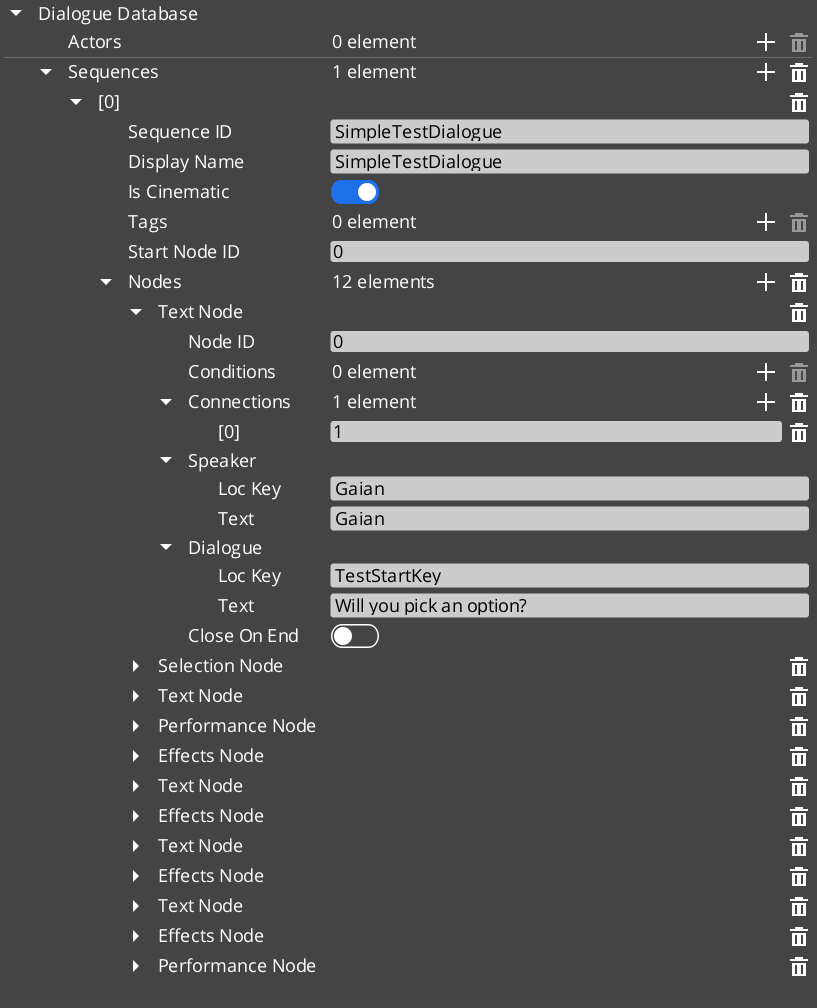

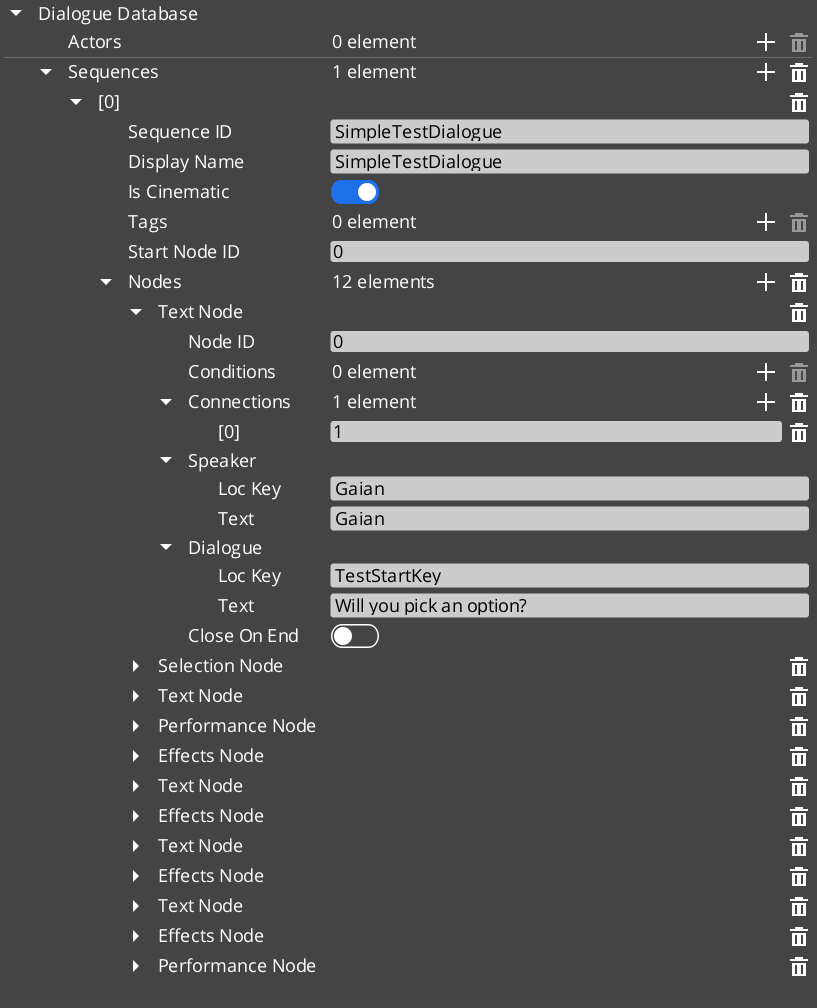

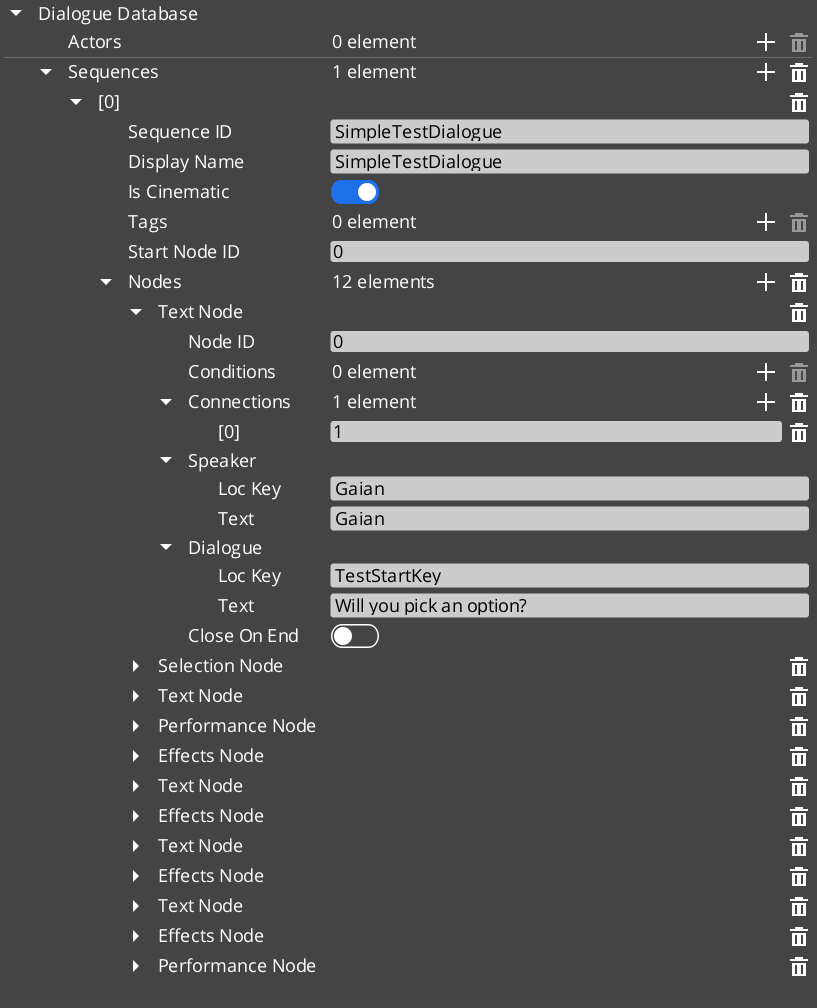

- DialogueDatabase (.dialoguedb) — named actors and sequences asset

- DialogueSequence — directed node graph with startNodeId

- ActorDefinition — actor name, portrait, and metadata

- Node types: TextNodeData, SelectionNodeData, RandomNodeData, EffectsNodeData, PerformanceNodeData

- SelectionOption — per-choice text, conditions, and connections

Conditions

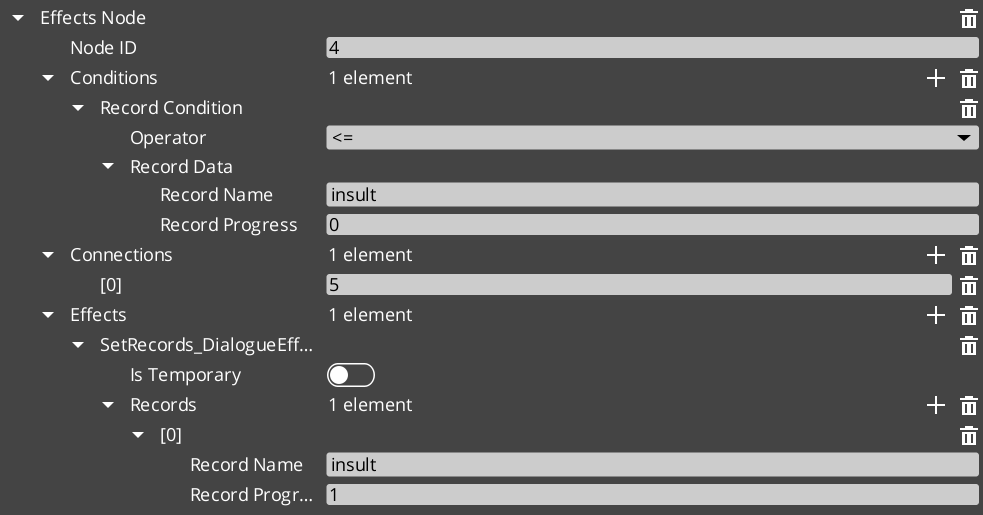

- DialogueCondition (abstract base) — EvaluateCondition()

- Boolean_DialogueCondition — base boolean comparison

- Record_DialogueCondition — checks GS_Save records with comparison operators

- Polymorphic discovery: extend base class, no manual registration

Effects

- DialogueEffect (abstract base) — DoEffect() / ReverseEffect()

- SetRecords_DialogueEffect — sets GS_Save records during dialogue

- ToggleEntitiesActive_DialogueEffect — activates or deactivates entities in the level

- Polymorphic discovery: extend base class, no manual registration

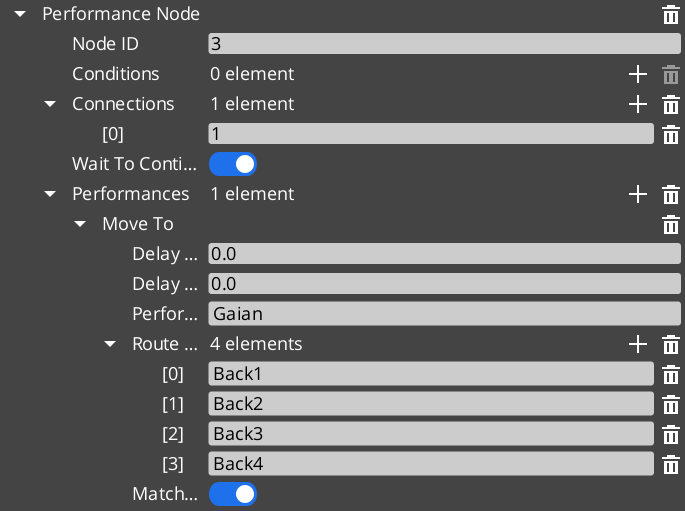

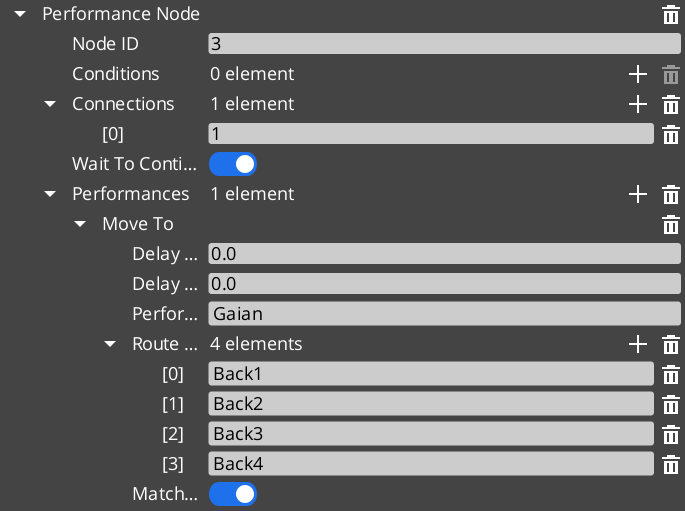

Performances

- DialoguePerformance (abstract base, AZ::TickBus::Handler) — DoPerformance() / ExecutePerformance() / FinishPerformance()

- MoveTo_DialoguePerformance — interpolated movement to stage marker

- PathTo_DialoguePerformance — navmesh path navigation to stage marker (requires RecastNavigation)

- RepositionPerformer_DialoguePerformance — instant teleport to stage marker

- Polymorphic discovery: extend base class, no manual registration

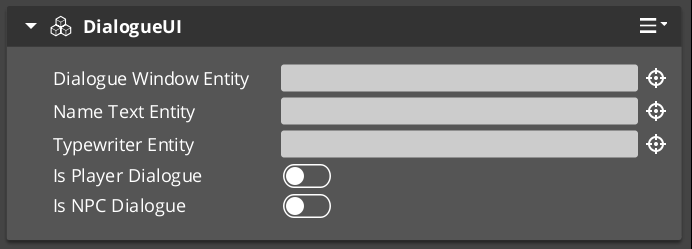

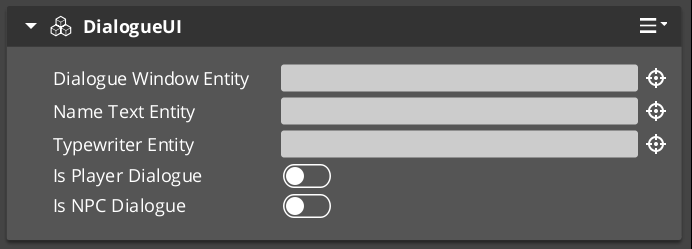

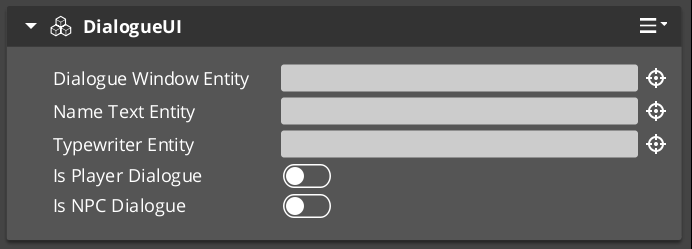

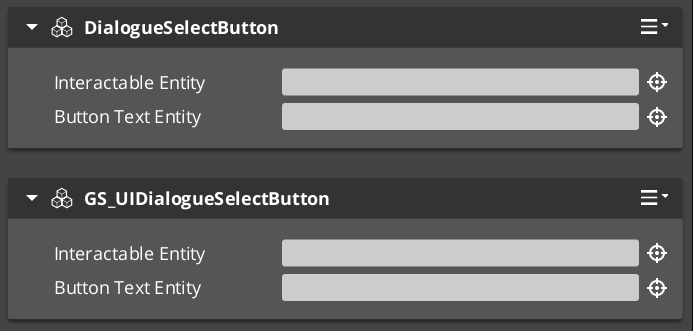

Dialogue UI

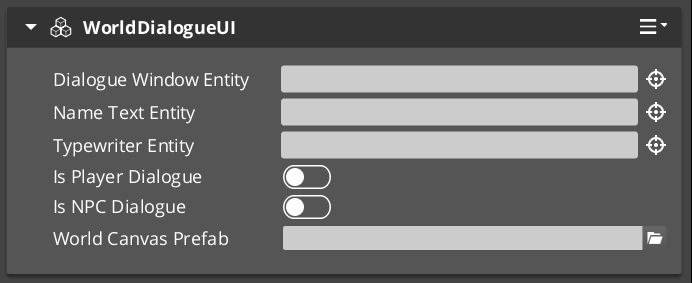

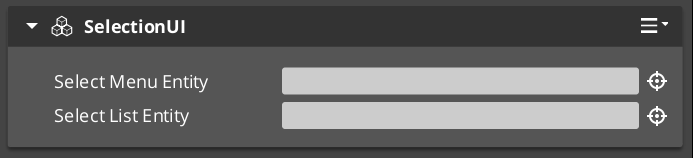

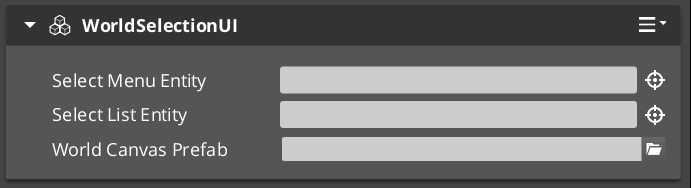

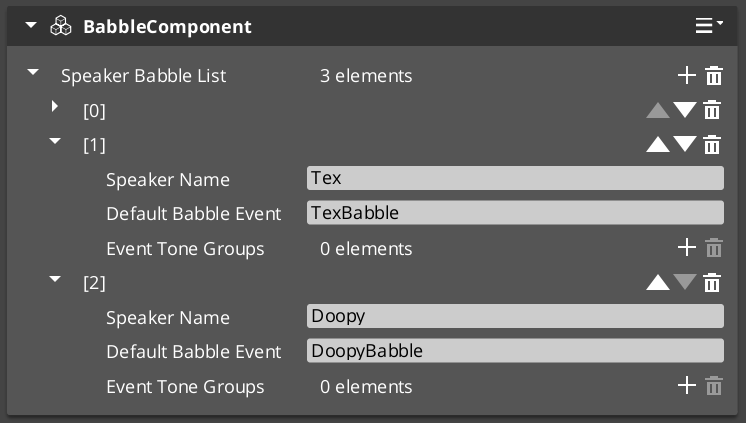

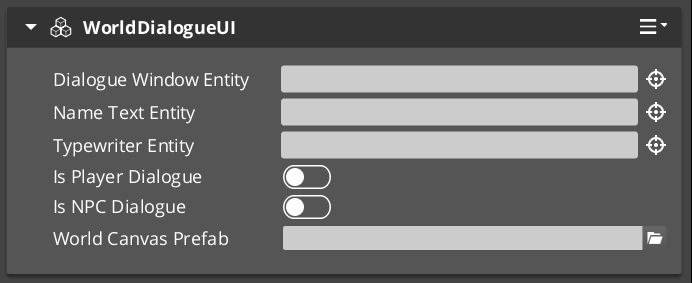

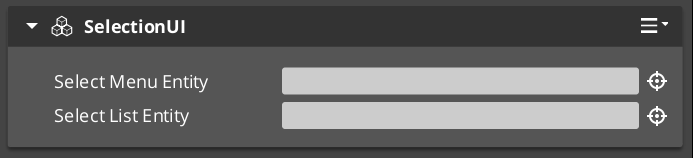

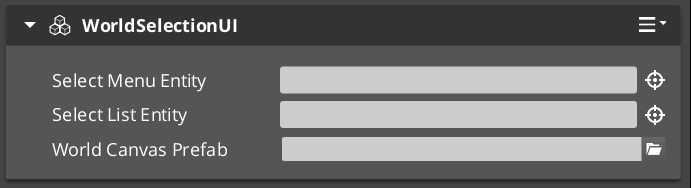

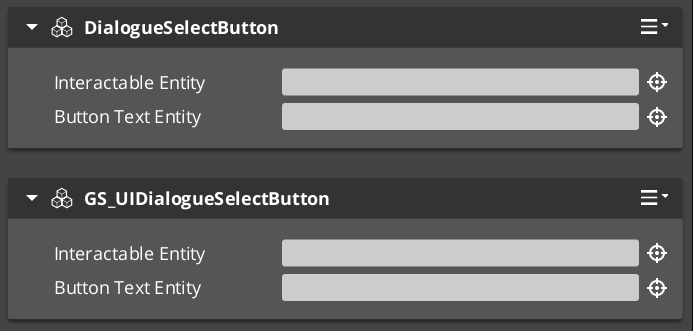

- DialogueUIComponent — screen-space dialogue text, speaker name, and portrait display

- WorldDialogueUIComponent — world-space speech bubble display

- DialogueUISelectionComponent — screen-space player choice menu

- WorldDialogueUISelectionComponent — world-space selection display

- TypewriterComponent — character-by-character text reveal, configurable speed, OnTypeFired / OnTypewriterComplete

- BabbleComponent — procedural audio babble synchronized to typewriter output

Localization

- LocalizedStringId — key + default fallback text, Resolve() method

- LocalizedStringTable — runtime key-value string lookup table

Dialogue Editor

- Node-based in-engine GUI for authoring .dialoguedb assets and sequence graphs

Type Registry

- DialogueTypeRegistry — factory registration for conditions, effects, and performances

- DialogueTypeDiscoveryBus — external gems register custom types without modifying GS_Cinematics
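As a rough illustration of what "factory registration" means in general, the sketch below shows a name-to-factory map in plain C++. It does not reproduce DialogueTypeRegistry or DialogueTypeDiscoveryBus. `ConditionModel`, `AlwaysTrueCondition`, `MakeAlwaysTrue`, and `TypeRegistryModel` are hypothetical names, and the real registry's interface should be read from the GS_Cinematics headers.

```cpp
#include <cassert>
#include <functional>
#include <map>
#include <memory>
#include <string>
#include <utility>

// Hypothetical polymorphic base, loosely analogous to a dialogue condition.
struct ConditionModel
{
    virtual ~ConditionModel() = default;
    virtual bool Evaluate() const = 0;
};

// Hypothetical concrete type contributed by an external module.
struct AlwaysTrueCondition : ConditionModel
{
    bool Evaluate() const override { return true; }
};

std::unique_ptr<ConditionModel> MakeAlwaysTrue()
{
    return std::unique_ptr<ConditionModel>(new AlwaysTrueCondition());
}

// Hypothetical registry: maps a type name to a factory that builds an instance.
class TypeRegistryModel
{
public:
    using Factory = std::function<std::unique_ptr<ConditionModel>()>;

    // Returns false if the name is already taken, leaving the first factory in place.
    bool Register(const std::string& typeName, Factory factory)
    {
        assert(factory && "factory must be callable");
        return m_factories.emplace(typeName, std::move(factory)).second;
    }

    // Returns nullptr for names that were never registered.
    std::unique_ptr<ConditionModel> Create(const std::string& typeName) const
    {
        const auto it = m_factories.find(typeName);
        if (it == m_factories.end())
        {
            return nullptr;
        }
        return it->second();
    }

private:
    std::map<std::string, Factory> m_factories;
};
```

The point of the pattern is that the registry owner never names the concrete type: an external module only hands over a name and a factory.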

2.1.3 - Environment Change Log

GS_Environment version changelog.

Logs

Environment 0.5.0

First official base release of GS_Environment.

Time Manager

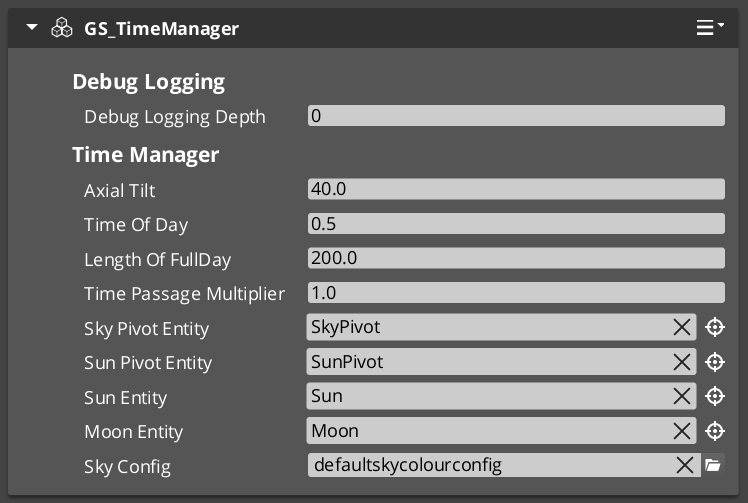

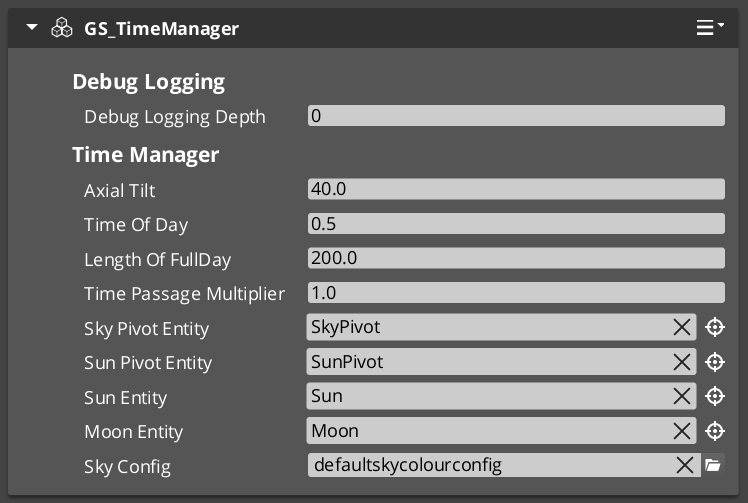

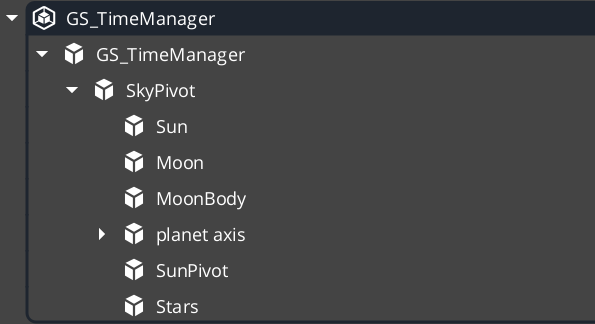

- GS_TimeManagerComponent — world time ownership, time of day control

- TimeManagerRequestBus — SetTimeOfDay, SetTimePassageSpeed, GetTimeOfDay, GetWorldTime, IsDay

- TimeManagerNotificationBus — WorldTick (per-frame), DayNightChanged (on threshold cross)

- GS_EnvironmentSystemComponent — system-level environment registration
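The difference between a per-frame tick and a notification that fires only on a threshold cross can be sketched as follows. This is a standalone model, not the GS_TimeManagerComponent implementation: `DayNightTrackerModel`, the hour-based thresholds, and the callback shape are all assumptions made for illustration.

```cpp
#include <cassert>
#include <functional>
#include <utility>

// Hypothetical model of edge-triggered day/night notification.
class DayNightTrackerModel
{
public:
    using Callback = std::function<void(bool isDay)>;

    // dayStartHour and dayEndHour are assumed thresholds on a 24-hour clock.
    DayNightTrackerModel(float dayStartHour, float dayEndHour, Callback onChanged)
        : m_dayStartHour(dayStartHour)
        , m_dayEndHour(dayEndHour)
        , m_onChanged(std::move(onChanged))
    {
        assert(dayStartHour < dayEndHour);
    }

    bool IsDay(float hour) const
    {
        return hour >= m_dayStartHour && hour < m_dayEndHour;
    }

    // Called every tick; the callback fires only when the day/night state flips.
    void Tick(float hour)
    {
        const bool isDay = IsDay(hour);
        if (m_hasPrevious && isDay != m_wasDay && m_onChanged)
        {
            m_onChanged(isDay);
        }
        m_wasDay = isDay;
        m_hasPrevious = true;
    }

private:
    float m_dayStartHour;
    float m_dayEndHour;
    Callback m_onChanged;
    bool m_wasDay = false;
    bool m_hasPrevious = false;
};
```

In this model the first tick only records the starting state. Whether the real component also notifies on startup is not stated here and should be checked against the Framework API.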

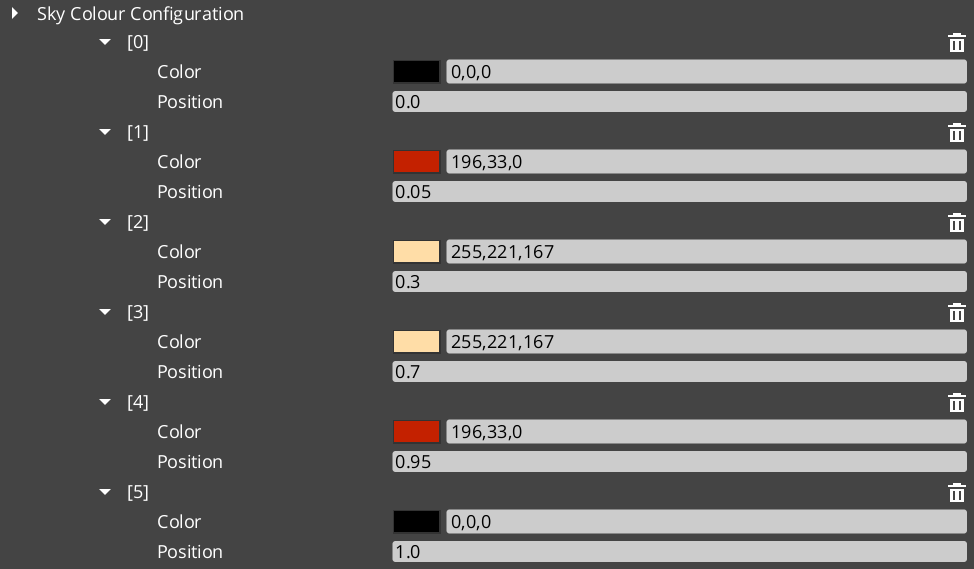

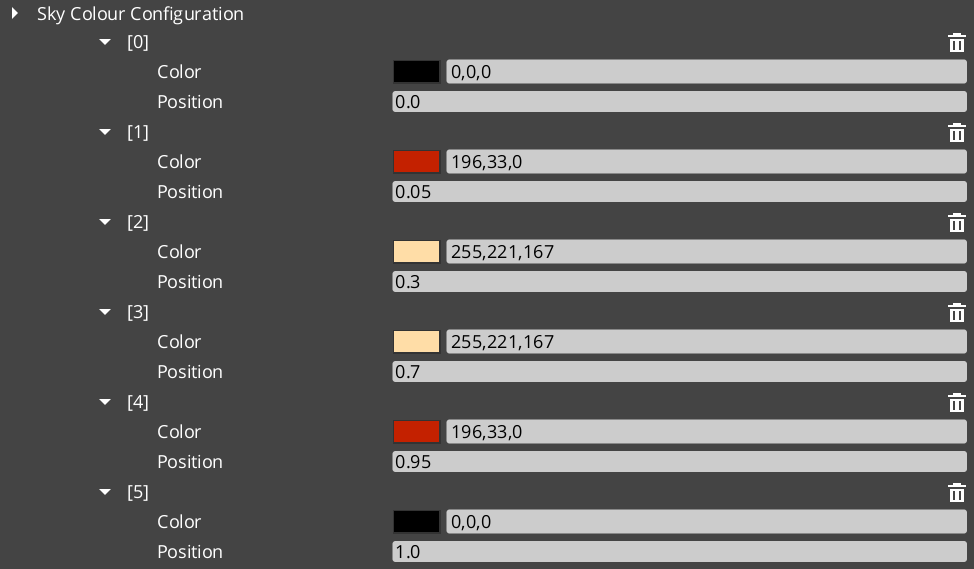

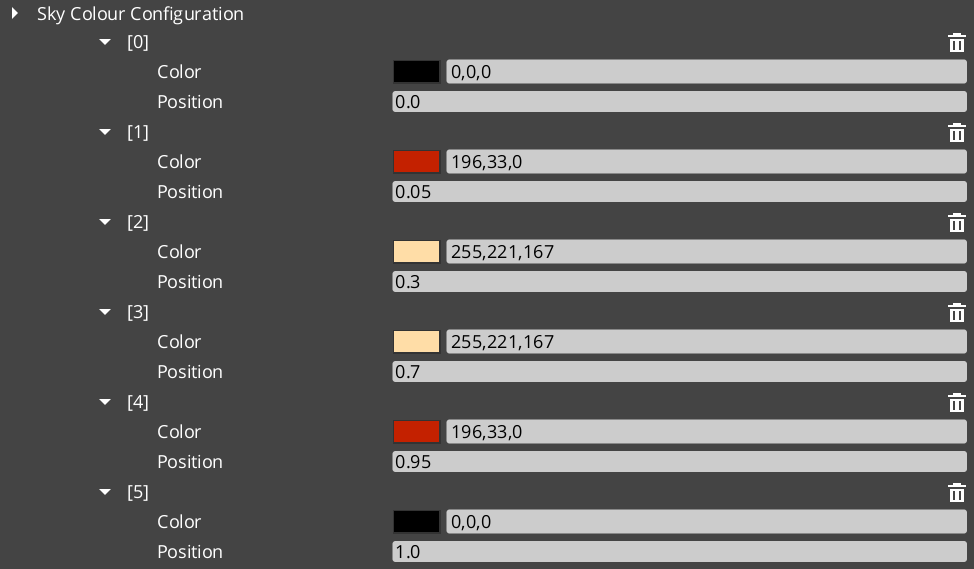

Sky Configuration

- SkyColourConfiguration — data asset defining sky color at different times of day

- Authored as an asset and assigned to the environment for day/night color blending

2.1.4 - Core Change Log

GS_Core version changelog.

Logs

Core 0.5.0

2.1.5 - Interaction Change Log

GS_Interaction version changelog.

Logs

Interaction 0.5.0

First official base release of GS_Interaction.

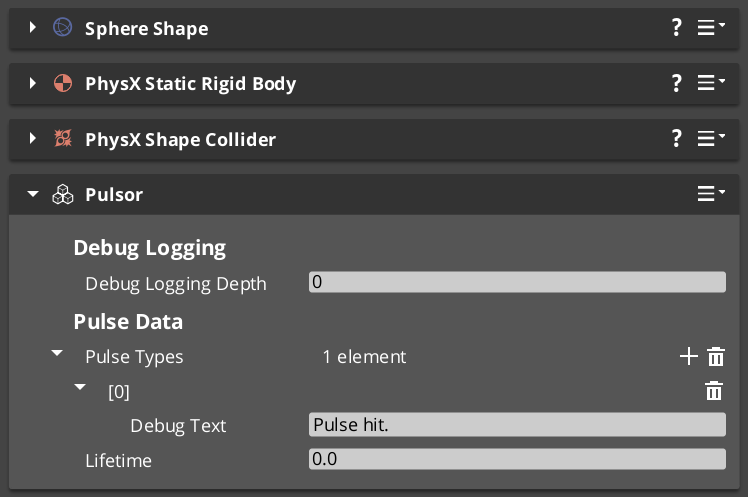

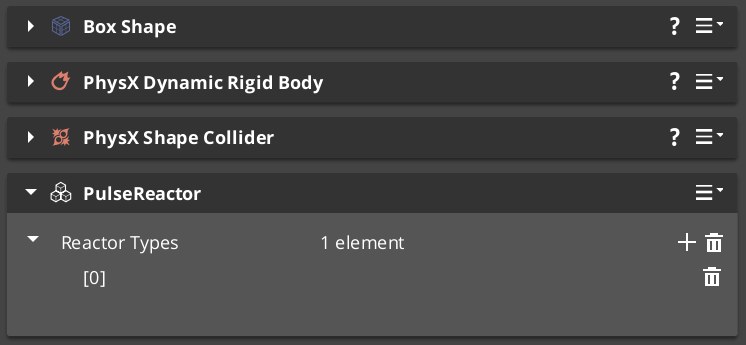

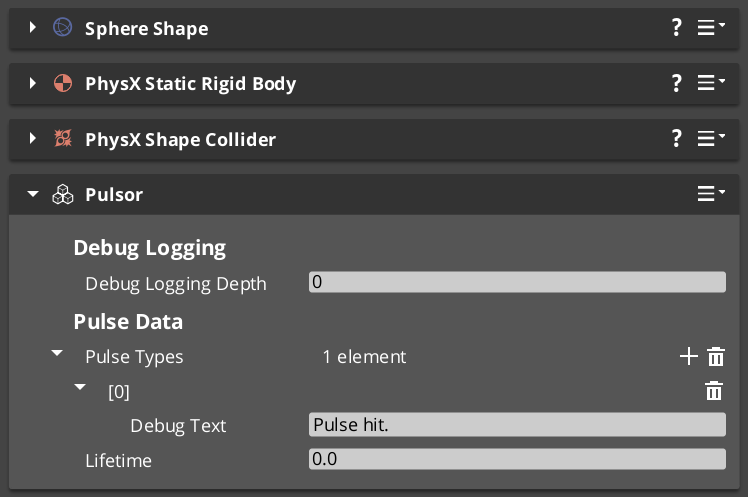

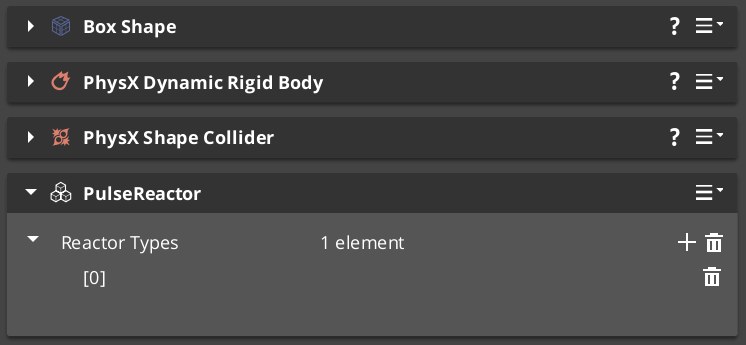

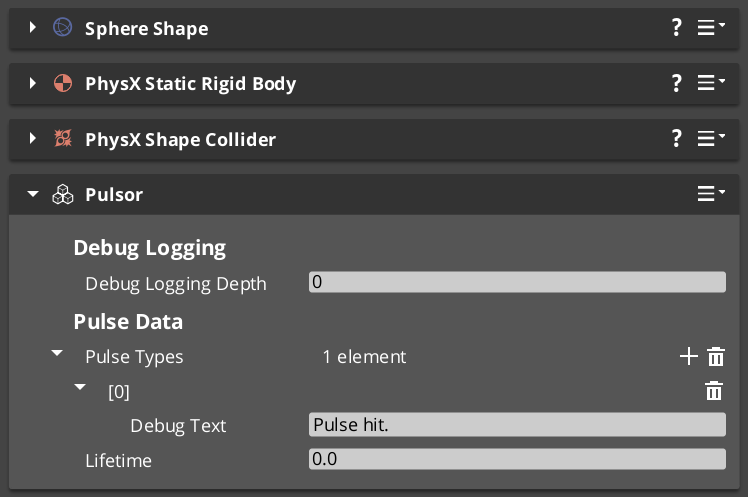

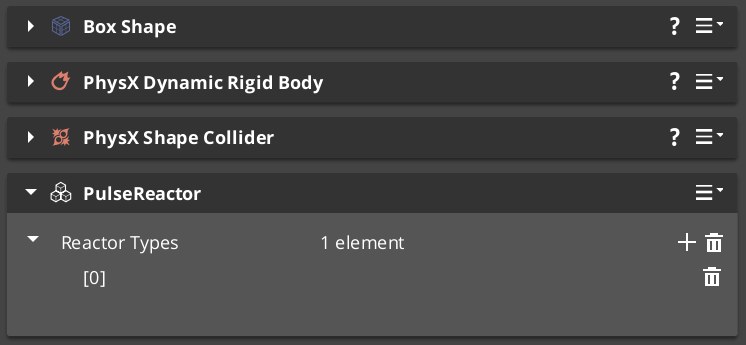

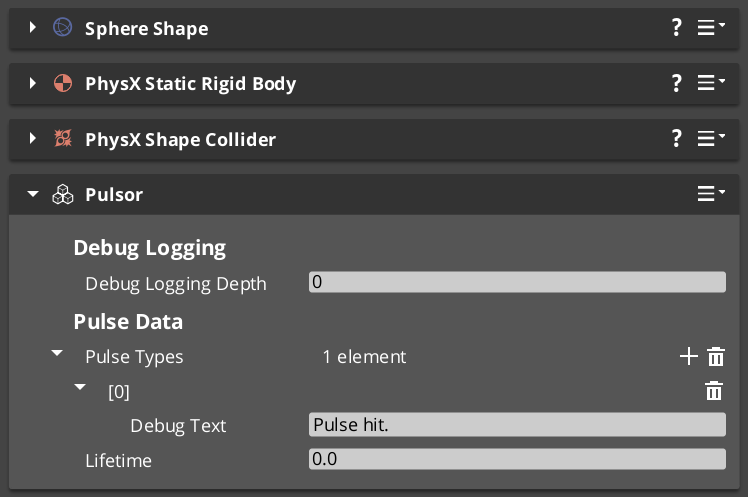

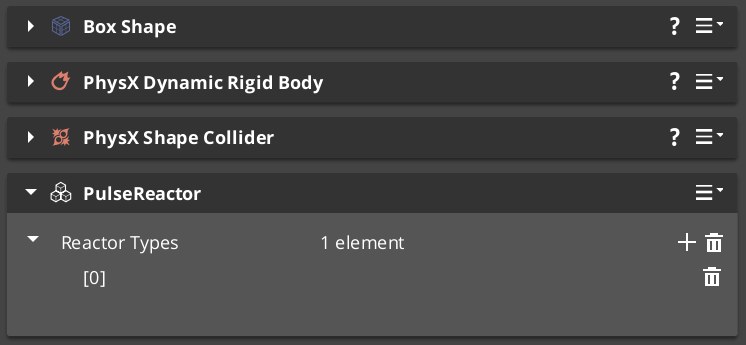

Pulsors

- PulsorComponent — physics trigger volume that broadcasts typed pulse events

- PulseReactorComponent — receives pulses and executes reactor types

- PulseType (abstract base) — extensible pulse payload type

- Built-in types: Debug_Pulse, Destruct_Pulse

- PulseTypeRegistry — auto-discovery of all reflected pulse types

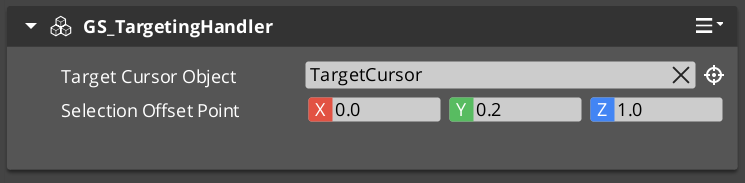

Targeting

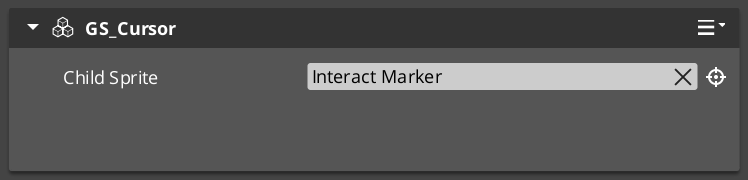

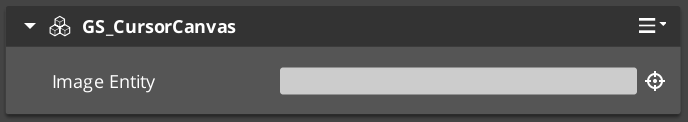

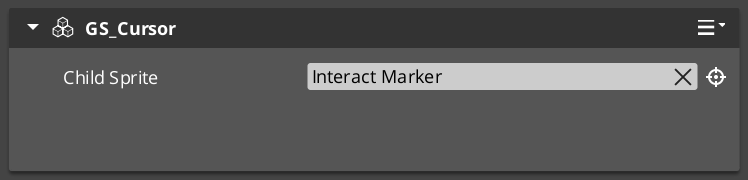

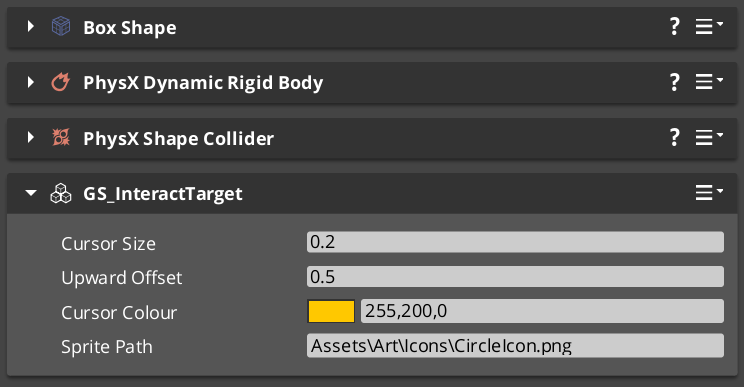

- GS_TargetComponent — marks an entity as a targetable object

- GS_InteractTargetComponent — marks an entity as interactable

- GS_CursorComponent — world-space cursor and proximity scan

- GS_TargetingHandlerComponent — finds and locks the best interactable in range

- GS_TargetingHandlerRequestBus / GS_TargetingHandlerNotificationBus

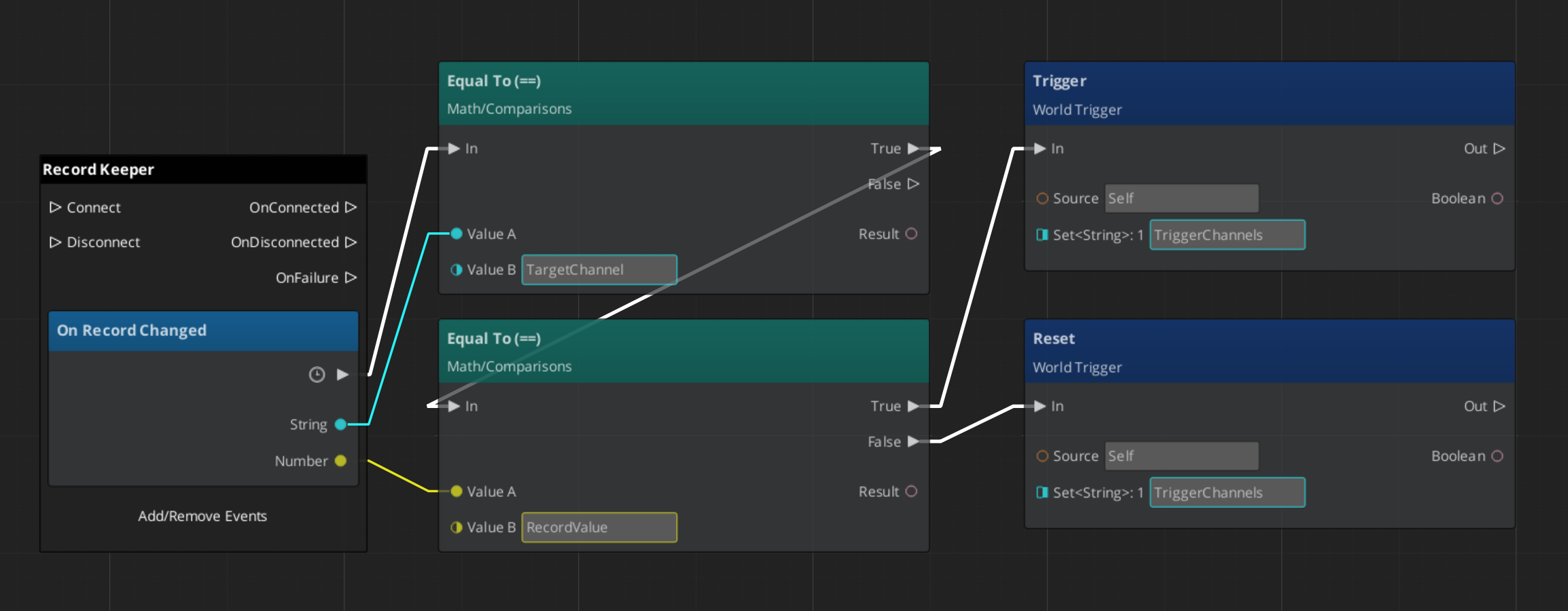

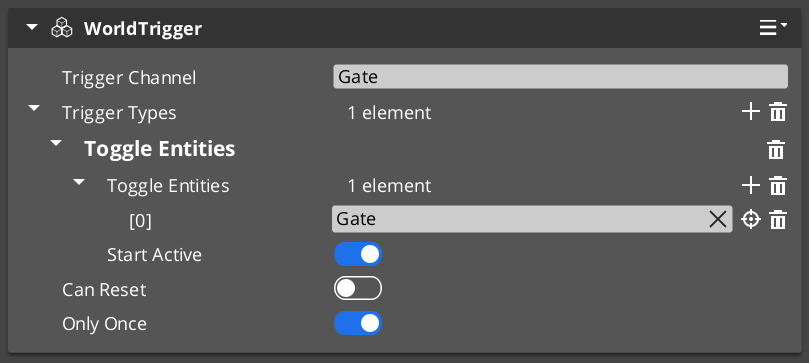

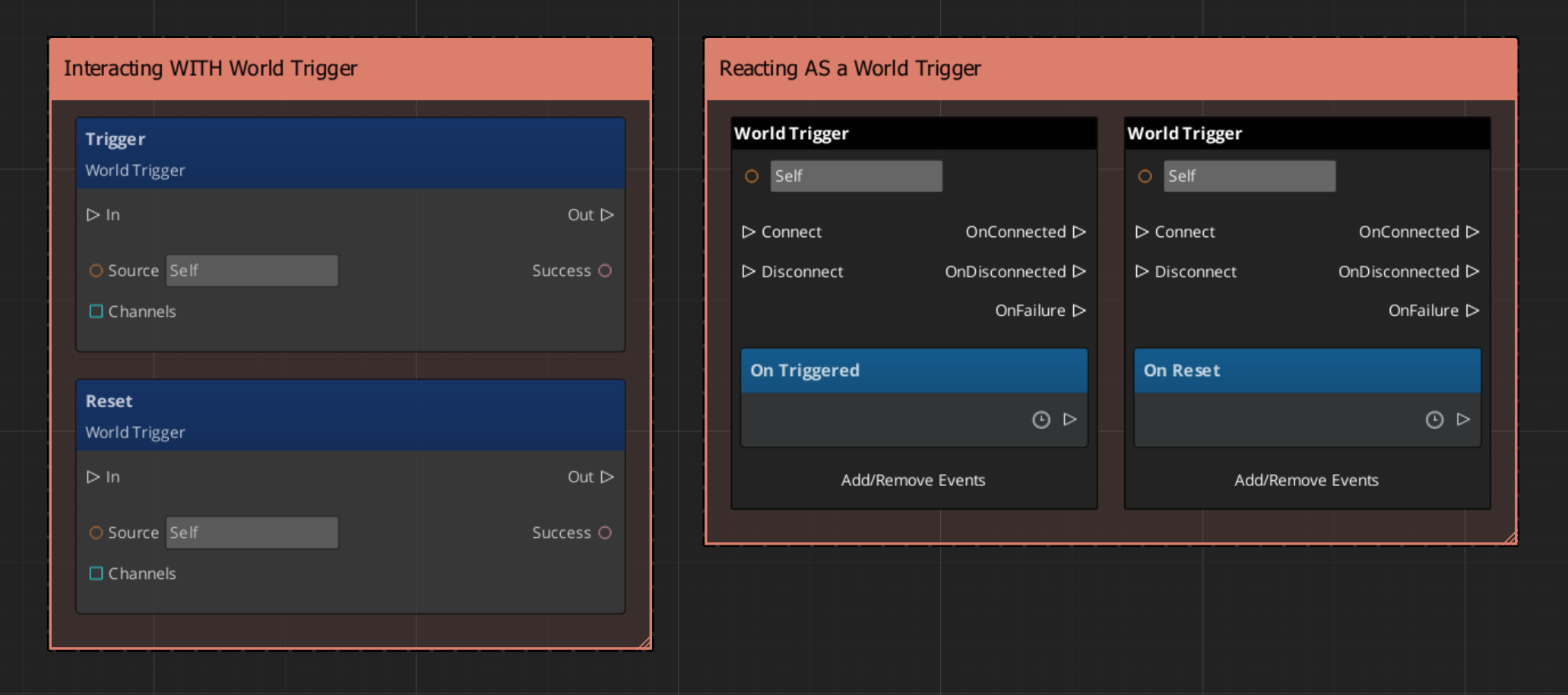

World Triggers

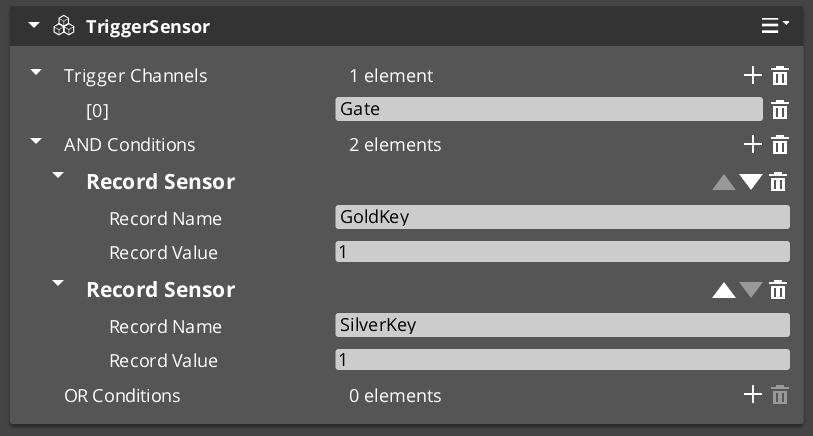

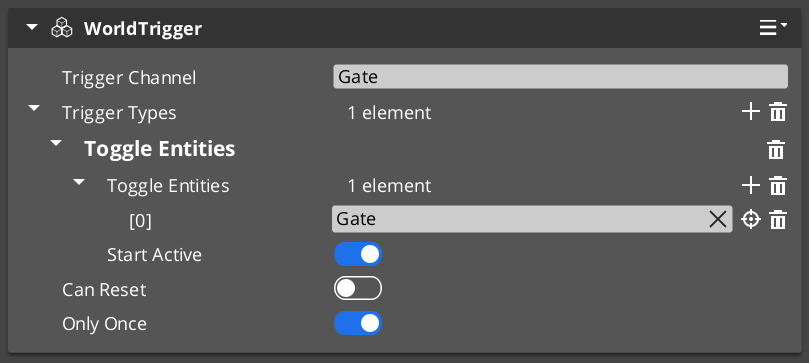

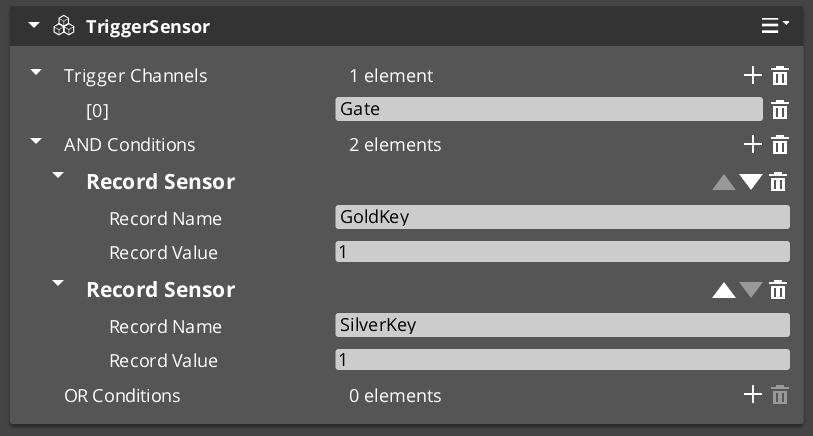

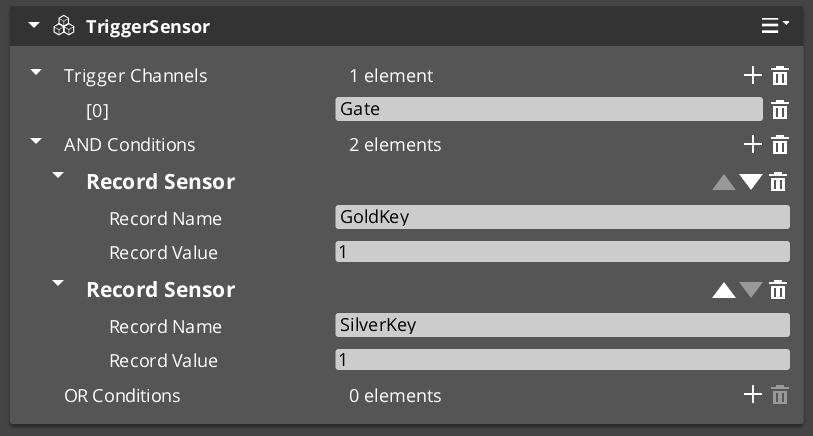

TriggerSensorComponent — base for all condition-side trigger sensorsWorldTriggerComponent — base for all response-side world triggers- Built-in sensors:

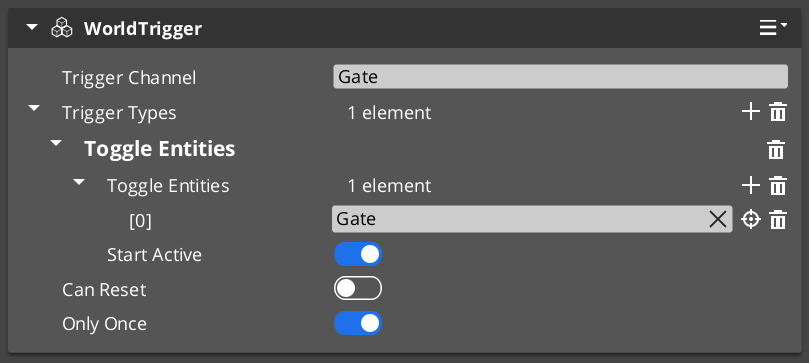

InteractTriggerSensorComponent, PlayerInteractTriggerSensorComponent, ColliderTriggerSensorComponent, RecordTriggerSensorComponent WorldTriggerRequestBus — trigger enable/disable interface- Sensors renamed from TriggerAction to TriggerSensor (2026-03)

- 22 components total across all subsystems

2.1.6 - Juice Change Log

GS_Juice version changelog.

Logs

Juice 0.5.0

First official base release of GS_Juice. Early Development — full support planned 2026.

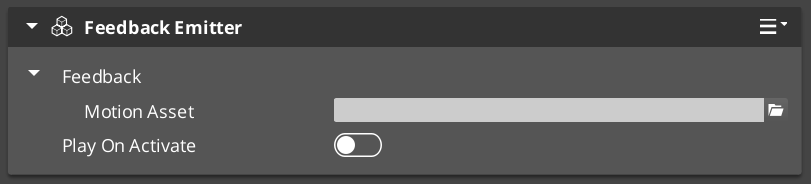

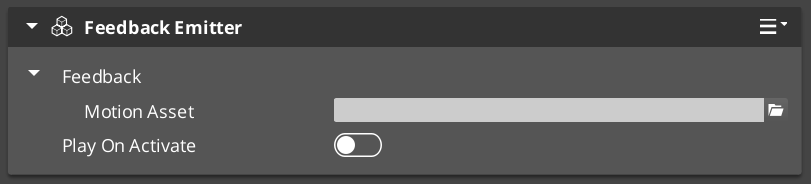

Feedback System

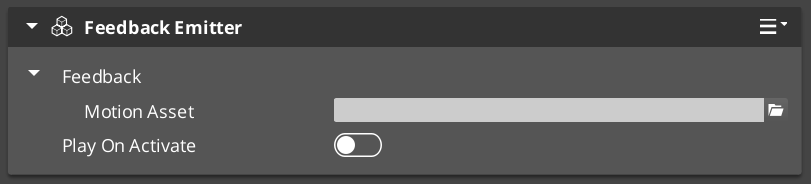

- FeedbackEmitter — component that plays authored feedback motions on its entity

- FeedbackMotionAsset — data asset containing one or more feedback tracks

- FeedbackMotion — runtime instance wrapper for a FeedbackMotionAsset

- FeedbackMotionTrack — domain base class extending GS_Core’s GS_Motion system

Feedback Tracks

- FeedbackTransformTrack — drives position, scale, and rotation offsets (screen shake, bounce)

- FeedbackMaterialTrack — drives opacity, emissive intensity, and color (flash, glow, pulse)

PostProcessing

- Planned for future release. Not yet implemented.

2.1.7 - Performer Change Log

GS_Performer version changelog.

Logs

Performer 0.5.0

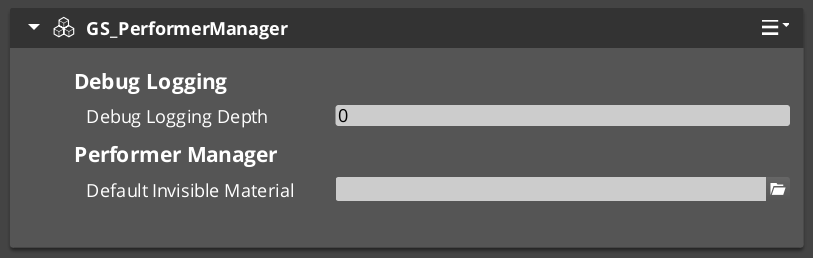

First official base release of GS_Performer. Early Development — full support planned 2026.

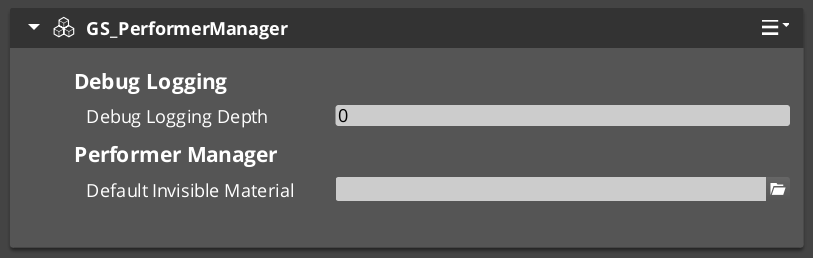

Performer Manager

- GS_PerformerManagerComponent — performer registration and name-based lookup

- PerformerManagerRequestBus

- GS_PerformerSystemComponent — system-level lifecycle component

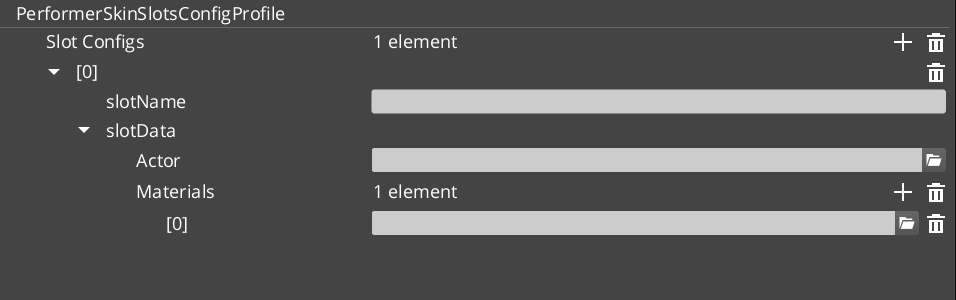

Skin Slots

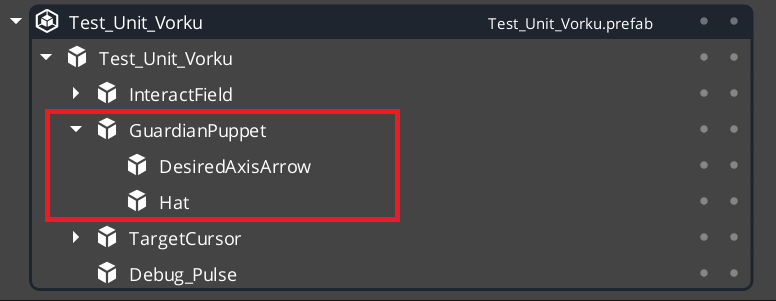

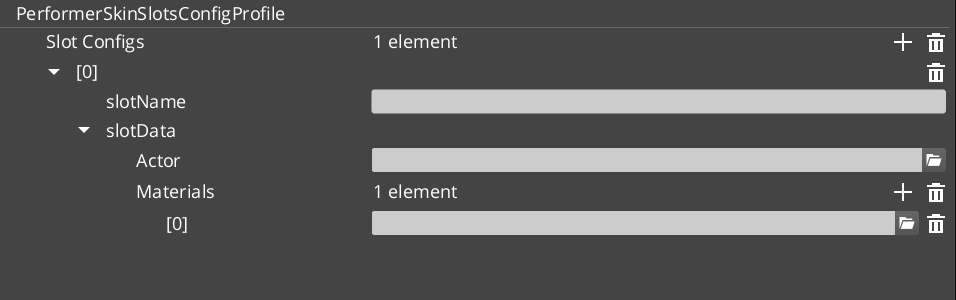

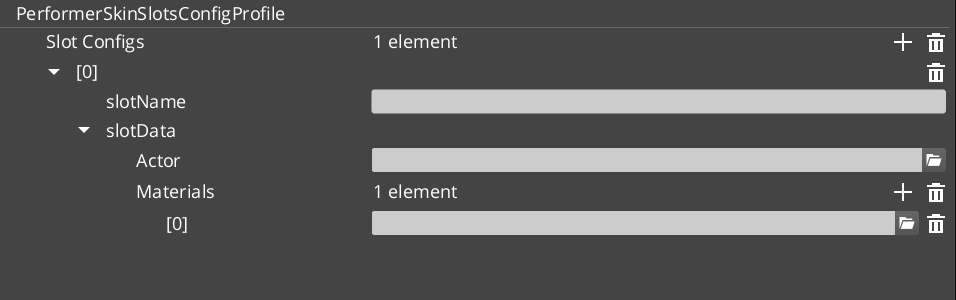

- PerformerSkinSlotComponent — manages a named visual slot on a character entity

- SkinSlotHandlerComponent — handles asset swapping for a given slot

- PerformerSkinSlotsConfigProfile — asset class defining slot configurations

- SkinSlotData / SkinSlotNameDataPair — slot data types

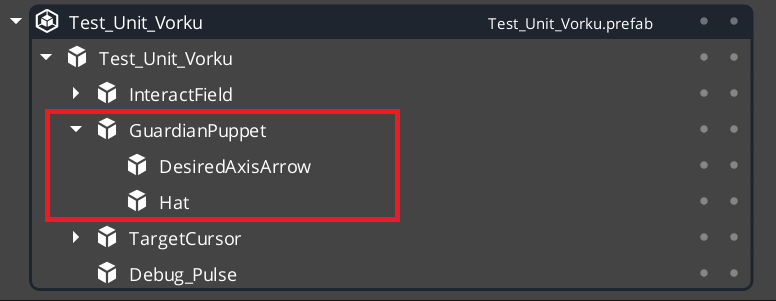

Paper Performer

- PaperFacingHandlerBaseComponent — base for billboard-style rendering handlers

- PaperFacingHandlerComponent — orients a 2.5D billboard character to face the camera correctly

Locomotion

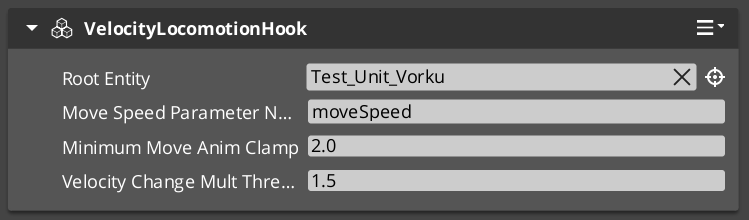

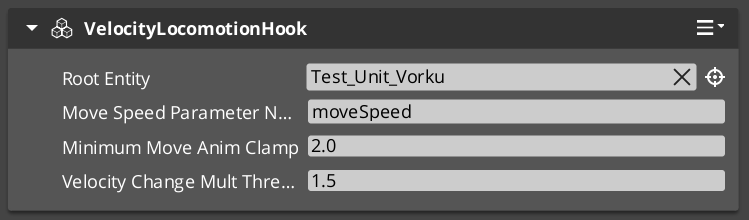

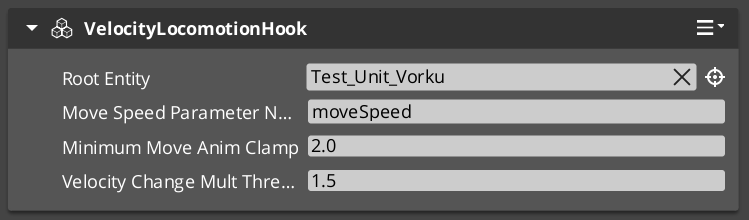

VelocityLocomotionHookComponent — reads entity velocity and drives animation blend tree parameters automatically

Head Tracking

- Procedural head bone orientation toward a world-space look-at target

Babble

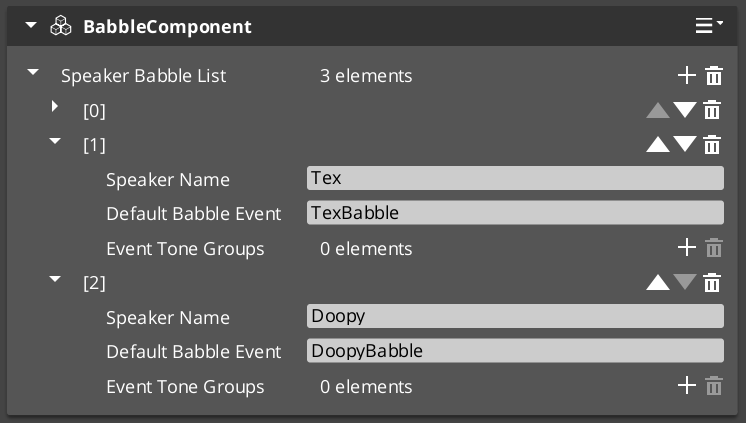

- BabbleComponent — generates procedural vocalisation tones synchronized to GS_Cinematics typewriter output

- BabbleToneEvent / SpeakerBabbleEvents — tone data structures per speaker identity

2.1.8 - PhantomCam Change Log

GS_PhantomCam version changelog.

Logs

PhantomCam 0.5.0

First official base release of GS_PhantomCam.

Cam Manager

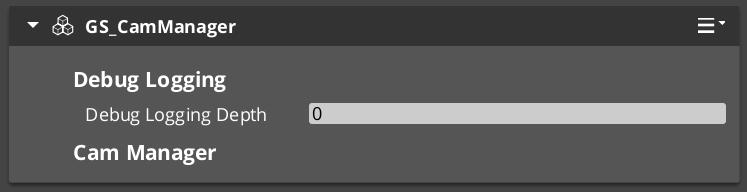

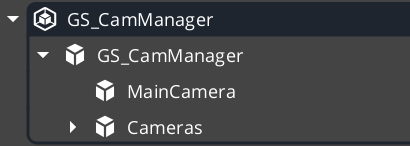

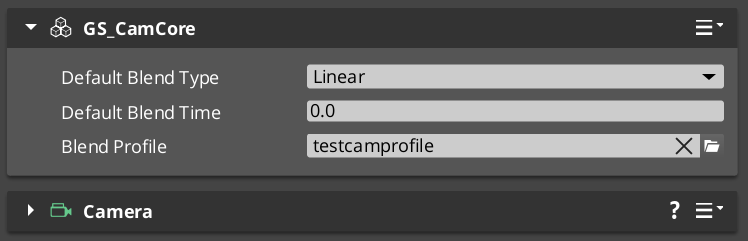

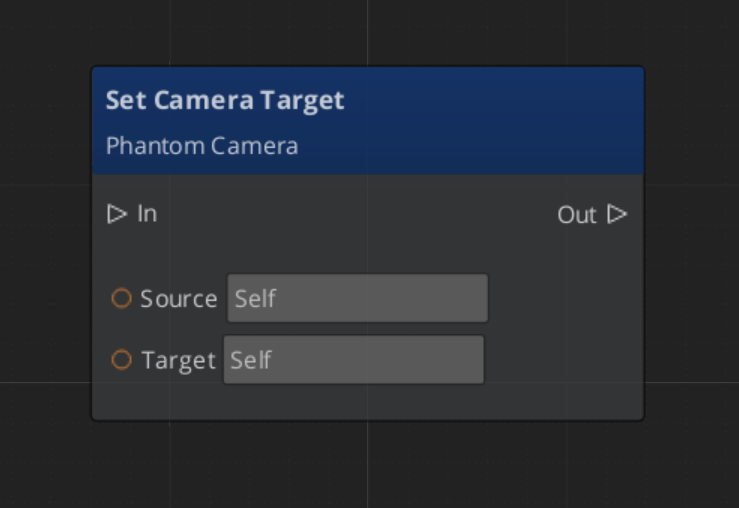

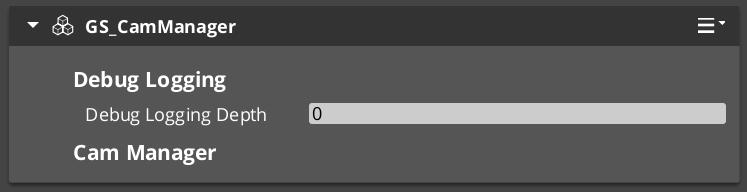

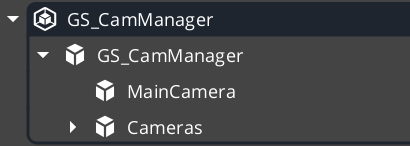

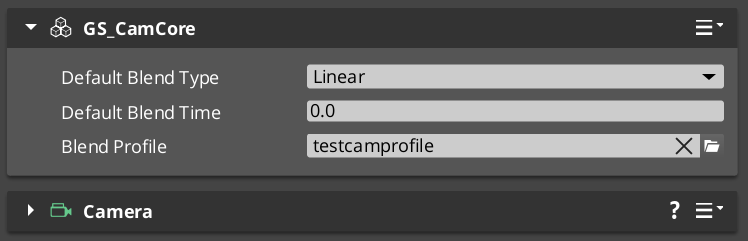

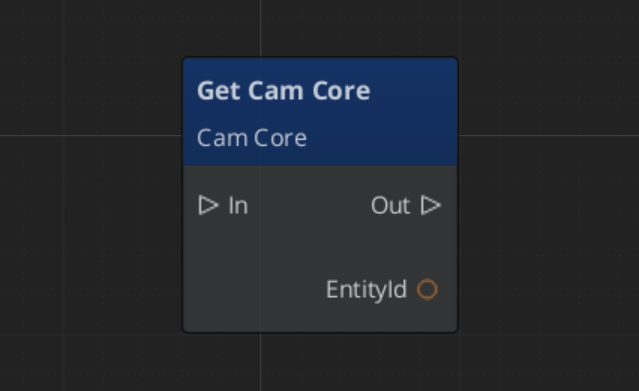

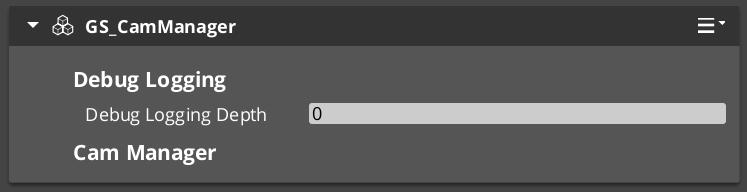

- GS_CamManagerComponent — camera system lifecycle, active camera tracking

- CamManagerRequestBus / CamManagerNotificationBus

- GS_PhantomCamSystemComponent — system-level registration

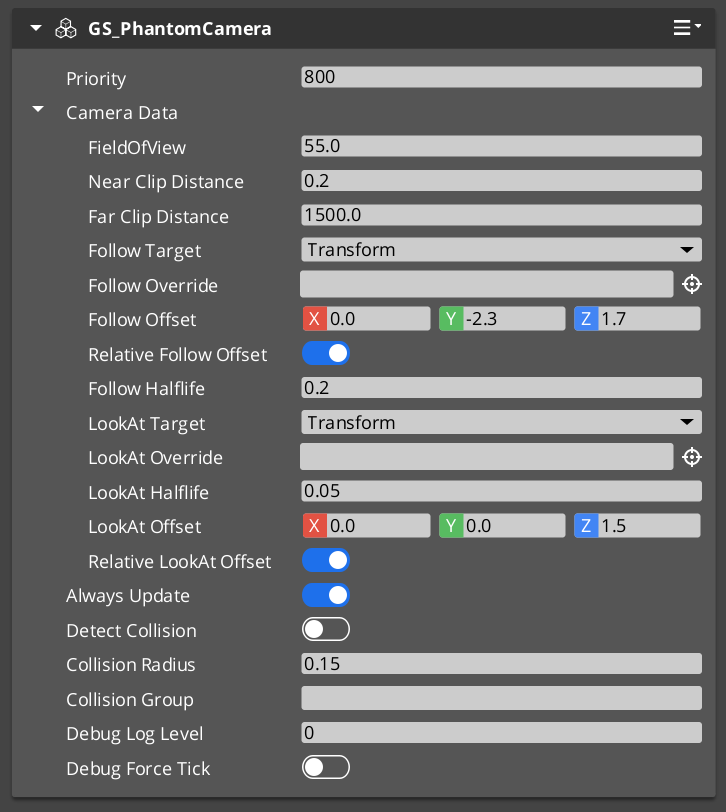

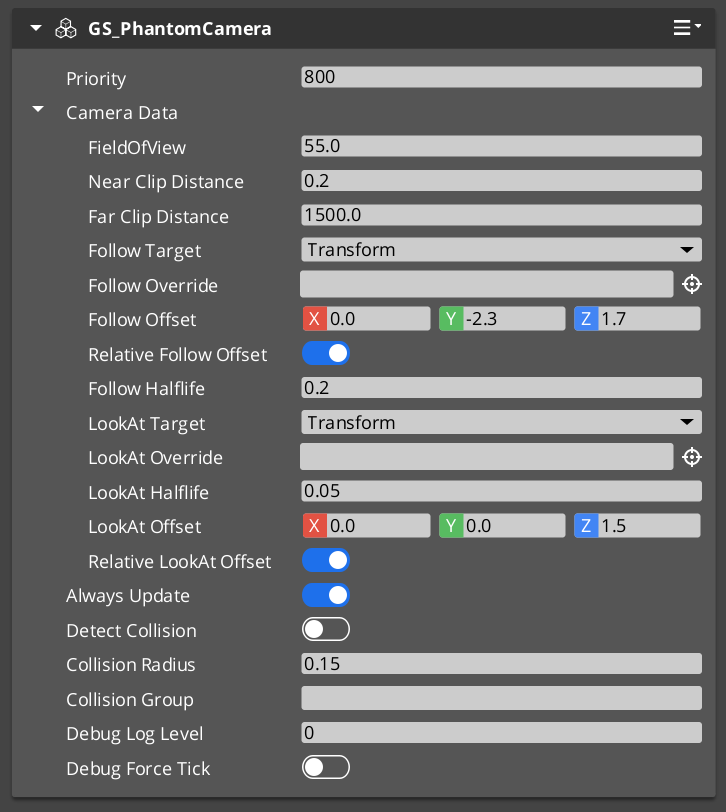

Phantom Cameras

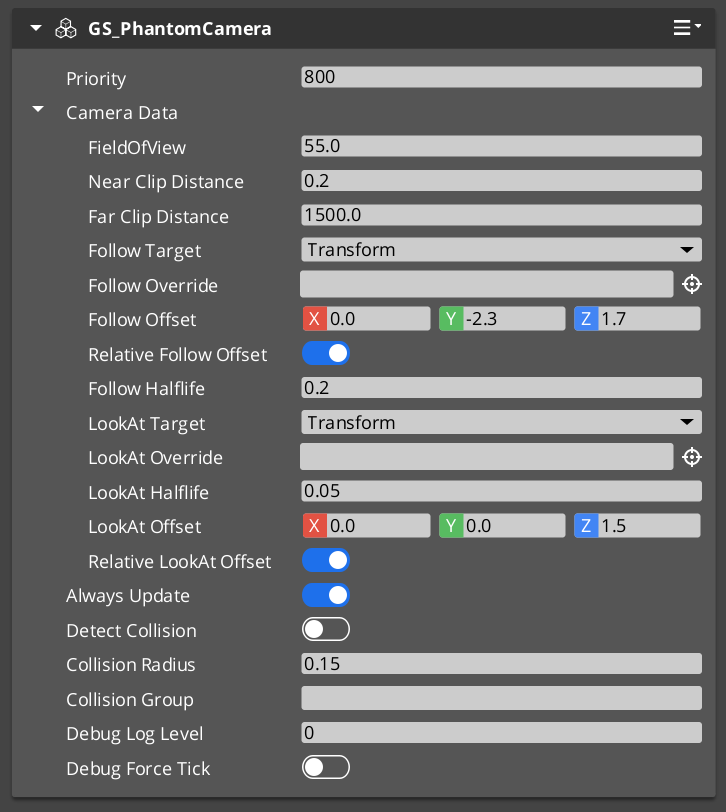

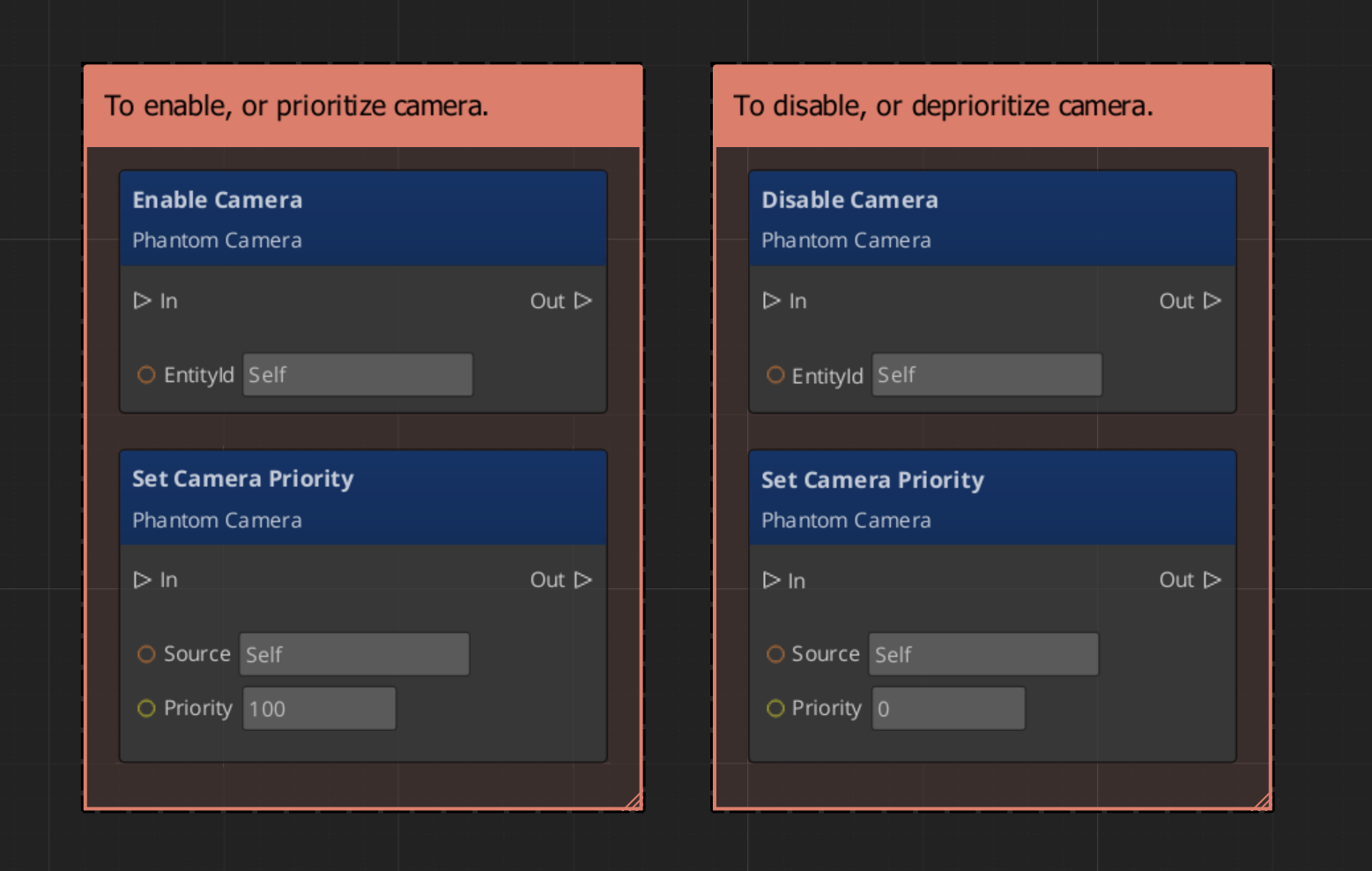

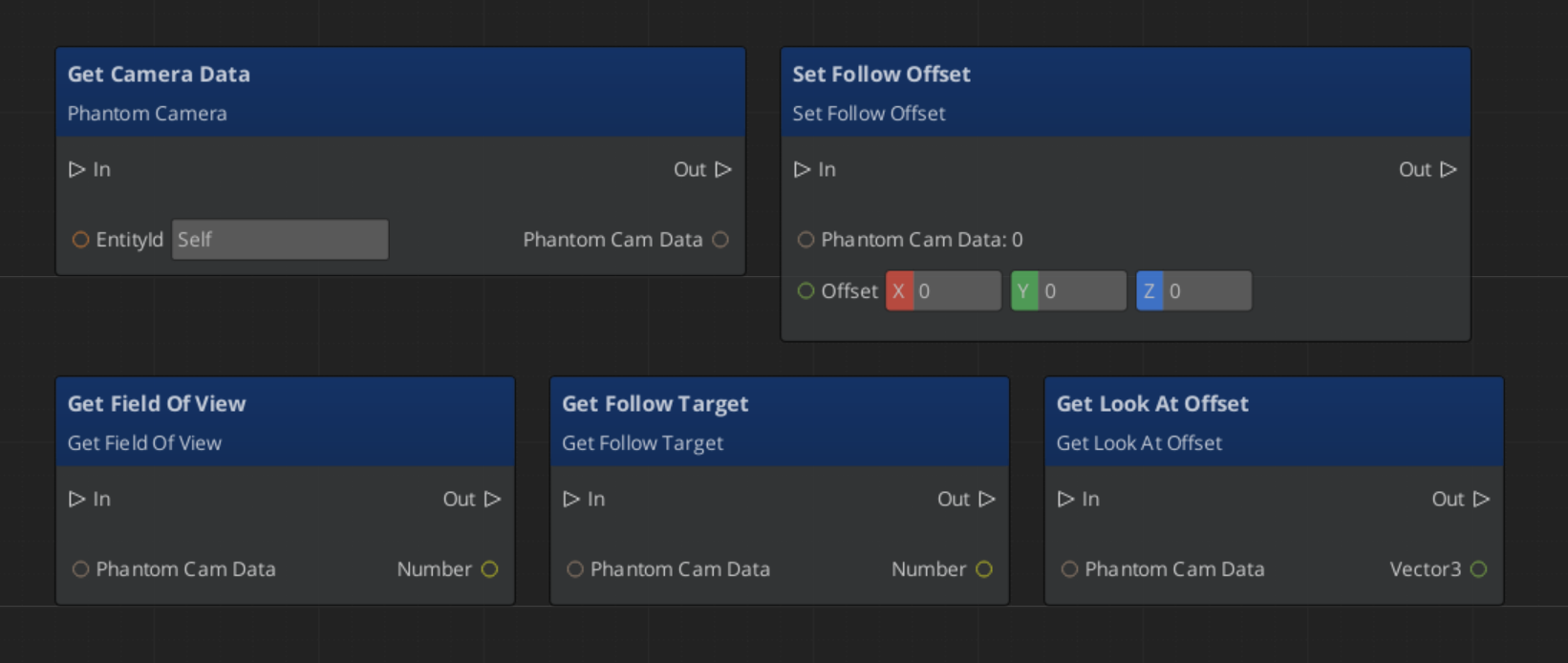

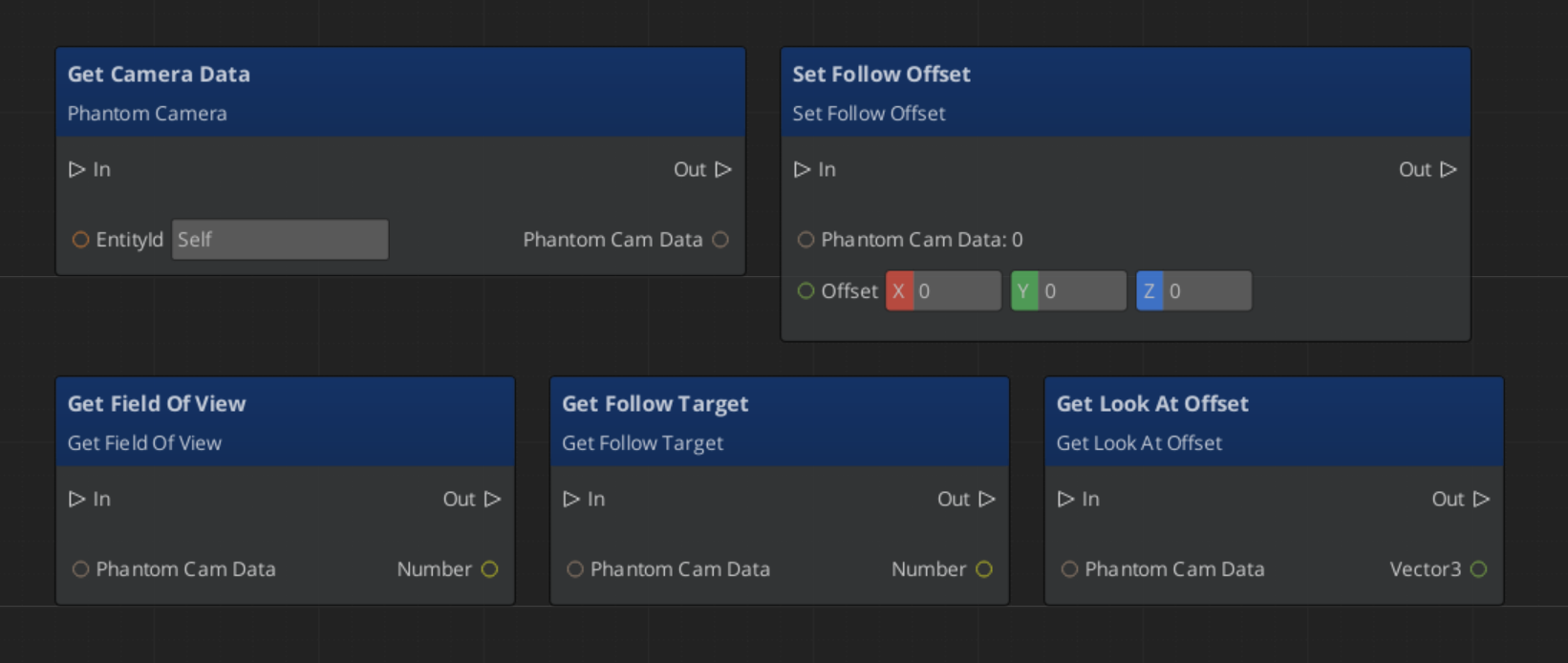

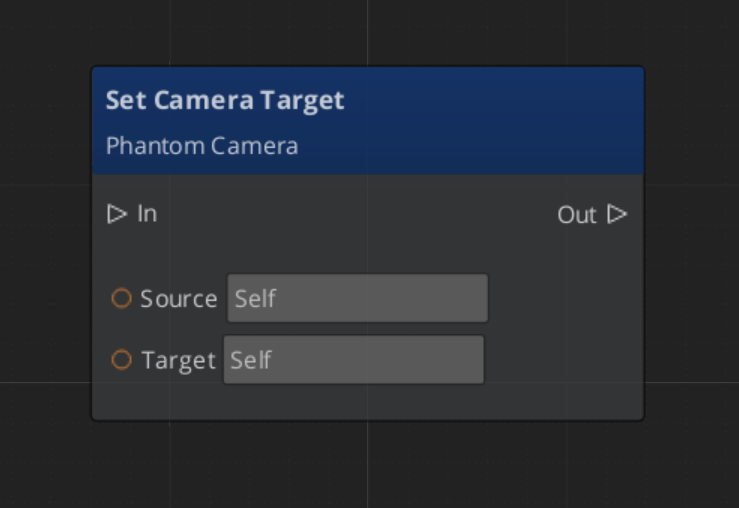

- GS_PhantomCameraComponent — virtual camera with follow target, look-at, FOV, and priority

- PhantomCameraRequestBus

- PhantomCamData — camera configuration data structure

- Priority-based switching: highest priority active phantom camera drives the real camera
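The priority rule above (the highest-priority active phantom camera drives the real camera) can be modelled in a few lines. This is a sketch only: `PhantomCamModel` and `SelectDrivingCamera` are hypothetical names, and the tie-break (first registered wins) is an assumption, not documented GS_PhantomCam behavior.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical, reduced view of a phantom camera: only what selection needs.
struct PhantomCamModel
{
    std::string name;
    int priority;
    bool active;
};

// Returns the index of the highest-priority active camera, or -1 if none is active.
// On equal priority the earlier entry wins (an assumption made for this sketch).
int SelectDrivingCamera(const std::vector<PhantomCamModel>& cams)
{
    int best = -1;
    for (std::size_t i = 0; i < cams.size(); ++i)
    {
        if (!cams[i].active)
        {
            continue; // inactive cameras never drive the real camera
        }
        if (best < 0 || cams[i].priority > cams[static_cast<std::size_t>(best)].priority)
        {
            best = static_cast<int>(i);
        }
    }
    return best;
}
```

Activating a camera with a higher priority than the current one changes the selection on the next evaluation, which is the moment a blend would start.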

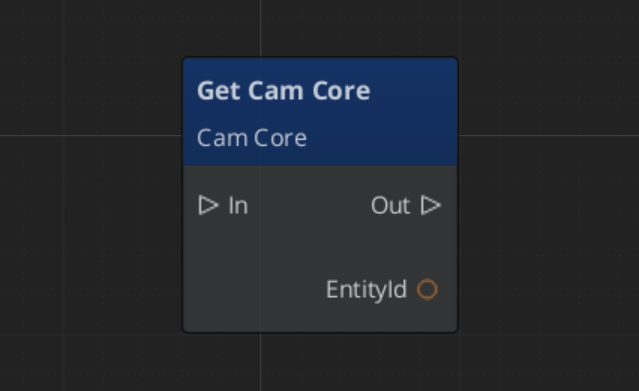

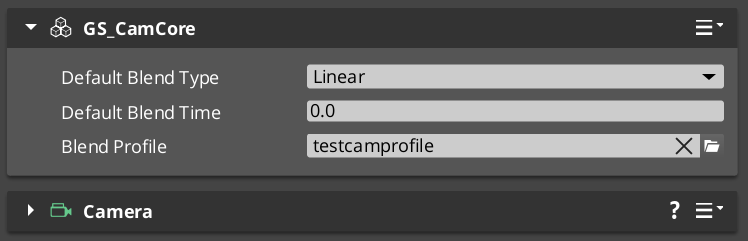

Cam Core

- GS_CamCoreComponent — reads from the active phantom camera each frame and applies to the engine camera

- CamCoreRequestBus / CamCoreNotificationBus

Camera Behaviors

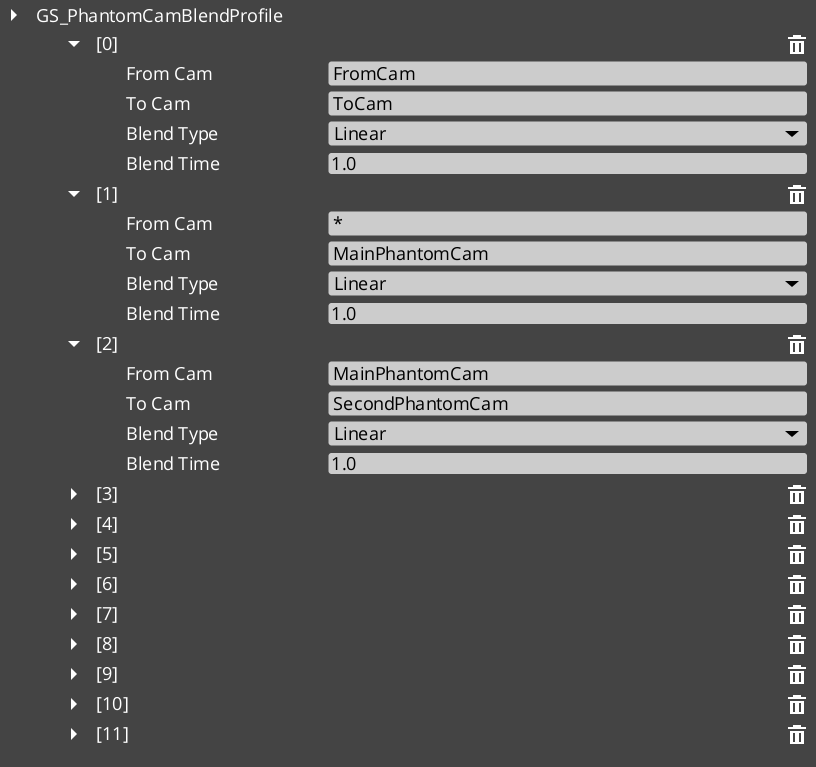

- ClampedLook_PhantomCamComponent — look-at with clamped angle limits

- StaticOrbitPhantomCamComponent — fixed-distance orbit around a target

- Track_PhantomCamComponent — follows a spline or path track

- AlwaysFaceCameraComponent — keeps an entity billboard-facing the active camera

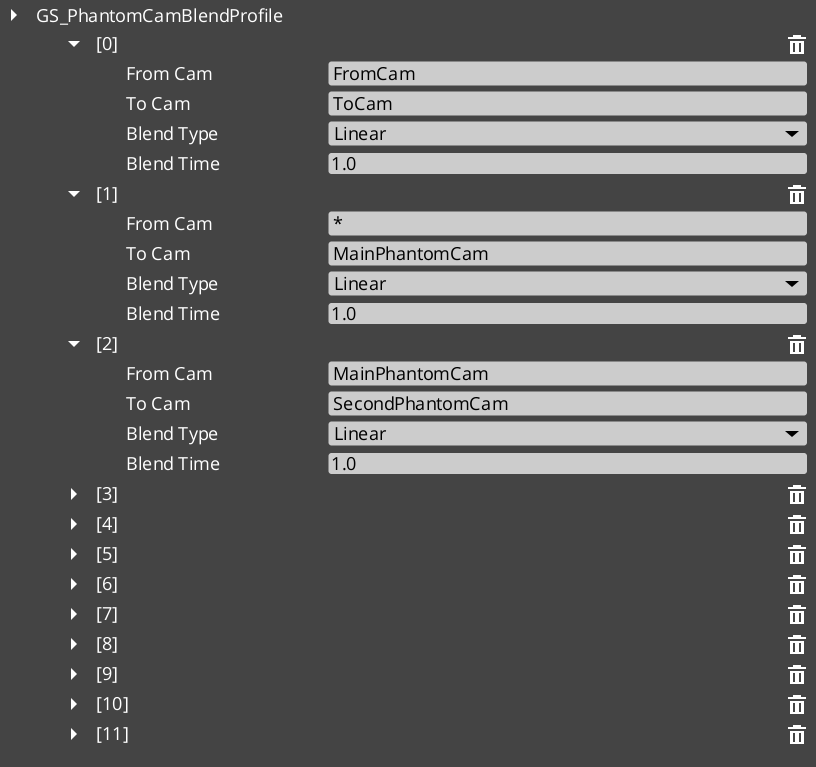

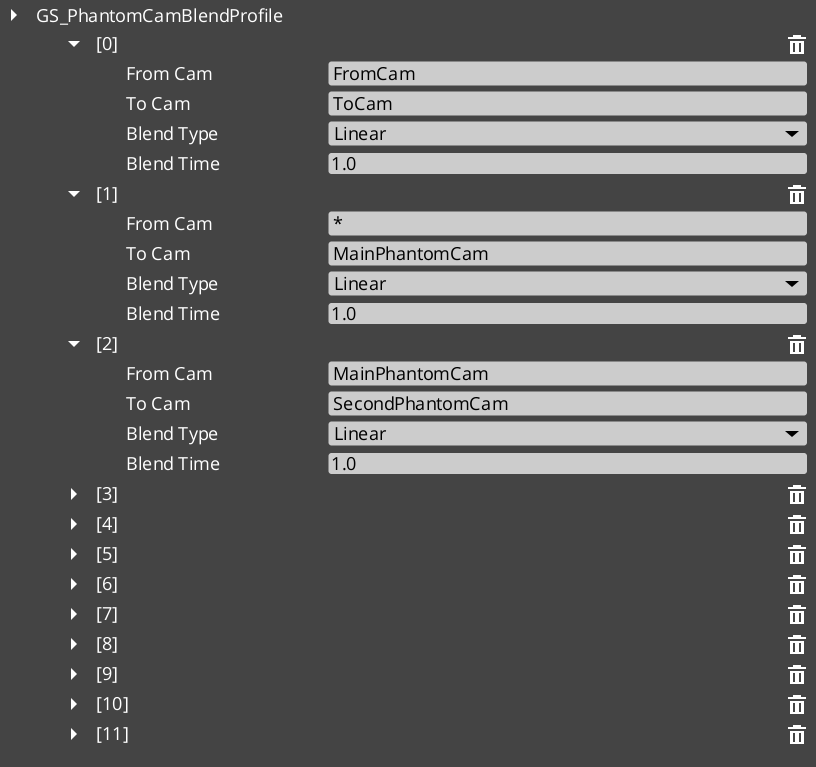

Blend Profiles

- GS_PhantomCamBlendProfile — asset defining camera transition easing and duration

- Smooth interpolation between camera positions on priority switch
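A blend defined by an easing curve and a duration amounts to an eased interpolation between two camera positions. The sketch below uses smoothstep as the easing curve purely as an example. `Vec3Model`, `SmoothStep01`, and `BlendPosition` are hypothetical names, and the curves offered by GS_PhantomCamBlendProfile are not specified here.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical minimal vector type for the sketch.
struct Vec3Model
{
    float x;
    float y;
    float z;
};

// Smoothstep easing on [0, 1]: slow start, slow end. Input is clamped first.
float SmoothStep01(float t)
{
    t = std::max(0.0f, std::min(1.0f, t));
    return t * t * (3.0f - 2.0f * t);
}

// Eased position between the outgoing and incoming camera at `elapsed` seconds
// into a blend lasting `duration` seconds.
Vec3Model BlendPosition(const Vec3Model& from, const Vec3Model& to, float elapsed, float duration)
{
    assert(duration > 0.0f);
    const float w = SmoothStep01(elapsed / duration);
    return Vec3Model{
        from.x + (to.x - from.x) * w,
        from.y + (to.y - from.y) * w,
        from.z + (to.z - from.z) * w};
}
```

Because the input is clamped, calling `BlendPosition` after the blend has finished keeps returning the destination position.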

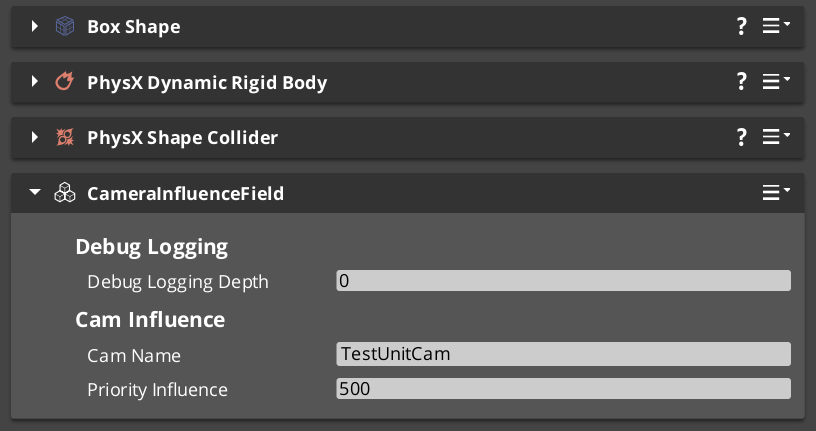

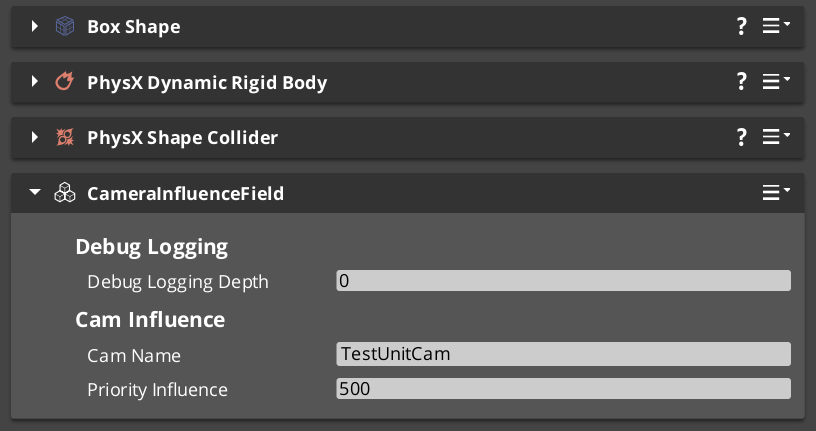

Influence Fields

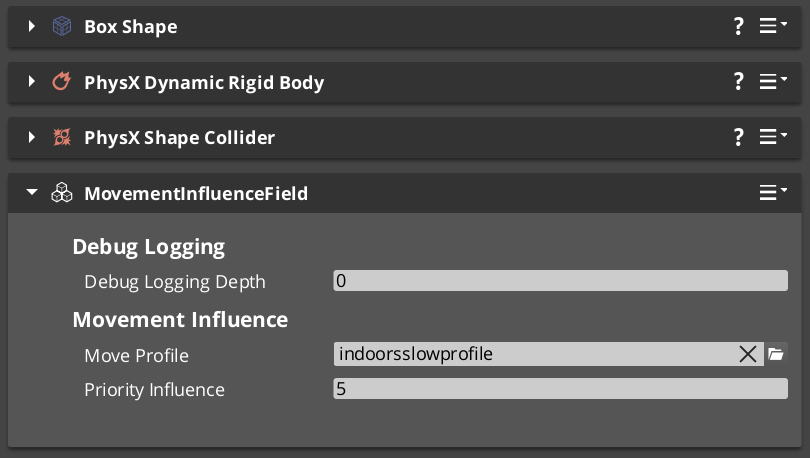

- GlobalCameraInfluenceComponent — global persistent camera modifier

- CameraInfluenceFieldComponent — spatial zone that modifies camera behavior on entry

- CamInfluenceData — influence configuration data (offset, FOV shift, tilt)

2.1.9 - UI Change Log

GS_UI version changelog.

Logs

UI 0.5.0

First official base release of GS_UI.

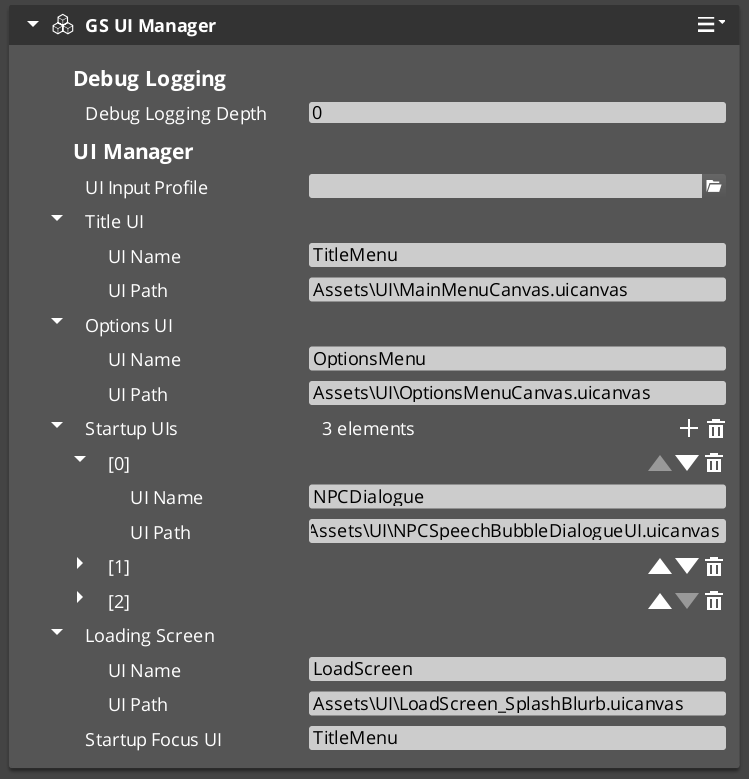

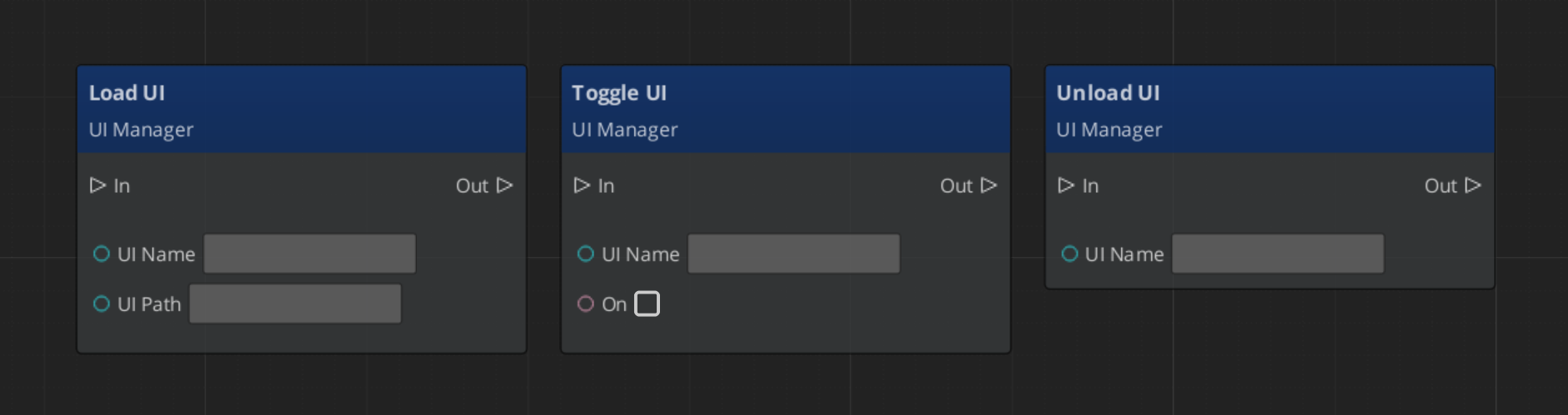

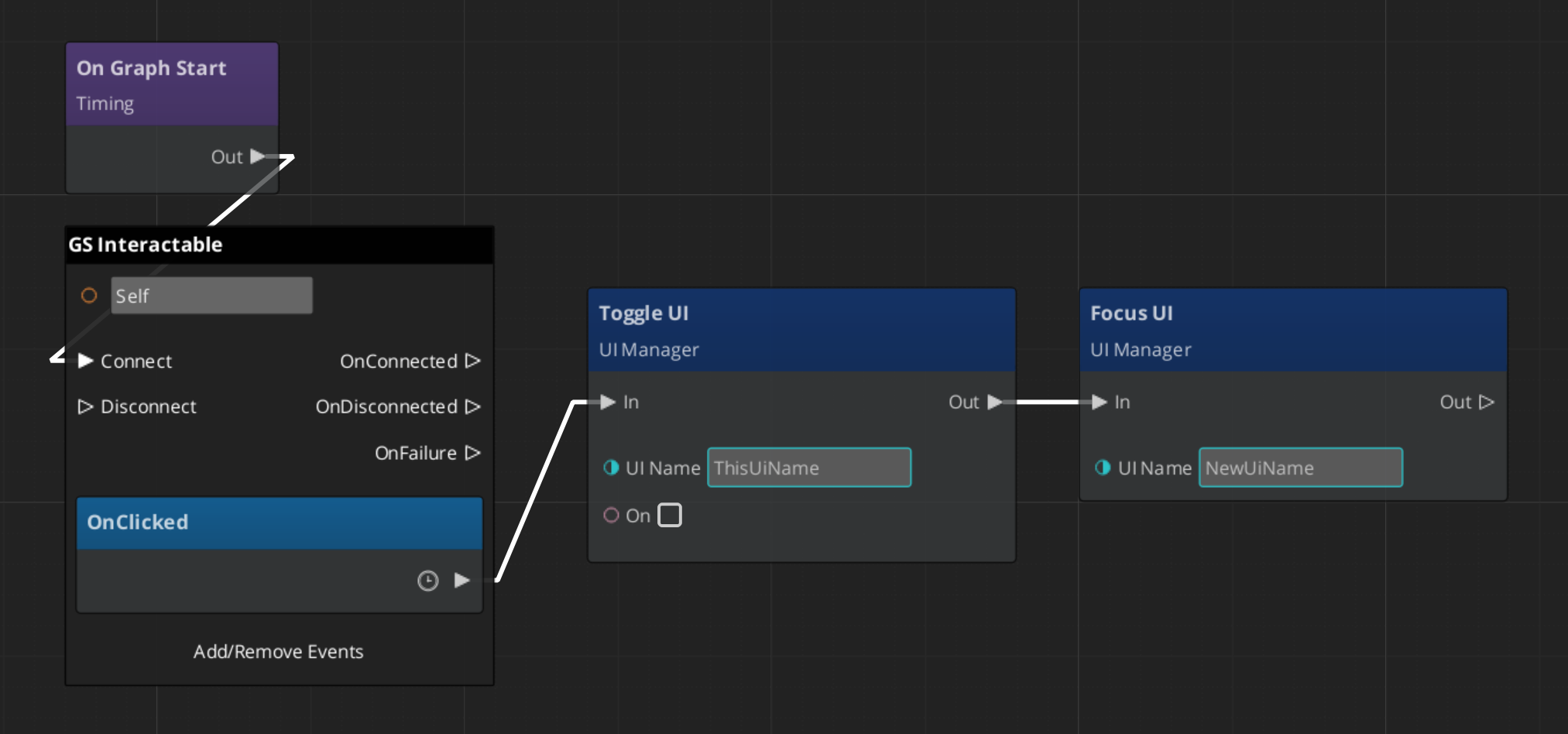

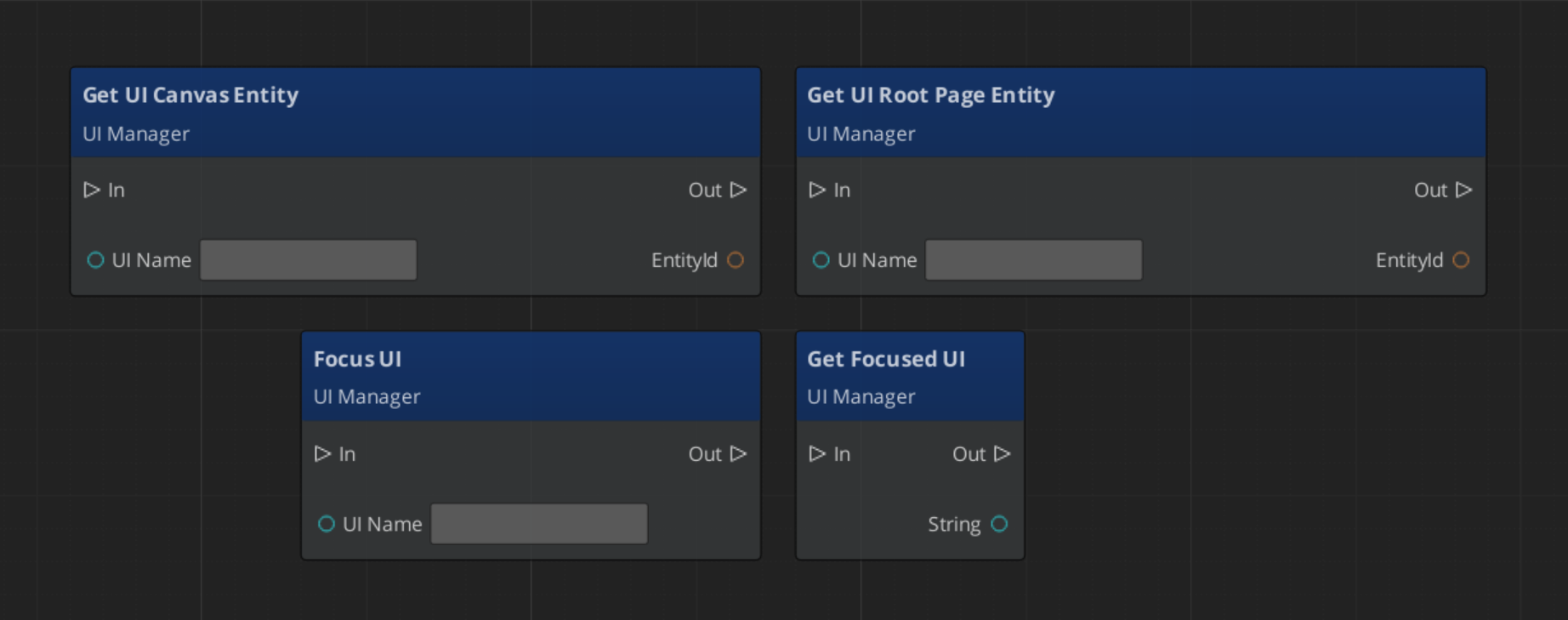

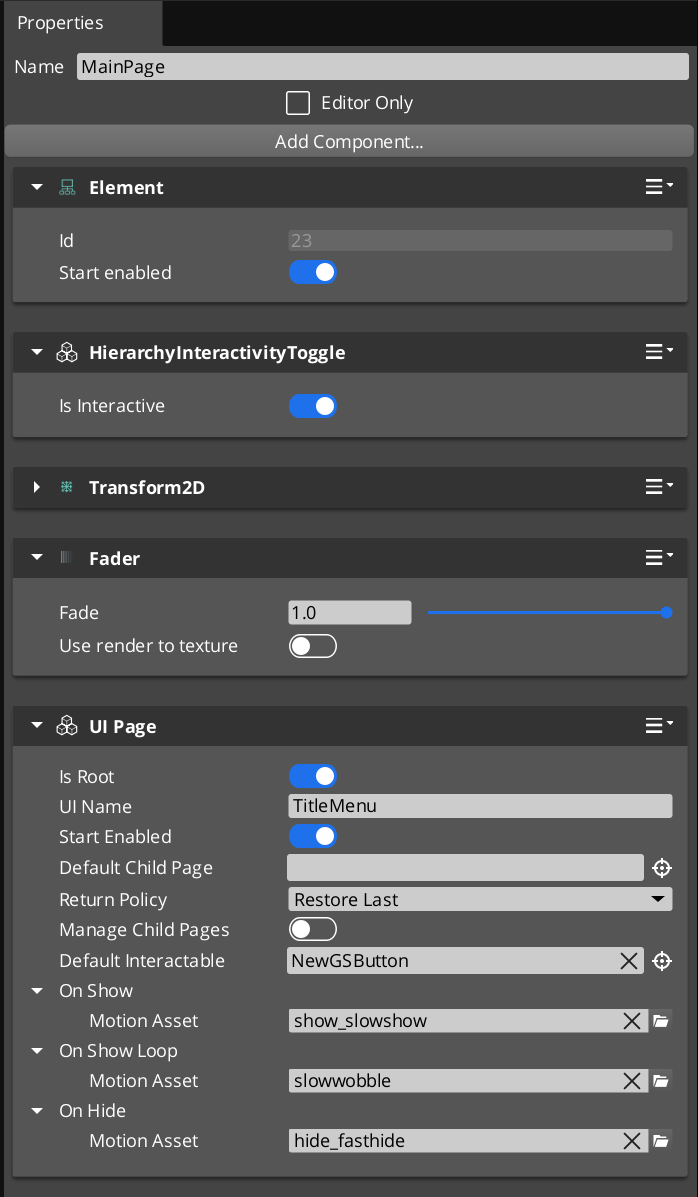

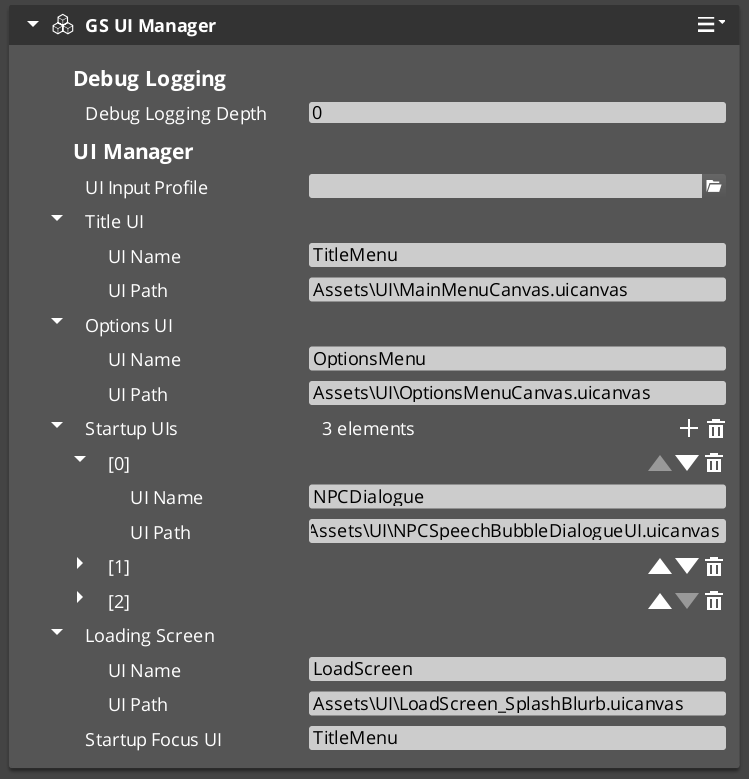

UI Manager

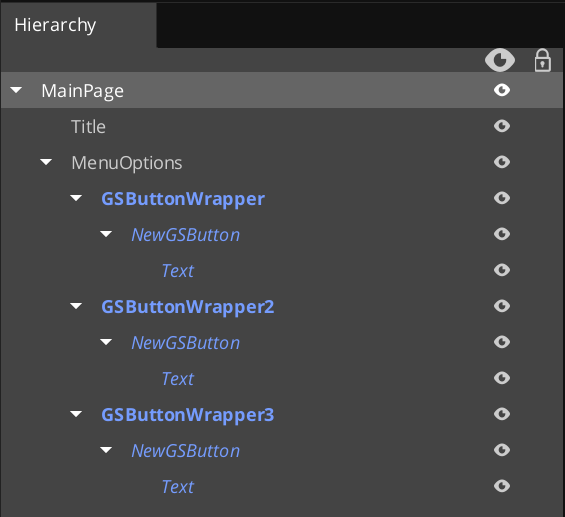

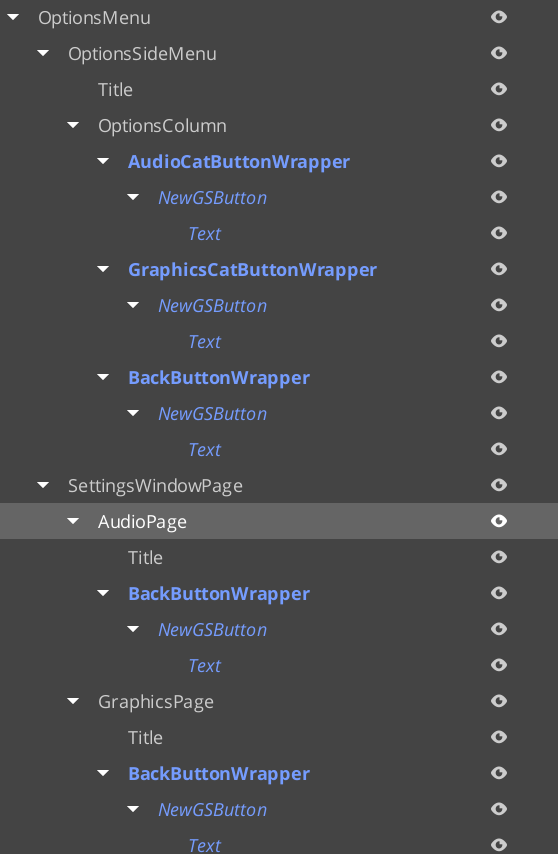

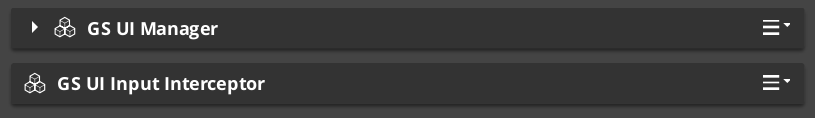

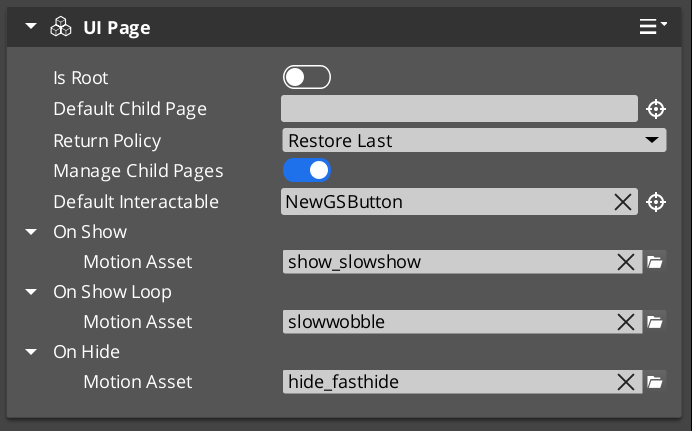

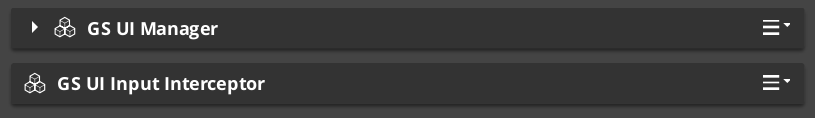

- GS_UIManagerComponent — canvas lifecycle management, focus stack, startup focus assignment

- UIManagerRequestBus

- Single-tier page navigation: Manager → Page (no hub layer)

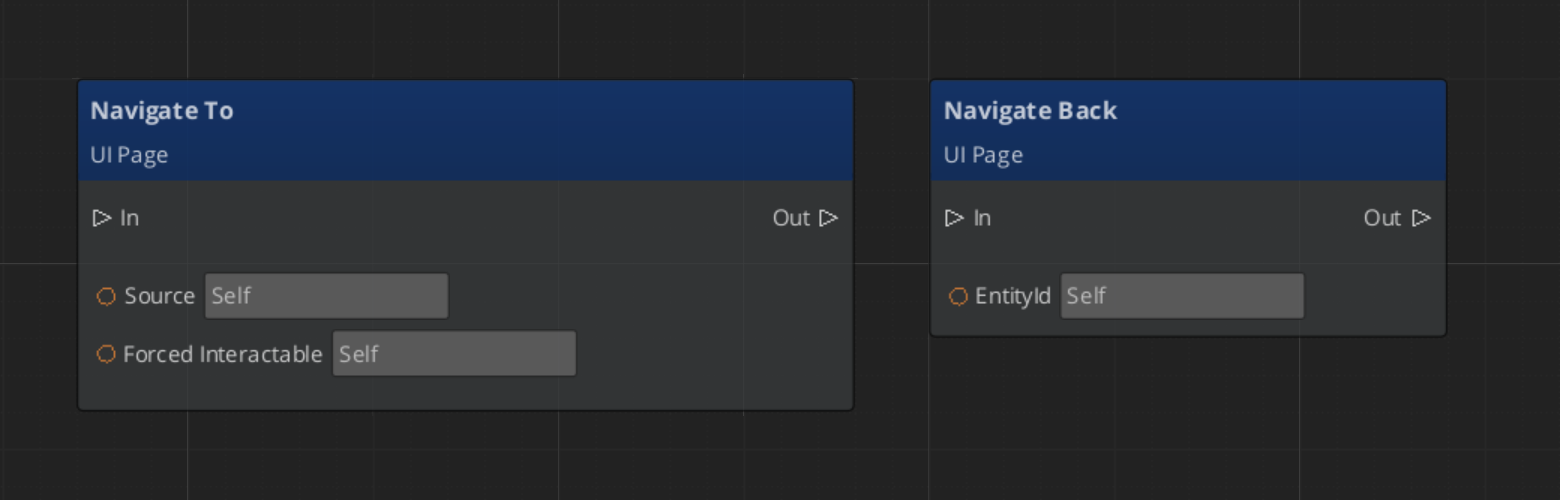

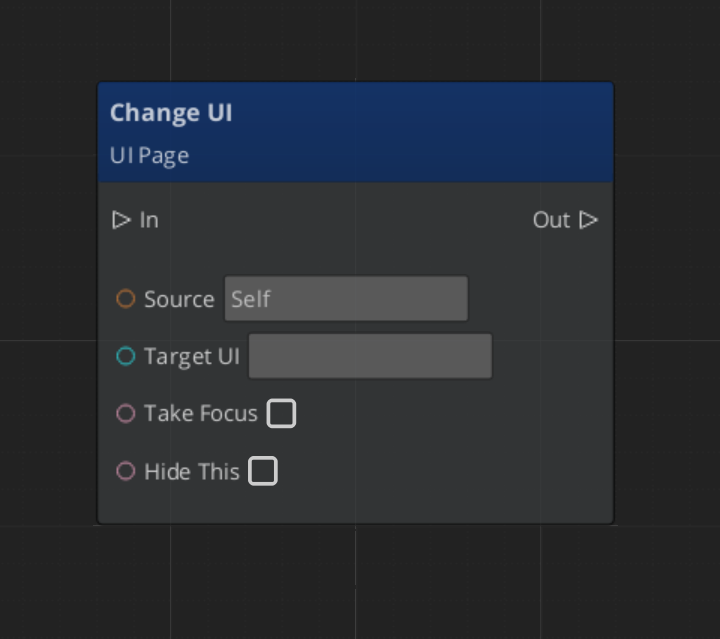

Page Navigation

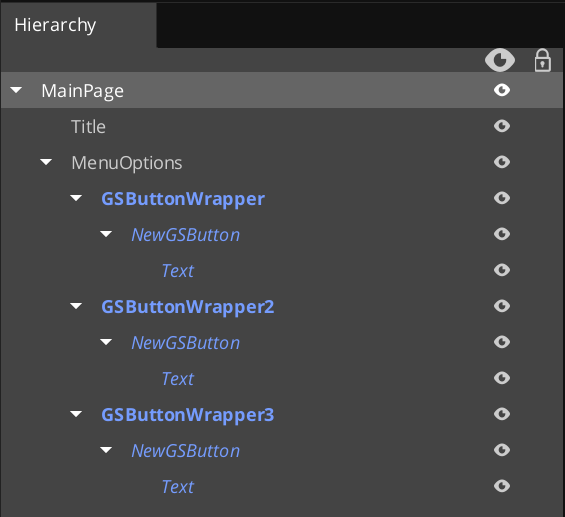

- GS_UIPageComponent — root canvas registration, nested page management

- NavigationReturnPolicy — RestoreLast or AlwaysDefault back navigation behavior

- FocusEntry — focus state data for page transitions

- Cross-canvas boundary navigation support

- Companion Component Pattern: domain-specific page logic lives on companion components that react to page bus events
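One plausible reading of the two return policies named above is sketched here. Only the policy value names RestoreLast and AlwaysDefault come from this changelog. `ReturnPolicyModel`, `PageFocusState`, `ResolveFocusOnReturn`, and the exact semantics (restore the last focused element versus always focus the page default) are assumptions for illustration, so check /docs/framework/ui/ before relying on them.

```cpp
#include <cassert>
#include <string>

// Hypothetical mirror of the two policy values named in the changelog.
enum class ReturnPolicyModel
{
    RestoreLast,
    AlwaysDefault
};

// Hypothetical per-page focus record.
struct PageFocusState
{
    std::string defaultElement; // element focused when the page has no history
    std::string lastFocused;    // empty until the user has focused something
    ReturnPolicyModel policy;
};

// Decides which element receives focus when navigation returns to this page.
std::string ResolveFocusOnReturn(const PageFocusState& page)
{
    assert(!page.defaultElement.empty());
    if (page.policy == ReturnPolicyModel::RestoreLast && !page.lastFocused.empty())
    {
        return page.lastFocused;
    }
    return page.defaultElement;
}
```

Under this reading, RestoreLast falls back to the default element on a page the user has not interacted with yet.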

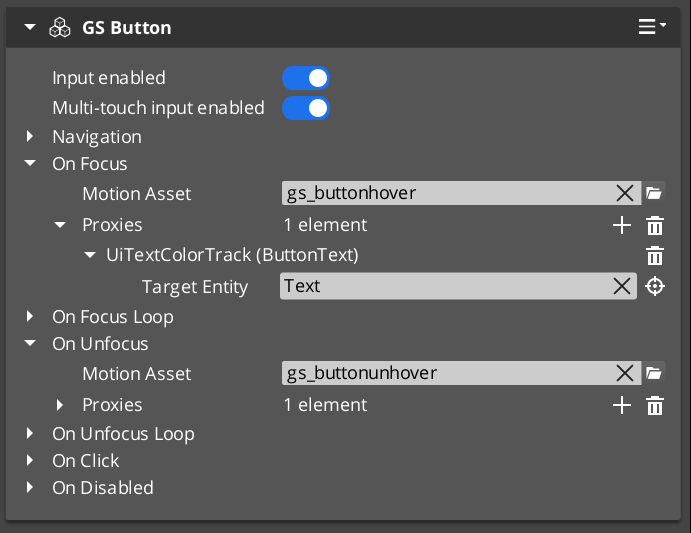

UI Interaction

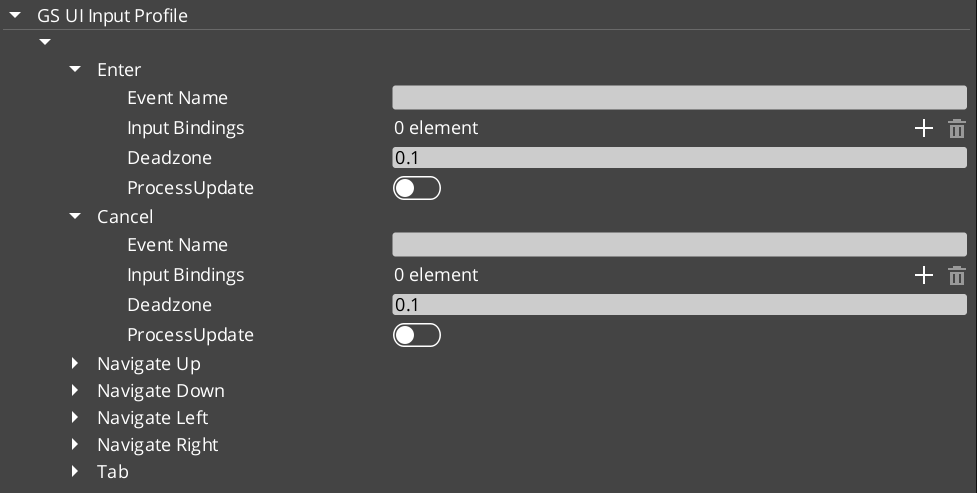

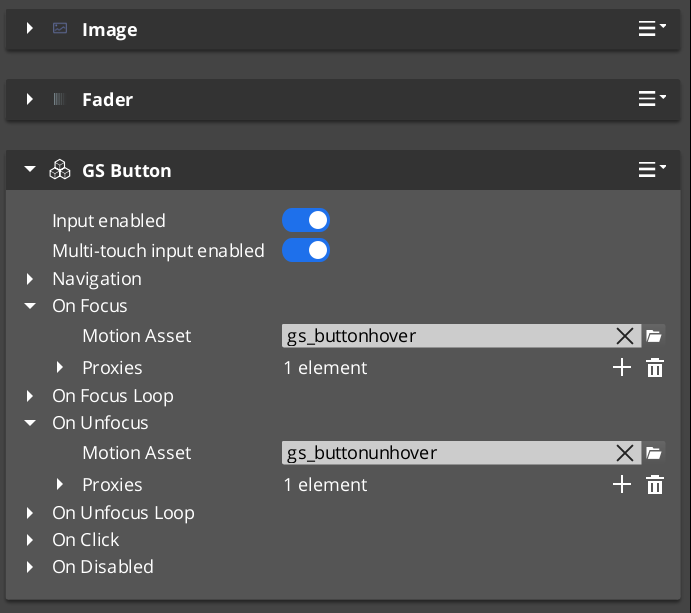

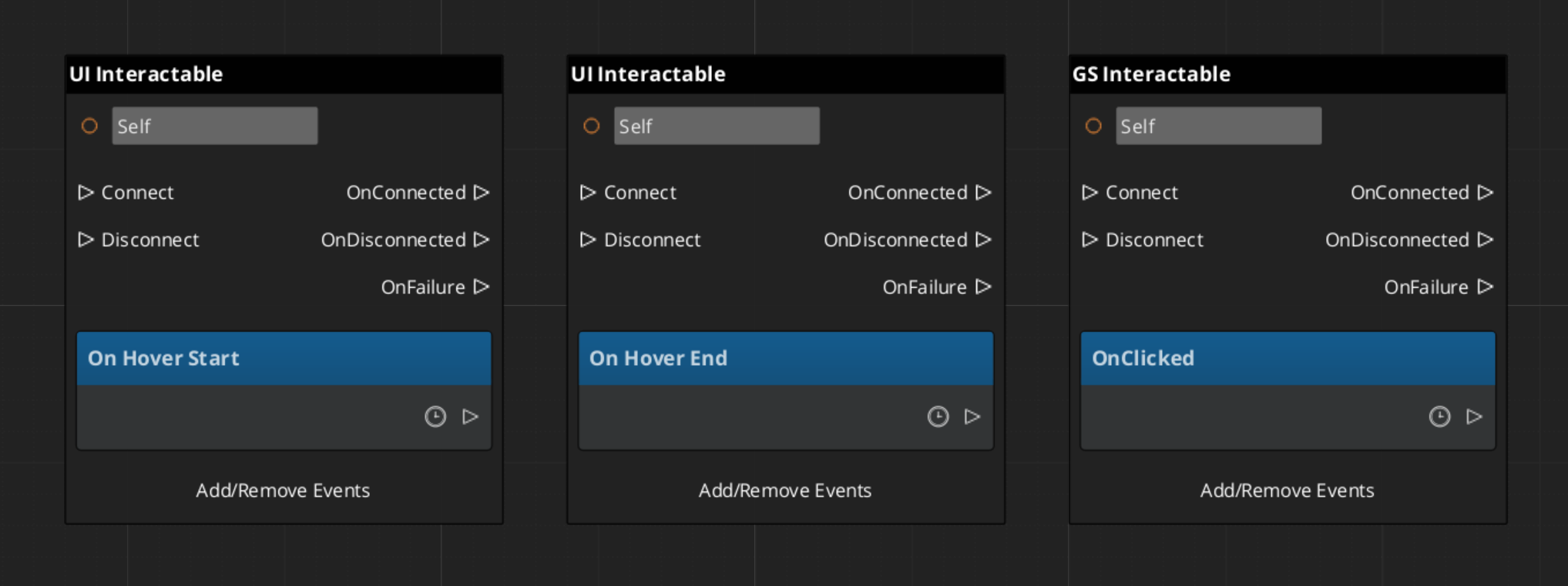

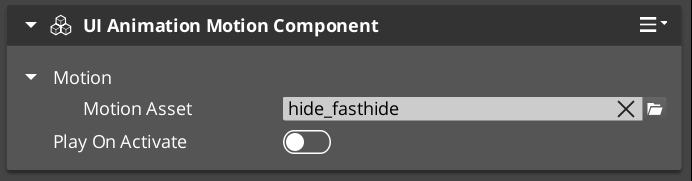

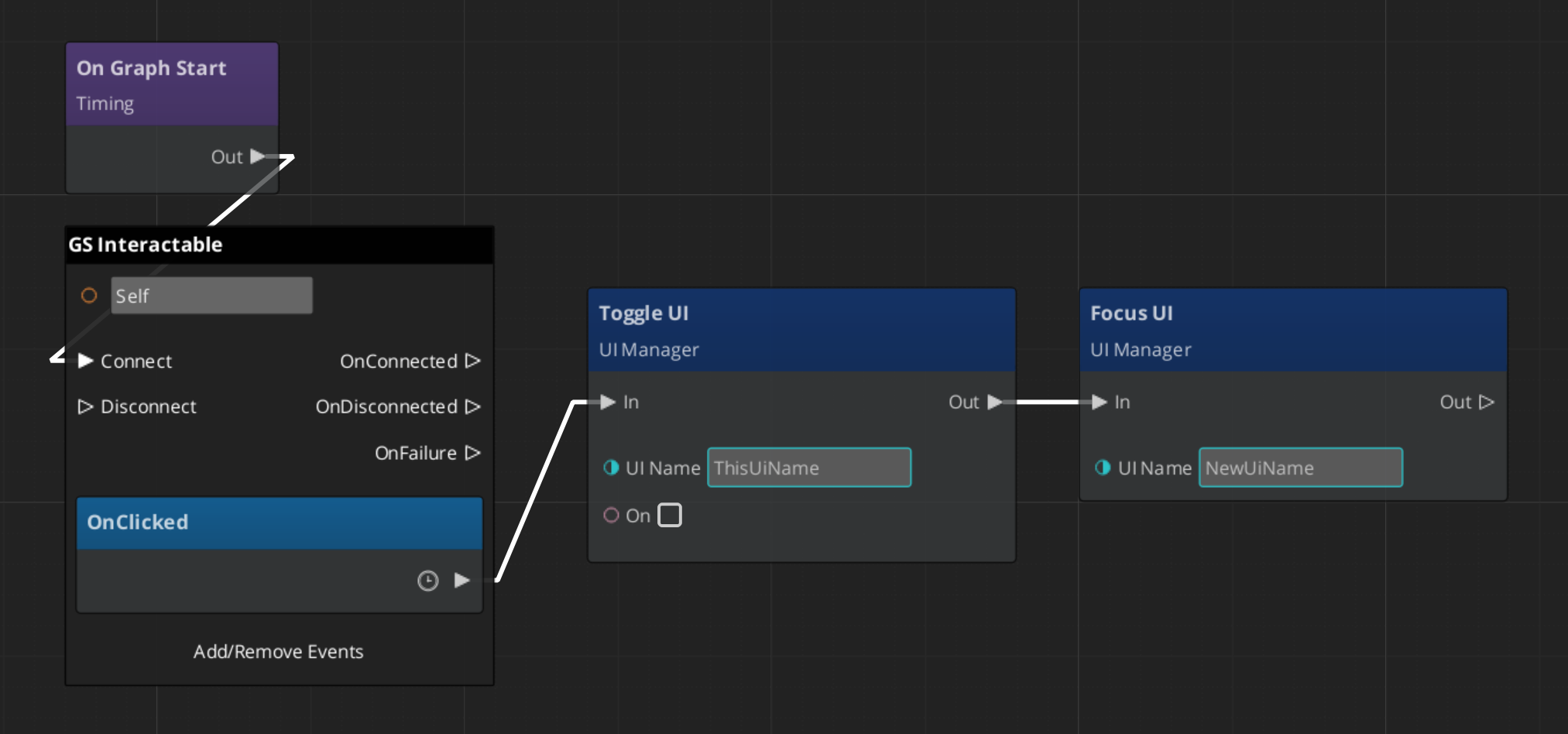

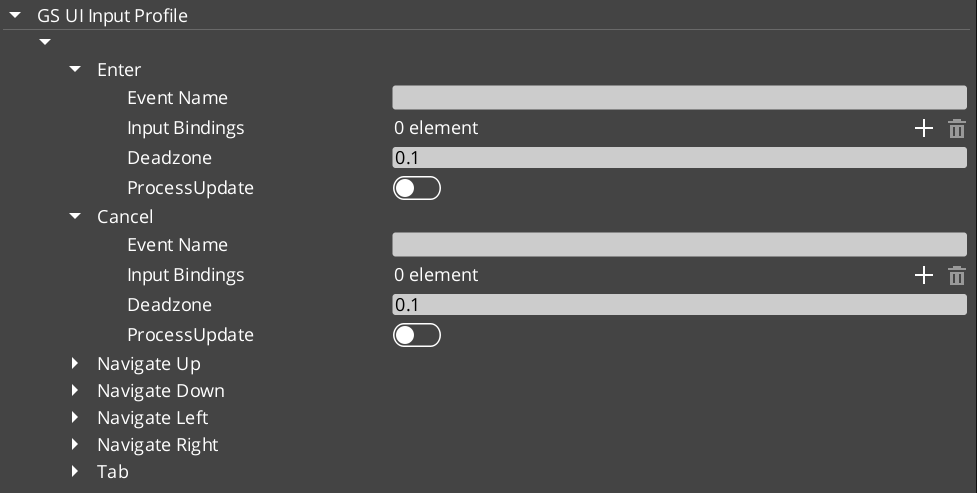

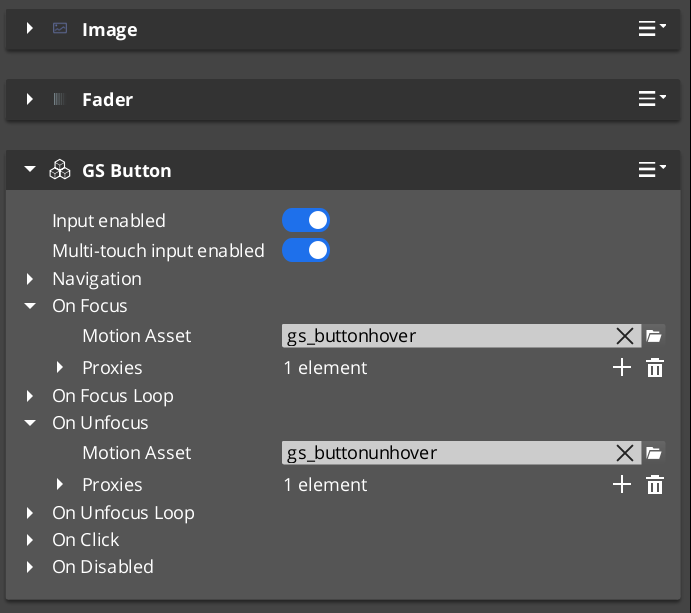

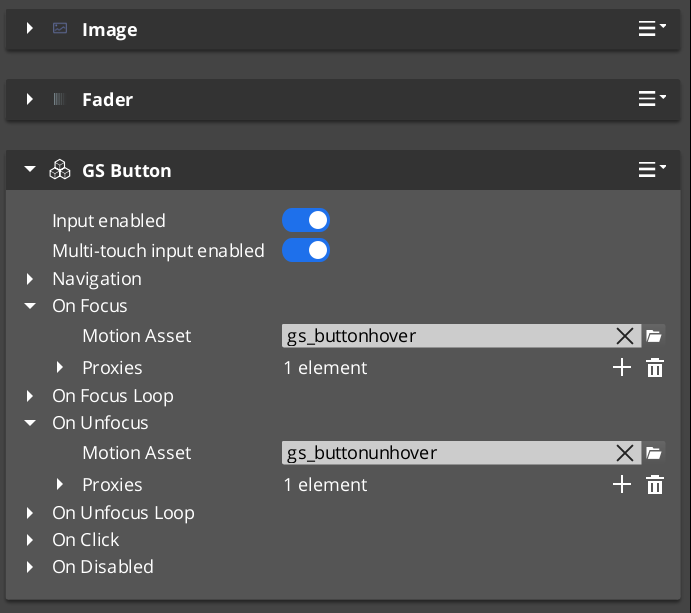

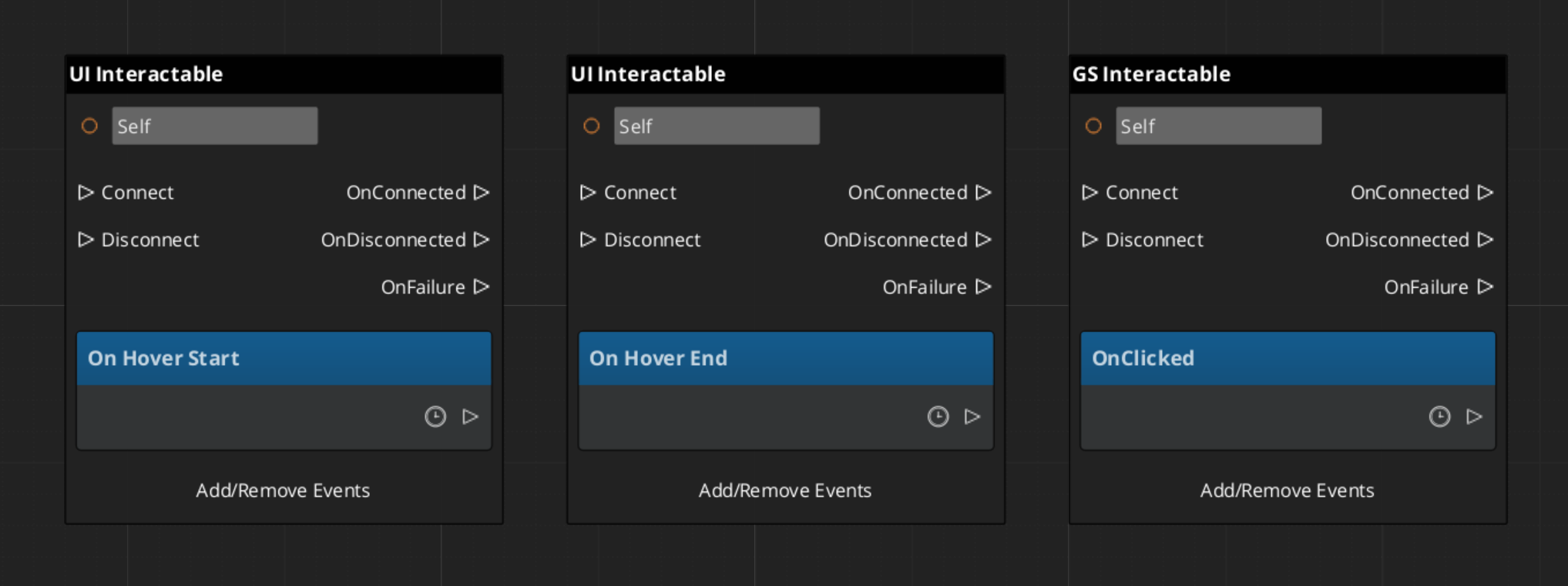

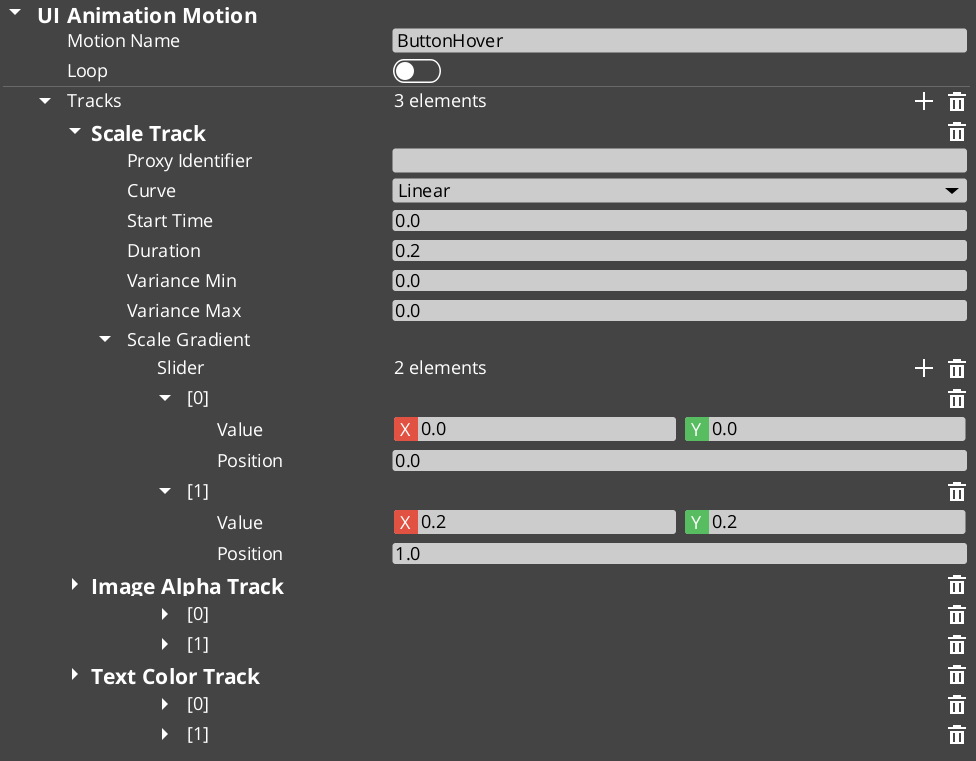

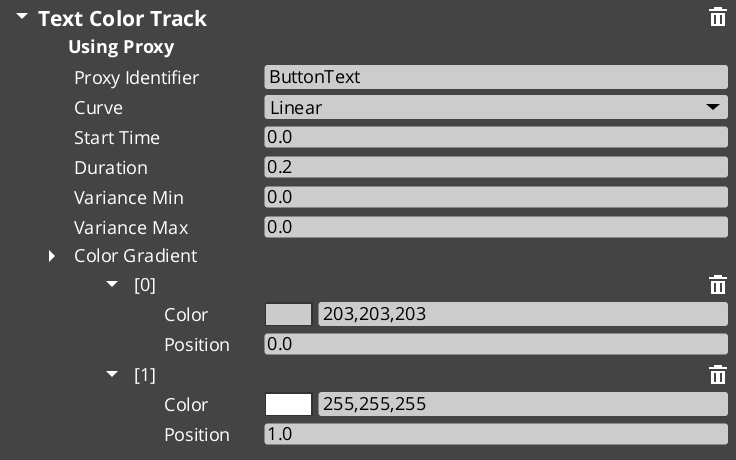

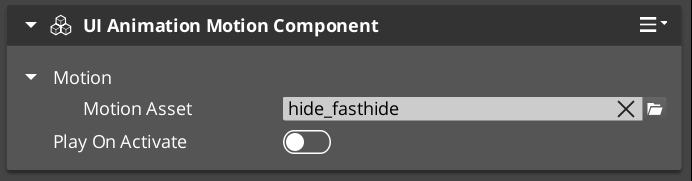

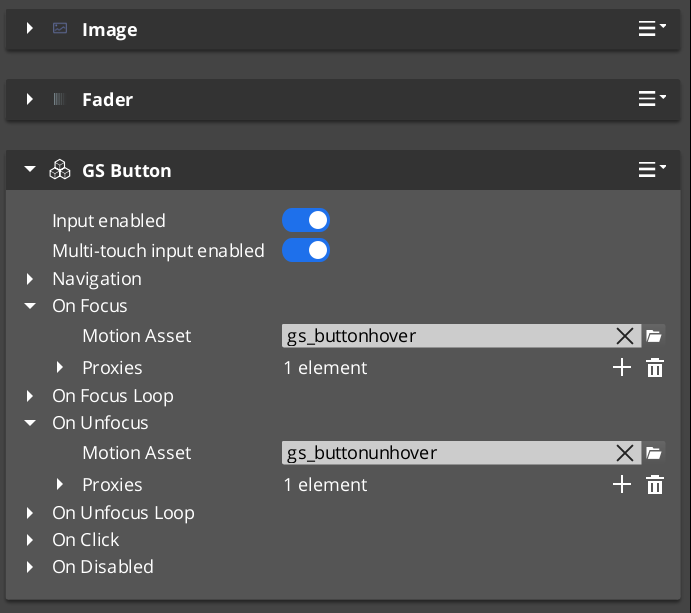

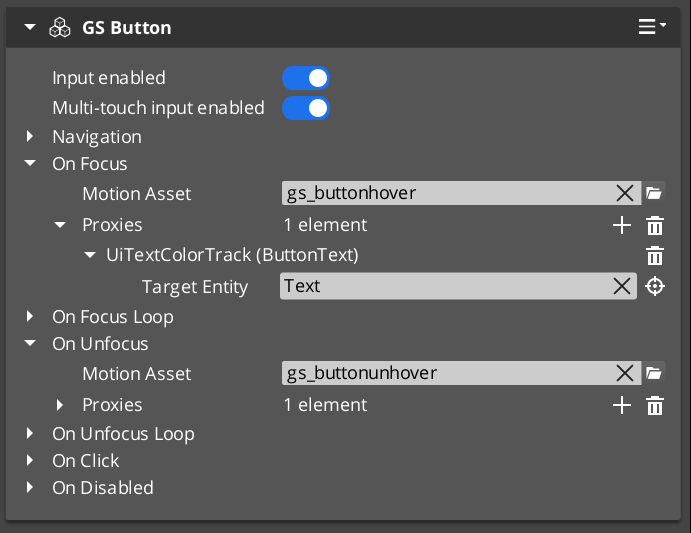

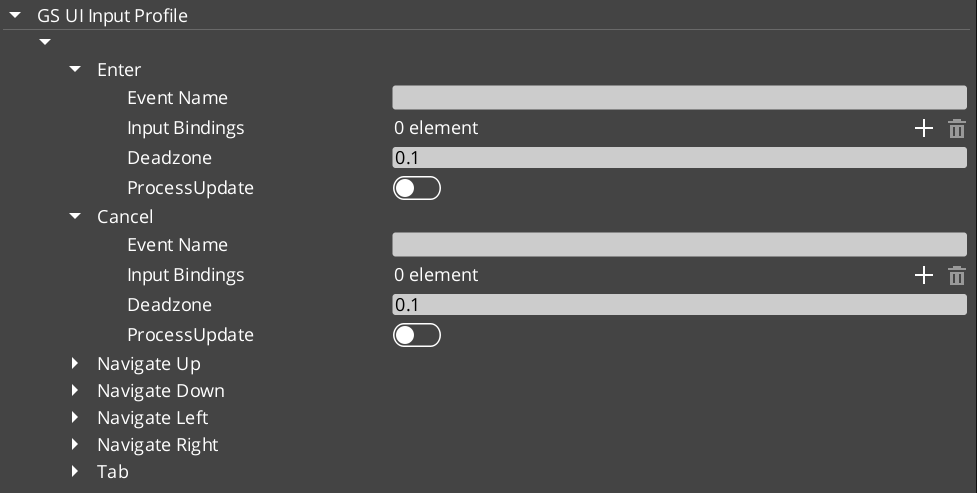

- GS_ButtonComponent — enhanced button with hover and select UiAnimationMotion support

- GS_UIInputInterceptorComponent — input capture and blocking for UI canvases

- GS_UIInputProfile — input mapping configuration for UI contexts

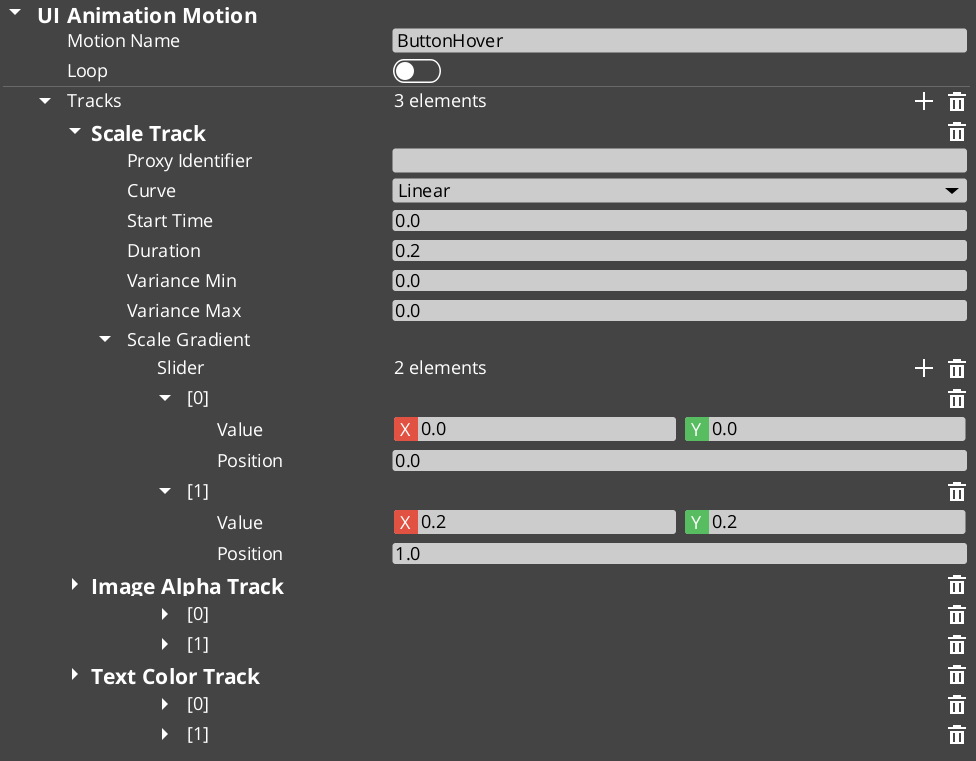

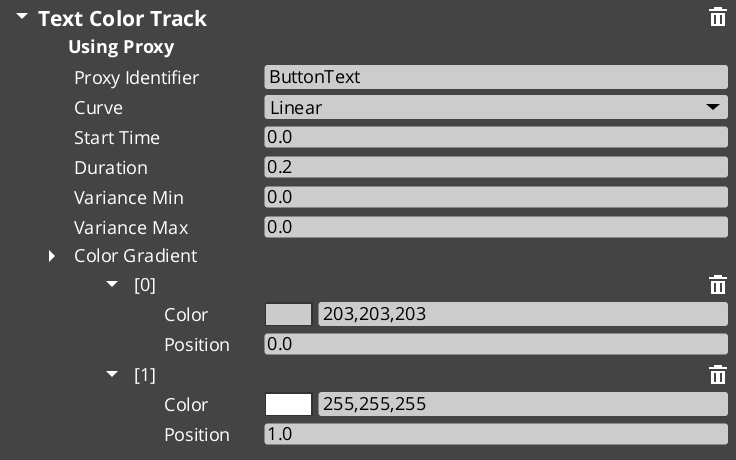

UI Animation

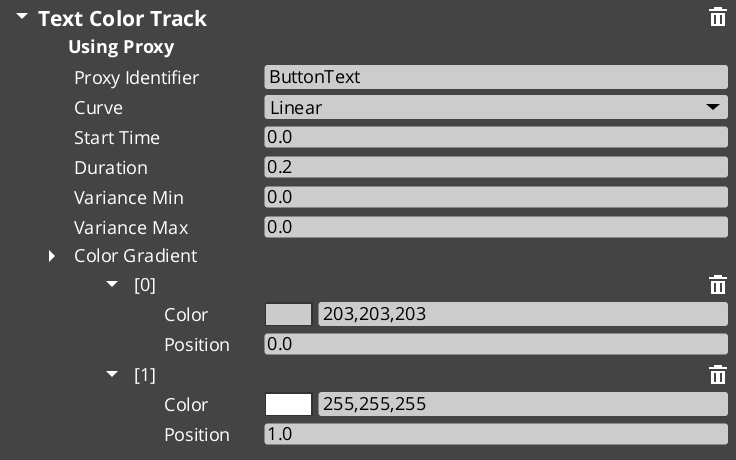

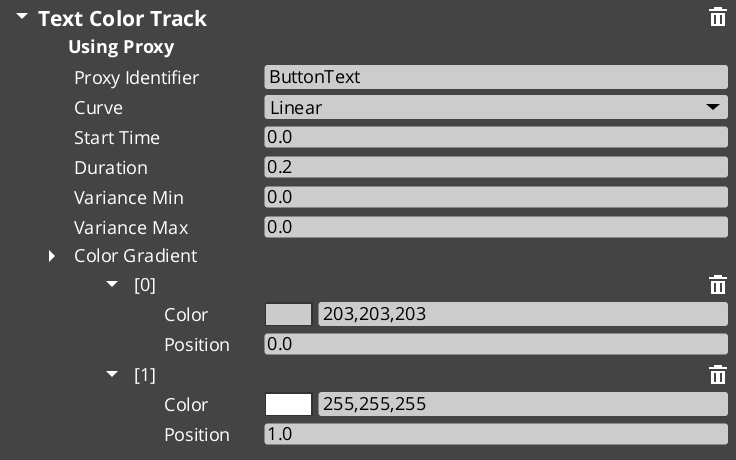

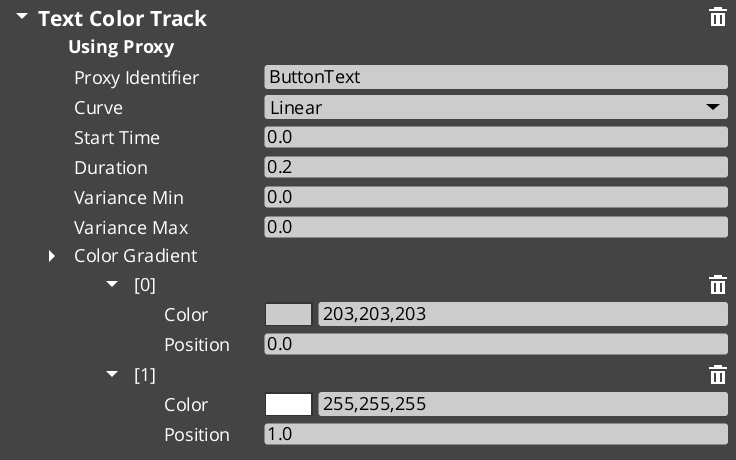

- UiMotionTrack (domain base) — extends GS_Core’s GS_Motion system

- 8 concrete tracks: UiPositionTrack, UiScaleTrack, UiRotationTrack, UiElementAlphaTrack, UiImageAlphaTrack, UiImageColorTrack, UiTextColorTrack, UiTextSizeTrack

- UiAnimationMotionAsset — authored animation asset

- UiAnimationMotion — runtime instance wrapper

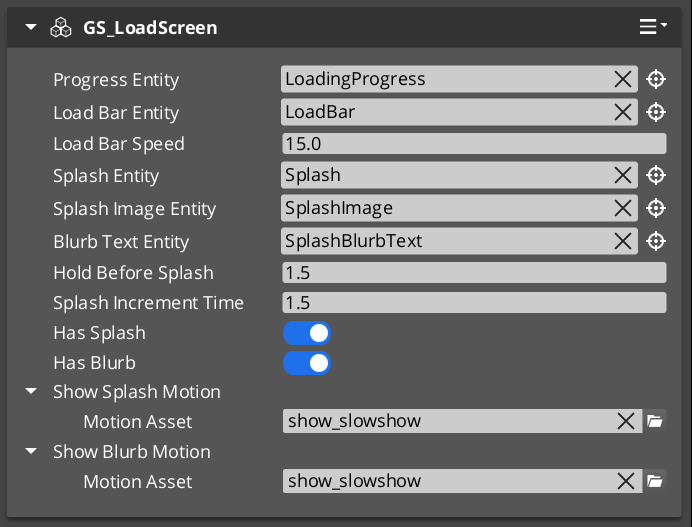

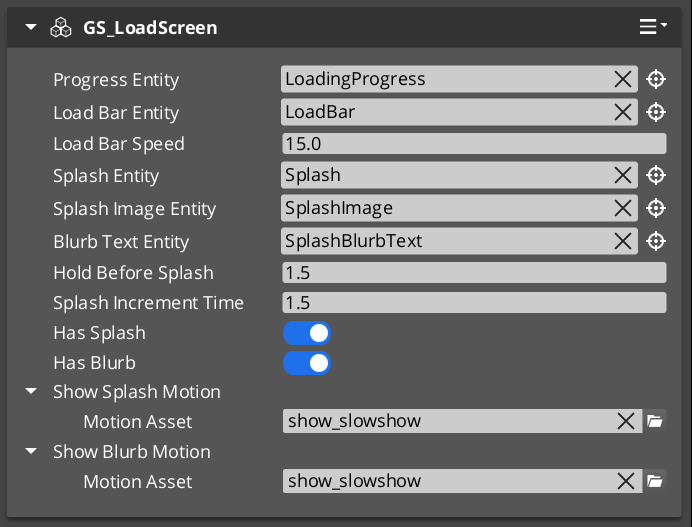

Load Screen

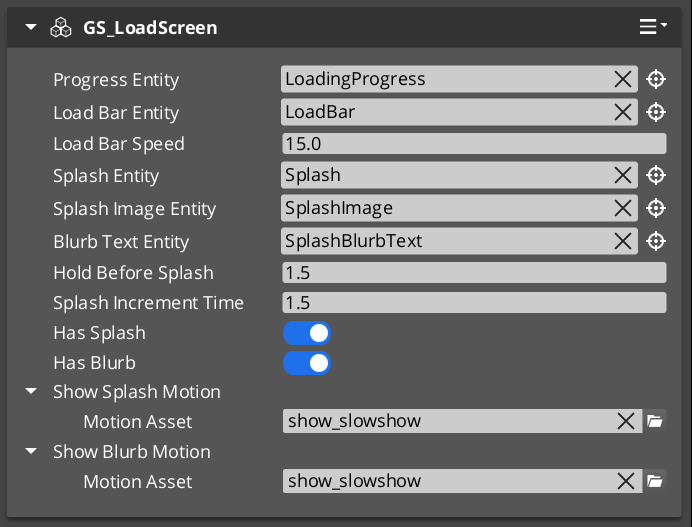

GS_LoadScreenComponent — manages load screen display during level transitions

PauseMenuComponent — pause state management and pause menu page coordination

Removed

- GS_UIWindowComponent — removed entirely

- GS_UIHubComponent / GS_UIHubBus — deprecated legacy layer

2.1.10 - Unit Change Log

GS_Unit version changelog.

Logs

Unit 0.5.0

First official base release of GS_Unit.

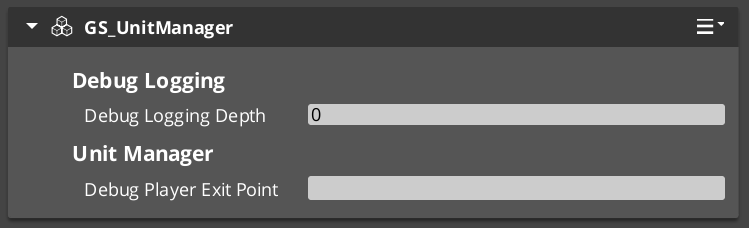

Unit Manager

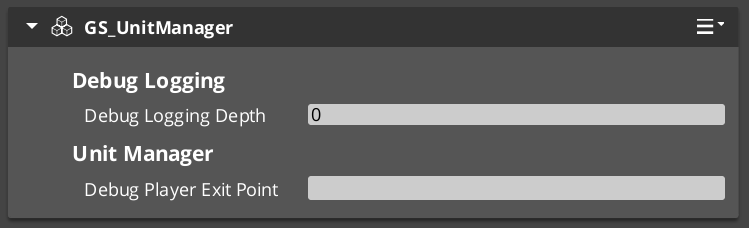

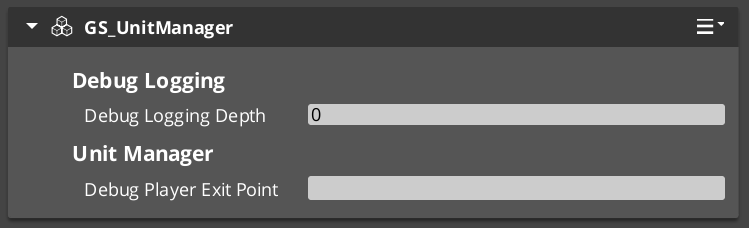

- GS_UnitManagerComponent — unit registration and lifecycle tracking

- UnitManagerRequestBus

Unit Component

- GS_UnitComponent — unit identity and state component

- UnitRequestBus

Controllers

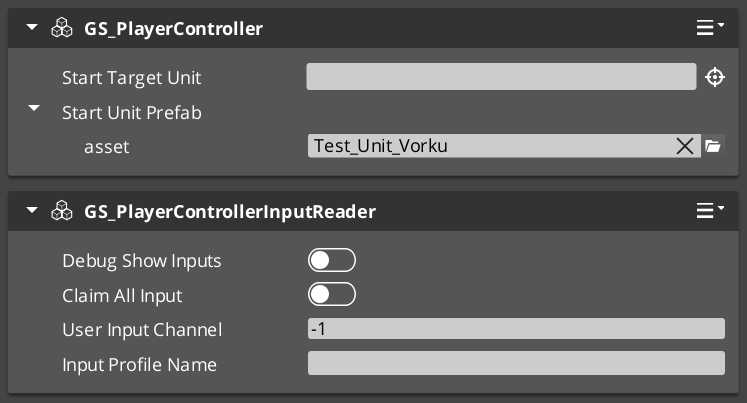

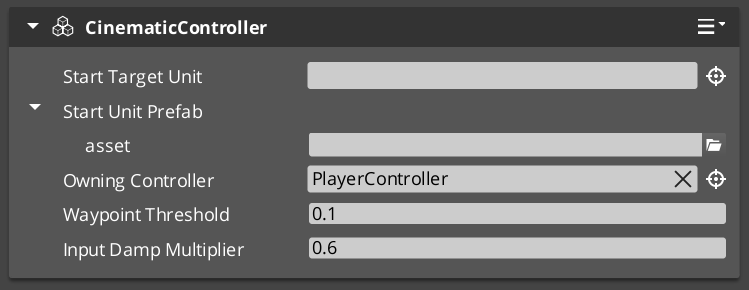

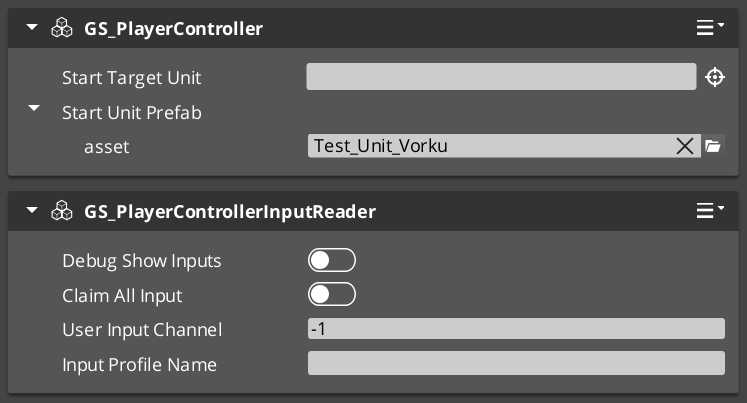

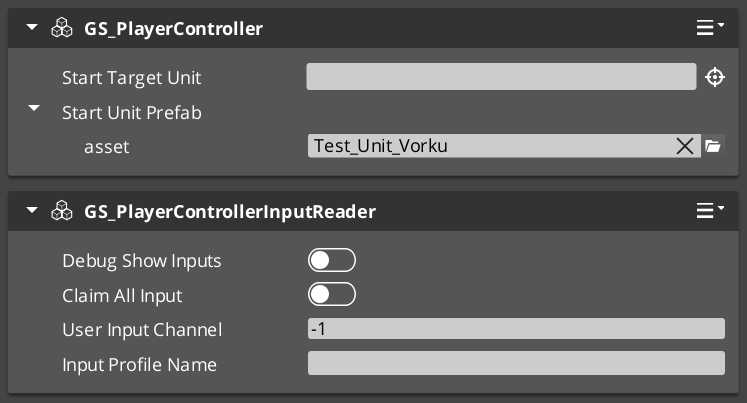

- GS_UnitControllerComponent — abstract controller base, possess/release pattern

- GS_PlayerControllerComponent — human player possession

- GS_AIControllerComponent — AI-driven possession

- GS_PlayerControllerInputReaderComponent — reads hardware input and routes to the possessed unit

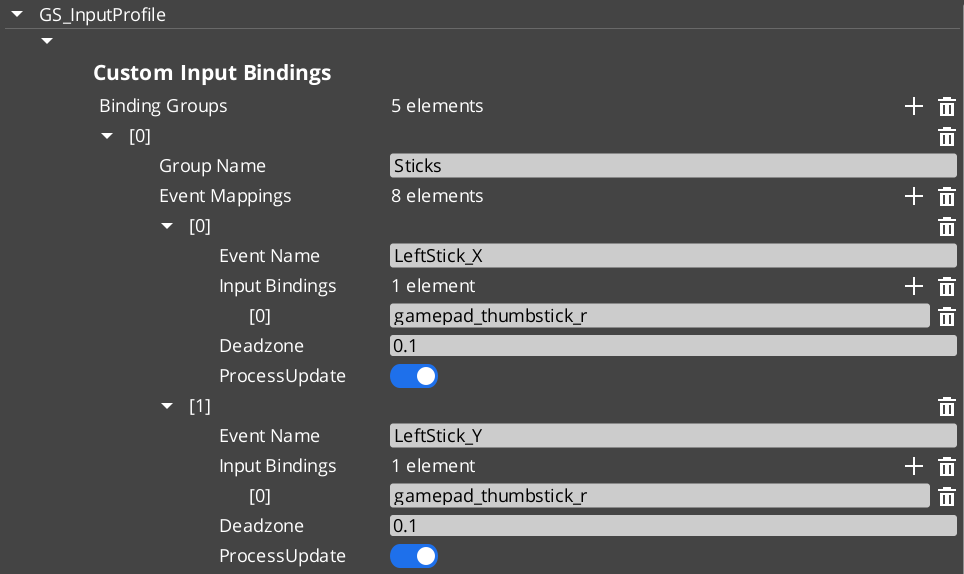

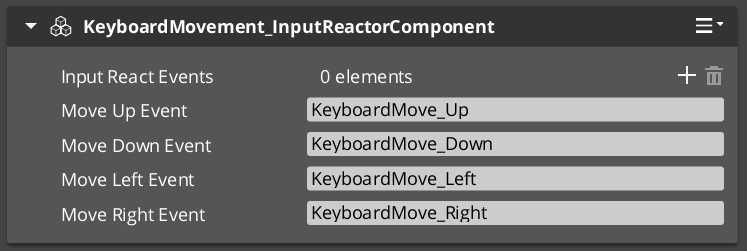

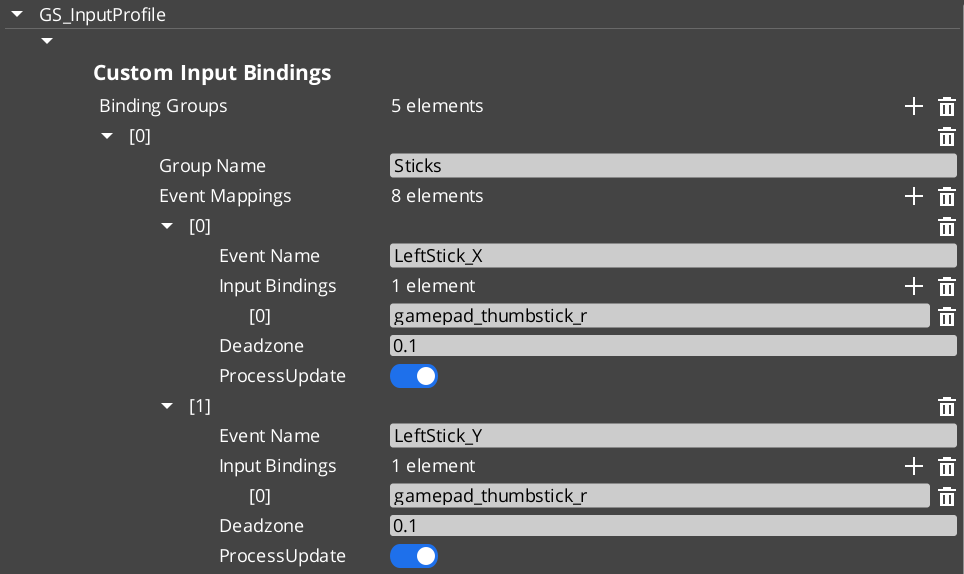

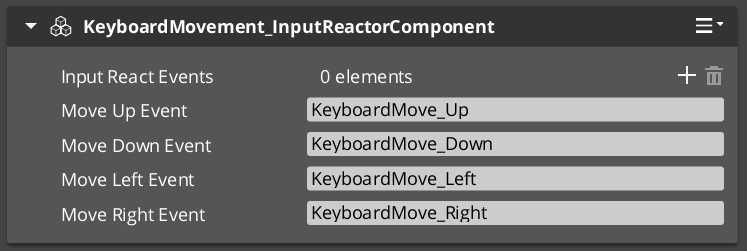

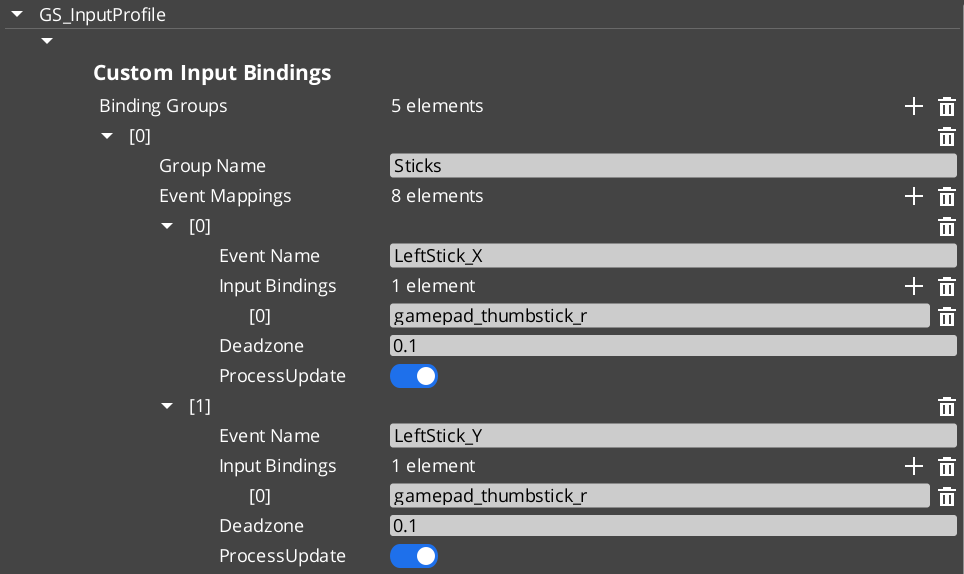

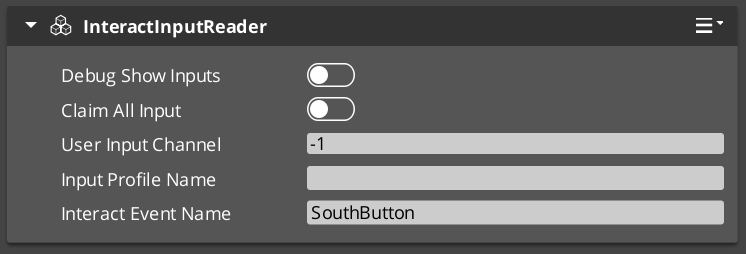

Input Data

- 3-stage architecture: controller entity reads hardware → routes to InputDataComponent on the unit → reactor components act on the structured state

- InputDataRequestBus / InputDataNotificationBus

- GS_InputReactorComponent — reacts to binary input states (button/action)

- GS_InputAxisReactorComponent — reacts to axis input values (sticks, triggers)
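The three stages listed above can be modelled as a controller that forwards hardware input to a data holder on the possessed unit, which in turn notifies reactors. This is a standalone sketch: `InputDataModel`, `ControllerModel`, and their methods are hypothetical and do not mirror InputDataRequestBus or the GS_Unit component interfaces.

```cpp
#include <cassert>
#include <functional>
#include <map>
#include <string>
#include <utility>
#include <vector>

// Stage 2 (hypothetical): structured input state that lives on the unit.
class InputDataModel
{
public:
    using Reactor = std::function<void(const std::string& name, float value)>;

    // Stage 3 subscribes here.
    void AddReactor(Reactor reactor)
    {
        assert(static_cast<bool>(reactor));
        m_reactors.push_back(std::move(reactor));
    }

    // Stores the value and notifies reactors only when it actually changes.
    void SetValue(const std::string& name, float value)
    {
        const auto it = m_values.find(name);
        if (it != m_values.end() && it->second == value)
        {
            return;
        }
        m_values[name] = value;
        for (const auto& reactor : m_reactors)
        {
            reactor(name, value);
        }
    }

    // Unknown inputs read as 0.
    float GetValue(const std::string& name) const
    {
        const auto it = m_values.find(name);
        return it == m_values.end() ? 0.0f : it->second;
    }

private:
    std::map<std::string, float> m_values;
    std::vector<Reactor> m_reactors;
};

// Stage 1 (hypothetical): the controller reads hardware and routes to the possessed unit.
class ControllerModel
{
public:
    void Possess(InputDataModel* unit) { m_possessed = unit; }
    void Release() { m_possessed = nullptr; }

    void OnHardwareInput(const std::string& name, float value)
    {
        if (m_possessed != nullptr)
        {
            m_possessed->SetValue(name, value); // input with no possessed unit goes nowhere
        }
    }

private:
    InputDataModel* m_possessed = nullptr;
};
```

Keeping the structured state on the unit rather than the controller means reactors do not need to know whether a player or an AI controller produced the input.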

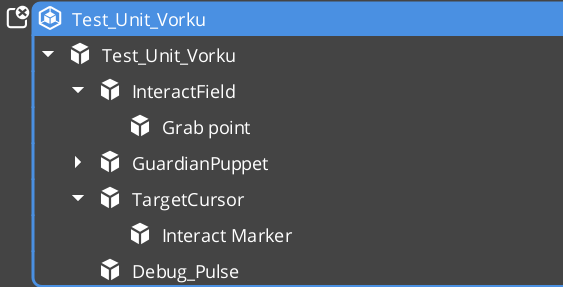

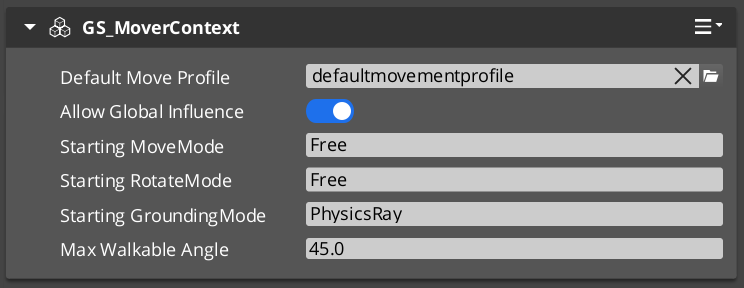

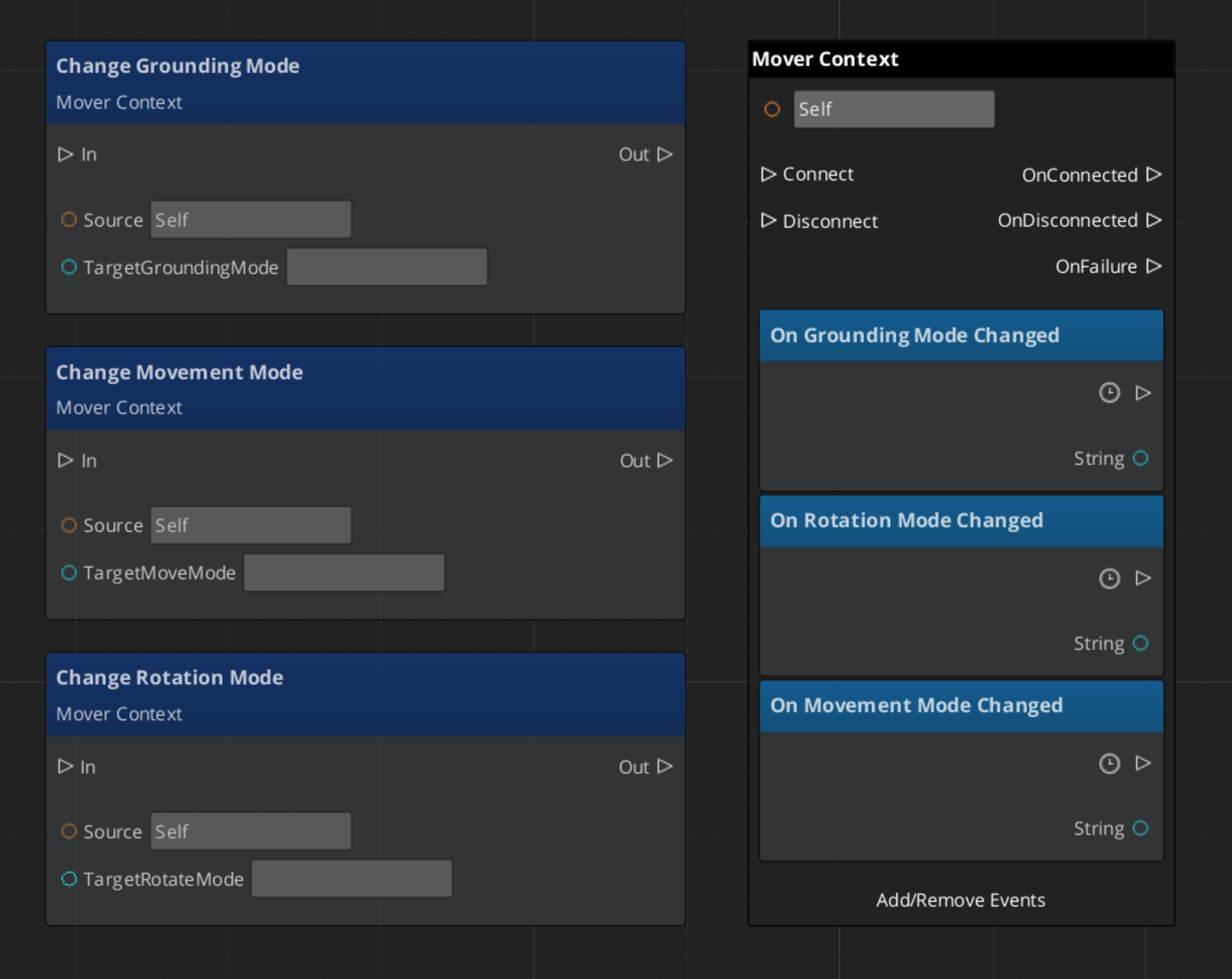

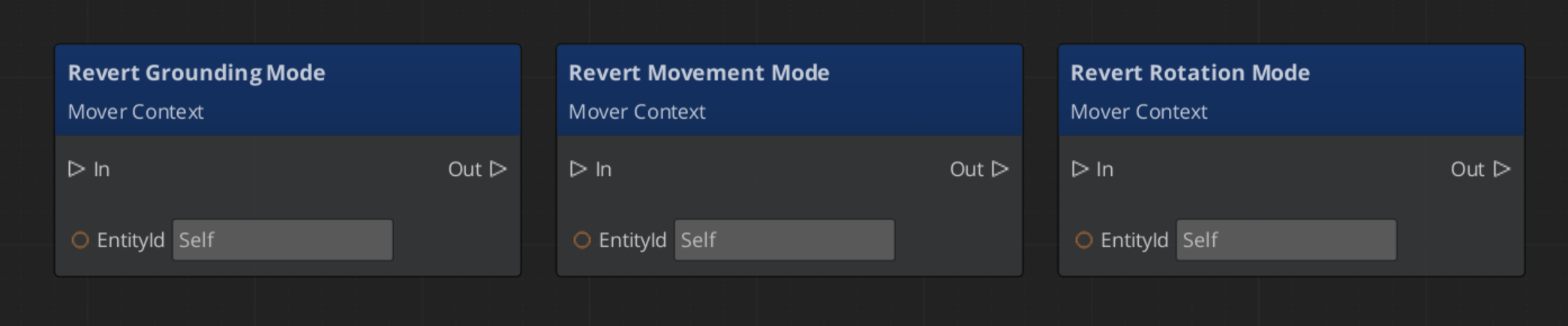

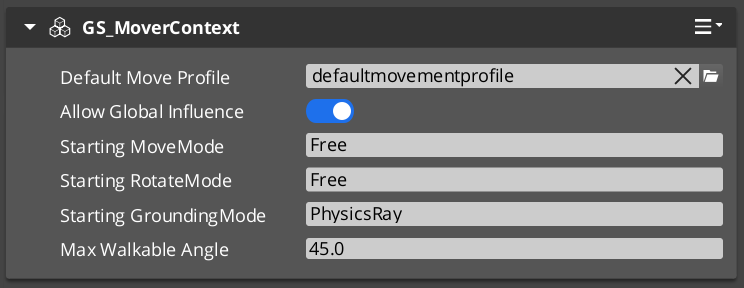

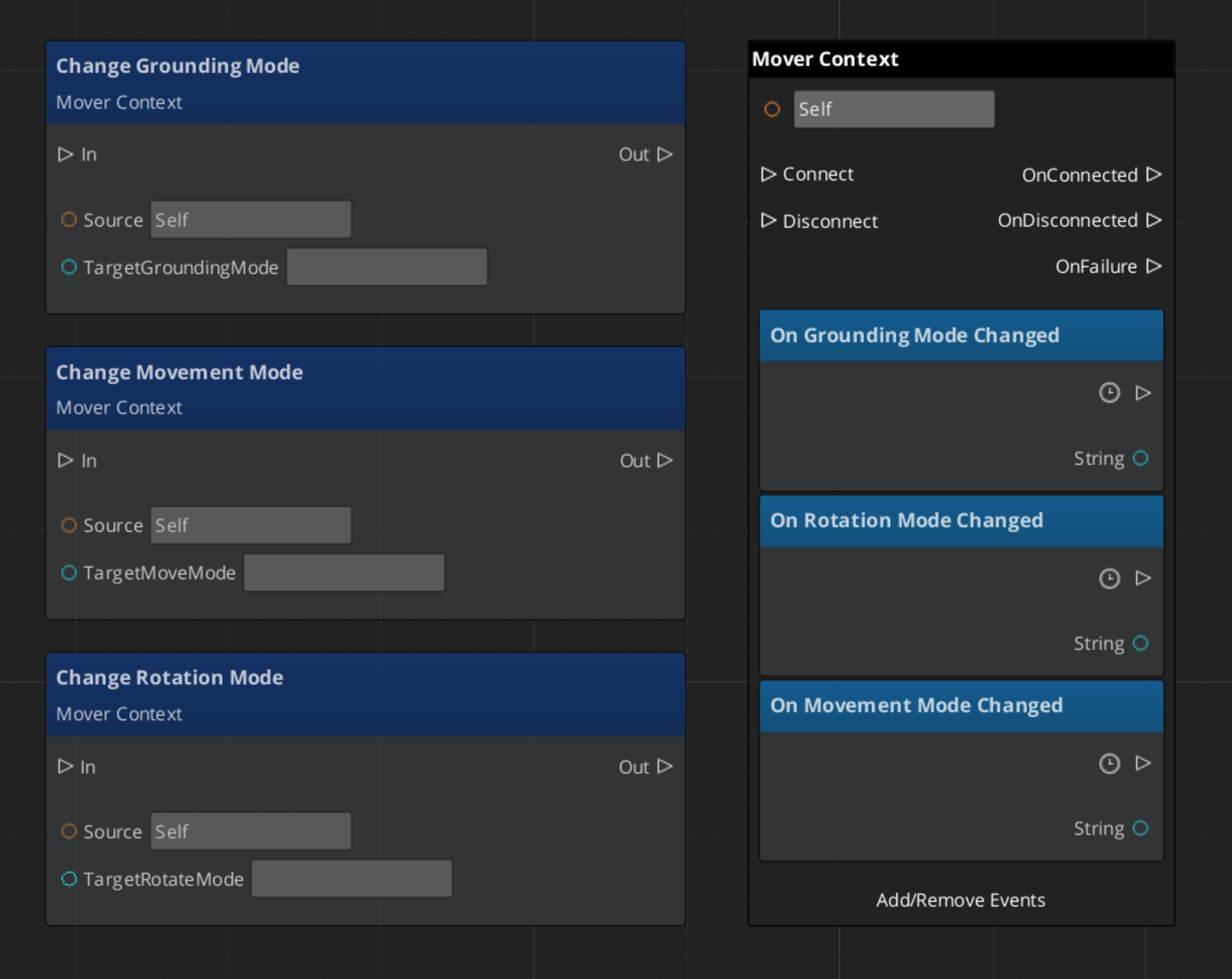

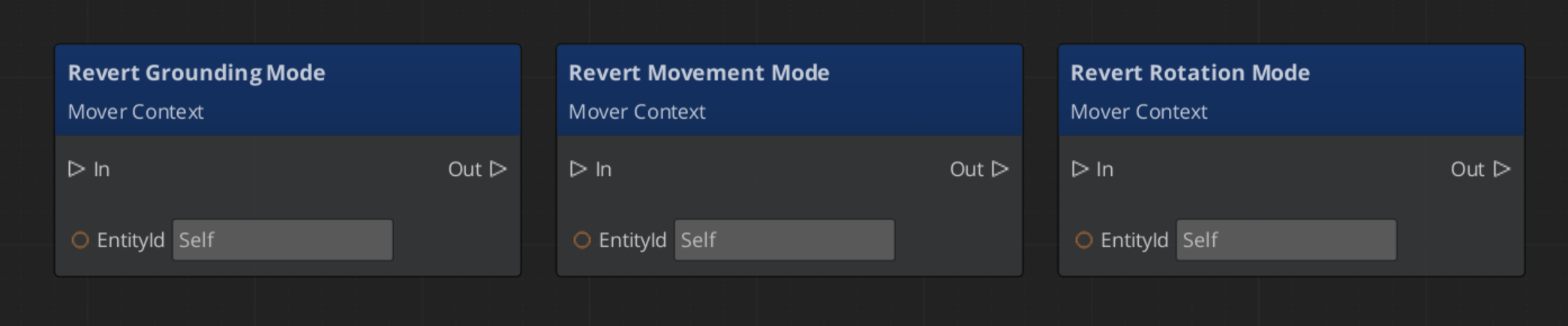

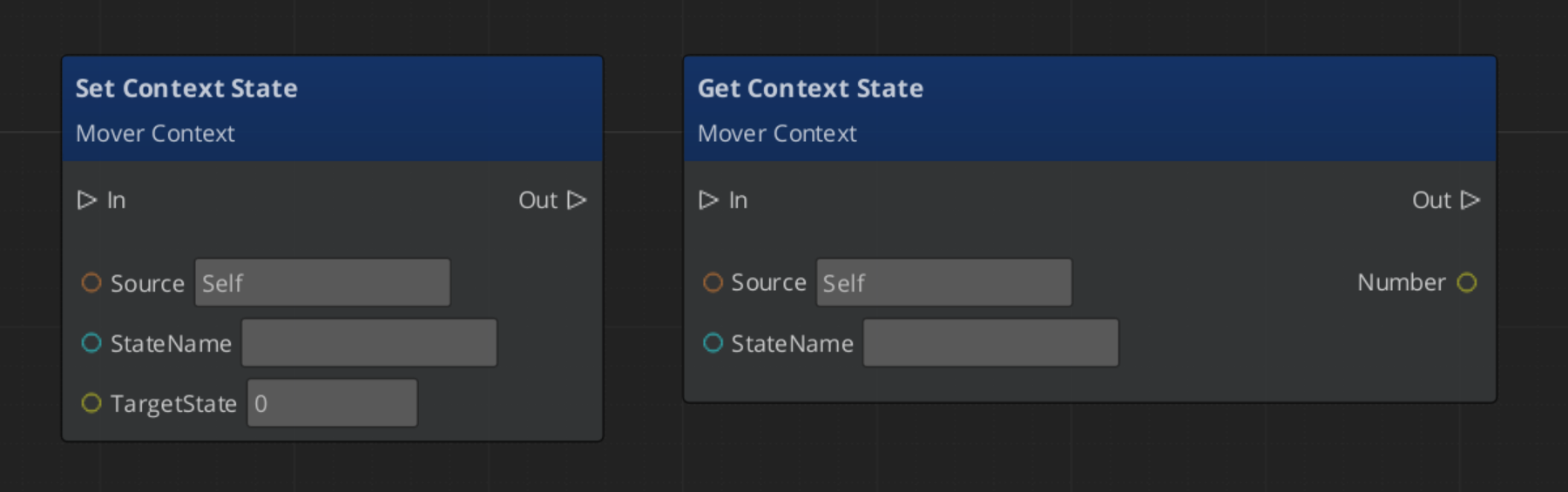

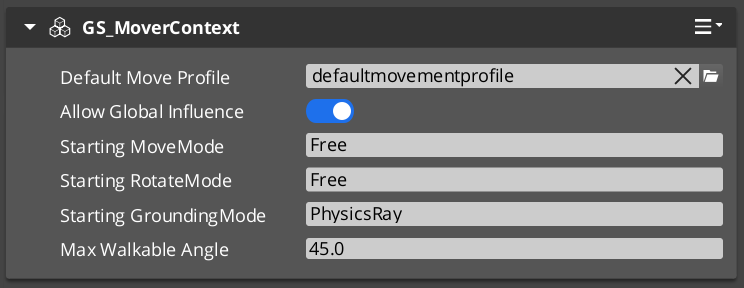

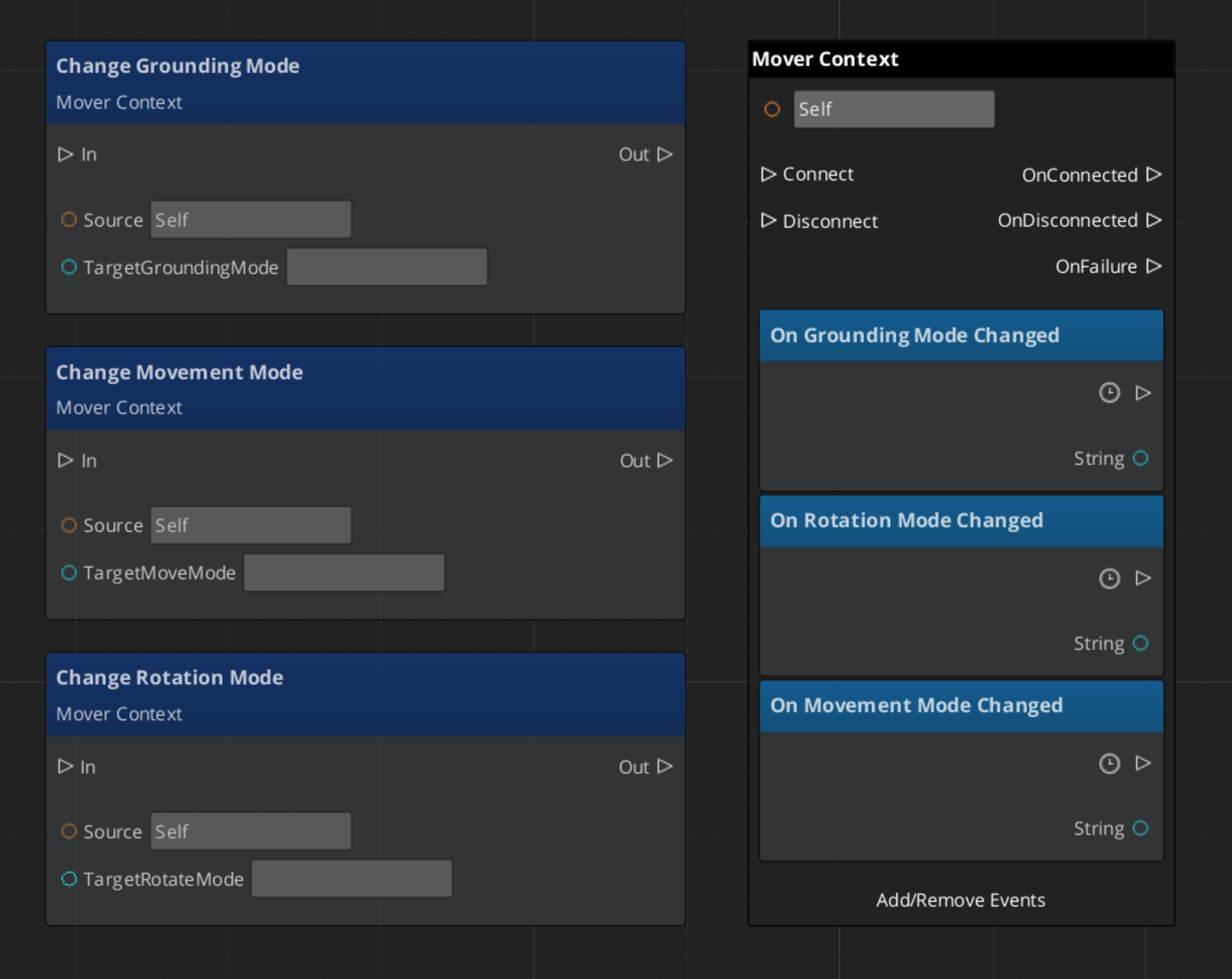

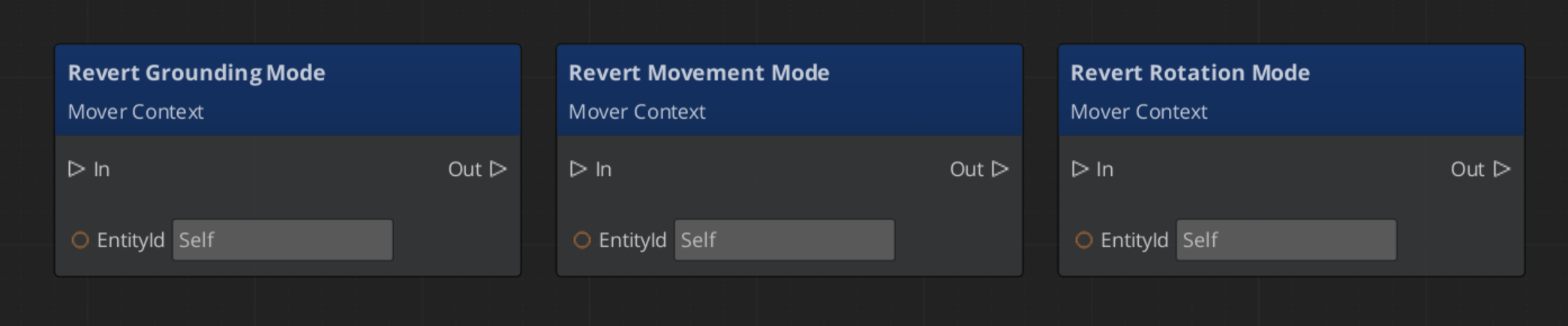

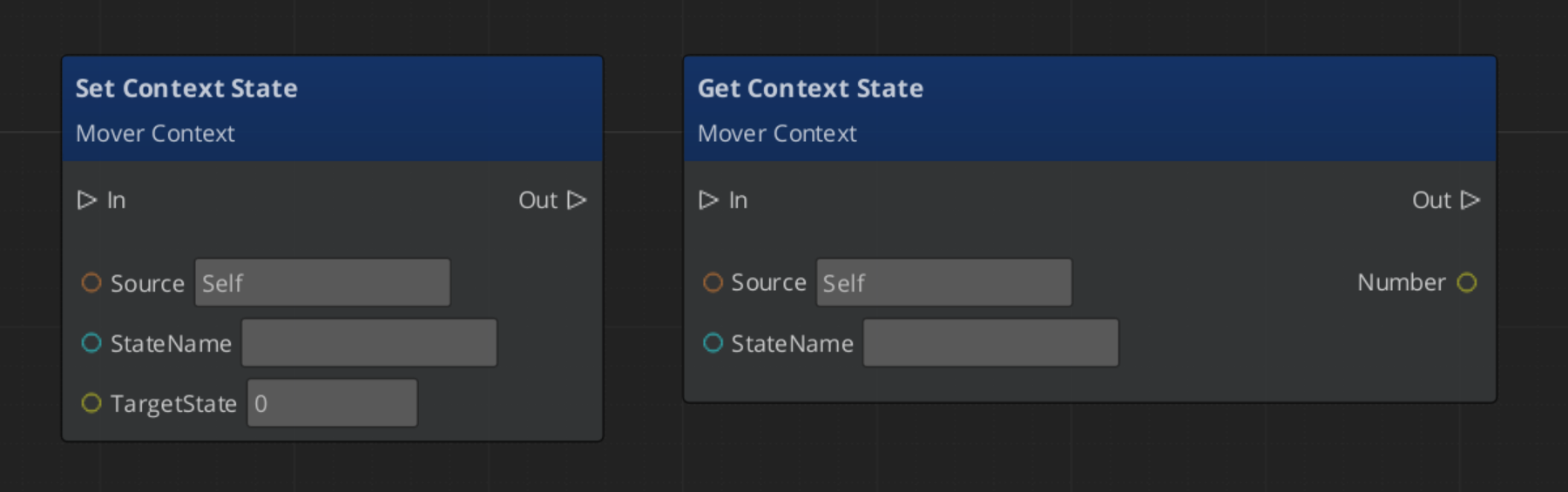

MoverContext

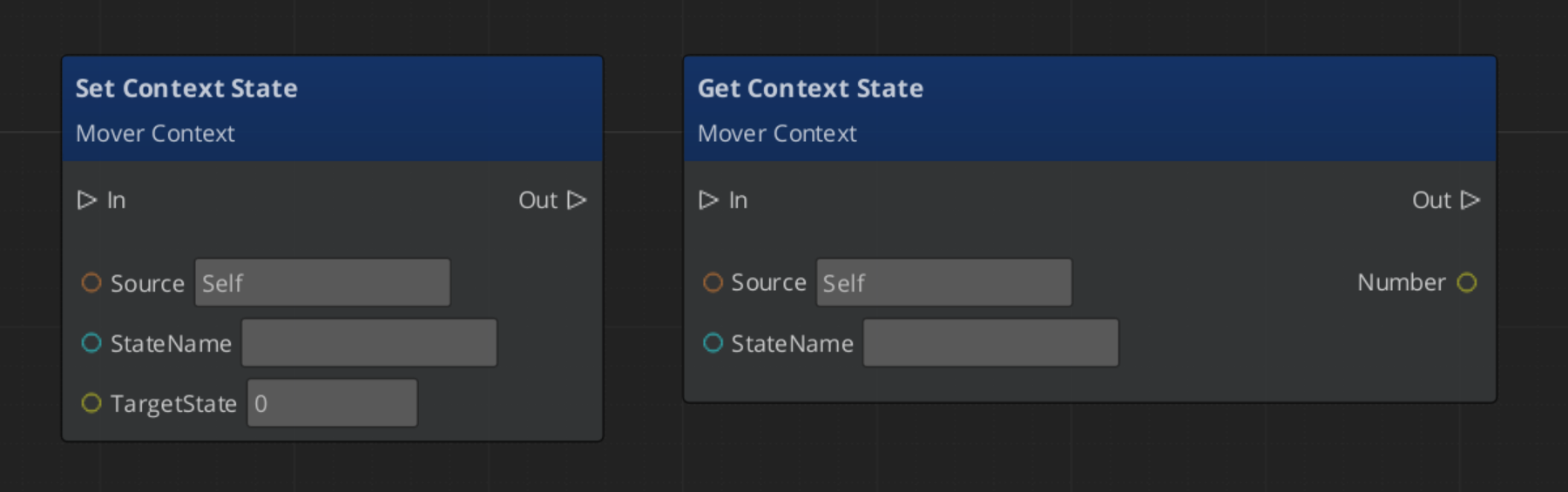

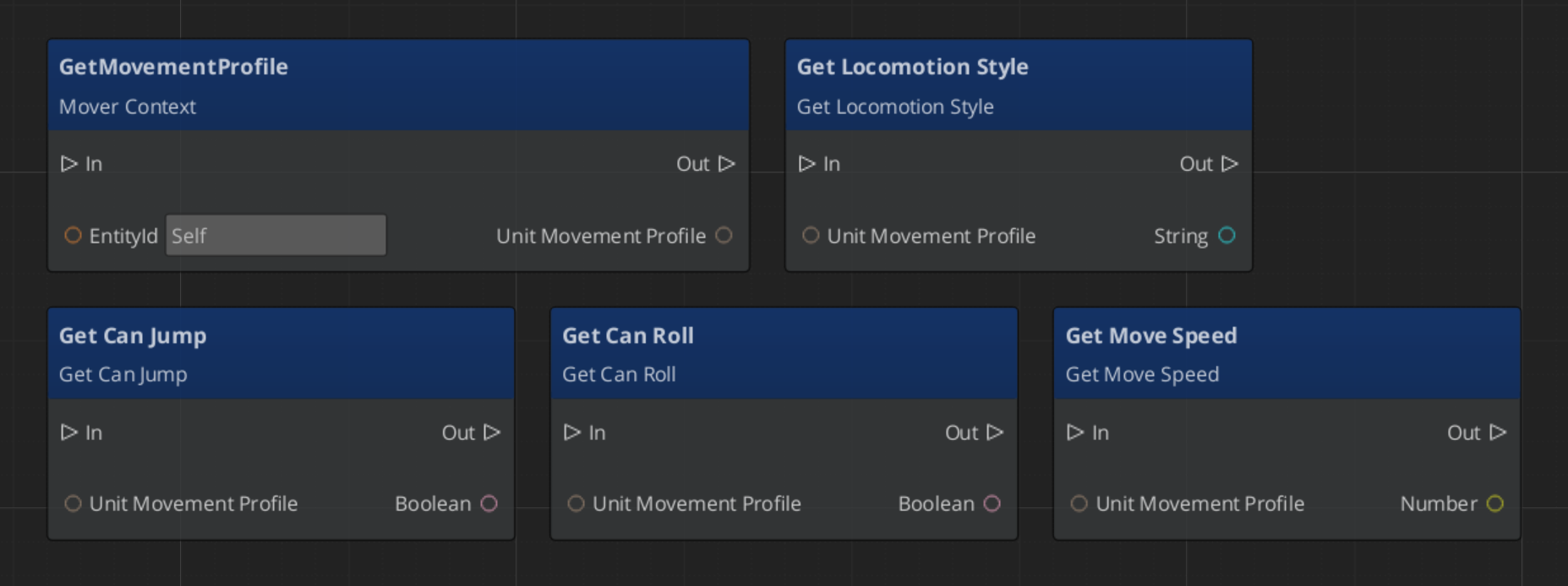

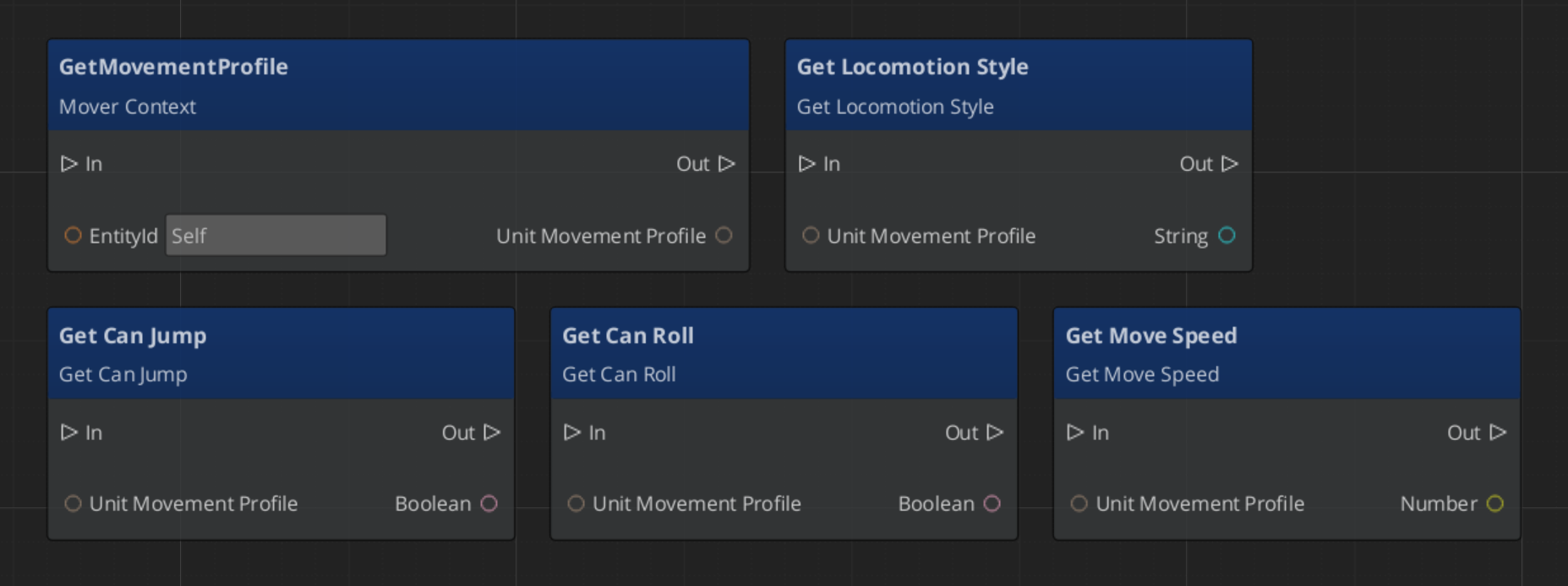

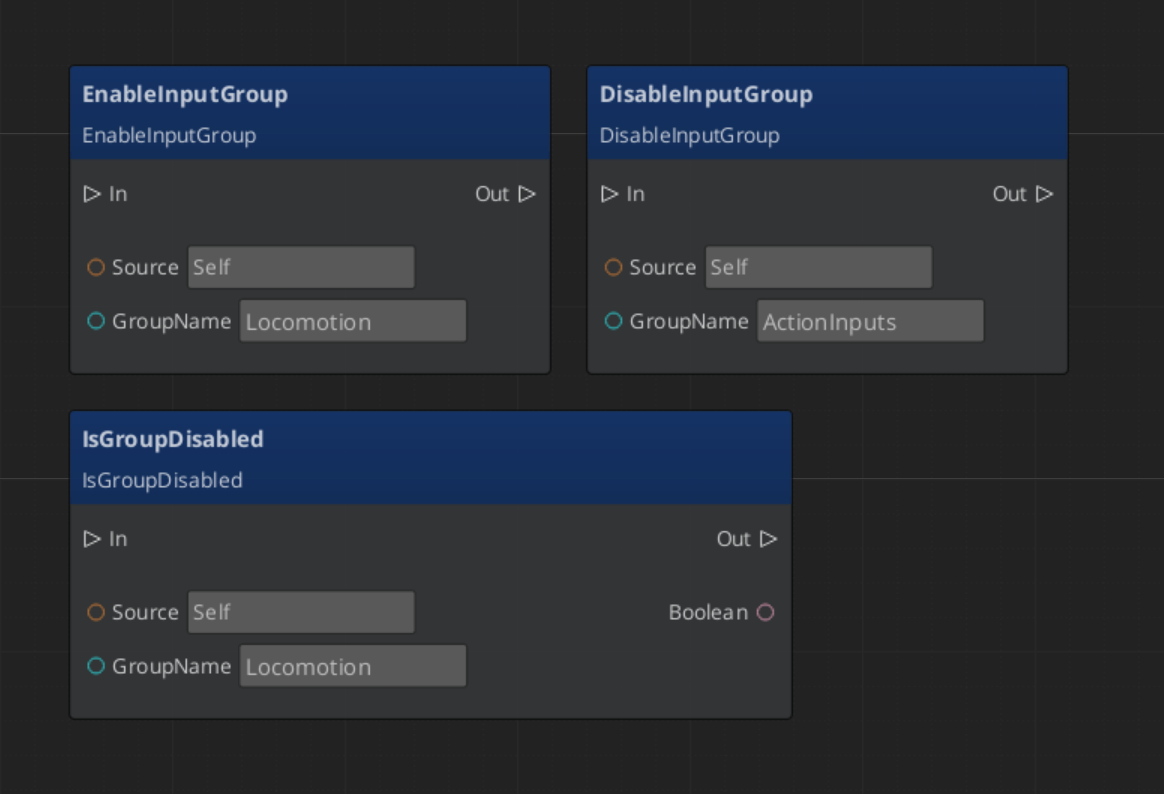

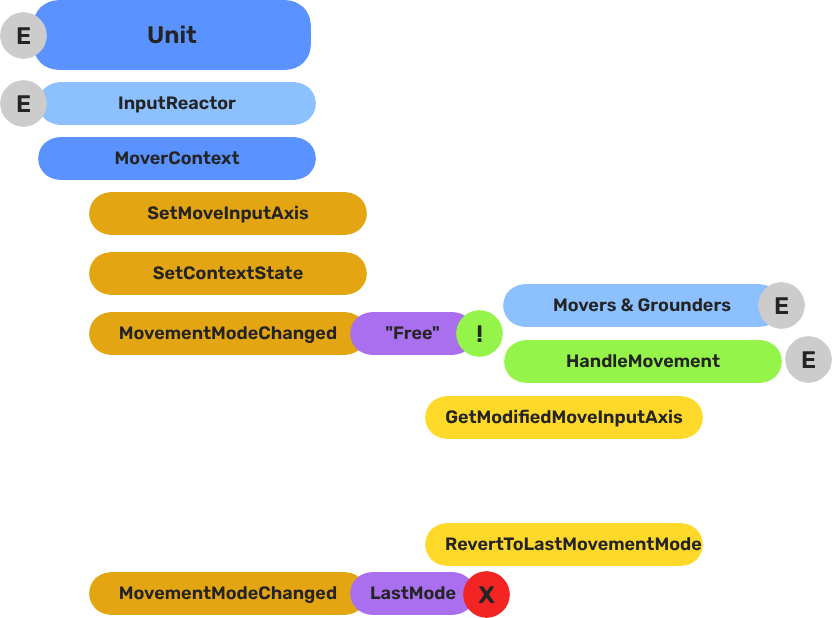

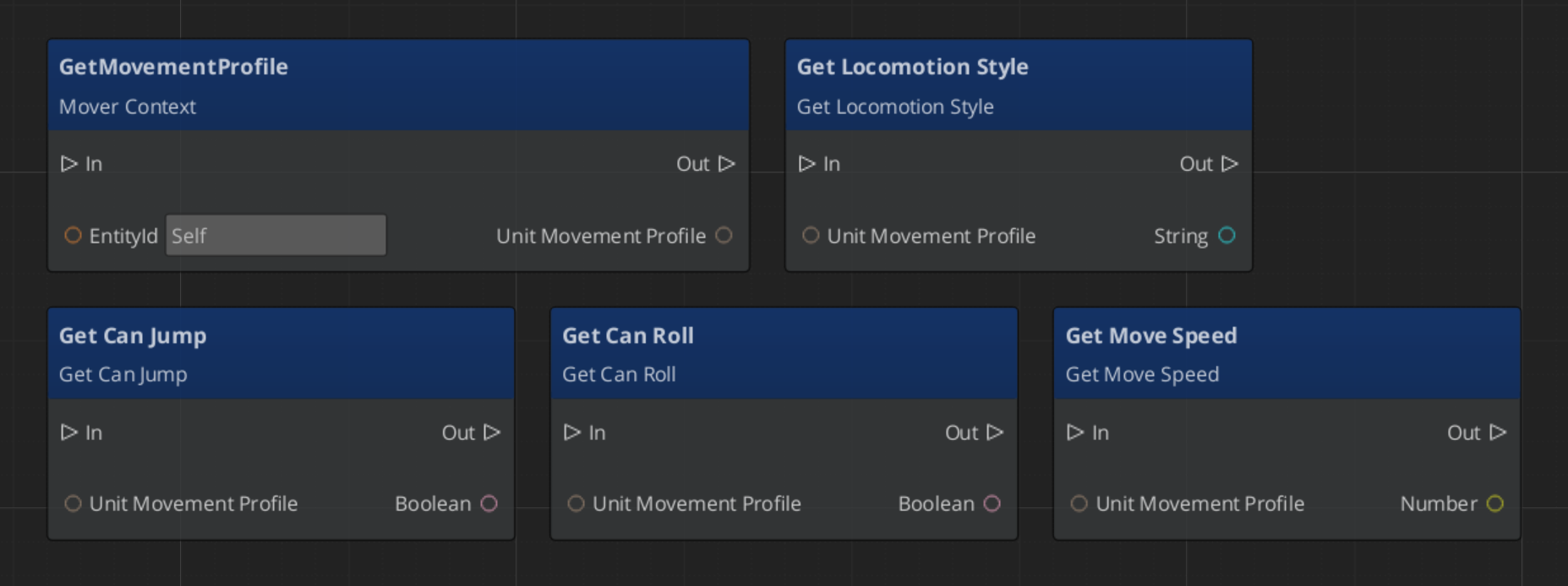

- GS_MoverContextComponent — transforms raw input into movement intent, manages active mode and profiles

- MoverContextRequestBus / MoverContextNotificationBus

- Mode-driven movement: one named mode active at a time; only the mover and grounder matching that mode run
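The mode rule above (one named mode is active, and only the mover and grounder bound to it run) can be pictured with the following sketch. `ModeBoundSystem` and `MoverContextModel` are hypothetical stand-ins, not the GS_MoverContextComponent interface. The mode names "Free" and "Slide" are used only as examples.

```cpp
#include <cassert>
#include <string>
#include <utility>
#include <vector>

// Hypothetical record of a mover or grounder and the mode it belongs to.
struct ModeBoundSystem
{
    std::string name;
    std::string mode;
};

// Hypothetical context that owns the active mode and filters systems by it.
class MoverContextModel
{
public:
    void Register(ModeBoundSystem system)
    {
        m_systems.push_back(std::move(system));
    }

    // Exactly one mode is active at a time; setting a new one replaces the old one.
    void SetMode(const std::string& mode)
    {
        assert(!mode.empty());
        m_activeMode = mode;
    }

    // Names of the systems that would run this frame.
    std::vector<std::string> SystemsToRun() const
    {
        std::vector<std::string> result;
        for (const auto& system : m_systems)
        {
            if (system.mode == m_activeMode)
            {
                result.push_back(system.name);
            }
        }
        return result;
    }

private:
    std::vector<ModeBoundSystem> m_systems;
    std::string m_activeMode; // empty until a mode is chosen, so nothing runs
};
```

Switching mode is a single assignment in this model. Systems bound to other modes stay registered but idle.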

Movers

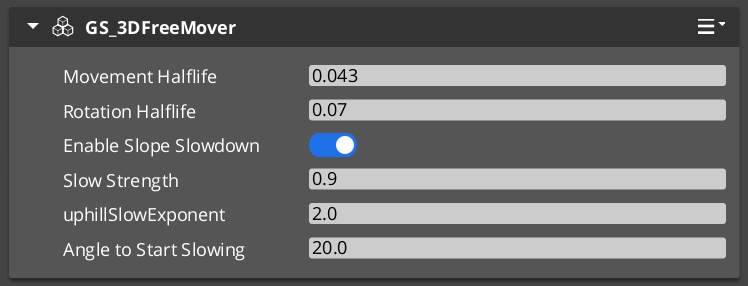

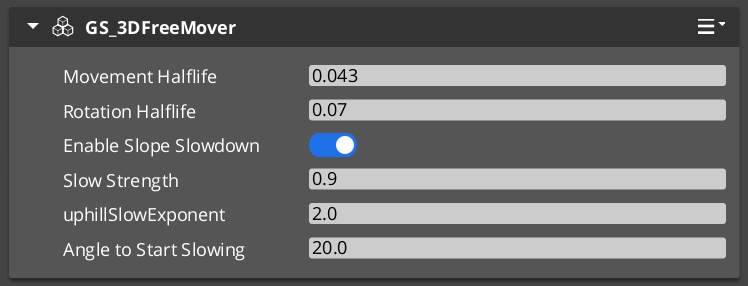

- GS_MoverComponent — mode-aware mover base class

- GS_PhysicsMoverComponent — physics-driven movement via PhysX

- GS_3DFreeMoverComponent — unconstrained 3D free movement

- GS_3DSlideMoverComponent — slide-and-collide surface movement

Grounders

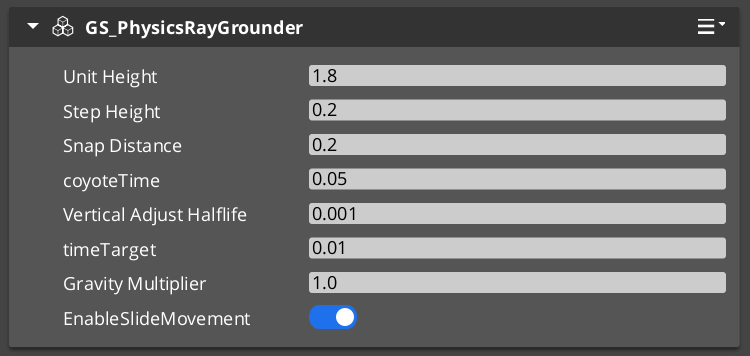

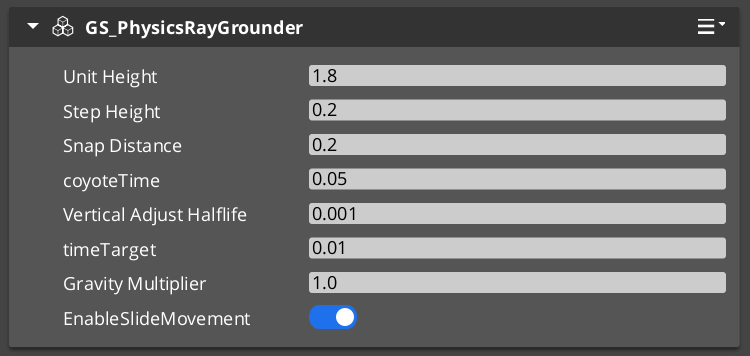

GS_PhysicsRayGrounderComponent — raycast-based grounding for the “Free” movement mode

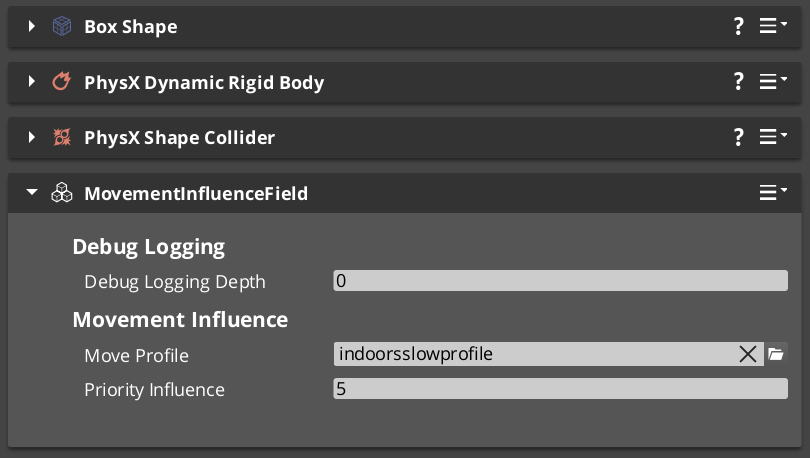

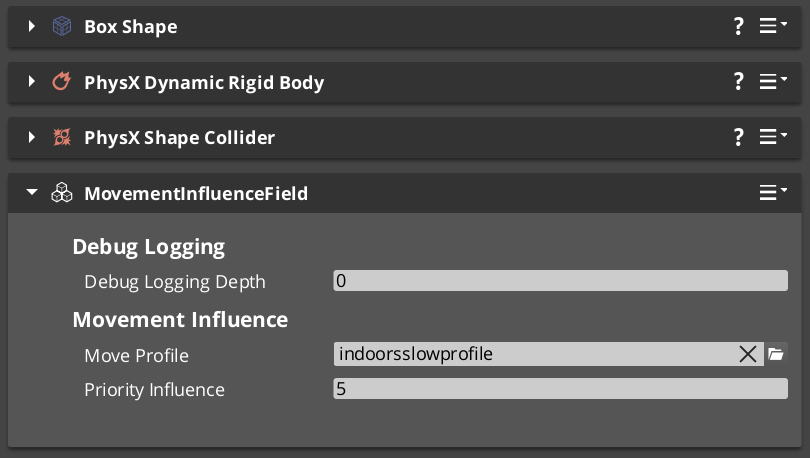

Movement Influence

- MovementInfluenceFieldComponent — spatial zone that modifies unit movement within its bounds

- GlobalMovementRequestBus — global movement modifier interface

Profiles

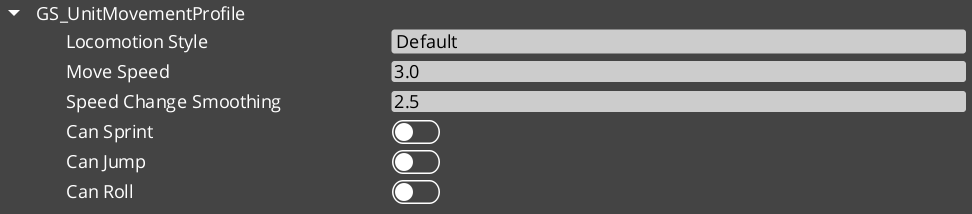

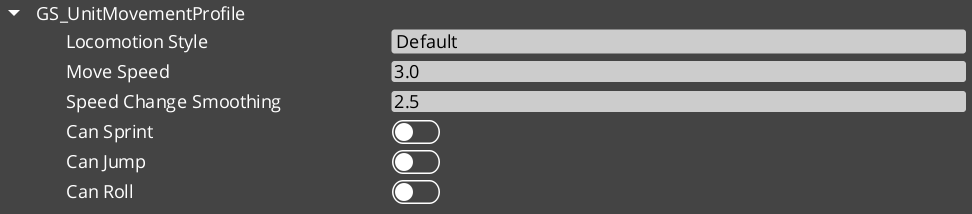

GS_UnitMovementProfile — per-mode speed, acceleration, and movement parameter configuration

2.1.11 - Docs Change Log

Documentation version changelog.

Logs

Docs 1.0.0

Welcome page finalized.

Get Started and Index sections finalized.

No missing pages. Final organization.

Patterns Complete.

Feature List, Glossary fully updated.

Get Started T1 page filled.

Index T1 page filled.

Final Logs format and arrangement.

Print page + print section links.

Homepage complete.

About Us complete.

Product pages complete.

Docs 0.9.0

Full first implementation of direct documentation formatting and content.

With this release we have our first fully working catalogue of featuresets, functionality, and API.

Feature Roots are called “Tier 2”. They are overviews with quick indexes for their subsystems.

Content Pages are called “Tier 3”. They carry the body and substance of any given feature or subfeature.

Nested pages below these tiers combine T2 and T3 elements, depending on how much overview is needed to lead into the child pages.

Established base organization of information.

- Get Started is deliberately thin and focused on user onboarding.

- Index replaces Get Started for regular users, providing quick navigation across reference categories.

- Basics covers conceptual and script-driven knowledge of the framework.

- Framework API is the knowledge base for engineers and veteran users.

- Learn directs users to YouTube videos or text-based guides.

Cross-linking is prioritized to move users to the knowledge they seek as quickly as possible.

Agentic Guidelines is an LLM-oriented seed document explaining how to use GS_Play and navigate the documentation. The website has been optimized behind the scenes for LLM scraping.

Basics

Basics Implemented.

- Implemented Core

- Implemented Audio

- Implemented Cinematics

- Implemented Environment

- Implemented Interaction

- Implemented Juice

- Implemented Performer

- Implemented PhantomCam

- Implemented UI

- Implemented Unit

Framework API

Framework API Implemented.

- Implemented Core

- Implemented Audio

- Implemented Cinematics

- Implemented Environment

- Implemented Interaction

- Implemented Juice

- Implemented Performer

- Implemented PhantomCam

- Implemented UI

- Implemented Unit

2.2 - Feature List

Quick index of all GS_Play Features.

Contents

GS_Core

| Feature | API | Does |

|---|

| GS_Managers | API | Start a new game, continue from a save, load a specific file, or return to the title screen |

| GS_Save | API | Save and load game data, or track persistent flags and counters across sessions |

| GS_StageManager | API | Move between levels, or configure per-level spawn points and navigation settings |

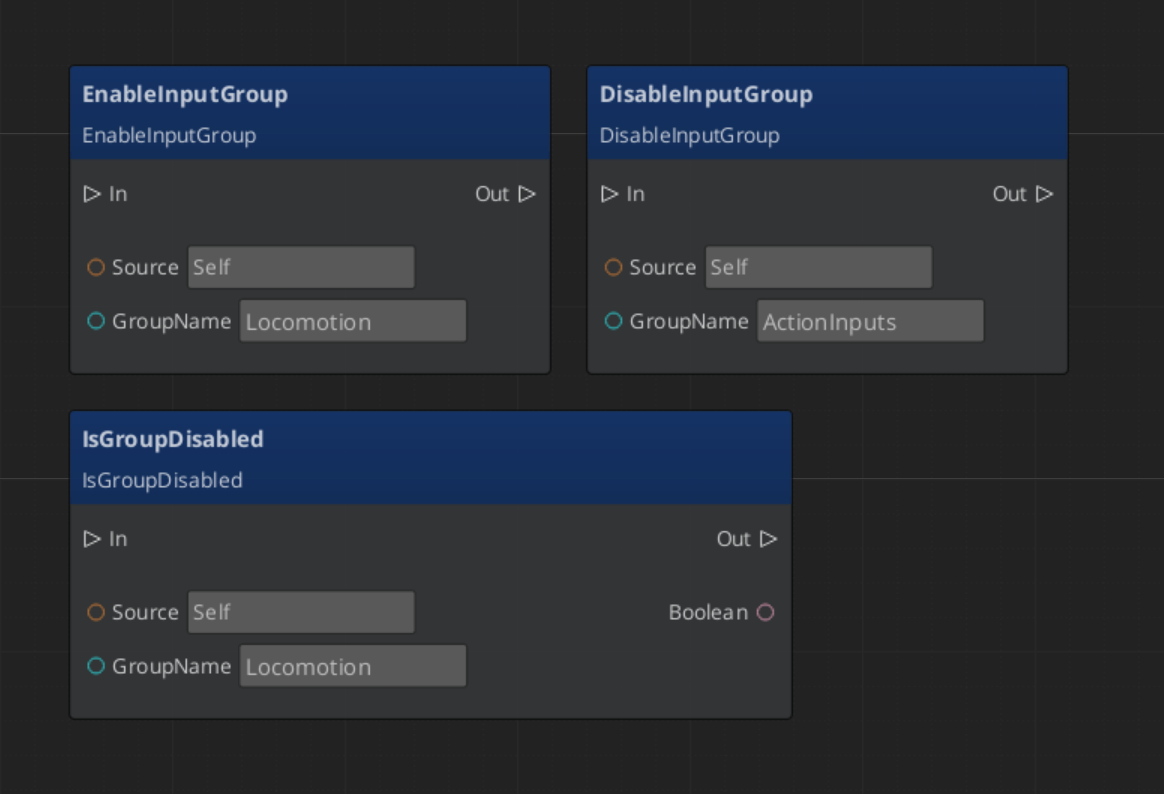

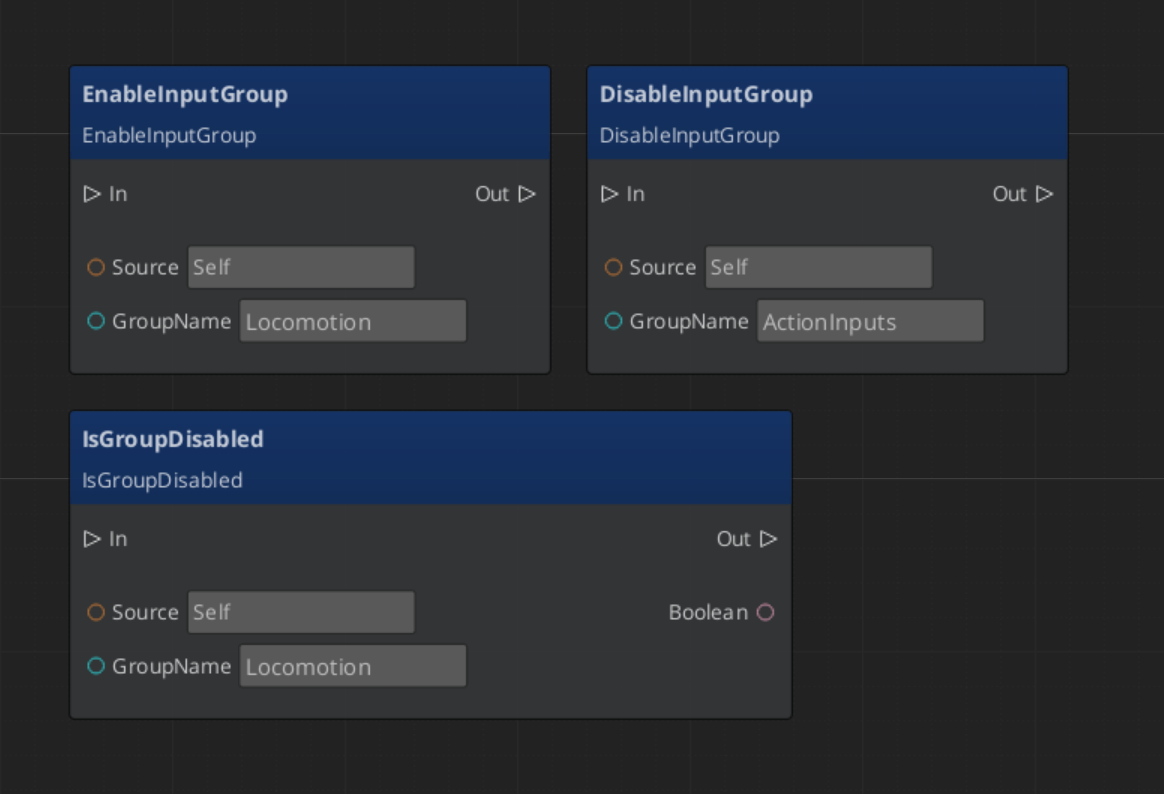

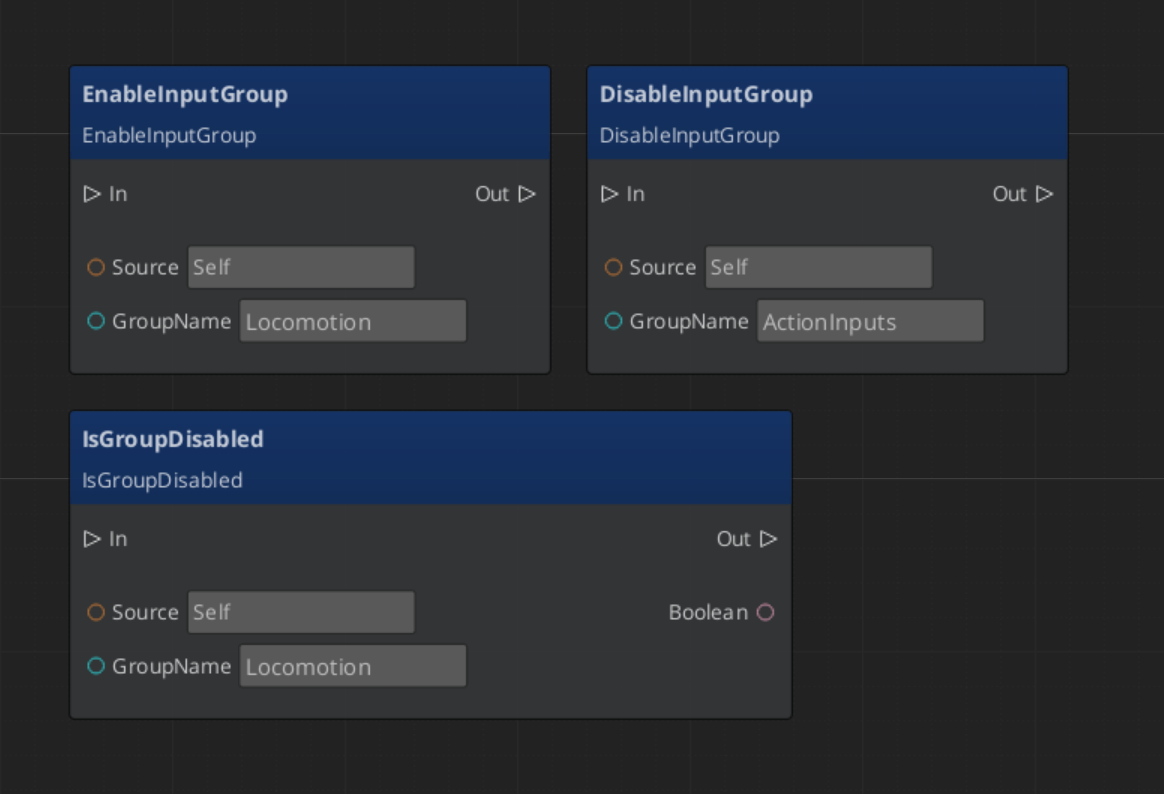

| GS_Options | API | Read player input, disable input during menus, or swap control schemes at runtime |

| Utilities | API | Use easing curves, detect physics zones, smooth values, pick randomly, or work with splines |

| GS_Actions | API | Trigger a reusable behavior on an entity from a script, physics zone, or another action |

| GS_Motion | API | Animate a transform, color, or value smoothly over time |

GS_Audio

| Feature | API | Does |
|---|---|---|
| Audio Manager | API | Manage the audio engine, load event libraries, or control master volume |
| Audio Events | API | Play sounds with pooling, 3D spatialization, and concurrency control |
| Mixing & Effects | API | Configure mixing buses with filters, EQ, and environmental influence effects |
| Score Arrangement | API | Layer music tracks dynamically based on gameplay state |
| Klatt Voice | API | Generate text-to-speech with configurable voice parameters and 3D spatial audio |

GS_Cinematics

| Feature | API | Does |
|---|---|---|
| Cinematics Manager | API | Coordinate cinematic sequences and manage stage markers for actor positioning |
| Dialogue System | API | Author branching dialogue with conditions, effects, and performances |
| Dialogue UI | API | Display dialogue text, player choices, and speech babble on screen or in world space |
| Performances | API | Move actors to stage markers during dialogue with navigation or teleport |
| Timeline Expansion | API | Extend the sequence timeline with custom track and key types |

GS_Environment

| Feature | API | Does |
|---|---|---|
| Time Manager | API | Control world time, time passage speed, or respond to day/night changes |
| Sky Configuration | API | Define how the sky looks at different times of day with data assets |

GS_Interaction

| Feature | API | Does |
|---|---|---|
| Pulsors | API | Broadcast typed physics events from trigger volumes to receiving entities |
| Targeting | API | Find and lock onto the best interactable entity in proximity with a cursor overlay |
| World Triggers | API | Fire configurable responses from zones and conditions without scripting |

GS_Juice

GS_Juice is in Early Development. Full support is planned for 2026.

| Feature | API | Does |
|---|---|---|
| Feedback System | API | Play screen shake, bounce, flash, or material glow effects on entities |

GS_Performer

GS_Performer is in Early Development. Full support is planned for 2026.

| Feature | API | Does |
|---|---|---|
| Performer Manager | API | Manage registered performers or query them by name |
| Skin Slots | API | Swap equipment visuals at runtime with modular slot-based assets |
| Paper Performer | API | Render billboard-style 2.5D characters that face the camera correctly |
| Locomotion | API | Drive animation blend parameters from entity velocity automatically |
| Head Tracking | API | Procedurally orient a character’s head bone toward a world-space look-at target |
| Babble | API | Generate procedural vocalisation tones synchronized to dialogue typewriter output |

GS_PhantomCam

| Feature | API | Does |

|---|

| Cam Manager | API | Manage the camera system lifecycle and know which camera is active |

| Phantom Cameras | API | Place virtual cameras with follow targets, look-at, and priority-based switching |

| Cam Core | API | Control how the real camera reads from the active phantom camera each frame |

| Blend Profiles | API | Define smooth transitions between cameras with custom easing |

| Influence Fields | API | Create spatial zones that modify camera behavior dynamically |

GS_UI

| Feature | API | Does |

|---|

| UI Manager | API | Load and unload UI canvases, manage focus stack, or set startup focus |

| Page Navigation | API | Navigate between pages, handle back navigation, or cross canvas boundaries |

| UI Interaction | API | Button animations and input interception for UI canvases |

| UI Animation | API | Animate UI elements with position, scale, rotation, alpha, and color tracks |

| Widgets | API | Reusable UI building blocks for counters, sliders, and interactive controls |

GS_Unit

| Feature | API | Does |

|---|

| Unit Manager | API | Register units, track active controllers, or spawn units at runtime |

| Controllers | API | Possess and release units with player or AI controllers |

| Input Data | API | Capture raw input and convert it into structured movement intent |

| Movement | API | Move characters with movers, grounders, and movement influence fields |

2.3 - Patterns

Patterns associated with the GS_Play feature sets.

Outline

The strength of the GS_Play framework lies in its straightforward, intuitive patterns.

Each feature set offers a simple way to reason about it, so you can expand your gameplay reliably, knowing your additions will connect properly with the underlying system.

With the extensive list of templates, you can develop the features that make your project unique even more rapidly.

Use this quick list to jump to the features you want to look at.

Each pattern has a diagram outlining the relationships between its feature elements, followed by an explanation of how the pattern plays out.

Have fun!

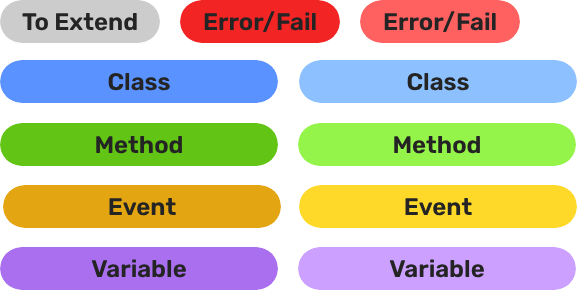

Legend

Contents

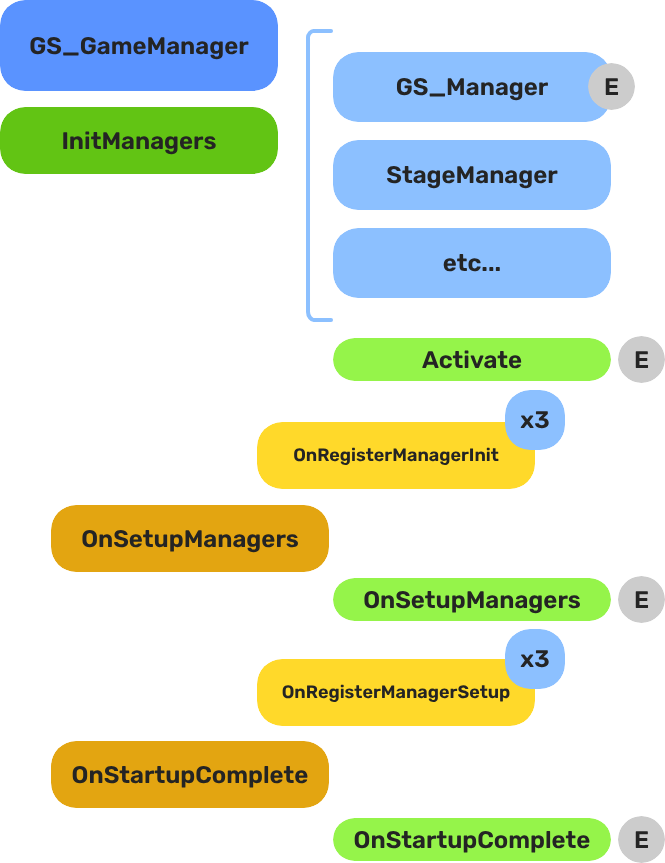

Managers & Startup - GS_Core

Breakdown

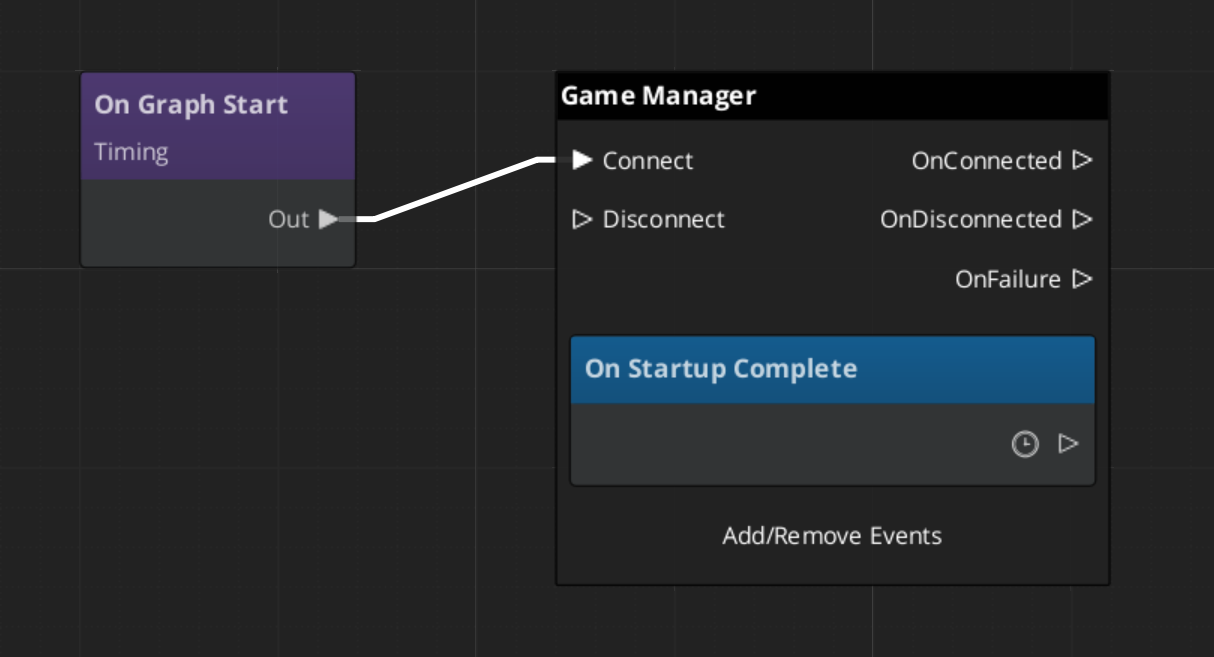

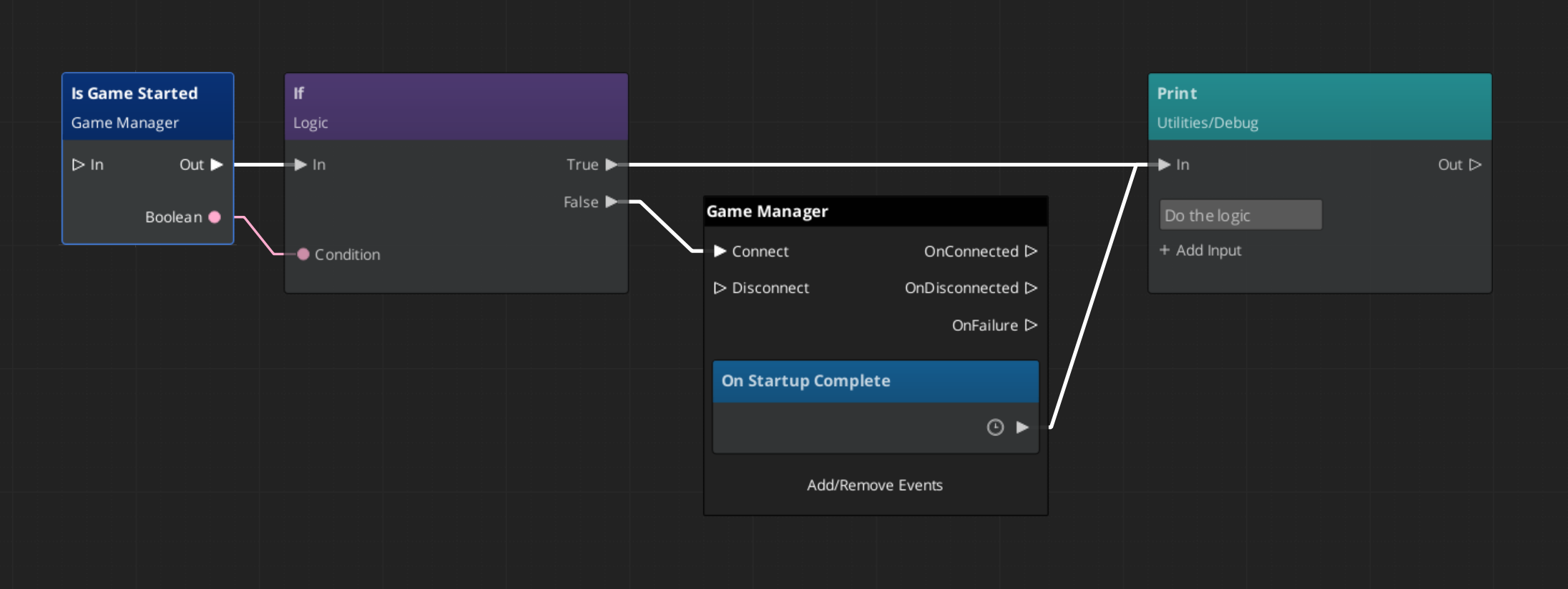

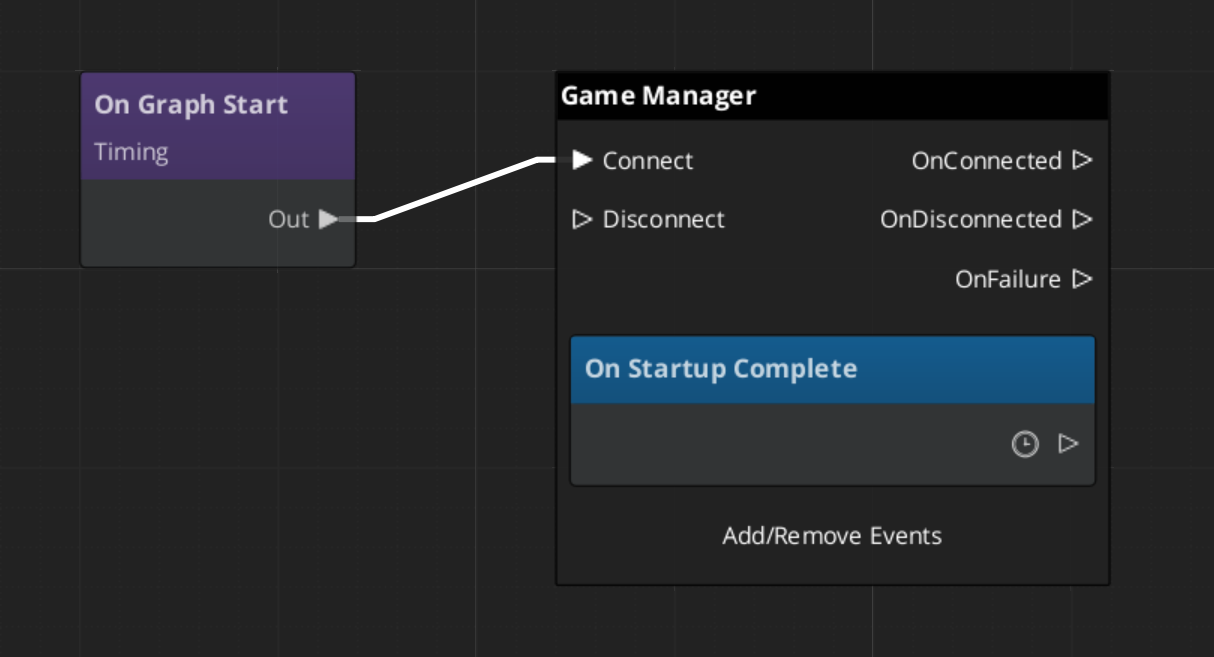

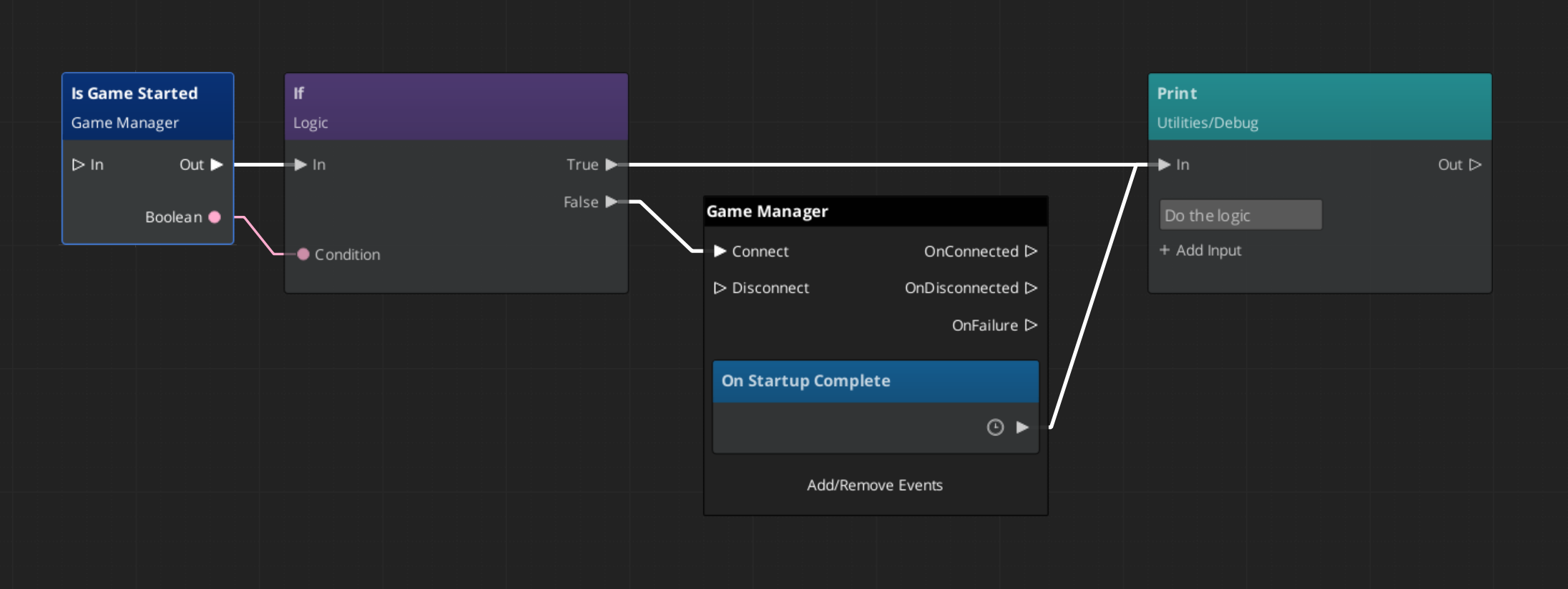

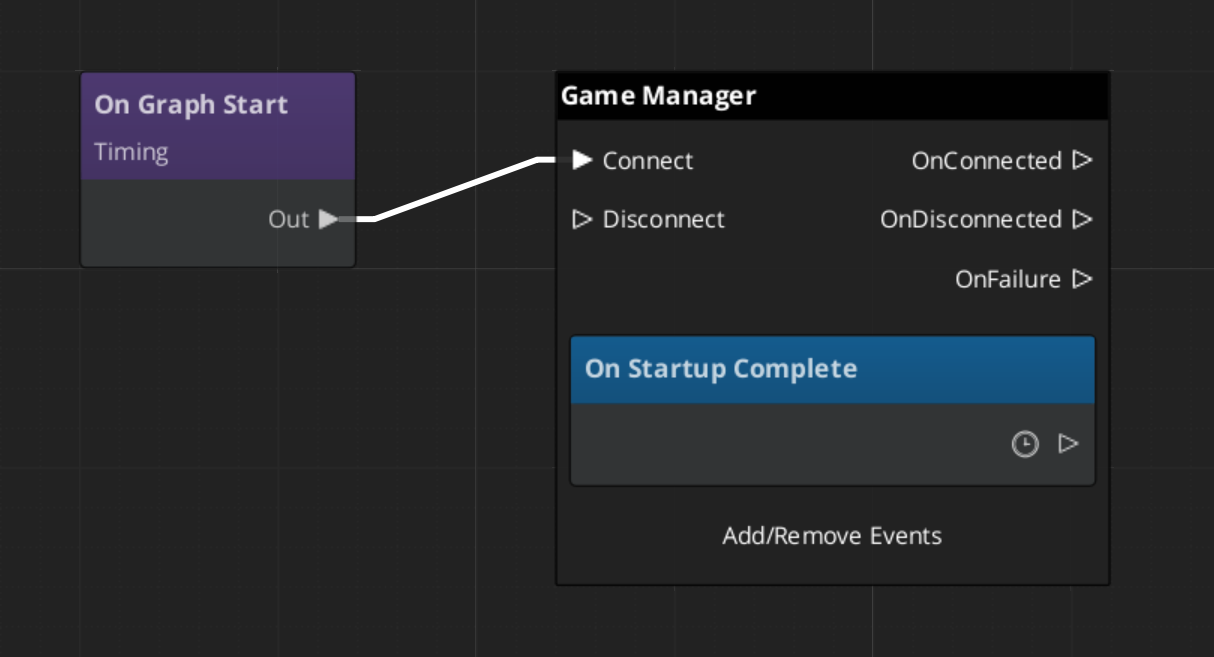

When the project starts, the Game Manager runs three stages before the game is considered ready:

| Stage | Broadcast Event | What It Means |

|---|

| 1 — Initialize | (internal) | Each manager is spawned. They activate, then report ready. |

| 2 — Setup | OnSetupManagers | Setup stage. Now safe to query other managers. |

| 3 — Complete | OnStartupComplete | Last stage. Everything is ready. Do any last-minute work; it is now safe to begin gameplay. |

For most scripts, you only need OnStartupComplete. Wait for this event before doing anything that depends on the managers being fully set up.
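
The sketch below illustrates the "wait for OnStartupComplete" rule in plain C++. Only the two event names come from the table above. `StartupNotifications`, `FakeGameManager`, and `ScoreHud` are hypothetical stand-ins, and a real project would connect to the Game Manager's notification EBus instead of this hand-rolled listener list.

```cpp
#include <string>
#include <vector>

// Hypothetical listener interface; only the two event names are from the docs.
struct StartupNotifications {
    virtual ~StartupNotifications() = default;
    virtual void OnSetupManagers() {}
    virtual void OnStartupComplete() {}
};

// Hypothetical stand-in for the Game Manager: runs the stages in order.
class FakeGameManager {
public:
    void Connect(StartupNotifications* listener) { m_listeners.push_back(listener); }

    void RunStartup() {
        // Stage 1 (Initialize) is internal: managers spawn, activate, report ready.
        for (auto* l : m_listeners) l->OnSetupManagers();    // Stage 2: Setup
        for (auto* l : m_listeners) l->OnStartupComplete();  // Stage 3: Complete
    }

private:
    std::vector<StartupNotifications*> m_listeners;
};

// A typical gameplay script: it ignores Setup and starts work only at Complete.
struct ScoreHud : StartupNotifications {
    std::vector<std::string> log;
    void OnStartupComplete() override { log.push_back("begin gameplay"); }
};
```

Because `ScoreHud` overrides only `OnStartupComplete`, nothing it does can run before every manager has finished its own setup.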


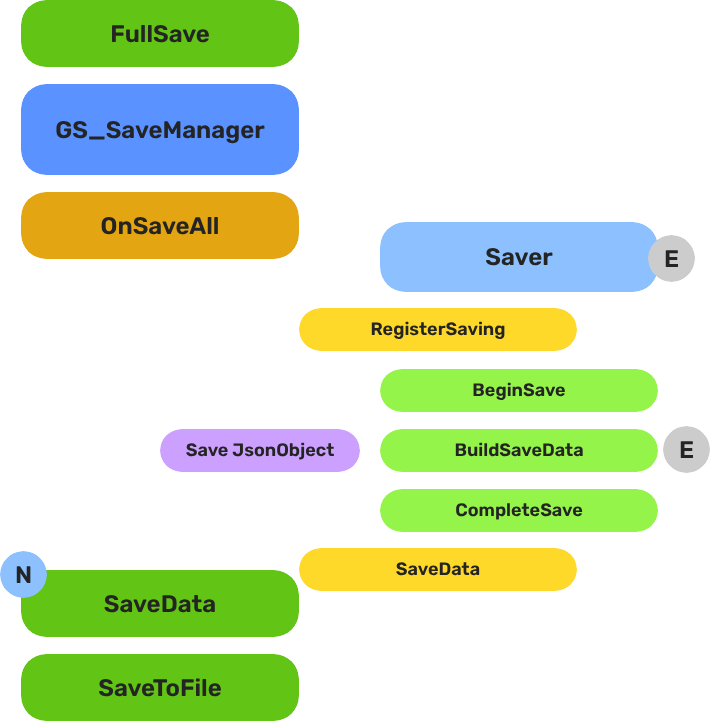

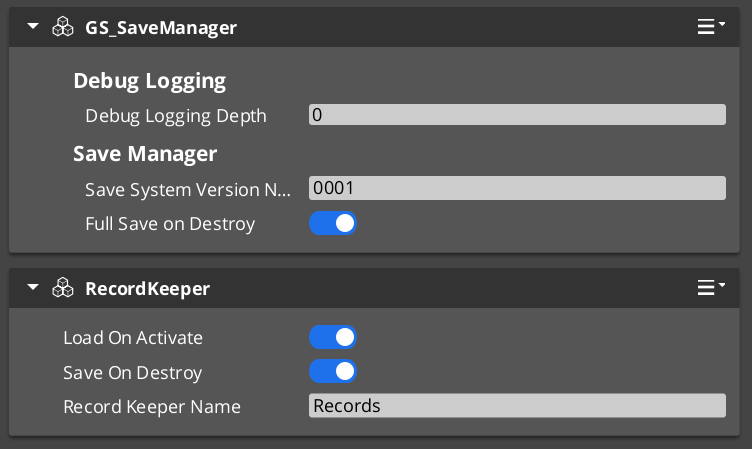

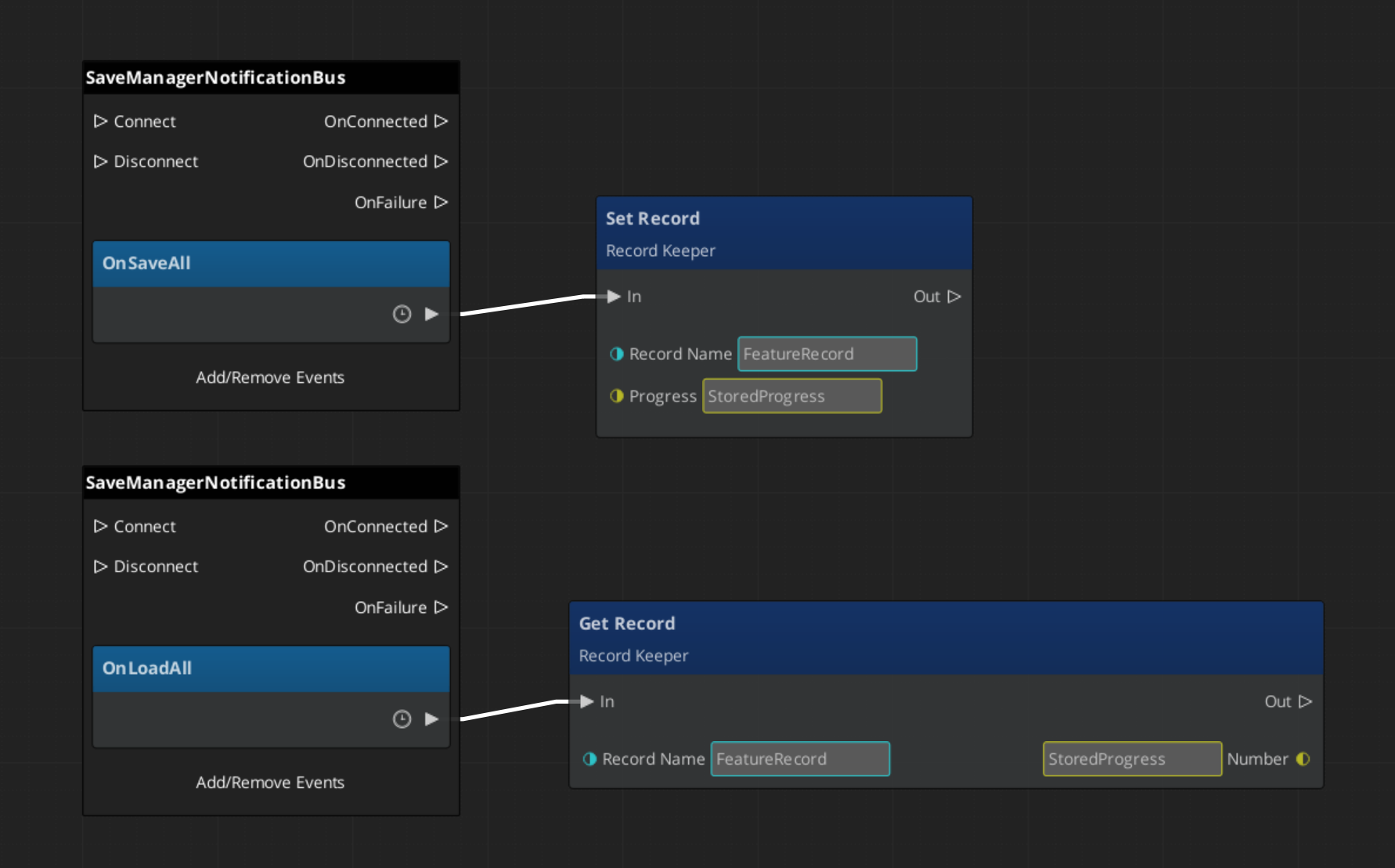

Save - GS_Core

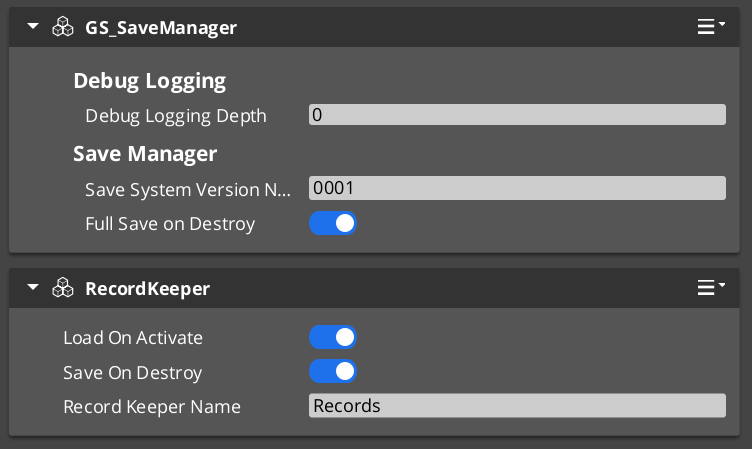

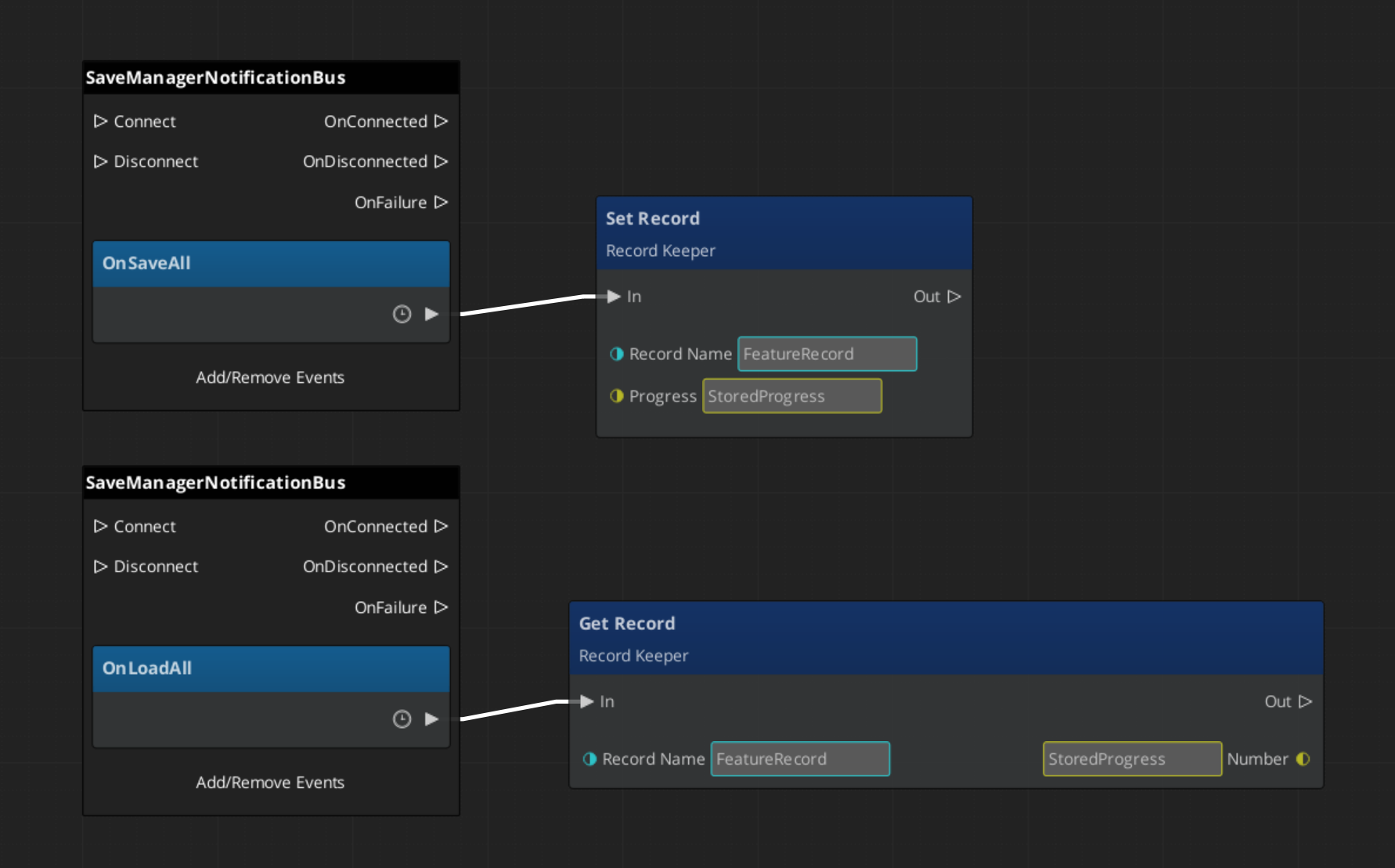

Breakdown

When a save or load is triggered, the Save Manager broadcasts to every Saver in the scene. Each Saver independently handles its own entity’s state. The Record Keeper persists flat progression data alongside the save file.

| Part | Broadcast Event | What It Means |

|---|

| Save Manager | OnSaveAll | Broadcasts to all Savers on save. Each serializes its entity state. |

| Save Manager | OnLoadAll | Broadcasts to all Savers on load. Each restores its entity state. |

| Record Keeper | RecordChanged | Fires when any progression flag is created, updated, or deleted. |

Savers are per-entity components — add them to anything that needs to persist across sessions. The Record Keeper is a global singleton for flags and counters.
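
As a rough illustration of the broadcast described above, the following sketch has a manager fan out save and load calls to per-entity savers. The event names `OnSaveAll` and `OnLoadAll` come from the table. The `SaveFile` type, the method signatures, `FakeSaveManager`, and `DoorSaver` are assumptions made for this example and are not the GS_Save API.

```cpp
#include <map>
#include <string>
#include <utility>
#include <vector>

using SaveFile = std::map<std::string, std::string>;  // hypothetical save format

// Hypothetical per-entity saver interface.
struct Saver {
    virtual ~Saver() = default;
    virtual void OnSaveAll(SaveFile& file) = 0;
    virtual void OnLoadAll(const SaveFile& file) = 0;
};

// Hypothetical stand-in for the Save Manager: broadcasts to every saver.
class FakeSaveManager {
public:
    void Register(Saver* saver) { m_savers.push_back(saver); }

    SaveFile SaveAll() {
        SaveFile file;
        for (auto* s : m_savers) s->OnSaveAll(file);
        return file;
    }

    void LoadAll(const SaveFile& file) {
        for (auto* s : m_savers) s->OnLoadAll(file);
    }

private:
    std::vector<Saver*> m_savers;
};

// Each saver handles only its own entity's state, stored under its own key.
class DoorSaver : public Saver {
public:
    explicit DoorSaver(std::string key) : m_key(std::move(key)) {}
    bool open = false;

    void OnSaveAll(SaveFile& file) override { file[m_key] = open ? "open" : "closed"; }

    void OnLoadAll(const SaveFile& file) override {
        auto it = file.find(m_key);
        if (it != file.end()) open = (it->second == "open");
    }

private:
    std::string m_key;
};
```

The manager never inspects door state itself. Adding a new kind of persistent entity means adding a new saver, not changing the manager.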


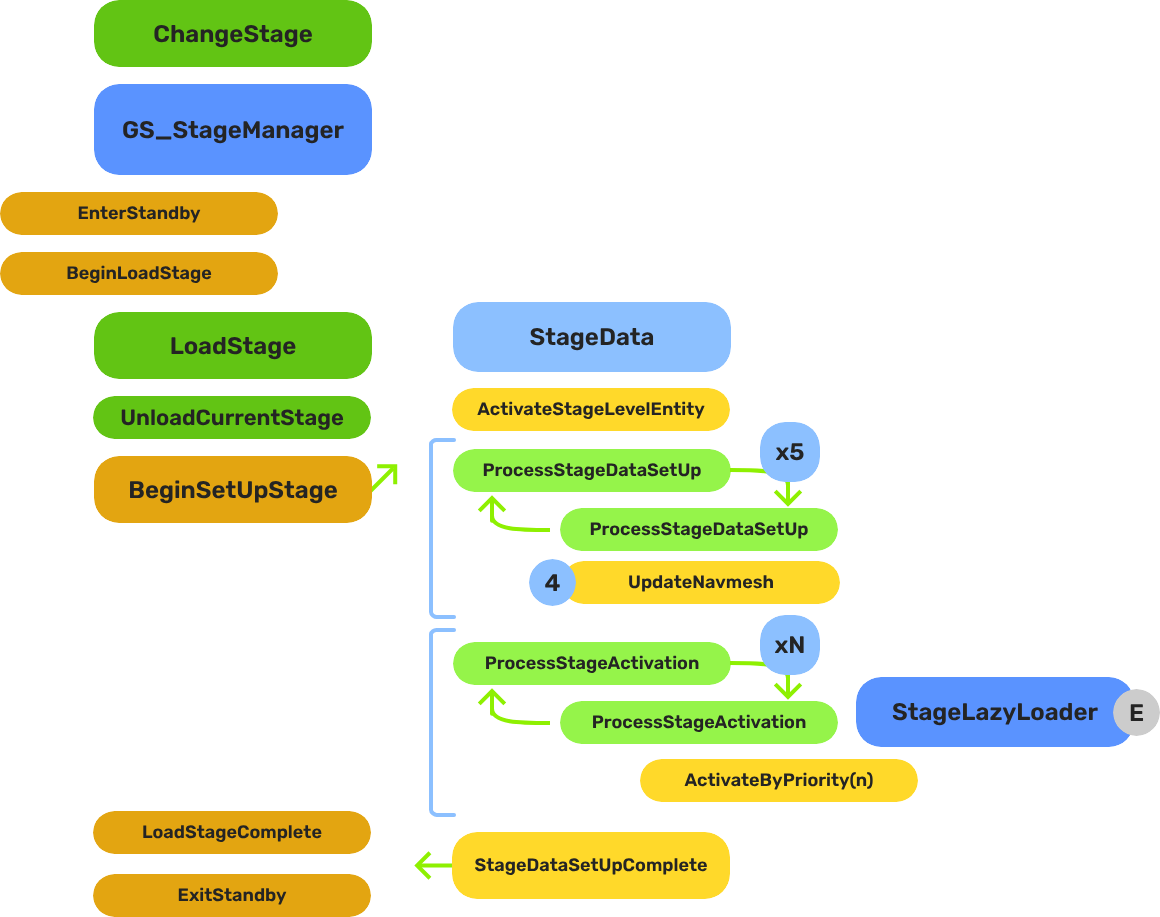

Stage Change - GS_Core

Breakdown

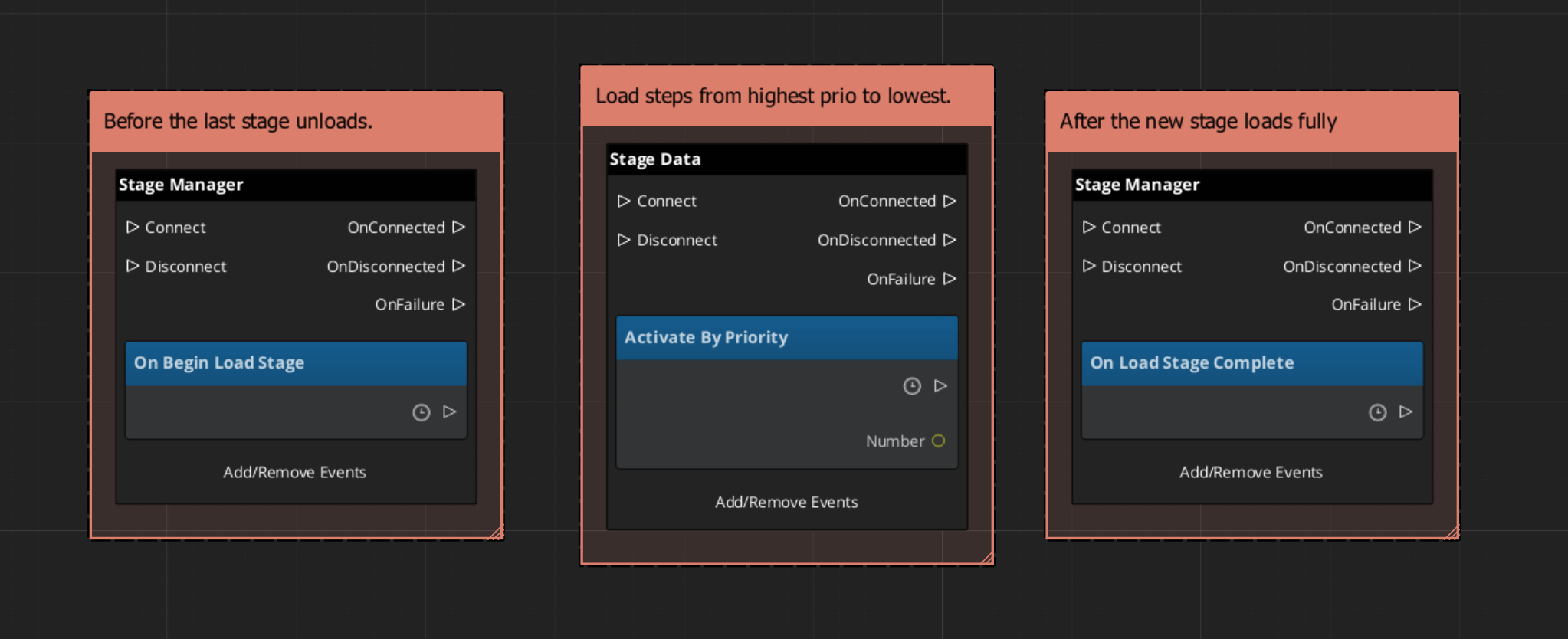

When a stage change is requested, the system follows a five-step sequence before gameplay resumes in the new level:

| Step | Broadcast Event | What It Means |

|---|

| 1 — Standby | OnEnterStandby | Game Manager enters standby, halting all gameplay systems. |

| 2 — Unload | (internal) | The current stage’s entities are torn down. |

| 3 — Spawn | (internal) | The target stage prefab is instantiated. |

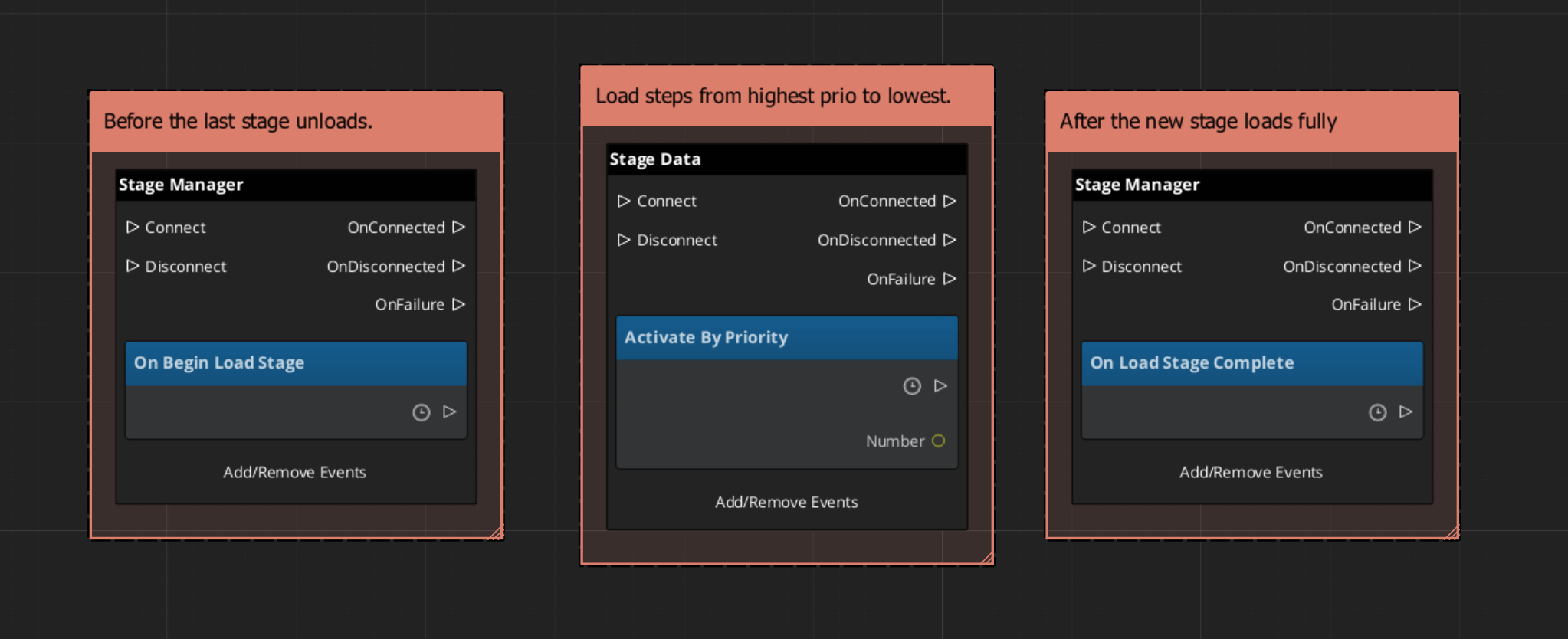

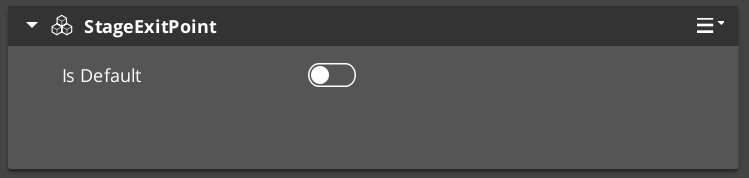

| 4 — Set Up | OnBeginSetUpStage | Stage Data runs its layered startup: SetUpStage → ActivateByPriority → Complete. |

| 5 — Complete | LoadStageComplete | Stage Manager broadcasts completion. Standby exits. Gameplay resumes. |

The Stage Data startup is layered — OnBeginSetUpStage, ActivateByPriority, then OnLoadStageComplete — so heavy levels can initialize incrementally without causing frame-time spikes.
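
One way to picture the layered startup is a queue of priority layers that is drained one layer per tick. The sketch below is only a model of that idea. `LayeredStartup`, the "lower number activates first" ordering, and the one-layer-per-tick pacing are all assumptions, since the source does not specify how ActivateByPriority schedules its work.

```cpp
#include <map>
#include <string>
#include <utility>
#include <vector>

// Hypothetical model of priority-layered activation.
class LayeredStartup {
public:
    void Add(int priority, std::string entity) {
        m_layers[priority].push_back(std::move(entity));
    }

    // Activates the next pending layer and appends its entities to `activated`.
    // Returns false when there was nothing left to activate.
    bool Tick(std::vector<std::string>& activated) {
        if (m_layers.empty()) return false;
        auto next = m_layers.begin();  // std::map keeps layers sorted by priority
        for (const auto& entity : next->second) activated.push_back(entity);
        m_layers.erase(next);
        return true;
    }

    // True once every layer has been activated.
    bool Complete() const { return m_layers.empty(); }

private:
    std::map<int, std::vector<std::string>> m_layers;
};
```

Spreading layers across ticks keeps any single frame from paying for the whole level at once, which is the frame-time benefit the paragraph above describes.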


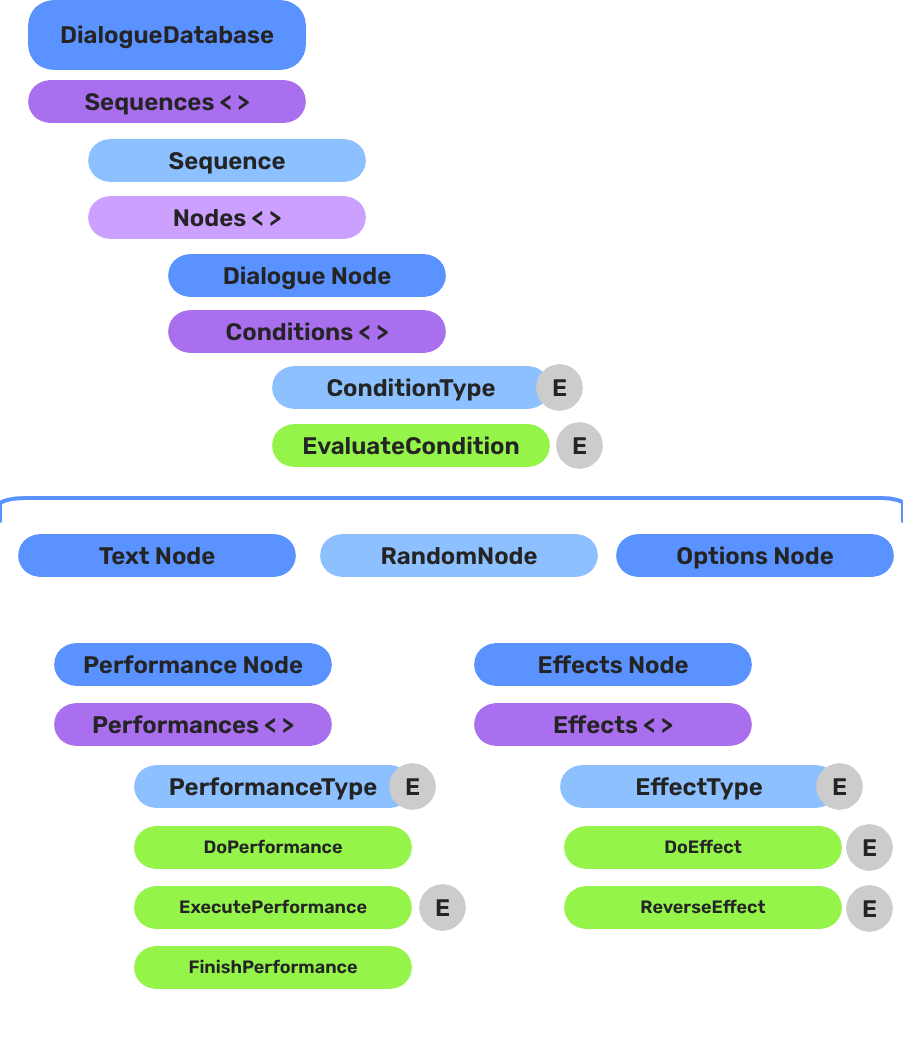

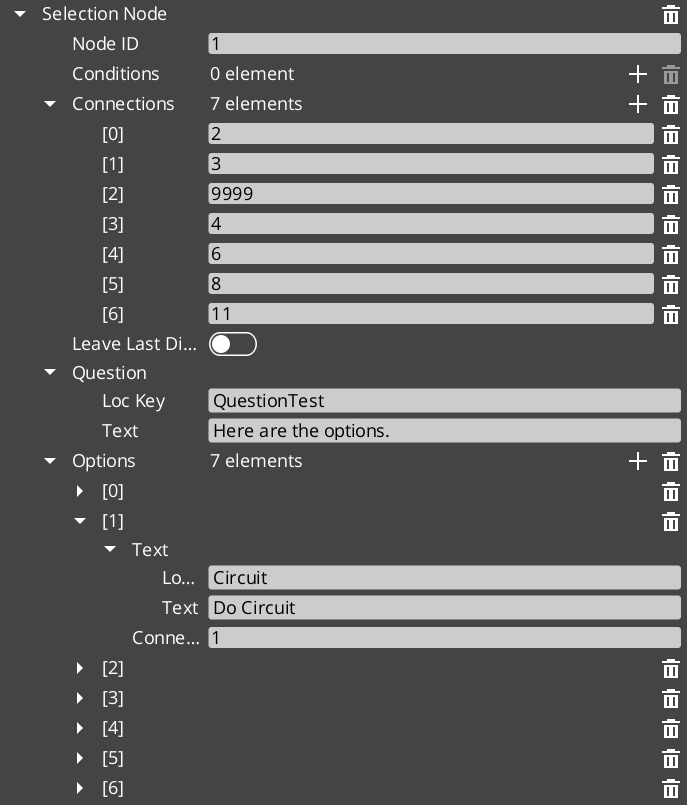

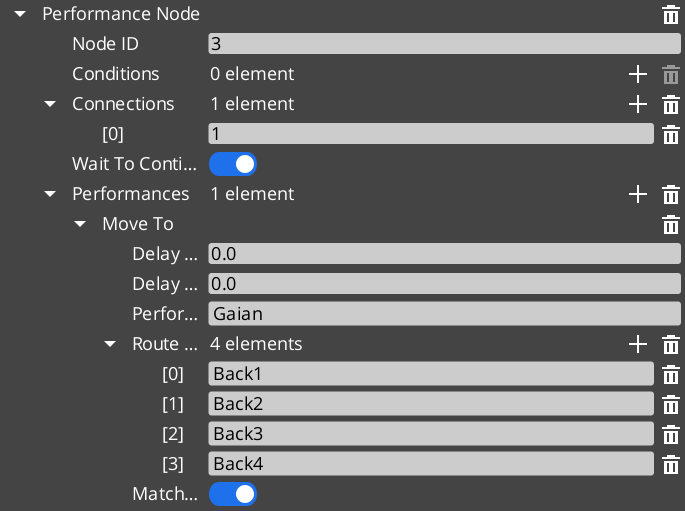

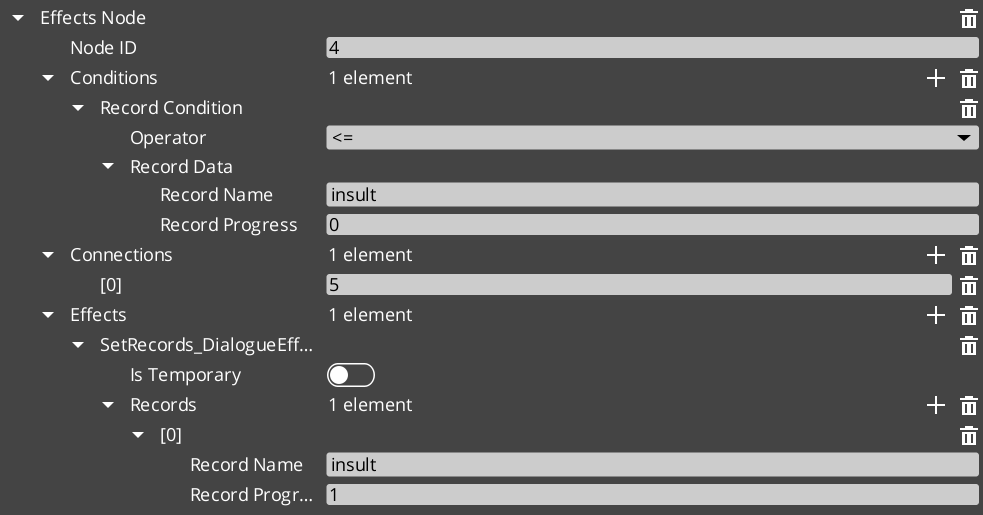

Dialogue Authoring - GS_Cinematics

Breakdown

A dialogue sequence is authored in the node editor, stored in a .dialoguedb asset, and driven at runtime by the Dialogue Manager and Sequencer:

| Layer | What It Means |

|---|

| DialogueDatabase | Stores named actors and sequences. Loaded at runtime by the Dialogue Manager. |

| DialogueSequence | A directed node graph. The Sequencer walks from startNodeId through Text, Selection, Effects, and Performance nodes. |

| Conditions | Polymorphic evaluators on branches. Failed conditions skip that branch automatically. |

| Effects | Polymorphic actions at EffectsNodeData nodes — set records, toggle entities. |

| Performers | Named actor anchors in the level. Resolved from database actor names via DialoguePerformerMarkerComponent. |

Conditions, effects, and performances are discovered automatically at startup — custom types from any gem appear in the editor without modifying GS_Cinematics.
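
To make the "failed conditions skip that branch" rule concrete, here is a minimal sketch of a sequencer choosing its next node. `Records`, `RecordAtLeast`, `Branch`, and `NextNode` are hypothetical names for this example. The real condition base class and its method signature in GS_Cinematics may differ.

```cpp
#include <map>
#include <memory>
#include <string>
#include <utility>
#include <vector>

using Records = std::map<std::string, int>;  // hypothetical progression data

// Hypothetical polymorphic condition: returning true allows the branch.
struct DialogueCondition {
    virtual ~DialogueCondition() = default;
    virtual bool Evaluate(const Records& records) const = 0;
};

// Example custom condition: passes when a record has reached a minimum value.
struct RecordAtLeast : DialogueCondition {
    RecordAtLeast(std::string k, int m) : key(std::move(k)), minimum(m) {}
    bool Evaluate(const Records& records) const override {
        auto it = records.find(key);
        return it != records.end() && it->second >= minimum;
    }
    std::string key;
    int minimum;
};

struct Branch {
    std::string targetNodeId;
    std::vector<std::shared_ptr<DialogueCondition>> conditions;  // all must pass
};

// Returns the first branch whose conditions all pass, or "" if none qualify.
std::string NextNode(const std::vector<Branch>& branches, const Records& records) {
    for (const auto& branch : branches) {
        bool allowed = true;
        for (const auto& condition : branch.conditions) {
            if (!condition->Evaluate(records)) { allowed = false; break; }
        }
        if (allowed) return branch.targetNodeId;
    }
    return "";
}
```

A branch with no conditions always passes, so placing it last gives the graph a natural fallback line.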


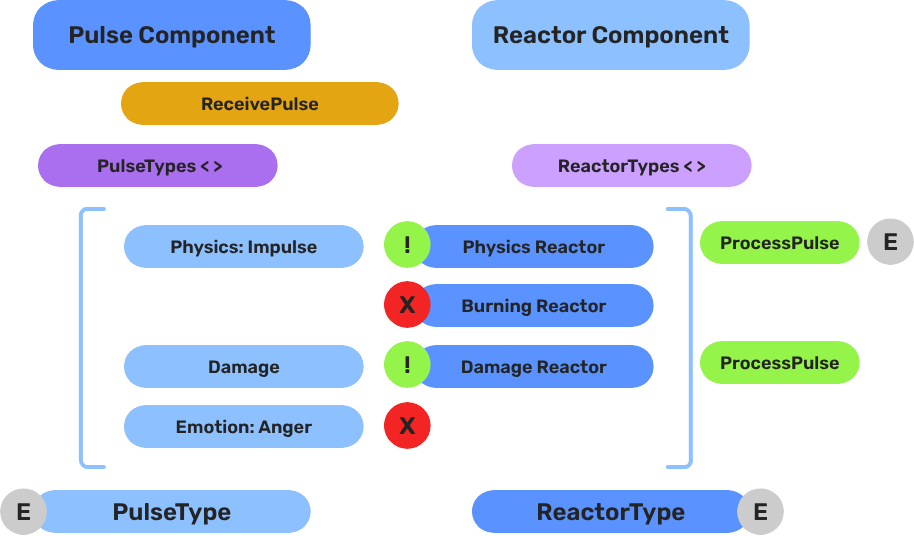

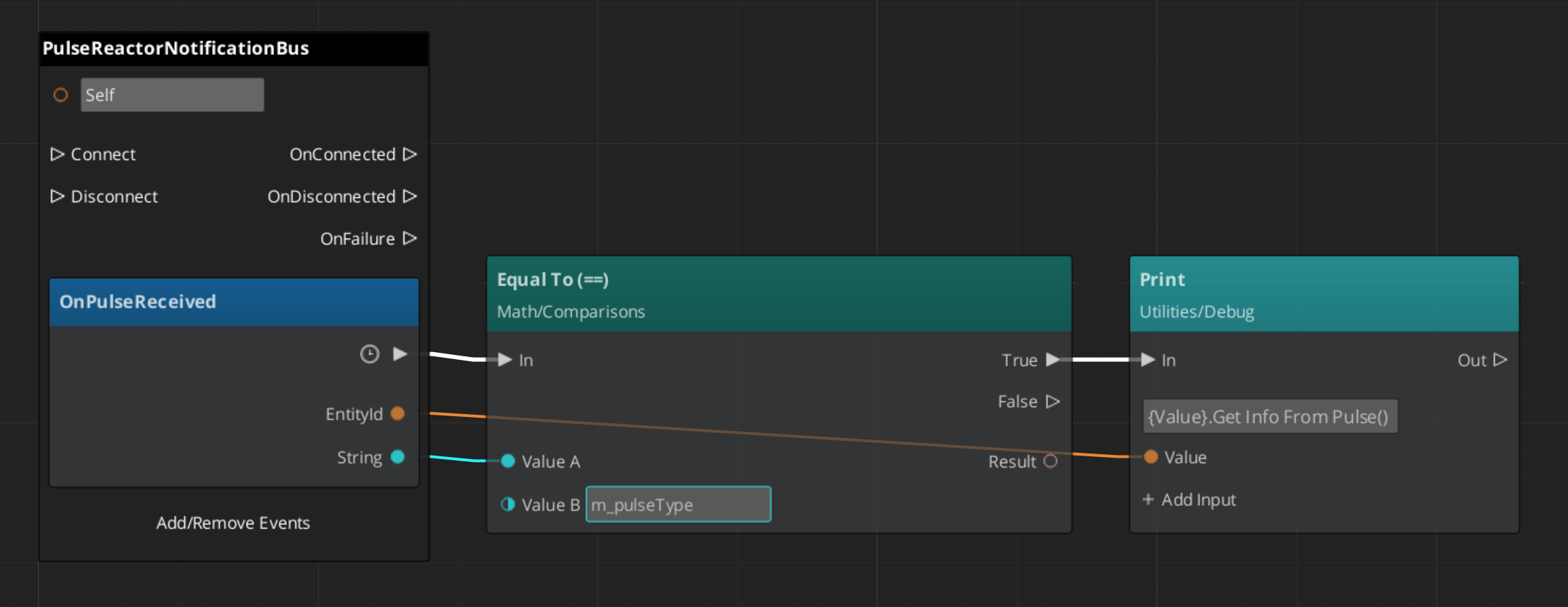

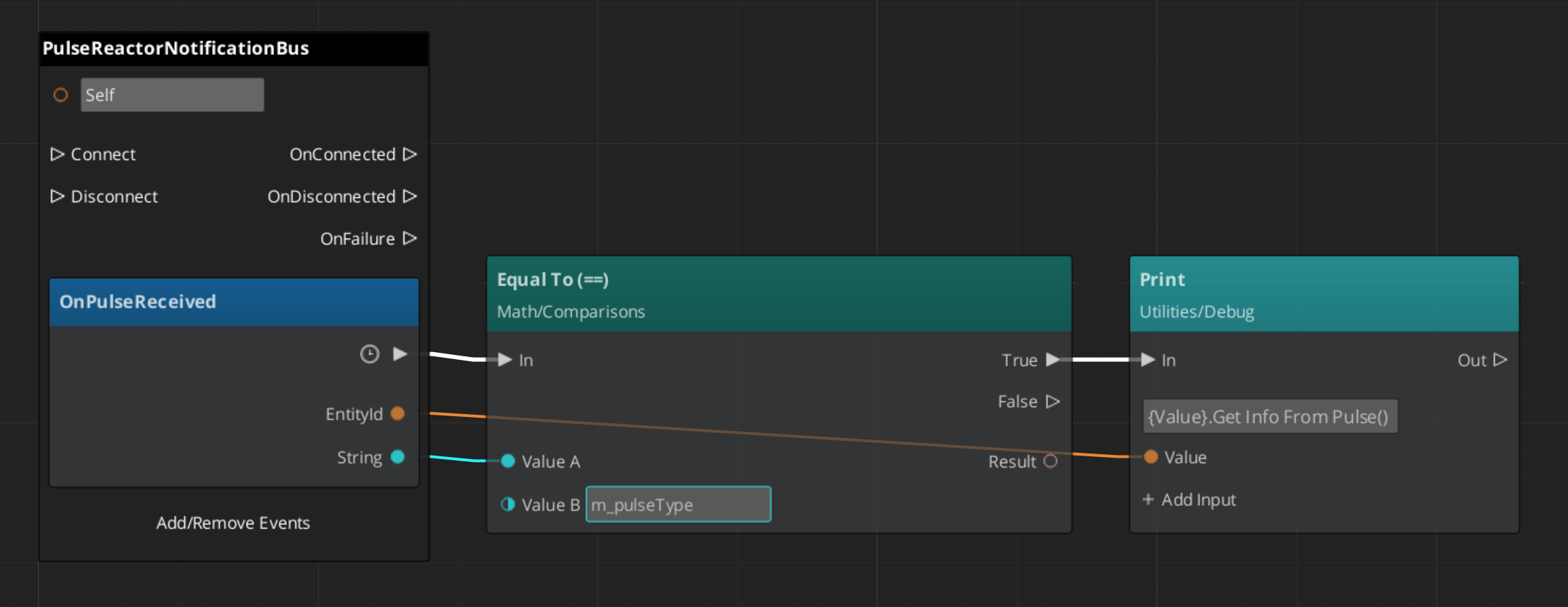

Pulse - GS_Interaction

Breakdown

When an entity enters a Pulsor’s trigger volume, the Pulsor emits its configured pulse type to all Reactors on the entering entity:

| Step | What It Means |

|---|

| 1 — Collider overlap | Physics detects an entity entering the Pulsor’s trigger volume. |

| 2 — Pulse emit | PulsorComponent reads its configured PulseType and prepares the event. |

| 3 — Reactor query | Each PulseReactorComponent on the entering entity is checked with IsReactor(). |

| 4 — Reaction | Reactors returning true have ReceivePulses() called and execute their response. |

Pulse types are polymorphic — new types are discovered automatically at startup. Any gem can define custom interaction semantics without modifying GS_Interaction.
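
Steps 2 to 4 of the table can be sketched as a small dispatch loop. The method names `IsReactor` and `ReceivePulses` come from the table. Their signatures here, along with `Pulse`, `DamagePulse`, `HealthReactor`, `SoundReactor`, `Entity`, and `EmitPulse`, are assumptions for illustration only.

```cpp
#include <string>
#include <vector>

// Hypothetical polymorphic pulse type.
struct Pulse {
    virtual ~Pulse() = default;
    virtual std::string TypeName() const = 0;
};

struct DamagePulse : Pulse {
    int amount = 10;
    std::string TypeName() const override { return "Damage"; }
};

// Hypothetical reactor interface using the method names from the table.
struct Reactor {
    virtual ~Reactor() = default;
    virtual bool IsReactor(const Pulse& pulse) const = 0;
    virtual void ReceivePulses(const Pulse& pulse) = 0;
};

struct HealthReactor : Reactor {
    int health = 100;
    bool IsReactor(const Pulse& pulse) const override { return pulse.TypeName() == "Damage"; }
    void ReceivePulses(const Pulse& pulse) override {
        // Safe cast: IsReactor() already confirmed this is a Damage pulse.
        health -= static_cast<const DamagePulse&>(pulse).amount;
    }
};

struct SoundReactor : Reactor {
    int heard = 0;
    bool IsReactor(const Pulse& pulse) const override { return pulse.TypeName() == "Sound"; }
    void ReceivePulses(const Pulse&) override { ++heard; }
};

struct Entity {
    std::vector<Reactor*> reactors;
};

// Called once physics reports that `entering` overlaps the trigger volume.
// Returns how many reactors accepted the pulse.
int EmitPulse(const Pulse& pulse, Entity& entering) {
    int reacted = 0;
    for (Reactor* reactor : entering.reactors) {
        if (reactor->IsReactor(pulse)) {
            reactor->ReceivePulses(pulse);
            ++reacted;
        }
    }
    return reacted;
}
```

The emitter knows nothing about health or sound. Each reactor decides for itself whether a pulse is relevant, so new pulse types need no changes to existing reactors.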


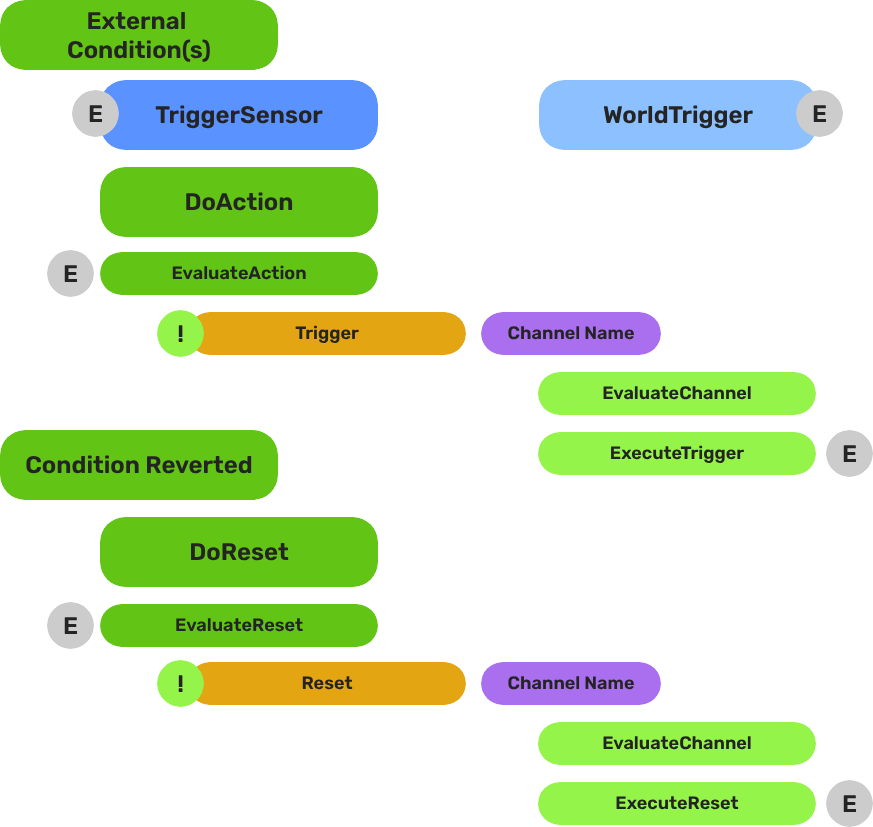

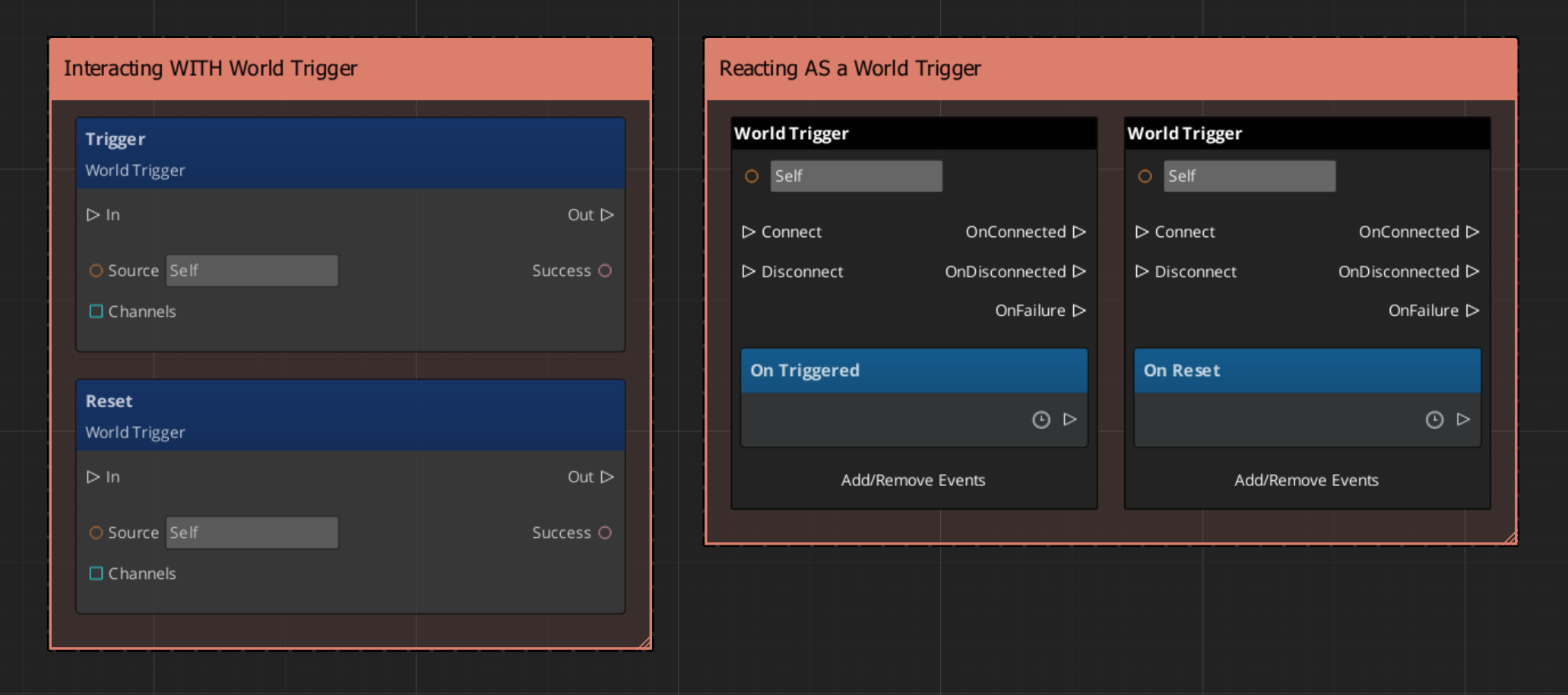

World Triggers - GS_Interaction

Breakdown

World Triggers split conditions from responses. Any number of World Triggers can stack on a single entity — each fires its own response independently when the condition is met.

| Part | What It Means |

|---|

| Trigger Sensor | Monitors for a condition. When satisfied, calls Trigger() on all WorldTriggerComponent instances on the same entity. |

| World Trigger | Executes a response when Trigger() is called. Can be reset with Reset() to re-arm for the next activation. |

No scripting is required for standard patterns. Compose Trigger Sensors and World Triggers in the editor to cover the majority of interactive world objects without writing any code.
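
The split between sensors and triggers can be modeled in a few lines. `Trigger()` and `Reset()` are the calls named in the table. The `WorldTrigger` class below, the "fires once until reset" behavior, `TriggerEntity`, and `SensorSatisfied` are assumptions for this sketch and do not reproduce the component's implementation.

```cpp
#include <functional>
#include <utility>
#include <vector>

// Hypothetical world trigger: runs its response once, then waits for Reset().
class WorldTrigger {
public:
    explicit WorldTrigger(std::function<void()> response)
        : m_response(std::move(response)) {}

    void Trigger() {
        if (!m_armed) return;  // already fired and not yet re-armed
        m_armed = false;
        m_response();
    }

    void Reset() { m_armed = true; }

private:
    std::function<void()> m_response;
    bool m_armed = true;
};

// Any number of triggers can stack on one entity.
struct TriggerEntity {
    std::vector<WorldTrigger> triggers;
};

// What a sensor does when its condition is met: call Trigger() on every
// world trigger on the same entity.
void SensorSatisfied(TriggerEntity& entity) {
    for (auto& trigger : entity.triggers) trigger.Trigger();
}
```

Each trigger tracks its own armed state, so resetting one (for example a reusable door) leaves one-shot triggers on the same entity spent.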


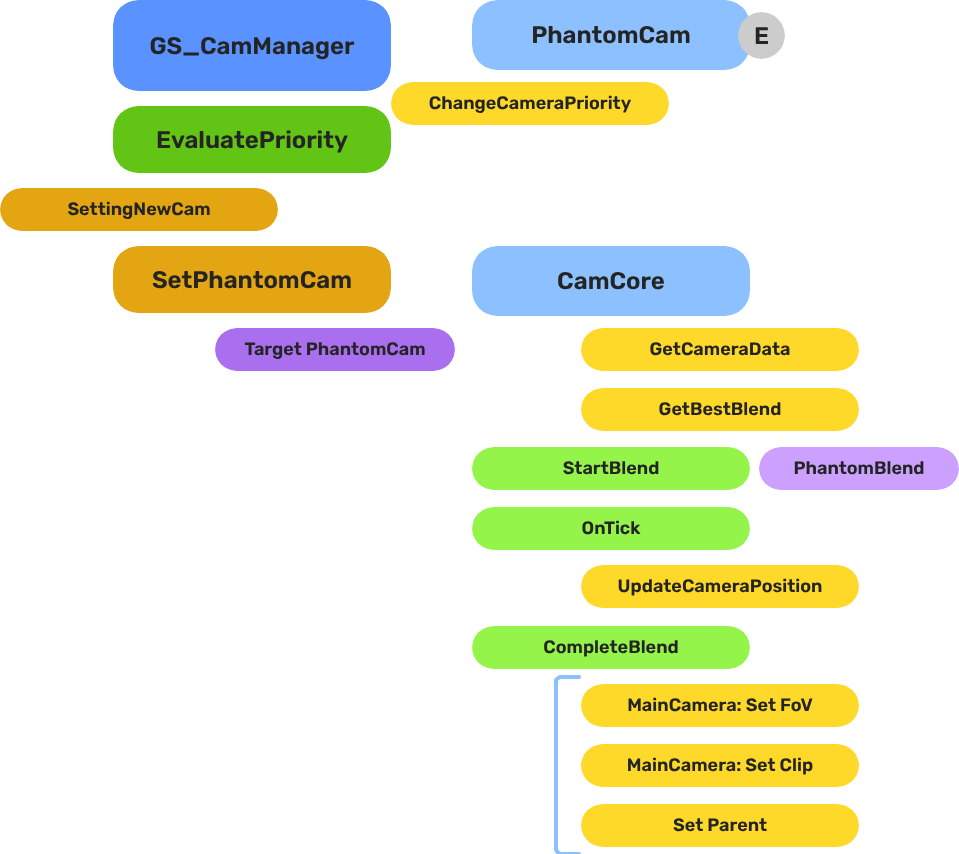

Camera Blend - GS_PhantomCam

Breakdown

When the dominant Phantom Camera changes, the Cam Manager queries the Blend Profile to determine transition timing and easing:

| Step | What It Means |

|---|

| 1 — Priority change | A Phantom Camera gains highest priority or is activated. |

| 2 — Profile query | Cam Manager calls GetBestBlend(fromCam, toCam) on the assigned GS_PhantomCamBlendProfile asset. |

| 3 — Entry match | Entries are checked by specificity: exact match → any-to-specific → specific-to-any → default fallback. |

| 4 — Interpolation | Cam Core blends position, rotation, and FOV over the matched entry’s BlendTime using the configured EasingType. |

Because Blend Profiles are shared assets, editing one profile updates every camera that references it.
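
The specificity order in step 3 can be expressed as a ranking over matching entries. This sketch assumes an empty camera name means "any" and returns only a blend time. `BlendEntry`, `BlendProfile`, and this simplified `GetBestBlend` signature are hypothetical and are not the GS_PhantomCamBlendProfile asset's real layout.

```cpp
#include <string>
#include <vector>

// Hypothetical profile entry: an empty name stands for "any camera".
struct BlendEntry {
    std::string from;
    std::string to;
    float blendTime;
};

struct BlendProfile {
    std::vector<BlendEntry> entries;
    float defaultBlendTime = 1.0f;  // default fallback

    float GetBestBlend(const std::string& fromCam, const std::string& toCam) const {
        const BlendEntry* best = nullptr;
        int bestRank = 0;
        for (const auto& entry : entries) {
            const bool fromMatches = entry.from.empty() || entry.from == fromCam;
            const bool toMatches = entry.to.empty() || entry.to == toCam;
            if (!fromMatches || !toMatches) continue;

            int rank = 0;
            if (!entry.from.empty() && !entry.to.empty()) rank = 3;  // exact match
            else if (!entry.to.empty()) rank = 2;                    // any-to-specific
            else if (!entry.from.empty()) rank = 1;                  // specific-to-any
            // Entries with both names empty keep rank 0 and are never chosen,
            // so the profile default covers that case.

            if (rank > bestRank) {
                best = &entry;
                bestRank = rank;
            }
        }
        return best ? best->blendTime : defaultBlendTime;
    }
};
```

Ranking rather than first-match means the order of entries in the asset does not affect which blend is chosen.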


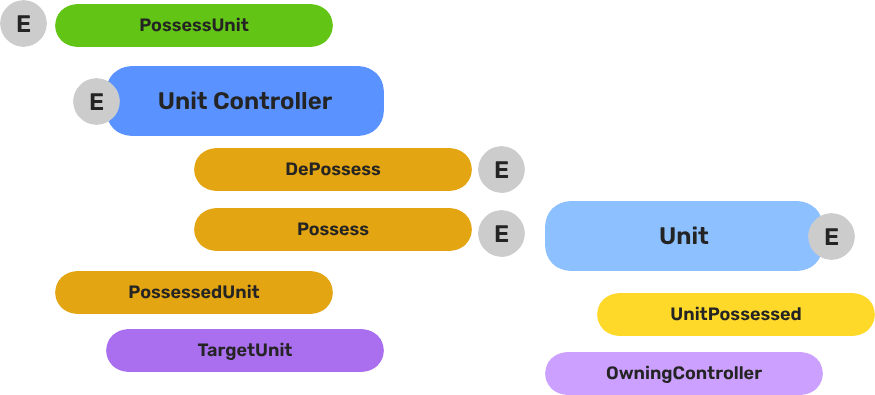

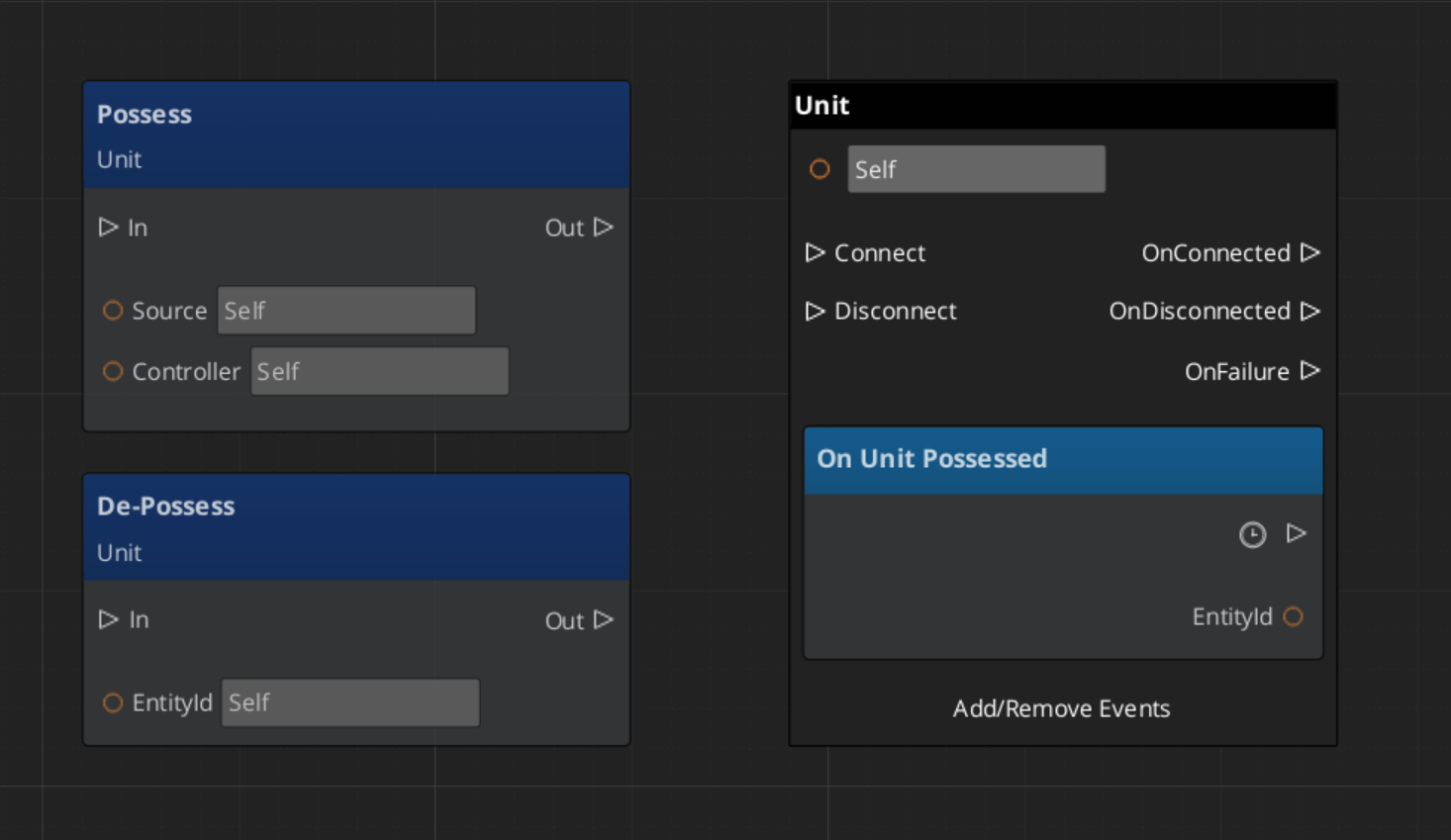

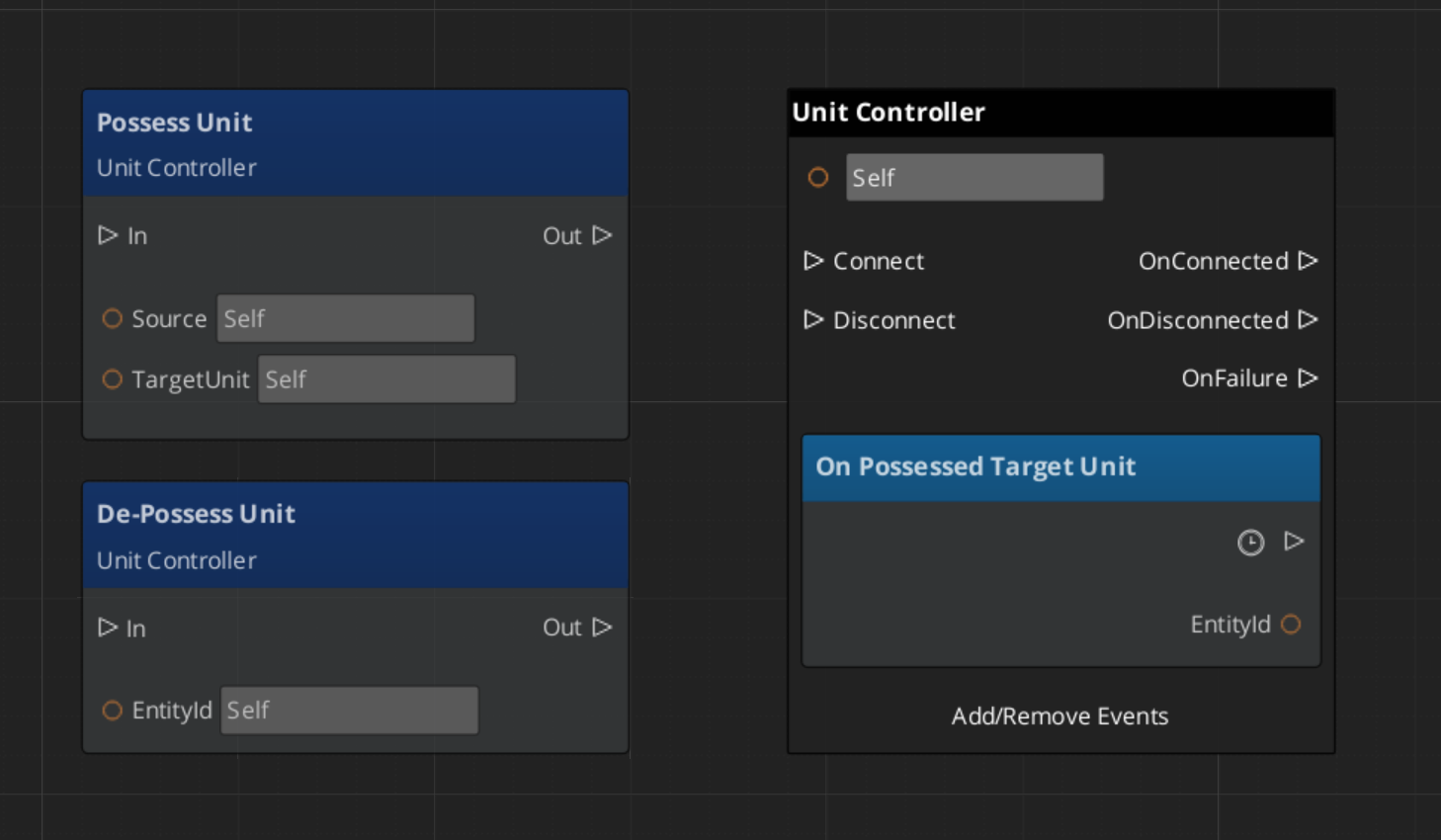

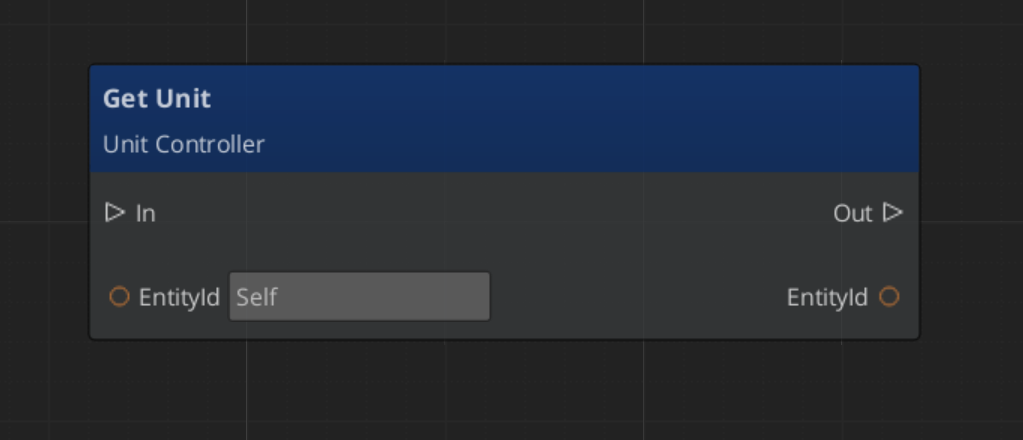

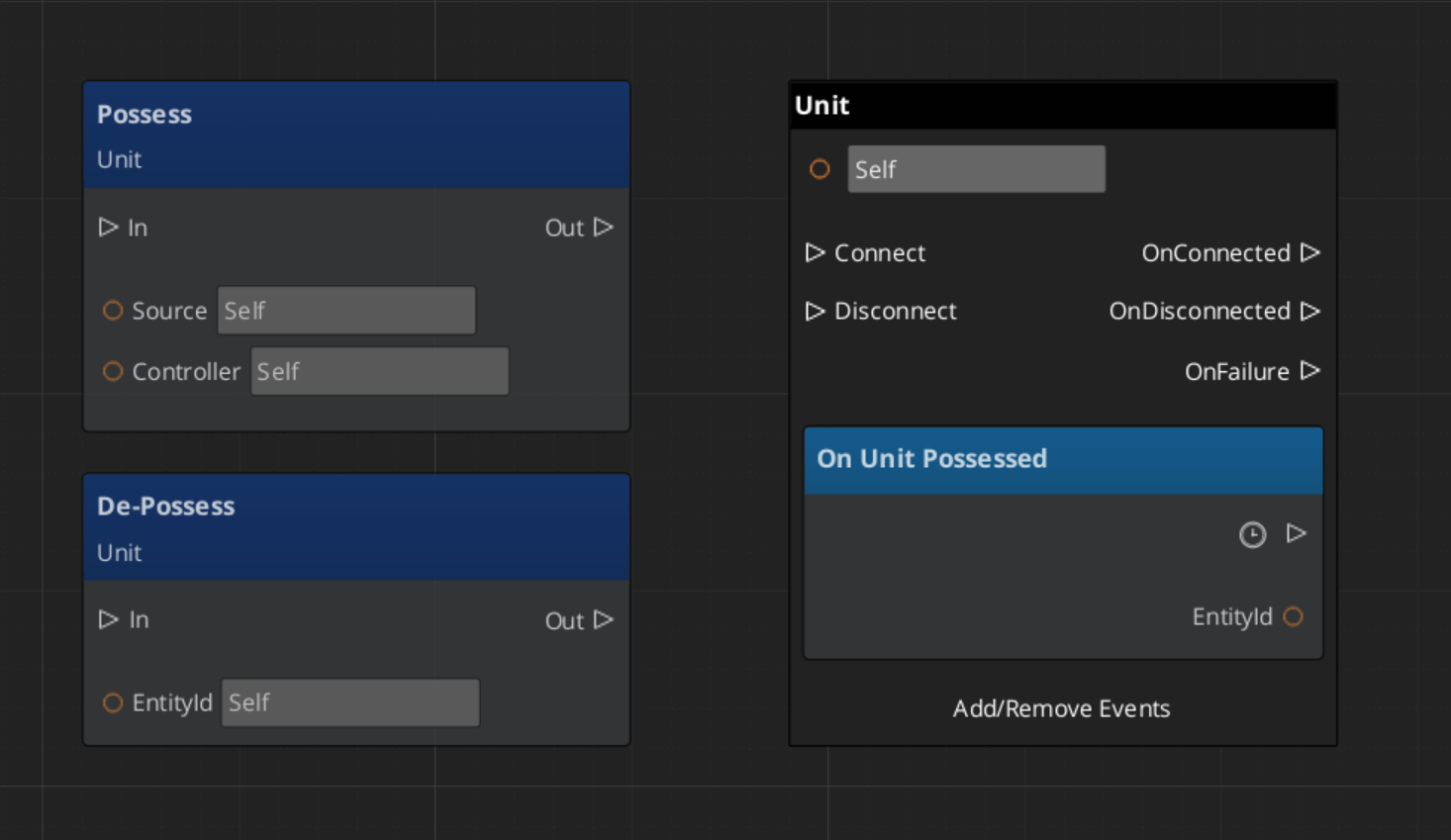

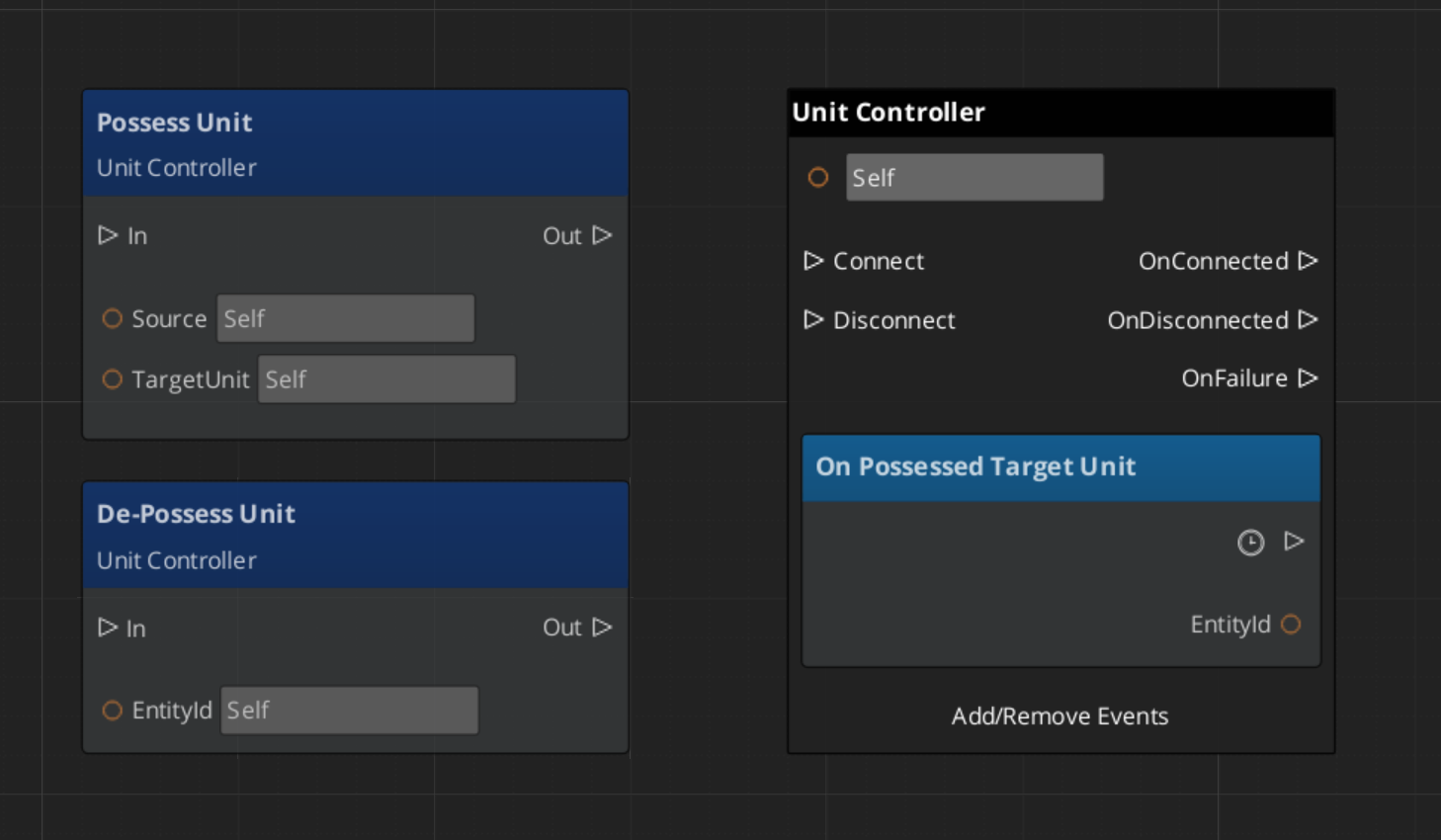

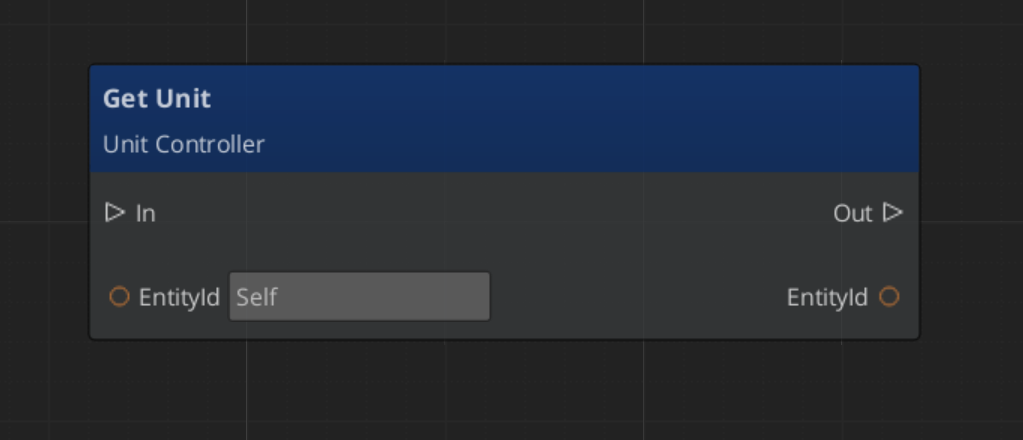

Unit Possession - GS_Unit

Breakdown

A unit has at most one controller at a time. Possession is established by calling Possess on the unit and released by calling DePossess. The unit fires UnitPossessed on UnitNotificationBus whenever ownership changes so other systems can react.

| Concept | Description |

|---|

| Possession | A controller attaches to a unit. The unit accepts input and movement commands from that controller only. |

| DePossession | The controller releases the unit. The unit halts input processing and enters a neutral state. |

| UnitPossessed event | Fires on UnitNotificationBus (addressed by entity ID) whenever a unit’s controller changes. |

| GetController | Returns the entity ID of the current controller, or an invalid ID if none. |

| GetUniqueName | Returns the string name assigned by the Unit Manager when this unit was spawned. |
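
The possession rules above reduce to a single controller slot plus a change notification. The sketch below models that in plain C++. `Possess`, `DePossess`, and `GetController` are the names from the table, while the `Unit` class, the integer `EntityId`, the `InvalidEntityId` constant, and the `OnUnitPossessed` callback registration are stand-ins for the real component, AZ::EntityId, and UnitNotificationBus.

```cpp
#include <cstdint>
#include <functional>
#include <utility>
#include <vector>

using EntityId = std::uint64_t;            // hypothetical stand-in for an entity ID
constexpr EntityId InvalidEntityId = 0;    // hypothetical "no controller" value

class Unit {
public:
    using PossessedHandler = std::function<void(EntityId newController)>;

    // Stand-in for connecting to the UnitPossessed notification.
    void OnUnitPossessed(PossessedHandler handler) {
        m_handlers.push_back(std::move(handler));
    }

    // A new controller replaces the old one, so there is never more than one.
    void Possess(EntityId controller) {
        m_controller = controller;
        Notify();
    }

    void DePossess() {
        m_controller = InvalidEntityId;
        Notify();
    }

    EntityId GetController() const { return m_controller; }

private:
    void Notify() {
        for (auto& handler : m_handlers) handler(m_controller);
    }

    EntityId m_controller = InvalidEntityId;
    std::vector<PossessedHandler> m_handlers;
};
```

Listeners receive every ownership change, including release, so a HUD or AI director can react without polling `GetController`.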


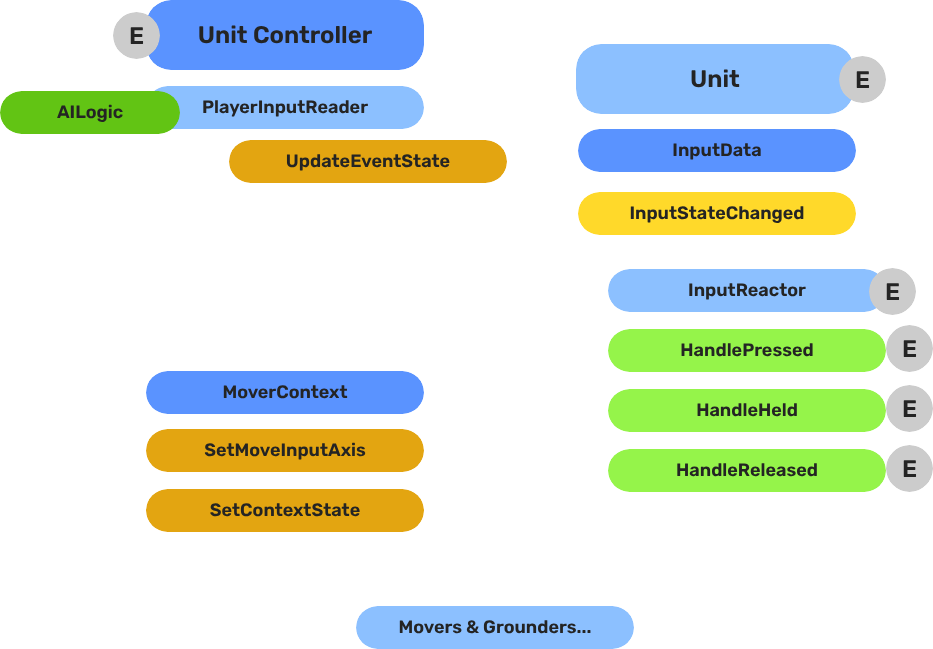

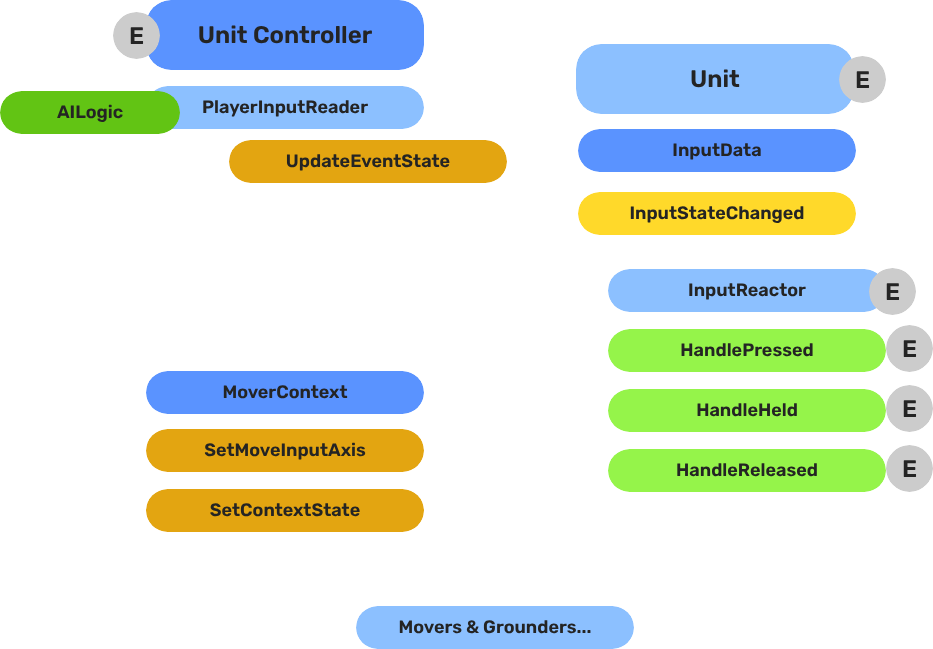

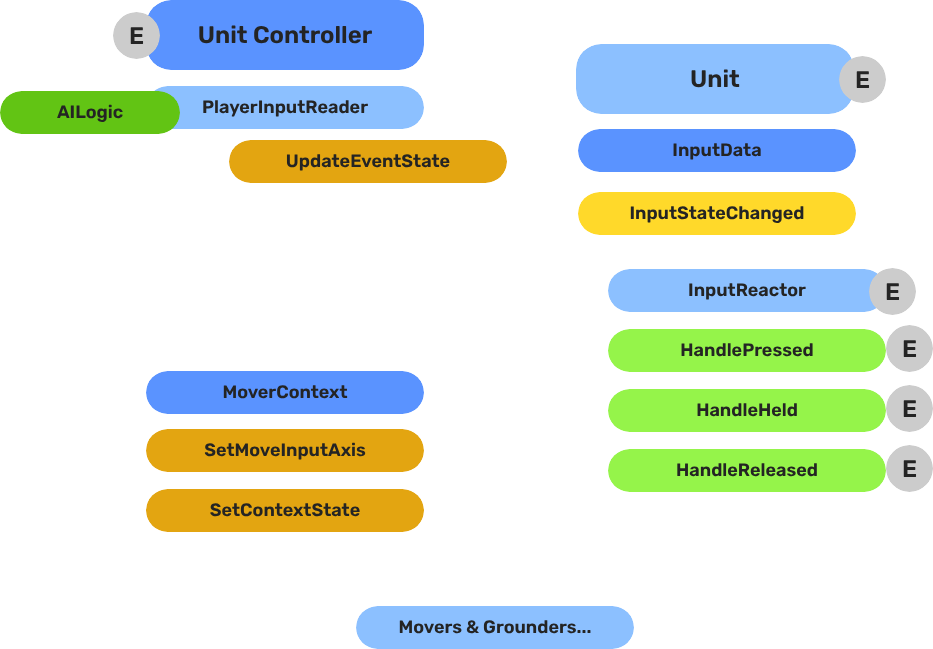

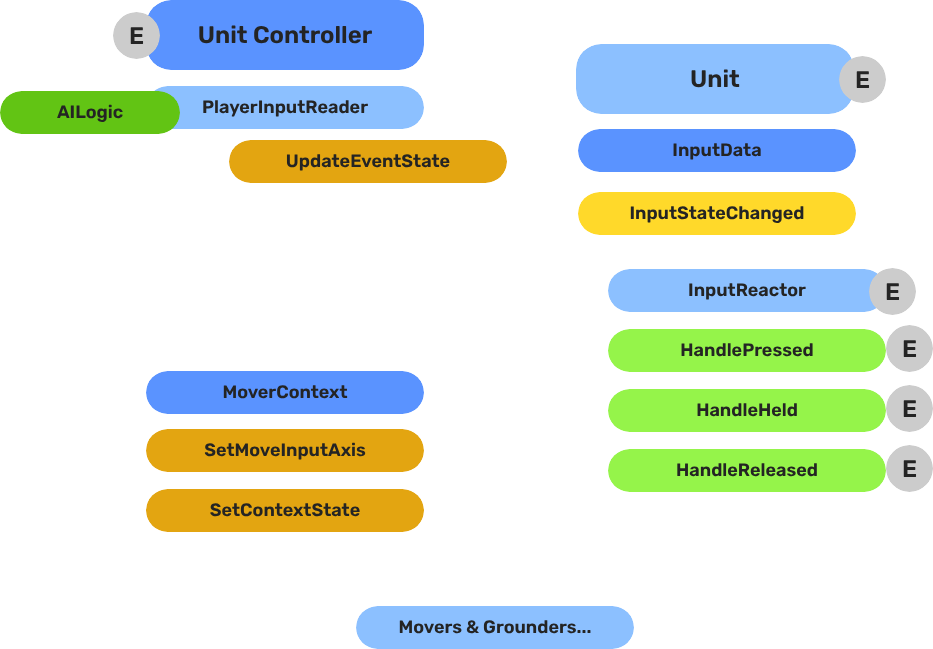

Breakdown

The input pipeline has three stages. Each stage is a separate component on the unit entity, and they run in order every frame:

| Stage | Component | What It Does |

|---|

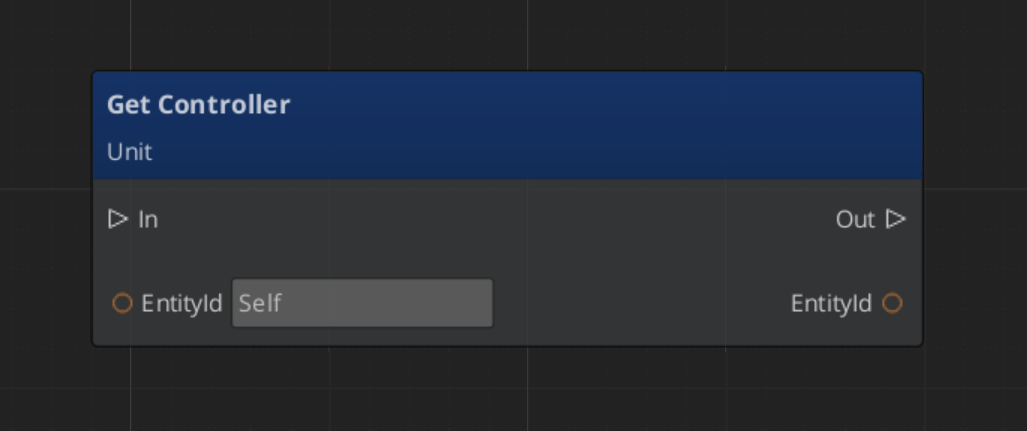

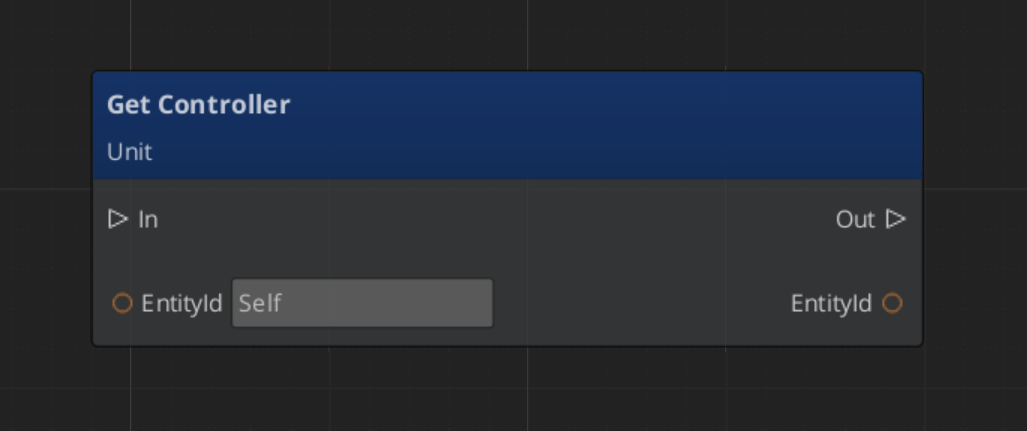

| 1 — Read | GS_PlayerControllerInputReaderComponent | Reads raw input events from the active input profile and writes them into GS_InputDataComponent. |

| 2 — Store | GS_InputDataComponent | Holds the current frame’s input state — button presses, axis values — as structured data. |

| 3 — React | Reactor components | Read from GS_InputDataComponent and produce intent: movement vectors, action triggers, etc. |

All reactor components downstream of the store stage read from the same GS_InputDataComponent, so there is no duplicated hardware polling and no risk of two reactors seeing different input states for the same frame.
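
The three stages can be sketched as one read followed by any number of reactors consuming the same stored data. Every type here (`RawFrame`, `InputData`, `MoveReactor`, `JumpReactor`) and both functions are hypothetical simplifications. The real components exchange this data through buses, not direct calls.

```cpp
// Hypothetical raw hardware sample for one frame.
struct RawFrame {
    float stickX;
    float stickY;
    bool jumpDown;
};

// Stage 2, Store: the structured input state for the current frame.
struct InputData {
    float moveX = 0.0f;
    float moveY = 0.0f;
    bool jump = false;
};

// Stage 1, Read: the only place that touches raw input.
void ReadInput(const RawFrame& raw, InputData& data) {
    data.moveX = raw.stickX;
    data.moveY = raw.stickY;
    data.jump = raw.jumpDown;
}

// Stage 3, React: each reactor turns stored input into its own kind of intent.
struct MoveReactor {
    float x = 0.0f;
    float y = 0.0f;
    void React(const InputData& data) { x = data.moveX; y = data.moveY; }
};

struct JumpReactor {
    bool wantsJump = false;
    void React(const InputData& data) { wantsJump = data.jump; }
};

// One frame of the pipeline: read once, then let every reactor see the same data.
void RunInputFrame(const RawFrame& raw, InputData& data,
                   MoveReactor& move, JumpReactor& jump) {
    ReadInput(raw, data);
    move.React(data);
    jump.React(data);
}
```

Because reactors take the store by const reference, none of them can alter what the next reactor sees within the same frame.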

- The Basics: Controllers — Possession, input routing, and controller assignment.

- The Basics: Core Input — Input profiles, action mappings, and options setup.

- The Basics: Unit Input — Input readers, axis configuration, and ScriptCanvas usage.

- Framework API: Unit Controllers — Controller components, possession API, and C++ extension.

- Framework API: Input Reader — Profile binding, action event reading, and C++ extension.

- Framework API: Input Reactor — InputDataComponent schema, reactor base class, and C++ extension.

- Framework API: Mover Context — Context state, mode authority, and C++ extension.


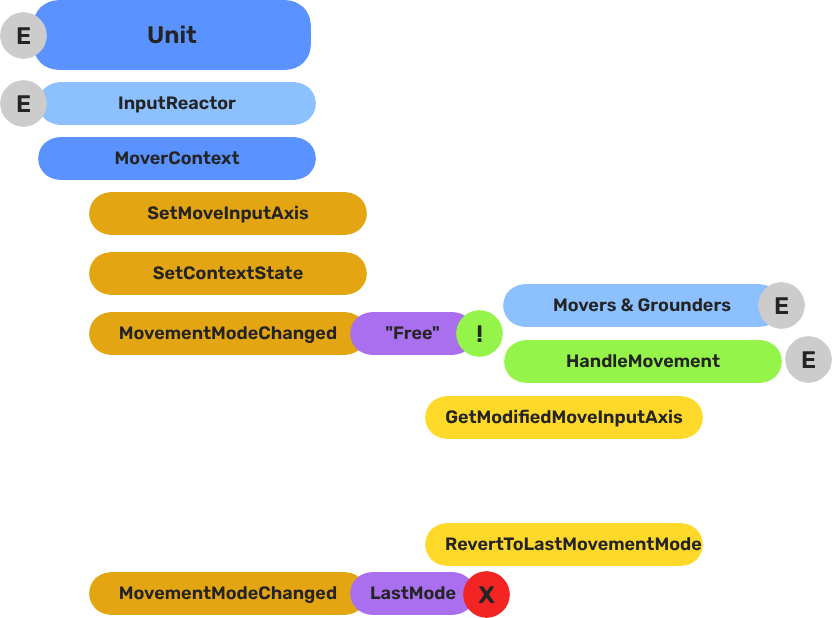

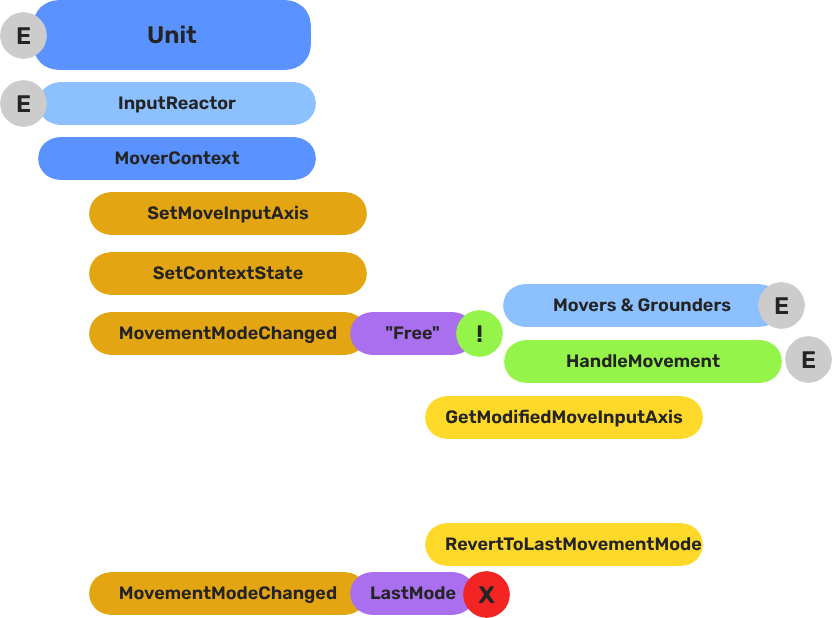

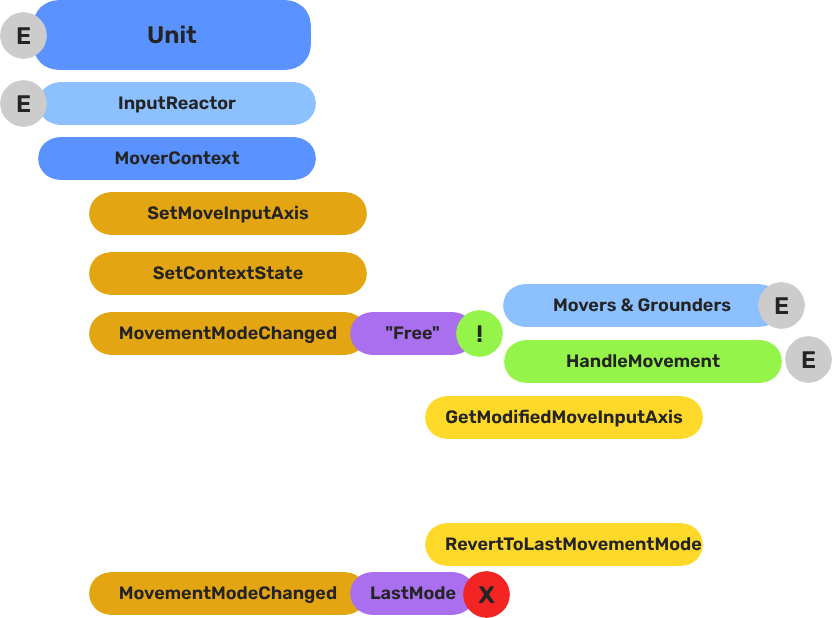

Movers & Grounders - GS_Unit

Breakdown

Movers and Grounders are components on a Unit whose activation changes constantly with the unit’s state. While a mover is active, it processes the unit’s movement according to its own behavior. At any moment, internal or external forces can change the Movement, Rotation, or Grounding state, which disables the old mover and activates the new one to continue controlling the Unit.

    Input
        ↓  InputDataNotificationBus::InputStateChanged
    Input Reactor Components
        ↓  MoverContextRequestBus::SetMoveInputAxis
    GS_MoverContextComponent
        ↓  ModifyInputAxis() → camera-relative
        ↓  GroundInputAxis() → ground-projected
        ↓  MoverContextNotificationBus::MovementModeChanged("Free")
    GS_3DFreeMoverComponent  [active when mode == "Free"]
        ↓  AccelerationSpringDamper → rigidBody->SetLinearVelocity
    GS_PhysicsRayGrounderComponent  [active when grounding mode == "Free"]
        ↓  MoverContextRequestBus::SetGroundNormal / SetContextState("grounding", ...)
        ↓  MoverContextRequestBus::ChangeMovementMode("Slide")  ← when slope too steep
    GS_3DSlideMoverComponent  [active when mode == "Slide"]

The Slide mover activates when the Unit walks onto a slope that is too steep. It takes control of the unit, slides it down the hill, then restores the previous movement behaviour.
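
The mode switch in the flow above can be modeled as a context that enables whichever mover matches the current mode. `ChangeMovementMode` and the mode names "Free" and "Slide" come from the diagram. The `Mover` struct, `MoverContext` class, `RestorePreviousMode`, `GroundCheck`, and the 45 degree limit are assumptions. As a simplification, the ground check restores the previous mode here, whereas the text says the Slide mover does so itself.

```cpp
#include <string>
#include <utility>
#include <vector>

// Hypothetical mover: active only while the context's mode matches its own.
struct Mover {
    std::string mode;
    bool active = false;
};

class MoverContext {
public:
    void AddMover(Mover* mover) {
        m_movers.push_back(mover);
        Refresh();
    }

    void ChangeMovementMode(const std::string& mode) {
        m_previous = m_mode;
        m_mode = mode;
        Refresh();
    }

    void RestorePreviousMode() {
        std::swap(m_mode, m_previous);
        Refresh();
    }

    const std::string& Mode() const { return m_mode; }

private:
    // Disable movers for other modes and enable the one for the current mode.
    void Refresh() {
        for (auto* mover : m_movers) mover->active = (mover->mode == m_mode);
    }

    std::string m_mode = "Free";
    std::string m_previous = "Free";
    std::vector<Mover*> m_movers;
};

// Simplified grounder logic: slide on steep ground, restore on walkable ground.
void GroundCheck(MoverContext& context, float slopeDegrees, float maxWalkable = 45.0f) {
    const bool tooSteep = slopeDegrees > maxWalkable;
    if (tooSteep && context.Mode() != "Slide") {
        context.ChangeMovementMode("Slide");
    } else if (!tooSteep && context.Mode() == "Slide") {
        context.RestorePreviousMode();
    }
}
```

Movers never enable or disable each other directly. They only observe the shared mode, which is what lets a new mover type be added without touching the existing ones.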

- The Basics: Mover Context — Movement modes, grounding states, and configuration.

- The Basics: Movement — Mover types, grounder types, and ScriptCanvas usage.

- Framework API: Input Reactor — InputDataComponent schema, reactor base class, and C++ extension.

- Framework API: Mover Context — Context state, mode authority, and C++ extension.

- Framework API: Movers — Mover and grounder components, movement APIs, and C++ extension.


2.4 - Templates List

All ClassWizard templates for GS_Play gems — one-stop reference for generating extension classes across every gem.

All GS_Play extension types are generated through the ClassWizard CLI. The wizard handles UUID generation, cmake file-list registration, and module descriptor injection automatically. Never create these files from scratch.

    python ClassWizard.py \
        --template <TemplateName> \
        --gem <GemPath> \
        --name <SymbolName> \
        [--input-var key=value ...]

Contents

GS_Core

Full details: GS_Core Templates

| Template | Generates | Use For |

|---|

| GS_ManagerComponent | ${Name}ManagerComponent.h/.cpp + optional ${Name}Bus.h | Manager-pattern components with EBus interface |

| SaverComponent | ${Name}SaverComponent.h/.cpp | Custom save/load handlers via the GS_Save system |

| GS_InputReaderComponent | ${Name}InputReaderComponent.h/.cpp | Controller-side hardware input readers |

| PhysicsTriggerComponent | ${Name}PhysicsTriggerComponent.h/.cpp | PhysX trigger volumes with enter/hold/exit callbacks |

GS_Interaction

Full details: GS_Interaction Templates

| Template | Generates | Use For |

|---|

| PulsorPulse | ${Name}_Pulse.h/.cpp | New pulse type for the Pulsor emitter/reactor system |

| PulsorReactor | ${Name}_Reactor.h/.cpp | New reactor type that responds to a named channel |

| WorldTrigger | ${Name}_WorldTrigger.h/.cpp | New world trigger that fires a named event |

| TriggerSensor | ${Name}_TriggerSensor.h/.cpp | New trigger sensor that listens and responds to events |

GS_Unit

Full details: GS_Unit Templates

| Template | Generates | Use For |

|---|

| UnitController | ${Name}ControllerComponent.h/.cpp | Custom controller for player or AI possession logic |

| InputReactor | ${Name}InputReactorComponent.h/.cpp | Unit-side input translation from named event to bus call |

| Mover | ${Name}PhysicsMoverComponent.h/.cpp or ${Name}MoverComponent.h/.cpp | Custom locomotion mode (walk, swim, climb, etc.) |

| Grounder | ${Name}PhysicsRayGrounderComponent.h/.cpp or ${Name}GrounderComponent.h/.cpp | Custom ground state detection (ray, capsule, custom) |

GS_UI

Full details: GS_UI Templates

| Template | Generates | Use For |

|---|

| UiMotionTrack | ${Name}Track.h + .cpp | New LyShine property animation track for .uiam assets |

GS_Juice

Full details: GS_Juice Templates

| Template | Generates | Use For |

|---|

| FeedbackMotionTrack | ${Name}Track.h + .cpp | New world-space entity property animation track for .feedbackmotion assets |

GS_PhantomCam

Full details: GS_PhantomCam Templates

| Template | Generates | Use For |

|---|

| PhantomCamera | ${Name}PhantomCamComponent.h/.cpp | Custom camera behaviour (follow, look-at, tick overrides) |

GS_Cinematics

Full details: GS_Cinematics Templates

| Template | Generates | Use For |

|---|

| DialogueCondition | ${Name}_DialogueCondition.h/.cpp | Gate dialogue node connections — return true to allow |

| DialogueEffect | ${Name}_DialogueEffect.h/.cpp | Fire world events from Effects nodes; optionally reversible |

| DialoguePerformance | ${Name}_DialoguePerformance.h/.cpp | Drive world-space NPC actions; sequencer waits for completion |

Registration Quick Reference

| Template | Auto-registered by CLI | Manual step required |

|---|

| Manager Component | Yes (cmake + module) | None |

| Saver Component | Yes (cmake + module) | None |

| InputReader Component | Yes (cmake + module) | None |

| Physics Trigger Component | Yes (cmake + module) | None |

| Pulsor Pulse | Yes (cmake) | None |

| Pulsor Reactor | Yes (cmake) | None |

| World Trigger | Yes (cmake) | None |

| Trigger Sensor | Yes (cmake) | None |

| Unit Controller | Yes (cmake + module) | None |

| Input Reactor | Yes (cmake + module) | None |

| Mover Component | Yes (cmake + module) | Set mode name strings in Activate() |

| Grounder Component | Yes (cmake + module) | Set mode name string in Activate() |

| Phantom Camera | Yes (cmake + module) | None |

| UiMotionTrack | Yes (cmake) | Add Reflect(context) to UIDataAssetsSystemComponent |

| FeedbackMotionTrack | Yes (cmake) | Add Reflect(context) to GS_JuiceDataAssetSystemComponent |

| DialogueCondition | Yes (cmake) | Add Reflect(context) to DialogueSequencerComponent |

| DialogueEffect | Yes (cmake) | Add Reflect(context) to DialogueSequencerComponent |

| DialoguePerformance | Yes (cmake) | Add Reflect(context) to DialogueSequencerComponent |

2.5 - Powerful Utilities

Powerful Utilities associated with the GS_Play feature sets.

Powerful Utilities Outline

Powerful Utilities

2.6 - Glossary

Descriptions and resources around common terminology used in the documentation.

GS_Play Definitions

Action

An Action is a reusable, entity-scoped behavior triggered by name. Actions are authored as ActionComponent instances and fired via ActionRequestBus. Any trigger source — a physics zone, dialogue effect, another action, or scripted logic — can invoke an action by name without needing a direct reference to the target component.

Babble

Babble is procedural audio vocalisation synchronized to the Typewriter text display system in GS_Cinematics. The BabbleComponent generates tones keyed to a speaker’s identity and tone configuration, producing low-fidelity speech sounds as each character is revealed. See Babble API.

Controller

See Unit Controller.

Coyote Time

Coyote Time is a brief grace period after a unit walks off a ledge during which a grounded jump remains valid. It prevents the frustrating experience of pressing jump a frame too late when stepping off an edge. Configured per mover component as a float duration in seconds.

Debug Mode

Debug Mode is a setting on the Game Manager component that makes the game start in the current editor level instead of navigating to the title stage. All manager initialization and event broadcasting proceed normally — only the startup navigation changes. Use it for rapid iteration on any level without going through the full boot flow.

Extensible

An Extensible class or component can be inherited from to augment and expand its core functionality. Extensible classes and components, identified by the extensible tag in the documentation, are those available on the public Gem API build target and usable across gem and project boundaries. Extensible classes usually have ClassWizard templates to rapidly generate child classes.

Feedback Sequence

A Feedback Sequence is a data asset (.feedbackmotion) that drives world-space entity property animations — position, rotation, scale, material parameters — over time. Sequences are played on entities via GS_FeedbackRequestBus. Track types within a sequence are polymorphic and extensible via the FeedbackMotionTrack ClassWizard template. See Feedback System API.

Grounder

A Grounder is a component that detects whether a unit is standing on a surface and reports the ground normal, surface material, and grounded state. Grounders feed data to movers and to coyote time logic. The built-in GS_PhysicsRayGrounderComponent uses a downward raycast. Custom grounders can be created with the Grounder ClassWizard template. See Movement API.